Neural Turing Machines

Even though recurrent neural networks (RNNs) are Turing complete in theory, the control of logical flow and the use of external memory have been largely ignored in the machine learning literature. This might be because an RNN has to be wired properly to achieve Turing completeness, which is not necessarily easy in practice. By adding an addressable memory, Graves et al. try to overcome this limitation. They name their approach the Neural Turing Machine (NTM), by analogy with Turing machines, which are finite-state machines extended with an infinite memory<ref name="main">Graves, A., Wayne, G., & Danihelka, I. (2014). Neural Turing Machines. arXiv preprint arXiv:1410.5401.</ref>. Furthermore, every component of an NTM is differentiable, so the whole system can be trained end to end with gradient descent.

Theoretical Background

The authors state that the design of the NTM is inspired by past research spanning the disciplines of neuroscience, psychology, cognitive science and linguistics, and that the NTM can be thought of as a working memory system of the sort described in various accounts of cognitive architecture. However, the authors propose to ignore the known capacity limitations of working memory, and to introduce sophisticated gating and memory addressing operations that are typically absent in models of the sort developed throughout the computational neuroscience literature.

With respect to historical precedents in the cognitive science and linguistics literature, the authors relate their work to a longstanding debate concerning the effectiveness of neural networks for cognitive modeling. They present their work as advancing a line of research on encoding recursively structured representations in neural networks that grew out of criticisms presented by Fodor and Pylyshyn in 1988 <ref name=fodor>Fodor, J. A., & Pylyshyn, Z. W. (1988). Connectionism and cognitive architecture: A critical analysis. Cognition, 28(1), 3-71.</ref>. It is worth pointing out that the authors give an incorrect summary of these criticisms: they state that Fodor and Pylyshyn argued that neural networks could not implement variable binding or perform tasks involving variable-length structures, when in fact they argued that successful models of cognition require representations with constituent structure and processing mechanisms that are strictly structure sensitive (see <ref name=fodor></ref> for details). The NTM is able to deal with variable-length inputs and arguably performs variable binding, in the sense that the memory slots in the NTM can be treated as variables to which data is bound, but the authors do not revisit these issues in any detail after presenting the results of their simulations with the NTM.

Architecture

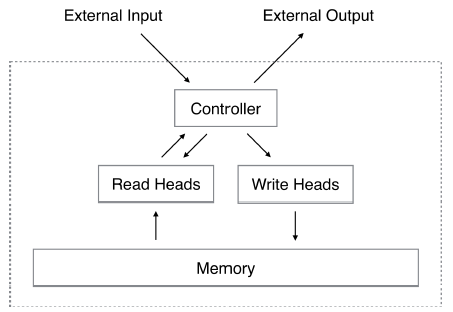

A Neural Turing Machine consists of a memory and a controller neural network. The controller receives input and produces output with the help of the memory, which is addressed through a content-based and a location-based addressing mechanism. Figure 1 presents a high-level diagram of the NTM architecture.

Memory

The memory at time [math]\displaystyle{ t }[/math] is given by an [math]\displaystyle{ N \times M }[/math] matrix [math]\displaystyle{ M_t }[/math], where [math]\displaystyle{ N }[/math] is the number of memory locations and [math]\displaystyle{ M }[/math] the vector size at each memory location. To address memory locations for reading or writing, an [math]\displaystyle{ N }[/math]-element vector [math]\displaystyle{ w_t }[/math] is used. The elements of this vector need to satisfy [math]\displaystyle{ 0 \leq w_t(i) \leq 1 }[/math] and have to sum to 1. Thus, it gives a weighting over memory locations, and access may be blurry, that is, spread over several locations rather than focused on a single one.

Reading

Given an address [math]\displaystyle{ w_t }[/math] the read vector is just the weighted sum of memory locations:

[math]\displaystyle{ r_t \leftarrow \sum_i w_t(i) M_t(i) }[/math]

which is clearly differentiable with respect to both the memory and the weighting.
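As a minimal sketch of the read operation, assuming the memory is stored as a NumPy array of shape (N, M) and the weighting as a length-N array, the weighted sum reduces to a vector-matrix product. The function name `read` and the toy values are illustrative and not taken from the paper.

```python
import numpy as np

def read(memory: np.ndarray, w: np.ndarray) -> np.ndarray:
    """Return r_t = sum_i w_t(i) M_t(i) for memory (N, M) and weighting (N,)."""
    return w @ memory

# Toy memory with N = 3 locations of size M = 2 (illustrative values).
memory = np.array([[1.0, 0.0],
                   [0.0, 1.0],
                   [2.0, 2.0]])

w_sharp = np.array([0.0, 1.0, 0.0])   # focused on location 1
w_blurry = np.array([0.5, 0.5, 0.0])  # spread over locations 0 and 1

r_sharp = read(memory, w_sharp)    # equals row 1 of the memory
r_blurry = read(memory, w_blurry)  # average of rows 0 and 1
```

A sharp weighting returns a single row, while a blurry weighting returns a blend of rows, which is what keeps the operation differentiable.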

Writing

The write process is split into an erase operation and an add operation (inspired by the input and forget gates in LSTM). This allows the NTM to either overwrite or add to a memory location in a single time step. Otherwise, a read would be necessary for one of the operations before the updated result could be written.

The erase update is given by

[math]\displaystyle{ \tilde{M}_t(i) \leftarrow M_{t-1}(i) [1 - w_t(i) e_t] }[/math]

with an [math]\displaystyle{ M }[/math]-element erase vector [math]\displaystyle{ e_t }[/math] with elements in the range [math]\displaystyle{ (0, 1) }[/math] selecting which vector elements to reset at the memory locations selected by [math]\displaystyle{ w_t }[/math].

Afterwards, an add vector [math]\displaystyle{ a_t }[/math] is added according to

[math]\displaystyle{ M_t(i) \leftarrow \tilde{M}_t(i) + w_t(i) a_t. }[/math]

The order in which the adds are performed by multiple heads is irrelevant. The combined erase and add operations of all the write heads produce the final content of the memory at time [math]\displaystyle{ t }[/math].
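The erase and add steps for a single write head can be sketched as follows, assuming NumPy arrays with the same shapes as in the equations above. The function name `write` and the example values are illustrative. The erase vector uses the limiting values 0 and 1 only to make the effect easy to see.

```python
import numpy as np

def write(memory_prev: np.ndarray, w: np.ndarray,
          erase: np.ndarray, add: np.ndarray) -> np.ndarray:
    """Apply one erase-then-add write to a memory of shape (N, M)."""
    # Erase: M~_t(i) = M_{t-1}(i) * (1 - w_t(i) e_t), elementwise within each row.
    erased = memory_prev * (1.0 - np.outer(w, erase))
    # Add: M_t(i) = M~_t(i) + w_t(i) a_t.
    return erased + np.outer(w, add)

memory_prev = np.ones((3, 2))
w = np.array([0.0, 1.0, 0.0])   # write only to location 1
erase = np.array([1.0, 0.0])    # reset the first element, keep the second
add = np.array([5.0, 0.0])      # then add 5 to the first element

memory = write(memory_prev, w, erase, add)
```

With these values, location 1 has its first element overwritten and its second element left alone, and the other locations are untouched because their weight is zero.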

Addressing Mechanisms

Two methods, content-based addressing and location-based addressing, are employed to generate the read/write weightings [math]\displaystyle{ w_t }[/math]. Depending on the task either mechanism can be more appropriate. These two methods of addressing are summarized in the figure below.

Content-based addressing

For content-based addressing, each head (whether employed for reading or writing) first produces a length-[math]\displaystyle{ M }[/math] key vector [math]\displaystyle{ k_t }[/math] that is compared to each vector [math]\displaystyle{ M_t(i) }[/math] by a similarity measure [math]\displaystyle{ K[\cdot,\cdot] }[/math]. The content-based system produces a normalised weighting [math]\displaystyle{ w_t^c }[/math] based on the similarity and a positive key strength, [math]\displaystyle{ \beta_t }[/math], which can amplify or attenuate the precision of the focus:

[math]\displaystyle{ w_t^c(i) \leftarrow \frac{\exp(\beta_t K[k_t, M_t(i)])}{\sum_{j} \exp(\beta_t K[k_t, M_t(j)])} }[/math]

In the current implementation, the similarity measure is cosine similarity:

[math]\displaystyle{ K[u,v] = \frac{u \cdot v}{\|u\| \, \|v\|} }[/math]
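Content-based addressing amounts to a softmax over scaled cosine similarities. The sketch below assumes NumPy arrays. The small constant `eps` that guards against division by zero and the subtraction of the maximum score for numerical stability are additions for this example and are not part of the formulas above.

```python
import numpy as np

def cosine_similarity(u: np.ndarray, v: np.ndarray, eps: float = 1e-8) -> float:
    """K[u, v] = u.v / (||u|| ||v||); eps is an assumed safeguard for zero vectors."""
    return float(u @ v) / (np.linalg.norm(u) * np.linalg.norm(v) + eps)

def content_weighting(memory: np.ndarray, key: np.ndarray, beta: float) -> np.ndarray:
    """Softmax over beta-scaled similarities between the key and each memory row."""
    scores = beta * np.array([cosine_similarity(key, row) for row in memory])
    scores = scores - scores.max()  # stability shift; leaves the softmax unchanged
    weights = np.exp(scores)
    return weights / weights.sum()

memory = np.array([[1.0, 0.0],
                   [0.0, 1.0],
                   [1.0, 1.0]])
key = np.array([1.0, 0.0])

w_soft = content_weighting(memory, key, beta=1.0)    # diffuse focus
w_sharp = content_weighting(memory, key, beta=50.0)  # nearly one-hot on row 0
```

A larger key strength concentrates the weighting on the best-matching row, while a smaller one spreads it across similar rows.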

Location-based addressing

The location-based addressing mechanism is designed to facilitate both simple iteration across the locations of the memory and random-access jumps. It does so by implementing a rotational shift of a weighting. Prior to rotation, each head emits a scalar interpolation gate [math]\displaystyle{ g_t }[/math] in the range (0, 1). The value of [math]\displaystyle{ g_t }[/math] is used to blend between the weighting [math]\displaystyle{ w_{t-1} }[/math] produced by the head at the previous time-step and the weighting [math]\displaystyle{ w_t^c }[/math] produced by the content system at the current time-step, yielding the gated weighting [math]\displaystyle{ w_t^g }[/math]:

[math]\displaystyle{ w_t^g \leftarrow g_t w_t^c + (1-g_t) w_{t-1} }[/math]
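The interpolation step is a convex combination of two weightings, so the result is again a valid weighting. A minimal sketch, assuming NumPy arrays and an illustrative function name `interpolate`:

```python
import numpy as np

def interpolate(w_content: np.ndarray, w_prev: np.ndarray, g: float) -> np.ndarray:
    """Gated weighting w_t^g = g_t * w_t^c + (1 - g_t) * w_{t-1}."""
    return g * w_content + (1.0 - g) * w_prev

w_content = np.array([1.0, 0.0, 0.0])  # content system points at location 0
w_prev = np.array([0.0, 0.0, 1.0])     # previous step pointed at location 2

w_mostly_content = interpolate(w_content, w_prev, g=0.9)
w_mostly_prev = interpolate(w_content, w_prev, g=0.1)
```

A gate close to 1 follows the content system, while a gate close to 0 ignores it and carries the previous weighting forward, which is what lets a head iterate from where it last was.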

After interpolation, each head emits a shift weighting [math]\displaystyle{ s_t }[/math] that defines a normalised distribution over the allowed integer shifts. Each element of this vector gives the degree to which the corresponding integer shift is performed. For example, if shifts of -1, 0 and 1 are allowed, a shift vector of (0, 0.3, 0.7) denotes a shift of 1 with strength 0.7 and a shift of 0 (no shift) with strength 0.3. The actual shift is performed with a circular convolution

[math]\displaystyle{ \tilde{w}_t(i) \leftarrow \sum_{j=0}^{N-1} w_t^g(j) s_t(i - j) }[/math]

where all index arithmetic is modulo [math]\displaystyle{ N }[/math]. This circular convolution can lead to blurring of the weights, so [math]\displaystyle{ \tilde{w}_t }[/math] is sharpened with

[math]\displaystyle{ w_t(i) \leftarrow \frac{\tilde{w}_t(i)^{\gamma_t}}{\sum_j \tilde{w}_t(j)^{\gamma_t}} }[/math]

where [math]\displaystyle{ \gamma_t \geq 1 }[/math] is an additional scalar emitted by each head.
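The shift and sharpening steps can be sketched as below, assuming NumPy arrays. Representing the shift weighting as a dictionary from integer offset to strength is a choice made for this example only. The loops mirror the circular-convolution formula directly rather than aiming for speed.

```python
import numpy as np

def shift(w_gated: np.ndarray, shift_weights: dict) -> np.ndarray:
    """Circular convolution: w~_t(i) = sum_j w_t^g(j) s_t(i - j), indices mod N."""
    n = len(w_gated)
    s = np.zeros(n)
    for offset, strength in shift_weights.items():
        s[offset % n] += strength  # place each allowed shift at its index mod N
    shifted = np.zeros(n)
    for i in range(n):
        for j in range(n):
            shifted[i] += w_gated[j] * s[(i - j) % n]
    return shifted

def sharpen(w: np.ndarray, gamma: float) -> np.ndarray:
    """w_t(i) = w~_t(i)^gamma / sum_j w~_t(j)^gamma, with gamma >= 1."""
    powered = w ** gamma
    return powered / powered.sum()

w_gated = np.array([0.0, 1.0, 0.0, 0.0, 0.0])  # focused on location 1
shift_weights = {-1: 0.0, 0: 0.3, 1: 0.7}      # the example from the text

w_shifted = shift(w_gated, shift_weights)      # mass split over locations 1 and 2
w_final = sharpen(w_shifted, gamma=2.0)        # focus pulled towards location 2
```

With the shift vector from the text, a weighting focused on location 1 is spread over locations 1 and 2, and sharpening then concentrates more of the mass on location 2.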

Controller

The controller receives the external input and read head output and produces the addressing vectors and related values (for example shift weighting) for the read and write heads. It also produces an external output.

Different types of controllers can be used. The paper discusses feed-forward and LSTM controllers. Feed-forward controllers are simpler but more limited than LSTM controllers, since the type of operations they can perform is limited by the number of concurrent read and write heads. The LSTM controller, given its internal register-like memory, does not suffer from this limitation.

Experiments

The authors wanted to see, for example, whether a network trained to copy sequences of length up to 20 could copy a sequence of length 100 with no further training. For all of the experiments, three architectures were compared: NTM with a feedforward controller, NTM with an LSTM controller, and a standard LSTM network. All the tasks were supervised learning problems with binary targets; all networks had logistic sigmoid output layers and were trained with the cross-entropy objective function. Sequence prediction errors are reported in bits-per-sequence.
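One way to read the bits-per-sequence metric is as the binary cross-entropy, measured in base 2 and summed over every bit of a target sequence. The sketch below follows that reading, which is an assumption about the exact computation and not a reproduction of the authors' code. The function name and the clipping constant `eps` are illustrative.

```python
import numpy as np

def bits_per_sequence(targets: np.ndarray, predictions: np.ndarray,
                      eps: float = 1e-12) -> float:
    """Sum of per-bit binary cross-entropy in base 2 over one sequence."""
    p = np.clip(predictions, eps, 1.0 - eps)  # assumed guard against log(0)
    per_bit = -(targets * np.log2(p) + (1.0 - targets) * np.log2(1.0 - p))
    return float(per_bit.sum())

# A sequence of 3 binary vectors with 8 bits each (illustrative values).
targets = np.array([[1, 0, 1, 1, 0, 0, 1, 0],
                    [0, 0, 1, 0, 1, 1, 0, 1],
                    [1, 1, 0, 0, 0, 1, 1, 0]], dtype=float)

uninformed = np.full(targets.shape, 0.5)  # sigmoid outputs that carry no information
cost_uninformed = bits_per_sequence(targets, uninformed)
cost_perfect = bits_per_sequence(targets, targets)
```

Under this reading, a network that outputs 0.5 everywhere pays exactly one bit per target bit, and a network that predicts every bit correctly with full confidence pays essentially nothing.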

Results

The authors tested the NTM with a feed-forward and an LSTM controller against a pure LSTM on multiple tasks:

- Copy Task: An input sequence has to be reproduced.

- Repeat Copy Task: An input sequence has to be reproduced multiple times.

- Associative Recall: After providing an input sequence the network is queried with one item of the sequence and has to produce the next.

- Dynamic N-Grams: Predict the probability of the next bit being 0 or 1 given the last five bits, where the sequence is drawn from a 6-gram distribution.

- Priority Sort: Sort an input sequence according to given priorities.

In all tasks, the NTM with either a feedforward or an LSTM controller converges faster and generalizes better than a pure LSTM network.

Discussion

- While the experimental results show great promise for the NTM architecture, the paper would benefit from a more in-depth discussion of why the NTM, with a feedforward or an LSTM controller, performs so well compared to a pure LSTM.

- The difference in convergence between an NTM with a feedforward controller and one with an LSTM controller appears to hinge on whether the task is better served by the LSTM's internal memory or by the NTM's external memory as a way to store data. Otherwise, the two controllers perform comparably.

- It is unclear how much effort went into tuning the LSTM baseline. The paper gives the impression that the authors spent a lot of time tuning the NTM, varying the number of heads and the controller size, in order to achieve the desired results for publication.

- It would be interesting to know quantitatively how the NTM compares against other approaches, such as using Genetic Programming to evolve Turing machines <ref>Naidoo, Amashini, and Nelishia Pillay. "Using genetic programming for turing machine induction." Genetic Programming. Springer Berlin Heidelberg, 2008. 350-361.</ref>, whose output is a "Program". Such a program should in theory be better because it does not use weights, should be more robust, and should require far fewer parameters.

References

<references/>