STAT946F17/ Learning Important Features Through Propagating Activation Differences

This is a summary of ICML 2017 paper [1].

Introduction

Deep neural networks are, by nature, [black boxes] which serves as a barrier for adoption in applications where the interpretability of a model is essential. It also presents difficulties for analyzing and improving the structure of the model. In this paper, a novel method called Deep Linear Importance Feature Tracker (DeepLIFT) is presented to decompose the output of a neural network on a specific input by backpropagating the contributions of all neurons in the network to every feature of the input. DeepLIFT compares the activation of each neuron to its ‘reference activation’ and assigns contribution scores according to the difference. This is a form of sensitivity analysis and it helps to better understand the model.

Sensitivity Analysis

Sensitivity Analysis is a concept in risk management and actuarial science. According to [Investopedia], a sensitivity analysis is a technique used to determine how changes in an independent variable influence a particular dependent variable under given assumptions. This technique is used within specific boundaries that depend on one or more input variables, such as the effect that changes in interest rates have on bond prices.

In our topic, we have a well-trained deep neuron network with two high-dimensional input vectors $x_0, x_1$ and output $y_0=f(x_0), y_1=f(x_1)$. Now we know $x_1$ is a perturbation of $x_0$ and we want to know which element in $x_1 - x_0$ contributes the most to $y_1 - y_0$.

As one can imagine, if $\left| x_1 - x_0 \right|$ is small, the most "crude" approximation is to calculate

$\left . \frac{\partial y}{\partial x} \right|_{x = x_0} $

and get its largest element in terms of absolute value. This is well feasible because back-propagation enables us to calculate the differentials layer by layer. However, this method doesn't always work well.

Failures of traditional methods

Failures of traditional (derivative-based) methods fall into several categories:

First, derivatives are local and not quite useful for comparative analysis. Since derivatives are local, if the input to compare is far from reference input, it may not be appropriate to assign contribution based on derivatives. An example can be image recognition, where a change in a single pixel makes no sense. In some cases where values in an input are discrete (not continuous), derivatives are not available.

Second, using derivatives may mislead the analysis when derivatives have completely different behaviour in different parts of the output function. For example, on ReLU and (hard) max layers, taking derivative may lead to the conclusion that changes in all values make no difference as long as they don't touch the boundary. This is true for most activation functions when the model is saturated.

Perturbation-based methods also suffer from the problem of model saturation. In addition to this, they tend to be computationally inefficient, since we need to compute all possible perturbations and each perturbation involves a feed-forward pass through the network.

DeepLIFT Philosophy

DeepLIFT seeks to explain a difference in output, from some reference output, based on the differences of the input. Concisely, what varied in the input which caused the observed variance of the input? Definitionally, this requires a reference input-output, which is meant to serve as the "default." A mathematical formulation is derived such that the differences in an output neuron can be decomposed into the contributions of the previous neurons. In essence, DeepLIFT compares the activation of each neuron within the network to its 'reference activation' and thereafter assigns a "contribution score" based on the computed difference between the neurons. In order for DeepLIFT to provide useful information, a relevant reference must be selected. This selection, practically speaking, should be made by a domain expert; it is the answer to the question "What are we interested in measuring differences against?" It also may be necessary, in practice, to try several references in order to achieve desired results (for instance, using the CIFAR-10 dataset, an all 0 (black image) reference highlighted "hard-to-interpret" pixels in the background, while a blurred version of an original image outlined key objects).

DeepLIFT scheme

Let y represent some target output neuron of interest and let $x_1, x_2, \dots, x_n$ represent some neurons in some intermediate layer or set of layers that are necessary and sufficient to compute y. $C_{\Delta x_i \Delta y}$ is defined as contribution factor and can be thought of as the amount of difference-from-reference in y that is attributed to or blamed on the difference-from-reference of $x_i$.

For a given input neuron x with difference-from-reference ∆x, and target neuron y with difference-from-reference ∆y that we wish to compute the contribution to, we define the multiplier $m_{\Delta x \Delta y}$ as: $$ m_{\Delta x \Delta y} = \frac{C_{\Delta x \Delta y}}{\Delta x} $$

DeepLIFT assigns contribution scores $C_{ \Delta x_i \Delta y} = m_{ \Delta x_i \Delta y} \Delta x_i$ to each element $x_i$ so that sum of contribution scores satisfies $\sum_{i=1}^N C_{ \Delta x_i \Delta y} = \Delta y$.

Chain Rule

First, by the appendix of [1] we know the DeepLIFT multiplier $m$ behaves just like derivatives and satisfies the chain rule: If $z = z\left( y(x_1,...,x_N) \right)$ then

$m_{\Delta z / \Delta x_i} = m_{\Delta z / \Delta y} m_{\Delta y / \Delta x_i} $

Linear Rule

Second, let's consider a simple neuron $y = f(s)$ where $s = \sum_{i=1}^N w_i x_i$. We separate the positive and negative contribution of each $x_i$ and assign $m_{\Delta s / \Delta x_i}$ by the following rule, named as linear rule. The positive contribution of $x_i$ to $s$ is defined as

$ \begin{align} \Delta s^{+} & = \sum_{i=1}^N 1_{\left\{ w_i \Delta x_i > 0 \right\}} w_i \Delta x_i \\ & = \sum_{i=1}^N \left( 1_{\left\{ w_i > 0 \right\}} w_i \Delta x_{i}^{+} + 1_{\left\{ w_i < 0 \right\}} w_i \Delta x_{i}^{-} \right) \\ \end{align} $

We then assign the secant as $ m_{\Delta s^{+} / \Delta x_{i}^{+} } = 1_{\left\{ w_i > 0 \right\}} w_i $ and $ m_{\Delta s^{+} / \Delta x_{i}^{-} } = 1_{\left\{ w_i < 0 \right\}} w_i $. Similarly, we have $ m_{\Delta s^{-} / \Delta x_{i}^{+} } = 1_{\left\{ w_i < 0 \right\}} w_i $ and $ m_{\Delta s^{-} / \Delta x_{i}^{-} } = 1_{\left\{ w_i > 0 \right\}} w_i $. As to the occasion when $\Delta x_{i} = 0$, we let $ m_{\Delta s^{\pm} / \Delta x_{i}^{\pm} } = \frac{1}{2} w_i $.

Rescale Rule

For the function $f(s)$, it is possible to use the easiest method called rescale rule:

$ \Delta y = \frac{\Delta y}{\Delta s} \Delta s = \frac{\Delta y}{\Delta s} \left( \Delta s^{+} + \Delta s^{-} \right) $, where $\Delta y^{+} = \frac{\Delta y}{\Delta x} \Delta x^{+}=C_{\Delta x^{+}\Delta y^{+}}$, and $\Delta y^{-} = \frac{\Delta y}{\Delta x} \Delta x^{-}=C_{\Delta x^{-}\Delta y^{-}}$

Reveal-Cancel Rule

Rescale rule works for simple functions such as ReLU, but it does not always work well especially for some cases like pooling layers. To solve this we introduce reveal-cancel rule:

Suppose our reference input is $x_0$ and $s_0 = \sum_{i=1}^N w_i x_{0,i}$ is the sum in $y_0 = f(s_0)$. We define:

$ \Delta y^{+} = \frac{1}{2} \left[ f(s_0 + \Delta s^{+}) - f(s_0) \right] + \frac{1}{2} \left[ f(s_0 + \Delta s^{+} + \Delta s^{-}) - f(s_0 + \Delta s^{-}) \right] $

$ \Delta y^{-} = \frac{1}{2} \left[ f(s_0 + \Delta s^{-}) - f(s_0) \right] + \frac{1}{2} \left[ f(s_0 + \Delta s^{+} + \Delta s^{-}) - f(s_0 + \Delta s^{+}) \right] $

$ m_{\Delta y / \Delta s^{+} } = \Delta y^{+} / \Delta s^{+} , m_{\Delta y / \Delta s^{-} } = \Delta y^{-} / \Delta s^{-} $

By considering the impact of the positive terms in the absence of negative terms, and the impact of negative terms in the absence of positive terms, we alleviate some of the issues that arise from positive and negative terms canceling each other out.

Adjustments for softmax layers

Since softmax layer normalizes its input, we can let the contribution of softmax layer $y=softmax(z)$ be that of its preceding layer $z = z(x)$ minus the average contribution of that preceding layer:

$ C^{\prime}_{\Delta z_i / \Delta x} = C_{\Delta z_i / \Delta x} - \frac{1}{n} \sum_{j=1}^{N} C_{\Delta z_j / \Delta x} $

Given the rules above, it is easy to calculate $ m_{\Delta y / \Delta x_i^{\pm} } $ for each $x_i$, and thus to calculate the DeepLIFT multiplier $m$ and contribution $C$ of a certain input and output compared to a given reference input $x_0$. It is suggested by the author that reference input should be case-specific and no general rule for choosing $x_0$ is currently available.

Numerical results

The authors performed two main experiments to test whether the suggestions in DeepLIFT method are based on correct "understanding" of what the model is doing.

MNIST handwriting re-morphing

Suppose we have a well-trained MNIST handwriting recognition model which can identify 0-9 correctly. For experimental purposes, the authors train a convolutional neural network that obtains 99.2% test set accuracy. The model consists of two convolutional layers, followed by a fully connected layer, followed by the softtmax output layer. Strides greater than 1 are used as a substitute for pooling layers. It is noted how this did not lead to a drop in performance. Next we have a hand-written 8, and we want to know "how can we erase a part of this handwritten 8 to change it, say, to 3?"

In this test, we take our reference input as all-zeros (black image) as this is the background of the images. We subtract contribution scores of pixels of class 8 to that of class 3, by finding $S_{x_{i}diff} = S_{x_{i}co} − S_{x_{i}ct}$ (where $S_{x_{i}c}$ is the score for pixel $x_i$ and class c) and erasing up to 157 pixels (20% of the image) ranked in descending order of $S_{x_{i}diff}$ for which $S_{x_{i}diff} > 0$. We then evaluate the change in the log-odds score between classes $c_o$ and $c_t$ for the original image and the image with the pixels erased.

It appears that DeepLIFT can identify the left side of 8 as to be erased, which outperforms the other backpropagation-based methods.

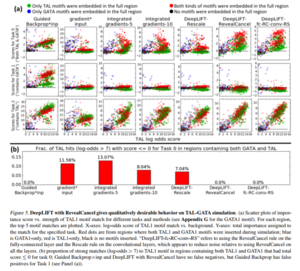

DNA motif detection

Pattern discovery in DNA sequences is one of the most challenging problems in molecular biology and computer science [4]. A DNA motif is defined as a nucleic acid sequence pattern that has some biological significance such as being DNA binding sites for a regulatory protein.

Suppose we have a long sequence of DNA $\left\{ x_n \right\}$, and we have a neuron network detecting whether the sequence contains GATA motif (a short DNA sub-sequence) or TAL motif. The training dataset is a mixture of randomly generated DNA sequences and real-world DNA sequences equipped with GATA and TAL, and the network is well-trained. We know the behavior of the neuron network:

- The network adhere tag (0,0) for these sequences which are believed to be randomly generated.

- The network adhere tag (1,0) for these sequences which are believed to be real-world and contains GATA but not TAL, and (0,1) for these sequences which contains TAL but not GATA.

- The network adhere tag (1,1) for these sequences which are believed to be real-world and contains both GATA and TAL.

The question is: "What makes sequences with tag (1,0) different from these with tag (0,0)?" The answer should be "Whether it contains GATA sequence". Now we sample a sequence $\left\{ a_n \right\}$ with tag (1,0) and another one randomly generated with tag (0,0), $\left\{ b_n \right\}$, as reference, and assign contribution scores to the sequence by different schemes. We expect a good analyzing scheme to show us the answer above by highlighting the GATA motif in $\left\{ a_n \right\}$. The authors of [1] show that DeepLIFT performs much better than other schemes in highlighting the requested motif.

Conclusions

- DeepLIFT is an efficient backpropagation-based approach for defining importance scores that leverages differences from a "reference" activation.

- Using the difference-from-reference allows information to propagate even when the gradient is zero, which could prove especially useful in Neural Networks where saturating activations are popular such as RNNs.

- By allowing separate treatment of positive and negative contributions, the DeepLIFT can identify dependencies missed by other methods

- DeepLIFT avoids placing potentially misleading importance on bias terms

- Paper has suggested way to define a "reference" activations which has yielded good results empirically, however there is more opportunities to experiment with it.

Critique

It is worth raising the following point: the authors of the paper seem to have reduced sensitivity analysis with respect to inputs to a problem of learning what is basically a discrete version of the manifold learned by hidden units. Moreover, the rules for DeepLIFT seem to correspond essentially to rules for moving across this discrete manifold using discrete steps. Given that models like variational autoencoders already learn a smooth manifold for datasets like MNIST and even provide smooth transition heuristics on the manifold, we should ask whether DeepLIFT is simply doing a lot of work to suss out a discrete approximation of something that can already be approximated pretty well in a more natural, i.e. smooth, setting. This criticism carries some force when we consider the example given in the paper of how one would turn an 8 into a 3. The idea followed in the paper is to learn which input pixels of the image are responsible for the left loops of the 8 and then to excise half-loops to get a 3. However, the manifold learned by something like a variational autoencoder not only allows us to achieve the same thing but it does so in a smooth manner whereby we can even get all the intermediate steps involved in turning an 8 into a 3.

References

[1] Shrikumar, A., Greenside, P., and Kundaje, A. Learning Important Features Through Propagating Activation Differences. arXiv:1704.02685

[2] Video tutorial to DeepLIFT: http://goo.gl/qKb7pL

[3] Implementation for DeepLIFT: https://github.com/kundajelab/deeplift