Improving neural networks by preventing co-adaptation of feature detectors

Revision as of 01:12, 28 November 2020

Presented by

Kyle Jung, Dae Hyun Kim, Seokho Lim, Stan Lee

Introduction to Dropout + Dataset

MNIST

The MNIST dataset contains 70,000 digit images of size 28 x 28. To see the impact of dropout, the authors used 4 different neural networks (784-800-800-10, 784-1200-1200-10, 784-2000-2000-10, 784-1200-1200-1200-10), all with the same dropout rates: 50% for hidden neurons and 20% for visible neurons. Stochastic gradient descent was used with minibatches of size 100 and a cross-entropy loss function. Weights were updated after each minibatch, and training ran for 3000 epochs. An exponentially decaying learning rate (epsilon) was used: its initial value was 10.0, and it was multiplied by 0.998 at the end of each epoch. At each hidden layer, the length of the incoming weight vector of each hidden neuron was constrained by an upper bound, l, and cross validation showed that the results were best when l = 15. Initial weight values were drawn from a normal distribution with mean 0 and standard deviation 0.01. To accelerate learning, the weight update used an additional variable, p, called momentum. The initial value of p was 0.5, and it increased linearly to the final value 0.99 during the first 500 epochs, remaining unchanged afterwards. When updating weights, the learning rate was also multiplied by 1 − p. L denotes the loss function whose gradient is used in the update.
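The training schedule described above can be sketched in a few lines of Python. This is only an illustrative sketch: the function names are hypothetical, the update is assumed to take the form delta = p * delta_prev − (1 − p) * epsilon * gradient, and the length constraint is assumed to be enforced by rescaling a weight vector whenever its length exceeds l.

```python
import math

EPS0, DECAY = 10.0, 0.998               # initial learning rate, per-epoch factor
P_START, P_END, RAMP = 0.5, 0.99, 500   # momentum ramps linearly over 500 epochs
MAX_NORM = 15.0                         # upper bound l on the weight vector length


def learning_rate(epoch):
    """Exponentially decaying learning rate epsilon."""
    return EPS0 * DECAY ** epoch


def momentum(epoch):
    """Momentum p: linear ramp from 0.5 to 0.99, then constant."""
    if epoch >= RAMP:
        return P_END
    return P_START + (P_END - P_START) * epoch / RAMP


def clip_norm(w, max_norm=MAX_NORM):
    """Rescale w if its length exceeds max_norm (assumed way to enforce l)."""
    norm = math.sqrt(sum(x * x for x in w))
    if norm <= max_norm:
        return list(w)
    return [x * max_norm / norm for x in w]


def update(w, delta_prev, grad, epoch):
    """One momentum step for the incoming weight vector of a single neuron."""
    p, eps = momentum(epoch), learning_rate(epoch)
    # The learning rate is multiplied by (1 - p), as stated in the text.
    delta = [p * d - (1.0 - p) * eps * g for d, g in zip(delta_prev, grad)]
    w_new = clip_norm([x + d for x, d in zip(w, delta)])
    return w_new, delta
```

Here `grad` stands for the minibatch-averaged gradient of the loss with respect to the weights; how it is computed is outside the scope of the sketch.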

TIMIT

TIMIT consists of recordings of 630 speakers of 8 dialects of American English, each reading 10 phonetically-rich sentences, and is a standard dataset for evaluating automatic speech recognition systems. The objective is to convert a given speech signal into a transcription sequence of phones. Speech recognition systems typically use Hidden Markov Models (HMMs) to deal with temporal variability, together with an acoustic model that determines how well a frame of coefficients extracted from the acoustic input fits each HMM state. Recent results show that feedforward neural networks that map a short sequence of acoustic frames to a probability distribution over HMM states perform better than traditional Gaussian mixture models on speech recognition datasets including TIMIT.

A neural network was trained to classify frames, and its error rate was measured on the test set of the TIMIT dataset. The network had four fully-connected hidden layers with 4000 neurons per layer. The output layer had 185 softmax output neurons, which were merged into 39 distinct classes. The input was a window of 21 adjacent frames with an advance of 10 ms per frame. The class probabilities that the network outputs for each frame are passed to a decoder, which knows the transition probabilities between HMM states and runs the Viterbi algorithm to predict the single best sequence of HMM states. Across various network architectures, applying 50% dropout to the hidden units gave better classification performance than training without dropout. The error rate was 19.7% with dropout and 22.7% without dropout.
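The decoding step can be illustrated with a generic Viterbi implementation. This is a minimal sketch, not the decoder used in the paper: the function name and argument layout are hypothetical, and the per-frame scores are simply assumed to be log values supplied by the network.

```python
def viterbi(frame_log_scores, log_trans, log_init):
    """Return the single best state sequence.

    frame_log_scores[t][s]: log score of state s at frame t
    log_trans[i][j]:        log transition probability from state i to j
    log_init[s]:            log probability of starting in state s
    """
    n_states = len(log_init)
    score = [log_init[s] + frame_log_scores[0][s] for s in range(n_states)]
    back = []  # back[t][j] = best predecessor of state j at frame t + 1
    for obs in frame_log_scores[1:]:
        prev = score
        pointers, score = [], []
        for j in range(n_states):
            best_i = max(range(n_states),
                         key=lambda i: prev[i] + log_trans[i][j])
            pointers.append(best_i)
            score.append(prev[best_i] + log_trans[best_i][j] + obs[j])
        back.append(pointers)
    # Trace back from the best final state.
    state = max(range(n_states), key=lambda s: score[s])
    path = [state]
    for pointers in reversed(back):
        state = pointers[state]
        path.append(state)
    return path[::-1]
```

With "sticky" transitions the decoder smooths over a single frame whose score weakly favours another state, which is the benefit of decoding a whole sequence rather than taking the best class per frame.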

Pre-training

A Deep Belief Network was used to pretrain the neural network. Since the inputs are real-valued, a Gaussian RBM was used to pretrain the first layer. Visible biases were initialized to zero, and weights were sampled from the normal distribution N(0, 0.01). The variance of each visible neuron was set to 1.0 and remained unchanged during training. Learning was done by minimizing Contrastive Divergence (CD). Momentum was used to speed up learning; it was initially set to 0.5 and increased linearly to 0.9 over 20 epochs. A learning rate of 0.001 was applied to the average gradient and then multiplied by (1 − momentum), and the L2 weight decay was set to 0.001. With these hyperparameters, the model was trained for 100 epochs. Binary RBMs with a learning rate of 0.01 were used to train all subsequent layers. For these, the visible bias of each neuron was initialized to log(p/(1 − p)), where p is the mean activation of that neuron in the data set. Each layer was trained for 50 epochs, and all remaining hyper-parameters were the same as those for the Gaussian RBM.
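The bias initialization log(p/(1 − p)) is the inverse of the logistic function, so a neuron with no other input starts out with exactly its mean activation p. A small sketch follows; the function names are hypothetical, and the clamping of p away from 0 and 1 is an added assumption to keep the logarithm finite.

```python
import math


def init_visible_bias(mean_activation, eps=1e-6):
    """Bias b = log(p / (1 - p)) for a neuron with mean activation p."""
    # Clamping is an assumption of this sketch, not something the text states.
    p = min(max(mean_activation, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))


def sigmoid(x):
    """Logistic function, the activation of a binary unit."""
    return 1.0 / (1.0 + math.exp(-x))
```

For example, a neuron that is active in 20% of the training cases gets a negative bias whose sigmoid is 0.2.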

Dropout tuning

The network was initialized with the weights of the pretrained RBMs. To finetune it with dropout-backpropagation, momentum was initially set to 0.5 and increased linearly to 0.9 over 10 epochs. A small constant learning rate of 1.0 was applied to the average gradient on a minibatch. All other hyperparameters were the same as for MNIST dropout finetuning. The model required approximately 200 epochs to converge. For comparison, they also finetuned the same network with standard backpropagation, using a learning rate of 0.1 and otherwise the same hyperparameters.
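For readers unfamiliar with the dropout step itself, the following sketch shows the two pieces involved: randomly omitting units during training, and scaling the outgoing weights by the keep probability at test time (the "mean network"). The function names and the list-of-lists weight layout are assumptions made for illustration.

```python
import random


def dropout_train(activations, drop_prob=0.5, rng=random):
    """Training time: omit each unit independently with probability drop_prob."""
    return [0.0 if rng.random() < drop_prob else a for a in activations]


def mean_network_weights(weights, drop_prob=0.5):
    """Test time: keep all units and scale their outgoing weights instead."""
    keep = 1.0 - drop_prob
    return [[w * keep for w in row] for row in weights]
```

With 50% dropout the test-time scaling simply halves the outgoing weights, which compensates for twice as many units being active as during training.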

When compared with standard backpropagation on several network architectures and input representations, dropout consistently achieved lower error and cross-entropy. The results showed that it significantly controls overfitting, making the method robust to choices of network architecture. It also allowed much larger nets to be trained and removed the need for early stopping. Neural network architectures with dropout were not very sensitive to the choice of learning rate and momentum.

Reuters

CNN

Feed-forward neural networks consist of several layers of neurons, where each neuron in a layer applies a linear filter to the outputs of the neurons in the layer below. Typically, a scalar bias is added to the filter output and a nonlinear activation function is applied to the result before it is passed on to the neurons in the next layer. The filters and biases, collectively called weights, are the parameters of the network that are learned from the training data.

Convolutional neural networks (CNNs) differ from ordinary neural networks in several ways. First, the neurons in a CNN are organized topographically into a bank laid out on a 2D grid, which reflects the organization of the dimensions of the input data. Second, neurons in a CNN apply filters that are local and centered at the neuron’s location in the topographic organization. This is reasonable because useful clues to the identity of an object in an input image can be found by examining local neighborhoods of the image. Third, all neurons in a bank apply the same filter at different locations in the input image. In the example image, green is the input to one neuron bank, yellow is the filter bank, and pink is the output of one neuron bank (the convolved feature). A bank of neurons therefore applies a convolution operation to its input, and a single layer in a CNN typically has multiple banks of neurons, each performing a convolution with a different filter. The resulting neuron banks become distinct input channels into the next layer. Sharing one filter across all locations reduces the net’s representational capacity, but it also reduces its capacity to overfit.
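The idea that every neuron in a bank applies the same local filter at a different location can be sketched as follows. This is a simplified single-channel illustration with hypothetical function names; it uses no padding, a stride of one, and assumes a max-with-zero activation.

```python
def apply_filter(image, kernel, bias=0.0):
    """One neuron bank: slide a single shared filter over a 2D input."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            total = bias
            # Same weights at every (y, x): this is the weight sharing.
            for dy in range(kh):
                for dx in range(kw):
                    total += image[y + dy][x + dx] * kernel[dy][dx]
            row.append(max(0.0, total))  # assumed max-with-zero nonlinearity
        out.append(row)
    return out


def conv_layer(image, kernels):
    """A layer with several banks, one output channel per filter."""
    return [apply_filter(image, k) for k in kernels]
```

A bank with a 2 x 2 filter has only four weights and one bias regardless of the image size, which is why the shared filter limits both capacity and overfitting.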

Pooling

A pooling layer summarizes the activities of local patches of neurons in a convolutional layer by subsampling its output. Pooling is useful for extracting dominant features and, through dimensionality reduction, for decreasing the computational power required to process the data. The procedure is as follows: pooling units are laid out topographically, and each is connected to a local neighborhood of outputs from the same convolutional bank. Each pooling unit then computes some function of its inputs, typically the maximum or the average. Maximum pooling returns the maximum value from the section of the image covered by the pooling unit, while average pooling returns the average of all the values inside the pooling unit (see example). As a result, there are fewer total pooling units than convolutional unit outputs from the previous layer, because the pooling units are spaced more than one pixel apart. Using the max-pooling function reduces the effect of outliers and improves generalization.
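Maximum and average pooling can be compared with a short sketch. The function name, the square pooling window, and the `mode` argument are choices made for this illustration only.

```python
def pool(feature_map, size, stride, mode="max"):
    """Summarize size x size neighborhoods placed `stride` pixels apart."""
    if mode == "max":
        summarize = max
    elif mode == "avg":
        summarize = lambda vals: sum(vals) / len(vals)
    else:
        raise ValueError("mode must be 'max' or 'avg'")
    out = []
    for y in range(0, len(feature_map) - size + 1, stride):
        row = []
        for x in range(0, len(feature_map[0]) - size + 1, stride):
            patch = [feature_map[y + dy][x + dx]
                     for dy in range(size) for dx in range(size)]
            row.append(summarize(patch))
        out.append(row)
    return out
```

With a stride of 2, a 4 x 4 feature map is reduced to 2 x 2 pooling units, which illustrates why a pooling layer has fewer units than the convolutional layer below it.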

Local Response Normalization

This network includes local response normalization layers, which are implemented in lateral form and used on neurons with unbounded activations. They permit the detection of high-frequency features with a big neuron response. This regularizer encourages competition among neurons belonging to different banks. Normalization divides the activity of a neuron in bank i at position (x, y) by a term whose sum runs over N ‘adjacent’ banks of neurons at the same position in the topographic organization of the neuron banks. The constants N, alpha and beta are hyper-parameters whose values are determined using a validation set. This technique has since largely been superseded by other approaches, such as the combination of dropout with regularization methods (L1 and L2).
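The normalization can be sketched under an assumed form of the divisor, (1 + alpha * sum of squared activities over the N adjacent banks) raised to the power beta. That form, the function name, and the clipping of the window at the first and last bank are assumptions of this sketch; the default values of N, alpha and beta are the ones quoted for the CIFAR-10 model elsewhere in this summary.

```python
def local_response_norm(activities, n=9, alpha=0.001, beta=0.75):
    """Normalize across banks at one fixed position (x, y).

    activities[i] is the activity of the neuron in bank i at that position.
    """
    num_banks = len(activities)
    half = n // 2
    out = []
    for i, a in enumerate(activities):
        # Window of adjacent banks centred on bank i, clipped at the ends.
        lo, hi = max(0, i - half), min(num_banks - 1, i + half)
        energy = sum(activities[j] ** 2 for j in range(lo, hi + 1))
        out.append(a / (1.0 + alpha * energy) ** beta)
    return out
```

A neuron surrounded by strongly active neighbours in adjacent banks is scaled down more than one whose neighbours are quiet, which is the competition effect mentioned above.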

Neuron nonlinearities

All of the neurons in this model use the max-with-zero nonlinearity, where the output of a neuron is computed as [math]\displaystyle{ a = \max(0, z) }[/math] and z is the total input to the neuron. They use this nonlinearity because it has several advantages over traditional saturating neuron models, such as a significant reduction in the training time required to reach a given error rate. Another advantage is that this nonlinearity reduces the need for contrast normalization and similar data pre-processing, since the neurons do not saturate: their activities simply scale up when presented with unusually large input values. The only pre-processing step for this model is to subtract the mean activity from each pixel, so that the data is centered.

Objective function

Their network is trained to maximize the multinomial logistic regression objective, which is equivalent to minimizing the average cross-entropy, across training cases, between the true label distribution and the model’s predicted label distribution.
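As an illustration of this objective, the sketch below computes the average cross-entropy of a softmax classifier when each training case has a single true label. The function names are hypothetical, and subtracting the maximum logit is a standard numerical-stability step rather than something described in the summary.

```python
import math


def softmax(logits):
    """Convert raw scores into a probability distribution over classes."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def average_cross_entropy(batch_logits, labels):
    """Mean of -log p(true label) over the training cases in a batch."""
    losses = [-math.log(softmax(z)[y]) for z, y in zip(batch_logits, labels)]
    return sum(losses) / len(losses)
```

Minimizing this quantity is the same as maximizing the average log-probability that the model assigns to the correct labels.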

Weight Initialization

It’s important to note that if a neuron always receives a negative total input during training, it will not learn, because its output is uniformly zero under the max-with-zero nonlinearity. Hence, the weights in their model were sampled from a zero-mean normal distribution with a high enough variance. A high variance in the weights ensures that a certain number of neurons receive positive inputs so that learning can happen. In practice, it is necessary to try out several candidate variances until a working initialization is found. In their experiments, setting the biases of the neurons in the hidden layers to a positive constant such as 1 helped in finding one.

Training

The model is trained using stochastic gradient descent with a batch size of 128 samples and a momentum of 0.9. The update rule for weight w is [math]\displaystyle{ v_{i+1} = 0.9\,v_i + \epsilon \left\langle \frac{\partial E}{\partial w_i} \right\rangle_i, \quad w_{i+1} = w_i + v_{i+1} }[/math], where i is the iteration index, v is a momentum variable, [math]\displaystyle{ \epsilon }[/math] is the learning rate and [math]\displaystyle{ \left\langle \frac{\partial E}{\partial w_i} \right\rangle_i }[/math] is the average over the ith batch of the derivative of the objective with respect to w_i. The whole training process takes roughly 90 minutes on CIFAR-10; on ImageNet it takes four days with dropout and two days without.
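A momentum update of the kind described above can be sketched for a single scalar weight. The exact form is an assumption of this sketch: the velocity accumulates the learning rate times the batch-averaged gradient, and the weight moves along the gradient because the objective is being maximized. The function name is hypothetical.

```python
def momentum_step(w, v, grad, lr, momentum=0.9):
    """One update: returns the new weight and the new momentum variable.

    grad is the batch-averaged derivative of the objective with respect to w.
    The plus sign assumes the objective is maximized (gradient ascent).
    """
    v_next = momentum * v + lr * grad
    return w + v_next, v_next
```

Iterating this step on a simple concave objective such as E(w) = −(w − 3)^2, whose derivative is −2(w − 3), moves w towards the maximizer at 3.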

Learning

To determine the learning rate for the network, they start with an equal learning rate for each layer, chosen as the largest power of ten that still produces a reduction in the objective function. Usually, it is on the order of 10^-2 or 10^-3. They then reduce the learning rate twice by a factor of ten before training terminates.

CIFAR-10

Models for CIFAR-10:

CIFAR-10 is a popular object recognition dataset of 32 x 32 color images collected by searching the web. It contains 10 classes, and each image was labelled with the noun used to search for it. For each of the 10 classes it has 5,000 training images and 1,000 test images, each showing a single dominant object of the labelled class.

They implemented two different models for CIFAR-10, one with dropout and the other without. Because dropout imposes strong regularization on the network, the model with dropout can use more parameters: a fourth weight layer is added that takes its input from the preceding pooling layer. This fourth weight layer is locally connected but not convolutional, contains 16 banks of filters of size 3 × 3, and uses 50% dropout. The softmax layer then takes its input from this fourth weight layer.

The model without dropout is a CNN with three convolutional layers, each followed by a pooling layer. The pooling layer that follows the first convolutional layer performs max-pooling, and the remaining two pooling layers perform average-pooling. The first and second pooling layers are followed by response normalization layers with N = 9, α = 0.001, and β = 0.75.

A ten-unit softmax layer, which outputs a probability distribution over class labels, is connected to the upper-most pooling layer. All convolutional layers have 64 filter banks and use a filter size of 5 × 5.

Thus, a neural network with 3 convolutional hidden layers and 3 max-pooling layers achieved a classification error of 16.6%, beating the best published error rate of 18.5% obtained without using transformed data. Adding one locally-connected layer after these 6 layers, with dropout at the last hidden layer, reduced the error rate to 15.6%.

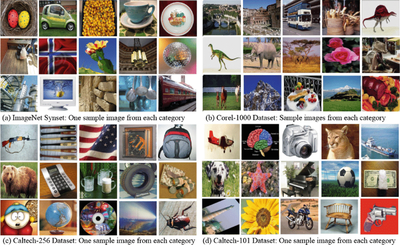

ImageNet

ImageNet is a dataset of millions of high-resolution labeled images in thousands of categories. The images were collected from the web and labelled by human labellers using Amazon’s Mechanical Turk (MTurk) crowd-sourcing tool. Perfect accuracy on this dataset is very difficult to achieve even for humans, because there are a large number of object classes and a single image can contain multiple instances of ImageNet objects. ImageNet is similar in kind to CIFAR-10, but its scale is about 20 times larger (1,300,000 vs 60,000 images). The dataset has about 1.3 million training images, 50,000 validation images, and 150,000 test images. They used images resized to 256 x 256 pixels for their experiments.

Example of ambiguous image to label:

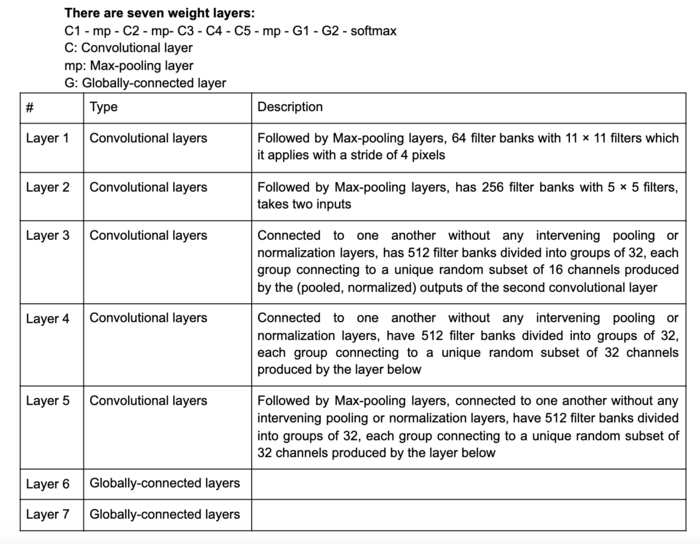

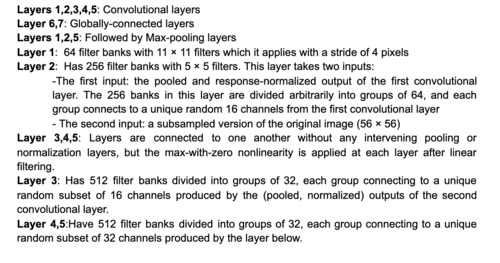

When this paper was written, the best reported error rate on this dataset was 45.7%, from High-dimensional signature compression for large-scale image classification (J. Sanchez, F. Perronnin, CVPR11 (2011)). The authors achieved a comparable error rate of 48.6% using a single neural network with five convolutional hidden layers interleaved with max-pooling layers, followed by two globally connected layers and a final 1000-way softmax layer. Using 50% dropout in the 6th hidden layer reduced the error rate to 42.4%.

ImageNet dataset:

For the speech recognition dataset (TIMIT) and the object recognition datasets (CIFAR-10 and ImageNet), designing the architecture of the net required making a large number of decisions. The authors made these decisions using a separate validation set, on which they evaluated the performance of a large number of different architectures. They then took the architecture that performed best with dropout on the validation set and applied it to the real test set.

Models for ImageNet:

The model for ImageNet with dropout is described here (the one without dropout used a similar approach, but suffered from serious overfitting). They used a convolutional neural network trained on 224 × 224 patches randomly extracted from the 256 × 256 images. This acts as a form of data augmentation that reduces the network’s capacity to overfit the training data and helps generalization. At test time, the prediction of the net was averaged over ten 224 × 224 patches of the 256 × 256 input image: the center patch, the four corner patches, and their horizontal reflections.
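The ten-patch testing scheme can be sketched as follows. The function names are hypothetical, an image is represented as a plain list of pixel rows, and the per-patch predictions are assumed to come from some classifier that is not shown.

```python
def ten_crop_boxes(image_size=256, patch_size=224):
    """Return (top, left, flipped) for the ten test patches."""
    far = image_size - patch_size   # offset of the bottom/right corner patches
    mid = far // 2                  # offset of the center patch
    positions = [(0, 0), (0, far), (far, 0), (far, far), (mid, mid)]
    return [(top, left, flip)
            for flip in (False, True)
            for top, left in positions]


def crop(image, top, left, size, flip=False):
    """Cut a size x size patch, optionally reflected horizontally."""
    rows = [row[left:left + size] for row in image[top:top + size]]
    return [row[::-1] for row in rows] if flip else rows


def average_predictions(predictions):
    """Average the class-probability vectors predicted for each patch."""
    n = len(predictions)
    num_classes = len(predictions[0])
    return [sum(p[k] for p in predictions) / n for k in range(num_classes)]
```

For a 256 × 256 image the corner patches start at offsets 0 and 32, and the center patch starts at offset 16 in both directions.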

To maximize performance on the validation set, it was necessary to use the very complicated network architecture described above. The results show that dropout helps even for very complex neural nets that have been developed by the joint efforts of many groups over many years to be really good at object recognition. Using non-convolutional higher layers with a large number of parameters leads to a big improvement with dropout, but makes things worse without dropout.