DREAM TO CONTROL: LEARNING BEHAVIORS BY LATENT IMAGINATION

Presented by

Bowen You

Introduction

Reinforcement learning is one of the three basic machine learning paradigms, alongside supervised and unsupervised learning. It refers to training an agent, often parameterized by a neural network, to make a series of decisions in a complex, evolving environment. Typically, this is accomplished by 'rewarding' or 'penalizing' the agent based on its behavior over time. For recent reviews of reinforcement learning, see [3,4]. Intelligent agents are able to accomplish tasks that they have not encountered in prior experience. One way to achieve this is to build a representation of the world from past experience. In this paper, the authors propose an agent that learns long-horizon behaviors purely by latent imagination and outperforms previous agents in terms of data efficiency, computation time, and final performance.

Preliminaries

This section defines a few key concepts in reinforcement learning. In the typical reinforcement learning problem, an agent interacts with an environment. The environment is typically defined by a model that may or may not be known to the agent, and it is characterized by its state [math]\displaystyle{ s \in \mathcal{S} }[/math]. The agent chooses actions [math]\displaystyle{ a \in \mathcal{A} }[/math] to interact with the environment. Once an action is taken, the environment returns a reward [math]\displaystyle{ r \in \mathcal{R} }[/math] as feedback.

The actions an agent decides to take are determined by a policy function [math]\displaystyle{ \pi : \mathcal{S} \to \mathcal{A} }[/math]. Additionally, we define functions [math]\displaystyle{ V_{\pi} : \mathcal{S} \to \mathbb{R} }[/math] and [math]\displaystyle{ Q_{\pi} : \mathcal{S} \times \mathcal{A} \to \mathbb{R} }[/math] to represent the value function and the action-value function of a given policy [math]\displaystyle{ \pi }[/math], respectively. Informally, [math]\displaystyle{ V_{\pi} }[/math] tells one how good a state is in terms of the expected return when starting in the state [math]\displaystyle{ s }[/math] and then following the policy [math]\displaystyle{ \pi }[/math]. Similarly, [math]\displaystyle{ Q_{\pi} }[/math] gives the expected return when starting from the state [math]\displaystyle{ s }[/math], taking the action [math]\displaystyle{ a }[/math], and subsequently following the policy [math]\displaystyle{ \pi }[/math].

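Thus the goal is to find an optimal policy [math]\displaystyle{ \pi_{*} }[/math] that attains the highest value in every state, that is, for all [math]\displaystyle{ s \in \mathcal{S} }[/math] and [math]\displaystyle{ a \in \mathcal{A} }[/math], \[ \pi_{*} = \arg\max_{\pi} V_{\pi}(s) = \arg\max_{\pi} Q_{\pi}(s, a) \]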
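To make these definitions concrete, the following minimal sketch evaluates the value and action-value functions of a fixed policy on a tiny deterministic problem, and then checks that the policy is greedy with respect to its own action values. The two-state environment, the discount factor `GAMMA`, the finite evaluation horizon, and all function names are assumptions made for illustration; none of them come from the paper.

```python
# Hypothetical two-state, two-action deterministic problem (illustration only).
GAMMA = 0.9  # assumed discount factor; the summary does not specify one

# TRANSITION[s][a] is the next state, REWARD[s][a] the immediate reward.
TRANSITION = {0: {0: 0, 1: 1}, 1: {0: 1, 1: 0}}
REWARD = {0: {0: 0.0, 1: 1.0}, 1: {0: 2.0, 1: 0.0}}


def q_value(policy, state, action, horizon=200):
    """Discounted return of taking `action` in `state`, then following `policy`.

    The infinite sum is truncated after `horizon` steps, which is accurate
    here because GAMMA ** 200 is negligible.
    """
    total, discount = 0.0, 1.0
    s, a = state, action
    for _ in range(horizon):
        total += discount * REWARD[s][a]
        s = TRANSITION[s][a]
        a = policy[s]  # from the second step on, follow the policy
        discount *= GAMMA
    return total


def state_value(policy, state, horizon=200):
    """V_pi(s) is Q_pi(s, a) with a chosen by the policy itself."""
    return q_value(policy, state, policy[state], horizon)


def greedy_policy(policy):
    """Pick, in every state, the action with the largest Q_pi(s, a)."""
    return {s: max((0, 1), key=lambda a: q_value(policy, s, a)) for s in TRANSITION}


policy = {0: 1, 1: 0}  # move from state 0 to state 1, then stay there
print(round(state_value(policy, 0), 3))  # 19.0
print(round(state_value(policy, 1), 3))  # 20.0
print(greedy_policy(policy) == policy)   # True: no single-state change improves it
```

In this toy problem the policy that moves to state 1 and stays there is unchanged by the greedy step, which is the fixed-point property an optimal policy satisfies.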

Feedback Loop

Given this framework, agents interact with the environment in a sequential fashion, producing a sequence of states, actions, and rewards. Let [math]\displaystyle{ S_t, A_t, R_t }[/math] denote the state, action, and reward obtained at time [math]\displaystyle{ t = 1, 2, \ldots, T }[/math]. We call the tuple [math]\displaystyle{ (S_t, A_t, R_t) }[/math] one step, and the full sequence up to the final time [math]\displaystyle{ T }[/math] one episode. This can be thought of as a feedback loop or a sequence \[ S_1, A_1, R_1, S_2, A_2, R_2, \ldots, S_T \]
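The feedback loop can be sketched in a few lines of Python. The corridor environment `ToyEnv`, its `reset`/`step` interface, and the helper `run_episode` are hypothetical and exist only to show how the sequence of states, actions, and rewards is produced.

```python
class ToyEnv:
    """Hypothetical 1-D corridor: start at position 0, reach `goal` to finish."""

    def __init__(self, goal=3, max_steps=10):
        self.goal = goal
        self.max_steps = max_steps
        self.pos = 0
        self.t = 0

    def reset(self):
        """Start a new episode and return the initial state."""
        self.pos = 0
        self.t = 0
        return self.pos

    def step(self, action):
        """Apply an action in {-1, +1}; return (next_state, reward, done)."""
        self.pos = max(0, self.pos + action)
        self.t += 1
        reward = 1.0 if self.pos == self.goal else 0.0
        done = self.pos == self.goal or self.t >= self.max_steps
        return self.pos, reward, done


def run_episode(env, policy):
    """Run one episode and return its (state, action, reward) steps and final state."""
    trajectory = []
    state = env.reset()
    done = False
    while not done:
        action = policy(state)                       # agent acts on the current state
        next_state, reward, done = env.step(action)  # environment responds
        trajectory.append((state, action, reward))
        state = next_state
    return trajectory, state


steps, final_state = run_episode(ToyEnv(), policy=lambda s: +1)
print(steps)        # [(0, 1, 0.0), (1, 1, 0.0), (2, 1, 1.0)]
print(final_state)  # 3
```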

Motivation

In many problems, the number of actions an agent is able to take is limited, which makes it difficult to interact with the environment enough to learn an accurate representation of the world. The method proposed in this paper aims to solve this problem by "imagining" the states and rewards that the agent's actions will produce. That is, given a state [math]\displaystyle{ S_t }[/math], the proposed method generates \[ \hat{A}_t, \hat{R}_t, \hat{S}_{t+1}, \ldots \]

By doing this, an agent is able to plan ahead and form a representation of the environment without interacting with it. Once an action is actually executed, the agent can update its representation of the world using the real observation. This is particularly useful in applications where experience is not easily obtained.
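As a rough illustration of imagination, the sketch below rolls a policy forward through a model of the environment instead of the environment itself. The function `imagine` and the hand-written `toy_transition`, `toy_reward`, and `toy_policy` are stand-ins invented for this example; in Dreamer these roles are played by learned neural networks operating on latent states.

```python
def imagine(state, policy, transition_model, reward_model, horizon):
    """Generate imagined (action, reward, next_state) steps without a real environment."""
    imagined = []
    s = state
    for _ in range(horizon):
        a = policy(s)                # imagined action
        r = reward_model(s, a)       # imagined reward
        s = transition_model(s, a)   # imagined next state
        imagined.append((a, r, s))
    return imagined


# Hypothetical hand-written "model": states are integers, the goal is state 0.
def toy_transition(s, a):
    return s + a


def toy_reward(s, a):
    return -abs(s + a)  # being closer to 0 after the move is better


def toy_policy(s):
    if s > 0:
        return -1
    if s < 0:
        return 1
    return 0


print(imagine(2, toy_policy, toy_transition, toy_reward, horizon=3))
# [(-1, -1, 1), (-1, 0, 0), (0, 0, 0)]
```

No call to a real environment appears in `imagine`, which is the point: every quantity in the rollout is a prediction.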

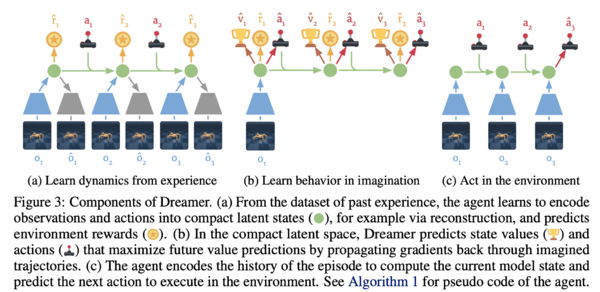

Dreamer

The authors of the paper call their method Dreamer. From a high-level perspective, Dreamer first learns latent dynamics from past experience, then learns actions and state values from imagined trajectories so as to maximize future rewards. Finally, it predicts the next action and executes it in the environment. This whole process is illustrated below.

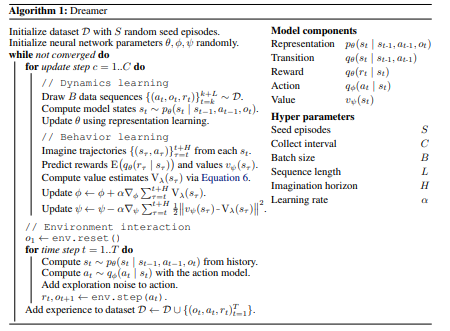

Let's look at Dreamer in detail. It consists of the following models:

- Representation [math]\displaystyle{ p_{\theta}(s_t | s_{t-1}, a_{t-1}, o_{t}) }[/math]

- Transition [math]\displaystyle{ q_{\theta}(s_t | s_{t-1}, a_{t-1}) }[/math]

- Reward [math]\displaystyle{ q_{\theta}(r_t | s_t) }[/math]

- Action [math]\displaystyle{ q_{\phi}(a_t | s_t) }[/math]

- Value [math]\displaystyle{ v_{\psi}(s_t) }[/math]

where [math]\displaystyle{ o_{t} }[/math] is the observation at time [math]\displaystyle{ t }[/math] and [math]\displaystyle{ \theta, \phi, \psi }[/math] are learned neural network parameters.
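One way to read the notation above is as a set of function signatures. The sketch below uses scalar states and fixed, hand-written formulas purely to show which inputs each component sees; the classes `Gaussian` and `ToyWorldModel`, the functions `action_model` and `value_model`, and every numerical choice are assumptions for illustration and do not reflect the learned networks used in the paper.

```python
import random
from dataclasses import dataclass


@dataclass
class Gaussian:
    """A scalar Gaussian distribution described by its mean and standard deviation."""
    mean: float
    std: float

    def sample(self, rng):
        return rng.gauss(self.mean, self.std)


class ToyWorldModel:
    """Stand-in for the three components that share the parameters theta."""

    def transition(self, prev_state, prev_action):
        # q_theta(s_t | s_{t-1}, a_{t-1}): predicts the next state WITHOUT an observation
        return Gaussian(mean=prev_state + prev_action, std=1.0)

    def representation(self, prev_state, prev_action, obs):
        # p_theta(s_t | s_{t-1}, a_{t-1}, o_t): also sees the current observation
        prior = self.transition(prev_state, prev_action)
        return Gaussian(mean=0.5 * prior.mean + 0.5 * obs, std=0.5 * prior.std)

    def reward(self, state):
        # q_theta(r_t | s_t): predicts the reward from the state alone
        return Gaussian(mean=-abs(state), std=1.0)


def action_model(state):
    # q_phi(a_t | s_t): a distribution over actions given the state
    return Gaussian(mean=-0.5 * state, std=0.1)


def value_model(state):
    # v_psi(s_t): a scalar estimate of how good the state is
    return -2.0 * abs(state)


rng = random.Random(0)
model = ToyWorldModel()
prior = model.transition(prev_state=1.0, prev_action=0.5)
posterior = model.representation(prev_state=1.0, prev_action=0.5, obs=2.5)
print(prior.mean, posterior.mean)  # 1.5 2.0
action = action_model(posterior.mean).sample(rng)  # sampled action, value depends on the seed
```

The difference between `transition` and `representation` is what allows imagination: the transition can be applied repeatedly without any observation, while the representation is used whenever a real observation is available.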

The three main components of an agent that learns in imagination are dynamics learning, behavior learning, and environment interaction. Behaviors are learned by predicting hypothetical trajectories in the compact latent space of the world model. Throughout the agent's lifetime, Dreamer performs the following operations either in parallel or interleaved, as shown in Figure 3 and Algorithm 1:

- Dynamics Learning: Using past experience data, the agent learns to encode observations and actions into latent states and predicts environment rewards. One way to do this is via representation learning.

- Behavior Learning: In the latent space, the agent learns to predict state values and actions that maximize future rewards, using back-propagation through the imagined trajectories.

- Environment Interaction: The agent encodes the episode so far to compute the current model state and predicts the next action to execute in the environment.

The proposed algorithm is described below.

Notice that three neural networks are trained simultaneously. The networks with parameters [math]\displaystyle{ \theta, \phi, \psi }[/math] correspond to the models of the environment, the actions, and the values, respectively.
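The interleaving of the three operations can be sketched as a plain control-flow skeleton. This is not the authors' Algorithm 1: the function `train`, its arguments, the single seed episode, and the counting stubs are all hypothetical, and the actual gradient updates are replaced by callbacks.

```python
def train(num_iterations, updates_per_iteration, collect_episode,
          update_dynamics, update_behavior):
    """Interleave dynamics learning, behavior learning, and environment interaction."""
    dataset = [collect_episode()]  # assumed: start from one seed episode
    for _ in range(num_iterations):
        for _ in range(updates_per_iteration):
            update_dynamics(dataset)  # dynamics learning: updates theta
            update_behavior(dataset)  # behavior learning: updates phi and psi
        dataset.append(collect_episode())  # environment interaction: new experience
    return dataset


# Counting stubs that stand in for the real learning steps.
calls = {"collect": 0, "dynamics": 0, "behavior": 0}


def collect_stub():
    calls["collect"] += 1
    return ["episode %d" % calls["collect"]]


def dynamics_stub(dataset):
    calls["dynamics"] += 1


def behavior_stub(dataset):
    calls["behavior"] += 1


dataset = train(3, 2, collect_stub, dynamics_stub, behavior_stub)
print(len(dataset))  # 4
print(calls)         # {'collect': 4, 'dynamics': 6, 'behavior': 6}
```

The skeleton makes one property visible: several model and behavior updates can be performed per collected episode, so most of the learning happens on stored and imagined data rather than on fresh interaction.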

Results

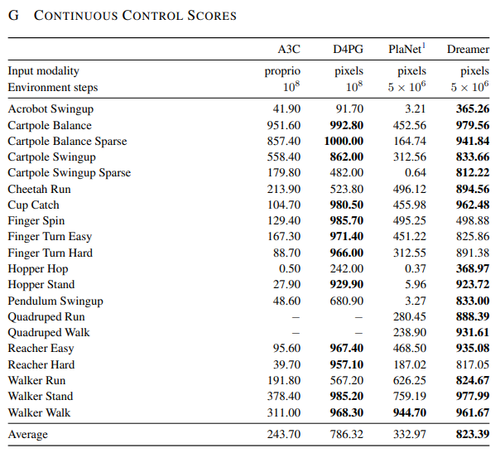

The figure above summarizes the performance of Dreamer compared to other state-of-the-art reinforcement learning agents on continuous control tasks. Using the same hyperparameters for all tasks, Dreamer exceeds previous model-based and model-free agents in terms of data efficiency, computation time, and final performance, and overall it achieves the most consistent performance among them. Additionally, while other agents rely heavily on prior experience, Dreamer is able to learn behaviors with minimal interaction with the environment.

Conclusion

This paper presented a new algorithm for training reinforcement learning agents with minimal interaction with the environment. The algorithm outperforms many previous algorithms in terms of computation time and overall performance. This has many practical applications, as many agents rely on prior experience that may be hard to obtain in the real world. Although it may be an extreme example, consider a reinforcement learning agent that learns how to perform rare surgeries: it may never have access to enough data samples. This paper shows that it is possible to train agents without requiring many prior interactions with the environment. As future work on representation learning, the ability to scale latent imagination to environments of higher visual complexity can be investigated.

Source Code

The code for this paper is freely available at https://github.com/google-research/dreamer.

Critique

This paper presents an approach that learns a latent dynamics model and uses it to solve 20 visual control tasks.

In the description of the model components in Appendix A, the authors mention that "three dense layers of size 300 with ELU activations" and "30-dimensional diagonal Gaussians" are used for the distributions in latent space. The paper would have benefitted from explaining how they arrived at this architecture, and in particular how the choice of latent vector affects the performance of the agent.

References

[1] D. Hafner, T. Lillicrap, J. Ba, and M. Norouzi. Dream to control: Learning behaviors by latent imagination. In International Conference on Learning Representations (ICLR), 2020.

[2] R. S. Sutton and A. G. Barto. Reinforcement learning: An introduction. MIT press, 2018.

[3] K. Arulkumaran, M. P. Deisenroth, M. Brundage, and A. A. Bharath. Deep reinforcement learning: A brief survey. IEEE Signal Processing Magazine, 34(6):26–38, 2017.

[4] R. Nian, J. Liu, and B. Huang. A review on reinforcement learning: Introduction and applications in industrial process control. Computers and Chemical Engineering, 139:106886, 2020.