stat946w18/Synthetic and natural noise both break neural machine translation

Introduction

- Humans have surprisingly robust language processing systems which can easily overcome typos, e.g.

Aoccdrnig to a rscheearch at Cmabrigde Uinervtisy, it deosn't mttaer in waht oredr the ltteers in a wrod are, the olny iprmoetnt tihng is taht the frist and lsat ltteer be at the rghit pclae.

- A person's ability to read this text comes as no surprise to the Psychology literature

- Saberi & Perrott (1999) found that this robustness extends to audio as well.

- Rayner et al. (2006) found that in noisier settings reading comprehension only slowed by 11%.

- McCusker et al. (1981) found that the common case of swapping letters could often go unnoticed by the reader.

- Mayall et al. (1997) showed that we rely on word shape.

- Reicher (1969) and Pelli et al. (2003) found that we can switch between whole word recognition, but the first and last letter positions are required to stay constant for comprehension.

However, neural machine translation (NMT) systems are brittle. For example, one Arabic word means a blessing for good morning, whereas a word that differs from it by a single character means hunt or slaughter. Facebook's MT system mistakenly confused these two words, a situation that is challenging for a character-based NMT system.

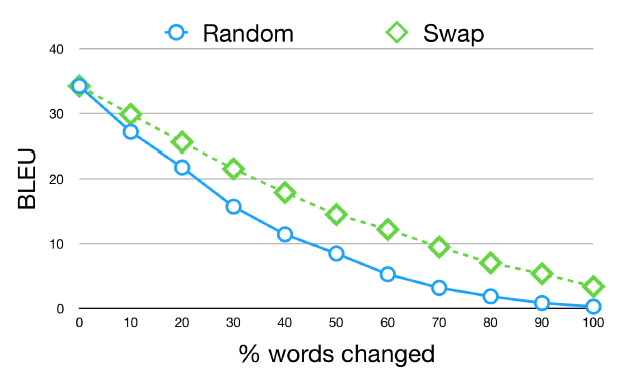

Figure 1 shows the performance translating German to English as a function of the percent of German words modified. Here we show two types of noise: (1) Random permutation of the word and (2) Swapping a pair of adjacent letters that does not include the first or last letter of the word. The important thing to note is that even small amounts of noise lead to substantial drops in performance.

BLEU (bilingual evaluation understudy) is an algorithm for evaluating the quality of text which has been machine-translated from one natural language to another. Quality is considered to be the correspondence between a machine's output and that of a human: "the closer a machine translation is to a professional human translation, the better it is". BLEU scores lie between 0 and 1, although they are often reported on a scale of 0 to 100.
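As a rough illustration of how such a score can be computed, the following is a minimal sketch of an unsmoothed, single-reference, sentence-level BLEU with uniform weights over 1- to 4-grams. The function names and the whitespace tokenization are assumptions made for this example; reported results are normally computed over a whole test corpus with standard tooling rather than with code like this.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Return a Counter of all n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate, reference, max_n=4):
    """Unsmoothed single-reference BLEU in [0, 1] for two token lists."""
    if not candidate:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngrams(candidate, n)
        ref_counts = ngrams(reference, n)
        total = sum(cand_counts.values())
        # Clip each candidate n-gram count by its count in the reference.
        matched = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        if total == 0 or matched == 0:
            return 0.0  # without smoothing, any zero precision gives BLEU = 0
        log_precisions.append(math.log(matched / total))
    # The brevity penalty punishes candidates shorter than the reference.
    if len(candidate) >= len(reference):
        bp = 1.0
    else:
        bp = math.exp(1 - len(reference) / len(candidate))
    # Geometric mean of the n-gram precisions, scaled by the penalty.
    return bp * math.exp(sum(log_precisions) / max_n)


reference = "the cat sat on the mat".split()
candidate = "the cat sat on a mat".split()
score = sentence_bleu(candidate, reference)
```

Because the precisions are combined with a geometric mean, a single scrambled word can remove several higher-order n-gram matches at once, which is one reason small amounts of noise can move the score substantially.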

This paper explores two simple strategies for increasing model robustness:

- using structure-invariant word representations (for example, the averaged character embeddings of the meanChar model described under "Structure Invariant Representations")

- robust training on noisy data, a form of adversarial training.

The goal of the paper is two-fold:

- to initiate a conversation on robust training and modeling techniques in NMT

- to promote the creation of better and more linguistically accurate artificial noise to be applied to new languages and tasks

Adversarial examples

The growing literature on adversarial examples has demonstrated how dangerous it can be to have brittle machine learning systems being used so pervasively in the real world. Small changes to the input can lead to dramatic failures of deep learning models. This leads to a potential for malicious attacks using adversarial examples. An important distinction is often drawn between white-box attacks, where adversarial examples are generated with access to the model parameters, and black-box attacks, where examples are generated without such access.

The paper devises simple methods for generating adversarial examples for NMT. They do not assume any access to the NMT models' gradients, instead relying on cognitively-informed and naturally occurring language errors to generate noise.

MT system

The paper experiments with three different NMT systems that have access to character information at different levels.

- char2char, the fully character-level model of Lee et al. (2017). This model processes a sentence as a sequence of characters. The encoder works as follows: the characters are embedded as vectors, and then the sequence of vectors is fed to a convolutional layer. The sequence output by the convolutional layer is then shortened by max pooling in the time dimension. The output of the max-pooling layer is then fed to a four-layer highway network (Srivastava et al., 2015), and the output of the highway network is in turn fed to a bidirectional GRU, producing a sequence of hidden states. The sequence of hidden states is then processed by the decoder, a GRU with attention, to produce probabilities over sequences of output characters.

- Nematus (Sennrich et al., 2017), a popular NMT toolkit. It is another sequence-to-sequence model with several architecture modifications, most notably operating on sub-word units obtained with byte-pair encoding. Byte-pair encoding (Sennrich et al., 2015; Gage, 1994) is an algorithm in which we begin with a list of characters as our symbols and repeatedly fuse the most common adjacent combinations to create new symbols. For example, if we begin with the letters a to z as our symbol list, and we find that "th" is the most common two-letter combination in a corpus, then we would add "th" to our symbol list in the first iteration. After we have used this algorithm to create a symbol list of the desired size, we apply a standard encoder-decoder with attention.

- charCNN, an attentional sequence-to-sequence model with a word representation based on a character convolutional neural network. The charCNN model is similar to char2char, but uses a shallower highway network and, although it reads the input sentence as characters, it produces as output a probability distribution over words, not characters.
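The byte-pair encoding procedure described for Nematus above can be sketched in a few lines. This is a minimal illustration of the merge-learning loop only, not the Nematus implementation; the function names and the toy word frequencies are assumptions, and practical implementations typically also add an end-of-word marker, which is omitted here.

```python
from collections import Counter


def pair_counts(vocab):
    """Count adjacent symbol pairs, weighted by word frequency."""
    counts = Counter()
    for symbols, freq in vocab.items():
        for pair in zip(symbols, symbols[1:]):
            counts[pair] += freq
    return counts


def merge_pair(vocab, pair):
    """Fuse every occurrence of `pair` into a single new symbol."""
    merged = {}
    for symbols, freq in vocab.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        merged[tuple(out)] = merged.get(tuple(out), 0) + freq
    return merged


def learn_bpe(word_freqs, num_merges):
    """Return the learned merges and the re-segmented vocabulary."""
    # Start from single characters as the symbol inventory.
    vocab = {tuple(word): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        counts = pair_counts(vocab)
        if not counts:
            break
        best = max(counts, key=counts.get)  # most frequent adjacent pair
        vocab = merge_pair(vocab, best)
        merges.append(best)
    return merges, vocab


# Toy corpus statistics (hypothetical): "th" is the most frequent pair.
merges, vocab = learn_bpe({"the": 5, "then": 3, "that": 2, "cat": 1}, 2)
```

On this toy input the first merge fuses "t" and "h", mirroring the "th" example in the text. Since the merges are defined over adjacent characters, any reordering of letters inside a word changes how that word is segmented.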

Data

MT Data

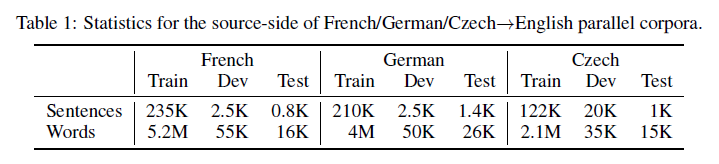

We use the TED talks parallel corpus prepared for IWSLT 2016 (Cettolo et al., 2012) for testing all of the NMT systems.

Natural and Artificial Noise

Natural Noise

The three languages, French, German, and Czech, each have their own frequent natural errors. The corpora of edits used for these languages are:

- French: Wikipedia Correction and Paraphrase Corpus (WiCoPaCo)

- German: RWSE Wikipedia Correction Dataset and the MERLIN corpus

- Czech: Manually annotated essays written by non-native speakers

The authors harvested naturally occurring errors (typos, misspellings, etc.) corresponding to these three languages from available corpora of edits to build a look-up table of possible lexical replacements.
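A look-up table of this kind could be built and applied roughly as follows. This is a sketch under stated assumptions: the (error, correction) pair format, the function names, the English example pairs, and the policy of replacing every word that has a known error with a uniformly sampled one are all illustrative choices, not details taken from the corpora above.

```python
import random


def build_lookup(edit_pairs):
    """Map each correct word to the list of errors observed for it."""
    table = {}
    for wrong, right in edit_pairs:
        table.setdefault(right, []).append(wrong)
    return table


def add_natural_noise(sentence, table, rng):
    """Replace each word that has known errors with one sampled at random."""
    out = []
    for word in sentence.split():
        errors = table.get(word)
        out.append(rng.choice(errors) if errors else word)
    return " ".join(out)


# Hypothetical edit pairs in (error, correction) order.
table = build_lookup([("teh", "the"), ("recieve", "receive")])
noisy = add_natural_noise("the cat will receive", table, random.Random(0))
```

Words without an entry in the table pass through unchanged, so the amount of noise depends on how well the harvested edits cover the vocabulary of the text being corrupted.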

Synthetic Noise

In addition to naturally collected sources of error, we also experiment with four types of synthetic noise: Swap, Middle Random, Fully Random, and Key Typo.

- Swap: The first and simplest source of noise is swapping two letters (do not alter the first or last letters, only apply to words of length >= 4).

- Middle Random: Randomize the order of all the letters in a word except for the first and last (only apply to words of length >= 4).

- Fully Random: Completely randomized words.

- Keyboard Typo: Randomly replace one letter in each word with an adjacent key.
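The four synthetic noise types can be sketched as small word-level functions. This is an illustrative sketch, not the authors' code: the function names are assumptions, `NEIGHBORS` is a hypothetical and deliberately partial QWERTY adjacency map, and Swap is implemented on adjacent inner letters, following the description of Figure 1.

```python
import random

# Hypothetical, partial QWERTY adjacency map used only for illustration.
NEIGHBORS = {
    "a": "qwsz", "e": "wsdr", "i": "ujko", "o": "iklp",
    "n": "bhjm", "r": "edft", "s": "awedxz", "t": "rfgy",
}


def swap(word, rng):
    """Swap one pair of adjacent inner letters (words of length >= 4)."""
    if len(word) < 4:
        return word
    i = rng.randrange(1, len(word) - 2)  # i and i + 1 are both inner positions
    chars = list(word)
    chars[i], chars[i + 1] = chars[i + 1], chars[i]
    return "".join(chars)


def middle_random(word, rng):
    """Shuffle all letters except the first and last (length >= 4)."""
    if len(word) < 4:
        return word
    middle = list(word[1:-1])
    rng.shuffle(middle)
    return word[0] + "".join(middle) + word[-1]


def fully_random(word, rng):
    """Shuffle every letter of the word."""
    chars = list(word)
    rng.shuffle(chars)
    return "".join(chars)


def key_typo(word, rng):
    """Replace one letter with a keyboard neighbour, if the map covers any."""
    positions = [i for i, c in enumerate(word) if c in NEIGHBORS]
    if not positions:
        return word
    i = rng.choice(positions)
    return word[:i] + rng.choice(NEIGHBORS[word[i]]) + word[i + 1:]


rng = random.Random(0)
noisy = [f("noise", rng) for f in (swap, middle_random, fully_random, key_typo)]
```

The first three functions only permute the letters of a word, whereas `key_typo` changes which letters it contains. That distinction matters later when comparing order-invariant representations across noise types.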

Table 3 shows BLEU scores of models trained on clean (Vanilla) texts and tested on clean and noisy texts. All models suffer a significant drop in BLEU when evaluated on noisy texts. This is true for both natural noise and all kinds of synthetic noise. The more noise in the text, the worse the translation quality, with random scrambling producing the lowest BLEU scores.

Dealing with noise

Structure Invariant Representations

The three NMT models are all sensitive to word structure. The char2char and charCNN models both have convolutional layers on character sequences, designed to capture character n-grams (which are sequences of characters or words, of length n). The model in Nematus is based on sub-word units obtained with BPE. It thus relies on character order.

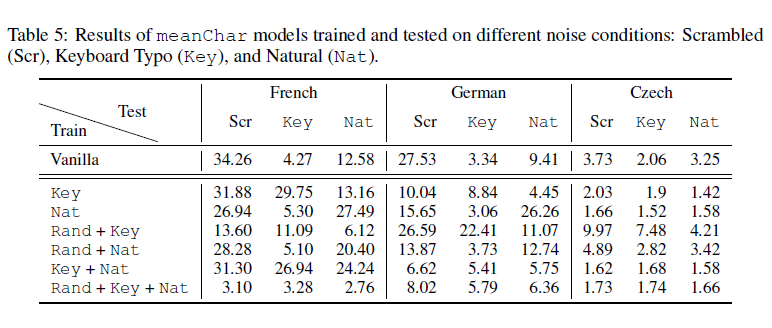

The simplest way to improve such a model is to take the average character embeddings as a word representation. This model, referred to as meanChar, first generates a word representation by averaging character embeddings, and then proceeds with a word-level encoder similar to the charCNN model.

meanChar performs well on the three scrambling errors (Swap, Middle Random, and Fully Random), because averaging discards character order, but poorly on Keyboard and Natural errors, which change which characters appear in a word.
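The order invariance of an averaged representation is easy to check directly. The sketch below uses random stand-in embeddings (a trained model would learn them); the function names and the embedding size are assumptions made for the example.

```python
import random


def make_embeddings(alphabet, dim, seed=0):
    """Random stand-in embeddings; a real model would learn these."""
    rng = random.Random(seed)
    return {c: [rng.gauss(0, 1) for _ in range(dim)] for c in alphabet}


def mean_char(word, emb):
    """Average the character vectors of `word`, dimension by dimension."""
    vectors = [emb[c] for c in word]
    return [sum(col) / len(vectors) for col in zip(*vectors)]


emb = make_embeddings("abcdefghijklmnopqrstuvwxyz", dim=4)
clean = mean_char("noise", emb)
scrambled = mean_char("nsioe", emb)  # same letters, different order
typo = mean_char("npise", emb)       # "o" replaced by the adjacent key "p"

# Largest per-dimension difference from the clean representation.
scramble_gap = max(abs(a - b) for a, b in zip(clean, scrambled))
typo_gap = max(abs(a - b) for a, b in zip(clean, typo))
```

Up to floating-point rounding, `scramble_gap` is zero for any permutation of the letters, while `typo_gap` is not. The cost of this invariance is that anagrams also map to the same vector.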

Black-Box Adversarial Training

Analysis

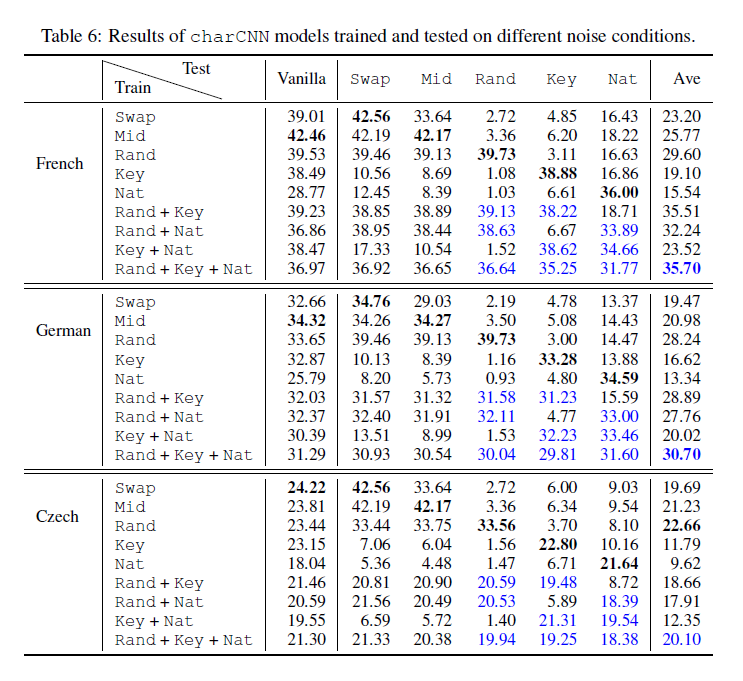

Learning Multiple Kinds of Noise in charCNN

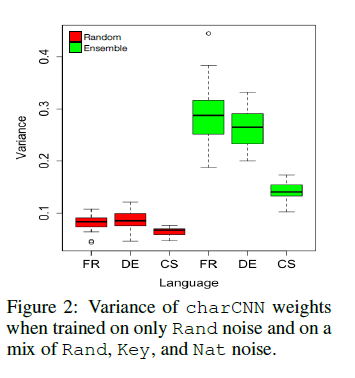

As Table 6 above shows, charCNN models performed quite well across different noise types on the test set when they were trained on a mix of noise types, which led the authors to speculate that different convolutional filters learned to be robust to different types of noise. To test this hypothesis, they analyzed the weights learned by charCNN models trained on two kinds of input: completely scrambled words (Rand) without other kinds of noise, and a mix of Rand+Key+Nat kinds of noise. For each model, they computed the variance across the filter dimension for each of the 1000 filters and each of the 25 character embedding dimensions. These variances were then averaged across the filters to yield 25 variances.
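The variance analysis can be sketched as follows. This is one possible reading of the procedure, under stated assumptions: the weights are taken to be indexed as `weights[filter][embedding_dim][position]`, the variance is taken over the positions of each filter, the population variance is used, and the filter width and the small filter count in the example are hypothetical. Only the 25 embedding dimensions and the final averaging over filters come from the description above.

```python
import random
from statistics import pvariance


def averaged_variances(weights):
    """Return one averaged variance per character embedding dimension.

    weights[f][d][w] is the weight of filter f for embedding dimension d
    at character position w within the filter.
    """
    num_filters = len(weights)
    num_dims = len(weights[0])
    result = []
    for d in range(num_dims):
        # Variance over positions for each filter, then the mean over filters.
        per_filter = [pvariance(weights[f][d]) for f in range(num_filters)]
        result.append(sum(per_filter) / num_filters)
    return result


rng = random.Random(0)
# Hypothetical sizes: 10 filters instead of 1000, width 5, 25 dimensions.
varied = [[[rng.gauss(0, 1) for _ in range(5)] for _ in range(25)]
          for _ in range(10)]
uniform = [[[0.5] * 5 for _ in range(25)] for _ in range(10)]

varied_result = averaged_variances(varied)
uniform_result = averaged_variances(uniform)
```

Filters whose weights are identical at every position give zero variance in every dimension, while filters that treat positions differently give positive values.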

As Figure 2 below shows, the variances for the ensemble model (the one trained on the mix of noise types) are higher and more varied, which indicates that the filters learned different patterns and that the model differentiated between character embedding dimensions. Under the random scrambling scheme, there should be no patterns for the model to learn, so it makes sense for the filter weights to stay close to uniform, hence the consistently lower variance measures.

Conclusion

In this work, the authors have shown that character-based NMT models are extremely brittle and tend to break when presented with both natural and synthetic kinds of noise. After comparing the models, they found that a character-based CNN can learn to address multiple types of errors that are seen in training. For future work, the authors suggest generating more realistic synthetic noise by using phonetic and syntactic structure. They also suggest that a better NMT architecture could be designed that is robust to noise without seeing it in the training data.

Criticism

A major critique of this paper is that the solutions presented do not adequately solve the problem. The response to the meanChar architecture has been mostly negative, and the method of noise injection has been seen as only a simple start. However, the authors have acknowledged these critiques, stating that they realize their solution is just a starting point. They argue that this paper has opened the discussion on dealing with noise in machine translation, which has been mostly left untouched.

References

- Yonatan Belinkov and Yonatan Bisk. Synthetic and Natural Noise Both Break Neural Machine Translation. In International Conference on Learning Representations (ICLR), 2018.

- Mauro Cettolo, Christian Girardi, and Marcello Federico. WIT3: Web Inventory of Transcribed and Translated Talks. In Proceedings of the 16th Conference of the European Association for Machine Translation (EAMT), pp. 261–268, Trento, Italy, May 2012.

- Jason Lee, Kyunghyun Cho, and Thomas Hofmann. Fully Character-Level Neural Machine Translation without Explicit Segmentation. Transactions of the Association for Computational Linguistics (TACL), 2017.

- Rico Sennrich, Orhan Firat, Kyunghyun Cho, Alexandra Birch, Barry Haddow, Julian Hitschler, Marcin Junczys-Dowmunt, Samuel Laubli, Antonio Valerio Miceli Barone, Jozef Mokry, and Maria Nadejde. Nematus: a Toolkit for Neural Machine Translation. In Proceedings of the Software Demonstrations of the 15th Conference of the European Chapter of the Association for Computational Linguistics, pp. 65–68, Valencia, Spain, April 2017. Association for Computational Linguistics. URL http://aclweb.org/anthology/E17-3017.