stat946w18/Predicting Floor-Level for 911 Calls with Neural Networks and Smartphone Sensor Data

Introduction

During emergency 911 calls, knowing the exact position of the victim is crucial to a fast response. Problems arise when the caller cannot give their position accurately, for instance when the caller is disoriented, held hostage, or a child calling on behalf of the victim. Smartphone GPS is useful in these situations, but in a tall building it fails to give an accurate floor level. Previous work has explored using Wi-Fi signals or beacons placed inside buildings, but these methods are not self-contained and require prior knowledge of the building's infrastructure.

Fortunately, today’s smartphones are equipped with many more sensors, including a barometer and a magnetometer, and deep learning can be applied to predict floor level from their readings. First, an LSTM is trained to classify whether the caller is indoors or outdoors using GPS, RSSI, and magnetometer readings. Next, an unsupervised clustering algorithm predicts the floor level from the barometric pressure difference. According to the authors, with these two parts working together, a self-contained floor-level prediction system can achieve 100% accuracy without any outside knowledge.

Data Description

The authors developed an iOS app called Sensory and used it to collect data on an iPhone 6. The following sensor readings are recorded: indoors, created at, session id, floor, RSSI strength, GPS latitude, GPS longitude, GPS vertical accuracy, GPS horizontal accuracy, GPS course, GPS speed, barometric relative altitude, barometric pressure, environment context, environment mean building floors, environment activity, city name, country name, magnet x, magnet y, magnet z, magnet total.

The indoor-outdoor label has to be entered manually as soon as the user enters or exits a building. To gather the data for floor-level prediction, the authors conducted 63 trials across five different buildings throughout New York City. The actual floor level was recorded manually for validation purposes only, since unsupervised learning is being used.

Methods

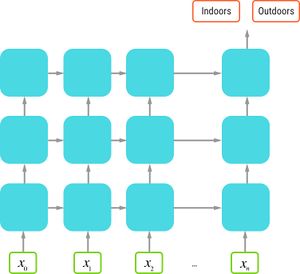

An LSTM network is used to solve the indoor-outdoor classification problem. Here is a diagram of the network architecture.

Figure 1: LSTM network architecture. A 3-layer LSTM. Inputs are sensor readings for d consecutive time-steps. Target is y = 1 if indoors and y = 0 if outdoors.

The LSTM contains three layers. Layers one and two each have 50 neurons and are followed by a dropout layer set to 0.2. Layer three has two neurons fed directly into a one-neuron feedforward layer with a sigmoid activation function. The input is the sensor readings, and the output is the indoor-outdoor label. The objective function is the cross-entropy between the true label and the prediction.

\begin{equation} C(y, \hat{y}) = \frac{1}{n} \sum_{i=1}^{n} -\left( y_i \log(\hat{y}_i) + (1 - y_i) \log(1 - \hat{y}_i) \right) \label{equation:binCE} \end{equation}
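The cross-entropy objective can be checked numerically with a minimal Python sketch. The function name `binary_cross_entropy` and the clipping constant `eps` are assumptions made for illustration and do not come from the paper.

```python
import math

def binary_cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean binary cross-entropy between 0/1 labels and predicted probabilities."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    total = 0.0
    for y, p in zip(y_true, y_pred):
        # Clip the probability so that log(0) is never evaluated (assumed safeguard).
        p = min(max(p, eps), 1.0 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)

# y = 1 means indoors, y = 0 means outdoors.
confident = binary_cross_entropy([1, 0, 1], [0.9, 0.1, 0.8])
uncertain = binary_cross_entropy([1, 0, 1], [0.5, 0.5, 0.5])
```

A prediction of 0.5 for every sample gives a loss of log 2, and the loss falls as the predicted probabilities move toward the true labels.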

The main reason the neural network is able to predict whether the user is indoors or outdoors is that the walls of buildings interfere with GPS signals. The LSTM is able to find the pattern in the GPS signal strength, in combination with other sensor readings, to give an accurate prediction. However, the change in GPS signal does not happen instantaneously as the user walks indoors. Thus, a window of 20 seconds is allowed, and the minimum barometric pressure reading within that window is recorded as the ground-floor reading.
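The ground-floor reading can be illustrated with a short Python sketch. It assumes that barometer samples arrive as `(timestamp_seconds, pressure)` pairs and that the 20-second window starts at the detected transition time. The text does not specify where the window is placed, so that choice, the function name `ground_floor_pressure`, and the sample values are all hypothetical.

```python
def ground_floor_pressure(samples, transition_time, window_seconds=20.0):
    """Return the minimum pressure observed within the window after a transition.

    samples: list of (timestamp_seconds, pressure) tuples.
    The window placement (starting at the transition) is an assumption.
    """
    window_end = transition_time + window_seconds
    in_window = [p for t, p in samples if transition_time <= t <= window_end]
    if not in_window:
        raise ValueError("no barometer samples inside the window")
    return min(in_window)

# Hypothetical readings; the last sample falls outside the 20-second window.
samples = [(0.0, 101.30), (5.0, 101.28), (15.0, 101.25), (30.0, 101.10)]
reference = ground_floor_pressure(samples, transition_time=0.0)
```

Later pressure readings can then be compared against this reference value to obtain the barometric pressure difference used for floor-level prediction.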

To determine the exact time the user makes an indoor-outdoor transition, two vector masks are convolved across the LSTM predictions.

\begin{equation} V_1 = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0] \label{equation:VMask} \end{equation}

\begin{equation} V_2 = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1] \label{equation:VMask2} \end{equation}
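Since y = 1 denotes indoors, V_1 matches a run of indoor predictions followed by outdoor predictions (leaving a building), and V_2 matches the reverse (entering a building). The sliding of the two masks across the predictions can be sketched in Python as follows. The agreement score, the `threshold` parameter, and the function names are assumptions chosen for illustration, and the predictions are assumed to have been binarized to 0 or 1 beforehand; the scoring rule actually used in the paper may differ.

```python
V1 = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]  # indoors then outdoors: leaving a building
V2 = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]  # outdoors then indoors: entering a building

def match_score(window, mask):
    """Fraction of positions where the window agrees with the mask."""
    return sum(1 for w, m in zip(window, mask) if w == m) / len(mask)

def find_transitions(predictions, threshold=1.0):
    """Slide both masks over binarized predictions.

    Returns (index, kind) pairs, where index is the first sample after
    the switch and kind is "exit" or "enter".
    """
    transitions = []
    half = len(V1) // 2
    for start in range(len(predictions) - len(V1) + 1):
        window = predictions[start:start + len(V1)]
        if match_score(window, V1) >= threshold:
            transitions.append((start + half, "exit"))
        elif match_score(window, V2) >= threshold:
            transitions.append((start + half, "enter"))
    return transitions

# Eight outdoor predictions followed by eight indoor predictions.
entering = find_transitions([0] * 8 + [1] * 8)
```

With the default threshold of 1.0, only exact matches are reported. A lower threshold tolerates a few noisy predictions, but it also reports neighbouring window positions around the same transition, so the hits would need to be merged.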