stat946f11: Difference between revisions

Revision as of 10:04, 7 October 2011


Introduction

Notation

We will begin with a short section about the notation used in these notes. Capital letters will be used to denote random variables and lower case letters denote observations for those random variables:

- [math]\displaystyle{ \{X_1,\ X_2,\ \dots,\ X_n\} }[/math] random variables

- [math]\displaystyle{ \{x_1,\ x_2,\ \dots,\ x_n\} }[/math] observations of the random variables

The joint probability mass function can be written as:

[math]\displaystyle{ P( X_1 = x_1, X_2 = x_2, \dots, X_n = x_n ) }[/math]

or as shorthand, we can write this as [math]\displaystyle{ p( x_1, x_2, \dots, x_n ) }[/math]. In these notes both types of notation will be used. We can also define a set of random variables [math]\displaystyle{ X_Q }[/math] where [math]\displaystyle{ Q }[/math] represents a set of subscripts.

Example

Let [math]\displaystyle{ A = \{1,4\} }[/math], so [math]\displaystyle{ X_A = \{X_1, X_4\} }[/math]; [math]\displaystyle{ A }[/math] is the set of indices for the r.v. [math]\displaystyle{ X_A }[/math].

Also let [math]\displaystyle{ B = \{2\},\ X_B = \{X_2\} }[/math] so we can write

[math]\displaystyle{ P( X_A | X_B ) = P( X_1 = x_1, X_4 = x_4 | X_2 = x_2 ) }[/math]

Graphical Models

Graphs can be represented as a pair of vertices and edges: [math]\displaystyle{ G = (V, E). }[/math]

- [math]\displaystyle{ V }[/math] is the set of nodes (vertices).

- [math]\displaystyle{ E }[/math] is the set of edges.

Two branches of graphical representations of distributions are commonly used in graphical models: Bayesian networks and Markov networks. Both families encompass the properties of factorization and independence, but they differ in the factorization of the distribution that they induce.

If the edges have a direction associated with them then we consider the graph to be directed as in Figure 1, otherwise the graph is undirected as in Figure 2.

We will use graphs in this course to represent the relationship between different random variables.

Directed graphical models (Bayesian networks)

In the case of directed graphs, the direction of the arrow indicates "causation". For example:

[math]\displaystyle{ A \longrightarrow B }[/math]: [math]\displaystyle{ A\,\! }[/math] "causes" [math]\displaystyle{ B\,\! }[/math].

In this case we must assume that our directed graphs are acyclic. If our causation graph contains a cycle then it would mean that for example:

- [math]\displaystyle{ A }[/math] causes [math]\displaystyle{ B }[/math]

- [math]\displaystyle{ B }[/math] causes [math]\displaystyle{ C }[/math]

- [math]\displaystyle{ C }[/math] causes [math]\displaystyle{ A }[/math], again.

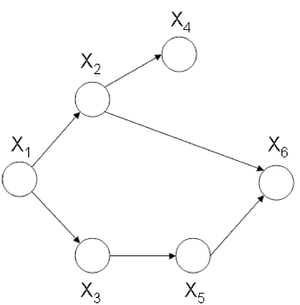

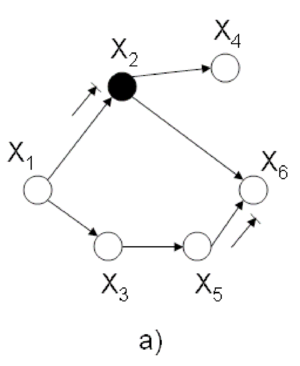

Clearly, this would confuse the order of the events. An example of a graph with a cycle can be seen in Figure 3. Such a graph could not be used to represent causation. The graph in Figure 4 does not have a cycle and we can say that the node [math]\displaystyle{ X_1 }[/math] causes, or affects, [math]\displaystyle{ X_2 }[/math] and [math]\displaystyle{ X_3 }[/math] while they in turn cause [math]\displaystyle{ X_4 }[/math].

We will consider a 1-1 map between our graph's vertices and a set of random variables. Consider the following example that uses boolean random variables. It is important to note that the variables need not be boolean and can indeed be discrete over a range or even continuous.

Speaking about random variables, we can now refer to the relationship between random variables in terms of dependence. Therefore, the direction of the arrow indicates "conditional dependence". For example:

[math]\displaystyle{ A \longrightarrow B }[/math]: [math]\displaystyle{ B\,\! }[/math] "is dependent on" [math]\displaystyle{ A\,\! }[/math].

Example

In this example we will consider the possible causes for wet grass.

The wet grass could be caused by rain, or a sprinkler. Rain can be caused by clouds. Strictly speaking, one cannot say that clouds cause the use of a sprinkler. A dependence nevertheless exists, because the presence of clouds affects whether or not a sprinkler will be used: the more clouds there are, the smaller the probability that one will rely on a sprinkler to water the grass. As this example shows, the relationship between two variables can also take the form of a negative correlation. The corresponding graphical model is shown in Figure 5.

This directed graph shows the relation between the 4 random variables. If we have the joint probability [math]\displaystyle{ P(C,R,S,W) }[/math], then we can answer many queries about this system.

This all seems very simple at first, but we must consider the fact that in the discrete case the joint probability function grows exponentially with the number of variables. If we consider the wet grass example once more we can see that we need to define [math]\displaystyle{ 2^4 = 16 }[/math] different probabilities for this simple example. The table below, which contains all of the probabilities and their corresponding boolean values for each random variable, is called an interaction table.

Example:

Now consider an example where there are not 4 such random variables but 400. The interaction table would become too large to manage. In fact, it would require [math]\displaystyle{ 2^{400} }[/math] rows! The purpose of the graph is to help avoid this intractability by considering only the variables that are directly related. In the wet grass example Sprinkler (S) and Rain (R) are not directly related.

To solve the intractability problem we need to consider the way those relationships are represented in the graph. Let us define the following parameters. For each vertex [math]\displaystyle{ i \in V }[/math],

- [math]\displaystyle{ \pi_i }[/math]: is the set of parents of [math]\displaystyle{ i }[/math]

- ex. [math]\displaystyle{ \pi_R = \{C\} }[/math] (the parent of [math]\displaystyle{ R }[/math] is [math]\displaystyle{ C }[/math])

- [math]\displaystyle{ f_i(x_i, x_{\pi_i}) }[/math]: is the joint p.d.f. of [math]\displaystyle{ i }[/math] and [math]\displaystyle{ \pi_i }[/math] for which it is true that:

- [math]\displaystyle{ f_i }[/math] is nonnegative for all [math]\displaystyle{ i }[/math]

- [math]\displaystyle{ \displaystyle\sum_{x_i} f_i(x_i, x_{\pi_i}) = 1 }[/math]

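Claim: There is a family of probability functions [math]\displaystyle{ P(X_V) = \prod_{i=1}^n f_i(x_i, x_{\pi_i}) }[/math] where this function is nonnegative, and

[math]\displaystyle{ \sum_{x_1}\sum_{x_2}\cdots\sum_{x_n} P(X_V) = 1 }[/math]

To show the power of this claim we can prove it for the factorization of our wet grass example:

[math]\displaystyle{ P(C,R,S,W) = f(C) f(R,C) f(S,C) f(W,S,R) }[/math]

We want to show that

[math]\displaystyle{ \sum_C\sum_R\sum_S\sum_W P(C,R,S,W) = \sum_C\sum_R\sum_S\sum_W f(C) f(R,C) f(S,C) f(W,S,R) = 1 }[/math]

Consider factors [math]\displaystyle{ f(C) }[/math], [math]\displaystyle{ f(R,C) }[/math], [math]\displaystyle{ f(S,C) }[/math]: they do not depend on [math]\displaystyle{ W }[/math], so we can write this all as

[math]\displaystyle{ \sum_C\sum_R\sum_S f(C) f(R,C) f(S,C) \underbrace{\sum_W f(W,S,R)}_{=1} = \sum_C\sum_R f(C) f(R,C) \underbrace{\sum_S f(S,C)}_{=1} = \sum_C f(C) \underbrace{\sum_R f(R,C)}_{=1} = \sum_C f(C) = 1 }[/math]

since we had already set [math]\displaystyle{ \displaystyle \sum_{x_i} f_i(x_i, x_{\pi_i}) = 1 }[/math].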
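The normalization argument can also be checked numerically. The following is a minimal sketch, assuming boolean variables and made-up conditional probability values (the numbers and the helper names `bernoulli` and `joint` are illustrative and do not come from these notes). It builds the joint from the four local factors and confirms that the 16 entries sum to one, while only 9 numbers had to be specified.

```python
from itertools import product

# Hypothetical conditional probability tables (illustrative numbers only).
p_c = {1: 0.5, 0: 0.5}                      # P(C=c)
p_r_given_c = {1: 0.8, 0: 0.2}              # P(R=1 | C=c)
p_s_given_c = {1: 0.1, 0: 0.5}              # P(S=1 | C=c)
p_w_given_sr = {(1, 1): 0.99, (1, 0): 0.9,  # P(W=1 | S=s, R=r)
                (0, 1): 0.9, (0, 0): 0.0}


def bernoulli(p_one, value):
    """Return P(X=value) for a binary variable with P(X=1) = p_one."""
    return p_one if value == 1 else 1.0 - p_one


def joint(c, r, s, w):
    """P(C,R,S,W) as the product of the four local factors."""
    return (p_c[c]
            * bernoulli(p_r_given_c[c], r)
            * bernoulli(p_s_given_c[c], s)
            * bernoulli(p_w_given_sr[(s, r)], w))


# Summing the product of local factors over all 16 assignments gives 1.
total = sum(joint(c, r, s, w) for c, r, s, w in product((0, 1), repeat=4))

# Free parameters: 1 for C, 2 for R|C, 2 for S|C, 4 for W|S,R.
n_factored_params = 1 + 2 + 2 + 4
n_table_rows = 2 ** 4
print(total, n_factored_params, n_table_rows)
```

The gap between the two counts is small here, but it grows quickly: the full table doubles with every added variable, while the factored form grows only with the number of parents per node.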

Let us consider another example with a different directed graph.

Example:

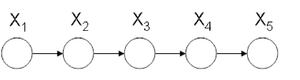

Consider the simple directed graph in Figure 6.

Assume that we would like to calculate the following: [math]\displaystyle{ p(x_3|x_2) }[/math]. We know that we can write the joint probability as:

We can also make use of Bayes' Rule here:

We also need

Thus,

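Theorem 1.

[math]\displaystyle{ f_i(x_i, x_{\pi_i}) = p(x_i | x_{\pi_i}) }[/math], and therefore [math]\displaystyle{ P(X_V) = \prod_{i=1}^n p(x_i | x_{\pi_i}) }[/math].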

In our simple graph, the joint probability can be written as

Instead, had we used the chain rule we would have obtained a far more complex equation:

The Markov Property, or Memoryless Property, holds when the variable [math]\displaystyle{ X_i }[/math] is only affected by [math]\displaystyle{ X_j }[/math], so that the random variable [math]\displaystyle{ X_i }[/math] given [math]\displaystyle{ X_j }[/math] is independent of every other random variable. In our example the history of [math]\displaystyle{ x_4 }[/math] is completely determined by [math]\displaystyle{ x_3 }[/math].

By simply applying the Markov Property to the chain-rule formula we would also have obtained the same result.

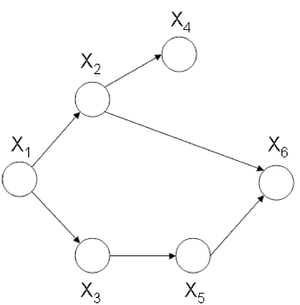

Now let us consider the joint probability of the following six-node example found in Figure 7.

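If we use Theorem 1 it can be seen that the joint probability density function for Figure 7 can be written as follows:

[math]\displaystyle{ P(X_1,X_2,X_3,X_4,X_5,X_6) = P(X_1)P(X_2|X_1)P(X_3|X_1)P(X_4|X_2)P(X_5|X_3)P(X_6|X_5,X_2) }[/math]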

Once again, we can apply the Chain Rule and then the Markov Property and arrive at the same result.

Independence

Marginal independence

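We can say that [math]\displaystyle{ X_A }[/math] is marginally independent of [math]\displaystyle{ X_B }[/math] if:

[math]\displaystyle{ P(X_A, X_B) = P(X_A)P(X_B) }[/math], or equivalently [math]\displaystyle{ P(X_A | X_B) = P(X_A) }[/math]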

Conditional independence

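We can say that [math]\displaystyle{ X_A }[/math] is conditionally independent of [math]\displaystyle{ X_B }[/math] given [math]\displaystyle{ X_C }[/math] if:

[math]\displaystyle{ P(X_A, X_B | X_C) = P(X_A | X_C)P(X_B | X_C) }[/math], or equivalently [math]\displaystyle{ P(X_A | X_B, X_C) = P(X_A | X_C) }[/math]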

Aside: Before we move on further, we first define the following terms:

- [math]\displaystyle{ I }[/math] is defined as an ordering for the nodes in graph [math]\displaystyle{ G }[/math].

- For each [math]\displaystyle{ i \in V }[/math], [math]\displaystyle{ V_i }[/math] is defined as the set of all nodes that appear earlier than [math]\displaystyle{ i }[/math] in the ordering, excluding its parents [math]\displaystyle{ \pi_i }[/math].

Let us consider the example of the six node figure given above (Figure 7). We can define [math]\displaystyle{ I }[/math] as follows:

[math]\displaystyle{ I = \{1,2,3,4,5,6\} }[/math]

We can then easily compute [math]\displaystyle{ V_i }[/math] for say [math]\displaystyle{ i=3,6 }[/math].

[math]\displaystyle{ V_3 = \{2\}, V_6 = \{1,3,4\} }[/math]

while [math]\displaystyle{ \pi_i }[/math] for [math]\displaystyle{ i=3,6 }[/math] will be

[math]\displaystyle{ \pi_3 = \{1\}, \pi_6 = \{2,5\} }[/math]

We would be interested in finding the conditional independence between random variables in this graph. We know [math]\displaystyle{ X_i \perp X_{V_i} | X_{\pi_i} }[/math] for each [math]\displaystyle{ i }[/math]. In other words, given its parents, a node is independent of all other earlier nodes. So:

[math]\displaystyle{ X_1 \perp \phi | \phi }[/math],

[math]\displaystyle{ X_2 \perp \phi | X_1 }[/math],

[math]\displaystyle{ X_3 \perp X_2 | X_1 }[/math],

[math]\displaystyle{ X_4 \perp \{X_1,X_3\} | X_2 }[/math],

[math]\displaystyle{ X_5 \perp \{X_1,X_2,X_4\} | X_3 }[/math],

[math]\displaystyle{ X_6 \perp \{X_1,X_3,X_4\} | \{X_2,X_5\} }[/math]

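To illustrate why this is true we can take a simple example. Show that:

[math]\displaystyle{ P(X_4 | X_1, X_2, X_3) = P(X_4 | X_2) }[/math]

Proof: first, we know [math]\displaystyle{ P(X_1,X_2,X_3,X_4,X_5,X_6) = P(X_1)P(X_2|X_1)P(X_3|X_1)P(X_4|X_2)P(X_5|X_3)P(X_6|X_5,X_2)\,\! }[/math]

then

[math]\displaystyle{ P(X_4 | X_1,X_2,X_3) = \frac{P(X_1,X_2,X_3,X_4)}{P(X_1,X_2,X_3)} = \frac{\sum_{X_5}\sum_{X_6} P(X_1,X_2,X_3,X_4,X_5,X_6)}{\sum_{X_4}\sum_{X_5}\sum_{X_6} P(X_1,X_2,X_3,X_4,X_5,X_6)} = \frac{P(X_1)P(X_2|X_1)P(X_3|X_1)P(X_4|X_2)}{P(X_1)P(X_2|X_1)P(X_3|X_1)} = P(X_4|X_2) }[/math]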

The other conditional independences can be proven through a similar process.
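The list of independence statements above can be generated mechanically from the parent sets. Here is a minimal sketch, assuming the ordering 1 to 6 and parent sets read off the factorization used in the proof; the names `parents`, `ordering` and `earlier_non_parents` are illustrative only.

```python
# Parents of each node in the six-node graph, read off the factorization
# P(X1)P(X2|X1)P(X3|X1)P(X4|X2)P(X5|X3)P(X6|X5,X2).
parents = {1: set(), 2: {1}, 3: {1}, 4: {2}, 5: {3}, 6: {2, 5}}
ordering = [1, 2, 3, 4, 5, 6]  # assumed ordering I


def earlier_non_parents(i):
    """V_i: nodes that precede i in the ordering, excluding the parents of i."""
    earlier = set(ordering[:ordering.index(i)])
    return earlier - parents[i]


# Print one statement "X_i is independent of X_{V_i} given X_{pi_i}" per node.
for i in ordering:
    print(f"X{i} independent of {sorted(earlier_non_parents(i))} "
          f"given {sorted(parents[i])}")
```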

Sampling

Although graphical models make it much easier to obtain the joint probability, exact inference is not always feasible. It is practical only in small to medium-sized networks; in large networks it takes too long. Therefore, we resort to approximate inference techniques, which are much faster and usually give good results.

In sampling, random samples are generated and the values of interest are computed from the samples rather than from the original network.

As input we have a Bayesian network with a set of nodes [math]\displaystyle{ X\,\! }[/math]. A sample may include all variables (except the evidence E) or a subset. A sampling scheme dictates how to generate samples (tuples). Ideally, samples are distributed according to [math]\displaystyle{ P(X|E)\,\! }[/math].

Some sampling algorithms:

- Forward Sampling

- Likelihood weighting

- Gibbs Sampling (MCMC)

- Blocking

- Rao-Blackwellised

- Importance Sampling
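As an illustration of the first item, forward sampling draws each node after its parents, using the node's conditional distribution given the sampled parent values. The sketch below applies this to the wet grass network with no evidence. The probability values and the function names `sample_once` and `estimate_p_wet` are assumptions made for the example, not part of these notes.

```python
import random


def sample_once(rng):
    """Draw one (C, R, S, W) tuple, sampling every node after its parents."""
    c = int(rng.random() < 0.5)                   # hypothetical P(C=1)
    r = int(rng.random() < (0.8 if c else 0.2))   # hypothetical P(R=1 | C)
    s = int(rng.random() < (0.1 if c else 0.5))   # hypothetical P(S=1 | C)
    p_w = {(1, 1): 0.99, (1, 0): 0.9,             # hypothetical P(W=1 | S, R)
           (0, 1): 0.9, (0, 0): 0.0}[(s, r)]
    w = int(rng.random() < p_w)
    return c, r, s, w


def estimate_p_wet(n_samples, seed=0):
    """Estimate P(W=1) as the fraction of samples in which the grass is wet."""
    rng = random.Random(seed)
    hits = sum(sample_once(rng)[3] for _ in range(n_samples))
    return hits / n_samples


print(estimate_p_wet(20000))
```

The estimate approaches the exact marginal as the number of samples grows. Handling evidence needs more than this, for example rejecting samples that disagree with the evidence or weighting them as in likelihood weighting.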

Bayes Ball

The Bayes Ball algorithm can be used to determine if two random variables represented in a graph are independent. The algorithm can show that either two nodes in a graph are independent OR that they are not necessarily independent. The Bayes Ball algorithm cannot show that two nodes are dependent. The algorithm will be discussed further in later parts of this section.

Canonical Graphs

In order to understand the Bayes Ball algorithm we need to first introduce 3 canonical graphs.

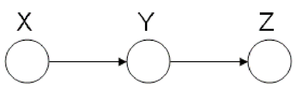

Markov Chain (also called serial connection)

In the following graph (Figure 8), X is independent of Z given Y.

We say that: [math]\displaystyle{ X }[/math] [math]\displaystyle{ \perp }[/math] [math]\displaystyle{ Z }[/math] [math]\displaystyle{ | }[/math] [math]\displaystyle{ Y }[/math]

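We can prove this independence (taking the chain to be [math]\displaystyle{ X \longrightarrow Y \longrightarrow Z }[/math]):

[math]\displaystyle{ P(Z|X,Y) = \frac{P(X,Y,Z)}{P(X,Y)} = \frac{P(X)P(Y|X)P(Z|Y)}{P(X)P(Y|X)} = P(Z|Y) }[/math]

Where

[math]\displaystyle{ P(X,Y) = \sum_Z P(X)P(Y|X)P(Z|Y) = P(X)P(Y|X) }[/math]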

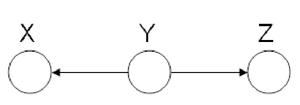

Hidden Cause (diverging connection)

In the Hidden Cause case we can say that X is independent of Z given Y. In this case Y is the hidden cause and if it is known then Z and X are considered independent.

We say that: [math]\displaystyle{ X }[/math] [math]\displaystyle{ \perp }[/math] [math]\displaystyle{ Z }[/math] [math]\displaystyle{ | }[/math] [math]\displaystyle{ Y }[/math]

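The proof of the independence:

[math]\displaystyle{ P(X,Z|Y) = \frac{P(X,Y,Z)}{P(Y)} = \frac{P(Y)P(X|Y)P(Z|Y)}{P(Y)} = P(X|Y)P(Z|Y) }[/math]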

The Hidden Cause case is best illustrated with an example:

In Figure 10 it can be seen that both "Shoe Size" and "Grey Hair" are dependent on the age of a person. The variables "Shoe size" and "Grey hair" are dependent in some sense if there is no "Age" in the picture. Without the age information we must conclude that those with a large shoe size also have a greater chance of having grey hair. However, when "Age" is observed, there is no dependence between "Shoe size" and "Grey hair" because we can deduce both based only on the "Age" variable.

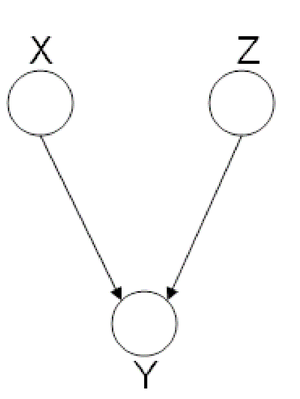

Explaining-Away (converging connection)

Finally, we look at the third type of canonical graph: Explaining-Away Graphs. This type of graph arises when a phenomenon has multiple explanations. Here, the conditional independence statement is actually a statement of marginal independence: [math]\displaystyle{ X \amalg Z }[/math].

In these types of scenarios, variables X and Z are independent. However, once the third variable Y is observed, X and Z become dependent (Fig. 11).

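To clarify these concepts, suppose Bob and Mary are supposed to meet for a noontime lunch. Consider the following events:

- [math]\displaystyle{ late = 1 }[/math] if Mary is late, and 0 otherwise

- [math]\displaystyle{ aliens = 1 }[/math] if Mary has been kidnapped by aliens, and 0 otherwise

- [math]\displaystyle{ watch = 1 }[/math] if Bob's watch is incorrect, and 0 otherwise

If Mary is late, then she could have been kidnapped by aliens. Alternatively, Bob may have forgotten to adjust his watch for daylight savings time, making him early. Clearly, both of these events are independent. Now, consider the following probabilities:

- [math]\displaystyle{ P( aliens = 1 ) }[/math]

- [math]\displaystyle{ P( aliens = 1 ~|~ late = 1 ) }[/math]

- [math]\displaystyle{ P( aliens = 1 ~|~ late = 1, watch = 0 ) }[/math]

We expect [math]\displaystyle{ P( aliens = 1 ) \lt P( aliens = 1 ~|~ late = 1 ) }[/math], since observing that Mary is late makes each of its possible causes more likely; note that [math]\displaystyle{ P( aliens = 1 ~|~ late = 1 ) }[/math] does not use any information regarding Bob's watch. Similarly, we expect [math]\displaystyle{ P( aliens = 1 ~|~ late = 1 ) \lt P( aliens = 1 ~|~ late = 1, watch = 0 ) }[/math]: once we know that Bob's watch is correct, the watch can no longer explain the lateness. Since [math]\displaystyle{ P( aliens = 1 ~|~ late = 1 ) \neq P( aliens = 1 ~|~ late = 1, watch = 0 ) }[/math], aliens and watch are not independent given late. To summarize,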

- If we do not observe late, then aliens [math]\displaystyle{ ~\amalg~ watch }[/math] ([math]\displaystyle{ X~\amalg~ Z }[/math])

- If we do observe late, then aliens [math]\displaystyle{ ~\cancel{\amalg}~ watch ~|~ late }[/math] ([math]\displaystyle{ X ~\cancel{\amalg}~ Z ~|~ Y }[/math])
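The explaining-away effect can be reproduced with a small numerical sketch. All probability values below are invented for illustration, and the encoding (watch = 1 meaning Bob's watch is wrong) is an assumption. The function `prob_aliens` computes a conditional probability by summing the joint over all assignments consistent with the evidence.

```python
from itertools import product

# Hypothetical numbers, chosen only to illustrate the effect.
p_aliens = 0.001                      # P(aliens=1)
p_watch = 0.1                         # P(watch=1), i.e. Bob's watch is wrong
p_late = {(0, 0): 0.05, (0, 1): 0.9,  # P(late=1 | aliens, watch)
          (1, 0): 1.0, (1, 1): 1.0}


def joint(aliens, watch, late):
    """P(aliens, watch, late) = P(aliens) P(watch) P(late | aliens, watch)."""
    pa = p_aliens if aliens else 1 - p_aliens
    pw = p_watch if watch else 1 - p_watch
    pl = p_late[(aliens, watch)] if late else 1 - p_late[(aliens, watch)]
    return pa * pw * pl


def prob_aliens(**evidence):
    """P(aliens=1 | evidence), by enumeration over the eight assignments."""
    num = den = 0.0
    for aliens, watch, late in product((0, 1), repeat=3):
        world = {"aliens": aliens, "watch": watch, "late": late}
        if any(world[name] != value for name, value in evidence.items()):
            continue
        p = joint(aliens, watch, late)
        den += p
        num += p * aliens
    return num / den


print(prob_aliens())                  # prior
print(prob_aliens(watch=1))           # unchanged: marginally independent
print(prob_aliens(late=1))            # raised by observing lateness
print(prob_aliens(late=1, watch=0))   # raised further once the watch is ruled out
```

With these numbers, learning about the watch alone does not change the probability of aliens, but it does once late is observed, which is the dependence described in the summary above.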

Bayes Ball Algorithm

Goal: We wish to determine whether a given conditional statement such as [math]\displaystyle{ X_{A} ~\amalg~ X_{B} ~|~ X_{C} }[/math] is true given a directed graph.

The algorithm is as follows:

- Shade nodes, [math]\displaystyle{ X_{C} }[/math], that are conditioned on.

- The initial position of the ball is [math]\displaystyle{ X_{A} }[/math].

- If the ball cannot reach [math]\displaystyle{ X_{B} }[/math], then the nodes [math]\displaystyle{ X_{A} }[/math] and [math]\displaystyle{ X_{B} }[/math] must be conditionally independent.

- If the ball can reach [math]\displaystyle{ X_{B} }[/math], then the nodes [math]\displaystyle{ X_{A} }[/math] and [math]\displaystyle{ X_{B} }[/math] are not necessarily independent.

The biggest challenge in the Bayes Ball Algorithm is to determine what happens to a ball going from node X to node Z as it passes through node Y. The ball could continue its route to Z or it could be blocked. It is important to note that the balls are allowed to travel in any direction, independent of the direction of the edges in the graph.

We use the canonical graphs previously studied to determine the route of a ball traveling through a graph. Using these three graphs we establish base rules which can be extended upon for more general graphs.

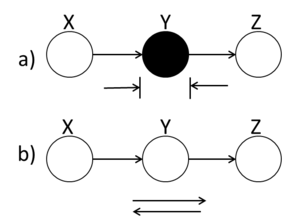

Markov Chain

A ball traveling from X to Z or from Z to X will be blocked at node Y if this node is shaded. Alternatively, if Y is unshaded, the ball will pass through.

In (Fig. 12(a)), X and Z are conditionally independent ( [math]\displaystyle{ X ~\amalg~ Z ~|~ Y }[/math] ) while in (Fig.12(b)) X and Z are not necessarily independent.

Hidden Cause

A ball traveling through Y will be blocked at Y if it is shaded. If Y is unshaded, then the ball passes through.

(Fig. 13(a)) demonstrates that X and Z are conditionally independent when Y is shaded.

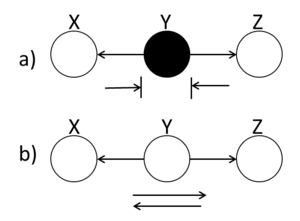

Explaining-Away

A ball traveling through Y is blocked when Y is unshaded. If Y is shaded, then the ball passes through. Hence, X and Z are conditionally independent when Y is unshaded.
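The three rules can be combined into a reachability search over the whole graph. The following is a minimal sketch rather than a definitive implementation: the function name `d_separated` and the dictionary representation of the graph are assumptions. It also uses the standard refinement that a converging node lets the ball through when the node itself or any of its descendants is shaded. The usage lines check it on the six-node graph whose factorization was given earlier.

```python
from collections import deque


def d_separated(parents, start, target, observed):
    """Return True if no ball starting at `start` can reach `target`."""
    children = {n: set() for n in parents}
    for node, ps in parents.items():
        for p in ps:
            children[p].add(node)

    # Shaded nodes together with all of their ancestors. A converging node
    # passes the ball only if it belongs to this set.
    shaded_or_ancestor = set()
    stack = list(observed)
    while stack:
        n = stack.pop()
        if n not in shaded_or_ancestor:
            shaded_or_ancestor.add(n)
            stack.extend(parents[n])

    # "up": the ball arrived from a child; "down": it arrived from a parent.
    queue = deque([(start, "up")])
    visited = set()
    while queue:
        node, direction = queue.popleft()
        if (node, direction) in visited:
            continue
        visited.add((node, direction))
        if node == target and node not in observed:
            return False
        if direction == "up" and node not in observed:
            # Chain or diverging connection through an unshaded node.
            queue.extend((p, "up") for p in parents[node])
            queue.extend((c, "down") for c in children[node])
        elif direction == "down":
            if node not in observed:
                queue.extend((c, "down") for c in children[node])
            if node in shaded_or_ancestor:
                # Converging connection that has been activated.
                queue.extend((p, "up") for p in parents[node])
    return True


six_node = {1: set(), 2: {1}, 3: {1}, 4: {2}, 5: {3}, 6: {2, 5}}
print(d_separated(six_node, 4, 1, {2}))
print(d_separated(six_node, 2, 3, {1, 6}))
```

A statement about sets of nodes, such as a node being independent of two others, can be checked by calling the function once per target node.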

Bayes Ball Examples

Example 1

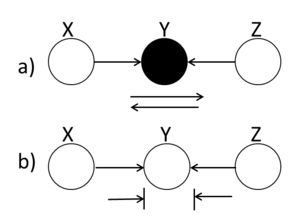

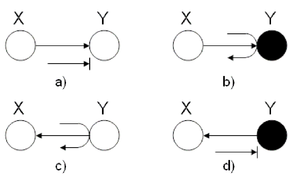

In this first example, we wish to identify the behavior of a ball going from X to Y in two-node graphs.

The four graphs in (Fig. 15) show different scenarios. In (a), the ball is blocked at Y. In (b) the ball passes through Y. In both of these cases, we use the rules of the Explaining Away Canonical Graph (refer to Fig. 14). Finally, for the last two graphs, we used the rules of the Hidden Cause Canonical Graph (Fig. 13). In (c), the ball passes through Y while in (d), the ball is blocked at Y.

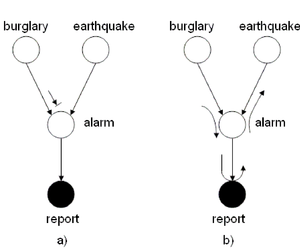

Example 2

Suppose your home is equipped with an alarm system. There are two possible causes for the alarm to ring:

- Your house is being burglarized

- There is an earthquake

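Hence, we define the following events:

- burglary: your house is being burglarized

- earthquake: there is an earthquake

- alarm: the alarm rings

- report: a police report is issued

The burglary and earthquake events are independent when we do not know whether the alarm has rung. However, once we observe that the alarm rings, the burglary and the earthquake events are not necessarily independent. Also, if the alarm rings then it is possible for a police report to be issued.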

We can use the Bayes Ball Algorithm to deduce conditional independence properties from the graph. Firstly, consider figure (16(a)) and assume we are trying to determine whether there is conditional independence between the burglary and earthquake events. In figure (16(a)), a ball starting at the burglary event is blocked at the alarm node.

Nonetheless, this does not prove that the burglary and earthquake events are independent. Indeed, (Fig. 16(b)) disproves this as we have found an alternate path from burglary to earthquake passing through report. It follows that [math]\displaystyle{ burglary ~\cancel{\amalg}~ earthquake ~|~ report }[/math]

Example 3

Referring to figure (Fig. 17), we wish to determine whether the following conditional independence statements are true:

[math]\displaystyle{ X_{1} ~\amalg~ X_{3} ~|~ X_{2} }[/math]

[math]\displaystyle{ X_{1} ~\amalg~ X_{5} ~|~ \{X_{3}, X_{4}\} }[/math]

To determine if the first statement is true, we shade node [math]\displaystyle{ X_{2} }[/math]. This blocks balls traveling from [math]\displaystyle{ X_{1} }[/math] to [math]\displaystyle{ X_{3} }[/math] and proves that the first statement is valid.

After shading nodes [math]\displaystyle{ X_{3} }[/math] and [math]\displaystyle{ X_{4} }[/math] and applying the Bayes Ball Algorithm, we find that the ball travelling from [math]\displaystyle{ X_{1} }[/math] to [math]\displaystyle{ X_{5} }[/math] is blocked at [math]\displaystyle{ X_{3} }[/math]. Similarly, a ball going from [math]\displaystyle{ X_{5} }[/math] to [math]\displaystyle{ X_{1} }[/math] is blocked at [math]\displaystyle{ X_{4} }[/math]. This proves that the second statement also holds.

Example 4

Consider figure (Fig. 18). Using the Bayes Ball Algorithm we wish to determine if each of the following statements is valid:

[math]\displaystyle{ X_{4} ~\amalg~ \{X_{1}, X_{3}\} ~|~ X_{2} }[/math]

[math]\displaystyle{ X_{1} ~\amalg~ X_{6} ~|~ \{X_{2}, X_{3}\} }[/math]

[math]\displaystyle{ X_{2} ~\amalg~ X_{3} ~|~ \{X_{1}, X_{6}\} }[/math]

To disprove the first statement, we must find a path from [math]\displaystyle{ X_{4} }[/math] to [math]\displaystyle{ X_{1} }[/math] or [math]\displaystyle{ X_{3} }[/math] when [math]\displaystyle{ X_{2} }[/math] is shaded (Refer to Fig. 19(a)). Since there is no route from [math]\displaystyle{ X_{4} }[/math] to [math]\displaystyle{ X_{1} }[/math] or [math]\displaystyle{ X_{3} }[/math], we conclude that the first statement is true.

Similarly, we can show that there does not exist a path between [math]\displaystyle{ X_{1} }[/math] and [math]\displaystyle{ X_{6} }[/math] when [math]\displaystyle{ X_{2} }[/math] and [math]\displaystyle{ X_{3} }[/math] are shaded (Refer to Fig. 19(b)). Hence, the second statement is true.

Finally, (Fig. 19(c)) shows that there is a route from [math]\displaystyle{ X_{2} }[/math] to [math]\displaystyle{ X_{3} }[/math] when [math]\displaystyle{ X_{1} }[/math] and [math]\displaystyle{ X_{6} }[/math] are shaded. This proves that the third statement is false.

Theorem 2.

Define [math]\displaystyle{ p(x_{v}) = \prod_{i=1}^{n}{p(x_{i} ~|~ x_{\pi_{i}})} }[/math] to be the factorization of the joint distribution as a product of the local conditional probabilities of a directed graph.

Let [math]\displaystyle{ D_{1} = \{ p(x_{v}) = \prod_{i=1}^{n}{p(x_{i} ~|~ x_{\pi_{i}})}\} }[/math]

Let [math]\displaystyle{ D_{2} = \{ p(x_{v}): }[/math]satisfy all conditional independence statements associated with a graph [math]\displaystyle{ \} }[/math].

Then [math]\displaystyle{ D_{1} = D_{2} }[/math].

Example 5

Given the following Bayesian network (Fig. 19), determine whether the following statements are true or false.

a.) [math]\displaystyle{ x_4 \perp \{x_1,x_3\} ~|~ x_2 }[/math]

Ans. True

b.) [math]\displaystyle{ x_1 \perp x_6 ~|~ \{x_2,x_3\} }[/math]

Ans. True

c.) [math]\displaystyle{ x_2 \perp x_3 ~|~ \{x_1,x_6\} }[/math]

Ans. False

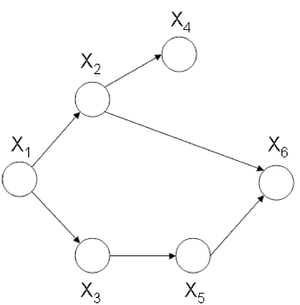

Undirected Graphical Model

Generally, graphical models are divided into two major classes, directed graphs and undirected graphs. Directed graphs and their characteristics were described previously. In this section we discuss undirected graphical models, which are also known as Markov random fields. We can define an undirected graphical model with a graph [math]\displaystyle{ G = (V, E) }[/math] where [math]\displaystyle{ V }[/math] is a set of vertices corresponding to a set of random variables and [math]\displaystyle{ E }[/math] is a set of undirected edges as shown in (Fig.20).

Conditional independence

For directed graphs, the Bayes ball method was defined to determine the conditional independence properties of a given graph. We can also employ the Bayes ball algorithm to examine conditional independence in undirected graphs. Here the Bayes ball rule is simpler and more intuitive. Considering (Fig.21), a ball can be thrown either from x to z or from z to x if y is not observed. In other words, if y is not observed a ball thrown from x can reach z and vice versa. On the contrary, a shaded y blocks the ball and makes x and z conditionally independent. With this definition one can declare that in an undirected graph, a node is conditionally independent of its non-neighbors given its neighbors. Technically speaking, [math]\displaystyle{ X_A }[/math] is independent of [math]\displaystyle{ X_C }[/math] given [math]\displaystyle{ X_B }[/math] if the set of nodes [math]\displaystyle{ X_B }[/math] separates the nodes [math]\displaystyle{ X_A }[/math] from the nodes [math]\displaystyle{ X_C }[/math]. Hence, if every path from a node in [math]\displaystyle{ X_A }[/math] to a node in [math]\displaystyle{ X_C }[/math] includes at least one node in [math]\displaystyle{ X_B }[/math], then we claim that [math]\displaystyle{ X_A \perp X_C | X_B }[/math].
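The separation criterion amounts to a plain graph search: remove the shaded nodes and check whether anything in the first set can still reach the second. Below is a minimal sketch, assuming the graph is stored as an adjacency dictionary; the function name `separated` and the three-node chain used to exercise it are illustrative.

```python
from collections import deque


def separated(adjacency, a_nodes, c_nodes, b_nodes):
    """True if every path from a_nodes to c_nodes passes through b_nodes."""
    blocked = set(b_nodes)
    queue = deque(n for n in a_nodes if n not in blocked)
    seen = set(queue)
    while queue:
        node = queue.popleft()
        if node in c_nodes:
            return False  # reached the other set without crossing b_nodes
        for neighbor in adjacency[node]:
            if neighbor not in blocked and neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return True


# Hypothetical chain x - y - z.
chain = {"x": {"y"}, "y": {"x", "z"}, "z": {"y"}}
print(separated(chain, {"x"}, {"z"}, {"y"}))   # y shaded
print(separated(chain, {"x"}, {"z"}, set()))   # nothing shaded
```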

Question

Is it possible to convert undirected models to directed models or vice versa?

In order to answer this question, consider (Fig.22), which illustrates an undirected graph with four nodes: [math]\displaystyle{ X }[/math], [math]\displaystyle{ Y }[/math], [math]\displaystyle{ Z }[/math] and [math]\displaystyle{ W }[/math]. We can define two facts using the Bayes ball method:

[math]\displaystyle{ X \perp Y | \{W,Z\} }[/math]

[math]\displaystyle{ W \perp Z | \{X,Y\} }[/math]

It is simple to see there is no directed graph satisfying both conditional independence properties. Recalling that directed graphs are acyclic, converting the undirected graph to a directed graph results in at least one node at which the arrows are inward-pointing (a v-structure). Without loss of generality we can assume that node [math]\displaystyle{ Z }[/math] has two inward-pointing arrows. By the conditional independence semantics of directed graphs, we have [math]\displaystyle{ X \perp Y|W }[/math], yet the [math]\displaystyle{ X \perp Y|\{W,Z\} }[/math] property does not hold.

On the other hand, (Fig.23) depicts a directed graph which is characterized by the singleton independence statement [math]\displaystyle{ X \perp Y }[/math]. There is no undirected graph on three nodes which can be characterized by this singleton statement.

Basically, if we consider the set of all distributions over [math]\displaystyle{ n }[/math] random variables, one subset of them can be represented by directed graphical models, while another subset can be represented by undirected graphical models. There is a narrow intersection between these two subsets, in which a distribution may be represented by either a directed or an undirected graph.

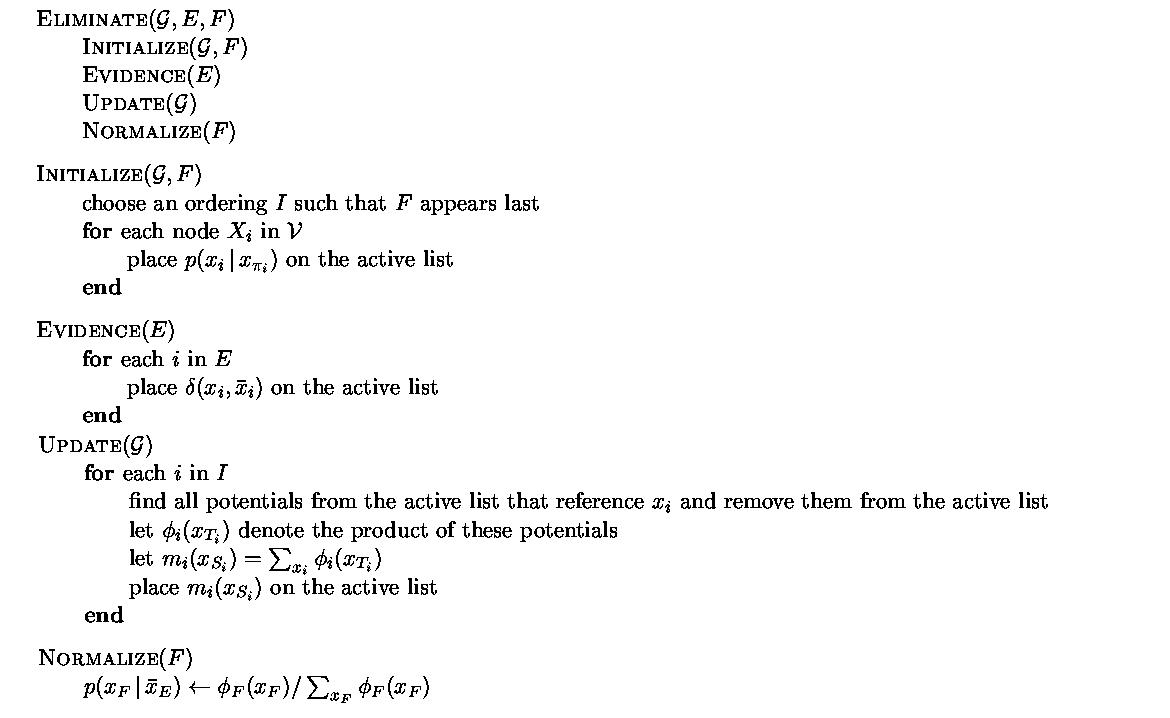

Elimination Algorithm on Directed Graphs

Given a graph G =(V,E), an evidence set E, and a query node F, we first choose an elimination ordering I such that F appears last in this ordering. The following figure shows the steps required to perform the elimination algorithm for probabilistic inference on directed graphs:

Example:

For the graph in figure 21, [math]\displaystyle{ G =(V,E) }[/math]. Consider once again that node [math]\displaystyle{ x_1 }[/math] is the query node and [math]\displaystyle{ x_6 }[/math] is the evidence node.

[math]\displaystyle{ I = \left\{6,5,4,3,2,1\right\} }[/math] (1 should be the last node, ordering is crucial)

We must now create an active list. There are two rules that must be followed in order to create this list.

- For [math]\displaystyle{ i \in V }[/math] put [math]\displaystyle{ p(x_i|x_{\pi_i}) }[/math] in the active list.

- For [math]\displaystyle{ i \in E }[/math] (the evidence nodes) put the evidence potential [math]\displaystyle{ \delta(x_i, \overline{x_i}) }[/math] in the active list.

Here, our active list is: [math]\displaystyle{ p(x_1), p(x_2|x_1), p(x_3|x_1), p(x_4|x_2), p(x_5|x_3),\underbrace{p(x_6|x_2, x_5)\delta{(\overline{x_6},x_6)}}_{\phi_6(x_2,x_5, x_6),\ \sum_{x_6}{\phi_6}=m_{6}(x_2,x_5) } }[/math]

We first eliminate node [math]\displaystyle{ X_6 }[/math]. We place [math]\displaystyle{ m_{6}(x_2,x_5) }[/math] on the active list, having removed [math]\displaystyle{ X_6 }[/math]. We now eliminate [math]\displaystyle{ X_5 }[/math].

[math]\displaystyle{ m_{5}(x_2,x_3) = \sum_{x_5} p(x_5|x_3)\, m_{6}(x_2,x_5) }[/math]

Likewise, we can also eliminate [math]\displaystyle{ X_4, X_3, X_2 }[/math] (which yields the unnormalized conditional probability [math]\displaystyle{ p(x_1|\overline{x_6}) }[/math]) and [math]\displaystyle{ X_1 }[/math]. Eliminating [math]\displaystyle{ X_1 }[/math] then yields [math]\displaystyle{ m_1 = \sum_{x_1}{\phi_1(x_1)} }[/math], which is the normalization factor [math]\displaystyle{ p(\overline{x_6}) }[/math].
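The elimination steps for this example can be written out directly. The sketch below assumes binary variables and randomly generated conditional probability tables (the tables, the helper names and the observed value of the evidence node are all illustrative). It eliminates in the order 6, 5, 4, 3, 2 and compares the result with a brute-force sum over the full joint.

```python
import random
from itertools import product

B = (0, 1)  # every variable is binary in this sketch


def random_cpt(n_parents, rng):
    """Hypothetical CPT mapping parent values to P(child=1 | parents)."""
    return {pa: rng.random() for pa in product(B, repeat=n_parents)}


def prob(cpt, value, *parent_values):
    """P(child=value | parents=parent_values)."""
    p_one = cpt[parent_values]
    return p_one if value == 1 else 1.0 - p_one


def eliminate(cpts, x6_bar):
    """p(x1 | x6 = x6_bar) by eliminating nodes in the order 6, 5, 4, 3, 2."""
    c1, c2, c3, c4, c5, c6 = cpts
    # The evidence potential keeps only the term with x6 equal to x6_bar.
    m6 = {(x2, x5): prob(c6, x6_bar, x2, x5) for x2 in B for x5 in B}
    m5 = {(x2, x3): sum(prob(c5, x5, x3) * m6[(x2, x5)] for x5 in B)
          for x2 in B for x3 in B}
    m4 = {x2: sum(prob(c4, x4, x2) for x4 in B) for x2 in B}  # always 1
    m3 = {(x1, x2): sum(prob(c3, x3, x1) * m5[(x2, x3)] for x3 in B)
          for x1 in B for x2 in B}
    m2 = {x1: sum(prob(c2, x2, x1) * m4[x2] * m3[(x1, x2)] for x2 in B)
          for x1 in B}
    unnormalized = {x1: prob(c1, x1) * m2[x1] for x1 in B}  # p(x1, x6_bar)
    evidence_prob = sum(unnormalized.values())              # p(x6_bar)
    return {x1: unnormalized[x1] / evidence_prob for x1 in B}


def brute_force(cpts, x6_bar):
    """Same quantity obtained by summing the full joint directly."""
    c1, c2, c3, c4, c5, c6 = cpts
    unnormalized = {0: 0.0, 1: 0.0}
    for x1, x2, x3, x4, x5 in product(B, repeat=5):
        unnormalized[x1] += (prob(c1, x1) * prob(c2, x2, x1)
                             * prob(c3, x3, x1) * prob(c4, x4, x2)
                             * prob(c5, x5, x3) * prob(c6, x6_bar, x2, x5))
    total = sum(unnormalized.values())
    return {x1: unnormalized[x1] / total for x1 in B}


rng = random.Random(0)
cpts = [random_cpt(k, rng) for k in (0, 1, 1, 1, 1, 2)]
print(eliminate(cpts, 1))
print(brute_force(cpts, 1))
```

Each intermediate table depends on at most two variables, whereas the brute-force sum visits every joint assignment; this is the saving that elimination provides.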

Elimination Algorithm on Undirected Graphs

The first task is to find the maximal cliques and their associated potential functions.

maximal clique: [math]\displaystyle{ \left\{x_1, x_2\right\} }[/math], [math]\displaystyle{ \left\{x_1, x_3\right\} }[/math], [math]\displaystyle{ \left\{x_2, x_4\right\} }[/math], [math]\displaystyle{ \left\{x_3, x_5\right\} }[/math], [math]\displaystyle{ \left\{x_2,x_5,x_6\right\} }[/math]

potential functions: [math]\displaystyle{ \varphi{(x_1,x_2)},\varphi{(x_1,x_3)},\varphi{(x_2,x_4)}, \varphi{(x_3,x_5)} }[/math] and [math]\displaystyle{ \varphi{(x_2,x_5,x_6)} }[/math]

[math]\displaystyle{ p(x_1|\overline{x_6})=p(x_1,\overline{x_6})/p(\overline{x_6})\cdots\cdots\cdots\cdots\cdots(*) }[/math]

[math]\displaystyle{ p(x_1,\overline{x_6})=\frac{1}{Z}\sum_{x_2,x_3,x_4,x_5,x_6}\varphi{(x_1,x_2)}\varphi{(x_1,x_3)}\varphi{(x_2,x_4)}\varphi{(x_3,x_5)}\varphi{(x_2,x_5,x_6)}\delta{(x_6,\overline{x_6})} }[/math]

The [math]\displaystyle{ \frac{1}{Z} }[/math] looks crucial, but in fact it has no effect because for (*) both the numerator and the denominator have the [math]\displaystyle{ \frac{1}{Z} }[/math] term. So in this case we can just cancel it.

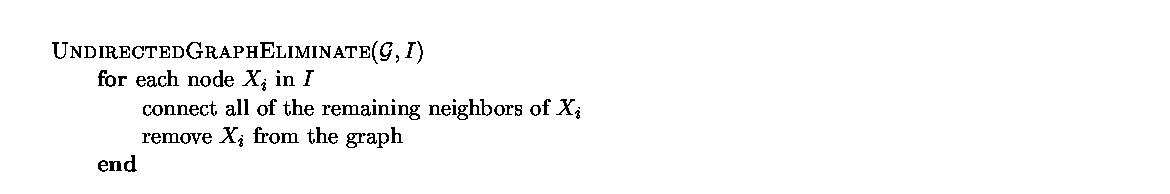

The general rule for elimination in an undirected graph is that we can remove a node as long as we connect all of the neighbors of that node together. Effectively, we form a clique out of the neighbors of that node.

The algorithm used to eliminate nodes in an undirected graph is given in figure 23.

Example:

For the graph G in figure 24

when we remove x1, G becomes as in figure 25

while if we remove x2, G becomes as in figure 26

An interesting thing to point out is that the order of the elimination matters a great deal. Consider the two results. If we remove one node the graph complexity is slightly reduced, but if we remove the other node instead the complexity is significantly increased. We care about the complexity of the graph because it determines the number of calculations required to answer questions about that graph. If we had a huge graph with thousands of nodes, the order of node removal would be key to the complexity of the algorithm. Unfortunately, there is no known efficient algorithm that produces the optimal node removal order for a general graph; finding such an order is NP-hard.
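The effect of the ordering can be seen by simulating the node removal rule on a small graph and counting the edges that have to be added. The sketch below uses a hypothetical star graph, not the graph of the figures, and the function name `eliminate_in_order` is illustrative.

```python
def eliminate_in_order(adjacency, order):
    """Simulate graph elimination.

    Returns (number of fill-in edges added, size of the largest clique formed
    by a removed node together with its neighbors at the time of removal).
    """
    adj = {node: set(nbrs) for node, nbrs in adjacency.items()}
    fill_edges = 0
    largest = 0
    for node in order:
        nbrs = sorted(adj.pop(node))
        largest = max(largest, len(nbrs) + 1)
        for u in nbrs:
            adj[u].discard(node)
        # Connect all remaining neighbors of the removed node to each other.
        for i, u in enumerate(nbrs):
            for v in nbrs[i + 1:]:
                if v not in adj[u]:
                    adj[u].add(v)
                    adj[v].add(u)
                    fill_edges += 1
    return fill_edges, largest


# Hypothetical star graph: hub 0 connected to leaves 1..4.
star = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0}}
print(eliminate_in_order(star, [1, 2, 3, 4, 0]))  # leaves first: (0, 2)
print(eliminate_in_order(star, [0, 1, 2, 3, 4]))  # hub first: (6, 5)
```

Removing the leaves first never adds an edge, while removing the hub first turns the four leaves into a clique. Since the cost of each elimination step grows with the size of the clique it creates, the second ordering is the more expensive one.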