stat841f14: Difference between revisions


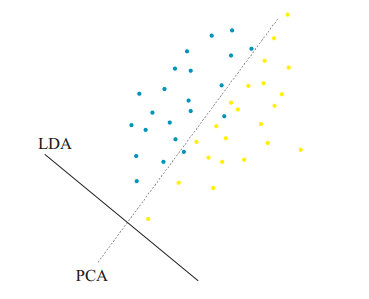

Principal Component Analysis (PCA), first invented by [http://en.wikipedia.org/wiki/Karl_Pearson Karl Pearson] in 1901, is a statistical technique for data analysis. Its main purpose is to reduce the dimensionality of the data.

Suppose there is a set of data points in a d-dimensional space. The goal of PCA is to find a linear subspace with lower dimensionality <math> \, p </math> (<math>p \leq d </math>), such that maximum variance is retained in this lower-dimensional space. The linear subspace can be specified by p orthogonal vectors, such as <math>\, u_1 , u_2 , ... , u_p</math>, which form a new coordinate system. Ideally <math>\, p \ll d </math> (the worst case would be <math>\, p = d </math>). These vectors are called the '''Principal Components'''. In other words, PCA aims to reduce the dimensionality of the data while preserving its information (or minimizing the loss of information). Information comes from variation: capturing more variation in the lower-dimensional subspace means that more information about the original data is preserved.

For example, if all data points have the same value along one dimension (as depicted in the figures below), then that dimension does not carry any information. To preserve the original information in a lower-dimensional subspace, the subspace needs to contain the components (or dimensions) along which the data has most of its variability. In this case, we can ignore the dimension where all data points have the same value.

In practical problems, however, it is usually not possible to find a linear subspace of lower dimensionality in which no information about the data is lost, so some loss of information is inevitable. Through PCA, we try to reduce this loss and capture most of the features of the data.

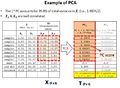

The figure below demonstrates an example of PCA. Data is transformed from the original 3D space to a 2D coordinate system where each coordinate is a principal component.

<gallery>

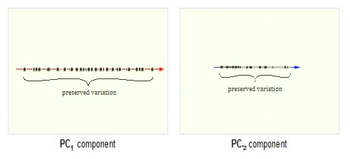

Now, consider the ability of two of the above example components (PC<sub>1</sub> and PC<sub>2</sub>) to retain information from the original data. The data in the original space is projected onto each of these two components separately in the figures below. Notice that PC<sub>1</sub> is better able to capture the variation in the original data than PC<sub>2</sub>. If the goal were to reduce the original d=3 dimensional data to p=1 dimension, PC<sub>1</sub> would be preferable to PC<sub>2</sub>.

[[Image:PCA 4.png|thumb|center|350px|Comparison of two different components used for dimensionality reduction]]

For example<ref>https://onlinecourses.science.psu.edu/stat857/sites/onlinecourses.science.psu.edu.stat857/files/lesson05/PCA_plot.gif</ref>, in the top left corner of the image below, the point <math> x_1 </math> is shown in a two-dimensional space and its coordinates are <math>(x_i, 1)</math> and <math>(x_i, 2) </math>

All the red points in the plot are represented by their projected values on the two original coordinates (Feature 1, Feature 2).

Image:PCA in Neuroscience.png|PCA in Neuroscience. Data in different classes in 3 dimensional space is mapped to a 2 dimensional space using PCA. The mapped data is clearly linearly separable.

</gallery>

Tracking the useful features based on PCA:

In real-world applications, we often need to know which features are important after dimensionality reduction, so that we can use those features as a new data set for the rest of the project. To do this, we examine the principal components that have been chosen. The entries of a principal component (eigenvector) represent the weights of the original features within that component. Based on these weights we can judge the importance of each feature in the principal component, and then choose the several most important features from the original data instead of using all of them.

=== Mathematical details ===

PCA is a transformation from the original (d-dimensional) space to a linear subspace with a new (p-dimensional) coordinate system. Each coordinate of this subspace is called a '''Principal Component'''. The first principal component is the coordinate of this system along which the data points have the maximum variation. That is, if we project the data points along this coordinate, the maximum variance of the data is obtained (compared to any other vector in the original space). The second principal component is the coordinate in the direction of the second greatest variance of the data, and so on.

For example, if we are mapping from 3 dimensional space to 2 dimensions, the first two principal components span the 2 dimensional plane which has the maximum variance of data when each 3D data point is mapped to its nearest point on that plane.

Let us denote the basis of the original space by <math> \mathbf{v_1}</math>, <math>\mathbf{v_2}</math>, ... , <math>\mathbf{v_d}</math>. Our goal is to find the principal components (the coordinates of the linear subspace), denoted by <math>\mathbf{u_1}</math>, <math>\mathbf{u_2}</math>, ... , <math>\mathbf{u_p}</math>, with <math> p \leq d </math>. First, we would like to obtain the first principal component <math>\mathbf{u_1}</math>, the component in the direction of maximum variance. This component can be treated as a vector in the original space and so can be written as a linear combination of the basis vectors of the original space.

<math> \Phi = Var(\mathbf{w}^T \mathbf{x_i}) = \mathbf{w}^T \mathbf{S} \mathbf{w} </math>

We would like to maximize <math> \Phi </math> over the set of all vectors <math> \mathbf{w} </math> in the original space. But this problem is not yet well-defined, because for any choice of <math> \mathbf{w} </math> we can increase <math> \Phi </math> simply by multiplying <math> \mathbf{w} </math> by a scalar greater than one, so the maximum goes to infinity, which is not a proper solution to our problem. So we add the following constraint to bound the length of the vector <math> \mathbf{w} </math>:

<math> \max_{\mathbf{w}} \ \Phi = \mathbf{w}^T \mathbf{S} \mathbf{w} </math>

subject to : <math>\mathbf{w}^T \mathbf{w} =1 </math>

This constraint makes <math> \mathbf{w} </math> a unit vector in d-dimensional Euclidean space, and as a result the optimization problem is no longer unconstrained.
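The effect of the unit-length constraint can be illustrated numerically. The following is a minimal sketch, not part of the original notes: the data values and the helper names <code>covariance</code>, <code>quad_form</code> and <code>first_component</code> are made up for illustration, and power iteration is used here only as one simple way to find the maximizer, under the assumption that the starting vector is not orthogonal to it.

```python
import math

def covariance(points):
    """Sample covariance matrix S (d x d) of a list of d-dimensional points."""
    n, d = len(points), len(points[0])
    mean = [sum(p[k] for p in points) / n for k in range(d)]
    centered = [[p[k] - mean[k] for k in range(d)] for p in points]
    return [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(d)]
            for a in range(d)]

def quad_form(S, w):
    """Phi = w^T S w, the variance of the data projected onto w."""
    d = len(w)
    return sum(w[a] * S[a][b] * w[b] for a in range(d) for b in range(d))

def first_component(S, iters=200):
    """Power iteration: apply S repeatedly and renormalize so that w^T w = 1."""
    d = len(S)
    w = [1.0 / math.sqrt(d)] * d
    for _ in range(iters):
        w = [sum(S[a][b] * w[b] for b in range(d)) for a in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        w = [x / norm for x in w]
    return w

# Hypothetical 2-dimensional data set (illustrative values only).
points = [(2.0, 1.9), (0.5, 0.7), (-1.0, -1.2), (-2.0, -1.8), (1.0, 0.6), (-0.5, -0.2)]
S = covariance(points)
w = first_component(S)

phi_unit = quad_form(S, w)                        # Phi for the unit-length w
phi_scaled = quad_form(S, [3.0 * x for x in w])   # scaling w by 3 multiplies Phi by 9
print(w, phi_unit, phi_scaled)
```

Without the constraint, <code>phi_scaled</code> shows that the objective can be inflated at will by rescaling; with it, the maximizer is a direction only.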

Using [http://en.wikipedia.org/wiki/Lagrange_multiplier Lagrange Multiplier] technique we have:

and make another matrix <math> \mathbf{X}^* </math> simply by subtracting the mean of data points from <math> \mathbf{X} </math>.

<math> \mathbf{X}^* = \mathbf{X} - \mu_X[1, 1, \dots , 1], \quad \mu_X = \frac{1}{n} \sum_{i=1}^n x_i </math>

Then we will get a zero-mean version of our data points, for which <math> \mathbf{X^*} \mathbf{X^{*^T}} </math> is, up to a constant scaling factor, the covariance matrix.

[http://en.wikipedia.org/wiki/Principal_component_analysis PCA] is a method to reduce dimensions in data or to extract features from data.

Given a data point in vector form <math>\,x</math>, the goal of PCA is to map <math>\,x</math> to <math>\,y</math>, where <math>\,x \isin \real^d</math> and <math>\,y \isin \real^p</math>, such that p is much less than d. For example, we could map a two-dimensional data point onto any one-dimensional vector in the original 2D space, or we could map a three-dimensional data point onto any 2D plane in the original 3D space.

The transformation from d-dimensional space to p-dimensional space is chosen in such a way that maximum variance is retained in the lower dimensional subspace. This is useful because variance represents the main differences between the data points, which is exactly what we are trying to capture when we perform data visualization. For example, when taking 64-dimensional images of hand-written digits, projecting them onto 2-dimensional space using PCA captures a significant amount of the structure we care about.

<gallery>

In terms of data visualization, it is fairly clear why PCA is important. High dimensional data is sometimes impossible to visualize. Even a 3D space can be fairly difficult to visualize, while a 2D space, which can be printed on a piece of paper, is much easier to visualize. If a higher dimensional dataset can be reduced to only 2 dimensions, it can be easily represented by plots and graphs.

In the case of many data points <math>\,x_i</math> in d-dimensional space:

<math>\,X_{d \times n} = [x_1 \ x_2 \ \dots \ x_n]</math>

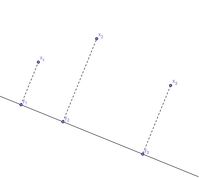

There is an infinite number of vectors in <math>\,\real</math><sup>p</sup> to which the points in X can be mapped. When mapping these data points to lower dimensions, information will be lost. To preserve as much information as possible, the points are mapped to a vector in <math>\real</math><sup>p</sup> which will preserve as much variation in the data as possible. In other words, the data is mapped to the vector in <math>\real</math><sup>p</sup> that will have maximum [http://en.wikipedia.org/wiki/Variance variance]. In the images below, the data points mapped onto '''u''' in Mapping 1 have greater variance than in Mapping 2, and thus preserve more of the information in the data.

<gallery>

Image:PCA2_1.png| Figure 1. Two dimensional point mapped onto one dimensional vector

Image:PCA2_2a.png| Figure 2. Mapping 1: Mapping of a two dimensional data set onto a one dimensional vector

Image:PCA2_2b.png| Figure 3. Mapping 2: Mapping of a two dimensional data set onto a one dimensional vector

</gallery>

Notice (Figure 1) that the projection of a single data point '''x''' on '''u''' is '''p''' = '''u'''<sup>T</sup>'''x''' (a scalar); thus the projection of multiple data points (Figure 2) onto '''u''' is computed by '''Y''' = '''u'''<sup>T</sup>'''X''', where '''X''' is the matrix representing multiple data points. The variance of '''Y''' can be calculated easily as '''Y''' is a 1 by n vector.

Extending to a higher dimensional space <math>\,\real</math><sup>q</sup> where q > 1, the [http://en.wikipedia.org/wiki/Covariance covariance] is used to find the variance of '''Y'''. The formula is given by: Var('''u'''<sup>T</sup>X) = '''u'''<sup>T</sup>S'''u''' where S is the sample covariance matrix of '''X'''.
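The identity Var('''u'''<sup>T</sup>X) = '''u'''<sup>T</sup>S'''u''' can be checked numerically. The sketch below uses made-up data and an arbitrary unit vector, and assumes the sample covariance is normalized by n − 1 (the identity holds equally with 1/n as long as both sides use the same convention).

```python
import math

# Columns of X are data points; here d = 2 and n = 5 (illustrative values only).
X = [[1.0, 2.0, 3.0, 4.0, 5.0],
     [2.0, 1.0, 4.0, 3.0, 6.0]]
d, n = len(X), len(X[0])

u = [math.cos(0.6), math.sin(0.6)]   # an arbitrary unit vector

# Projection Y = u^T X is a 1 x n row of scalars; compute its sample variance.
Y = [sum(u[k] * X[k][i] for k in range(d)) for i in range(n)]
mean_Y = sum(Y) / n
var_Y = sum((y - mean_Y) ** 2 for y in Y) / (n - 1)

# Sample covariance matrix S of X (d x d), then the quadratic form u^T S u.
mean = [sum(row) / n for row in X]
S = [[sum((X[a][i] - mean[a]) * (X[b][i] - mean[b]) for i in range(n)) / (n - 1)
      for b in range(d)] for a in range(d)]
uSu = sum(u[a] * S[a][b] * u[b] for a in range(d) for b in range(d))

print(var_Y, uSu)   # the two numbers agree up to rounding
```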

In finding the first principal component the objective is to find the direction '''u''' which will have the greatest variance of the points projected onto it. This can be expressed as the following optimization problem:

The restriction '''u'''<sup>T</sup>'''u''' = 1 is to bound the problem. If the length of '''u''' were not restricted, '''u'''<sup>T</sup>S'''u''' would go to infinity as the magnitude of '''u''' goes to infinity. The selection of 1 as the bound is arbitrary.

Also, from the three images above, we can see that the first principal component is the "line of best fit" that passes through the multi-dimensional mean of all data points and minimizes the sum of squared distances from the data points to the line. Although not illustrated, if the data were three-dimensional and a second principal component were needed, it would be found in the same fashion as the first, after the variation along the first component has been removed from the data points.

=== Lagrange multipliers ===

<math>\ L(x,y,\lambda)= f(x,y) - \lambda(g(x,y)-c)</math>

where the <math>\ \lambda </math> term may be either added or subtracted.

Which implies, where <math> \nabla\ </math> is the gradient, that:

==== Lagrange multiplier example ====

Consider the function <math>\ f(x,y) = x - y</math> subject to the constraint <math>\ g(x,y) = x^2 + y^2 = 1</math>. We would like to maximize this objective function.

Calculating the Lagrangian gives

:<math>\ \text{L}(x,y,\lambda) = x - y - \lambda (g(x,y) - 1) = x - y - \lambda (x^2 + y^2 - 1)</math>

This gives three partial derivatives:

:<math>\frac{\partial L}{\partial x} = 1 - 2\lambda x = 0, \quad \frac{\partial L}{\partial y} = -1 - 2\lambda y = 0, \quad \frac{\partial L}{\partial \lambda} = - (x^2 + y^2 - 1) = 0</math>
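As a numerical sanity check on these conditions, the sketch below scans the constraint circle directly and compares the best point found with the stationary point obtained by solving the first two equations for x and y and substituting into the third. The grid size and variable names are arbitrary choices for illustration.

```python
import math

def f(x, y):
    return x - y

# Parametrize the constraint x^2 + y^2 = 1 by an angle t and scan it on a grid.
steps = 100000
best_t = max((2 * math.pi * k / steps for k in range(steps)),
             key=lambda t: f(math.cos(t), math.sin(t)))
x_num, y_num = math.cos(best_t), math.sin(best_t)

# From the partial derivatives: x = 1/(2*lam), y = -1/(2*lam); substituting into
# the constraint gives lam = +1/sqrt(2) or lam = -1/sqrt(2). The positive root
# is the maximizer of f, the negative root the minimizer.
lam = 1 / math.sqrt(2)
x_max, y_max = 1 / (2 * lam), -1 / (2 * lam)

print((x_num, y_num), (x_max, y_max), f(x_max, y_max))
```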

This gives two solutions:

==== Connection of Singular Value Decomposition to PCA====

If <math>\,X</math> is centered (has a mean of 0) then <math>\,XX^T</math> is the covariance matrix (up to a constant scaling factor).

Recall that for a square, symmetric matrix <math>\,A</math>, we can write <math> \,A = W \Lambda W^T </math> where the columns of <math>\,W</math> are eigenvectors of <math>\,A</math>, and <math> \,\Lambda </math> is a diagonal matrix containing the eigenvalues.

Using the SVD of <math>\,X</math> we get <math>\,X=U \Sigma V^T</math>. Then consider <math>\,XX^T</math>:

<math> \ XX^T = (U \Sigma V^T)(U \Sigma V^T)^T</math>

<math> \ XX^T = U \Sigma V^T V \Sigma^T U^T</math>

<math> \ XX^T = U \Sigma^2 U^T</math>

Thus <math>\,U</math> is the matrix containing eigenvectors of the covariance matrix <math>\,XX^T</math>.

It is important to remember that <math>\,U</math> and <math>\,V</math> are unitary matrices, i.e.: <math>\, U^TU=UU^T=I</math> and <math>\,V^TV=VV^T=I</math>, each equality using the identity matrix of the appropriate dimension.

It is also interesting that the nonsquare matrix formed by taking a subset of the eigenvectors contained in <math>\,U</math> or <math>\,V</math> still has orthonormal columns, so that, for example, <math>\,V^TV=I</math> (the appropriate lower dimensional identity matrix), although <math>\,VV^T</math> is then no longer the identity.

=== Matlab example of dimensionality reduction using PCA ===

=== PCA Algorithm (Algorithm 1) ===

:'''Recover basis'''

::To find the basis for PCA we first center the data by removing the mean of the sample, then calculate the covariance:

<div class="center"><math>XX^T = \sum_{i=1}^n x_i x_i^T </math></div>

::Let '''<math>U</math>''' be the eigenvectors of '''<math>XX^T</math>''' corresponding to the top '''<math>\,p</math>''' eigenvalues.

:'''Encode training data'''

::To encode our data using PCA we let

<div class="center"><math>\,Y = U^TX</math></div>

::where '''<math>Y</math>''' is an encoded matrix of the original data

:'''Reconstruct training data'''

::To project our data back to the higher dimension

<div class="center"><math>\hat{X} = UY = UU^T X </math></div>

:'''Encode test example'''

<div class="center"><math>\,y = U^T x</math></div>

::where <math>\,y</math> is an encoding of <math>\,x</math>

:'''Reconstruct test example'''

<div class="center"><math>\hat{x} = Uy = UU^T x</math></div>
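The steps of Algorithm 1 can be sketched for the smallest interesting case, d = 2 and p = 1. This is an illustrative sketch only: the data values are made up, and the top eigenvector is obtained from the closed-form principal-axis angle of a symmetric 2 × 2 matrix, a shortcut that does not generalize to larger d (where a general eigenvalue routine would be needed).

```python
import math

# Training data as columns of X (d = 2, n = 5); illustrative values only.
X = [[2.5, 0.5, 2.2, 1.9, 3.1],
     [2.4, 0.7, 2.9, 2.2, 3.0]]
n = len(X[0])

# Recover basis: center the data, form XX^T = [[a, b], [b, c]], take its top eigenvector.
mean = [sum(row) / n for row in X]
Xc = [[v - m for v in row] for row, m in zip(X, mean)]
a = sum(v * v for v in Xc[0])
b = sum(v * w for v, w in zip(Xc[0], Xc[1]))
c = sum(w * w for w in Xc[1])
theta = 0.5 * math.atan2(2 * b, a - c)    # angle of the direction maximizing u^T (XX^T) u
U = [math.cos(theta), math.sin(theta)]    # d x 1 basis (p = 1)

# Encode training data: Y = U^T X (1 x n).
Y = [U[0] * x0 + U[1] * x1 for x0, x1 in zip(Xc[0], Xc[1])]

# Reconstruct training data: X_hat = U Y (d x n), in centered coordinates.
X_hat = [[U[k] * y for y in Y] for k in range(2)]

# Encode and reconstruct a test example, centered with the training mean.
x = [1.0 - mean[0], 1.5 - mean[1]]
y = U[0] * x[0] + U[1] * x[1]
x_hat = [U[0] * y, U[1] * y]

print(U, Y, x_hat)
```

The reconstruction lies on the line spanned by U, so the squared reconstruction error equals the part of the total variation not captured by the first component.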

=== Dual principal component analysis ===

:''' Motivation'''

::<p>In some cases the dimension of our data can be much larger than the number of data points '''<math>( d \gg n )</math>'''. For example, suppose we are interested in weekly closing rates of the Dow Jones Industrial Average, S&P 500 Index, and the NASDAQ Composite Index over the past forty years. In this example we only have three data points '''<math>( n = 3 )</math>''', but our dimension is over two thousand <math>( d \approx 2080 )</math>. Consequently, the matrix '''<math> XX^T</math>''' used to find the basis '''<math>U</math>''' is <math>2080\times2080</math>, which is computationally heavy to calculate. Another example is studying genes for patients, since the number of genes studied is likely much larger than the number of patients. When the data's dimensionality is high relative to the number of data points collected, a less compute-intensive way of recovering the basis is desirable. Calculating dual PCA is more efficient than PCA when the number of data points is small relative to the dimensionality of the data. This is intuitive because it lets us work with an <math>n \times n</math> decomposition over the data points rather than a <math>d \times d</math> decomposition over the dimensions.</p>

::Recall from Lecture 2 how we used singular value decomposition for PCA:

<div class="center"><math>\,X = U \Sigma V^T </math></div>

::Multiply both sides on the right by '''<math>V</math>''' to get:

<div class="center"><math>\,X V = U \Sigma V^T V </math></div>

::Since '''<math> V^T V = I </math>''' (the identity matrix) the SVD can be rearranged as

<div class="center"><math>\,X V = U \Sigma </math></div>

::Then, multiply both sides on the right by '''<math> \Sigma^{-1} </math>''' to get:

<div class="center"><math>\,U = X V \Sigma^{-1}</math></div>

::And so '''<math>U</math>''' can be calculated in terms of '''<math>V</math>''' and '''<math>\Sigma</math>'''. The columns of '''<math>V</math>''' are the eigenvectors of the <math>n \times n</math> matrix <math>X^T X</math>, so '''<math>V</math>''' is much easier to find than '''<math>U</math>''' because '''<math>n \ll d</math>'''.

:'''Algorithm'''

:We can replace all instances of <math>\,U</math> in Algorithm 1 with the dual form, <math>\,U = X V \Sigma^{-1} </math>, to obtain the algorithm for Dual PCA.

:<b>Recover Basis:</b><br />

::For the basis of Dual PCA, we calculate

<div class="center">'''<math>\,X^TX</math>''' </div>

::then let '''<math>\,V</math>''' be the eigenvectors of '''<math>\,X^TX</math>''' with respect to the top '''<math>\,p</math>''' eigenvalues. Finally we let '''<math>\,\Sigma</math>''' be the diagonal matrix of square roots of the top '''<math>\,p</math>''' eigenvalues.

:<b>Encode Training Data:</b> <br />

::Using Dual PCA to encode the data, let

<div class="center"><math>\,Y = U^TX = \Sigma V^T</math></div>

::where '''<math>Y</math>''' is an encoded matrix of the original data

:<b>Reconstruct Training Data:</b> <br />

::Project the data back to the higher dimension by

<div class="center"><math>\hat{X} = UY = U \Sigma V^T = X V \Sigma^{-1} \Sigma V^T = X V V^T</math></div>

:<b>Encode Test Example:</b> <br />

<div class="center"><math>\,y = U^T x = \Sigma^{-1} V^T X^T x</math></div>

::where <math>\,y</math> is an encoding of <math>\,x</math>

:<b>Reconstruct Test Example:</b>

<div class="center"><math>\hat{x} = Uy = UU^T x = X V \Sigma^{-2} V^T X^T x</math></div>

::Note that the steps '''Reconstruct Training Data''' and '''Reconstruct Test Example''' still depend on '''<math>d</math>''', and therefore will still be impractical when the original dimensionality of the data '''(<math>d</math>)''' is very large.
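The dual form can be illustrated on a small example with more features than data points. The sketch below is illustrative only: the data are made up, only the top component (p = 1) is computed, and power iteration on the n × n matrix X<sup>T</sup>X stands in for a general eigenvalue solver (the helper name <code>top_eigenpair</code> is hypothetical).

```python
import math

def top_eigenpair(G, iters=500):
    """Power iteration for the largest eigenvalue and eigenvector of a symmetric PSD matrix."""
    m = len(G)
    v = [1.0 / (k + 1) for k in range(m)]   # arbitrary non-zero starting vector
    for _ in range(iters):
        v = [sum(G[i][j] * v[j] for j in range(m)) for i in range(m)]
        norm = math.sqrt(sum(t * t for t in v))
        v = [t / norm for t in v]
    lam = sum(v[i] * G[i][j] * v[j] for i in range(m) for j in range(m))
    return lam, v

# d = 5 features, n = 3 data points (columns). Each row sums to zero, so X is centered.
X = [[ 2.0, -1.0, -1.0],
     [ 1.0,  1.0, -2.0],
     [ 0.5, -0.5,  0.0],
     [ 3.0, -2.0, -1.0],
     [-1.0,  0.0,  1.0]]
d, n = len(X), len(X[0])

# Recover basis from the small n x n matrix X^T X instead of the d x d matrix XX^T.
G = [[sum(X[k][i] * X[k][j] for k in range(d)) for j in range(n)] for i in range(n)]
lam, v = top_eigenpair(G)
sigma = math.sqrt(lam)
u = [sum(X[k][i] * v[i] for i in range(n)) / sigma for k in range(d)]   # U = X V Sigma^{-1}

# Encode training data: Y = Sigma V^T (1 x n); no d-dimensional product is needed.
Y = [sigma * vi for vi in v]

print(u, Y)
```

The vector <code>u</code> computed this way has unit length and is an eigenvector of XX<sup>T</sup> with the same eigenvalue, even though XX<sup>T</sup> itself is never formed in the recovery step.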

=== Kernel Principal Component Analysis ===

:'''Kernel methods'''

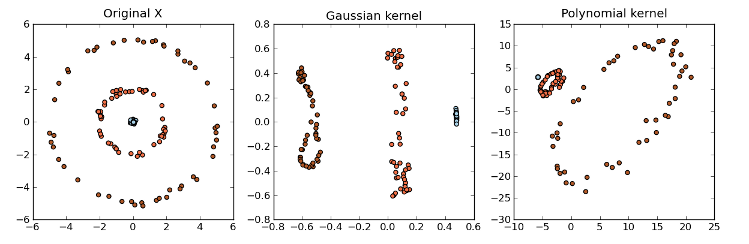

::<p>Kernel methods are a class of algorithms used for analyzing patterns and measuring similarity. They are used for finding relations within a data set. Kernel methods map data into a higher-dimensional space to make it easier to find these relations. While PCA is designed for linear variability, a lot of high-dimensional data is nonlinear. This is where Kernel PCA can be useful, helping to model the variability of data that lies on a nonlinear manifold. Generally speaking, a kernel is a measure of "similarity" between data points that can be evaluated without explicitly computing the dot product between pairs of data points in the higher-dimensional space. Different kernels measure similarity in different ways. For example, the dot product looks at whether vectors are orthogonal. The Gaussian kernel looks at whether two objects are similar on a scale of 0 to 1. Kernels can also measure similarity between structured objects such as trees. We will not go into too much detail at the moment.</p>

:Some common examples of kernels include:

# Linear kernel:<br/><math>\, k_{ij} = \langle x_{i},x_{j} \rangle</math>

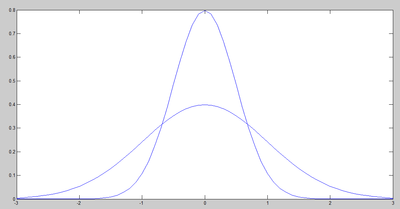

# Gaussian kernel:<br /><math>\, k_{ij} = \exp(-||x_{i}-x_{j}||^2/2\sigma^2)</math>

where <math>\ x_{i},x_{j} \isin \real^d</math>.

The kernel matrix <math>\, K_{n \times n} </math> is the matrix whose entry <math>\, k_{ij} </math> is the chosen kernel evaluated at the d-dimensional points <math>\ x_{i}</math> and <math>\ x_{j}</math>.
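Building the kernel matrix is straightforward once a kernel function is chosen. The sketch below uses made-up points and hypothetical helper names, and writes the Gaussian kernel in its standard form with a negative exponent, so that similarity lies between 0 and 1 and equals 1 for identical points.

```python
import math

def linear_kernel(xi, xj):
    """k_ij = <x_i, x_j>."""
    return sum(a * b for a, b in zip(xi, xj))

def gaussian_kernel(xi, xj, sigma=1.0):
    """k_ij = exp(-||x_i - x_j||^2 / (2 sigma^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(xi, xj))
    return math.exp(-sq_dist / (2 * sigma ** 2))

def kernel_matrix(points, kernel):
    """n x n matrix whose (i, j) entry is kernel(x_i, x_j)."""
    return [[kernel(xi, xj) for xj in points] for xi in points]

# Three illustrative points in d = 2 dimensions.
points = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0)]
K_gauss = kernel_matrix(points, gaussian_kernel)
K_lin = kernel_matrix(points, linear_kernel)

for row in K_gauss:
    print(row)
```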

:Other kernel examples can be found [http://crsouza.blogspot.ca/2010/03/kernel-functions-for-machine-learning.html here]:

[[File:Kernel_pca1.png|frame|center|alt=Kernel PCA|Source:http://mlpy.sourceforge.net/docs/3.2/dim_red.html]]

: '''Kernel PCA'''

::With Kernel PCA, we take the original (observed) space and map it to the feature space, and then map the feature space to an embedded space of lower dimension.

<div class="center"><math> X_{\text{observed space}} \rightarrow \Eta_{\text{feature space}} \rightarrow Y_{\text{embedded space}} </math></div>

::In the Kernel PCA algorithm, we can use the same argument as in PCA and again use the Singular Value Decomposition (SVD), where:

<div class="center"><math>\, \Phi(X) = U \Sigma V^T </math></div>

::where <math> U </math> contains the eigenvectors of '''<math> \Phi(X)\Phi(X)^T </math>'''

::The goal is to reduce the dimensionality from that of the space X to that of the space Y by passing through the feature space <math>\Eta</math> without having to know '''<math>\Phi(X)</math>''' exactly.

::The algorithm is similar to the Dual PCA algorithm except that the training and the test data cannot be reconstructed since '''<math>\Phi(X)</math>''' is unknown.

:'''Algorithm''' | |||

:<b>Recover Basis:</b><br /> | |||

::For the basis of Kernel PCA, we calculate | |||

< | <div class="center"><math>\, K = \Phi(X)^T \Phi(X)</math> </div> | ||

:<math> | ::using the kernel '''<math>K</math>''', and let '''<math>V</math>''' be the eigenvectors of '''<math>\Phi(X)^T \Phi(X)</math>''' corresponding to the top '''<math>p</math>''' eigenvalues. Finally, we let '''<math>\Sigma</math>''' be the diagonal matrix of the square roots of the top '''<math>p</math>''' eigenvalues. | ||

:<b>Encode Training Data:</b> <br /> | |||

::Using Kernel PCA to encode the data, let | |||

< | <div class="center"><math>\,Y = U^T \Phi(X) = \Sigma V^T </math></div> | ||

:<math> | ::Recall '''<math> U = \Phi(X) V \Sigma^{-1} </math>''': | ||

<div class="center"><math>\,Y = U^T \Phi(X) = \Sigma^{-1} V^T \Phi(X)^T \Phi(X) = \Sigma^{-1} V^T K(X,X) </math></div> | |||

:<math> | ::where '''<math>Y</math>''' is the encoding of the image of the original data under the map <math>\Phi</math>. '''<math>Y</math>''' is computable without knowledge of '''<math>\Phi(X)</math>''' via the kernel function. | ||

:<b>Reconstruct Training Data:</b> <br /> | |||

< | <div class="center"><math>\hat{\Phi(X)} = UY = UU^T\Phi(X)=\Phi(X)V\Sigma^{-1}\Sigma V^T=\Phi(X) V V^T</math> | ||

<math>\hat{X} = \Phi^{-1}(\hat{\Phi(X)})</math></div> | |||

: | ::However, '''<math>\Phi(X)</math>''' is unknown so we cannot reconstruct the data. | ||

:<math> | |||

:<b>Encode Test Example:</b> <br /> | |||

<div class="center"><math>\,y = U^T \Phi(x) = \Sigma^{-1} V^T \Phi(X)^T \Phi(x) = \Sigma^{-1} V^T K(X,x)</math></div> | |||

::This is possible because we know that '''<math>\Phi(X)^T \Phi(x) = K(X,x)</math>'''. | |||

:<math> | |||

:Recall | |||

::'''<math> U = \Phi(X) V \Sigma^{-1} </math>''': | |||

''' | ::'''<math>\Phi(X)</math>''' is the transformation of the training data <math>X_{d \times n}</math> while '''<math>\Phi(x)</math>''' is the transformation of the test example <math>x_{d \times 1}</math> | ||


<b>Reconstruct Test Example:</b> | :<b>Reconstruct Test Example:</b> | ||

<div class="center"><math>\hat{\Phi(x)} = Uy = UU^T \Phi(x) = \Phi(X) V \Sigma^{-2} V^T \Phi(X)^T \Phi(x) = \Phi(X) V \Sigma^{-2} V^T K(X,x)</math></div> | |||

Once again, since '''<math>\Phi(X)</math>''' is unknown, we cannot reconstruct the data. | ::Once again, since '''<math>\Phi(X)</math>''' is unknown, we cannot reconstruct the data. | ||
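The encoding steps above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the helper names <code>kernel_pca_fit</code> and <code>kernel_pca_encode</code> are made up for this example, the top eigenvalues are assumed to be strictly positive, and a linear kernel is used so that <math>\Phi(X) = X</math> and the matrix <math>U</math> can be formed explicitly for comparison (with a general kernel, <math>U</math> would not be available).

```python
import numpy as np

def kernel_pca_fit(K, p):
    """Top-p eigenpairs of the n x n kernel matrix K: returns (V, sigma)."""
    eigvals, eigvecs = np.linalg.eigh(K)       # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1][:p]      # indices of the p largest
    V = eigvecs[:, order]                      # n x p
    sigma = np.sqrt(eigvals[order])            # assumes these eigenvalues are > 0
    return V, sigma

def kernel_pca_encode(V, sigma, K_cols):
    """Y = Sigma^{-1} V^T K(X, .), where K_cols is n x m."""
    return (V.T @ K_cols) / sigma[:, None]

# Illustrative data: with a linear kernel Phi(X) = X, so U can be checked directly.
rng = np.random.default_rng(0)
X = rng.normal(size=(5, 20))                   # d = 5, n = 20 training points
x_test = rng.normal(size=(5, 1))               # one test example

K = X.T @ X                                    # K(X, X)
V, sigma = kernel_pca_fit(K, p=2)
Y_train = kernel_pca_encode(V, sigma, K)             # encode training data
y_test = kernel_pca_encode(V, sigma, X.T @ x_test)   # encode test example via K(X, x)

U = (X @ V) / sigma                            # U = Phi(X) V Sigma^{-1}, linear kernel only
```

In this linear-kernel case <code>Y_train</code> agrees with <math>\Sigma V^T</math> and <code>y_test</code> agrees with <math>U^T x</math>, which is the consistency the derivation relies on.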

==Centering for kernel PCA and multidimensional scaling (Lecture 4: Sept. 22, 2014)== | ==Centering for kernel PCA and multidimensional scaling (Lecture 4: Sept. 22, 2014)== | ||

| Line 505: | Line 515: | ||

:<math>\tilde{K}:(x,y)\mapsto \tilde{\Phi}(x)^T \tilde{\Phi}(y)</math> | :<math>\tilde{K}:(x,y)\mapsto \tilde{\Phi}(x)^T \tilde{\Phi}(y)</math> | ||

Then | Then | ||

:<math>\tilde{K}(x,y) = (\Phi(x)-\mu)^T (\Phi(y)-\mu)=\Phi(x)^T\Phi(y)-\mu^T\Phi( | :<math>\tilde{K}(x,y) = (\Phi(x)-\mu)^T (\Phi(y)-\mu)=\Phi(x)^T\Phi(y)-\mu^T\Phi(y)-\Phi(x)^T\mu+\mu^T\mu</math><br /> | ||

<math>=K(x,y)-\frac{1}{n}\sum_{j=1}^n\Phi(x_j)^T\Phi( | <math>=K(x,y)-\frac{1}{n}\sum_{j=1}^n\Phi(x_j)^T\Phi(y)-\frac{1}{n}\sum_{j=1}^n\Phi(x_j)^T\Phi(x)+\frac{1}{n^2}\sum_{j=1}^n\sum_{i=1}^n\Phi(x_j)^T\Phi(x_i)</math><br /> | ||

<math>=K(x,y)-\frac{1}{n}\sum_{j=1}^n K(x_j,x)-\frac{1}{n}\sum_{j=1}^n K(x_j,y)+\frac{1}{n^2}\sum_{j=1}^n\sum_{i=1}^n K(x_j,x_i)</math><br /> | <math>=K(x,y)-\frac{1}{n}\sum_{j=1}^n K(x_j,x)-\frac{1}{n}\sum_{j=1}^n K(x_j,y)+\frac{1}{n^2}\sum_{j=1}^n\sum_{i=1}^n K(x_j,x_i)</math><br /> | ||

<math>=K(x,y)-\frac{1}{n}\bold{1}^TK(X,x)-\frac{1}{n}\bold{1}^TK(X,y)+\frac{1}{n^2}\bold{1}^TK(X,X)\bold{1}</math><br /> | <math>=K(x,y)-\frac{1}{n}\bold{1}^TK(X,x)-\frac{1}{n}\bold{1}^TK(X,y)+\frac{1}{n^2}\bold{1}^TK(X,X)\bold{1}</math><br /> | ||

where <math>\bold{1}=([1,1,...,1]_{1\times n})^T</math><br /> | where <math>\bold{1}=([1,1,...,1]_{1\times n})^T</math><br /> | ||

Here <math>X_{d\times n}</math> and <math>\{x_j\}_{j=1}^n</math> | Here <math>X_{d\times n}</math> and <math>\{x_j\}_{j=1}^n</math> denote the data set matrix and its elements respectively, and <math>x,y</math> represent test elements at which the centered kernel is evaluated. | ||

In practice, with <math>\, X = [x_1 | x_2 | ... | x_n] </math> as the data matrix, the Kernel Matrix, denoted by '''<math>K</math>''', will have elements <math>\, [K]_{ij} = K(x_i,x_j)</math>. | In practice, with <math>\, X = [x_1 | x_2 | ... | x_n] </math> as the data matrix, the Kernel Matrix, denoted by '''<math>K</math>''', will have elements <math>\, [K]_{ij} = K(x_i,x_j)</math>. | ||

| Line 517: | Line 527: | ||

We evaluate <math>\tilde{K} = K - \frac{1}{n}\bold{1}\bold{1}^TK-\frac{1}{n}K\bold{1}\bold{1}^T+\frac{1}{n^2}\bold{1}\bold{1}^TK\bold{1}\bold{1}^T </math>, and find the eigenvectors/eigenvalues of this matrix for Kernel PCA. | We evaluate <math>\tilde{K} = K - \frac{1}{n}\bold{1}\bold{1}^TK-\frac{1}{n}K\bold{1}\bold{1}^T+\frac{1}{n^2}\bold{1}\bold{1}^TK\bold{1}\bold{1}^T </math>, and find the eigenvectors/eigenvalues of this matrix for Kernel PCA. | ||
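A small numerical check of the centering step, written as a sketch under assumptions: the function name <code>center_kernel</code> is hypothetical, the centering is expressed with the <math>n \times n</math> matrix <math>\frac{1}{n}\bold{1}\bold{1}^T</math>, and a linear kernel is used so that the result can be compared with the kernel of explicitly centered data.

```python
import numpy as np

def center_kernel(K):
    """Centre an n x n kernel matrix in feature space without forming Phi."""
    n = K.shape[0]
    J = np.ones((n, n)) / n                    # J = (1/n) 1 1^T
    return K - J @ K - K @ J + J @ K @ J

# Illustrative data: for a linear kernel the feature map is the identity,
# so centring K must agree with centring the data first.
rng = np.random.default_rng(1)
X = rng.normal(size=(3, 8))                    # d = 3, n = 8
K = X.T @ X
K_tilde = center_kernel(K)

X_centered = X - X.mean(axis=1, keepdims=True)
```

Here <code>K_tilde</code> matches <code>X_centered.T @ X_centered</code>, and every row and column of <code>K_tilde</code> sums to zero, which is the matrix form of the centred kernel derived above.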

===Multidimensional | ===Metric Multidimensional Scaling=== | ||

Multidimensional Scaling (MDS) is | Multidimensional Scaling (MDS) is another dimension reduction method that attempts to preserve the pair-wise distance, '''<math>\,d_{ij}</math>''', between data points. MDS helps with the problem of making a configuration of points in Euclidean space by using the distance information between the patterns. For example, suppose we want to map a set of points '''X''', <math>x \in \mathbb{R}^d</math>, to a set of points '''Y''', <math>y \in \mathbb{R}^p</math>. We want to preserve the pair-wise distances such that '''<math>\,d(x_i, x_j)</math>''' is approximately the same as '''<math>\,d(y_i, y_j)</math>'''. | ||

====MDS example==== | ====MDS example==== | ||

| Line 548: | Line 558: | ||

* If '''<math>x_i</math>''''s don't overlap, then all elements that are not on the diagonal are positive | * If '''<math>x_i</math>''''s don't overlap, then all elements that are not on the diagonal are positive | ||

'''Triangle inequality''': '''<math>d_{ab} + d_{bc} \geq d_{ac}</math>''' whenever '''<math>a, b, c</math>''' are three different indices | * The triangle inequality holds for all distances | ||

** '''Triangle inequality''': '''<math>d_{ab} + d_{bc} \geq d_{ac}</math>''' whenever '''<math>a, b, c</math>''' are three different indices | |||

'''Example:'''<br /> | |||

For:<br /> | |||

<math>x_1 = (0,1)</math><br /> | |||

<math>x_2 = (1,0)</math><br /> | |||

<math>x_3 = (1,1)</math><br /> | |||

Or:<br /> | |||

:<math>X = \begin{bmatrix} 0 & 1 & 1\\1 & 0 & 1 \end{bmatrix}</math> | |||

The distance matrix is: | |||

:<math>D^X_{3 \times 3} = \begin{bmatrix} 0 & \sqrt{2} & 1\\\sqrt{2} & 0 & 1\\1 & 1 & 0 \end{bmatrix}</math> | |||

For any mapping to matrix <math>Y_{p \times n}</math>, we can calculate the corresponding distance matrix | For any mapping to matrix <math>Y_{p \times n}</math>, we can calculate the corresponding distance matrix | ||

| Line 572: | Line 598: | ||

====Theorem==== | ====Theorem==== | ||

If '''<math>D^{(X)}</math>''' is a distance matrix and if <math>K=-\frac{1}{2}HD^{(X)}H</math>, then '''<math>D^{(X)}</math>''' is Euclidean | If '''<math>D^{(X)}</math>''' is a distance matrix and if <math>K=-\frac{1}{2}HD^{(X)}H</math>, then '''<math>D^{(X)}</math>''' is Euclidean if and only if '''<math>K</math>''' is Positive Semi-Definite, <br/> | ||

where <math>H=I-\frac{1}{n}\bold{ | where <math>H=I-\frac{1}{n}\bold{e}\bold{e} ^T</math> and <math>\bold{e}=([1,1,...,1]_{1\times n})^T</math> | ||

====Relation between MDS and PCA==== | ====Relation between MDS and PCA==== | ||

It has been shown that the goal of MDS is to obtain '''<math> Y </math>''' by solving <math>\text{min}_Y \sum_{i=1}^n{\sum_{j=1}^n{(d_{ij}^{(X)}-d_{ij}^{(Y)})^2}}</math><br /> | |||

'''K''' is positive semi-definite so it can be written '''<math>K = X^TX</math>'''. | '''K''' is positive semi-definite so it can be written as '''<math>K = X^TX</math>'''. | ||

Thus | |||

: <math>\text{min}_Y \sum_{i=1}^n{\sum_{j=1}^n{(d_{ij}^{(X)}-d_{ij}^{(Y)})^2}} =\text{min}_Y(\sum_{i=1}^n\sum_{j=1}^n(x_i^Tx_j-y_i^Ty_j)^2) </math> | : <math>\text{min}_Y \sum_{i=1}^n{\sum_{j=1}^n{(d_{ij}^{(X)}-d_{ij}^{(Y)})^2}} =\text{min}_Y(\sum_{i=1}^n\sum_{j=1}^n(x_i^Tx_j-y_i^Ty_j)^2) </math> | ||

and | and | ||

| Line 593: | Line 619: | ||

Since <math>\, \Lambda</math> and <math>\hat{\Lambda}</math> are diagonal matrices containing the eigenvalues of '''<math>X^TX,\text{ and } Y^TY</math>''' respectively, and all said eigenvalues are positive, then the min is clearly obtained when the '''<math>p</math>''' nonzero diagonal elements in '''<math>Y^TY</math>''' are the '''<math>p</math>''' largest eigenvalues in '''<math>X^TX</math>''' | Since <math>\, \Lambda</math> and <math>\hat{\Lambda}</math> are diagonal matrices containing the eigenvalues of '''<math>X^TX,\text{ and } Y^TY</math>''' respectively, and all said eigenvalues are positive, then the min is clearly obtained when the '''<math>p</math>''' nonzero diagonal elements in '''<math>Y^TY</math>''' are the '''<math>p</math>''' largest eigenvalues in '''<math>X^TX</math>''' | ||

Therefore in Euclidean space, the solution of MDS is the same as that of PCA. | |||
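The following sketch runs classical MDS on the three-point example used earlier in this section. It rests on assumptions that are not stated in the notes: the function names are hypothetical, and the distances are squared entrywise before double centering (the usual classical-MDS convention), so the input here is the plain distance matrix with entries such as <math>\sqrt{2}</math>.

```python
import numpy as np

def pairwise_distances(X):
    """Euclidean distances between the columns of the d x n matrix X."""
    diff = X[:, :, None] - X[:, None, :]       # d x n x n array of differences
    return np.sqrt(np.sum(diff ** 2, axis=0))

def classical_mds(D, p):
    """Embed n points in R^p from an n x n matrix D of (unsquared) distances."""
    n = D.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n        # centring matrix H = I - (1/n) e e^T
    K = -0.5 * H @ (D ** 2) @ H                # assumption: distances are squared here
    eigvals, eigvecs = np.linalg.eigh(K)
    order = np.argsort(eigvals)[::-1][:p]      # p largest eigenvalues
    lam = np.clip(eigvals[order], 0.0, None)   # guard against small negative values
    return np.sqrt(lam)[:, None] * eigvecs[:, order].T   # p x n embedding

# The example points x_1 = (0,1), x_2 = (1,0), x_3 = (1,1) as columns.
X = np.array([[0.0, 1.0, 1.0],
              [1.0, 0.0, 1.0]])
D = pairwise_distances(X)
Y = classical_mds(D, p=2)
D_Y = pairwise_distances(Y)
```

Because the original points already live in two dimensions, the embedding <code>Y</code> reproduces the distance matrix exactly (up to round-off); it differs from <code>X</code> only by a translation and a rotation or reflection, which is consistent with the MDS solution coinciding with PCA on centred data.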

'''Approximation to MDS Algorithm''' <ref> http://www.cmap.polytechnique.fr/~peyre/cours/x2005signal/dimreduc_landmarks.pdf </ref> | |||

The problem with the MDS algorithm is that the matrices <math>D^X</math> and <math>K</math> are not sparse. It is therefore expensive to compute eigen-decompositions when the number of data points is very large. To reduce the computational work required we can use Landmark Multidimensional Scaling (LMDS). LMDS is an algorithm designed to run classical MDS to embed a chosen subset of the data, the landmark points, and then locate the remaining data points within this space given knowledge of their distances to the landmark points. The following paper has details on [http://research.microsoft.com/en-us/um/people/jplatt/nystrom2.pdf FastMap, MetricMap, and Landmark MDS] and unifies the mathematical foundation of the three presented MDS algorithms. | |||

==ISOMAP and LLE (Lecture 5: Sept. 29, 2014)== | |||

===ISOMAP=== | ===ISOMAP=== | ||

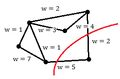

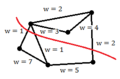

Isomap is a nonlinear dimensionality reduction method. In Multidimensional scaling we seek to find a low dimensional embedding '''<math>Y</math>''' for some high dimensional data '''<math>X</math>''', minimizing <math>\|D^X - D^Y\|^2</math> where <math>\, D^Y</math> is a matrix of euclidean distances of points on '''<math> Y</math>''' (same with '''<math> D^X</math>'''). In Isomap we assume the high dimensional data in <math> X</math> lie on a low dimensional manifold, and we'd like to replace the euclidean distances in '''<math> D^X</math>''' with the distance on this manifold. | Isomap is a nonlinear dimensionality reduction method. In Multidimensional scaling we seek to find a low dimensional embedding '''<math>\,Y</math>''' for some high dimensional data '''<math>\,X</math>''', minimizing <math>\,\|D^X - D^Y\|^2</math> where <math>\, D^Y</math> is a matrix of euclidean distances of points on '''<math> \,Y</math>''' (same with '''<math> \,D^X</math>'''). In Isomap we assume the high dimensional data in <math> X</math> lie on a low dimensional manifold, and we'd like to replace the euclidean distances in '''<math>\, D^X</math>''' with the distance on this manifold. We don't have information about this manifold, so we approximate it by forming the k-nearest-neighbour graph between points in the dataset. The distance on the manifold can then be approximated by the shortest path between points. This is called the geodesic distance, which is a generalization of the notion of straight line to curved spaces and is denoted by '''<math>\, D^G</math>'''. This is useful because it allows for nonlinear patterns to be accounted for in the lower dimensional space. From this point, the rest of the algorithm is the same as MDS. The approach of Isomap is capable of discovering nonlinear degrees of freedom that underlie the complex nature of the data. 
This algorithm efficiently computes a globally optimal solution which is guaranteed to converge asymptotically to the true underlying structure. (Further Reading: A Global Geometric Framework for Nonlinear Dimensionality Reduction, by Joshua B. Tenenbaum, Vin de Silva, John C. Langford) | ||

'''The Isomap Algorithm can thus be described through the following steps:''' | |||

1. Construct the Neighbourhood Graph | |||

<blockquote> | |||

Determine which points are neighbours on manifold M based on distances <math>d_{i,j}^{(X)}</math> between pairs of points i and j in the input space X. | |||

To do this, we can use one of the two methods below: | |||

<br /> | |||

<br /> | |||

a) '''K-Nearest Neighbours:''' For each point i, select the k points with the minimum distance to point i as neighbours. | |||

<br /> | |||

b) '''<math>{\epsilon}</math>-Radius Neighbourhood:''' For each point i, the set of neighbours is given by <math>\, \{ j : d_{i,j}^{ (X) } < \epsilon \} </math> | |||

<br /> | |||

<br /> | |||

The neighbourhood relations are represented as a weighted graph G over the data points, with edges of weight <math>d_{i,j}^{(X)}</math> between the neighbouring points. | |||

</blockquote> | |||

2. Compute shortest paths | |||

<blockquote> | |||

Estimate geodesic distances <math>d_{i,j}^{(M)}</math> between all pairs of points on the manifold M by computing their shortest path distance <math>d_{i,j}^{(G)}</math> in graph G. | |||

</blockquote> | |||

3. Construct low dimensional embeddings (classical MDS) | |||

<blockquote> | |||

Apply classical MDS to the matrix of graph distances <math>D_{G} = \{ d_{i,j}^{(G)} \}</math>, constructing an embedding of the data in a d-dimensional Euclidean space Y that best represents the manifold's estimated intrinsic geometry. | |||

</blockquote> | |||

It worth noting that as <math>D^{G}</math> is not | |||

It is worth noting that as <math>D^{G}</math> is not a Euclidean distance, the matrix <math>K = -\frac{1}{2}HD^{(G)}H</math> is not guaranteed to be positive semi-definite. This is handled in the implementation [http://isomap.stanford.edu/] by keeping only the positive part of the real part of the eigenvalues, which can be regarded as projecting the matrix onto the | |||

cone of positive semi-definite matrices. | cone of positive semi-definite matrices. | ||
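The three Isomap steps can be sketched as follows. This is a minimal illustration rather than the reference implementation: all function names are hypothetical, the shortest paths use a plain Floyd-Warshall update (cubic in the number of points), the neighbourhood graph is assumed to be connected, negative eigenvalues are simply clipped to zero, and the graph distances are squared before double centering as in classical MDS.

```python
import numpy as np

def pairwise_distances(X):
    """Euclidean distances between the columns of the d x n matrix X."""
    diff = X[:, :, None] - X[:, None, :]
    return np.sqrt(np.sum(diff ** 2, axis=0))

def knn_graph(D, k):
    """Step 1: weighted k-nearest-neighbour graph; np.inf marks a missing edge."""
    n = D.shape[0]
    G = np.full((n, n), np.inf)
    np.fill_diagonal(G, 0.0)
    for i in range(n):
        neighbours = np.argsort(D[i])[1:k + 1]   # skip the point itself (distance 0)
        G[i, neighbours] = D[i, neighbours]
        G[neighbours, i] = D[i, neighbours]      # keep the graph symmetric
    return G

def shortest_paths(G):
    """Step 2: all-pairs shortest paths (Floyd-Warshall)."""
    D_G = G.copy()
    for m in range(D_G.shape[0]):
        # allow paths that pass through the intermediate point m
        D_G = np.minimum(D_G, D_G[:, m:m + 1] + D_G[m:m + 1, :])
    return D_G

def isomap(X, k, p):
    """Step 3: classical MDS on the graph distances (graph assumed connected)."""
    D_G = shortest_paths(knn_graph(pairwise_distances(X), k))
    n = D_G.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n
    K = -0.5 * H @ (D_G ** 2) @ H
    eigvals, eigvecs = np.linalg.eigh(K)
    order = np.argsort(eigvals)[::-1][:p]
    lam = np.clip(eigvals[order], 0.0, None)     # K need not be PSD for graph distances
    return np.sqrt(lam)[:, None] * eigvecs[:, order].T, D_G

# Illustrative data: 30 points on a half circle, a 1-D manifold in R^2.
t = np.linspace(0.0, np.pi, 30)
X = np.vstack([np.cos(t), np.sin(t)])
Y, D_G = isomap(X, k=2, p=1)
```

For the two end points of the half circle the Euclidean distance is 2, while the graph distance <code>D_G[0, -1]</code> is close to the arc length <math>\pi</math>; the one-dimensional embedding <code>Y</code> then orders the points along the arc.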

| Line 608: | Line 671: | ||

==== ISOMAP Results ==== | ==== ISOMAP Results ==== | ||

[http://web.mit.edu/cocosci/Papers/sci_reprint.pdf A Global Geometric Framework for Nonlinear Dimensionality Reduction] paper proposed ISOMAP, the below figures show some images for ISOMAP results. The first image | [http://web.mit.edu/cocosci/Papers/sci_reprint.pdf A Global Geometric Framework for Nonlinear Dimensionality Reduction] is the paper that proposed ISOMAP; the figures below show some of its results. The first image shows the different orientations of the faces in the face image dataset: the x axis captures the horizontal orientation, the y axis captures the vertical orientation, and the bar captures the lighting direction. The second image shows the ISOMAP results on a dataset of handwritten "2" digits. | ||

<gallery> | <gallery> | ||

| Line 625: | Line 688: | ||

The method minimizes the reconstruction error <math>\mathbf{x_i-(w_1x_{i1}+w_2x_{i2}+...+w_kx_{ik})}</math> for each <math>x_i \in X</math> with corresponding k-nearest-neighbour set <math>\mathbf{\, {x_{i1},x_{i2},...,x_{ik}}}</math>. LLE is therefore an optimization problem for: <br/> | The method minimizes the reconstruction error <math>\mathbf{x_i-(w_1x_{i1}+w_2x_{i2}+...+w_kx_{ik})}</math> for each <math>x_i \in X</math> with corresponding k-nearest-neighbour set <math>\mathbf{\, {x_{i1},x_{i2},...,x_{ik}}}</math>. LLE is therefore an optimization problem for: <br/> | ||

<div class="center" style="width: auto; margin-left: auto; margin-right: auto;"><math>\mathbf{ \text{min}_W \sum_{i=1}^n{(x_i-\sum_{j=1}^n{w_{ij}x_j)^2}}} </math> such that <math> \mathbf{\sum_{j=1}^n w_{ij} = 1} </math> and also <math> \mathbf{w_{ij} = 0} </math> if <math>\mathbf{x_i} </math> and<math>\mathbf{x_j} </math>are not neighbors </div> | <div class="center" style="width: auto; margin-left: auto; margin-right: auto;"><math>\mathbf{ \text{min}_W \sum_{i=1}^n{(x_i-\sum_{j=1}^n{w_{ij}x_j)^2}}} </math> such that <math> \mathbf{\sum_{j=1}^n w_{ij} = 1} </math> and also <math> \mathbf{w_{ij} = 0} </math> if <math>\mathbf{x_i} </math> and <math>\mathbf{x_j} </math> are not neighbors </div> | ||

==== Algorithm ==== | ==== Algorithm ==== | ||

| Line 648: | Line 711: | ||

'''Notes: ''' | '''Notes: ''' | ||

<math>G_{d*d}</math> is invertible under some conditions depending on <math>k</math>, as it must have rank <math>d</math> so <math>k</math> must be greater than or equal to <math>d</math> | <math>\, G_{d*d} </math> is invertible under some conditions depending on <math>\, k</math>, as it must have rank <math>\, d </math> so <math>\, k</math> must be greater than or equal to <math>\, d </math> | ||

LLE does not handle the out-of-sample case directly; there are extensions that address this, such as the one in [http://cseweb.ucsd.edu/~saul/papers/smdr_ssl05.pdf] | LLE does not handle the out-of-sample case directly; there are extensions that address this, such as the one in [http://cseweb.ucsd.edu/~saul/papers/smdr_ssl05.pdf] | ||

If we want to reduce the dimensionality to p, we need to choose the eigenvectors corresponding to the p+1 smallest eigenvalues. | If we want to reduce the dimensionality to p, we need to choose the eigenvectors corresponding to the p+1 smallest eigenvalues. | ||

'''Pros of Isomap:''' | |||

# Calculation is based on eigenvalue and eigenvectors, thus overall stability and optimality is maintained. | |||

# Only need one pre-determined parameter: k(nearest neighbours) or <math>\, {\epsilon} </math> (neighbourhood radius) | |||

# Able to determine intrinsic dimension of signal from residual. | |||

'''Cons of Isomap''' | |||

# It is slow - it requires comparing all possible paths between each N(N-1)/2 pairs of points and determining which is shortest. (But this can be fixed via the Nystrom approximation which we will see later.) | |||

# It is not obvious how to handle out of sample data | |||

'''Pros of LLE:''' | |||

# LLE is capable of retaining the structure and characteristics of the original data. | |||

# LLE requires few parameters. | |||

# Combining 1 & 2, LLE is good for troubleshooting and error diagnostics, since it retains most of the structure of the original data and needs a minimal number of parameters. | |||

# Much faster than ISOMAP, often with comparable or better performance | |||

'''Cons of LLE''' | |||

# It is not obvious how to handle out of sample data | |||

# There is no way of knowing if reducing the data to p dimensions is the optimal reduction. | |||

==LLE continued, introduction to maximum variance unfolding (MVU) (Lecture 6: Oct. 1, 2014)== | ==LLE continued, introduction to maximum variance unfolding (MVU) (Lecture 6: Oct. 1, 2014)== | ||

| Line 674: | Line 757: | ||

Note that: <math>I_{i:} Y^T = y_i^T</math> where <math>I_{i:}</math> is the <math>i^{th}</math> row from the identity matrix | Note that: <math>I_{i:} Y^T = y_i^T</math> where <math>I_{i:}</math> is the <math>i^{th}</math> row from the identity matrix | ||

Similarly, <math>\sum w_{ij} y_i = | Similarly, <math>\sum_{j} w_{ij} y_j^T = W_{i:} Y^T</math> | ||

So the objective is equivalent to minimizing <math>\sum_{i=1}^n||I_{i:} Y^T - W_{i:}Y^T||^2 = ||IY^T - WY^T||^2</math> | So the objective is equivalent to minimizing <math>\sum_{i=1}^n||I_{i:} Y^T - W_{i:}Y^T||^2 = ||IY^T - WY^T||^2</math> | ||

| Line 689: | Line 772: | ||

By letting <math>\, M=(I-W)(I-W)^T</math>, the objective function will become | By letting <math>\, M=(I-W)(I-W)^T</math>, the objective function will become | ||

:<math>\min_Y Tr(Y^T M Y)</math> | :<math>\, \min_Y Tr(Y^T M Y)</math> | ||

This minimization has a well-known solution: We can set the rows of '''<math>Y</math>''' to be the '''<math>d</math>''' lowest eigenvectors of '''<math>M</math>''', where '''<math>d</math>''' is the dimension of the reduced space. | This minimization has a well-known solution: We can set the rows of '''<math>Y</math>''' to be the '''<math>d</math>''' lowest eigenvectors of '''<math>M</math>''', where '''<math>d</math>''' is the dimension of the reduced space. | ||

| Line 712: | Line 795: | ||

\end{bmatrix} </math> | \end{bmatrix} </math> | ||

If we want to reduce the dimensions to p, we need to choose p + 1 smallest eigenvectors. This is because | If we want to reduce the dimensions to p, we need to choose the eigenvectors corresponding to the p + 1 smallest eigenvalues. This is because the smallest eigenvalue of the matrix is equal to 0 since it is a Laplacian (the number of <math>0</math> eigenvalues of a Laplacian is the number of connected components), and the corresponding eigenvector is discarded. | ||

Note that for LLE, the bottom eigenvector of the matrix M (the first of the bottom d+1) is the constant vector, which represents a free translation mode with eigenvalue 0. By discarding this eigenvector, we enforce the constraint <math>\sum_{i=1}^n y_i=0</math>, since orthogonality means that the components of the other eigenvectors must sum to 0. The remaining d eigenvectors form the d embedding coordinates found by LLE. | |||
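A compact sketch of the two LLE stages (local weights, then the bottom eigenvectors) is given below. Several choices here are assumptions rather than part of the notes: the function names and the <code>reg</code> parameter are hypothetical, the local system uses the <math>k \times k</math> Gram matrix of the neighbours centred on <math>x_i</math> with a small ridge term added for numerical stability, and the embedding matrix is formed as <math>(I-W)^T(I-W)</math>, for which the constant vector has eigenvalue 0 when the rows of <math>W</math> sum to one.

```python
import numpy as np

def lle_weights(X, k, reg=1e-3):
    """Reconstruction weights: row i of W is supported on the k neighbours of x_i."""
    d, n = X.shape
    W = np.zeros((n, n))
    for i in range(n):
        dists = np.linalg.norm(X - X[:, [i]], axis=0)
        nbrs = np.argsort(dists)[1:k + 1]         # skip the point itself
        Z = X[:, nbrs] - X[:, [i]]                # d x k, neighbours centred on x_i
        C = Z.T @ Z                               # k x k local Gram matrix
        C = C + reg * np.trace(C) * np.eye(k)     # ridge term in case C is singular
        w = np.linalg.solve(C, np.ones(k))
        W[i, nbrs] = w / w.sum()                  # enforce sum_j w_ij = 1
    return W

def lle_embed(W, p):
    """Bottom eigenvectors of M = (I - W)^T (I - W), skipping the first one."""
    n = W.shape[0]
    A = np.eye(n) - W
    M = A.T @ A
    eigvals, eigvecs = np.linalg.eigh(M)          # ascending eigenvalues
    Y = eigvecs[:, 1:p + 1].T                     # discard the constant eigenvector
    return Y, eigvals

# Illustrative data: 30 points on a half circle in R^2.
t = np.linspace(0.0, np.pi, 30)
X = np.vstack([np.cos(t), np.sin(t)])
W = lle_weights(X, k=2)
Y, eigvals = lle_embed(W, p=1)
```

The rows of <code>W</code> sum to one, so <code>(I - W)</code> maps the all-ones vector to zero and the smallest eigenvalue returned is zero up to round-off; this is the eigenvector that is dropped before the remaining p are used as coordinates.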

=== Comparison between Dimensionality Reduction Approaches (Unified Framework) === | |||

{| class="wikitable" style="text-align:center;width: | {| class="wikitable" style="text-align:center;width:800px; height:200px;background-color:white;" | ||

|- | |- | ||

! Approach | ! Approach | ||

! Solution\Eigenvectors of | ! Solution \ Eigenvectors of | ||

|- | |- | ||

! scope="row" | PCA | ! scope="row" | PCA | ||

| <math> \ | | <math> \boldsymbol{ X^T X }</math> or <math> \boldsymbol{ \Sigma V^T } </math> | ||

|- | |- | ||

! scope="row" | MDS | ! scope="row" | MDS | ||

| <math> \ | | <math> \boldsymbol{ - \frac{1}{2} (I - \frac{1}{n} e e^T) D (I - \frac{1}{n} e e^T) }</math> a.k.a. <math> \boldsymbol{-\frac{1}{2} H D H}</math> where <math> \boldsymbol{H=(I - \frac{1}{n} e e^T)}</math> | ||

|- | |- | ||

! scope="row" | ISOMAP | ! scope="row" | ISOMAP | ||

| <math>\ | | <math>\boldsymbol{ - \frac{1}{2} (I - \frac{1}{n} e e^T) D^G (I - \frac{1}{n} e e^T) }</math> | ||

|- | |- | ||

! scope="row" | LLE | ! scope="row" | LLE | ||

| <math>\ | | <math>\boldsymbol{ (I - W)(I - W)^T }</math> or <math>\boldsymbol{ \lambda I - (I - W)(I - W)^T }</math> | ||

|- | |- | ||

! scope="row" | KPCA | ! scope="row" | KPCA | ||

| <math> \ | | <math> \boldsymbol{K} </math> | ||

|} | |} | ||

From the above table we can interpret all of these algorithms as kernel PCA | From the above table we can interpret all of these algorithms as variations of kernel PCA. More details are presented in this paper: [http://www.kyb.mpg.de/fileadmin/user_upload/files/publications/pdfs/pdf2326.pdf A kernel view of the dimensionality reduction of manifolds]. | ||

Note that PCA, MDS, ISOMAP and KPCA choose the largest eigenvalue/eigenvector pairs for embedding while LLE chooses the smallest pairs. (For MDS, D is the Euclidean distance.) However, in LLE, if we let <math>\lambda_{max}</math> be the largest eigenvalue of <math>\mathbf{L = (I-W)^T(I-W)}</math> and replace the matrix <math>\mathbf{ (I - W)(I - W)^T }</math> with <math>\mathbf{ \lambda_{max}I - (I - W)(I - W)^T }</math>, the largest eigenvalue/eigenvector pairs will be chosen instead. | |||

=== Relationship between LLE and Kernel PCA on LLE kernel === | |||

The smallest eigenvalue of LLE Kernel <math>\,(I-W)^T(I-W)</math> is 0. | |||

The eigenvector corresponding to this eigenvalue is <math>\,c[1,1,1,....1,1,1]</math>. | |||

===Maximum variance unfolding=== | So when we do LLE we find eigenvectors corresponding to smallest p+1 eigenvalues and discard the first eigenvector which is <math>\,c[1,1,1,....1,1,1]</math>. | ||


Now, If we construct a new matrix, a so-called deflated matrix by multiplying <math>\,\Phi(x)=I-W </math> with a factor <math>\,(I-\frac{1}{n}ee^T)</math>, we will get a new kernel with rank d-1. | |||

i.e. <math>\,\hat{K} = \hat{\phi(x)}^T \hat{\phi(x)} = (I-\frac{1}{n}ee^T)K(I-\frac{1}{n}ee^T)</math> has the eigenvector <math>\,c[1,1,1,....1,1,1]</math> removed. | |||

Next we only need to find the eigenvectors corresponding to the smallest p eigenvalues and do not need to discard the first one, since it has already been removed. | |||

However, we realize that <math>\,(I-\frac{1}{n}ee^T)</math> equals the centering matrix <math>\,H</math>. | |||

So what we do in LLE is similar to what we do in Kernel PCA. | |||

In fact, doing LLE is equivalent to performing Kernel PCA on the LLE kernel, up to a scaling factor. | |||

''' Supervised Locally Linear Embedding''' | |||

[http://www.iipl.fudan.edu.cn/~zhangjp/literatures/MLF/manifold%20learning/icann03.pdf de Ridder et al.] (2003) proposed a supervised LLE. The big difference between LLE and SLLE is that in SLLE, the class information of the data is taken into account when selecting the nearest neighbours. | |||

===Maximum variance unfolding=== | |||

In maximum variance unfolding (MVU), as in Isomap and LLE, we try to reduce the dimensionality of nonlinear data. We may assume that the data is sampled from a low-dimensional [http://en.wikipedia.org/wiki/Manifold manifold] that is embedded within a higher-dimensional Euclidean space. The aim is to '''"unfold"''' this manifold in a lower-dimensional Euclidean space such that the local structure of each point is preserved. We would like to preserve the distance from any point to each of its '''<math>k</math>''' nearest neighbours, while maximizing the pairwise variance for all points which are not its neighbours. The MVU algorithm uses semidefinite programming to find such a kernel. | |||

====Optimization constraints==== | ====Optimization constraints==== | ||

| Line 751: | Line 856: | ||

# '''<math>\Phi</math>''' must be centred, i.e. <math>\sum\limits_{i,j} k_{ij} = 0</math>. | # '''<math>\Phi</math>''' must be centred, i.e. <math>\sum\limits_{i,j} k_{ij} = 0</math>. | ||

# '''<math> k </math>''' should be positive semi-definite, i.e. <math>k \succeq 0 </math>. | # '''<math> k </math>''' should be positive semi-definite, i.e. <math>k \succeq 0 </math>. | ||

# The local distance should be maintained between data and its embedding. <br/> This means that for '''<math>i, j</math>''' such that '''<math>x_i, x_j</math>''' are neighbours, we require that '''<math>\Phi</math>''' satisfies <br/> | # The local distance should be maintained between data and its embedding. <br/> This means that for '''<math>i, j</math>''' such that '''<math>x_i, x_j</math>''' are neighbours, we require that '''<math>\Phi</math>''' satisfies <br/> <math>\, | x_i - x_j |^2 = |\phi(x_i) - \phi(x_j)|^2</math> <br/> | ||

<math>\, = [\phi(x_i) - \phi(x_j)]^T [\phi(x_i) - \phi(x_j)] </math> <br/> <math>\, =\phi(x_i)^T \phi(x_i) - \phi(x_i)^T \phi(x_j) - \phi(x_j)^T \phi(x_i) + \phi(x_j)^T \phi(x_j)</math> <br/> <math>\, =k_{ii} - k_{ij} - k_{ji} + k_{jj}</math> <br/> <math>\, =k_{ii} -2k_{ij} + k_{jj}</math> <br/> where <math>\, K=\phi^T \phi</math> is the <math>n \times n</math> kernel matrix. <br/> We can summarize this third constraint as: <br/> <math>\, n_{ij} ||x_i - x_j||^2 = n_{ij} || \phi(x_i) - \phi(x_j) ||^2</math> <br/> where '''<math> n_{ij}</math>''' is the neighbourhood indicator, that is, '''<math> n_{ij} = 1</math>''' if '''<math>x_i, x_j</math>''' are neighbours and <math>0</math> otherwise. | |||
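The constraints above are all statements about entries of the kernel matrix, which can be checked numerically. The snippet below is only an illustration with made-up feature vectors (the matrix <code>Phi</code> and the indices are hypothetical): it verifies that the squared feature-space distance equals <math>k_{ii} - 2k_{ij} + k_{jj}</math>, and that centring the features makes the entries of the kernel matrix sum to zero.

```python
import numpy as np

# Hypothetical feature vectors phi(x_1), ..., phi(x_6) stored as columns.
rng = np.random.default_rng(0)
Phi = rng.normal(size=(4, 6))
K = Phi.T @ Phi                                  # n x n kernel matrix

# Local-distance constraint written purely in terms of kernel entries.
i, j = 1, 4
lhs = np.sum((Phi[:, i] - Phi[:, j]) ** 2)       # |phi(x_i) - phi(x_j)|^2
rhs = K[i, i] - 2.0 * K[i, j] + K[j, j]          # k_ii - 2 k_ij + k_jj

# Centring constraint: sum_ij k_ij = |sum_i phi(x_i)|^2, which is 0 iff the
# features are centred.
Phi_c = Phi - Phi.mean(axis=1, keepdims=True)
K_c = Phi_c.T @ Phi_c
total = K_c.sum()
```

This is why MVU can be posed over <math>K</math> alone: both constraints are linear in the entries of <math>K</math>, so they fit into a semidefinite program without ever forming <math>\Phi</math>.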

====Optimization problem==== | ====Optimization problem==== | ||


One option for finding the correct '''K''' would be to minimize the rank of '''K'''. The intuition behind this is that even though the data might be high-dimensional, it lies on a manifold of low dimension. Since <math>rank</math> is not a convex function, this objective is hard to optimize directly, so it is replaced with <math>Tr(K)</math>: when '''K''' is positive semidefinite, the trace is equal to the sum of the singular values. However, in practice minimizing the trace does not result in a faithful low-dimensional representation. An explanation for this phenomenon is still an open problem. | |||

Recall that in PCA, <math> Var(X) = \boldsymbol{\lambda}_1 + \boldsymbol{\lambda}_2 + \cdots + \boldsymbol{\lambda}_d = Tr(S) </math>. | |||

To find a useful '''K''', the objective of <math>Tr(K)</math> can still be used, but the problem has to be changed from a minimization to a maximization. The intuition here is that you are maximizing the total variance explained by the kernel. | To find a useful '''K''', the objective of <math>Tr(K)</math> can still be used, but the problem has to be changed from a minimization to a maximization. The intuition here is that you are maximizing the total variance explained by the kernel. | ||

Thus the problem is | Thus the problem is | ||

| Line 762: | Line 870: | ||

which is a semidefinite program. | which is a semidefinite program. | ||

====MVU (Maximum Variance | ====MVU (Maximum Variance Unfolding) Method==== | ||

# Construct the neighbourhood graph using k-nearest neighbours | # Construct the neighbourhood graph using k-nearest neighbours | ||

# Find the kernel K by solving the SDP problem: max <math>Tr(K)</math> such that <br/><math>K_{ii}+K_{jj}-2K_{ij} = D_{ij}, \sum_{ij} K_{ij} = 0, K \succeq 0</math> | # Find the kernel K by solving the SDP problem: max <math>Tr(K)</math> such that <br/><math>K_{ii}+K_{jj}-2K_{ij} = D_{ij}, \sum_{ij} K_{ij} = 0, K \succeq 0</math> | ||

# Apply Kernel PCA on the learned kernel K | # Apply Kernel PCA on the learned kernel K | ||
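The quantities appearing in step 2 can be illustrated without solving the SDP. The sketch below builds the centered Gram matrix of a made-up point set and checks the three facts the program relies on: kernel entries encode squared distances, centering makes the entries sum to zero, and the trace equals the sum of all pairwise squared distances divided by <math>2n</math>. The function names are hypothetical, and a real MVU implementation would obtain '''K''' from an SDP solver instead.

```python
def centered_gram(points):
    """Gram matrix K = X X^T of the points after subtracting their mean."""
    n, d = len(points), len(points[0])
    mean = [sum(p[k] for p in points) / n for k in range(d)]
    centered = [[p[k] - mean[k] for k in range(d)] for p in points]
    return [[sum(a * b for a, b in zip(u, v)) for v in centered] for u in centered]

def sq_dist(u, v):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

# Made-up points, used only for illustration.
points = [(0.0, 0.0), (1.0, 0.0), (1.0, 2.0), (4.0, 1.0)]
n = len(points)
K = centered_gram(points)

# Distance constraint: K_ii + K_jj - 2 K_ij equals the squared distance D_ij.
max_gap = max(
    abs(K[i][i] + K[j][j] - 2 * K[i][j] - sq_dist(points[i], points[j]))
    for i in range(n) for j in range(n)
)

# Centering constraint: the entries of K sum to zero.
total = sum(sum(row) for row in K)

# Objective: Tr(K) equals the sum of pairwise squared distances over 2n,
# so maximizing the trace pulls the points as far apart as the constraints allow.
trace = sum(K[i][i] for i in range(n))
pairwise = sum(sq_dist(p, q) for p in points for q in points) / (2 * n)

print(max_gap, total, trace, pairwise)
```

In MVU the distance constraint is imposed only for neighbouring pairs, which leaves the remaining pairs free to move apart.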

Maximum Variance Unfolding can be viewed as a non-linear extension of the PCA intuition: it maximizes the total variance of the embedding, while its equality constraints preserve the distances between each point and its nearest neighbours. | |||

The dimension reduction methods we have learnt so far can be categorized as follows: | |||

{| class="wikitable" | |||

|- | |||

! | |||

! '''Locality''' Preserving methods | |||

! '''Globality''' Preserving methods | |||

|- | |||

| '''Linear''' methods | |||

| '''LPP''' (locality preserving projection) | |||

| '''MDS, PCA''' | |||

|- | |||

| '''Non-linear''' methods | |||

| '''LLE''' | |||

| '''ISOMAP, MVU, KPCA''' | |||

|} | |||

==Action Respecting Embedding and Spectral Clustering (Lecture 7: Oct. 6, 2014)== | ==Action Respecting Embedding and Spectral Clustering (Lecture 7: Oct. 6, 2014)== | ||

| Line 771: | Line 897: | ||

=== SDE Paper Notes === | === SDE Paper Notes === | ||

[http://www.cis.upenn.edu/~ungar/Datamining/ReadingGroup/papers/sdp.pdf Unsupervised Learning of Image Manifolds by Semidefinite Programming] introduced the maximum variance unfolding technique. The results of the paper compare the proposed technique against existing dimensionality reduction techniques. The first figure compares SDE against PCA in the "faces" dataset. To maintain the entire dataset variation we need 5 dimensions using SDE, while we need 10 dimensions using PCA. The second figure compares SDE against ISOMAP in a non-convex dataset. To maintain the whole dataset variation we need only 2 dimensions using SDE, while we need more than 5 dimensions using ISOMAP. The two figures show that SDE can represent data variation using a much smaller number of eigenvectors than PCA or ISOMAP. | [http://www.cis.upenn.edu/~ungar/Datamining/ReadingGroup/papers/sdp.pdf Unsupervised Learning of Image Manifolds by Semidefinite Programming] introduced the maximum variance unfolding technique. The results of the paper compare the proposed technique against existing dimensionality reduction techniques. The first figure compares SDE against PCA in the "faces" dataset. To maintain the entire dataset variation we need 5 dimensions using SDE, while we need 10 dimensions using PCA. The second figure compares SDE against ISOMAP in a non-convex dataset. To maintain the whole dataset variation we need only 2 dimensions using SDE, while we need more than 5 dimensions using ISOMAP. The two figures show that SDE can represent data variation using a much smaller number of eigenvectors than PCA or ISOMAP. Also, if the same action is taken at two points, the distance between these two points should not change (this is important). Rotations and translations are the only transformations that preserve distances. | ||

<gallery> | <gallery> | ||

| Line 777: | Line 903: | ||

Image:SDE2.png|Results of SDE against ISOMAP. The figure is taken from [http://www.cis.upenn.edu/~ungar/Datamining/ReadingGroup/papers/sdp.pdf here] | Image:SDE2.png|Results of SDE against ISOMAP. The figure is taken from [http://www.cis.upenn.edu/~ungar/Datamining/ReadingGroup/papers/sdp.pdf here] | ||

</gallery> | </gallery> | ||

The comparison of SDE against ISOMAP illustrates a known limitation of ISOMAP: it can struggle with non-convex data. | |||

=== Action Respecting Embedding === | === Action Respecting Embedding === | ||

When we are dealing with temporal data | When we are dealing with temporal data (data in which the order is important) we could consider a technique called Action Respecting Embedding. Examples include stock prices, which are time series, or a robot that moves around and takes pictures at different positions. Usually there are various actions that transform one data point into the next data point. For example, consider a robot taking pictures at different positions: actions include stepping forward, stepping backward, stepping right, turning, etc. After each action, the robot takes a picture at its current position, then undertakes another action, continuing until a set of data points is collected. Or consider a financial time series, where actions include stock splits, interest rate reduction, etc. Or consider a patient under a treatment, where actions include taking a specific step in the treatment or taking a specific drug, which could affect blood sugar level, cholesterol, etc. | ||

Some information cannot be captured in the form of similarities. | Some information cannot be captured in the form of similarities. | ||

| Line 786: | Line 914: | ||

Action Respecting Embedding takes a set of data points <math>\, x_{1},...,x_{n}</math> along with associated discrete actions <math>\, a_{1},...,a_{n-1}</math>. The data points are assumed to be in temporal order, where <math>\, a_{i}</math> was taken between <math>\ x_{i}</math> and <math>\ x_{i+1}</math>. | Action Respecting Embedding takes a set of data points <math>\, x_{1},...,x_{n}</math> along with associated discrete actions <math>\, a_{1},...,a_{n-1}</math>. The data points are assumed to be in temporal order, where <math>\, a_{i}</math> was taken between <math>\ x_{i}</math> and <math>\ x_{i+1}</math>. | ||

The key idea is to constrain all actions to be distance-preserving transformations in the feature space. | |||

Now we realize that intuitively, if two points have the same action, then the distance between them should not change after the action. There are only three types of transformations that do not change the distance between points. They are rotation, reflection, and translation. Since rotation and reflection have the same properties, we will call both of these rotation for our purposes. Any other transformation does not preserve distances. Note that we are able to model any action in low dimensional space by rotation, translation or any | Now we realize that intuitively, if two points have the same action, then the distance between them should not change after the action. There are only three types of transformations that do not change the distance between points. They are rotation, reflection, and translation. Since rotation and reflection have the same properties, we will call both of these rotation for our purposes. Any other transformation does not preserve distances. Note that we are able to model any action in low dimensional space by rotation, translation or any affine combination of them. | ||
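A quick numerical check of the claim about distance-preserving transformations, written as a sketch with made-up points and hypothetical helper names: rotation and translation leave the distance between two points unchanged, while scaling (used here as one example of a transformation outside that family) does not.

```python
import math

def rotate(p, theta):
    """Rotate a 2-D point about the origin by angle theta (radians)."""
    x, y = p
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y)

def translate(p, shift):
    """Shift a 2-D point by the vector shift."""
    return (p[0] + shift[0], p[1] + shift[1])

def scale(p, factor):
    """Scale a 2-D point about the origin (not distance preserving)."""
    return (factor * p[0], factor * p[1])

def distance(p, q):
    """Euclidean distance between two 2-D points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

# Made-up points, used only for illustration.
p, q = (1.0, 2.0), (4.0, 6.0)

d_original = distance(p, q)
d_rotated = distance(rotate(p, 0.7), rotate(q, 0.7))
d_translated = distance(translate(p, (3.0, -1.0)), translate(q, (3.0, -1.0)))
d_scaled = distance(scale(p, 2.0), scale(q, 2.0))

print(d_original, d_rotated, d_translated, d_scaled)
```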

Action Respecting Embedding has a similar algorithm to SDE[http://www.machinelearning.org/proceedings/icml2005/papers/009_Action_BowlingEtAl.pdf]<br /> | |||

'''Algorithm''' | |||

1) Construct a neighbourhood representation | |||

2) Solve to find maximum variance subject to constraints | |||

3) Construct lower dimensional embedding from eigenvectors of the kernel | |||

Differences: | |||

1) Constructing neighbourhoods based on action pairs | |||

=== A Simple Example === | 2) Adding action constraints | ||

=== Optimization Problem === | |||

Recall that in maximum variance unfolding the feature map preserves the distances between neighbouring points: <math>\, |\phi(x_i) - \phi(x_j)|^2 = | x_i - x_j |^2 </math> for neighbours <math>\, x_i, x_j</math>. | |||

We can formulate the idea of distance preservation mathematically as <math>\, |\phi(x_i) - \phi(x_j)|^2 = |\phi(x_{i+1}) - \phi(x_{j+1})|^2 </math> whenever the same action is taken at <math>\, x_i</math> and <math>\, x_j</math>, i.e. <math>\, a_i = a_j</math>. | |||

The maximum variance unfolding problem is | |||

<div class="center"><math>\, \text{max tr}(K)</math></div> | |||

:such that | |||

<div class="center"><math>\, | x_i - x_j |^2 = |\phi(x_i) - \phi(x_j)|^2 = k_{ii} -2k_{ij} + k_{jj}</math> for all neighbouring <math>\, x_i, x_j</math> | |||

<math>\sum\limits_{i,j} k_{ij} = 0</math> | |||

<math>K \succeq 0 </math></div> | |||

The Action Respecting Embedding problem is similar to the maximum variance unfolding problem, with another constraint: | |||

For all <math>\, i, j</math> such that <math>\, a_{i} = a_{j}</math>, | |||

<div class="center"><math>\,k_{(i+1)(i+1)} - 2k_{(i+1)(j+1)} + k_{(j+1)(j+1)} = k_{ii} - 2k_{ij} + k_{jj}</math></div> | |||
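The action constraint can be checked directly on a kernel matrix. The sketch below is not the ARE solver: it takes a made-up one-dimensional robot trajectory with hypothetical action labels <code>"f"</code> (forward) and <code>"b"</code> (backward), builds the Gram matrix, and lists the index pairs <math>(i, j)</math> with <math>a_i = a_j</math> for which the equality above fails.

```python
def gram(points):
    """Gram matrix of inner products between the given points."""
    return [[sum(a * b for a, b in zip(u, v)) for v in points] for u in points]

def kernel_sq_dist(K, i, j):
    """Squared distance between points i and j expressed through the kernel."""
    return K[i][i] - 2 * K[i][j] + K[j][j]

def action_constraint_violations(K, actions, tol=1e-9):
    """Pairs (i, j) with a_i == a_j whose distance changes after the action."""
    violations = []
    for i in range(len(actions)):
        for j in range(i + 1, len(actions)):
            if actions[i] == actions[j]:
                before = kernel_sq_dist(K, i, j)
                after = kernel_sq_dist(K, i + 1, j + 1)
                if abs(before - after) > tol:
                    violations.append((i, j))
    return violations

# Hypothetical robot: each "f" moves +1 and each "b" moves -1 along a line.
actions = ["f", "f", "f", "b", "b"]
step = {"f": 1.0, "b": -1.0}
positions = [0.0]
for a in actions:
    positions.append(positions[-1] + step[a])

# An embedding in which every action is a translation satisfies the constraint.
consistent = action_constraint_violations(gram([(x,) for x in positions]), actions)

# A distorted embedding with uneven forward steps violates it.
distorted = [(0.0,), (1.0,), (3.0,), (4.0,), (3.0,), (2.0,)]
inconsistent = action_constraint_violations(gram(distorted), actions)

print(consistent, inconsistent)
```

In the optimization problem these equalities are linear in the entries of the kernel, so adding them keeps the problem a semidefinite program.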

=== A Simple Example === | |||

Consider the two examples presented in the ARE paper [http://www.machinelearning.org/proceedings/icml2005/papers/009_Action_BowlingEtAl.pdf] of a robot moving around taking pictures with some action between them. The input is a series of pictures with actions between them. Note that there are no semantics for the actions, only labels | Consider the two examples presented in the ARE paper [http://www.machinelearning.org/proceedings/icml2005/papers/009_Action_BowlingEtAl.pdf] of a robot moving around taking pictures with some action between them. The input is a series of pictures with actions between them. Note that there are no semantics for the actions, only labels | ||

| Line 814: | Line 961: | ||

We could look at the position on manifold vs. time for a robot that took 40 steps forward and 20 steps backward. We could also look at the same analysis for a robot that took 40 steps forward and 20 steps backward that are twice as big. | We could look at the position on manifold vs. time for a robot that took 40 steps forward and 20 steps backward. We could also look at the same analysis for a robot that took 40 steps forward and 20 steps backward that are twice as big. | ||

<div class="center"> | |||

<gallery> | <gallery> | ||

Image:AREaction1.PNG|Action taken by robot | Image:AREaction1.PNG|Action taken by robot | ||

Image:AREplot1.PNG|Results by ARE and SDE | Image:AREplot1.PNG|Results by ARE and SDE | ||

</gallery> | </gallery> | ||

</div> | |||

Another complicated action and the result of ARE and SDE are shown in the next figure (taken from ARE paper [http://www.machinelearning.org/proceedings/icml2005/papers/009_Action_BowlingEtAl.pdf]), where we can see that ARE captured the trajectory of the robot. | Another complicated action and the result of ARE and SDE are shown in the next figure (taken from ARE paper [http://www.machinelearning.org/proceedings/icml2005/papers/009_Action_BowlingEtAl.pdf]), where we can see that ARE captured the trajectory of the robot. | ||

<div class="center"> | |||

<gallery> | <gallery> | ||

Image:AREaction2 .PNG|Action taken by robot | Image:AREaction2 .PNG|Action taken by robot | ||

Image:AREplot2 .PNG|Results by ARE and SDE | Image:AREplot2 .PNG|Results by ARE and SDE | ||

</gallery> | </gallery> | ||

</div> | |||

Sometimes it's very hard for humans to recognize what happened by merely looking at the data, but this algorithm will make it easier for you to see exactly what happened. | Sometimes it's very hard for humans to recognize what happened by merely looking at the data, but this algorithm will make it easier for you to see exactly what happened. | ||

| Line 832: | Line 981: | ||

=== Types of Applications === | === Types of Applications === | ||

Action-respecting embeddings are very useful for many AI applications, especially those that involve robotics, because actions can be defined as simple transformations that are easy to understand. Another common type of application is planning, that is, when we need to find a sequence of decisions which achieve a desired goal, e.g. coming up with an optimal sequence of actions to get from one point to another. | |||

For example, what type of treatment should I give a patient in order to get from a particular state to a desired state? | For example, what type of treatment should I give a patient in order to get from a particular state to a desired state? | ||

| Line 841: | Line 990: | ||

===Spectral Clustering=== | ===Spectral Clustering=== | ||

Clustering is the task of grouping data | Clustering is the task of grouping data such that elements in the same group are more similar to each other than to the elements in other groups. In spectral clustering, we represent the data as a graph first and then perform clustering. | ||

For the time being, we will only consider the case where there are two clusters. <br/> | For the time being, we will only consider the case where there are two clusters. <br/> | ||

| Line 848: | Line 997: | ||

Using intuition, we can see that the goal is to minimize the sum of the weights of the edges that we cut (i.e. edges we remove from G until we have a disconnected graph)<br/> | Using intuition, we can see that the goal is to minimize the sum of the weights of the edges that we cut (i.e. edges we remove from G until we have a disconnected graph)<br/> | ||

<math>cut(A,B)=\sum_{i\in A, j\in B} w_{ij}</math> | <div class="center"><math>cut(A,B)=\sum_{i\in A, j\in B} w_{ij}</math></div> | ||

where '''A''' and '''B''' are the partitions of the vertices in G made by a cut, and <math>\,w_{ij}</math> is the weight between points '''i''' and '''j'''. | where '''A''' and '''B''' are the partitions of the vertices in G made by a cut, and <math>\,w_{ij}</math> is the weight between points '''i''' and '''j'''. | ||
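As a small illustration of the definition, the sketch below evaluates <math>cut(A,B)</math> on a made-up weighted graph with six vertices. The edge weights and the helper <code>build_weights</code> are assumptions chosen for the example.

```python
def build_weights(n, edges):
    """Symmetric weight matrix of an undirected graph given (i, j, w) edges."""
    W = [[0.0] * n for _ in range(n)]
    for i, j, w in edges:
        W[i][j] = w
        W[j][i] = w
    return W

def cut(W, A, B):
    """Sum of the weights of all edges with one end in A and the other in B."""
    return sum(W[i][j] for i in A for j in B)

# Made-up graph: a tight group {0, 1, 2}, a tight pair {3, 4}, and a
# loosely attached vertex 5.
edges = [
    (0, 1, 5.0), (0, 2, 4.0), (1, 2, 6.0),
    (1, 3, 1.0), (2, 4, 1.0),
    (3, 4, 7.0), (4, 5, 1.5),
]
W = build_weights(6, edges)

cut_groups = cut(W, {0, 1, 2}, {3, 4, 5})      # removes edges (1,3) and (2,4)
cut_single = cut(W, {5}, {0, 1, 2, 3, 4})      # removes edge (4,5) only

print(cut_groups, cut_single)
```

On this graph the cheapest cut isolates the single vertex 5 rather than separating the two groups.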

| Line 864: | Line 1,013: | ||

We define ratio-cut as: | We define ratio-cut as: | ||

<math>ratiocut = \frac{cut(A, \bar{A})}{|A|} + \frac{cut(\bar{A}, A)}{|\bar{A}|}</math> | <math>ratiocut = \frac{cut(A, \bar{A})}{|A|} + \frac{cut(\bar{A}, A)}{|\bar{A}|}</math> | ||

where <math>\, |A|</math> is the | where <math>\, |A|</math> is the cardinality of the set <math>\, A</math> and <math>\, \bar{A}</math> is the complement of <math>\, A</math>. | ||

This equation will be minimized if there is a balance between set <math>\, A</math> and <math> \bar{A}</math>. This, however, is an '''NP Hard''' problem; we must relax the constraints to arrive at a computationally feasible solution. | This equation will be minimized if there is a balance between set <math>\, A</math> and <math> \bar{A}</math>. This, however, is an '''NP Hard''' problem; we must relax the constraints to arrive at a computationally feasible solution. | ||
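To see the effect of the balancing terms, the sketch below compares the plain minimum cut with the minimum ratio-cut on a made-up six-vertex graph by brute-force enumeration of all bipartitions. The graph, the helper names, and the exhaustive search are assumptions for illustration only; exhaustive search is feasible only for tiny graphs.

```python
from itertools import combinations

def build_weights(n, edges):
    """Symmetric weight matrix of an undirected graph given (i, j, w) edges."""
    W = [[0.0] * n for _ in range(n)]
    for i, j, w in edges:
        W[i][j] = w
        W[j][i] = w
    return W

def cut(W, A, B):
    """Sum of the weights of all edges with one end in A and the other in B."""
    return sum(W[i][j] for i in A for j in B)

def complement(n, A):
    """Vertices of the graph that are not in A."""
    return set(range(n)) - set(A)

def ratio_cut(W, A):
    """cut(A, A_bar)/|A| + cut(A_bar, A)/|A_bar|, as defined above."""
    A_bar = complement(len(W), A)
    return cut(W, A, A_bar) / len(A) + cut(W, A_bar, A) / len(A_bar)

def bipartitions(n):
    """Every split of {0..n-1} into two non-empty sets, listed once.

    Vertex 0 is always placed in A to avoid listing a split and its mirror.
    """
    for size in range(1, n):
        for rest in combinations(range(1, n), size - 1):
            yield (0,) + rest

# Made-up graph: a tight group {0, 1, 2}, a tight pair {3, 4}, and a
# loosely attached vertex 5.
edges = [
    (0, 1, 5.0), (0, 2, 4.0), (1, 2, 6.0),
    (1, 3, 1.0), (2, 4, 1.0),
    (3, 4, 7.0), (4, 5, 1.5),
]
n = 6
W = build_weights(n, edges)

min_cut_A = min(bipartitions(n), key=lambda A: cut(W, A, complement(n, A)))
min_ratio_A = min(bipartitions(n), key=lambda A: ratio_cut(W, A))

print(min_cut_A, sorted(complement(n, min_cut_A)))
print(min_ratio_A, sorted(complement(n, min_ratio_A)), ratio_cut(W, min_ratio_A))
```

The plain cut splits off vertex 5 alone, whereas the ratio-cut prefers the balanced split into {0, 1, 2} and {3, 4, 5}.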

| Line 870: | Line 1,019: | ||

Let <math>f \in \mathbb{R}^n</math> such that | Let <math>f \in \mathbb{R}^n</math> such that | ||

<div class="center"> | |||

<math> | <math> | ||

f_{i} = \left\{ | f_{i} = \left\{ | ||

| Line 878: | Line 1,028: | ||

\right. | \right. | ||

</math> | </math> | ||