stat841f10: Difference between revisions

m (Conversion script moved page Stat841f10 to stat841f10: Converting page titles to lowercase)


==[[Schedule of Project Presentations]] ==

==[[Proposal Fall 2010]] ==

==[[Mark your contribution here]]==

==[[statf10841Scribe|Editor sign up]] ==

{{Cleanup|date=October 8 2010|reason=Provide a summary for each topic here.}}

==[[f10_Stat841_digest |Digest ]] ==


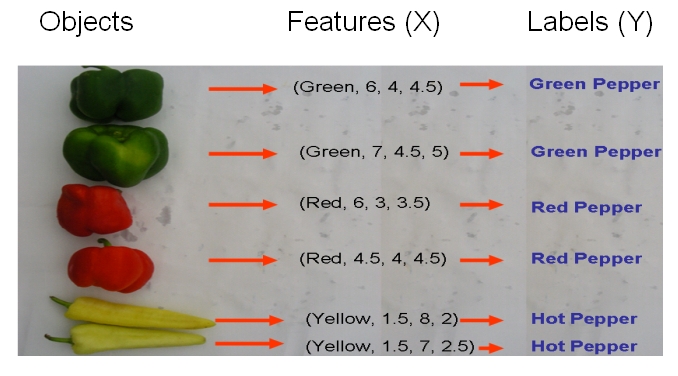

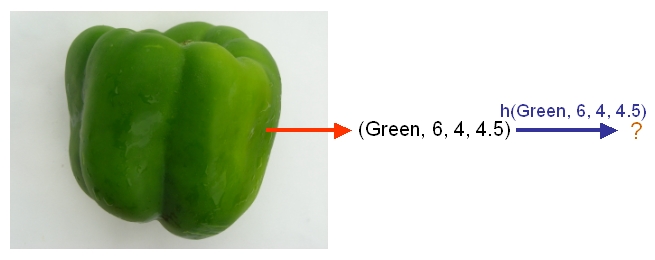

As another example, suppose we wish to classify newly given fruits as apples or oranges using three features of a fruit: its color, its diameter, and its weight. After selecting a classifier and fitting a model to the training data <math>\,\{(X_{color, 1}, X_{diameter, 1}, X_{weight, 1}, Y_{1}), \dots , (X_{color, n}, X_{diameter, n}, X_{weight, n}, Y_{n})\}</math>, we can use the classifier's classification rule <math>\,h</math> to assign any new fruit with known feature values <math>\,x = (\,x_{color}, x_{diameter} , x_{weight})</math> the label <math>\, \hat{Y}=h(x) \in \mathcal{Y}= \{apple,orange\}</math>.

=== Examples of Classification ===

* Email spam filtering (spam vs. not spam).
* Detecting credit card fraud (fraudulent or legitimate).
* Face detection in images (face or background).
* Web page classification (sports vs. politics vs. entertainment, etc.).
* Steering an autonomous car across the US (turn left, turn right, or go straight).
* Medical diagnosis (classification of disease based on observed symptoms).

=== Independent and Identically Distributed (iid) Data Assumption ===

Suppose that we have training data X containing N data points. The independent and identically distributed (IID) assumption states that the data points are drawn independently from the same distribution. This implies that the ordering of the data points does not matter, and the assumption is made in many classification problems. For an example of data that is not IID, consider daily temperature: today's temperature is not independent of yesterday's temperature; rather, the temperatures on consecutive days are strongly correlated.

=== Error rate ===

In practice, the empirical error rate is used to estimate the true error rate, whose value cannot be known exactly: the parameters of the underlying process are unknown and can only be estimated from the available data. The empirical error rate estimates the true error rate quite well in practice since, as mentioned [http://www.liebertonline.com/doi/pdf/10.1089/106652703321825928 here], it is an unbiased estimator of the true error rate.

''An Error Rate Comparison of Classification Methods'' [http://pdfserve.informaworld.com/311525_770885140_713826662.pdf]
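As a small illustration, the empirical error rate of a rule <math>\,h</math> on <math>\,n</math> labelled points is the fraction of points it misclassifies, <math>\,\frac{1}{n}\sum_{i=1}^{n} I(h(x_i)\neq y_i)</math>. The Python sketch below assumes the predictions have already been produced by some classifier; the function name and the data are hypothetical.

```python
def empirical_error_rate(predictions, labels):
    """Fraction of labelled points whose predicted label is wrong."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("need at least one labelled point")
    mistakes = sum(1 for p, y in zip(predictions, labels) if p != y)
    return mistakes / len(labels)


# Hypothetical true labels y_i and predictions h(x_i) for five points.
y_true = [0, 1, 1, 0, 1]
y_pred = [0, 1, 0, 0, 0]
print(empirical_error_rate(y_pred, y_true))  # 0.4
```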

=== Decision Theory ===

We can identify three distinct approaches to solving decision problems, all of which have been used in practical applications. In decreasing order of complexity, they are:

a. First solve the inference problem of determining the class-conditional densities <math>\ p(x|C_k)</math> for each class <math>\ C_k</math> individually. Also separately infer the prior class probabilities <math>\ p(C_k)</math>. Then use Bayes' theorem in the form

<math>\begin{align}p(C_k|x)=\frac{p(x|C_k)p(C_k)}{p(x)} \end{align}</math>

to find the posterior class probabilities <math>\ p(C_k|x)</math>. As usual, the denominator in Bayes' theorem can be found in terms of the quantities appearing in the numerator, because

<math>\begin{align}p(x)=\sum_{k} p(x|C_k)p(C_k) \end{align}</math>

Equivalently, we can model the joint distribution <math>\ p(x, C_k)</math> directly and then normalize to obtain the posterior probabilities. Having found the posterior probabilities, we use decision theory to determine class membership for each new input <math>\ x</math>. Approaches that explicitly or implicitly model the distribution of inputs as well as outputs are known as "generative models", because by sampling from them it is possible to generate synthetic data points in the input space.

b. First solve the inference problem of determining the posterior class probabilities <math>\ p(C_k|x)</math>, and then use decision theory to assign each new <math>\ x</math> to one of the classes. Approaches that model the posterior probabilities directly are called "discriminative models".

c. Find a function <math>\ f(x)</math>, called a discriminant function, which maps each input <math>\ x</math> directly onto a class label. For instance, in the case of two-class problems, <math>\ f(\cdot)</math> might be binary valued such that <math>\ f = 0</math> represents class <math>\ C_1</math> and <math>\ f = 1</math> represents class <math>\ C_2</math>. In this case, probabilities play no role.

This topic is adapted from ''Pattern Recognition and Machine Learning'' by Christopher M. Bishop (Chapter 1).
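Approach (a) can be made concrete in a few lines of Python: given the class-conditional densities evaluated at a single input <math>\ x</math> and the priors, Bayes' theorem with the normalizer <math>\ p(x)=\sum_k p(x|C_k)p(C_k)</math> gives the posteriors, and the class with the largest posterior is chosen. This is a minimal sketch; the numbers are hypothetical, and in practice the densities would come from a fitted model.

```python
def posteriors(likelihoods, priors):
    """Posterior p(C_k | x) for each class from p(x | C_k) and p(C_k)."""
    joint = [lik * prior for lik, prior in zip(likelihoods, priors)]  # p(x, C_k)
    evidence = sum(joint)  # p(x) = sum_k p(x | C_k) p(C_k)
    if evidence == 0:
        raise ValueError("p(x) is zero, so the posterior is undefined")
    return [j / evidence for j in joint]


def most_probable_class(post):
    """Index k of the largest posterior probability."""
    return max(range(len(post)), key=lambda k: post[k])


# Hypothetical densities p(x | C_k) at one input x, and priors p(C_k).
post = posteriors(likelihoods=[0.30, 0.10, 0.05], priors=[0.2, 0.5, 0.3])
print([round(p, 2) for p in post])  # [0.48, 0.4, 0.12]
print(most_probable_class(post))    # 0
```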

=== Bayes Classifier ===

:[[File:裁剪.jpg]]

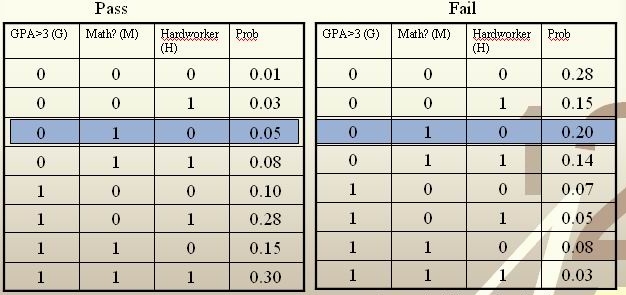

For each student, the feature vector is <math>\, x = \{G, M, H\} </math> and the class is <math>\, y \in \{0, 1\} </math>.

<br />

<math>\, \hat r(x) = P(Y=1|X =(0,1,0))=\frac{P(X=(0,1,0)|Y=1)P(Y=1)}{P(X=(0,1,0)|Y=0)P(Y=0)+P(X=(0,1,0)|Y=1)P(Y=1)}=\frac{0.05*0.5}{0.2*0.5+0.05*0.5}=\frac{0.025}{0.125}=\frac{1}{5}<\frac{1}{2}.</math><br />

The Bayes classifier assigns the new student to the class <math>\, h^*(x)=0 </math>. Therefore, we predict that the new student would fail the course.
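The arithmetic of the student example can be checked with a short Python sketch. It assumes the two-class Bayes rule that predicts 1 when <math>\, \hat r(x) > \frac{1}{2}</math> and 0 otherwise; the function name is hypothetical, while the numbers (0.05, 0.2 and equal priors of 0.5) are the ones used above.

```python
def bayes_rule_two_class(lik_1, lik_0, prior_1):
    """Return (r(x), predicted class) for a two-class problem.

    r(x) = P(Y=1 | X=x) is computed with Bayes' theorem; the rule
    predicts class 1 when r(x) > 1/2 and class 0 otherwise.
    """
    prior_0 = 1.0 - prior_1
    numerator = lik_1 * prior_1
    r = numerator / (lik_0 * prior_0 + numerator)
    return r, 1 if r > 0.5 else 0


# P(X=(0,1,0)|Y=1) = 0.05, P(X=(0,1,0)|Y=0) = 0.2, P(Y=1) = P(Y=0) = 0.5.
r, label = bayes_rule_two_class(lik_1=0.05, lik_0=0.2, prior_1=0.5)
print(round(r, 4), label)  # 0.2 0
```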

More information regarding the Bayesian and frequentist schools of thought is available [http://www.statisticalengineering.com/frequentists_and_bayesians.htm here]. Furthermore, an interesting and informative YouTube video that explains the Bayesian and frequentist views of probability is available [http://www.youtube.com/watch?v=hLKOKdAircA here].

The book ''Machine Learning, Neural and Statistical Classification'' [http://www.amsta.leeds.ac.uk/~charles/statlog/] is a useful resource: chapter 2 describes classification, chapter 3 covers classical statistical methods, and chapter 4 covers modern statistical techniques.

=== Extension: Statistical Classification Framework ===

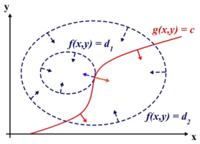

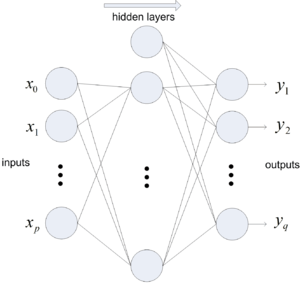

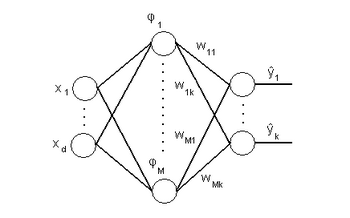

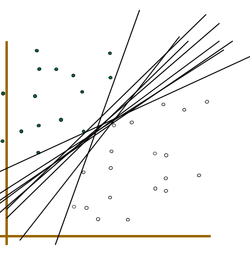

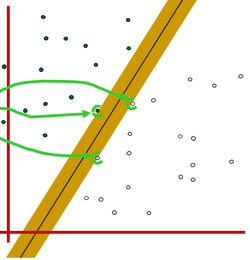

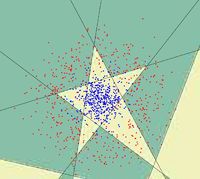

In statistical classification, each object is represented by a measurement vector of ''d'' features, and the goal of the classifier is to find compact, disjoint regions for the classes in the ''d''-dimensional feature space. Such decision regions are defined by decision rules that are either known or learned from training data. The simplest classification configuration consists of a decision rule and multiple membership functions, where each membership function represents one class. The following figures illustrate this general framework.

[[File:cs1.png]]

Simple Conceptual Classifier.

[[File:cs2.png]]

[http://www.orfeo-toolbox.org/SoftwareGuide/SoftwareGuidech17.html#x44-2480011 Statistical Classification Framework]

The classification process can be described as follows:

# A measurement vector is input to each membership function.
# The membership functions feed their membership scores to the decision rule.
# The decision rule compares the membership scores and returns a class label.
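The three-step process can be sketched in Python. The choice of membership function is an assumption made only for illustration: each class is scored by the log-density of an axis-aligned Gaussian, and the decision rule simply returns the class with the highest score. All names and numbers are hypothetical.

```python
import math


def gaussian_membership(mean, var):
    """Build a membership function: log-density of an axis-aligned Gaussian.

    mean and var are per-feature lists; the returned function maps a
    measurement vector to one membership score (higher = better match).
    """
    def score(x):
        return sum(-0.5 * math.log(2 * math.pi * v) - (xi - m) ** 2 / (2 * v)
                   for xi, m, v in zip(x, mean, var))
    return score


def decision_rule(scores):
    """Compare membership scores and return the index of the largest."""
    return max(range(len(scores)), key=lambda k: scores[k])


def assign_label(x, membership_functions):
    scores = [f(x) for f in membership_functions]  # vector fed to every membership function
    return decision_rule(scores)                   # scores fed to the decision rule


# Two hypothetical classes in a d = 2 feature space.
members = [gaussian_membership([0.0, 0.0], [1.0, 1.0]),
           gaussian_membership([3.0, 3.0], [1.0, 1.0])]
print(assign_label([0.5, -0.2], members))  # 0
print(assign_label([2.8, 3.1], members))   # 1
```

Any other scoring function (a distance, a posterior, a neural network output) can be swapped in without changing the decision rule.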

== '''Linear and Quadratic Discriminant Analysis''' ==

===Introduction===

'''Linear discriminant analysis''' ([http://en.wikipedia.org/wiki/Linear_discriminant_analysis LDA]) and the related '''Fisher's linear discriminant''' are methods used in statistics, pattern recognition and machine learning to find a linear combination of features that characterizes or separates two or more classes of objects or events. The resulting combination may be used as a linear classifier or, more commonly, for dimensionality reduction before later classification.

LDA is also closely related to principal component analysis ([http://en.wikipedia.org/wiki/Principal_component_analysis PCA]) and [http://en.wikipedia.org/wiki/Factor_analysis factor analysis] in that all three look for linear combinations of variables that best explain the data. LDA explicitly attempts to model the difference between the classes of data. PCA, on the other hand, does not take any class difference into account, and factor analysis builds the feature combinations based on differences rather than similarities. Discriminant analysis also differs from factor analysis in that it is not an interdependence technique: a distinction must be made between independent variables and dependent variables (also called criterion variables).

LDA works when the measurements made on the independent variables for each observation are continuous quantities. When dealing with categorical independent variables, the equivalent technique is '''discriminant correspondence analysis'''.

=== Content ===

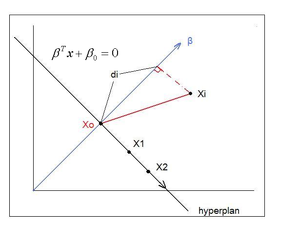

First, we shall limit ourselves to the case of two classes, i.e. <math>\, \mathcal{Y}=\{0, 1\}</math>. In the above discussion, we introduced the Bayes classifier's ''decision boundary'' <math>\,D(h^*)=\{x: P(Y=1|X=x)=P(Y=0|X=x)\}</math>, which divides the feature space into the regions assigned to each class, so that the class of any new data input depends on which side of the boundary it lies on; under the LDA assumptions below, this boundary is a [http://en.wikipedia.org/wiki/Hyperplane hyperplane]. Now, we shall look at how to derive the Bayes classifier's decision boundary under certain assumptions about the data. [http://en.wikipedia.org/wiki/Linear_discriminant_analysis Linear discriminant analysis (LDA)] and [http://en.wikipedia.org/wiki/Quadratic_classifier#Quadratic_discriminant_analysis quadratic discriminant analysis (QDA)] are two of the best-known ways of deriving the Bayes classifier's decision boundary, and we shall look at each of them in turn.

Let us denote the likelihood <math>\ P(X=x|Y=y) </math> as <math>\ f_y(x) </math> and the prior probability <math>\ P(Y=y) </math> as <math>\ \pi_y </math>.

First, we shall examine LDA. As explained above, the Bayes classifier is optimal. However, in practice, the prior and class-conditional densities are not known. Under LDA, one gets around this problem by assuming that both classes have [http://en.wikipedia.org/wiki/Multivariate_normal_distribution multivariate normal (Gaussian) distributions] and that the two classes share the same covariance matrix <math>\, \Sigma</math>. Under the assumptions of LDA, we have: <math>\ P(X=x|Y=y) = f_y(x) = \frac{1}{ (2\pi)^{d/2}|\Sigma|^{1/2} }\exp\left( -\frac{1}{2} (x - \mu_y)^\top \Sigma^{-1} (x - \mu_y) \right)</math>, where <math>\ d</math> is the dimension of <math>\ x</math> and <math>\ \mu_y</math> is the mean of class <math>\ y</math>. Now, to derive the Bayes classifier's decision boundary using LDA, we equate <math>\, P(Y=1|X=x) </math> to <math>\, P(Y=0|X=x) </math> and proceed from there. The derivation of <math>\,D(h^*)</math> is as follows:

:<math>\,Pr(Y=1|X=x)=Pr(Y=0|X=x)</math>

:<math>\,\Rightarrow \frac{Pr(X=x|Y=1)Pr(Y=1)}{Pr(X=x)}=\frac{Pr(X=x|Y=0)Pr(Y=0)}{Pr(X=x)}</math> (using Bayes' Theorem)

:<math>\,\Rightarrow Pr(X=x|Y=1)Pr(Y=1)=Pr(X=x|Y=0)Pr(Y=0)</math> (canceling the denominators)

* LDA implicitly assumes that the mean rather than the variance is the discriminating factor.
* LDA may over-fit the training data.

The following link provides a comparison of discriminant analysis and artificial neural networks: [http://www.jstor.org/stable/2584434?seq=4]

====Different Approaches to LDA====

Data sets can be transformed, and test vectors classified in the transformed space, by two different approaches.

*Class-dependent transformation: This approach involves maximizing the ratio of between-class variance to within-class variance. The main objective is to maximize this ratio so that adequate class separability is obtained. The class-specific approach uses two optimizing criteria, transforming the data sets independently.
*Class-independent transformation: This approach involves maximizing the ratio of overall variance to within-class variance. It uses only one optimizing criterion to transform the data sets, and hence all data points, irrespective of their class identity, are transformed using this transform. In this type of LDA, each class is considered as a separate class against all other classes.
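Both approaches rest on a ratio of between-class to within-class spread. The Python sketch below shows only the basic two-class Fisher criterion <math>\,J(w)=\frac{w^T S_B w}{w^T S_W w}</math> and its maximizer <math>\,w \propto S_W^{-1}(m_a - m_b)</math> in 2-D; it is not a full implementation of either the class-dependent or the class-independent variant, and the data are hypothetical.

```python
def mean2(points):
    """Mean of a list of 2-D points."""
    n = len(points)
    return [sum(p[0] for p in points) / n, sum(p[1] for p in points) / n]


def scatter2(points):
    """Scatter matrix sum_i (p_i - m)(p_i - m)^T as a 2x2 list."""
    m = mean2(points)
    s = [[0.0, 0.0], [0.0, 0.0]]
    for p in points:
        d = [p[0] - m[0], p[1] - m[1]]
        for i in range(2):
            for j in range(2):
                s[i][j] += d[i] * d[j]
    return s


def within_between(class_a, class_b):
    """Return (S_W, S_B, m_a - m_b) with S_B = (m_a - m_b)(m_a - m_b)^T."""
    sa, sb = scatter2(class_a), scatter2(class_b)
    s_w = [[sa[i][j] + sb[i][j] for j in range(2)] for i in range(2)]
    ma, mb = mean2(class_a), mean2(class_b)
    diff = [ma[0] - mb[0], ma[1] - mb[1]]
    s_b = [[diff[i] * diff[j] for j in range(2)] for i in range(2)]
    return s_w, s_b, diff


def fisher_ratio(w, class_a, class_b):
    """J(w): between-class over within-class spread of the projection onto w."""
    s_w, s_b, _ = within_between(class_a, class_b)

    def quad(m):
        return sum(w[i] * m[i][j] * w[j] for i in range(2) for j in range(2))

    return quad(s_b) / quad(s_w)


def fisher_direction(class_a, class_b):
    """w = S_W^{-1} (m_a - m_b), the direction that maximizes J(w)."""
    s_w, _, diff = within_between(class_a, class_b)
    (a, b), (c, d) = s_w
    det = a * d - b * c
    return [(d * diff[0] - b * diff[1]) / det, (-c * diff[0] + a * diff[1]) / det]


# Two small hypothetical classes in 2-D.
A = [(1, 2), (2, 3), (3, 3), (4, 5)]
B = [(6, 2), (7, 3), (8, 2), (7, 1)]
w = fisher_direction(A, B)
print(fisher_ratio(w, A, B) >= fisher_ratio([1, 0], A, B))  # True
```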

== Further reading ==

The following are some applications that use LDA and QDA:

1- Linear discriminant analysis for improved large vocabulary continuous speech recognition [http://ieeexplore.ieee.org/stamp/stamp.jsp?tp=&arnumber=225984 here]

2- 2D-LDA: A statistical linear discriminant analysis for image matrix [http://www.sciencedirect.com/science?_ob=MImg&_imagekey=B6V15-4DK6B5P-4-1&_cdi=5665&_user=1067412&_pii=S0167865504002272&_origin=search&_coverDate=04%2F01%2F2005&_sk=999739994&view=c&wchp=dGLzVlz-zSkzV&md5=60ea1cf7ff045f76421f5bde64bf855a&ie=/sdarticle.pdf here]

3- Regularization studies of linear discriminant analysis in small sample size scenarios with application to face recognition [http://www.sciencedirect.com/science?_ob=MImg&_imagekey=B6V15-4DTJVF4-2-9&_cdi=5665&_user=1067412&_pii=S0167865504002260&_origin=search&_coverDate=01%2F15%2F2005&_sk=999739997&view=c&wchp=dGLzVtb-zSkzk&md5=1bba55e357b1c79579987638dcbf6828&ie=/sdarticle.pdf here]

4- Sparse discriminant vectors are useful for supervised dimension reduction of high-dimensional data. Naive application of classical Fisher's LDA to high-dimensional, low-sample-size settings suffers from the data piling problem. In [http://www.iaeng.org/IJAM/issues_v39/issue_1/IJAM_39_1_06.pdf] the authors use a sparse LDA method that selects important variables for discriminant analysis and thereby yields improved classification. Introducing sparsity into the discriminant vectors is very effective in eliminating data piling and the associated overfitting problem.

== '''Linear and Quadratic Discriminant Analysis cont'd - September 23, 2010''' ==

In the case where we need a common covariance matrix, we get the estimate using the following equation:

<math>\,\Sigma=\frac{\sum_{r=1}^{k}(n_r\Sigma_r)}{\sum_{l=1}^{k}(n_l)} </math>

where <math>\,n_r</math> is the number of data points in class r, <math>\,\Sigma_r</math> is the covariance of class r, and <math>\,n=\sum_{l=1}^{k}n_l</math> is the total number of data points.
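The pooled estimate is a weighted average of the class covariances with weights <math>\,n_r/n</math>. A minimal Python sketch, assuming the per-class covariance matrices <math>\,\Sigma_r</math> have already been computed (the matrices and class sizes below are hypothetical):

```python
def pooled_covariance(class_covs, class_sizes):
    """Common covariance: sum_r n_r * Sigma_r / n, with n = sum_r n_r.

    class_covs[r] is Sigma_r as a list of lists; class_sizes[r] is n_r.
    """
    n = sum(class_sizes)
    d = len(class_covs[0])
    pooled = [[0.0] * d for _ in range(d)]
    for cov, n_r in zip(class_covs, class_sizes):
        for i in range(d):
            for j in range(d):
                pooled[i][j] += n_r * cov[i][j] / n
    return pooled


# Two hypothetical classes with 30 and 10 points.
sigma = pooled_covariance([[[2.0, 0.5], [0.5, 1.0]],
                           [[1.0, 0.0], [0.0, 3.0]]], [30, 10])
print(sigma)  # [[1.75, 0.375], [0.375, 1.5]]
```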

A similar transformation of all the centers can be done from <math>\,\mu_k</math> to <math>\,\mu_k^*</math>, where <math> \, \mu_k^* \leftarrow S_k^{-\frac{1}{2}}U_k^\top \mu_k </math>.

It is now possible to do classification with <math>\,x^*</math> and <math>\,\mu_k^*</math>, treating them as in Case 1 above. This strategy is correct because after transforming <math>\, x</math> to <math>\,x^*</math>, where <math> \, x^* \leftarrow S_k^{-\frac{1}{2}}U_k^\top x </math>, the covariance of the new variable is the identity matrix <math>I</math>.

Note that when we have multiple classes, we also need to compute <math>\,\log|\Sigma_k|</math> for each class. Then we compute <math> \,\delta_k </math> for QDA.

If the classes have different shapes, in other words different covariance matrices <math>\,\Sigma_k</math>, can we use the same method to transform all data points <math>\,x</math> to <math>\,x^*</math>?

The answer is yes. Suppose you have two classes with different shapes and want to decide which class a given data point belongs to. Apply the transformation corresponding to each of the two classes, compute <math>\,\delta_1</math> and <math>\,\delta_2</math>, and then determine which class the data point belongs to by comparing <math> \,\delta_1 </math> and <math> \,\delta_2 </math>.

:: Step 1: For each class <math>\,k</math>, apply singular value decomposition to <math>\,X_k</math> to obtain <math>\,S_k</math> and <math>\,U_k</math>.

:: Step 2: For each class <math>\,k</math>, transform each data point <math>\,x</math> to <math>\,x_k^* = S_k^{-\frac{1}{2}}U_k^\top x</math>, and transform the class center <math>\,\mu_k</math> to <math>\,\mu_k^* = S_k^{-\frac{1}{2}}U_k^\top \mu_k</math>.

:: Step 3: For each data point <math>\,x \in X</math>, find the squared Euclidean distance between the transformed data point <math>\,x_k^*</math> and the transformed center <math>\,\mu_k^*</math> of each class <math>\,k</math>, and assign <math>\,x</math> to the class <math>\,k</math> for which the squared Euclidean distance between <math>\,x_k^*</math> and <math>\,\mu_k^*</math> is smallest.
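The three steps can be sketched in Python for 2-D data. Two assumptions are made: each class covariance <math>\,\Sigma_k</math> is supplied directly rather than the data matrix <math>\,X_k</math> (for a symmetric positive-definite matrix the SVD coincides with the eigen-decomposition, so <math>\,S_k</math> and <math>\,U_k</math> come from a closed-form 2×2 eigen-solver), and, following the note on <math>\,\log|\Sigma_k|</math>, the score also includes the log-determinant and log-prior terms of <math>\,\delta_k</math>, which a plain distance comparison leaves out. All names and numbers are hypothetical.

```python
import math


def eig_sym_2x2(a, b, c):
    """Eigen-decomposition of the symmetric matrix [[a, b], [b, c]].

    Returns (s, vecs): eigenvalues s[0] >= s[1] and matching unit eigenvectors,
    so that Sigma = U diag(s) U^T with the vectors as the columns of U.
    """
    mid, rad = (a + c) / 2, math.hypot((a - c) / 2, b)
    s = [mid + rad, mid - rad]
    if b == 0:  # already diagonal: the eigenvectors are the coordinate axes
        vecs = [[1.0, 0.0], [0.0, 1.0]] if a >= c else [[0.0, 1.0], [1.0, 0.0]]
    else:       # (lam - c, b) solves (Sigma - lam * I) v = 0
        vecs = []
        for lam in s:
            norm = math.hypot(lam - c, b)
            vecs.append([(lam - c) / norm, b / norm])
    return s, vecs


def transform(x, s, vecs):
    """x* = S^{-1/2} U^T x, one eigen-direction at a time."""
    return [(u[0] * x[0] + u[1] * x[1]) / math.sqrt(lam) for lam, u in zip(s, vecs)]


def qda_scores(x, means, covs, priors):
    """delta_k = -1/2 log|Sigma_k| - 1/2 ||x*_k - mu*_k||^2 + log pi_k per class."""
    scores = []
    for mu, (a, b, c), prior in zip(means, covs, priors):
        s, vecs = eig_sym_2x2(a, b, c)      # Step 1 (covariance given directly)
        x_star = transform(x, s, vecs)      # Step 2: transform the point ...
        mu_star = transform(mu, s, vecs)    # ... and the class center
        dist2 = sum((p - q) ** 2 for p, q in zip(x_star, mu_star))  # Step 3
        log_det = math.log(s[0]) + math.log(s[1])
        scores.append(-0.5 * log_det - 0.5 * dist2 + math.log(prior))
    return scores


# Two hypothetical classes with different shapes; (a, b, c) means [[a, b], [b, c]].
means = [[0.0, 0.0], [3.0, 1.0]]
covs = [(1.0, 0.0, 1.0), (2.0, 0.8, 1.0)]
priors = [0.5, 0.5]
scores = qda_scores([0.2, 0.1], means, covs, priors)
print(scores.index(max(scores)))  # 0
```

The squared distance in the transformed space equals the Mahalanobis distance <math>\,(x-\mu_k)^\top\Sigma_k^{-1}(x-\mu_k)</math>, which is why the whitening step lets each class be treated as in Case 1.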


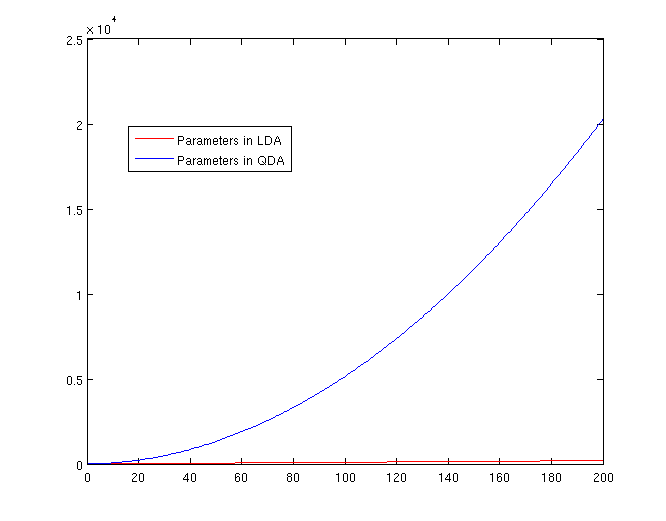

[[File:Lda-qda-parameters.png|frame|center|A plot of the number of parameters that must be estimated, in terms of (K-1). The x-axis represents the number of dimensions in the data. As is easy to see, QDA is far less robust than LDA for high-dimensional data sets.]]
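The counts behind a plot like this can be computed from the formulas given in Hastie et al.'s ''The Elements of Statistical Learning'' (assumed here to be the ones the figure uses): with <math>\,K</math> classes and <math>\,d</math> dimensions, LDA needs <math>\,(K-1)(d+1)</math> parameters and QDA needs <math>\,(K-1)\left(\frac{d(d+3)}{2}+1\right)</math>. A short Python sketch:

```python
def lda_qda_parameter_counts(d, k):
    """Parameters for the K-1 discriminant-function differences.

    LDA: (K-1) * (d + 1)
    QDA: (K-1) * (d * (d + 3) / 2 + 1); d * (d + 3) is always even.
    """
    lda = (k - 1) * (d + 1)
    qda = (k - 1) * (d * (d + 3) // 2 + 1)
    return lda, qda


for d in (2, 10, 50):
    print(d, lda_qda_parameter_counts(d, k=2))
# 2 (3, 6)
# 10 (11, 66)
# 50 (51, 1326)
```

LDA grows linearly in <math>\,d</math> while QDA grows quadratically, which is the gap the figure shows.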

===More information on Regularized Discriminant Analysis (RDA)===

Discriminant analysis (DA) is widely used in classification problems. Besides LDA and QDA, there is an intermediate method between the two, a regularized version of discriminant analysis (RDA) proposed by Friedman [1989], which has been shown to be more flexible in dealing with various class distributions. RDA applies regularization through two regularization parameters, which are selected to jointly maximize classification performance. The optimal pair of parameters is commonly estimated via cross-validation from a set of candidate pairs. More detail about this method can be found in the book by Hastie et al. [2001]. However, the computation is time-consuming for high-dimensional data, especially when the candidate set is large, which limits the application of RDA to low-dimensional data. In 2006, Jieping Ye and Tie Wang developed a novel RDA algorithm for high-dimensional data that can efficiently estimate the optimal regularization parameters from a large set of candidates. Experiments on a variety of datasets confirm the claimed theoretical estimate of its efficiency, and also show that, for a properly chosen pair of regularization parameters, RDA performs favourably in classification compared with other existing classification methods. For more details, see Ye, Jieping; Wang, Tie. ''Regularized discriminant analysis for high dimensional, low sample size data''. Conference on Knowledge Discovery in Data: Proceedings of the 12th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining; 20-23 Aug. 2006.
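To make the two regularization parameters concrete, here is a minimal Python sketch of one common parameterization, the form given in Hastie et al.: <math>\,\hat\Sigma_k(\alpha,\gamma)=\alpha\hat\Sigma_k+(1-\alpha)\hat\Sigma(\gamma)</math> with <math>\,\hat\Sigma(\gamma)=\gamma\hat\Sigma+(1-\gamma)\hat\sigma^2 I</math>. Friedman's original paper parameterizes the shrinkage slightly differently, and taking <math>\,\hat\sigma^2=\mathrm{trace}(\hat\Sigma)/d</math> is an assumption of this sketch; the matrices are hypothetical.

```python
def rda_covariance(sigma_k, sigma_pooled, alpha, gamma):
    """Regularized class covariance Sigma_k(alpha, gamma).

    Sigma_k(alpha, gamma) = alpha * Sigma_k + (1 - alpha) * Sigma(gamma), where
    Sigma(gamma) = gamma * Sigma + (1 - gamma) * sigma2 * I shrinks the pooled
    covariance toward a scalar matrix.  sigma2 = trace(Sigma) / d is an
    assumption of this sketch.
    """
    if not (0.0 <= alpha <= 1.0 and 0.0 <= gamma <= 1.0):
        raise ValueError("alpha and gamma must lie in [0, 1]")
    d = len(sigma_pooled)
    sigma2 = sum(sigma_pooled[i][i] for i in range(d)) / d
    shrunk = [[gamma * sigma_pooled[i][j] + (1 - gamma) * sigma2 * (i == j)
               for j in range(d)] for i in range(d)]
    return [[alpha * sigma_k[i][j] + (1 - alpha) * shrunk[i][j]
             for j in range(d)] for i in range(d)]


sigma_k = [[1.0, 0.0], [0.0, 1.0]]       # hypothetical class covariance
sigma_pooled = [[2.0, 0.5], [0.5, 4.0]]  # hypothetical pooled covariance
print(rda_covariance(sigma_k, sigma_pooled, alpha=0.5, gamma=0.5))
# [[1.75, 0.125], [0.125, 2.25]]
```

Setting <math>\,\alpha=1</math> recovers QDA's class covariance, and <math>\,\alpha=0,\ \gamma=1</math> recovers LDA's pooled covariance; in practice the pair would be chosen by cross-validation over a grid of candidates, as described above.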

===Further Reading for Regularized Discriminant Analysis (RDA)===

1. Regularized Discriminant Analysis and Reduced-Rank LDA [http://www.stat.psu.edu/~jiali/course/stat597e/notes2/lda2.pdf]

2. Regularized discriminant analysis for the small sample size in face recognition [http://www.google.ca/url?sa=t&source=web&cd=2&sqi=2&ved=0CCQQFjAB&url=http%3A%2F%2Fciteseerx.ist.psu.edu%2Fviewdoc%2Fdownload%3Fdoi%3D10.1.1.84.6960%26rep%3Drep1%26type%3Dpdf&rct=j&q=Regularized%20Discriminant%20Analysis&ei=IPr2TJ_2MKWV4gaP5eH-Bg&usg=AFQjCNHB3fk6eVe5fSjlQCMfK44kU1-lug&sig2=5EJv_AV3W_ngSVFIa1nfRg&cad=rja.pdf]

3. Regularized Discriminant Analysis and Its Application in Microarrays [http://www-stat.stanford.edu/~hastie/Papers/RDA-6.pdf]

== Trick: Using LDA to do QDA - September 28, 2010==

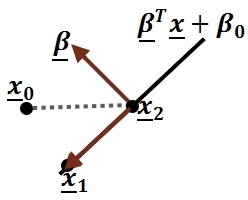

Suppose we can estimate some vector <math>\underline{w}^T</math> such that

<math>y = \underline{w}^T\underline{x}</math>

where <math>\underline{w}</math> is a d-dimensional column vector, and <math style="vertical-align:0%;">\underline{x}\ \in\ \mathbb{R}^d</math> (vector in d dimensions).

We also have a non-linear function <math>g(\underline{x}) = y = \underline{x}^T V\underline{x} + \underline{w}^T\underline{x}</math>, where <math>V = \mathrm{diag}(v_1,v_2,...,v_d)</math>, that we cannot estimate directly.

Using our trick, we create two new vectors, <math>\,\underline{w}^*</math> and <math>\,\underline{x}^*</math>, such that:

<math>\underline{w}^{*T} = [w_1,w_2,...,w_d,v_1,v_2,...,v_d]</math>

and

<math>\underline{x}^{*T} = [x_1,x_2,...,x_d,{x_1}^2,{x_2}^2,...,{x_d}^2]</math>

We can then estimate a new function, <math>g^*(\underline{x},\underline{x}^2) = y^* = \underline{w}^{*T}\underline{x}^*</math>.
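The trick is just a feature map: a linear function of <math>\underline{x}^*</math> is a quadratic function of <math>\underline{x}</math> (with no cross terms). The minimal Python sketch below checks numerically that <math>\underline{w}^{*T}\underline{x}^*</math> equals <math>\sum_i w_i x_i + \sum_i v_i x_i^2</math>; the weights and the point are hypothetical.

```python
def augment(x):
    """x* = [x_1, ..., x_d, x_1^2, ..., x_d^2]."""
    return list(x) + [xi ** 2 for xi in x]


def linear_score(weights, features):
    """Inner product weights^T features."""
    return sum(w * f for w, f in zip(weights, features))


w = [1.0, -2.0]  # hypothetical linear weights w
v = [0.5, 3.0]   # hypothetical weights v on the squared terms
x = [2.0, 1.0]

w_star = w + v   # w* = [w_1, ..., w_d, v_1, ..., v_d] (list concatenation)
y_star = linear_score(w_star, augment(x))
g = linear_score(w, x) + sum(vi * xi ** 2 for vi, xi in zip(v, x))
print(y_star, g)  # 5.0 5.0
```

Any linear method, LDA included, can then be fitted on the augmented vectors unchanged.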

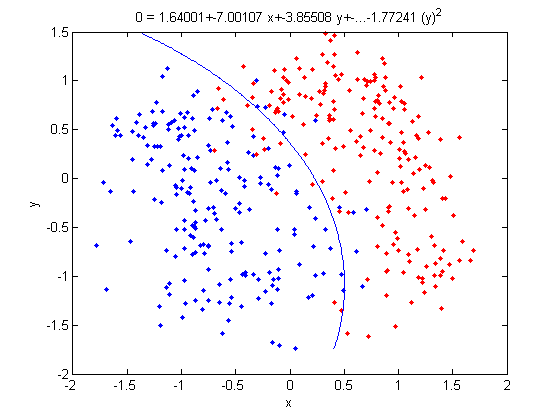

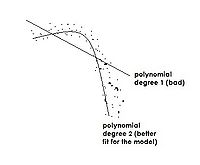

Note that we can do this for any <math>\, x</math> and in any dimension: we could extend a <math>D \times n</math> matrix to a quadratic dimension by appending another <math>D \times n</math> matrix containing the original matrix squared (element-wise), to a cubic dimension with the original matrix cubed, or even with a different function altogether, such as a <math>\,\sin(x)</math> dimension. Note that this is not QDA performed with LDA: if we apply QDA directly to this problem, the resulting decision boundary will be different. Here we look for a nonlinear boundary that may separate the classes better, but the method differs from the standard QDA method; we can call it nonlinear LDA.

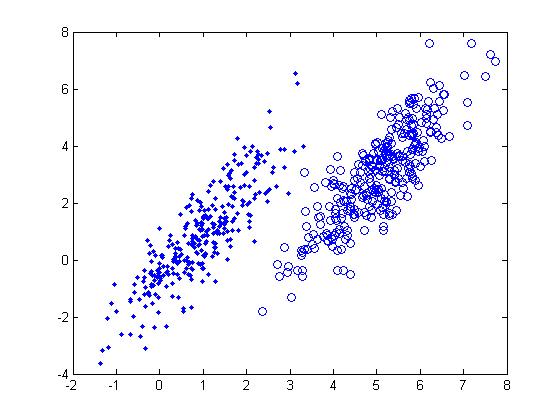

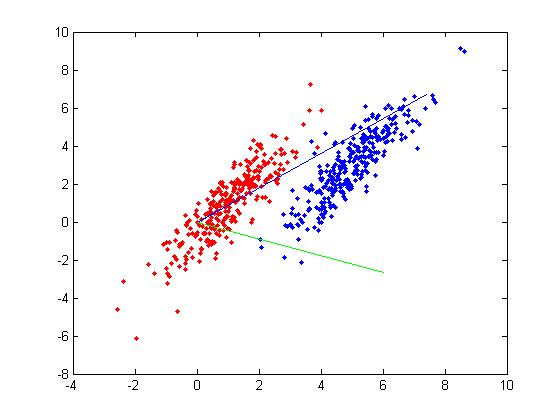

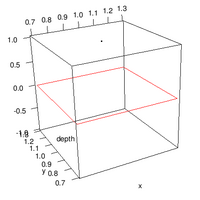

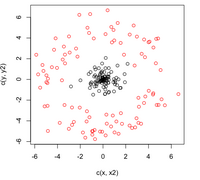

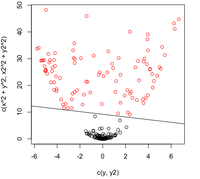

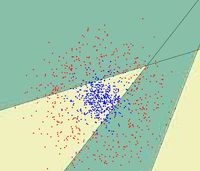

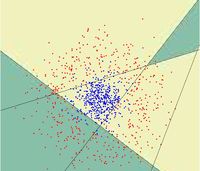

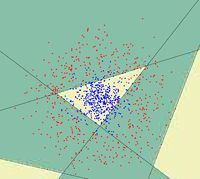

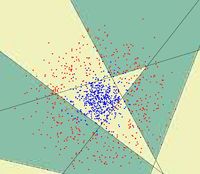

=== By Example ===

:Not only does LDA give us a better result than it did previously, it actually beats QDA, which only correctly classified 371 data points for this data set. Continuing this procedure by adding another two dimensions with <math>x^4</math> (i.e. we set <code>X_star(i,j+2) = X_star(i,j)^4</code>), we can correctly classify 376 points.

===Working Example - Diabetes Data Set===

Let's take a look at a specific data set. This is a [http://archive.ics.uci.edu/ml/datasets/Diabetes diabetes data set] from the UC Irvine Machine Learning Repository. It is a fairly small data set by today's standards. The original data had eight variables. What I did here was to obtain the two most prominent principal components of these eight variables; instead of using the original eight dimensions, we will just use these two principal components for this example.

The Diabetes data set contains two types of samples: healthy individuals and individuals with a higher risk of diabetes. Here are the estimated prior probabilities for both sample types, first for the healthy individuals and second for those at risk:

[[File:eq1.png]]

The first type has an estimated prior probability of 0.651. This means that, of the data set (250 to 300 data points), about 65% belong to class one and the other 35% belong to class two. Next, we computed the mean vector for each of the two classes separately:[[File:eq2.png]]

Then we computed [[File:eq3.jpg]] using the formulas discussed earlier.

Once we had done all of this, we computed the linear discriminant function and found the classification rule. Classification rule:[[File:eq4.jpg]]

In this example, if you give me an <math>\, x</math>, I then plug this value into the above linear function. If the result is greater than or equal to zero, I claim that it is in class one. Otherwise, it is in class two.
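The rule above is a sign test on a linear function of <math>\, x</math>. A minimal Python sketch of how such a rule is formed for two classes under the LDA assumptions, using the standard discriminant <math>\,x^T\Sigma^{-1}(\mu_1-\mu_2)-\frac{1}{2}(\mu_1+\mu_2)^T\Sigma^{-1}(\mu_1-\mu_2)+\log\frac{\pi_1}{\pi_2}</math>: the fitted means and covariance for the Diabetes data appear only in the figures, so the values in the usage example are hypothetical, and only the prior 0.651 comes from the text.

```python
import math


def lda_two_class_rule(x, mu1, mu2, sigma, prior1):
    """Return 1 if the 2-D LDA discriminant is >= 0, else 2.

    Discriminant: (x - (mu1 + mu2) / 2)^T Sigma^{-1} (mu1 - mu2) + log(pi1 / pi2).
    """
    (a, b), (c, d) = sigma
    det = a * d - b * c
    diff = [mu1[0] - mu2[0], mu1[1] - mu2[1]]
    # beta = Sigma^{-1} (mu1 - mu2), using the closed-form 2x2 inverse.
    beta = [(d * diff[0] - b * diff[1]) / det, (-c * diff[0] + a * diff[1]) / det]
    midpoint = [(mu1[0] + mu2[0]) / 2, (mu1[1] + mu2[1]) / 2]
    score = sum(bi * (xi - mi) for bi, xi, mi in zip(beta, x, midpoint))
    score += math.log(prior1 / (1 - prior1))
    return 1 if score >= 0 else 2


# Hypothetical means and shared covariance; prior of class one from the text.
mu1, mu2 = [1.0, 0.0], [-1.0, 0.0]
sigma = [[1.0, 0.0], [0.0, 1.0]]
print(lda_two_class_rule([0.5, 0.0], mu1, mu2, sigma, 0.651))   # 1
print(lda_two_class_rule([-1.0, 0.0], mu1, mu2, sigma, 0.651))  # 2
```

Because the prior of class one exceeds 1/2, the log-prior term shifts the boundary toward class two, so a point exactly midway between the means is still assigned to class one.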

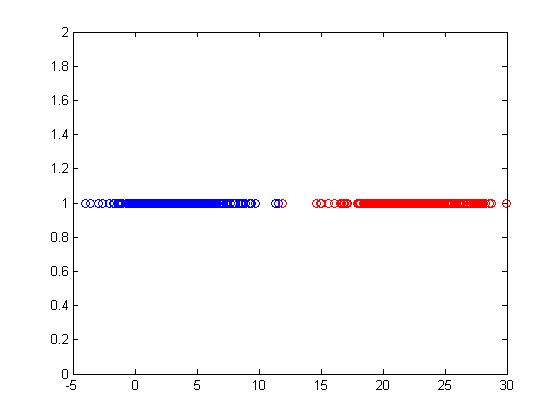

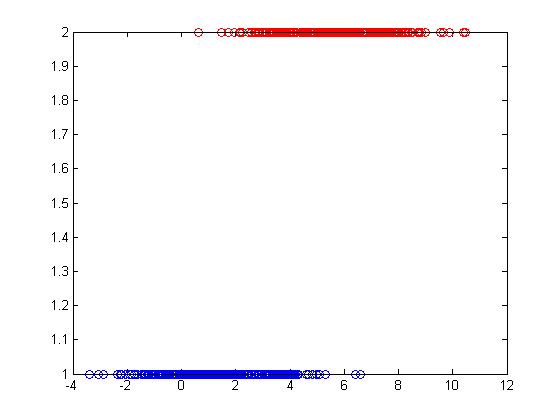

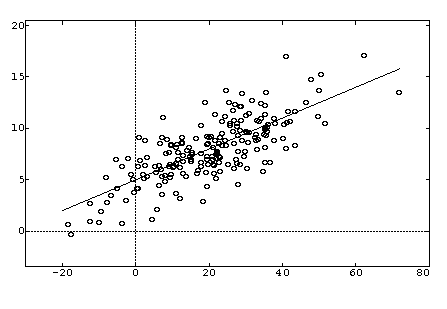

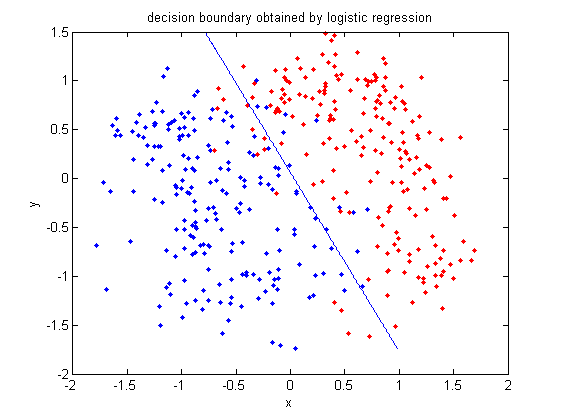

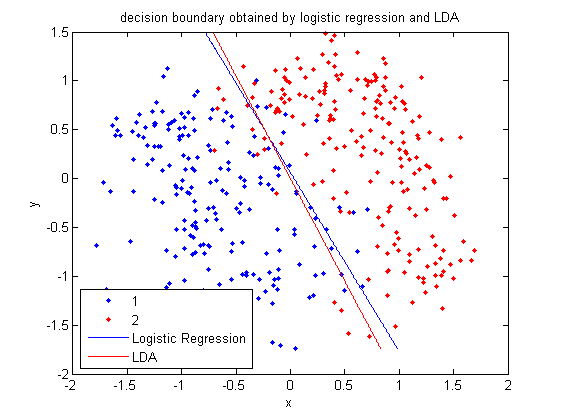

Below is a scatter plot of the two dominant principal components. Both classes are shown: the first (without diabetes) with red stars (class 1), and the second (with diabetes) with blue circles (class 2). The solid line represents the classification boundary obtained by LDA. The two classes do not appear to be well separated. The dashed or dotted line is the boundary obtained by linear regression of an indicator matrix. In this case, the two linear boundaries are very close.

[[File:eq5.jpg]]

It is always good practice to visualize the scheme to check for any obvious mistakes.

* Within-training-data classification error rate: 28.26%.
* Sensitivity: 45.90%.
* Specificity: 85.60%.
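The three figures above come from comparing predicted and true labels: sensitivity is <math>\,\frac{TP}{TP+FN}</math> and specificity is <math>\,\frac{TN}{TN+FP}</math>. Which class counts as "positive" is not stated in the notes; the Python sketch below assumes the at-risk class (class 2) is positive, and its labels are hypothetical rather than the Diabetes results.

```python
def confusion_summary(y_true, y_pred, positive):
    """Error rate, sensitivity and specificity for a two-class problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    error_rate = (fp + fn) / len(pairs)
    sensitivity = tp / (tp + fn)  # true positive rate
    specificity = tn / (tn + fp)  # true negative rate
    return error_rate, sensitivity, specificity


# Hypothetical labels: 2 = at risk of diabetes (treated as positive), 1 = healthy.
y_true = [2, 2, 2, 2, 1, 1, 1, 1, 1, 1]
y_pred = [2, 2, 1, 1, 1, 1, 1, 1, 1, 2]
print(confusion_summary(y_true, y_pred, positive=2))  # (0.3, 0.5, 0.8333333333333334)
```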

Below is the contour plot of the density of the diabetes data (the marginal density of <math>\, x</math> is a mixture of two Gaussians, one per class). It looks like a single Gaussian distribution because the two classes are so close together that they merge into a single mode.

[[File:eq6.jpg]]
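The merging of two class densities into one mode can be checked numerically in a simplified 1-D setting. This is a hypothetical illustration, not the Diabetes data: the Python sketch evaluates a two-component Gaussian mixture with a shared standard deviation on a grid and counts its local maxima. For two components with a common standard deviation <math>\,\sigma</math>, the mixture has a single mode whenever the means are at most <math>\,2\sigma</math> apart.

```python
import math


def normal_pdf(x, mu, sigma):
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))


def mixture_pdf(x, weights, means, sigma):
    return sum(w * normal_pdf(x, m, sigma) for w, m in zip(weights, means))


def count_modes(weights, means, sigma, lo=-10.0, hi=15.0, steps=5000):
    """Count local maxima of the mixture density on an evenly spaced grid."""
    xs = [lo + (hi - lo) * i / steps for i in range(steps + 1)]
    ys = [mixture_pdf(x, weights, means, sigma) for x in xs]
    return sum(1 for i in range(1, steps) if ys[i - 1] < ys[i] >= ys[i + 1])


# Weights echo the estimated priors; the means are hypothetical.
print(count_modes([0.651, 0.349], [0.0, 1.5], 1.0))  # 1 (close classes merge)
print(count_modes([0.651, 0.349], [0.0, 5.0], 1.0))  # 2 (well separated)
```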

=== LDA and QDA in Matlab ===

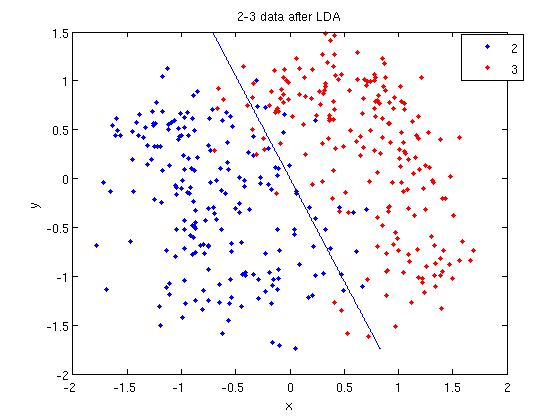

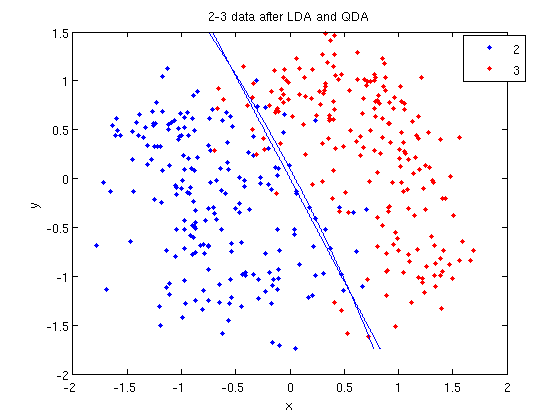

We have examined the theory behind Linear Discriminant Analysis (LDA) and Quadratic Discriminant Analysis (QDA) above; how do we use these algorithms in practice? Matlab offers us a function called [http://www.mathworks.com/access/helpdesk/help/toolbox/stats/index.html?/access/helpdesk/help/toolbox/stats/classify.html <code>classify</code>] that allows us to perform LDA and QDA quickly and easily.
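In Matlab, the `type` argument of `classify(sample, training, group, type)` selects LDA (`'linear'`) or QDA (`'quadratic'`). The sketch below shows in Python/NumPy what the quadratic rule computes. It is not Matlab's implementation. It assumes one Gaussian per class, each with its own covariance, and the class score <math>\delta_k(x) = -\frac{1}{2}\log|\Sigma_k| - \frac{1}{2}(x-\mu_k)^T\Sigma_k^{-1}(x-\mu_k) + \log\pi_k</math>. The function names and the concentric synthetic classes are illustrative assumptions.

```python
import numpy as np

def fit_qda(X, y):
    """Estimate (prior, mean, covariance) for every class label in y."""
    params = {}
    for k in np.unique(y):
        Xk = X[y == k]
        params[k] = (len(Xk) / len(X), Xk.mean(axis=0), np.cov(Xk, rowvar=False))
    return params

def predict_qda(X, params):
    """Assign each row of X to the class with the largest quadratic score."""
    labels = np.array(list(params))
    scores = []
    for k in labels:
        pi_k, mu_k, S_k = params[k]
        diff = X - mu_k
        _, logdet = np.linalg.slogdet(S_k)
        # squared Mahalanobis distance from every row to the class mean
        maha = np.einsum("ij,jk,ik->i", diff, np.linalg.inv(S_k), diff)
        scores.append(-0.5 * logdet - 0.5 * maha + np.log(pi_k))
    return labels[np.argmax(np.column_stack(scores), axis=1)]

# Same mean, different spreads: no straight line separates these classes,
# but the quadratic (circular) boundary does.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 0.5, size=(200, 2)),
               rng.normal(0.0, 3.0, size=(200, 2))])
y = np.array([1] * 200 + [2] * 200)

params = fit_qda(X, y)
qda_acc = np.mean(predict_qda(X, params) == y)
print("QDA training accuracy:", qda_acc)
```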

| Line 550: | Line 674: | ||

'''Recall: An analysis of the <code>princomp</code> function in Matlab.'''

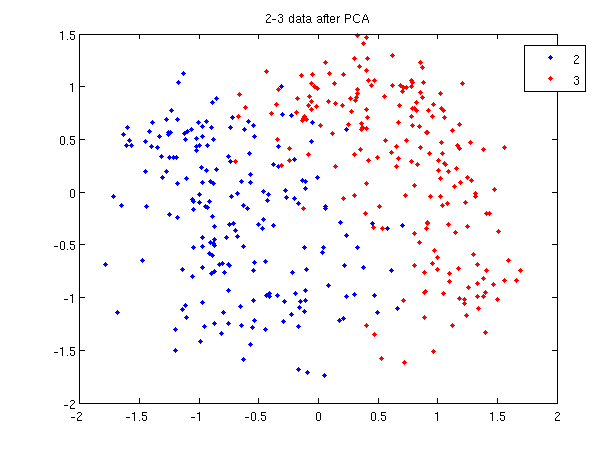

<br />In Assignment 1, we learned how to perform Principal Component Analysis using the SVD (Singular Value Decomposition) method. Matlab also offers a built-in function called [http://www.mathworks.com/access/helpdesk/help/toolbox/stats/index.html?/access/helpdesk/help/toolbox/stats/princomp.html&http://www.google.cn/search?hl=zh-CN&q=mathwork+princomp&btnG=Google+%E6%90%9C%E7%B4%A2&aq=f&oq= <code>princomp</code>] that performs PCA; the Matlab help file for <code>princomp</code> describes this function in detail. Here we analyze Matlab's <code>princomp()</code> code, which differs in some respects from the SVD method we used in Assignment 1. The following is Matlab's code for <code>princomp</code>, followed by some explanations of its key steps.

function [pc, score, latent, tsquare] = princomp(x);

| Line 585: | Line 709: | ||

tsquare = sum(tmp.*tmp)';

We should compare the following aspects of the code above with the SVD method:

First, rows of <math>\,X</math> correspond to observations and columns to variables. When using <code>princomp</code> on the 2_3 data in Assignment 1, note that we take the transpose of <math>\,X</math>.

| Line 595: | Line 719: | ||

Third, when <math>\,X=UdV'</math>, <code>princomp</code> uses <math>\,V</math>, rather than <math>\,U</math>, as the coefficients of the principal components.

The following example performs PCA using <code>princomp</code> and using SVD, and shows that both give the same result.

:SVD method

>> load 2_3

| Line 608: | Line 732: | ||

Then we can see that y=score, v=U.
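The equivalence described above can be checked numerically. The sketch below is in Python/NumPy rather than Matlab and uses random data instead of the 2_3 data. It follows <code>princomp</code>'s convention (rows are observations), centres the columns, and compares the SVD route with an eigendecomposition of the sample covariance matrix. In the SVD route the coefficients are <math>V</math>, the scores are <math>XV = Ud</math>, and the variances are <math>d^2/(n-1)</math>. Eigenvectors are defined only up to sign, so the coefficient comparison uses absolute values.

```python
import numpy as np

rng = np.random.default_rng(2)
# Rows are observations and columns are variables, as princomp expects
X = rng.normal(size=(100, 3)) @ np.array([[3.0, 0.0, 0.0],
                                           [1.0, 1.0, 0.0],
                                           [0.5, 0.2, 0.3]])
n = X.shape[0]
Xc = X - X.mean(axis=0)                 # centre every variable

# SVD route: Xc = U diag(s) V^T; the columns of V are the PC coefficients
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
coeff = Vt.T
score = Xc @ coeff                      # same as U * s
latent = s ** 2 / (n - 1)               # variance along each PC

# Eigendecomposition route on the sample covariance matrix
evals, evecs = np.linalg.eigh(np.cov(X, rowvar=False))
order = np.argsort(evals)[::-1]         # eigh returns ascending order
evals, evecs = evals[order], evecs[:, order]

print("latent:     ", latent)
print("eigenvalues:", evals)
```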

'''Useful resources:'''

LDA and QDA in Matlab: [http://www.mathworks.com/products/statistics/demos.html?file=/products/demos/shipping/stats/classdemo.html], [http://www.mathworks.com/matlabcentral/fileexchange/189], [http://seed.ucsd.edu/~cse190/media07/MatlabClassificationDemo.pdf]

| Line 634: | Line 758: | ||

[http://www.uni-leipzig.de/~strimmer/lab/courses/ss06/seminar/slides/daniela-2x4.pdf LDA & QDA]

Using discriminant analysis for multi-class classification: an experimental investigation [http://www.springerlink.com/content/6851416084227k8p/fulltext.pdf]

===Reference articles on solving the small sample size problem in LDA===

(Based on Li-Fen Chen, Hong-Yuan Mark Liao, Ming-Tat Ko, Ja-Chen Lin, Gwo-Jong Yu, "A new LDA-based face recognition system which can solve the small sample size problem", Pattern Recognition 33 (2000) 1713-1726.)

The small sample size problem occurs when the number of samples is smaller than the dimension of each sample. In this case, the within-class covariance matrix defined in class may be singular, so we cannot compute its inverse for further analysis. Researchers have tried to solve this problem with a variety of techniques:<br />

1. Goudail et al. proposed a technique that computes 25 local autocorrelation coefficients from each sample image to achieve dimensionality reduction. (Referenced by F. Goudail, E. Lange, T. Iwamoto, K. Kyuma, N. Otsu, Face recognition system using local autocorrelations and multiscale integration, IEEE Trans. Pattern Anal. Mach. Intell. 18 (10) (1996) 1024-1028.)<br />

2. Swets and Weng applied PCA to reduce image dimensionality. (Referenced by D. Swets, J. Weng, Using discriminant eigen features for image retrieval, IEEE Trans. Pattern Anal. Mach. Intell. 18 (8) (1996) 831-836.)<br />

3. Fukunaga proposed a more efficient algorithm that computes the eigenvalues and eigenvectors from an m×m matrix rather than an n×n one, where n is the dimensionality of the samples and m is the rank of the within-class scatter matrix Sw. (Referenced by K. Fukunaga, Introduction to Statistical Pattern Recognition, Academic Press, New York, 1990.)<br />

4. Tian et al. used a positive pseudoinverse of Sw instead of its inverse. (Referenced by Q. Tian, M. Barbero, Z.H. Gu, S.H. Lee, Image classification by the Foley-Sammon transform, Opt. Eng. 25 (7) (1986) 834-840.)<br />

5. Hong and Yang added a singular value perturbation to Sw to make it nonsingular. (Referenced by Zi-Quan Hong, Jing-Yu Yang, Optimal discriminant plane for a small number of samples and design method of classifier on the plane, Pattern Recognition 24 (4) (1991) 317-324.)<br />

6. Cheng et al. proposed another method based on the rank decomposition of matrices. The above three methods are all based on the conventional Fisher criterion function. (Referenced by Y.Q. Cheng, Y.M. Zhuang, J.Y. Yang, Optimal fisher discriminant analysis using the rank decomposition, Pattern Recognition 25 (1) (1992) 101-111.)<br />

7. Liu et al. modified the conventional Fisher criterion function and conducted a number of studies based on the new criterion. They used the total scatter matrix, instead of only the within-class scatter matrix, as the divisor of the original Fisher function. (Referenced by K. Liu, Y. Cheng, J. Yang, A generalized optimal set of discriminant vectors, Pattern Recognition 25 (7) (1992) 731-739.)
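The singularity and two of the remedies listed above can be illustrated with a small synthetic example in Python/NumPy. With 10 samples per class in 50 dimensions, the within-class scatter matrix has rank at most 20 - 2 = 18, so it cannot be inverted. The sketch computes a Fisher direction in two ways. The first uses the Moore-Penrose pseudoinverse, in the spirit of item 4. The second adds a small multiple of the identity to Sw, a simple perturbation in the spirit of item 5. The data and the size of `eps` are arbitrary assumptions, not values from the cited papers.

```python
import numpy as np

rng = np.random.default_rng(3)
d, n_per_class = 50, 10                 # dimension exceeds the number of samples
X1 = rng.normal(0.0, 1.0, size=(n_per_class, d))
X2 = rng.normal(0.5, 1.0, size=(n_per_class, d))

def scatter(Xk):
    """Within-class scatter of one class: sum of outer products of centred rows."""
    Xc = Xk - Xk.mean(axis=0)
    return Xc.T @ Xc

Sw = scatter(X1) + scatter(X2)
print("rank of Sw:", np.linalg.matrix_rank(Sw), "of", d)   # generically 18 of 50

dmu = X1.mean(axis=0) - X2.mean(axis=0)

# Remedy 1: Moore-Penrose pseudoinverse in place of the (non-existent) inverse
w_pinv = np.linalg.pinv(Sw) @ dmu

# Remedy 2: perturb Sw so that it becomes nonsingular, then solve as usual
eps = 1e-3 * np.trace(Sw) / d
w_ridge = np.linalg.solve(Sw + eps * np.eye(d), dmu)
```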

==Principal Component Analysis - September 30, 2010==

===Brief introduction to dimension reduction methods===

Dimension reduction is the process of reducing the number of variables in the data. [http://en.wikipedia.org/wiki/Principal_component_analysis Principal components analysis] (PCA) and factor analysis are the two primary classical methods of dimension reduction. PCA creates new variables as linear combinations of the original variables, and the number of new variables kept depends on the proportion of the variance they account for. Factor analysis, by contrast, expresses the original variables as linear combinations of new variables (factors), so the number of factors must first be determined by analyzing the features of the original variables. In general, the idea of both PCA and factor analysis is to use as few combined variables as possible to capture as much information as possible.

===Rough definition===

| Line 646: | Line 788: | ||

Furthermore, if one considers the lower-dimensional representation produced by PCA as a least squares fit of the original data, then it can also be shown that this representation minimizes the reconstruction error of the data. Note, however, that one usually has no control over which dimensions PCA deems the most informative for a given data set, and thus one usually does not know in advance which dimensions PCA will select as the most informative when creating the lower-dimensional representation.

| Line 665: | Line 807: | ||

PCA takes a sample of <math>\, d</math>-dimensional vectors and produces an orthogonal set of <math>\, d</math> 'principal components' (the projections of the data onto them have zero covariance). The first principal component is the direction of greatest variance in the sample. The second principal component is the direction of second greatest variance (orthogonal to the first component), and so on.

We can then preserve most of the variance in the sample in a lower dimension by choosing the first <math>\, k</math> principal components and approximating the data in <math>\, k</math>-dimensional space, which is easier to analyze and plot.
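A common way to pick <math>\, k</math> is to keep the smallest number of components whose eigenvalues account for a target share of the total variance. The Python/NumPy sketch below uses an arbitrary 95% threshold on synthetic 5-dimensional data that lies close to a 2-dimensional subspace. It projects the data onto the first <math>\, k</math> principal components and reconstructs it. It also checks the least-squares property: the squared reconstruction error divided by <math>\, n-1</math> equals the sum of the discarded eigenvalues. The function names are hypothetical.

```python
import numpy as np

def pca_fit(X):
    """Mean, eigenvalues (descending) and eigenvectors of the sample covariance."""
    mu = X.mean(axis=0)
    evals, evecs = np.linalg.eigh(np.cov(X, rowvar=False))
    order = np.argsort(evals)[::-1]
    return mu, evals[order], evecs[:, order]

def choose_k(evals, target=0.95):
    """Smallest k whose leading eigenvalues explain at least `target` of the variance."""
    explained = np.cumsum(evals) / np.sum(evals)
    return int(np.searchsorted(explained, target) + 1)

rng = np.random.default_rng(4)
# 5-D data lying close to a 2-D subspace
Z = rng.normal(size=(500, 2)) * np.array([5.0, 3.0])
basis = np.linalg.qr(rng.normal(size=(5, 5)))[0][:, :2]   # orthonormal 5x2 basis
X = Z @ basis.T + 0.1 * rng.normal(size=(500, 5))

mu, evals, W = pca_fit(X)
k = choose_k(evals, 0.95)
Y = (X - mu) @ W[:, :k]                 # coordinates in k-dimensional space
X_hat = mu + Y @ W[:, :k].T             # approximation back in 5-D
recon = np.sum((X - X_hat) ** 2) / (len(X) - 1)
print("k =", k, "| reconstruction error:", recon, "| discarded eigenvalues:", evals[k:].sum())
```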

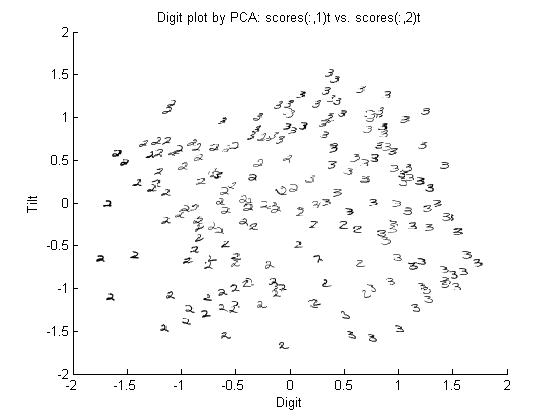

===Principal Components of handwritten digits===

Suppose that we have a set of 130 images (28 by 23 pixels) of handwritten threes.

| Line 694: | Line 832: | ||

===Derivation of the first Principal Component===

To find the direction of maximum variation, let <math>\begin{align}\textbf{w}\end{align}</math> be an arbitrary direction, <math>\begin{align}\textbf{x}\end{align}</math> a data point, and <math>\begin{align}\displaystyle u\end{align}</math> the length of the projection of <math>\begin{align}\textbf{x}\end{align}</math> in the direction <math>\begin{align}\textbf{w}\end{align}</math>.

<br /><br />

<math>\begin{align}

| Line 704: | Line 842: | ||

</math>

<br /><br />

The direction <math>\begin{align}\textbf{w}\end{align}</math> is the same as <math>\begin{align}c\textbf{w}\end{align}</math> for any nonzero scalar <math>c</math>, so without loss of generality we assume that: <br>

<br />

<math>

| Line 713: | Line 851: | ||

</math>

<br /><br />

Let <math>x_1, \ldots, x_D</math> be random variables; our goal is then to maximize the variance of <math>u</math>,

<br /><br />

<math>

| Line 719: | Line 857: | ||

</math>

<br /><br />

For a finite data set, we replace the covariance matrix <math>\Sigma</math> by <math>s</math>, the sample covariance matrix:

<br /><br />

<math>\textrm{var}(u) = \textbf{w}^T s\textbf{w} .</math>
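The identity <math>\textrm{var}(u) = \textbf{w}^T s\textbf{w}</math> for a unit vector <math>\textbf{w}</math> can be checked numerically. In this Python/NumPy sketch, the data and the direction are arbitrary assumptions. The sample variance of the projections <math>u = \textbf{w}^T\textbf{x}</math> matches the quadratic form built from the sample covariance matrix, provided both use the same <math>n-1</math> normalization.

```python
import numpy as np

rng = np.random.default_rng(5)
X = rng.normal(size=(200, 3)) @ np.array([[2.0, 0.3, 0.0],
                                           [0.0, 1.0, 0.5],
                                           [0.0, 0.0, 0.2]])
S = np.cov(X, rowvar=False)             # sample covariance matrix s (divides by n - 1)

w = np.array([1.0, -2.0, 0.5])
w = w / np.linalg.norm(w)               # impose w^T w = 1
u = X @ w                               # u = w^T x for every data point

var_u = np.var(u, ddof=1)               # sample variance of the projections
quad = w @ S @ w                        # w^T s w
print(var_u, quad)                      # the two values agree
```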

<br /><br />

The quantity <math>\textbf{w}^T s\textbf{w}</math> is the variance of <math>\begin{align}\displaystyle u \end{align}</math> formed by the weight vector <math>\begin{align}\textbf{w} \end{align}</math>. The first principal component is the vector <math>\begin{align}\textbf{w} \end{align}</math> that maximizes the variance,

<br /><br />

<math>

| Line 747: | Line 885: | ||

====Lagrange Multiplier====

Before we can proceed, we must review Lagrange multipliers.

[[Image:LagrangeMultipliers2D.svg.png|right|thumb|200px|"The red line shows the constraint g(x,y) = c. The blue lines are contours of f(x,y). The point where the red line tangentially touches a blue contour is our solution." [Lagrange Multipliers, Wikipedia]]]

| Line 766: | Line 904: | ||

====Example====

Suppose we wish to maximize the function <math>\displaystyle f(x,y)=x-y</math> subject to the constraint <math>\displaystyle x^{2}+y^{2}=1</math>. We can apply the Lagrange multiplier method to this example; the Lagrangian is:

<math>\displaystyle L(x,y,\lambda) = x-y - \lambda (x^{2}+y^{2}-1)</math>
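Setting the partial derivatives of this Lagrangian to zero gives <math>1 - 2\lambda x = 0</math>, <math>-1 - 2\lambda y = 0</math> and <math>x^2 + y^2 = 1</math>, so <math>\lambda = \pm 1/\sqrt{2}</math>. The root <math>\lambda = 1/\sqrt{2}</math> gives <math>x = 1/\sqrt{2}</math>, <math>y = -1/\sqrt{2}</math> and the maximum <math>f = \sqrt{2}</math>. The other root gives the minimum <math>-\sqrt{2}</math>. The short Python/NumPy check below confirms the maximum with a brute-force scan of the constraint circle.

```python
import numpy as np

# Stationary point from the Lagrange conditions, taking lambda = 1/sqrt(2)
lam = 1 / np.sqrt(2)
x_star, y_star = 1 / (2 * lam), -1 / (2 * lam)
f_star = x_star - y_star

# Brute force: walk around the constraint circle x = cos(t), y = sin(t)
t = np.linspace(0, 2 * np.pi, 100001)
f = np.cos(t) - np.sin(t)
i = np.argmax(f)
print("Lagrange:", (x_star, y_star, f_star))
print("scan:    ", (np.cos(t[i]), np.sin(t[i]), f[i]))
```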

| Line 802: | Line 940: | ||

<math>\displaystyle S\textbf{w}^* = \lambda^*\textbf{w}^* </math>

<br><br />

From the eigenvalue equation, <math>\, \textbf{w}^*</math> is an eigenvector of '''S''' and <math>\, \lambda^*</math> is the corresponding eigenvalue of '''S'''. If we substitute <math>\displaystyle\textbf{w}^*</math> in <math>\displaystyle \textbf{w}^T S\textbf{w}</math> we obtain, <br /><br />

| Line 810: | Line 945: | ||

<br><br />

To maximize the objective function, we choose the eigenvector corresponding to the largest eigenvalue. We choose the first PC, '''u<sub>1</sub>''', to have maximum variance<br /> (i.e. to capture as much of the variability in <math>\displaystyle x_1, x_2,...,x_D </math> as possible). Subsequent principal components take up successively smaller parts of the total variability.

D-dimensional data will have D eigenvectors

| Line 820: | Line 954: | ||

<math>Var(u_1) \geq Var(u_2) \geq ... \geq Var(u_D)</math>
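These properties are easy to verify numerically. The Python/NumPy sketch below uses synthetic data and sorts the eigenvectors of the sample covariance matrix by decreasing eigenvalue. Call the projected components <math>u_j = \textbf{w}_j^T(\textbf{x} - \bar{\textbf{x}})</math>. Their variances equal the eigenvalues, in non-increasing order, the components are mutually uncorrelated, and their variances add up to the trace of the covariance matrix.

```python
import numpy as np

rng = np.random.default_rng(7)
X = rng.normal(size=(300, 4)) * np.array([3.0, 2.0, 1.0, 0.5])
Q = np.linalg.qr(rng.normal(size=(4, 4)))[0]
X = X @ Q                               # rotate so the PCs are not the coordinate axes
S = np.cov(X, rowvar=False)

evals, evecs = np.linalg.eigh(S)        # ascending order
order = np.argsort(evals)[::-1]
evals, W = evals[order], evecs[:, order]   # lambda_1 >= lambda_2 >= ... >= lambda_D

U = (X - X.mean(axis=0)) @ W            # column j holds u_j = w_j^T (x - mean)
var_u = U.var(axis=0, ddof=1)
print("variances of u_j:", var_u)
print("eigenvalues:     ", evals)
print("sum of eigenvalues:", evals.sum(), "trace of S:", np.trace(S))
```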

If two eigenvalues happen to be equal, then the data has the same amount of variation in each of the two corresponding directions. If only one of the two is to be chosen for dimensionality reduction, either will do. If ALL of the eigenvalues are the same, the data has the same amount of variation in every direction, as it would if, for example, it were spread uniformly over the surface of a D-dimensional sphere.

Note that the Principal Components decompose the total variance in the data:

| Line 864: | Line 999: | ||

====Application of PCA - Feature Extraction ====

Depending on the field of application, PCA is also called the discrete Karhunen–Loève transform (KLT), the Hotelling transform, or proper orthogonal decomposition (POD).

One of the applications of PCA is to group similar data (e.g. images). There are generally two ways to do this. We can classify the data (i.e. give each data point a label and compare different types of data) or cluster it (i.e. do not label the data and compare output for classes).

| Line 890: | Line 1,026: | ||

::#We can compare the reconstructed test sample to the reconstructed training sample to classify the new data.
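The classification idea in the steps above can be sketched in Python/NumPy. The principal directions are orthonormal, so the distance between two reconstructed samples equals the distance between their <math>k</math>-dimensional PCA coordinates. The sketch therefore compares a test sample with the training samples directly in PC space and returns the label of the nearest one. The synthetic data, <code>k = 2</code>, the nearest-neighbour rule, and the function names are illustrative assumptions.

```python
import numpy as np

def pca_basis(X_train, k):
    """Training mean and the top-k principal directions (as columns)."""
    mu = X_train.mean(axis=0)
    # right singular vectors of the centred data = eigenvectors of the covariance
    _, _, Vt = np.linalg.svd(X_train - mu, full_matrices=False)
    return mu, Vt[:k].T

def classify_in_pc_space(X_train, y_train, X_test, k):
    """Label each test sample with the label of its nearest training sample in PC space."""
    mu, W = pca_basis(X_train, k)
    Y_train = (X_train - mu) @ W
    Y_test = (X_test - mu) @ W              # test data uses the training mean and basis
    d2 = ((Y_test[:, None, :] - Y_train[None, :, :]) ** 2).sum(axis=2)
    return y_train[np.argmin(d2, axis=1)]

rng = np.random.default_rng(6)
shift = np.zeros(10)
shift[:2] = 4.0                             # the classes differ along (1, 1, 0, ..., 0)

def sample(n):
    X = np.vstack([rng.normal(size=(n, 10)), rng.normal(size=(n, 10)) + shift])
    return X, np.array([0] * n + [1] * n)

X_train, y_train = sample(100)
X_test, y_test = sample(50)
pred = classify_in_pc_space(X_train, y_train, X_test, k=2)
test_acc = np.mean(pred == y_test)
print("test accuracy:", test_acc)
```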

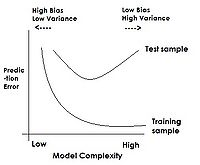

==== Feature Extraction Uses and Discussion ====

PCA, as well as other feature extraction methods not within the scope of this course [http://en.wikipedia.org/wiki/Feature_extraction], is used as a first step in classification to enhance generalization capability. One aspect of classification that will be discussed later in the course is model complexity: as a classification model becomes more complex relative to its training set, classification error on test data tends to increase. By performing feature extraction before attempting classification, we restrict the model inputs to only the most important variables, thus decreasing complexity and potentially improving test results.

Feature ''selection'' methods, which select subsets of relevant features for building robust learning models, differ from extraction methods, in which features are transformed. Feature selection has the added benefit of improving model interpretability.


=== Independent Component Analysis ===

As we have already seen, Principal Component Analysis (PCA), performed by the Karhunen-Loève transform, produces features <math>\ y ( i ) ; i = 0, 1, . . . , N - 1</math> that are mutually uncorrelated. The solution obtained by the KL transform is optimal when dimensionality reduction is the goal and one wishes to minimize the mean square approximation error. For certain applications, however, this solution falls short of expectations. In contrast, the more recently developed theory of Independent Component Analysis (ICA) tries to achieve much more than simple decorrelation of the data. The ICA task is cast as follows: given the set of input samples <math>\ x</math>, determine an <math>\ N \times N</math> invertible matrix <math>\ W</math> such that the entries <math>\ y(i), i = 0, 1, . . . , N - 1</math> of the transformed vector

<math>\ y = Wx</math>

are mutually independent. Statistical independence is a stronger condition than the uncorrelatedness required by PCA; the two conditions are equivalent only for Gaussian random variables. Searching for independent rather than uncorrelated features lets us exploit much more of the information hidden in the higher-order statistics of the data.
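The difference between decorrelation and independence can be illustrated with a toy two-source example in Python/NumPy. This is a simplified sketch, not the algorithm from the book. It first whitens the mixtures, a PCA step that makes them uncorrelated with unit variance. It then searches over rotations of the whitened data for the one whose outputs are most non-Gaussian, measured by the absolute excess kurtosis. The sources, the mixing matrix `A` and the grid search are illustrative assumptions. Practical ICA algorithms, such as FastICA from the book by Hyvärinen, Karhunen and Oja, optimize a similar non-Gaussianity measure more efficiently and in more dimensions.

```python
import numpy as np

def whiten(X):
    """PCA whitening. Rows of X are mixed signals, columns are time samples."""
    Xc = X - X.mean(axis=1, keepdims=True)
    evals, E = np.linalg.eigh(np.cov(Xc))
    return np.diag(1.0 / np.sqrt(evals)) @ E.T @ Xc

def excess_kurtosis(y):
    y = y - y.mean()
    return np.mean(y ** 4) / np.mean(y ** 2) ** 2 - 3.0

def rotation(theta):
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])

def ica_2d(X, n_angles=2000):
    """Rotate the whitened data to maximize total |excess kurtosis| (2 sources only)."""
    Z = whiten(X)
    angles = np.linspace(0, np.pi / 2, n_angles, endpoint=False)
    scores = [sum(abs(excess_kurtosis(r)) for r in rotation(a) @ Z) for a in angles]
    return rotation(angles[int(np.argmax(scores))]) @ Z

t = np.linspace(0, 50, 5000)
S = np.vstack([np.sin(2 * t), np.sign(np.sin(3 * t))])   # two non-Gaussian sources
A = np.array([[1.0, 0.6], [0.4, 1.0]])                     # hypothetical mixing matrix
X = A @ S                                                  # observed mixtures

Y = ica_2d(X)
# |correlation| between each recovered component (rows) and each source (columns)
C = np.abs(np.corrcoef(np.vstack([Y, S]))[:2, 2:])
print(np.round(C, 3))
```

As with any ICA method, the components are recovered only up to order and sign, which is why the check uses absolute correlations.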

This topic is drawn from ''Pattern Recognition'' by Sergios Theodoridis and Konstantinos Koutroumbas (Chapter 6). For further details on ICA and its variants, refer to this book.

=== References ===

1. Probabilistic Principal Component Analysis

[http://onlinelibrary.wiley.com/doi/10.1111/1467-9868.00196/abstract]

2. Nonlinear Component Analysis as a Kernel Eigenvalue Problem

[http://www.mitpressjournals.org/doi/abs/10.1162/089976698300017467]

3. Kernel principal component analysis

[http://www.springerlink.com/content/w0t1756772h41872/]

4. Principal Component Analysis

[http://onlinelibrary.wiley.com/doi/10.1002/0470013192.bsa501/full] and [http://support.sas.com/publishing/pubcat/chaps/55129.pdf]

=== Further Readings ===

1. I. T. Jolliffe, "Principal component analysis". Available [http://books.google.ca/books?id=_olByCrhjwIC&printsec=frontcover&dq=principal+component+analysis&hl=en&ei=TooCTaesN42YnweR843lDQ&sa=X&oi=book_result&ct=result&resnum=1&ved=0CC4Q6AEwAA#v=onepage&q&f=false here].

2. James V. Stone, "Independent component analysis: a tutorial introduction". Available [http://books.google.ca/books?id=P0rROE-WFCwC&pg=PA129&dq=principal+component+analysis&hl=en&ei=TooCTaesN42YnweR843lDQ&sa=X&oi=book_result&ct=result&resnum=7&ved=0CEYQ6AEwBg#v=onepage&q=principal%20component%20analysis&f=false here].

3. Aapo Hyvärinen, Juha Karhunen, Erkki Oja, "Independent component analysis". Available [http://books.google.ca/books?id=96D0ypDwAkkC&printsec=frontcover&dq=independent+component+analysis&hl=en&ei=F4wCTZqjJY2RnAew6pnlDQ&sa=X&oi=book_result&ct=result&resnum=1&ved=0CCoQ6AEwAA#v=onepage&q&f=false here].

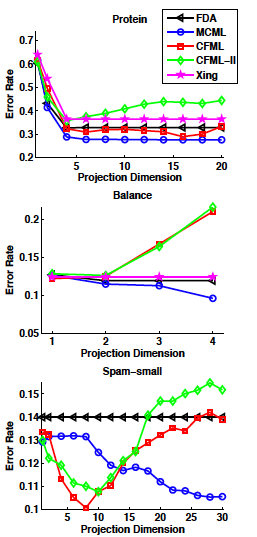

== Fisher's (Linear) Discriminant Analysis (FDA) - Two Class Problem - October 5, 2010 ==

===Sir Ronald A. Fisher===

Fisher's Discriminant Analysis (FDA), also known as Fisher's Linear Discriminant Analysis ([http://en.wikipedia.org/wiki/Linear_discriminant_analysis LDA]) in some sources, is a classical [http://en.wikipedia.org/wiki/Feature_extraction feature extraction] technique. It was originally described in 1936 by Sir [http://en.wikipedia.org/wiki/Ronald_A._Fisher Ronald Aylmer Fisher], an English statistician and eugenicist who has been described as one of the founders of modern statistical science. His original paper describing FDA can be found [http://digital.library.adelaide.edu.au/dspace/handle/2440/15227 here]; a Wikipedia article summarizing the algorithm can be found [http://en.wikipedia.org/wiki/Linear_discriminant_analysis#Fisher.27s_linear_discriminant here].

In this paper, Fisher used the term ''discriminant function'' for the first time. The term ''discriminant analysis'' was introduced later by Fisher himself, in a subsequent paper that can be found [http://digital.library.adelaide.edu.au/coll/special//fisher/155.pdf here].

===Introduction===

'''Linear discriminant analysis''' ([http://en.wikipedia.org/wiki/Linear_discriminant_analysis LDA]) and the related '''Fisher's linear discriminant''' are methods used in statistics, pattern recognition and machine learning to find a linear combination of features that characterizes or separates two or more classes of objects or events. The resulting combination may be used as a linear classifier or, more commonly, for dimensionality reduction before later classification.

LDA is also closely related to principal component analysis ([http://en.wikipedia.org/wiki/Principal_component_analysis PCA]) and [http://en.wikipedia.org/wiki/Factor_analysis factor analysis] in that all three look for linear combinations of variables that best explain the data. LDA explicitly attempts to model the difference between the classes of data. PCA, on the other hand, does not take any difference in class into account, and factor analysis builds the feature combinations based on differences rather than similarities. Discriminant analysis also differs from factor analysis in that it is not an interdependence technique: a distinction must be made between independent variables and dependent variables (also called criterion variables).

LDA works when the measurements made on the independent variables for each observation are continuous quantities. When dealing with categorical independent variables, the equivalent technique is '''discriminant correspondence analysis'''.

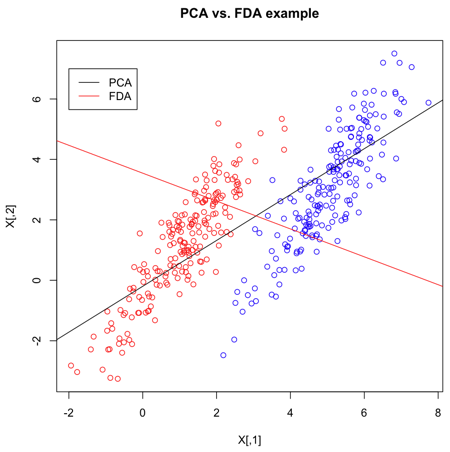

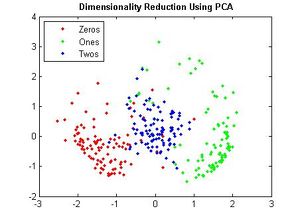

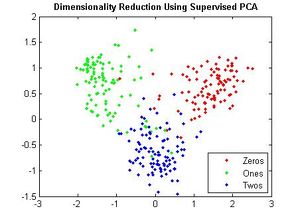

=== Contrasting FDA with PCA ===

As in PCA, the goal of FDA is to project the data onto a lower dimension. You might ask why FDA was invented when PCA already existed. There is a simple explanation, which can be found [http://www.ics.uci.edu/~welling/classnotes/papers_class/Fisher-LDA.pdf here]. PCA is an unsupervised method, so it does not take the labels in the data into account. Suppose we have two clusters that have different labels but lie parallel to each other and very close to each other. In this case, most of the total variation of the data is along the direction of these two clusters. If we use PCA in a case like this, both clusters are projected onto the direction of greatest variation of the data and become, in effect, a single cluster after projection. PCA would therefore mix up these two clusters even though they have different labels. What we need to do instead, in cases like this, is to project the data onto the direction orthogonal to the direction of greatest variation, i.e. the direction of least variation of the data. On the 1-dimensional space resulting from such a projection, we can then classify the data effectively, because the two clusters are perfectly or nearly perfectly separated according to their labels. This is exactly the idea behind FDA.
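The situation described above can be reproduced with a small synthetic example in Python/NumPy. The two classes are long, thin, parallel clouds. PCA ignores the labels and picks the long horizontal direction, on which the classes overlap. The two-class Fisher direction, taken here in its standard closed form <math>\textbf{w} \propto (\Sigma_1 + \Sigma_2)^{-1}(\mu_1 - \mu_2)</math>, is close to vertical and separates them. The data and the `separation` score are illustrative assumptions. The score is the distance between the projected class means, in units of the projected within-class standard deviation.

```python
import numpy as np

rng = np.random.default_rng(8)
n = 200
# Two long, thin, parallel clusters: wide spread along x, small gap along y
X1 = np.column_stack([rng.normal(0, 5, n), rng.normal(+1, 0.3, n)])
X2 = np.column_stack([rng.normal(0, 5, n), rng.normal(-1, 0.3, n)])
X = np.vstack([X1, X2])

# PCA ignores labels: top eigenvector of the covariance of all points
evals, evecs = np.linalg.eigh(np.cov(X, rowvar=False))
w_pca = evecs[:, np.argmax(evals)]

# Two-class Fisher direction (Sigma_1 + Sigma_2)^{-1} (mu_1 - mu_2), normalized
mu1, mu2 = X1.mean(axis=0), X2.mean(axis=0)
Sw = np.cov(X1, rowvar=False) + np.cov(X2, rowvar=False)
w_fda = np.linalg.solve(Sw, mu1 - mu2)
w_fda = w_fda / np.linalg.norm(w_fda)

def separation(w):
    """Gap between projected class means in units of projected within-class std."""
    p1, p2 = X1 @ w, X2 @ w
    pooled_sd = np.sqrt(0.5 * (p1.var(ddof=1) + p2.var(ddof=1)))
    return abs(p1.mean() - p2.mean()) / pooled_sd

print("PCA direction:", w_pca, "separation:", separation(w_pca))
print("FDA direction:", w_fda, "separation:", separation(w_fda))
```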

The main difference between FDA and PCA is that, in FDA, in contrast to PCA, we are not interested in retaining as much of the variance of our original data as possible. Rather, in FDA, our goal is to find a direction that is useful for classifying the data (i.e. in this case, we are looking for a direction that is most representative of a particular characteristic, e.g. glasses vs. no glasses).

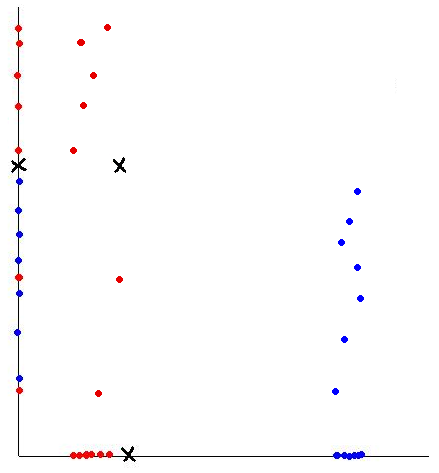

Suppose we have two classes of 2-dimensional data. FDA attempts to project the data onto a line such that each class is (ideally) collapsed onto a single point and the two resulting points are as far apart from each other as possible. Intuitively, this is the optimal way to separate a pair of classes along a single direction.

Note that dimension reduction in PCA is different from subspace clustering; see [http://en.wikipedia.org/wiki/Clustering_high-dimensional_data] for details on subspace clustering.

{{Cleanup|date=October 2010|reason= Just a thought: how relevant is "Dimensionality reduction techniques" to the concept of "subspace clustering"? In subspace clustering, the goal is to find a set of features (relevant features; the concept is referred to as local feature relevance in the literature) in the high-dimensional space, where potential subspaces accommodating different classes of data points can be defined. This means that the data points are dense when they are considered in a subset of dimensions (features).}}

{{Cleanup|date=October 2010|reason=If I'm not mistaken, classification techniques like FDA use labeled training data whereas clustering techniques use unlabeled training data instead. Any other input regarding this would be much appreciated. Thanks}}

{{Cleanup|date=October 2010|reason=An extension of clustering is subspace clustering, in which different subspaces are searched to find the relevant and appropriate dimensions. Points in high-dimensional data sets are roughly equidistant from each other, so feature selection methods are used to remove the irrelevant dimensions. These techniques do not preserve relative distances, so PCA is not useful for these applications. Note that subspace clustering algorithms localize their search, unlike feature selection algorithms. For more information, click here [http://portal.acm.org/citation.cfm?id=1007731]}}

The number of dimensions that we want to reduce the data to depends on the number of classes:

<br>

For a two-class problem, we want to reduce the data to one dimension (a line), <math>\displaystyle Z \in \mathbb{R}^{1}</math>

<br>

Generally, for a k-class problem, we want to reduce the data to k-1 dimensions, <math>\displaystyle Z \in \mathbb{R}^{k-1}</math>
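The reason for the <math>k-1</math> limit lies in the between-class scatter matrix <math>S_B = \sum_j n_j(\mu_j - \mu)(\mu_j - \mu)^T</math>. It is built from <math>k</math> vectors <math>\mu_j - \mu</math> whose weighted sum is zero, so its rank is at most <math>k-1</math>. As a result, at most <math>k-1</math> eigenvalues of <math>S_W^{-1}S_B</math> are nonzero. The Python/NumPy sketch below checks this for three synthetic classes in five dimensions and projects the data onto the <math>k-1 = 2</math> leading directions. Using the eigenvectors of <math>S_W^{-1}S_B</math> is the usual multi-class generalization of FDA; it is assumed here rather than taken from these notes.

```python
import numpy as np

rng = np.random.default_rng(9)
k, d, n = 3, 5, 100                     # 3 classes in 5-D, 100 points per class
means = rng.normal(0, 3, size=(k, d))
classes = [rng.normal(m, 1.0, size=(n, d)) for m in means]

mu = np.vstack(classes).mean(axis=0)    # overall mean
Sw = sum((Xj - Xj.mean(axis=0)).T @ (Xj - Xj.mean(axis=0)) for Xj in classes)
Sb = sum(len(Xj) * np.outer(Xj.mean(axis=0) - mu, Xj.mean(axis=0) - mu) for Xj in classes)

sv = np.linalg.svd(Sb, compute_uv=False)
rank_Sb = int(np.sum(sv > 1e-10 * sv[0]))   # numerical rank of S_B

# Multi-class FDA directions: leading eigenvectors of Sw^{-1} Sb
evals, evecs = np.linalg.eig(np.linalg.solve(Sw, Sb))
order = np.argsort(evals.real)[::-1]
evals = evals.real[order]
W = evecs.real[:, order[: k - 1]]       # keep k - 1 = 2 directions
Z = np.vstack(classes) @ W              # data represented in R^(k-1)
print("rank of S_B:", rank_Sb, "| eigenvalues:", np.round(evals, 6), "| Z shape:", Z.shape)
```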

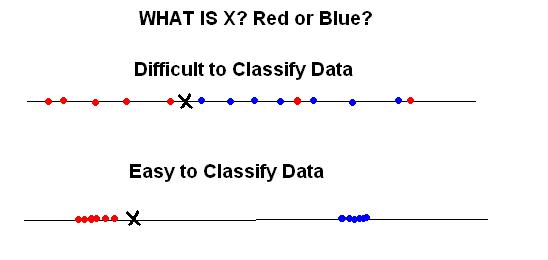

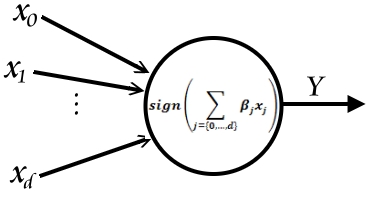

As we will see from our objective function, we want to maximize the separation of the classes and to minimize the within-variance of each class. That is, our ideal situation is that the individual classes are as far away from each other as possible, and at the same time the data within each class are as close to each other as possible (collapsed to a single point in the most extreme case).
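A common way to write this goal is the ratio <math>J(\textbf{w}) = \frac{(\textbf{w}^T(\mu_1 - \mu_2))^2}{\textbf{w}^T(\Sigma_1 + \Sigma_2)\textbf{w}}</math>, assumed here to be the usual two-class Fisher criterion. The numerator rewards well-separated projected means, and the denominator penalizes projected within-class variance. Because <math>J(c\textbf{w}) = J(\textbf{w})</math>, only the direction matters. The Python/NumPy sketch below uses synthetic data. It compares the closed-form maximizer <math>\textbf{w} \propto (\Sigma_1 + \Sigma_2)^{-1}(\mu_1 - \mu_2)</math> with a brute-force scan over directions in the plane.

```python
import numpy as np

rng = np.random.default_rng(10)
X1 = rng.multivariate_normal([0.0, 0.0], [[2.0, 1.2], [1.2, 1.0]], size=300)
X2 = rng.multivariate_normal([2.0, 0.0], [[1.0, -0.3], [-0.3, 1.5]], size=300)
mu1, mu2 = X1.mean(axis=0), X2.mean(axis=0)
S1, S2 = np.cov(X1, rowvar=False), np.cov(X2, rowvar=False)

def fisher_J(w):
    """Squared gap between projected means over the sum of projected variances."""
    return (w @ (mu1 - mu2)) ** 2 / (w @ (S1 + S2) @ w)

# Closed-form maximizer (any nonzero multiple gives the same J)
w_star = np.linalg.solve(S1 + S2, mu1 - mu2)

# Brute-force scan over unit directions in the plane
angles = np.linspace(0, np.pi, 20000, endpoint=False)
J_grid = np.array([fisher_J(np.array([np.cos(a), np.sin(a)])) for a in angles])
print("J(w*) =", fisher_J(w_star), "| best J on the grid =", J_grid.max())
```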

| Line 973: | Line 1,155: | ||

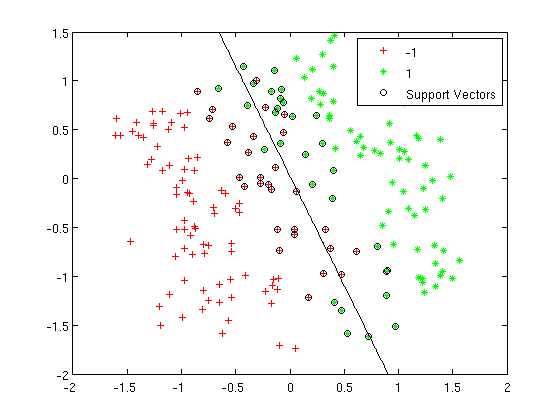

=== Two-class problem ===

In the two-class problem, we have prior knowledge that the data points belong to two classes. Conceptually, the points of each class form a cloud around the class mean, and each class has a distinct size. To divide points between the two classes, we must determine the class whose mean is closest to each point, and we must also account for the different size of each class, given by its covariance.

Assume <math>\underline{\mu_{1}}=\frac{1}{n_{1}}\displaystyle\sum_{i:y_{i}=1}\underline{x_{i}}</math> and <math>\displaystyle\Sigma_{1}</math>,

| Line 987: | Line 1,169: | ||

{{Cleanup|date=October 2010|reason=In 2. above, I wonder if the computation would be much more complex if we instead find a weighted sum of the covariances of the two classes where the weights are the sizes of the two classes?}}

{{Cleanup|date=December 2010|reason= If using the weighted sum of the two covariances, you will need to use the shared mean of the two classes, and the weighted sum will be the shared covariance. Doing this will collapse the two classes into one point, which contradicts the purpose of using FDA.}}

As is demonstrated below, both of these goals can be accomplished simultaneously.

| Line 1,116: | Line 1,299: | ||

===Some of FDA applications=== | ===Some of FDA applications=== | ||

There are many applications for FDA in many domains | There are many applications for FDA in many domains; a few examples are stated below: | ||

* Speech/Music/Noise Classification in Hearing Aids

FDA can be used to enhance listening comprehension when the user moves from one sound environment to a different one. In practice, many people who require hearing aids do not wear them, due in part to the nuisance of having to adjust the settings each time they change noise environments (for example, from a quiet walk in the park to a crowded cafe). If the hearing aid itself could identify the type of sound environment and adjust its settings automatically, many more people might be willing to wear it. The paper by Alexandre et al. compares a single-level classifier with three classes ("speech", "noisy" and "music" environments) against a two-level classifier with two classes at each level ("speech" versus "non-speech", and then, within the "non-speech" group, "noisy" versus "music"), and also discusses the feasibility of implementing these classifiers in hearing aids. For more information, see the paper [http://www.eurasip.org/Proceedings/Eusipco/Eusipco2008/papers/1569101740.pdf here].


* Application to Face Recognition

FDA can be used for face recognition in several settings. In the one-dimensional approach, each image is flattened into a long column vector, and the resulting within-class and between-class covariance matrices are less than full rank. Kong et al. suggest several alternative ways of using FDA, including a two-dimensional approach in which each image is stored as a matrix rather than a column vector; in this case, the covariance matrices are full-rank. Details can be found in the paper by Kong et al. [http://person.hst.aau.dk/pimuller/2D_FDA_Face_CVPR05fish.pdf here].

* Palmprint Recognition

FDA is used in biometrics to implement automated palmprint recognition systems. Tee et al. [http://www.sciencedirect.com/science?_ob=MImg&_imagekey=B6V09-4FJ5XPN-1-1&_cdi=5641&_user=1067412&_pii=S0262885605000089&_origin=search&_coverDate=05%2F01%2F2005&_sk=999769994&view=c&wchp=dGLbVzz-zSkWb&md5=a064b67c9bdaaba7e06d800b6c9b209b&ie=/sdarticle.pdf here] proposed an automated palmprint recognition system in which FDA is used to match images in a compressed subspace chosen to best discriminate among the classes. This differs from PCA in that FDA deals directly with class separation, whereas PCA treats the images in their entirety without considering the underlying class structure.

* Other Applications

Other applications include authenticating different types of olive oil [4], classifying multiple fault classes [5], face recognition [6], and analysing shape deformations to localize epilepsy [8].

=== '''References'''===


8. Kodipaka, S.; Vemuri, B.C.; Rangarajan, A.; Leonard, C.M.; Schmallfuss, I.; Eisenschenk, S.; "Kernel Fisher discriminant for shape-based classification in epilepsy", Medical Image Analysis, 2007. [http://www.sciencedirect.com/science/article/B6W6Y-4MH8BS0-1/2/055fb314828d785a5c3ca3a6bf3c24e9 8]

9. Fisher LDA and Kernel Fisher LDA [http://www.ics.uci.edu/~welling/classnotes/papers_class/Fisher-LDA.pdf]

==Fisher's (Linear) Discriminant Analysis (FDA) - Multi-Class Problem - October 7, 2010==

===Obtaining Covariance Matrices===


eigenvalues with respect to

{{Cleanup|reason=What if we encounter complex eigenvalues? Then the concept of being large does not make sense. What is the solution in that case?}}

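{{Cleanup|date=December 2010|reason=Covariance matrices are symmetric positive semi-definite, so <math>\mathbf{S}_{W}</math> and <math>\mathbf{S}_{B}</math> are too. Assuming <math>\mathbf{S}_{W}</math> is invertible, it is positive definite, and so is its inverse. The product of two positive semi-definite matrices need not be symmetric or positive semi-definite, but <math>\mathbf{S}_{W}^{-1}\mathbf{S}_{B}</math> is similar to <math>\mathbf{S}_{W}^{-1/2}\mathbf{S}_{B}\mathbf{S}_{W}^{-1/2}</math>, which is symmetric positive semi-definite. Similar matrices have the same eigenvalues, so the eigenvalues of <math>\mathbf{S}_{W}^{-1}\mathbf{S}_{B}</math> are always real and non-negative.}}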

:<math>


{{Cleanup|date=October 2010|reason=Would you please show how we could reconstruct the original data from data whose dimensionality has been reduced by FDA?}}

{{Cleanup|date=October 2010|reason= When you reduce the dimensionality of data in the general case, you lose some features of the data, and you cannot reconstruct the data from the reduced space unless the data have special properties, such as sparsity, that help with the reconstruction. In FDA, it seems that we cannot reconstruct the data in general from its reduced version.}}

====Advantages of FDA compared with PCA====

* PCA finds components that are useful for representing the data.
* However, there is no reason to assume that these components are useful for discriminating between classes.
* In FDA, we use the labels to find the components that are useful for discriminating the data.
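The contrast can be sketched in MATLAB on a hypothetical data set of two long, thin clouds stacked vertically (all values are assumptions for illustration). PCA ignores the labels and picks the horizontal direction of largest spread, along which the two classes overlap completely; FDA uses the labels and picks the vertical direction that separates them.

```matlab
% Hypothetical data: two long, thin clouds stacked vertically
x  = [-4; -2; 0; 2; 4];
yo = [1.1; 0.9; 1.1; 0.9; 1.0];    % small vertical jitter keeps the covariances invertible
X1 = [x,  yo];                     % class 1, centred near y = +1
X2 = [x, -yo];                     % class 2, centred near y = -1
X  = [X1; X2];

% PCA: eigenvector of the pooled covariance with the largest eigenvalue
[V, D] = eig(cov(X));
[~, iMax] = max(diag(D));
pc = V(:, iMax);

% FDA: w proportional to (Sigma1 + Sigma2)^{-1} (mu1 - mu2)
w = (cov(X1) + cov(X2)) \ (mean(X1, 1) - mean(X2, 1))';
w = w / norm(w);

disp(pc')   % approximately [1 0] up to sign: the horizontal axis, where the classes overlap
disp(w')    % approximately [0 1]: the vertical axis, where the classes separate
```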

===Generalization of Fisher's Linear Discriminant Analysis ===


Also notice that LDA can be seen as a dimensionality reduction technique. In a general k-class problem, we have k class means, which lie in an affine subspace of dimension at most k-1. Given a data point, we look for the class mean closest to it. In LDA, we project the data point onto this subspace and compute distances within it. If the dimensionality of the data, d, is much larger than the number of classes, k, this is a considerable reduction, from d dimensions to k-1 dimensions.
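A small numerical check of this dimension argument, written as a MATLAB sketch on hypothetical data (three classes in three dimensions; the offsets and means are made up). It builds the within-class and between-class scatter matrices as unnormalized sums; with equal class sizes this only rescales the eigenvalues relative to a covariance-based definition. The check confirms that <math>\mathbf{S}_{B}</math> has rank at most k-1 and that <math>\mathbf{S}_{W}^{-1}\mathbf{S}_{B}</math> has real, non-negative eigenvalues, at most k-1 of them nonzero.

```matlab
% Hypothetical 3-class data in d = 3 dimensions (k = 3 classes)
O = [1 0 0; -1 0 0; 0 1 0; 0 -1 0; 0 0 1; 0 0 -1];   % offsets around each class mean
means = [0 0 0; 4 0 0; 0 4 1];
k = size(means, 1);
X = []; y = [];
for c = 1:k
    X = [X; O + repmat(means(c, :), size(O, 1), 1)];
    y = [y; c * ones(size(O, 1), 1)];
end

mu = mean(X, 1);                      % overall mean (row vector)
d  = size(X, 2);
Sw = zeros(d); Sb = zeros(d);
for c = 1:k
    Xc  = X(y == c, :);
    muc = mean(Xc, 1);
    Dc  = Xc - repmat(muc, size(Xc, 1), 1);
    Sw  = Sw + Dc' * Dc;                                % within-class scatter
    Sb  = Sb + size(Xc, 1) * (muc - mu)' * (muc - mu);  % between-class scatter
end

lambda = eig(Sw \ Sb);
disp(rank(Sb))        % at most k - 1 = 2
disp(sort(lambda)')   % real and non-negative; one is (numerically) zero
```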

===Multiple Discriminant Analysis===

Multiple Discriminant Analysis (MDA) is also termed Discriminant Factor Analysis or Canonical Discriminant Analysis. It adopts a perspective similar to PCA: the rows of the data matrix constitute points in a multidimensional space, as do the group mean vectors. Discriminating axes are determined in this space in such a way that optimal separation of the predefined groups is attained. As with PCA, the problem mathematically becomes the eigendecomposition of a real, symmetric matrix. The eigenvalues represent the discriminating power of the associated eigenvectors. The <math>n_Y</math> groups lie in a space of dimension at most <math>n_Y - 1</math>. This is the number of discriminant axes or factors obtainable in the most common practical case, when <math>n > m > n_Y</math>, where n is the number of rows and m the number of columns of the input data matrix.

===MATLAB Example: Multiple Discriminant Analysis for Face Recognition===

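The following MATLAB code is an example of using MDA for face recognition. The dataset used can be found [http://www.cl.cam.ac.uk/research/dtg/attarchive/facedatabase.html here]. It contains a set of face images taken between April 1992 and April 1994 at the lab. The database was used in the context of a face recognition project carried out in collaboration with the Speech, Vision and Robotics Group of the Cambridge University Engineering Department.

<pre>
% Assumes orl_faces_112x92.mat holds a 10304x400 matrix "faces": one vectorized
% 112x92 image per column, 10 images per person, 40 people, stored person by person.
load orl_faces_112x92.mat

% Step 1: PCA down to 150 dimensions (keeps the within-class scatter invertible)
u = mean(faces, 2);                          % mean face
stfaces = faces - u * ones(1, 400);          % centred faces
S = stfaces' * stfaces;                      % 400x400 Gram matrix, cheaper than 10304x10304
[V, E] = eig(S);
[evals, order] = sort(diag(E), 'descend');   % do not rely on the order eig returns
U = zeros(size(stfaces, 1), 150);
for i = 1:150
    % map each Gram-matrix eigenvector back to a unit-length eigenface
    U(:, i) = stfaces * V(:, order(i)) / sqrt(evals(i));
end
defaces = U' * stfaces;                      % 150x400 PCA coefficients

% Step 2: first 5 images of each person for training, last 5 for testing
lsamfaces = zeros(150, 200);
ltesfaces = zeros(150, 200);
for i = 1:40
    for j = 1:5
        lsamfaces(:, j + 5*i - 5) = defaces(:, j + 10*i - 10);   % images 1-5
        ltesfaces(:, j + 5*i - 5) = defaces(:, j + 10*i - 5);    % images 6-10
    end
end

% Step 3: scatter matrices on the training set.
% kron(eye(40), ones(5))/5 averages each block of 5 columns (one person),
% so this subtracts each person's class mean from that person's samples.
stlsamfaces = lsamfaces - lsamfaces * kron(eye(40), ones(5)) / 5;
Sw = stlsamfaces * stlsamfaces';                          % within-class scatter
zstlsamfaces = lsamfaces - mean(lsamfaces, 2) * ones(1, 200);
St = zstlsamfaces * zstlsamfaces';                        % total scatter
Sb = St - Sw;                                             % between-class scatter

% Step 4: keep the 39 (= 40 classes - 1) leading discriminant directions
[V, D] = eig(Sw \ Sb);
[~, order] = sort(real(diag(D)), 'descend');              % eig does not sort these
W = real(V(:, order(1:39)));
desamfaces = W' * lsamfaces;
detesfaces = W' * ltesfaces;

% Step 5: match each test face to its nearest training face (1-NN)
rightnum = 0;
for i = 1:200
    mindis = Inf; minplace = 1;
    for j = 1:200
        distan = norm(detesfaces(:, i) - desamfaces(:, j));
        if distan < mindis
            mindis = distan;
            minplace = j;
        end
    end
    if ceil(minplace / 5) == ceil(i / 5)    % same person (5 samples per person)
        rightnum = rightnum + 1;
    end
end
rightrate = rightnum / 200
</pre>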

===K-NN Discriminant Analysis===

Non-parametric (distribution-free) methods dispense with the need for assumptions regarding the probability density function. They have become very popular, especially in image processing. The K-NN method assigns an object of unknown affiliation to the group to which the majority of its K nearest neighbours belong.
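The K-NN rule can be sketched in a few lines of MATLAB. This is a minimal sketch, assuming Euclidean distance and a hypothetical toy training set (Xtrain, ytrain and the query points Xnew are made up); ties in the vote are resolved by `mode`, which returns the smallest of the most frequent labels.

```matlab
% Hypothetical training set: rows of Xtrain are points, ytrain their labels
Xtrain = [0 0; 0 1; 1 0; 5 5; 5 6; 6 5];
ytrain = [1; 1; 1; 2; 2; 2];
K = 3;                                    % number of neighbours that vote

Xnew = [0.5 0.5; 5.2 5.4];                % objects of unknown affiliation
labels = zeros(size(Xnew, 1), 1);
for q = 1:size(Xnew, 1)
    diffs = Xtrain - repmat(Xnew(q, :), size(Xtrain, 1), 1);
    dist  = sqrt(sum(diffs .^ 2, 2));     % Euclidean distance to every training point
    [~, order] = sort(dist);              % nearest first
    labels(q) = mode(ytrain(order(1:K))); % majority vote among the K nearest
end
disp(labels')   % expected: 1 2
```

No model is fitted: all the work happens at query time, which is why the rule needs no estimates of means or covariance matrices.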

No single discrimination method is best in all cases. A few remarks concerning the advantages and disadvantages of the methods studied are as follows.

:1. Analytical simplicity or computational considerations may lead to initial consideration of linear discriminant analysis or the NN rule.

:2. Linear discrimination is the most widely used method in practice. The two-group method is often applied repeatedly to pairs of groups in multigroup data (yielding <math>\frac{k(k-1)}{2}</math> decision surfaces for k groups).

:3. Estimating the parameters required in quadratic discrimination takes more computation and more data than linear discrimination does. If the group covariance matrices do not differ greatly, linear discrimination will perform about as well as quadratic discrimination.

:4. The k-NN rule is simple to define and implement, and is especially attractive when there are insufficient data to adequately estimate sample means and covariance matrices.

:5. MDA is most appropriately used for feature selection. As in the case of PCA, we may want to focus on the variables used in order to investigate the differences between groups; to create synthetic variables which improve the grouping ability of the data; to arrive at a similar objective by discarding irrelevant variables; or to determine the most parsimonious variables for graphical representation.

=== Fisher Score ===

Fisher Discriminant Analysis should be distinguished from the Fisher score. The Fisher score is a means by which we can evaluate the importance of each feature in a binary classification task. The Fisher score of feature <math>\ i</math>, or <math>\ FS_i</math> for short, is

<math>FS_i=\frac{(\mu_i^1-\mu_i)^2+(\mu_i^2-\mu_i)^2}{var_i^1+var_i^2}</math>

where <math>\ \mu_i^1</math> and <math>\ \mu_i^2</math> are the averages of feature <math>\ i</math> in classes 1 and 2 respectively, <math>\ \mu_i</math> is the average of feature <math>\ i</math> over both classes, and <math>\ var_i^1</math> and <math>\ var_i^2</math> are the variances of feature <math>\ i</math> in classes 1 and 2 respectively.

We can compute the FS for every feature and then select the features with the highest scores. We want features that discriminate as much as possible between the two classes while describing each class as densely as possible; this is exactly the criterion behind the Fisher score, whose numerator grows as the class means move apart and whose denominator shrinks as each class becomes more compact.
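A minimal MATLAB sketch of computing the Fisher score for every feature and ranking the features, assuming a hypothetical two-class data set (X and y are made up) and using the unbiased (n-1) variance estimate for <math>var_i^1</math> and <math>var_i^2</math>.

```matlab
% Hypothetical two-class data: rows are samples, columns are features
X = [1.0 5.0 0.2;
     1.2 3.0 0.4;
     0.8 4.0 0.3;
     3.0 4.5 0.5;
     3.2 3.5 0.7;
     2.8 4.0 0.6];
y = [1; 1; 1; 2; 2; 2];

mu  = mean(X, 1);                 % mean of each feature over both classes
mu1 = mean(X(y == 1, :), 1);      % per-class feature means
mu2 = mean(X(y == 2, :), 1);
v1  = var(X(y == 1, :), 0, 1);    % per-class feature variances
v2  = var(X(y == 2, :), 0, 1);

FS = ((mu1 - mu).^2 + (mu2 - mu).^2) ./ (v1 + v2);
[~, ranking] = sort(FS, 'descend');
disp(FS)        % approximately 25, 0, 2.25
disp(ranking)   % expected: 1 3 2
```

Feature 2 scores zero because its class means coincide, even though it has the largest spread of the three features.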

===References===

1. Optimal Fisher discriminant analysis using the rank decomposition [http://www.sciencedirect.com/science?_ob=ArticleURL&_udi=B6V14-48MPMK5-14R&_user=10&_coverDate=01%2F31%2F1992&_rdoc=1&_fmt=high&_orig=search&_origin=search&_sort=d&_docanchor=&view=c&_searchStrId=1550315473&_rerunOrigin=scholar.google&_acct=C000050221&_version=1&_urlVersion=0&_userid=10&md5=b8b00da9ab59b76a40eca456f5aa99b6&searchtype=a]

2. Face recognition using Kernel-based Fisher Discriminant Analysis [http://ieeexplore.ieee.org/xpl/freeabs_all.jsp?arnumber=1004157]

3. Fisher discriminant analysis with kernels [http://ieeexplore.ieee.org/xpl/freeabs_all.jsp?arnumber=788121]

4. Fisher LDA and Kernel Fisher LDA [http://www.ics.uci.edu/~welling/classnotes/papers_class/Fisher-LDA.pdf]

5. Previous STAT 841 notes [http://www.math.uwaterloo.ca/~aghodsib/courses/f07stat841/notes/lecture7.pdf]

6. Another useful PDF introducing FDA [http://www.cedar.buffalo.edu/~srihari/CSE555/Chap3.Part6.pdf]

==Random Projection==

Random Projection (RP) is an approach to projecting a point from a high-dimensional space to a lower-dimensional space. In general, a target subspace, represented by a uniform random orthogonal matrix, is determined first, and the projected vector can be described as <math>v = c \cdot p \cdot u</math>, where u is a d-dimensional vector, p is the uniform random orthogonal matrix with d' rows and d columns, v is the projected d'-dimensional vector, and c is a scaling factor such that the expected squared length of v equals the squared length of u. Vectors projected by RP have two main properties:

1. The distance between any two of the original vectors is approximately equal to the distance between their corresponding projected vectors.