stat441w18/Image Question Answering using CNN with Dynamic Parameter Prediction: Difference between revisions

| Line 156: | Line 156: | ||

The structure of the dynamic parameter prediction network is a series of GRU units followed by a single fully connected layer. GRU units are well suited to sentence interpretation tasks given their internal state component that considers earlier words in the sentence when encoding later words. GRUs were selected over LSTM units as a result of the findings of the 2014 [https://arxiv.org/pdf/1412.3555.pdf paper] "Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modelling" by Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, Yoshua Bengio. The paper confirmed that more advanced recurrent units perform better at sequential tasks than simpler, traditional units. It also compared the recently proposed GRU units to the more broadly used LSTM units. In summary, it was found that GRU units perform similarly to LSTM units despite their simpler structure. This motivates the authors to use GRUs over LSTMs. However, the authors note that non-recurrent networks could be used to build the parameter prediction network as the pre-trained sentence embedding model (skip-thought vector model) has a GRU based structure (discussed below). | The structure of the dynamic parameter prediction network is a series of GRU units followed by a single fully connected layer. GRU units are well suited to sentence interpretation tasks given their internal state component that considers earlier words in the sentence when encoding later words. GRUs were selected over LSTM units as a result of the findings of the 2014 [https://arxiv.org/pdf/1412.3555.pdf paper] "Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modelling" by Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, Yoshua Bengio. The paper confirmed that more advanced recurrent units perform better at sequential tasks than simpler, traditional units. It also compared the recently proposed GRU units to the more broadly used LSTM units. In summary, it was found that GRU units perform similarly to LSTM units despite their simpler structure. This motivates the authors to use GRUs over LSTMs. However, the authors note that non-recurrent networks could be used to build the parameter prediction network as the pre-trained sentence embedding model (skip-thought vector model) has a GRU based structure (discussed below). | ||

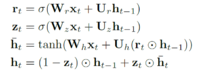

In this model, the internal states are updated using the following update rules: | |||

[[File:Update.png|200px|centre]] | |||

Where <math>r_t</math> is the reset gate, <math>z_t</math> is the update gate, <math>h_t</math> is the hidden state, <math>\bar{h_t}</math> is the candidate activation and <math>\bigodot</math> indicates element wise multiplication. Note that the W and U matrices are parameters learned during training. | |||

== Hashing == | == Hashing == | ||

Revision as of 09:31, 15 March 2018

Image Question Answering using CNN with Dynamic Parameter Prediction

Presented by

Rosie Zou, Kye Wei, Glen Chalatov, Ameer Dharamshi

Introduction

Problem Setup

Historically, computer vision has been an incredibly challenging, yet equally important task for researchers. The ultimate goal of computer vision is to develop a system that can achieve a holistic understanding of an image to extract meaningful insights. After many years with limited progress towards this goal, the field had fallen out of the spotlight. However, the past decade has seen a resurgence of interest in computer vision research due to breakthroughs in deep learning and advancements in computational capabilities. Recent advances in the recognition capabilities of such systems has paved the way for further advances towards our ultimate objective.

Image question answering (Image Q&A) is the next stage. Image Q&A is the task of asking a system various questions about an image. This extends beyond simple recognition and requires detecting many objects within an image as well as finding some level of context in order to answer the question. The core challenge of Image Q&A is that the set of objects to detect and the level of understanding required is dependent on the question being asked. If the question was static, the model could be optimized based on the question. A broader Image Q&A model must be flexible in order to adapt to different questions.

From a model perspective, the question being posed must be incorporated into the model and processed at some point. Previous methods tend to extract image features from the image and descriptors from the question and present the intersection of these two sets as the answer. Previous models incorporate the question directly as an independent variable when determining the answer. This is written as: ![]()

Where ‘ahat’ is the best predicted answer, ‘omega’ is the set of all possible answers ‘a’, ‘I’ is the image, ‘q’ is the question, and ‘theta’ is the model parameter vector.

The authors of this paper propose using the question to set certain model parameters and thus use the question directly when extracting information from the picture. This is written as ![]()

Where ‘ahat’, ‘a’, ‘I’ are the same as above but ‘thetas’ is the vector of static parameters and ‘thetad(q)’ is the vector of dynamic parameters determined by the question.

This approach hopes to adapt the model to the specifics of the question. Intuitively, it is determining the answer based on the question instead of seeking the common features of both the question and the answer.

Previous and Related Works

As mentioned in the earlier section, one of the major goals in computer vision is to achieve holistic understanding. While relatively new interest in the computer vision community, Image Question Answering already has a growing number of researchers working on this problem. There has been many past and recent efforts on this front, for instance this non-exhaustive list of papers published between 2015 and 2016 (NIPS 2015 paper, ICCV 2015 paper, AAAI 2016 paper). One key commonality in these papers is that most, if not all, of the recognition problems are defined in a simple, controlled environment with a finite set of objectives. While the question-handling strategies differ from paper to paper, a general problem-solving strategy in these papers is to use CNNs for feature extraction from images prior to handling question sentences.

In contrast, there has been less efforts in solving various recognition problems simultaneously, which is what researchers in Image Question Answering is trying to achieve. As mentioned previously, other than one paper (NIPS 2014 paper) which utilizes a Bayesian framework, the majority of the papers listed above generally propose an overall deep learning network structure, which performs very well on public bench marks but tends to fall apart when question complexity increases. The reason lies fundamentally in the complexity of the English language:

CNN + bag-of-words -- challenges from language representation

This strategy first uses CNNs to process the given image and extracts an array of image features along with their respective probabilities. Then, it uses semantic image segmentation and symbolic question reasoning to process the given questions. This would extract another set of features from the given question. After the question sentence is tokenized and processed, it then finds the intersection between the features present in the image and the features present in the question. This approach does not scale with complexity of the image or question, since neither one of them has a finite representation.

To further explain the issue with generalization, we need to discuss more about the complexity of natural languages. Renowned linguist and philosopher Noam Chomsky introduced the Chomsky Hierarchy of Grammars in 1956, which is a hierarchical venn diagram of formal grammars. In Chomsky’s hierarchy, languages are viewed as sets of strings ordered by their complexity, where the higher the language is in the hierarchy, the more complex it is. Such languages have higher generative and expressive power than languages in the lower end of the hierarchy. Additionally, there exists an inverse relationship between the level of restriction of phrase-structure rules and a language’s expressive powers: languages with more restricted phrase-structure rules have lower expressive powers.

Context-free languages and regular languages, which are in the lowest two levels in the hierarchy, can be expressed and coded using pushdown automaton and deterministic finite automaton. Visually, these two classes of languages can be expressed using parse trees and state diagrams. This means that given grammar and state transition rules, we can express all strings generated by those rules if the language is context-free or regular.

In contrast, English is not a regular language, thus cannot be represented using finite state automaton. While some argue that English is a context-free language and that grammatically correct sentences can be generated using parse trees, those sentences can easily be nonsensical due to the other important concern that is context. For instance, “ship breathes wallet” is a grammatically correct sentences but it has no practical meaning. Hence with our current knowledge in formal language theory, English cannot be represented using a finite set of rules and grammars.

Mathematical Background

CNNs

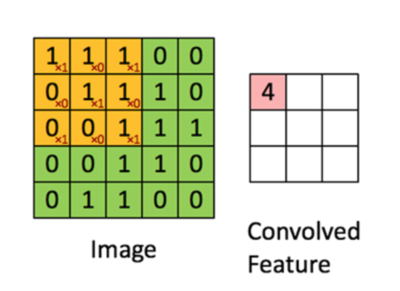

As a pre-requisite for this paper, we will discuss how CNNs use convolution to extract features from images as well as the general architecture of a CNN. Here, we describe the 3 step “convolution operation” used to extract information from the input image. Note, this can be applied in between layers as well, which will be explained below.

Step 1: Convolution

Consider a 5x5 pixel image. For simplicities sake, let’s assume that the pixel values can only take on values 0 or 1. In reality they could take on many values based on the colour scheme, but the operation will be the same. The yellow piece overlaying one part of the image is called a “filter”, and is used to derive the value in the convolved feature. For example: 1*1 + 1*0 + 1*1 + 0*0 + 1*1 + 1*0 + 0*1 + 0*0 + 1*1 = 4. In this example, we set our “stride” parameter to 1. That is, we move the filter matrix to the right by 1 to get the (2,1) element for our convolved feature matrix. Likewise, we move our filter down one to get the (1,2) element of the convolved matrix. A similar operation can be applied to vectors with an nx1 filter.

Step 2: Non-linearity

Next, we apply a non-linear function to the elements of the Convolved Feature in order to account for non-linearity. For example, we might take the function max(element,0) and apply it to the matrix

Step 3: Pooling

In the final step, we divide our convolved matrix into sub-matrices and apply sum summary function to that sub-matrix. For example, we can take the max, min or sum of elements in the sub-matrix as an element in our final matrix.

At the end of this 3 step process, we would have developed a standard vector of features that would then travel through “fully connected layers”. Note that the pooling step is not entirely necessary, but it does help in developing equivariant representations of our image accounting for small distortions in images. The structure with these layers would either be that of a FFNN layer or another “convolution layer”, where the above mentioned 3 step process is applied again to the inputs as the operation for that layer. Below we describe how one would go about training a CNN with Backpropagation.

Backpropagation and Gradient Calculation for Various CNN Components

Backpropagation is the typical method used to train neural networks. It is an extended, systematic application of chain rule, where the the weights of a network are tuned in a series of iterations through adding a multiple of the gradient of the error function w.r.t the weights, to the weights themselves. This corresponds to finding the best direction of change for a neural network’s weights that will result in a locally optimal decrease in the error metric.

Typically, a calculation of the gradient for weights is broken down into the multiplication of individual components of the path from the weight to the final error function, something like E/w = E/a a/z z/w. Backpropagation yields chained gradient (matrix) multiplications, so it is important to know the gradient calculation for each component, whether it is convolution, pooling, matrix multiplication (found in dense layers).

In a typical CNN, there are many different components, and each component warrants its own backpropagation calculation.

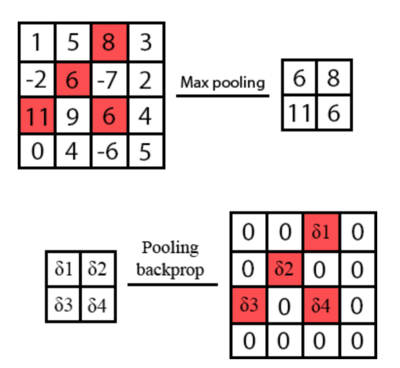

For pooling, lets say 2-d max pooling with stride 2, for example, the output is a multidimensional version of the max of a 2x2 input block. Thus, the backprop step through a max pool layer is simply 1 for whichever input in the 2x2 block is highest, and 0 for the rest. Thus it serves as a selective passthrough for the gradient at certain locations.

(Image from Medium post)

For typical activation like ReLU, which is a multidimensional (elementwise) y=max(0,x), the gradient through the component is simply 1 if x > 0 and 0 otherwise, and is again a selective passthrough of the gradient at certain locations.

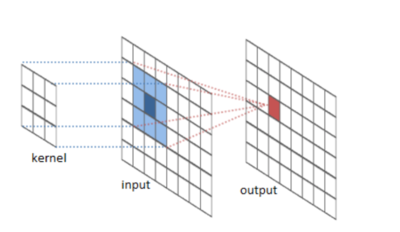

For a convolution layer, assuming 2D convolution, and that input dimension are of dimensions (h,w,c), i.e. height, width, and # of channels, and the output being (h’, w’, k), i.e. output height, width, and # of filters, we know that the the position x,y of the output of filter k is the convolution of a kernel-shaped block of input against the same sized kernel filter (which means to elementwise multiply, and sum across all elements). Thus, the gradient of the layer output w.r.t the layer input is simply the a matrix of copies of the weight and some zeros. Also, the gradient of the layer output w.r.t the weights is simply an aggregation of all the individual input activations that have been multiplied onto the weights in the convolution step.

(Image from Github)

Finally, for fully connected (i.e. dense) layers, the output of a layer is simply a matrix multiplication of the inputs. Thus the gradient of the output w.r.t the input is the weight matrix itself. The gradient of the output w.r.t. Weights are the input activations that have touched the respective elements of the weight matrix during the multiplication step, which is an aggregation of the inputs of the layer.

Through the individual gradients of each component, we can use chain rule to build the gradient of the error w.r.t all weights, and apply these gradients using typical first-order optimization methods like stochastic gradient descent, and variants thereof.

RNNs and GRUs

In addition to CNNs, an understanding of recurrent neural networks (RNNs) is necessary to understand the question component of the Image Q&A process. Standard artificial neural networks (ANNs) and CNNs do not imply an ordering of their input data and produce the same output regardless of the order of the input. However, many data sets such as sentences and videos do have an implicit ordering and thus retaining information of previous iterations is useful in predicting the next step. RNNs are designed to handle sequences of inputs by retaining information of previous time steps using an internal state vector at each node. Each step conditions on the existing internal state along with the current input when determining the output as well as updating the internal state for the next time step.

The most basic RNN structure retains the output of each layer as the internal state at the end iteration. The network structure remains unchanged, only the structure of each node changes. This recurrence connects the elements of the sequence and creates the dependency. The actual network structure remains unchanged when compared to an ANN.

In comparison with a standard ANN, consider a sequence of inputs [math]\displaystyle{ x^{(1)},x^{(2)},...,x^{(n)} }[/math] . The standard ANN update rule for each layer i is:

[math]\displaystyle{ x_i^{(k)}=\sigma(b_i+W_ix_{i-1}^{(k)}) }[/math]

Where b is the bias, W is the weight matrix and k is the current input in the sequence.

The basic RNN update rule for layer i is as follows:

[math]\displaystyle{ x_i^{(k)}=\sigma(b_i+W_ix_{i-1}^{(k)}+R_ix_i^{(k-1}) }[/math]

Where R is the recurrence matrix that relates the current input to the previous input.

A natural extension of the above model involves connecting the current layer to the output of each previous iteration. In practice, this structure is unsuccessful in that it does not facilitate long time lag dependencies (steps in the sequence that rely on far earlier steps) very well. During training, this structure often results in exploding or vanishing gradients thus eliminating the desired recurrence properties. To combat such this phenomenon, multiple paradigms have been proposed. We will focus on Long Short-Term Memory (LSTM) and Gated Recurrent Units (GRU).

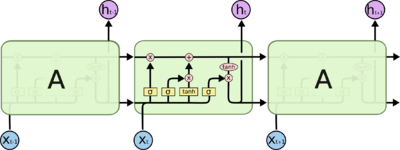

LSTM

The LTSM paradigm replaces the basic recurrent node discussed above with an LSTM unit that controls the information being retained and lost at each iteration of the network using gates. The process is as follows:

1. The forget gate activates based on the prior internal state and the current input to reduce the information passed forward from the prior state.

2. The input gate activates based on the prior internal state and the current input to create a vector of indicators that determine which elements of the state vector will be updated.

3. New memory is generated based on the prior internal state and the current input. This is then added to the post-forget gate internal state vector to determine the new internal state.

4. Lastly, the updated internal state is filtered as not all the information contained in the internal state is needed at this point. This filtered vector is outputted to the next unit and the new memory is retained for the next use of the unit.

The following image shows a 3-stage LTSM chain along with the gates.

(image from GitHub)

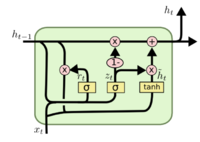

GRU

GRU units are similar to LSTM units in that they control the changes in internal state using gates but GRUs use a simpler model. A GRU unit combines the forget and input gates into a reset gate that determines the contribution of the prior state to the new memory generated. After the new memory is generated, it is passed along with the prior state to the update gate which determines the contribution of each to the new memory. This new memory is both the output and the new internal state. LSTM, in comparison, had different vectors for output and internal state. The specifics of the update rules in the context of this Image Q&A implementation are discussed below under the Model section.

The following image shows a single GRU unit with its gates.

(image from GitHub)

Model

CNN Architecture & VGG

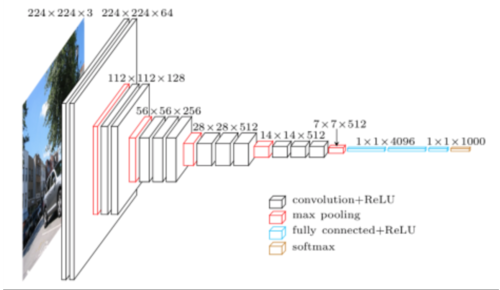

The network uses a variant of the VGG series of convolutional neural networks as proposed in the 2015 paper by Karen Simonyan & Andrew Zisserman, titled “Very Deep Convolutional Networks for Large-scale Image Recognition”. This was an important paper at the time, since it suggested that state-of-the-art results on image classification (through benchmarks like ImageNet) can be achieved with relatively small kernel sizes, but deep networks, along with a uniform structure, whereas previous CNN’s often had extended customization of kernel sizes and structures. Also, it showed that Local Response Normalization, as originally described in the AlexNet Paper from 2012 “ImageNet Classification with Deep Convolutional Neural Networks” by Alex Krizhevsky et al did not necessarily increase classification performance.

The intuition here is that not all network layers need to be spatially pooled afterwards, and that instead of a large convolution kernel layer, we have stacked small convolution layers. For example, 2 stacked 3x3 convolution layers has an effective size receptive field of 5x5, 3 stacked having 7x7, and so on. However, stacked layers of 3x3 have increased nonlinearity w.r.t their larger kernel (5x5,7x7, etc) counterparts. Thus, VGG uses 3x3 convolution kernels and layers, which spatial pooling only every few layers, pooling for a group of stacked 3x3 layers.

VGG16 in particular, which is used in this paper, has 16 layers (3 being the final densely connected layers), with doubling amounts of filters for each successive group of 3x3 filters, as the spatial pooling at the end of each group halves the dimensions of each layer. Visualization below:

(Image source at UofT CS)

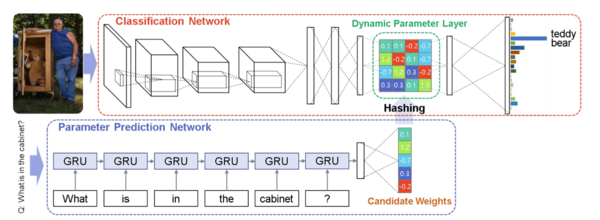

In this paper, we modify the default VGG16 net to remove the very last classification layer, and add 3 more layers, the middle one being the dynamic parameter layer. The weights from the dynamic parameter layer come from hashed weights from the Parameter Prediction Network. The dynamic parameters are added in the middle since this layer can be the smallest, and is sandwiched between the layer that would be the classification layer of the original ImageNet-tuned network and the now resulting classification layer that gives us the results we want.

(Network architecture used in this paper. Image from Figure 2 paper)

Parameter Prediction Network

Recall from the problem formulation discussion that some of the parameters of the model are determined based on the question being posed. This is accomplished using a dynamic parameter prediction model. The question is fed into this second network close and the output weights are then fed into the main model. This step is discussed in more detail below.

The structure of the dynamic parameter prediction network is a series of GRU units followed by a single fully connected layer. GRU units are well suited to sentence interpretation tasks given their internal state component that considers earlier words in the sentence when encoding later words. GRUs were selected over LSTM units as a result of the findings of the 2014 paper "Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modelling" by Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, Yoshua Bengio. The paper confirmed that more advanced recurrent units perform better at sequential tasks than simpler, traditional units. It also compared the recently proposed GRU units to the more broadly used LSTM units. In summary, it was found that GRU units perform similarly to LSTM units despite their simpler structure. This motivates the authors to use GRUs over LSTMs. However, the authors note that non-recurrent networks could be used to build the parameter prediction network as the pre-trained sentence embedding model (skip-thought vector model) has a GRU based structure (discussed below).

In this model, the internal states are updated using the following update rules:

Where [math]\displaystyle{ r_t }[/math] is the reset gate, [math]\displaystyle{ z_t }[/math] is the update gate, [math]\displaystyle{ h_t }[/math] is the hidden state, [math]\displaystyle{ \bar{h_t} }[/math] is the candidate activation and [math]\displaystyle{ \bigodot }[/math] indicates element wise multiplication. Note that the W and U matrices are parameters learned during training.

Hashing

Generating the weight matrix in the dynamic parameter layers can be a computationally challenging task, since it depends on the number of parameters. Between the GRU and the fully-connected layer in the parameter prediction network, we need quadratically more parameters in order to increase the dimensionality of the prediction layer’s output. All this means that the weight matrix dimensions would blow up during the network training process. The most straightforward way to reduce the size of W is to reduce the number if inputs. However, the network could be overfitted if we were to keep the weight matrix small by limiting the number of training examples. Hence the authors of this paper are using a recent hashing trick introduced by Chen et al. that “folds” the neural network.

A quick background on hashing and direct addressing:

In a comparison-based model, Ω(log(n)) time is required to search a size-n dictionary. Direct addressing reduces the running time to O(1) by storing k keys, 0 ≤ k < M, in an array of size M. Hence the search operation is equivalent to indexing, which is constant assuming it takes a constant (and most likely negligible) amount of time to access any element in the array. Hashing is an improvement on direct addressing in the case when Direct Addressing can be space-inefficient with k << M. For keys in the universe U, a hash function h mapps the keys to an element from the set {1...M} -- i.e. h(k) ∈ {1...M}, ∀ k ∈ U. This allows for space-efficient direct addressing.

The hashing “trick” used for dimensionality reduction in this paper is a novel network architecture called “HashNets”, which as previously stated was published in this 2015 paper. It uses a low-cost hash function to randomly group connection weights into slots in the hash table, and all connections within the same slot share a single parameter value. Then, during the training process, these parameters are tuned to adjust to the HashNets weight sharing architecture with standard backpropagation. The hashing procedure introduces no additional memory overhead, and this strategy has been shown to substantially reduce the storage requirements for neural nets while mostly preserving generalization performance.

Weight sharing is implemented in the model by allowing a single parameter in the candidate weight vector p to be shared by multiple elements of W_d(q). This can be done using a pre-defined hash function that converts the 2-dimensional location in W_d(q) to a 1-dimensional index in p. As mentioned in Chen et al.’s paper, this dimensionality reduction technique maintains the accuracy of the network.

Let w^d_{mn} be the element at position (m,n) in the weight matrix W_d(q), which corresponds to the weight between the m-th output neuron and the n-th input neuron. The hashing function used in this paper is defined to be ψ(m,n) : (m,n) -> {1...K} where K = dim(p). More specifically: w^d_{mn} = p_{ψ(m,n)} • ε(m,n), where ε(m,n): N x N -> {+1, -1} is a second, independent hashing function used to remove the bias of the hashed inner product (more details on this bias term can be found in the original paper by Chen et al.).

The number of free parameters is reduced during this hashing process, which the authors believed to be reasonable since there are many redundant parameters in deep neural networks (as shown inthis 2013 NIPS paper) and that it is possible to parameterize a network using a smaller set of candidate weights. Once these candidate weights have been computed, we simply map them to the dynamic parameter layer’s weight matrix positions by applying the hash function to the weight matrix positions.

Training and Results

Training

Usage of Pre-trained VGG Net

For the CNN, pretrained weights from a VGG16 network trained on ImageNet are imported for the layers transferred from the VGG16. However, since we have added 3 new layers and removed the original last layer, weights need to be fine tuned. However, the dynamic prediction layer has weights that are predicted from the RNN, and is reported to be too volatile to directly train the entire network using backpropagation, so the paper suggests that in the beginning, the weights of the original network are frozen while only the new layers are fine-tuned/trained, and upon the validation error saturating, the entire network is then fine-tuned/trained.

The Adam optimizer (Adaptive momentum) is used with learning rate of 0.01, along with the modification of a clipped gradient at 0.1 (gradient will be min(x,0.1)). This is due to the fact that the volatility and potential significant change of weights in the dynamic prediction layer can hinder learning, and this diminishes its effect.

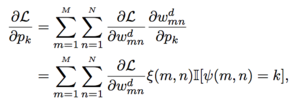

The gradient through the dynamic parameter layer is the same as the gradient through any typical dense layer, except that to associate the dynamic parameter layer weights with the original RNN output, we calculate the RNN output gradient as the sum across the gradients for all locations in the weight matrix for a particular RNN output.

The paper describes it as so:, where p_k is the RNN output, and w_mn is the projected hashed parameter in the dynamic parameter layer - and the sum across all associated locations is indicated by the Indicator function below:

(Image from formula(13) in the original paper)

Usage of Pre-trained GRUs

Comparing with other well-researched machine learning tasks that have a lot of available data collected, Image Question Answering has a relatively small amount of usable language data. For instance, a similar task undertaken in this 2015 ICCV paper only had approximately 750,000 questions to work with, which is an insufficiently low number given the scope of the task at hand. The authors of this paper used pre-trained Gated Recurrent Units (GRUs) to augment linguistic information in Image Question Answering.

Gated Recurrent Units (GRUs) are a gating mechanism in recurrent neural networks and has applications in polyphonic music modeling and speech signal modeling. GRUs used in this paper are initialized with the skip-thought vector model which is a sentence encoder, and trained on a corpus of 74 million sentences. Note that this initialization scheme is a fairly generic sentence embedding model. The GRUs are then trained in an unsupervised environment so that it predicts meanings of surrounding sentences based on the embedded sentences. Since the GRUs are initialized with a generic sentence embedding and fine-tuned in an unsupervised manner, the authors claim to obtain better generalizations for question representation.

In short, the usage of pre-trained GRUs on a large corpus of text allows for better generalization of question representation, which is necessary since the text corpus currently available for Image Q&A is not enough to train a model that is capable of high-level generalization.

Results

Scoring Methods

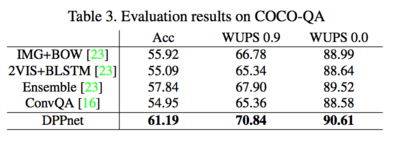

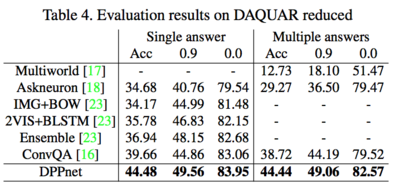

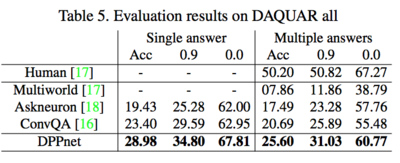

In this paper, testing was done across three different datasets, those being VQA (Visual Question Answering Dataset), DAQUAR and COCO-QA respectively.

When evaluating performance for the DAQUAR and COCO-QA datasets, scoring was performed using the WUPS measure, while scoring for the VQA dataset was done by comparing ImageQA performance to human consensus. Further details on the scoring metric can be found in This Paper. In a general sense, the metric considers the similarity between the total set of answers provided by the model and the total set of labels provided.

In the VQA dataset, each picture-question input is associated with ten answers (collected in surveys done with people). A predicted answer as classified as correct if it matches at least three of the collected responses and in this case is given a score of 1. If the predicted response matches less than three answers, then its assigned score is given by: ![]() . Finally, the average score across all observations is calculated as the accuracy of the model. This scoring method is referred to in the following section as AccVQA.

. Finally, the average score across all observations is calculated as the accuracy of the model. This scoring method is referred to in the following section as AccVQA.

Results

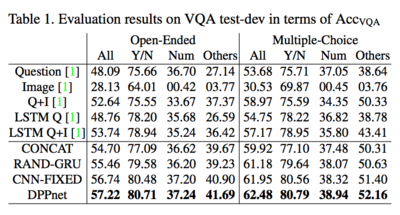

Note: all tables are from the original paper

The preceding table is strong support for why each key piece of the DPPnet architecture provides value. The CNN-FIXED model is the exact same as DPPnet, except for the fact that it does not fine tune CNN parameters. Thus, the incremental accuracy here comes from the fine tuning procedure. The RAND-GRU model is CNN-FIXED without pre-trained GRUs. The incremental accuracy between RAND-GRU and CNN-FIXED is derived from pre-training our GRUs, as explained before. Finally, the CONCAT model is the RAND-GRU model without the dynamically predicted layer, and so the incremental accuracy is derived from this new intermediary layer. The models above CONCAT are models proposed earlier to do the same task. As evident from the table, each key piece of the network adds accuracy, with the accuracy improvement; after all three parts have been implemented, sitting at around 3%.

The tables below are a comparison of DPPnet against all other algorithms that have been tested on the specified datasets. DPPnet outperforms all other previously tested models across all tests.