stat341f11

Editor Sign Up

Sampling - Sept 20, 2011

The meaning of sampling is to generate data points or numbers such that these data follow a certain distribution.

i.e. From [math]\displaystyle{ x \sim~f(x) }[/math] sample [math]\displaystyle{ \,x_{1}, x_{2}, ..., x_{1000} }[/math]

In practice, it maybe difficult to find the joint distribution of random variables. Through simulating the random variables, we can inference from the data.

Sampling from uniform distribution.

Computers can't generate random numbers as they are deterministic, but they can produce pseudo random numbers. Pseudo random numbers are numbers that mimic the properties of random numbers, but are generated using algorithms. Therefore, they are not truly random.

Multiplicative Congruential

- involves three parameters: integers [math]\displaystyle{ \,a, b, m }[/math], and an initial value [math]\displaystyle{ \,x_0 }[/math] we call the seed

- a sequence of integers is defined as

- [math]\displaystyle{ x_{k+1} \equiv (ax_{k} + b) \mod{m} }[/math]

Example: [math]\displaystyle{ \,a=13, b=0, m=31, x_0=1 }[/math] creates a uniform histogram.

MATLAB code for generating 1000 random numbers using the multiplicative congruential method:

a = 13;

b = 0;

m = 31;

x(1) = 1;

for ii = 2:1000

x(ii) = mod(a*x(ii-1)+b, m);

end

MATLAB code for displaying the values of x generated:

x

MATLAB code for plotting the histogram of x:

hist(x)

Facts about this algorithm:

- In this example, the first 30 terms in the sequence are a permutation of integers from 1 to 30 and then the sequence repeats itself.

- Values are between 0 and [math]\displaystyle{ m-1 }[/math], inclusive.

- Dividing the numbers by [math]\displaystyle{ m-1 }[/math] yields numbers in the interval [math]\displaystyle{ [0,1] }[/math].

- MATLAB's

randfunction once used this algorithm with [math]\displaystyle{ a = 7^5, b = 0, m = 2^{31}-1 }[/math], for reasons described in Park and Miller's 1988 paper "Random Number Generators: Good Ones are Hard to Find" (available online). - Visual Basic's

RNDfunction also used this algorithm with [math]\displaystyle{ a = 1140671485, b = 12820163, m = 2^{24} }[/math] (Reference)

Inverse Transform Method

This is a basic method for sampling. Theoretically using this method we can generate sample numbers at random from any probability distribution once we know its cumulative distribution function (cdf).

Theorem

Take [math]\displaystyle{ U \sim~ \mathrm{Unif}[0, 1] }[/math] and let [math]\displaystyle{ X=F^{-1}(U) }[/math]. Then X has distribution function [math]\displaystyle{ F(\cdot) }[/math], where [math]\displaystyle{ F(x)=P(X \leq x) }[/math].

Let [math]\displaystyle{ F^{-1}( ) }[/math] denote the inverse of [math]\displaystyle{ F( ) }[/math]. Therefore [math]\displaystyle{ F(x)=u \implies x=F^{-1}(u) }[/math]

Proof

Recall that

- [math]\displaystyle{ P(a \leq X\lt b)=\int_a^{b} f(x) dx }[/math]

- [math]\displaystyle{ cdf=F(x)=P(X \leq x)=\int_{-\infty}^{x} f(x) dx }[/math]

Note that if [math]\displaystyle{ U \sim~ \mathrm{Unif}[0, 1] }[/math], we have [math]\displaystyle{ P(U \leq a)=a }[/math]

- [math]\displaystyle{ \begin{align} P(F^{-1}(U) \leq x) &{}= P(F(F^{-1}(U)) \leq F(x)) \\ &{}= P(U \leq F(x)) \\ &{}= F(x) \end{align} }[/math]

Completing the proof.

Continuous Case

Generally it takes two steps to get random numbers using this method.

- Step 1. Draw [math]\displaystyle{ U \sim~ \mathrm{Unif}[0, 1] }[/math]

- Step 2. [math]\displaystyle{ X=F^{-1}(U) }[/math]

Example

Take the exponential distribution for example

- [math]\displaystyle{ \,f(x)={\lambda}e^{-{\lambda}x} }[/math]

- [math]\displaystyle{ \,F(x)=\int_0^x {\lambda}e^{-{\lambda}u} du=1-e^{-{\lambda}x} }[/math]

Let: [math]\displaystyle{ \,F(x)=y }[/math]

- [math]\displaystyle{ \,y=1-e^{-{\lambda}x} }[/math]

- [math]\displaystyle{ \,ln(1-y)={-{\lambda}x} }[/math]

- [math]\displaystyle{ \,x=\frac{ln(1-y)}{-\lambda} }[/math]

- [math]\displaystyle{ \,F^{-1}(x)=\frac{-ln(1-x)}{\lambda} }[/math]

Therefore, to get a exponential distribution from a uniform distribution takes 2 steps.

- Step 1. Draw [math]\displaystyle{ U \sim~ \mathrm{Unif}[0, 1] }[/math]

- Step 2. [math]\displaystyle{ x=\frac{-ln(1-U)}{\lambda} }[/math]

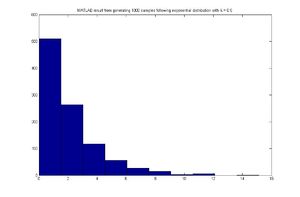

MATLAB code

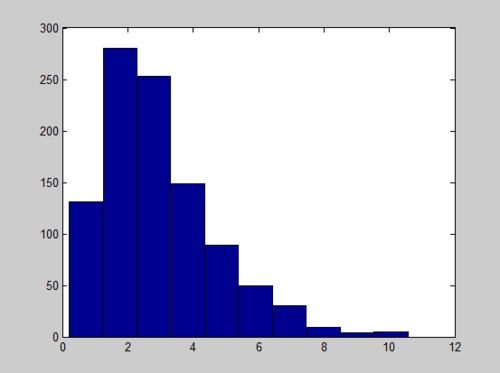

for exponential distribution case,assuming [math]\displaystyle{ \lambda=0.5 }[/math]

for ii = 1:1000

u = rand;

x(ii) = -log(1-u)/0.5;

end

hist(x)

MATLAB result

Discrete Case-Sept 22, 2011

This same technique can be applied to the discrete case. Generate a discrete random variable [math]\displaystyle{ \,x }[/math] that has probability mass function [math]\displaystyle{ \,P(X=x_i)=P_i }[/math] where [math]\displaystyle{ \,x_0\lt x_1\lt x_2... }[/math] and [math]\displaystyle{ \,\sum_i P_i=1 }[/math]

- Step 1. Draw [math]\displaystyle{ u \sim~ \mathrm{Unif}[0, 1] }[/math]

- Step 2. [math]\displaystyle{ \,x=x_i }[/math] if [math]\displaystyle{ \,F(x_{i-1})\lt u \leq F(x_i) }[/math]

Example

Let x be a discrete random variable with the following probability mass function:

- [math]\displaystyle{ \begin{align} P(X=0) = 0.3 \\ P(X=1) = 0.2 \\ P(X=2) = 0.5 \end{align} }[/math]

Given the pmf, we now need to find the cdf.

We have:

- [math]\displaystyle{ F(x) = \begin{cases} 0 & x \lt 0 \\ 0.3 & 0 \leq x \lt 1 \\ 0.5 & 1 \leq x \lt 2 \\ 1 & 2 \leq x \end{cases} }[/math]

We can apply the inverse transform method to obtain our random numbers from this distribution.

Pseudo Code for generating the random numbers:

Draw U ~ Unif[0,1]

if U <= 0.3

x = 0

else if

0.3 < U <= 0.5

x = 1

else

0.5 < U <= 1

x = 2

MATLAB code for generating 1000 random numbers in the discrete case:

for ii = 1:1000

u = rand;

if u <= 0.3

x(ii) = 0;

else if u <= 0.5

x(ii) = 1;

else

x(ii) = 2;

end

end

Pseudo code for the Discrete Case:

1. Draw U ~ Unif [0,1]

2. If [math]\displaystyle{ U \leq P_0 }[/math], deliver [math]\displaystyle{ X = x_0 }[/math]

3. Else if [math]\displaystyle{ U \leq P_0 + P_1 }[/math], deliver [math]\displaystyle{ X = x_1 }[/math]

4. Else If [math]\displaystyle{ U \leq P_0 +....+ P_k }[/math], deliver [math]\displaystyle{ X = x_k }[/math]

Limitations

Although this method is useful, it isn't practical in many cases since we can't always obtain [math]\displaystyle{ F }[/math] or [math]\displaystyle{ F^{-1} }[/math] as some functions are not integrable or invertible, and sometimes even [math]\displaystyle{ f(x) }[/math] itself cannot be obtained in closed form. Let's look at some examples:

- Continuous case

If we want to use this method to draw the pdf of normal distribution, we may find ourselves get stuck in finding its cdf. The simplest case of normal distribution is [math]\displaystyle{ f(x)=\frac{1}{\sqrt{2\pi}}e^{-\frac{x^2}{2}} }[/math], whose cdf is [math]\displaystyle{ F(x)=\frac{1}{\sqrt{2\pi}}\int_{-\infty}^{x}{e^{-\frac{u^2}{2}}}du }[/math]. This integral cannot be expressed in terms of elementary functions. So evaluating it and then finding the inverse is a very difficult task.

- Discrete case

It is easy for us to simulate when there are only a few values taken by the particular random variable, like the case above. And it is easy to simulate the binomial distribution [math]\displaystyle{ X \sim~ \mathrm{B}(n,p) }[/math] when the parameter n is not too large. But when n takes on values that are very large, say 50, it is hard to do so.

Acceptance/Rejection Method

The aforementioned difficulties of the inverse transform method motivates a sampling method that does not require analytically calculating cdf's and their inverses, which is the acceptance/rejection sampling method. Here, [math]\displaystyle{ f(x) }[/math] is approximated by another function, say [math]\displaystyle{ g(x) }[/math], with the idea being that [math]\displaystyle{ g(x) }[/math] is a "nicer" function to work with than [math]\displaystyle{ f(x) }[/math].

Suppose we assume the following:

1. There exists another distribution [math]\displaystyle{ g(x) }[/math] that is easier to work with and that you know how to sample from, and

2. There exists a constant c such that [math]\displaystyle{ f(x) \leq c \cdot g(x) }[/math] for all x

Under these assumptions, we can sample from [math]\displaystyle{ f(x) }[/math] by sampling from [math]\displaystyle{ g(x) }[/math]

General Idea

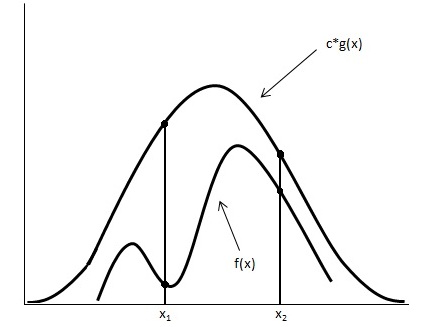

Looking at the image below we have graphed [math]\displaystyle{ c \cdot g(x) }[/math] and [math]\displaystyle{ f(x) }[/math].

Using the acceptance/rejection method we will accept some of the points from [math]\displaystyle{ g(x) }[/math] and reject some of the points from [math]\displaystyle{ g(x) }[/math]. The points that will be accepted from [math]\displaystyle{ g(x) }[/math] will have a distribution similar to [math]\displaystyle{ f(x) }[/math]. We can see from the image that the values around [math]\displaystyle{ x_1 }[/math] will be sampled more often under [math]\displaystyle{ c \cdot g(x) }[/math] than under [math]\displaystyle{ f(x) }[/math], so we will have to reject more samples taken at x1. Around [math]\displaystyle{ x_2 }[/math] the number of samples that are drawn and the number of samples we need are much closer, so we accept more samples that we get at [math]\displaystyle{ x_2 }[/math]

Procedure

1. Draw y ~ g

2. Draw U ~ Unif [0,1]

3. If [math]\displaystyle{ U \leq \frac{f(y)}{c \cdot g(y)} }[/math] then x=y; else return to 1

Proof

Mathematically, we need to show that the sample points given that they are accepted have a distribution of f(x).

[math]\displaystyle{ \begin{align} P(y|accepted) &= \frac{P(y, accepted)}{P(accepted)} \\ &= \frac{P(accepted|y) P(y)}{P(accepted)}\end{align} }[/math] (Bayes' Rule)

[math]\displaystyle{ P(y) = g(y) }[/math]

[math]\displaystyle{ P(accepted|y) =P(u\leq \frac{f(y)}{c \cdot g(y)}) =\frac{f(y)}{c \cdot g(y)} }[/math],where u ~ Unif [0,1]

[math]\displaystyle{ P(accepted) = \sum P(accepted|y)\cdot P(y)=\int^{}_y \frac{f(y)}{c \cdot g(y)}g(y) dy=\int^{}_y \frac{f(y)}{c} dy=\frac{1}{c} \cdot\int^{}_y f(y) dy=\frac{1}{c} }[/math]

So,

[math]\displaystyle{ P(y|accepted) = \frac{ \frac {f(y)}{c \cdot g(y)} \cdot g(y)}{\frac{1}{c}} =f(y) }[/math]

Continuous Case

Example

Sample from Beta(2,1)

In general:

Beta([math]\displaystyle{ \alpha, \beta) = \frac{\Gamma (\alpha + \beta)}{\Gamma(\alpha)\Gamma(\beta)} }[/math] [math]\displaystyle{ x^{\alpha-1} }[/math] [math]\displaystyle{ (1-x)^{\beta-1} }[/math], [math]\displaystyle{ 0\lt x\lt 1 }[/math]

Note: [math]\displaystyle{ \Gamma(n) = (n-1)! }[/math] if n is a positive integer

[math]\displaystyle{ \begin{align} f(x) &= Beta(2,1) \\ &= \frac{\Gamma(3)}{\Gamma(2)\Gamma(1)} x^1(1-x)^0 \\ &= \frac{2!}{1! 0!}\cdot (1) x \\ &= 2x \end{align} }[/math]

We want to choose [math]\displaystyle{ g(x) }[/math] that is easy to sample from. So we choose [math]\displaystyle{ g(x) }[/math] to be uniform distribution.

We now want a constant c such that [math]\displaystyle{ f(x) \leq c \cdot g(x) }[/math] for all x from (0,1)

So,

[math]\displaystyle{ c \geq \frac{f(x)}{g(x)} }[/math], for all x from (0,1)

[math]\displaystyle{ \begin{align}c &\geq max (\frac {f(x)}{g(x)}, 0\lt x\lt 1) \\

&= max (\frac {2x}{1},0\lt x\lt 1) \\

&= 2 \end{align} }[/math]

Now that we have c =2,

1. Draw y ~ g(x) => Draw y ~ Unif [0,1]

2. Draw u ~ Unif [0,1]

3. if [math]\displaystyle{ u \leq \frac{2y}{2 \cdot 1} }[/math] then x=y; else return to 1

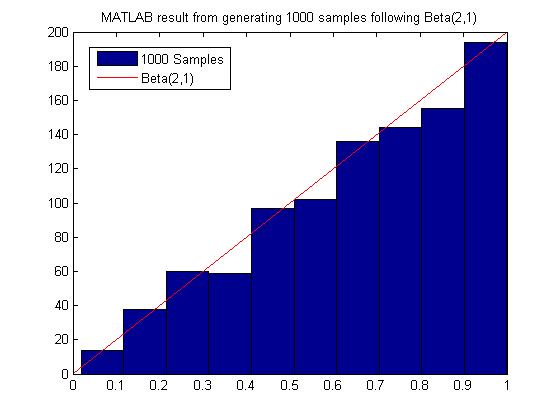

MATLAB code for generating 1000 samples following Beta(2,1):

close all

clear all

ii=1;

while ii < 1000

y = rand;

u = rand;

if u <= y

x(ii)=y;

ii=ii+1;

end

end

hist(x)

MATLAB result

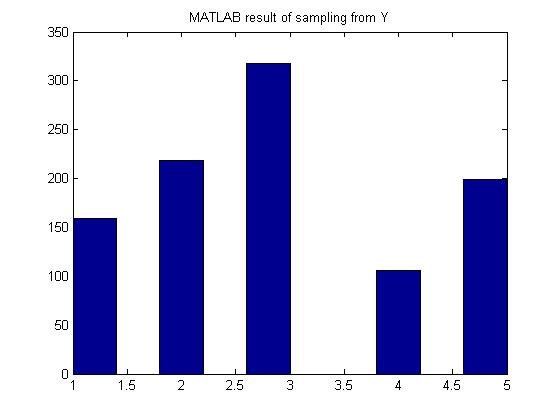

Discrete Example

Generate random variables according to the p.m.f:

- [math]\displaystyle{ \begin{align} P(Y=1) = 0.15 \\ P(Y=2) = 0.22 \\ P(Y=3) = 0.33 \\ P(Y=4) = 0.10 \\ P(Y=5) = 0.20 \end{align} }[/math]

find a g(y) discrete uniform distribution from 1 to 5

[math]\displaystyle{ c \geq \frac{P(y)}{g(y)} }[/math]

[math]\displaystyle{ c = \max \left(\frac{P(y)}{g(y)} \right) }[/math]

[math]\displaystyle{ c = \max \left(\frac{0.33}{0.2} \right) = 1.65 }[/math] Since P(Y=3) is the max of P(Y) and g(y) = 0.2 for all y.

1. Generate Y according to the discrete uniform between 1 - 5

2. U ~ unif[0,1]

3. If [math]\displaystyle{ U \leq \frac{P(y)}{1.65 \times 0.2} \leq \frac{P(y)}{0.33} }[/math], then x = y; else return to 1.

In MATLAB, the code would be:

py = [0.15 0.22 0.33 0.1 0.2];

ii =1;

while ii <= 1000

y = unidrnd(5);

u = rand;

if u <= py(y)/0.33

x(ii) = y;

ii = ii+1;

end

end

hist(x);

MATLAB result

Limitations

Most of the time we have to sample many more points from g(x) before we can obtain an acceptable amount of samples from f(x), hence this method may not be computationally efficient. It depends on our choice of g(x). For example, in the example above to sample from Beta(2,1), we need roughly 2000 samples from g(X) to get 1000 acceptable samples of f(x).

In addition, in situations where a g(x) function is chosen and used, there can be a discrepancy between the functional behaviors of f(x) and g(x) that render this method unreliable. For example, given the normal distribution function as g(x) and a function of f(x) with a "fat" mid-section and "thin tails", this method becomes useless as more points near the two ends of f(x) will be rejected, resulting in a tedious and overwhelming number of sampling points having to be sampled due to the high rejection rate of such a method.

Sampling From Gamma and Normal Distribution - Sept 27, 2011

Sampling From Gamma

Gamma Distribution

The Gamma function is written as [math]\displaystyle{ X \sim~ Gamma (t, \lambda) }[/math]

- [math]\displaystyle{ F(x) = \int_{0}^{\lambda x} \frac{e^{-y}y^{t-1}}{(t-1)!} dy }[/math]

If you have t samples of the exponential distribution,

[math]\displaystyle{ \begin{align} X_1 \sim~ Exp(\lambda)\\ \vdots \\ X_t \sim~ Exp(\lambda) \end{align}

}[/math]

The sum of these t samples has a gamma distribution,

- [math]\displaystyle{ X_1+X_2+ ... + X_t \sim~ Gamma (t, \lambda) }[/math]

- [math]\displaystyle{ \sum_{i=1}^{t} X_i \sim~ Gamma (t, \lambda) }[/math] where [math]\displaystyle{ X_i \sim~Exp(\lambda) }[/math]

method

We can sample the exponential distribution using the inverse transform method from previous class,

- [math]\displaystyle{ \,f(x)={\lambda}e^{-{\lambda}x} }[/math]

- [math]\displaystyle{ \,F^{-1}(u)=\frac{-ln(1-u)}{\lambda} }[/math]

- [math]\displaystyle{ \,F^{-1}(u)=\frac{-ln(u)}{\lambda} }[/math]

1 - u is the same as x since [math]\displaystyle{ U \sim~ unif [0,1] }[/math]

- [math]\displaystyle{ \begin{align} \frac{-ln(u_1)}{\lambda} - \frac{ln(u_2)}{\lambda} - ... - \frac{ln(u_t)}{\lambda} = x_1\\ \vdots \\ \frac{-ln(u_1)}{\lambda} - \frac{ln(u_2)}{\lambda} - ... - \frac{ln(u_t)}{\lambda} = x_t \end{align}

: }[/math]

- [math]\displaystyle{ \frac {-\sum_{i=1}^{t} ln(u_i)}{\lambda} = x }[/math]

MATLAB code for a Gamma(1,3) is

x = sum(-log(rand(1000,3)),2); hist(x)

And the Histogram of X follows a Gamma distribution with long tail:

R code for a Gamma(1,3) is

a<-apply(-log(matrix(runif(3000),nrow=1000)),1,sum); hist(a);

And the histogram is

Here is another histogram of Gamma coding with R

a<-apply(-log(matrix(runif(3000),nrow=1000)),1,sum); hist(a,freq=F); lines(density(a),col="blue"); rug(jitter(a));

Sampling From Normal Distribution

Box-Muller Transform - Sept 29, 2011

Procedure

1. Generate [math]\displaystyle{ u_1 }[/math] and [math]\displaystyle{ u_2 }[/math], two values sampled from a uniform distribution between 0 and 1.

2.

Set [math]\displaystyle{ R^2 = -2log(u_1) }[/math] so that [math]\displaystyle{ R^2 }[/math] is exponential with mean 1/2

Set [math]\displaystyle{ \theta = 2*\pi*u_2 }[/math] so that [math]\displaystyle{ \theta }[/math] ~ Unif[0, 2[math]\displaystyle{ \pi }[/math]]

3.

Set [math]\displaystyle{ X = R cos(\theta) }[/math]

Set [math]\displaystyle{ Y = R sin(\theta) }[/math]

Justification

Suppose we have X ~ N(0, 1) and Y ~ N(0, 1) where X and Y are independent normal random variables. The relative probability density function of these two random variables using Cartesian coordinates is:

[math]\displaystyle{ f(X, Y) = f(X) f(Y) = \frac{1}{\sqrt{2\pi}}e^{-x^2/2} \frac{1}{\sqrt{2\pi}}e^{-y^2/2} = \frac{1}{2\pi}e^{-(x^2+y^2)/2} }[/math]

In polar coordinates [math]\displaystyle{ R^2 = x^2 + y^2 }[/math], so the relative probability density function of these two random variables using polar coordinates is:

[math]\displaystyle{ f(R, \theta) = \frac{1}{2\pi}e^{-R^2/2} }[/math]

If we have [math]\displaystyle{ R^2 }[/math] ~ exp(1/2) and [math]\displaystyle{ \theta }[/math] ~ unif[0, 2[math]\displaystyle{ \pi }[/math]] we get an equivalent relative probability density function:

[math]\displaystyle{ f(R, \theta) = f(R) f(\theta) = \frac{1}{2}e^{-R^2/2} * \frac{1}{2\pi} = \frac{1}{4\pi}e^{-R^2/2} }[/math]

Therefore we can generate a point in polar coordinates using the uniform and exponential distributions, then convert the point to Cartesian coordinates and the resulting X and Y values will be equivalent to samples generated from N(0, 1).

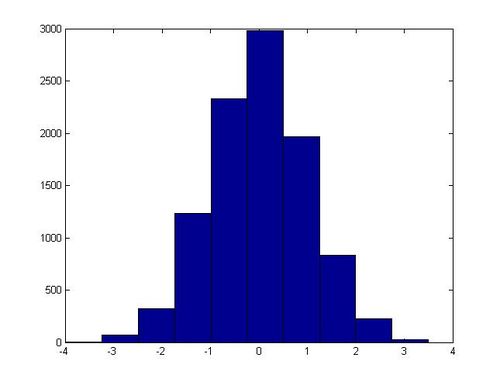

MATLAB code

In MatLab this algorithm can be implemented with the following code, which generates 20,000 samples from N(0, 1):

x = zeros(10000, 1);

y = zeros(10000, 1);

for ii = 1:10000

u1 = rand;

u2 = rand;

R2 = -2 * log(u1);

theta = 2 * pi * u2;

x(ii) = sqrt(R2) * cos(theta);

y(ii) = sqrt(R2) * sin(theta);

end

hist(x)

In one execution of this script, the following histogram for x was generated:

Non-Standard Normal Distributions

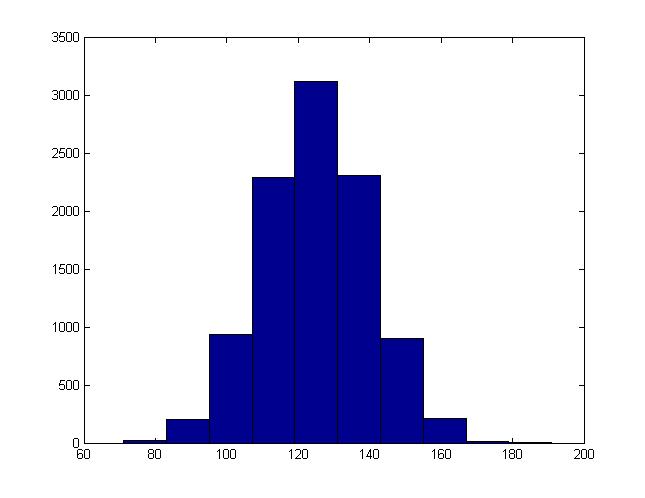

Example: single-variate normal

If X ~ Norm(0, 1) then (a + bX) has a normal distribution with a mean of a and a standard deviation of b (which is equivalent to a variance of [math]\displaystyle{ b^2 }[/math]). Using this information with the Box-Muller transform, we can generate values sampled from some random variable Y ~ Norm(a, [math]\displaystyle{ b^2 }[/math]) for arbitrary values of a and b.

1. Generate a sample u from Norm(0, 1) using the Box-Muller transform.

2. Set v = a + bu.

The values for v generated in this way will be equivalent to sample from a Norm(a, [math]\displaystyle{ b^2 }[/math]) distribution. We can modify the MatLab code used in the last section to demonstrate this. We just need to add one line before we generate the histogram:

x = a + b * x;

For instance, this is the histogram generated when b = 15, a = 125:

Example2: multivariate normal

The Box-Muller method can be extended to higher dimensions to generate multivariate normals. The objects generated will be nx1 vectors, and their variance will be described by nxn covariance matrices.

[math]\displaystyle{ \mathbf{z} = N(\mathbf{u}, \Sigma) }[/math] defines the n by 1 vector [math]\displaystyle{ \mathbf{z} }[/math] such that:

- ui is the average of zi - [math]\displaystyle{ \Sigma }[/math]ii is the variance of zi - [math]\displaystyle{ \Sigma }[/math]ij is the co-variance of zi and zj

If [math]\displaystyle{ z_1, z_2, ..., z_d }[/math] are normal variables with mean 0 and variance 1, then the vector [math]\displaystyle{ (z_1, z_2,..., z_d) }[/math] has mean 0 and variance [math]\displaystyle{ I }[/math]. Where 0 is the zero vector and [math]\displaystyle{ I }[/math] is the identity matrix. This fact suggests that the method for generating a multivariate normal is to generate each component individually as single normal variables.

The mean and the covariance matrix of a multivariate normal distribution can be adjusted in ways analogous to the single variable case. if [math]\displaystyle{ \mathbf{z} }[/math]~[math]\displaystyle{ N(0,I) }[/math], then [math]\displaystyle{ \Sigma^{1/2}\mathbf{z}+\mu }[/math]~[math]\displaystyle{ N(\mu,\Sigma) }[/math]. Note here that the covariance matrix is symmetric and nonnegative, so its square root should always exist.

We can compute [math]\displaystyle{ \mathbf{z} }[/math] in the following way:

1. Generate an n by 1 vector [math]\displaystyle{ \mathbf{x} = \begin{bmatrix}x_{1} & x_{2} & ... & x_{n}\end{bmatrix} }[/math] where [math]\displaystyle{ x_{i} }[/math] ~ Norm(0, 1) using the Box-Muller transform.

2. Calculate [math]\displaystyle{ \Sigma^\frac{1}{2} }[/math] using singular value decomposition.

3. Set [math]\displaystyle{ \mathbf{z} = \Sigma^\frac{1}{2} \mathbf{x} + \mathbf{u} }[/math].

The following MatLab code provides an example, where a scatter plot of 10000 random points is generated. In this case x and y have a co-variance of 0.9 - a very strong positive correlation.

x = zeros(10000, 1);

y = zeros(10000, 1);

for ii = 1:10000

u1 = rand;

u2 = rand;

R2 = -2 * log(u1);

theta = 2 * pi * u2;

x(ii) = sqrt(R2) * cos(theta);

y(ii) = sqrt(R2) * sin(theta);

end

E = [1, 0.9; 0.9, 1];

[u s v] = svd(E);

root_E = u * (s ^ (1 / 2));

z = (root_E * [x y]')';

z(:,1) = z(:,1) + 5;

z(:,2) = z(:,2) + -8;

scatter(z(:,1), z(:,2))

This code generated the following scatter plot:

In Matlab, we can also use the function "sqrtm()" to calculate Square root of a matrix directly. Here is an example:

E = [1, 0.9; 0.9, 1]; sqrtm(E);

R code for a multivariate normal distribution

n=10000; r2<--2*log(runif(n)); theta<-2*pi*(runif(n)); x<-sqrt(r2)*cos(theta); y<-sqrt(r2)*sin(theta); a<-matrix(c(x,y),nrow=n,byrow=F); e<-matrix(c(1,.9,09,1),nrow=2,byrow=T); svde<-svd(e); root_e<-svde$u %*% diag(svde$d)^1/2; z<-t(root_e %*%t(a)); z[,1]=z[,1]+5; z[,2]=z[,2]+ -8; par(pch=19); plot(z,col=rgb(1,0,0,alpha=0.06))

Remarks

MATLAB's randn function uses the ziggurat method to generate normal distributed samples. It is an efficient rejection method based on covering the probability density function with a set of horizontal rectangles so as to obtain points within each rectangle. It is reported that a 800 MHz Pentium III laptop can generate over 10 million random numbers from normal distribution in less than one second. (Reference)

Sampling From Binomial Distributions

In order to generate a sample x from X ~ Bin(n, p), we can follow the following procedure:

1. Generate n uniform random numbers sampled from Unif[0, 1]: [math]\displaystyle{ u_1, u_2, ..., u_n }[/math].

2. Set x to be the total number of cases where [math]\displaystyle{ u_i \lt = p }[/math] for all [math]\displaystyle{ 1 \lt = i \lt = n }[/math].

In MatLab this can be coded with a single line. The following generates a sample from X ~ Bin(n, p):

>> sum(rand(n, 1) <= p, 1)

Monte Carlo Integration Method - Oct 4, 2011

Three integration methods would be taught in this course:

- Basic Monte Carlo Integration

- Importance Sampling

- Markov Chain Monte Carlo (MCMC)

Bayesian inference vs Frequentist inference

The Bayesian method has become popular in the last few decades as simulation and computer technology makes it more applicable. For more information about its history and application, please refer to http://en.wikipedia.org/wiki/Bayesian_inference. As for frequentists, please refer to http://en.wikipedia.org/wiki/Frequentist_inference.

An example of face identification

Take the face as input x. And the person as output y. The person can be either Ali or Tom. If it is Ali, y=1. Otherwise, y=0. We can divide the picture into 100*100 pixels and then list them into a 10,000*1 column vector which is x.

If you are a frequentist, you would compare Pr(X=x|y=1) with Pr(X=x|y=0) and see which one is higher. But if you are a Bayesianist, you would compare Pr(y=1|X=x) with Pr(y=0|X=x).

Summary of differences between two schools

- Frequentist: Probability refers to limiting relative frequency. (objective)

- Bayesian: Probability describes degree of belief not frequency. (subjective)

e.g. The probability that you drank a cup of tea on May 20, 2001 is 0.62 does not refer to any frequency.

- Frequentist: Parameters are fixed, unknown constants.

- Bayesian: Parameters are random variables and we can make probabilistic statement about them.

- Frequentist: Statistical procedures should have long run frequency probabilities.

e.g. a 95% confidence interval should trap true value of the parameter for at least 95% of limited frequency

- Bayesian: It makes inferences about [math]\displaystyle{ \theta }[/math] by producing a prbability distribution for [math]\displaystyle{ \theta }[/math]. Inference (e.g. point estimation) will be extracted from this distribution.

Bayesian inference

Bayesian inference is usually carried out in the following way:

1. Choose a prior probability density function of [math]\displaystyle{ \theta }[/math] which is [math]\displaystyle{ f(\theta) }[/math]. This is our belief about [math]\displaystyle{ \theta }[/math] before we see any data.

2. Choose a statistical model [math]\displaystyle{ f(x|\theta) }[/math] that reflects our beliefs about X.

3. After observing data [math]\displaystyle{ x_1,...,x_n }[/math], we update our beliefs and calculate the posterior probability.

[math]\displaystyle{ f(\theta|x) = \frac{f(\theta,x)}{f(x)}=\frac{f(x|\theta) \cdot f(\theta)}{f(x)}=\frac{f(x|\theta) \cdot f(\theta)}{\int^{}_\theta f(x|\theta) \cdot f(\theta) d\theta} }[/math], where [math]\displaystyle{ f(\theta|x) }[/math] is the posterior probability, [math]\displaystyle{ f(\theta) }[/math] is the prior probability, [math]\displaystyle{ f(x|\theta) }[/math] is the likelihood of observing X=x given [math]\displaystyle{ \theta }[/math] and f(x) is the marginal probability of X=x.

If we have i.i.d. observations [math]\displaystyle{ x_1,...,x_n }[/math], we can replace [math]\displaystyle{ f(x|\theta) }[/math] with [math]\displaystyle{ f({x_1,...,x_n}|\theta)=\prod_{i=1}^n f(x_i|\theta) }[/math] because of independency.

We denote [math]\displaystyle{ f({x_1,...,x_n}|\theta) }[/math] as [math]\displaystyle{ L_n(\theta) }[/math] which is called likelihood. And we use [math]\displaystyle{ x^n }[/math] to denote [math]\displaystyle{ (x_1,...,x_n) }[/math].

[math]\displaystyle{ f(\theta|x^n) = \frac{f(x^n|\theta) \cdot f(\theta)}{f(x^n)}=\frac{f(x^n|\theta) \cdot f(\theta)}{\int^{}_\theta f(x^n|\theta) \cdot f(\theta) d\theta} }[/math] , where [math]\displaystyle{ \int^{}_\theta f(x^n|\theta) \cdot f(\theta) d\theta }[/math] is a constant [math]\displaystyle{ c_n }[/math]. So [math]\displaystyle{ f(\theta|x^n) \propto f(x^n|\theta) \cdot f(\theta) }[/math]. The posterior probability is proportional to the likelihood times prior probability.

[math]\displaystyle{ E(\theta)=\int^{}_\theta \theta \cdot f(\theta|x^n) d\theta }[/math] which is the posterior mean of [math]\displaystyle{ \theta }[/math].

Let [math]\displaystyle{ \tilde{\theta}=(\theta_1,...,\theta_d)^T }[/math], then [math]\displaystyle{ f(\theta_1|x^n) = \int^{} \int^{} \dots \int^{}f(\theta|X)d\theta_2d\theta_3 \dots d\theta_d }[/math] and [math]\displaystyle{ E(\theta_1)=\int^{}\theta_1 \cdot f(\theta_1|x^n) d\theta_1 }[/math]

Example 1: Estimating parameters of a univariate Gaussian distribution

Suppose X follows a univariate Gaussian distribution (i.e. a Normal distribution) with parameters [math]\displaystyle{ \mu }[/math] and [math]\displaystyle{ \sigma^2 }[/math].

(a) For Frequentists:

[math]\displaystyle{ f(x|\theta)= \frac{1}{\sqrt{2\pi}\sigma} \cdot e^{-\frac{1}{2}(\frac{x-\mu}{\sigma})^2} }[/math]

[math]\displaystyle{ L_n(\theta)= \prod_{i=1}^n \frac{1}{\sqrt{2\pi}\sigma} \cdot e^{-\frac{1}{2}(\frac{x_i-\mu}{\sigma})^2} }[/math]

[math]\displaystyle{ \ln L_n(\theta) = l(\theta) = \sum_{i=1}^n -\frac{1}{2}\ln 2\pi-\ln \sigma-\frac{1}{2}(\frac{x_i-\mu}{\sigma})^2 }[/math]

To get the maximum likelihood estimator of [math]\displaystyle{ \mu }[/math] (mle), we find the [math]\displaystyle{ \hat{\mu} }[/math] which maximizes [math]\displaystyle{ L_n(\theta) }[/math]:

[math]\displaystyle{ \frac{\partial l(\theta)}{\partial \mu}= \sum_{i=1}^n \frac{1}{\sigma}(\frac{x_i-\mu}{\sigma})=0 \Rightarrow \sum_{i=1}^n x_i = n\mu \Rightarrow \hat{\mu}_{mle}=\bar{x} }[/math]

(b) For Bayesianists:

[math]\displaystyle{ f(\theta|x) \propto f(x|\theta) \cdot f(\theta) }[/math]

Suppose [math]\displaystyle{ \mu }[/math] ~ N([math]\displaystyle{ \mu_0, \Gamma^2 }[/math]),

so [math]\displaystyle{ f(\mu) = \frac{1}{\sqrt{2\pi}\Gamma} \cdot e^{-\frac{1}{2}(\frac{\mu-\mu_0}{\Gamma})^2} }[/math]

[math]\displaystyle{ f(\mu|x) = \frac{1}{\sqrt{2\pi}\tilde{\sigma}} \cdot e^{-\frac{1}{2}(\frac{\mu-\tilde{\mu}}{\tilde{\sigma}})^2} }[/math]

[math]\displaystyle{ \tilde{\mu} = \frac{\frac{n}{\sigma^2}}{\frac{n}{\sigma^2}+\frac{1}{\Gamma^2}}\bar{x}+\frac{\frac{1}{\Gamma^2}}{\frac{n}{\sigma^2}+\frac{1}{\Gamma^2}}\mu_0 }[/math], where [math]\displaystyle{ \tilde{\mu} }[/math] is the estimator of [math]\displaystyle{ \mu }[/math].

- If prior belief about [math]\displaystyle{ \mu_0 }[/math] is strong, then [math]\displaystyle{ \Gamma }[/math] is small and [math]\displaystyle{ \frac{1}{\Gamma^2} }[/math] is large. [math]\displaystyle{ \tilde{\mu} }[/math] is close to [math]\displaystyle{ \mu_0 }[/math] and the observations will not affect too much. On the contrary, if prior belief about [math]\displaystyle{ \mu_0 }[/math] is weak, [math]\displaystyle{ \Gamma }[/math] is large and [math]\displaystyle{ \frac{1}{\Gamma^2} }[/math] is small. [math]\displaystyle{ \tilde{\mu} }[/math] depends more on observations.(This is intuitive, when our original belief is reliable, then the sample is not important in improving the result; when the belief is not reliable, then we depend a lot on the sample.)

- When the sample is large (i.e. n [math]\displaystyle{ \to \infty }[/math]), [math]\displaystyle{ \tilde{\mu} \to \bar{x} }[/math] and the impact of prior belief about [math]\displaystyle{ \mu }[/math] is weakened.

Basic Monte Carlo Integration - October 6th, 2011

The first, and most basic, method of numerical integration we will see is Monte Carlo Integration. We use this to solve an integral of the form: [math]\displaystyle{ I = \int_{a}^{b} h(x) dx }[/math]

Note the following derivation: [math]\displaystyle{ I = \int_{a}^{b} h(x)dx = \int_{a}^{b} h(x)((b-a)/(b-a)) dx = \int_{a}^{b} (h(x)(b-a))(1/(b-a))dx = \int_{a}^{b} w(x)f(x)dx = E[w(x)] }[/math] ~ [math]\displaystyle{ (1/n) \sum_{i=1}^{n} w(x) }[/math]

Where w(x) = h(x)(b-a) and f(x) is the probability density function of a uniform random variable on the interval [a,b]. The expectation, with respect to the distribution of f, of w is taken from n samples of x.

General Procedure

i) Draw n samples xi ~ uniform[a,b]

ii) Compute w(xi)

[math]\displaystyle{ \hat{I} = 1/n \sum_{i=1}^{n} w(x }[/math]i[math]\displaystyle{ ) }[/math]

By the strong law of large numbers I^ converges to I as n approaches [math]\displaystyle{ \infty }[/math]. Because of this, we can compute all sorts of useful information, such as variance, standard error, and confidence intervals.

Standard Error: [math]\displaystyle{ SE = Standard Deviation / \sqrt{n} }[/math]

Variance: [math]\displaystyle{ V = (\sum_{i=1}^{n} (w(x)-I)^2)/(n-1) }[/math]

Confidence Interval: [math]\displaystyle{ I +/- t \_(\alpha/2) SE }[/math]

Example: Uniform Distribution

Consider the integral, [math]\displaystyle{ \int_{0}^{1} x^3dx }[/math], which is easily solved through standard analytical integration methods, and is equal to .25. Now, let us check this answer with a numerical approximation using Monte Carlo Integration.

We generate a 1 by 10000 vector of uniform (on the interval [0,1]) random variables and call that vector 'u'. We see that our 'w' in this case is [math]\displaystyle{ x^3 }[/math], so we set [math]\displaystyle{ w = u^3 }[/math]. Our I^ is equal to the mean of w.

In Matlab, we can solve this integration problem with the following code:

u = rand(1,10000); w = u.^3; mean(w) ans = 0.2475

Note the '.' after 'u' in the second line of code, indicating that each entry in the matrix is cubed. Also, our approximation is close to the actual value of .25. Now let's try to get an even better approximation by generating more sample points.

u= rand(1,100000); w= u.^3; mean(w) ans = .2503

We see that when the number of sample points is increased, our approximation improves, as one would expect.

Generalization

Up to this point we have seen how to numerically approximate an integral when the distribution of f is uniform. Now we will see how to generalize this to other distributions.

[math]\displaystyle{ I = \int h(x)f(x)dx }[/math]

If f is a distribution function (pdf), then [math]\displaystyle{ I }[/math] can be estimated as Ef[h(x)]. This means taking the expectation of h with respect to the distribution of f. Our previous example is the case where f is the uniform distribution between [a,b].

Procedure for the General Case

i) Draw n samples from f

ii) Compute h(xi)

iii) [math]\displaystyle{ \hat{I} = 1/n \sum_{i=1}^{n} h(x }[/math]i[math]\displaystyle{ ) }[/math]

Example: Exponential Distribution

Find [math]\displaystyle{ E[\sqrt{x}] }[/math] for [math]\displaystyle{ f = e^{-x} }[/math], which is the exponential distribution with mean 1.

[math]\displaystyle{ I = \int_{0}^{\infty} \sqrt{x} e^{-x}dx }[/math]

We can see that we must draw samples from f, the exponential distribution.

To find a numerical solution using Monte Carlo Integration we see that:

u= rand(1,10000) X= -log(u) h= [math]\displaystyle{ \sqrt{x} }[/math] I= mean(h)

To implement this procedure in Matlab, use the following code:

u = rand(1,10000); X = -log(u); h = x.^.5; mean(h) ans = .8841

An easy way to check whether your approximation is correct is to use the built in Matlab function 'quad1' which takes a function and bounds for the integral and returns a solution for the definite integral of that function. For this specific example Matlab returns .8862, so we see that our approximation was quite close.

Example: Normal Distribution

Let [math]\displaystyle{ f(x) = (1/(2 \pi)^{1/2}) e^{(-x^2)/2} }[/math]. Compute the cumulative distribution function at some point x.

[math]\displaystyle{ F(x)= \int_{-\infty}^{x} f(s)ds = \int_{-\infty}^{x}(1)(1/(2 \pi)^{1/2}) e^{(-s^2)/2}ds }[/math]. The (1) is inserted to illustrate that our h(x) will be the constant function 1, and our f(x) is the normal distribution. To take into account the upper bound of integration, x, any values sampled that are greater than x will be set to zero.

This is the Matlab code for solving F(2):

u = randn(1,10000) h = u < 2; mean(h) ans = .9756

We generate a 1 by 10000 vector of standard normal random variables and we return a value of 1 if u is less than 2, and 0 otherwise.

Example: Binomial Distribution

In this example we will see the Bayesian Inference for 2 Binomial Distributions.

Let [math]\displaystyle{ X ~ Bin(n,p) }[/math] and [math]\displaystyle{ Y ~ Bin(m,q) }[/math], and let [math]\displaystyle{ \delta = p-q }[/math].

Therefore, [math]\displaystyle{ \delta = x/n - y/m }[/math] which is the frequentist approach.

Bayesian wants [math]\displaystyle{ f(p,q|x,y) = f(x,y|p,q)f(p,q)/f(x,y) }[/math]

Thus, [math]\displaystyle{ f(p,q|x,y) \alpha f(x,y|p,q)f(p,q) }[/math]. Now we assume that [math]\displaystyle{ f(p,q) = f(p)f(q) = 1 }[/math] and f(p) and f(q) are uniform.

Therefore, [math]\displaystyle{ f(p,q|x,y) \alpha p^x(1-p)^{n-x}q^y(1-q)^{m-y} }[/math].

[math]\displaystyle{ E[\delta] = \int_{0}^{1} \int_{0}^{1} (p-q)f(p,q|x,y)dxdy }[/math].

As you can see this is much tougher than the frequentist approach.