stat340s13: Difference between revisions

m (Conversion script moved page Stat340s13 to stat340s13: Converting page titles to lowercase)

(283 intermediate revisions by 59 users not shown)

Line 42:

=== Final ===

Saturday August 10, 2013 from 7:30pm-10:00pm

=== TA(s): ===

Line 186:

if y = ax + b with <math>0 \leq b < a</math>, then <math>b:=y \mod a</math>. <br />

'''Example 1:'''<br />

<math>30 = 4 \cdot 7 + 2</math><br />

Line 201:

<br />

'''Example 2:'''<br />

If <math>23 = 3 \cdot 6 + 5</math> <br />

Line 214:

Then equivalently, <math>3 := -37\mod 40</math><br />

'''Example 3:'''<br />

<math>77 = 3 \cdot 25 + 2</math><br />

<math>2 := 77\mod 3</math><br />

<br />

<math>25 = 25 \cdot 1 + 0</math><br />

<math>0 := 25\mod 25</math><br />

<br />
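The remainders in these examples can be checked quickly in Python. For a positive modulus a, <code>divmod</code> (and <code>%</code>) returns a remainder b with 0 ≤ b < a, and this also holds for a negative y such as −37. This is a small illustrative sketch; the helper name <code>remainder</code> is hypothetical.

```python
def remainder(y: int, a: int) -> int:
    """Return b such that y = a*x + b with 0 <= b < a (for a > 0)."""
    x, b = divmod(y, a)            # x is the quotient, b the remainder
    assert y == a * x + b and 0 <= b < a
    return b

# (y, modulus) pairs taken from the examples above
for y, a in [(30, 4), (23, 6), (-37, 40), (77, 3), (25, 25)]:
    print(f"{y} mod {a} = {remainder(y, a)}")
```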

Line 233:

==== Mixed Congruential Algorithm ====

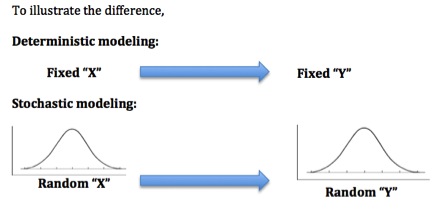

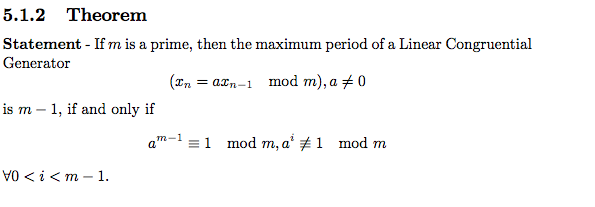

We define the Linear Congruential Method to be <math>x_{k+1}=(ax_k + b) \mod m</math>, where <math>x_k, a, b, m \in \N, \;\text{with}\; a, m \neq 0</math>. Given a '''seed''' (i.e. an initial value <math>x_0 \in \N</math>), we can obtain values for <math>x_1, \, x_2, \, \cdots, x_n</math> inductively. The special case <math>b=0</math> is called the Multiplicative Congruential Method (invented by Berkeley professor D. H. Lehmer), and the case <math>b \neq 0</math> is called the Mixed Congruential Method. It is called "mixed" because it has both a multiplicative and an additive term.<br />

An interesting fact about the '''Linear Congruential Method''' is that it is one of the oldest and best-known pseudo random number generator algorithms. It is very fast and requires minimal memory to retain state. However, it should not be used for applications that require high-quality randomness, such as cryptographic applications or serious Monte Carlo simulation. (Monte Carlo simulation relies on the generated values behaving like independent uniform draws, but successive LCG outputs are correlated, so results can be biased.)<br />
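The recurrence can be sketched as a Python generator. The parameters a=5, b=7, m=200, x0=3 are the ones used in the MATLAB code further down this page. Dividing by m to get values in [0, 1) is a common convention that we assume here. The function names <code>lcg</code> and <code>uniforms</code> are hypothetical.

```python
from typing import Iterator


def lcg(seed: int, a: int, b: int, m: int) -> Iterator[int]:
    """Yield x_1, x_2, ... from x_{k+1} = (a*x_k + b) mod m."""
    x = seed
    while True:
        x = (a * x + b) % m
        yield x


def uniforms(seed: int, a: int, b: int, m: int, n: int) -> list[float]:
    """Scale the first n LCG values into [0, 1) by dividing by m."""
    gen = lcg(seed, a, b, m)
    return [next(gen) / m for _ in range(n)]


if __name__ == "__main__":
    gen = lcg(seed=3, a=5, b=7, m=200)      # mixed generator: b != 0
    print([next(gen) for _ in range(5)])    # [22, 117, 192, 167, 42]
```

Setting b=0 gives the multiplicative method with the same code.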

Line 240:

'''First consider the following algorithm'''<br />

<math>x_{k+1}=a x_{k} \mod m</math> <br />

For example, let <math>a=3</math>, <math>m=150</math> and <math>x_{0}=5</math>, so that <math>x_{n}=3x_{n-1} \mod 150</math>. Find <math>x_{1},x_{8},x_{9}</math>. <br />

<math>x_{n}=3^n \cdot 5 \mod 150</math> <br />

<math>x_{1}=15,x_{8}=105,x_{9}=15</math> <br />
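As a check, the sketch below iterates the recurrence directly and also evaluates the closed form <math>3^n \cdot 5 \mod 150</math> using Python's three-argument <code>pow</code>. The sequence cycles through 15, 45, 135, 105. The helper name <code>multiplicative_sequence</code> is hypothetical.

```python
def multiplicative_sequence(x0: int, a: int, m: int, n: int) -> list[int]:
    """Return [x_0, x_1, ..., x_n] for x_{k+1} = a*x_k mod m."""
    xs = [x0 % m]
    for _ in range(n):
        xs.append((a * xs[-1]) % m)
    return xs


xs = multiplicative_sequence(x0=5, a=3, m=150, n=9)
print(xs[1], xs[8], xs[9])                           # 15 105 15
# closed form x_n = 3^n * 5 mod 150, via modular exponentiation
print([pow(3, n, 150) * 5 % 150 for n in (1, 8, 9)])  # [15, 105, 15]
```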

| Line 294: | Line 311: | ||

2. close all: closes all figures.<br /> | 2. close all: closes all figures.<br /> | ||

3. who: displays all defined variables.<br /> | 3. who: displays all defined variables.<br /> | ||

4. clc: clears screen. | 4. clc: clears screen.<br /> | ||

5. ; : prevents the results from printing.<br /><br /> | 5. ; : prevents the results from printing.<br /> | ||

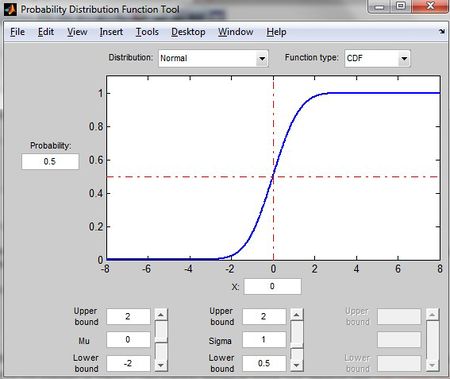

6. disstool: displays a graphing tool.<br /><br /> | |||

<pre style="font-size:16px">

Line 396:

'''Comments:'''<br />

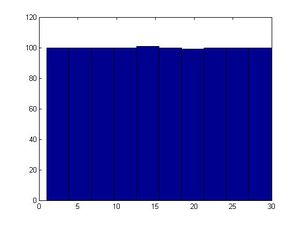

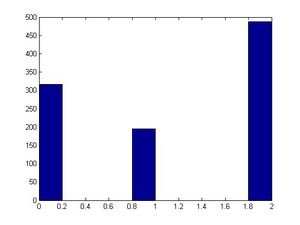

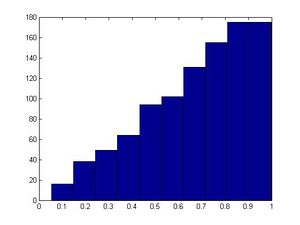

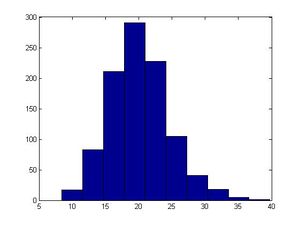

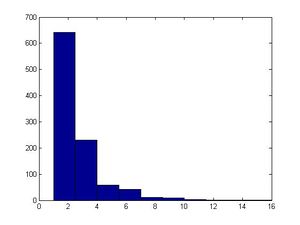

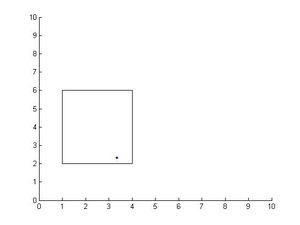

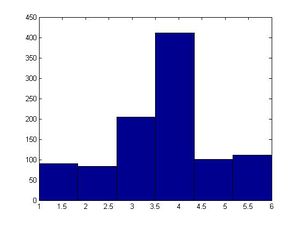

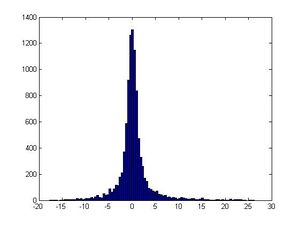

Matlab code: | |||

a=5; | |||

b=7; | |||

m=200; | |||

x(1)=3; | |||

for ii=2:1000 | |||

x(ii)=mod(a*x(ii-1)+b,m); | |||

end | |||

size(x); | |||

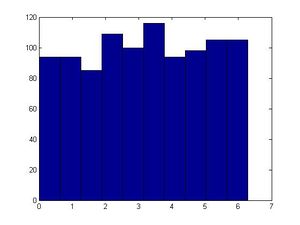

hist(x) | |||

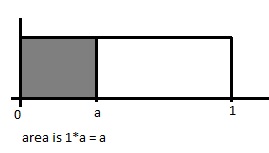

Typically, it is good to choose <math>m</math> so that it is large and prime. Careful selection of the parameters <math>a</math> and <math>b</math> also helps generate relatively "random" output values, where it is harder to identify patterns. For example, when we used a composite (non-prime) number such as 40 for <math>m</math>, the output did not resemble a uniform distribution.<br />
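The effect of the parameters can be seen by measuring how many steps pass before the sequence repeats. With the MATLAB example's values a=5, b=7, m=200, x0=3, the sequence enters a cycle of length 8 after 2 steps. The 1000 generated values therefore take only 10 distinct values. For comparison, a=21, b=7 satisfies the Hull-Dobell conditions for m=200: b is coprime to m, and a−1 is divisible by every prime factor of m and by 4, since 4 divides m. Those conditions guarantee the full period of 200. The helper name <code>cycle_info</code> is hypothetical.

```python
def cycle_info(a: int, b: int, m: int, seed: int) -> tuple[int, int]:
    """Return (tail_length, period) of x_{k+1} = (a*x_k + b) mod m from seed."""
    seen: dict[int, int] = {}      # value -> index where it first appeared
    x, k = seed % m, 0
    while x not in seen:
        seen[x] = k
        x = (a * x + b) % m
        k += 1
    tail = seen[x]                 # number of steps before entering the cycle
    return tail, k - tail


print(cycle_info(5, 7, 200, 3))    # (2, 8): only 10 distinct values
print(cycle_info(21, 7, 200, 3))   # (0, 200): full period
```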

Line 461:

</pre>

</div>

Another algorithm for generating pseudo random numbers is the multiply-with-carry (MWC) method. Its simplest form is similar to the linear congruential generator. The difference is that in the MWC algorithm the parameter <math>b</math> (the "carry") changes at every step. It is as follows: <br>

1.) <math>x_{k+1} = (a x_k + b_k) \mod m</math> <br>

2.) <math>b_{k+1} = \left\lfloor \frac{a x_k + b_k}{m} \right\rfloor</math> <br>

3.) set <math>k</math> to <math>k+1</math> and go to step 1

[http://www.javamex.com/tutorials/random_numbers/multiply_with_carry.shtml Source]
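Here is a minimal sketch of the three steps above. The parameters (a=7, m=10, x0=3, b0=1) are toy values chosen only so the output can be checked by hand. Practical MWC generators use much larger values, typically with m = 2^32. The function name <code>mwc</code> is hypothetical.

```python
def mwc(x0: int, b0: int, a: int, m: int, n: int) -> list[int]:
    """Return x_1..x_n of the simplest (lag-1) multiply-with-carry generator."""
    x, carry = x0, b0
    out = []
    for _ in range(n):
        t = a * x + carry              # a*x_k + b_k
        x, carry = t % m, t // m       # step 1 (new x) and step 2 (new carry)
        out.append(x)
    return out


print(mwc(x0=3, b0=1, a=7, m=10, n=4))  # [2, 6, 3, 5]
```

Unlike the LCG, the additive term is recomputed from the previous step, which is exactly the "carry" of step 2.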

=== Inverse Transform Method ===

Line 480:

'''Proof of the theorem:'''<br />

The generalized inverse satisfies the following: <br />

:<math>P(X\leq x)</math> <br />

<math>= P(F^{-1}(U)\leq x)</math> (since <math>X= F^{-1}(U)</math> by the inverse method)<br />

<math>= P(F(F^{-1}(U))\leq F(x))</math> (since <math>F</math> is monotonically increasing) <br />

<math>= P(U\leq F(x))</math> (since <math>F(F^{-1}(U)) = U</math> when <math>F</math> is continuous) <br />

<math>= F(x)</math> (since <math>P(U\leq a)= a</math> for <math>U \sim U(0,1)</math> and <math>a = F(x) \in [0,1]</math>)<br />

This is the c.d.f. of X. <br />

<br />

That is <math>F^{-1}\left(u\right) \leq x \Leftrightarrow u \leq F\left(x\right)</math><br />

| Line 495: | Line 531: | ||

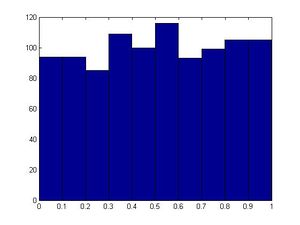

<pre style="font-size:16px"> | <pre style="font-size:16px"> | ||

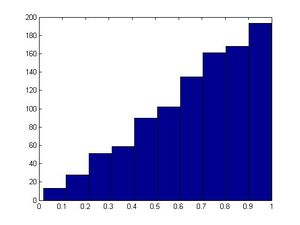

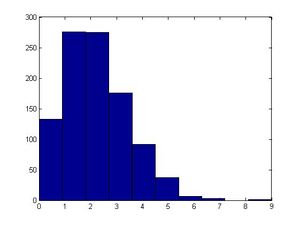

>>u=rand(1,1000); | >>u=rand(1,1000); | ||

>>hist(u) #will generate a fairly uniform diagram | >>hist(u) # this will generate a fairly uniform diagram | ||

</pre> | </pre> | ||

[[File:ITM_example_hist(u).jpg|300px]] | [[File:ITM_example_hist(u).jpg|300px]] | ||

| Line 531: | Line 567: | ||

Sol: | Sol: | ||

Let <math>y=x^5</math>, solve for x: <math>x=y^\frac {1}{5}</math>. Therefore, <math>F^{-1} (x) = x^\frac {1}{5}</math><br /> | Let <math>y=x^5</math>, solve for x: <math>x=y^\frac {1}{5}</math>. Therefore, <math>F^{-1} (x) = x^\frac {1}{5}</math><br /> | ||

Hence, to obtain a value of x from F(x), we first set u as an uniform distribution, then obtain the inverse function of F(x), and set | Hence, to obtain a value of x from F(x), we first set 'u' as an uniform distribution, then obtain the inverse function of F(x), and set | ||

<math>x= u^\frac{1}{5}</math><br /><br /> | <math>x= u^\frac{1}{5}</math><br /><br /> | ||
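A minimal Python version of this example, using a fixed seed for reproducibility. As a sanity check, the sample mean should be near <math>E[X] = \int_0^1 x \cdot 5x^4 \, dx = \frac{5}{6}</math>. The fraction of values at or below 0.5 should be near <math>F(0.5) = 0.5^5 = 0.03125</math>. The function name <code>sample_x5</code> is hypothetical.

```python
import random


def sample_x5(n: int, rng: random.Random) -> list[float]:
    """Inverse transform sampling for F(x) = x^5 on [0, 1]: X = U^(1/5)."""
    return [rng.random() ** (1 / 5) for _ in range(n)]


rng = random.Random(340)                        # fixed seed for reproducibility
xs = sample_x5(100_000, rng)
print(sum(xs) / len(xs))                        # near E[X] = 5/6
print(sum(v <= 0.5 for v in xs) / len(xs))      # near F(0.5) = 0.03125
```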

Line 629:

== Class 3 - Tuesday, May 14 ==

=== Recall the Inverse Transform Method ===

Let <math>U \sim \text{Unif}(0,1)</math>; then the random variable <math>X = F^{-1}(U)</math> has distribution F. <br />

To sample X with CDF F(x), <br />

'''1) <math>U \sim \text{Unif}[0,1]</math>'''<br />

'''2) <math>X = F^{-1}(U)</math>'''<br />

<br />

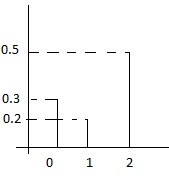

Line 690:

Note that after generating a random U, the value of X can be determined by finding the interval <math>[F(x_{j-1}),F(x_{j})]</math> in which U lies. <br />

In summary, to generate a discrete random variable X with pmf<br />

<math>P(X=x_i)=p_i, \quad x_0<x_1<x_2<\cdots</math> <br />

1. Draw <math>U \sim U(0,1)</math>;<br />

2. If <math>F(x_{i-1}) < U \leq F(x_i)</math> (with <math>F(x_{-1}) = 0</math>), set <math>X=x_i</math>.<br />
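The two steps can be sketched in Python with a cumulative sum and a binary search. <code>bisect_left</code> returns the first index i with <math>U \leq F(x_i)</math>, which is exactly the interval rule above. The pmf used (values 1, 2, 3 with probabilities 0.1, 0.3, 0.6) is illustrative; it is the same vector used in the MATLAB acceptance-rejection example later on this page. The function name <code>discrete_inverse</code> is hypothetical.

```python
import bisect
import itertools
import random


def discrete_inverse(values, probs, u):
    """Return x_i such that F(x_{i-1}) < u <= F(x_i)."""
    cdf = list(itertools.accumulate(probs))    # F(x_0), F(x_1), ...
    i = bisect.bisect_left(cdf, u)             # first i with u <= F(x_i)
    return values[min(i, len(values) - 1)]     # guard against rounding in cdf[-1]


values, probs = [1, 2, 3], [0.1, 0.3, 0.6]
rng = random.Random(1)
draws = [discrete_inverse(values, probs, rng.random()) for _ in range(100_000)]
print({v: draws.count(v) / len(draws) for v in values})   # close to probs
```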

| Line 907: | Line 941: | ||

'''Problems'''<br /> | '''Problems'''<br /> | ||

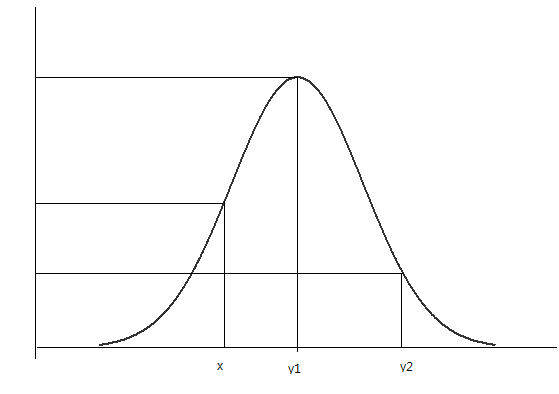

Though this method is very easy to use and apply, it does have a major disadvantage/limitation: | |||

We need to find the inverse cdf F^{-1}(\cdot) . In some cases the inverse function does not exist, or is difficult to find because it requires a closed form expression for F(x). | |||

For example, it is too difficult to find the inverse cdf of the Gaussian distribution, so we must find another method to sample from the Gaussian distribution. | |||

In conclusion, we need to find another way of sampling from more complicated distributions | |||

Flipping a coin is a discrete case of uniform distribution, and the code below shows an example of flipping a coin 1000 times; the result is close to the expected value 0.5.<br> | Flipping a coin is a discrete case of uniform distribution, and the code below shows an example of flipping a coin 1000 times; the result is close to the expected value 0.5.<br> | ||

Example 2, as another discrete distribution, shows that we can sample from parts like 0,1 and 2, and the probability of each part or each trial is the same.<br> | Example 2, as another discrete distribution, shows that we can sample from parts like 0,1 and 2, and the probability of each part or each trial is the same.<br> | ||

| Line 962: | Line 997: | ||

3. Mixed continues discrete | 3. Mixed continues discrete | ||

'''Advantages of Inverse-Transform Method''' | '''Advantages of Inverse-Transform Method''' | ||

| Line 1,188: | Line 1,218: | ||

== Class 4 - Thursday, May 16 == | == Class 4 - Thursday, May 16 == | ||

*When we want to find target distribution | |||

'''Goals'''<br> | |||

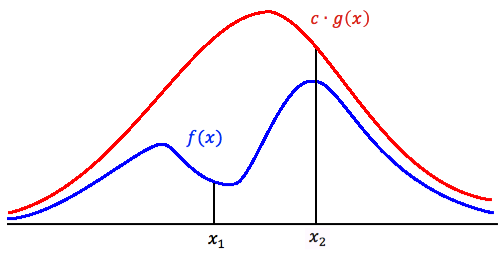

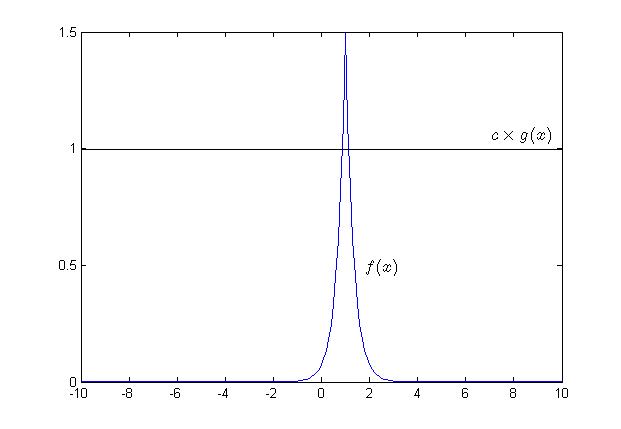

*When we want to find target distribution <math>f(x)</math>, we need to first find a proposal distribution <math>g(x)</math> that is easy to sample from. <br> | |||

*Relationship between the proposal distribution and target distribution is: <math> c \cdot g(x) \geq f(x) </math>, where c is constant. This means that the area of f(x) is under the area of <math> c \cdot g(x)</math>. <br> | *Relationship between the proposal distribution and target distribution is: <math> c \cdot g(x) \geq f(x) </math>, where c is constant. This means that the area of f(x) is under the area of <math> c \cdot g(x)</math>. <br> | ||

*Chance of acceptance is less if the distance between <math>f(x)</math> and <math> c \cdot g(x)</math> is big, and vice-versa, we use <math> c </math> to keep <math> \frac {f(x)}{c \cdot g(x)} </math> below 1 (so <math>f(x) \leq c \cdot g(x)</math>). Therefore, we must find the constant <math> C </math> to achieve this.<br /> | *Chance of acceptance is less if the distance between <math>f(x)</math> and <math> c \cdot g(x)</math> is big, and vice-versa, we use <math> c </math> to keep <math> \frac {f(x)}{c \cdot g(x)} </math> below 1 (so <math>f(x) \leq c \cdot g(x)</math>). Therefore, we must find the constant <math> C </math> to achieve this.<br /> | ||

*In other words, <math>C</math> is chosen to make sure <math> c \cdot g(x) \geq f(x) </math>. However, it will not make sense if <math>C</math> is simply chosen to be arbitrarily large. We need to choose <math>C</math> such that <math>c \cdot g(x)</math> fits <math>f(x)</math> as tightly as possible. This means that we must find the minimum c such that the area of f(x) is under the area of c*g(x). <br /> | *In other words, <math>C</math> is chosen to make sure <math> c \cdot g(x) \geq f(x) </math>. However, it will not make sense if <math>C</math> is simply chosen to be arbitrarily large. We need to choose <math>C</math> such that <math>c \cdot g(x)</math> fits <math>f(x)</math> as tightly as possible. This means that we must find the minimum c such that the area of f(x) is under the area of c*g(x). <br /> | ||

*The constant c cannot be a negative number.<br /> | *The constant c cannot be a negative number.<br /> | ||

'''How to find C''':<br /> | '''How to find C''':<br /> | ||

<math>\begin{align}

&c \cdot g(x) \geq f(x)\\

&c \geq \frac{f(x)}{g(x)} \quad \text{for all } x\\

&c= \max \left(\frac{f(x)}{g(x)}\right)

\end{align}</math><br>

If <math>f</math> and <math> g </math> are continuous, we can find the extremum by taking the derivative and solving for <math>x_0</math> such that:<br/>

<math> 0=\frac{d}{dx}\frac{f(x)}{g(x)}|_{x=x_0}</math> <br/>

Thus <math> c = \frac{f(x_0)}{g(x_0)} </math><br/>

Note: The sampling procedure that uses this constant is called the Acceptance-Rejection Method.<br>

'''The Acceptance-Rejection method''' involves finding a distribution that we know how to sample from, g(x), and multiplying g(x) by a constant c so that <math>c \cdot g(x)</math> is always greater than or equal to f(x). Mathematically, we want <math> c \cdot g(x) \geq f(x) </math>.

This means that c has to be greater than or equal to <math>\frac{f(x)}{g(x)}</math> for every x, so the smallest possible c that satisfies the condition is the maximum value of <math>\frac{f(x)}{g(x)}</math>.<br/>

However, if c is too large, the chance of accepting generated values will be small, which makes the algorithm inefficient. Therefore, it is best to use the smallest possible c such that <math> c \cdot g(x) \geq f(x)</math>. <br>
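When the derivative is awkward, a crude numerical check is to evaluate <math>f(x)/g(x)</math> on a fine grid and take the largest value. This matters because the maximum can occur at an endpoint, where the derivative condition above does not apply. In the example below (<math>f(x)=3x^2</math>, from later on this page) the maximum is at <math>x=1</math>. This sketch assumes f and g are given as Python functions. A grid can miss a sharp peak between points, so treat the result as approximate. The name <code>max_ratio</code> is hypothetical.

```python
def max_ratio(f, g, lo: float, hi: float, n: int = 10_000) -> float:
    """Approximate c = max f(x)/g(x) on (lo, hi] by evaluating on a grid."""
    best = 0.0
    for i in range(1, n + 1):
        x = lo + (hi - lo) * i / n      # grid points; skip x = lo, where g may be 0
        best = max(best, f(x) / g(x))
    return best


f = lambda x: 3 * x**2
print(max_ratio(f, lambda x: 1.0, 0.0, 1.0))     # g = U(0,1): c = 3
print(max_ratio(f, lambda x: 2 * x, 0.0, 1.0))   # g(x) = 2x: c = 3/2
```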

'''Important points:'''<br>

*For this method to be efficient, the constant c must be selected so that the rejection rate is low. (The efficiency of this method is <math>\left ( \frac{1}{c} \right )</math>.)<br>

*It is easy to show that the expected number of accepted values is <math> \frac{\text{total number of trials}}{c} </math>. <br>

*Recall that the '''acceptance rate is 1/c''' (not the rejection rate).

:Let <math>X</math> be the number of trials for an acceptance; then <math> X \sim~ Geo(\frac{1}{c})</math><br>

:<math>\mathbb{E}[X] = \frac{1}{\frac{1}{c}} = c </math>

*The number of trials needed to generate a sample of size <math>N</math> follows a negative binomial distribution. The expected number of trials needed is then <math>cN</math>.<br>

*So far, the only distribution we know how to sample from is the '''UNIFORM''' distribution. <br>

'''Procedure''': <br>

1. Choose <math>g(x)</math> (a simple density function that we know how to sample from, e.g. the uniform so far) <br>

The easiest case is <math>U~ \sim~ Unif [0,1] </math>. However, in other cases we need to generate UNIF(a,b). We may then need to perform a linear transformation on the <math>U~ \sim~ Unif [0,1] </math> variable, e.g. <math>a + (b-a)U \sim Unif[a,b]</math>. <br>

2. Find a constant c such that :<math> c \cdot g(x) \geq f(x) </math>, otherwise return to step 1.

Line 1,268:

#If <math>U \leq \frac{f(Y)}{c \cdot g(Y)}</math> then X=Y; else return to step 1. (This step does not determine c; it is the accept/reject step of the general procedure.)
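The general procedure can be sketched as a Python function that also counts the proposals it uses, so the claim "expected number of trials = c" can be checked. The test case is the <math>f(x)=2x</math> example below, with g = U(0,1) and c = 2. The sample mean should be near <math>E[X]=2/3</math> and the average number of trials near 2. The function and parameter names are hypothetical.

```python
import random


def accept_reject(f, c, sample_g, g, rng):
    """Draw one value from density f using proposal g, assuming c*g(x) >= f(x).

    Returns (x, trials), where trials counts the proposals used.
    """
    trials = 0
    while True:
        trials += 1
        y = sample_g(rng)                  # Y ~ g
        u = rng.random()                   # U ~ U(0,1)
        if u <= f(y) / (c * g(y)):         # accept with probability f(Y)/(c g(Y))
            return y, trials


# Example: f(x) = 2x on [0,1], g = U(0,1), c = 2
rng = random.Random(2013)
f = lambda x: 2 * x
results = [accept_reject(f, 2.0, lambda r: r.random(), lambda x: 1.0, rng)
           for _ in range(50_000)]
xs = [x for x, _ in results]
avg_trials = sum(t for _, t in results) / len(results)
print(sum(xs) / len(xs), avg_trials)       # near 2/3 and near c = 2
```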

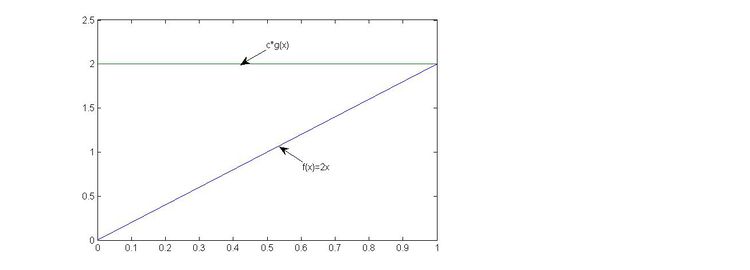

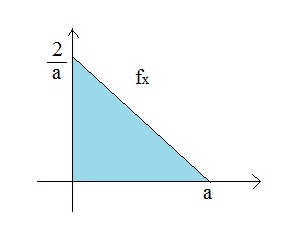

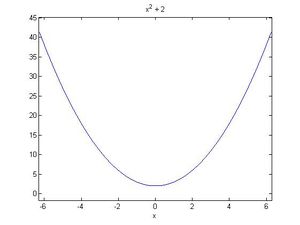

<hr><b>Example: <br>

Generate a random variable from the pdf</b><br>

<math> f(x) =
\begin{cases}
2x, & 0 \leq x \leq 1 \\
0, & \text{otherwise}
\end{cases} </math>

Line 1,305:

[[File:Beta(2,1)_example.jpg|750x750px]] | [[File:Beta(2,1)_example.jpg|750x750px]] | ||

'''Note:''' g is the U(0,1) density, which only reaches height 1 on the y-axis, while f(x) = 2x reaches height 2. Thus we need to multiply g by c so that <math>c\cdot g</math> covers the entire area under f(x). In this case c=2, so <math>c\cdot g</math> runs from 0 to 2 on the y-axis and covers f(x).

'''Comment:'''<br>

From the picture above, we can observe that the area under f(x)=2x is half of the area under <math>c \cdot g(x) = 2</math> on [0,1]. This is why, in order to sample 1000 points from f(x), we need to sample approximately 2000 proposal points from UNIF(0,1).

In general, if we want to sample n points from a distribution with pdf f(x), we need to generate approximately <math>n\cdot c</math> points from the proposal distribution g(x) in total. <br>

Line 1,318:

</ol>

'''Note:''' In the above example, we sample 2 numbers: y from g and u from U(0,1). If the second number (u) is less than or equal to the first number (y), we accept x=y; if not, we start all over. (Here <math>\frac{f(y)}{c \cdot g(y)} = \frac{2y}{2} = y</math>.)

<span style="font-weight:bold;color:green;">Matlab Code</span>

Line 1,398:

=====Example of Acceptance-Rejection Method=====

<math>f(x) = 3x^2, \quad 0<x<1</math><br>

<math>g(x)=1, \quad 0<x<1</math><br>

<math>c = \max \frac{f(x)}{g(x)} = \max \frac{3x^2}{1} = 3 </math><br>

Line 1,410:

1. Generate two uniform numbers in the unit interval <math>U_1, U_2 \sim~ U(0,1)</math><br>

2. If <math>U_2 \leqslant {U_1}^2</math>, accept <math>U_1</math> as the random variable with pdf <math>f</math>; if not, return to Step 1

We can also use <math>g(x)=2x, \; 0<x<1</math> for a more efficient algorithm:

<math>c = \max_{0<x<1} \frac{f(x)}{g(x)} = \max_{0<x<1} \frac {3x^2}{2x} = \max_{0<x<1} \frac {3x}{2} = \frac{3}{2}</math>.

Use the inverse method to sample from <math>g(x)</math>:

<math>G(x)=x^2</math>.

Generate <math>U</math> from <math>U(0,1)</math> and set <math>Y=\sqrt{U}</math>.

1. Generate two uniform numbers in the unit interval <math>U_1, U_2 \sim~ U(0,1)</math><br>

2. If <math>U_2 \leq \sqrt{U_1}</math>, accept <math>\sqrt{U_1}</math> as the random variable with pdf <math>f</math>; if not, return to Step 1. (With <math>Y=\sqrt{U_1}</math>, <math>\frac{f(Y)}{c \cdot g(Y)} = \frac{3Y^2}{\frac{3}{2}\cdot 2Y} = Y</math>.)

*Note: the function <math>q(x) = c \cdot g(x)</math> is called an envelope or majorizing function.<br>

To obtain a better proposal function <math>g(x)</math>, we can first assume a new <math>q(x)</math> and then solve for the normalizing constant by integrating.<br>

In the previous example, we first assume <math>q(x) = 3x</math>. To find the normalizing constant, we solve <math>k \int_0^1 3x \, dx = 1</math>, which gives <math>k = 2/3</math>. So <math>g(x) = k \cdot q(x) = 2x</math>.

*Source: http://www.cs.bgu.ac.il/~mps042/acceptance.htm*
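Both versions of this example can be compared in a short Python sketch. Each sampler returns the accepted value and the number of proposals it used. Both should give a sample mean near <math>E[X]=\int_0^1 3x^3\,dx = 3/4</math>. The average number of trials should be near c: about 3 for the uniform proposal and about 1.5 for <math>g(x)=2x</math>. The function names are hypothetical.

```python
import math
import random


def sample_uniform_proposal(rng):
    """g(x) = 1 on (0,1), c = 3: accept U1 if U2 <= U1^2."""
    trials = 0
    while True:
        trials += 1
        u1, u2 = rng.random(), rng.random()
        if u2 <= u1 ** 2:
            return u1, trials


def sample_linear_proposal(rng):
    """g(x) = 2x on (0,1), c = 3/2: Y = sqrt(U1), accept if U2 <= Y."""
    trials = 0
    while True:
        trials += 1
        y = math.sqrt(rng.random())     # inverse transform for G(x) = x^2
        if rng.random() <= y:           # f(Y) / (c g(Y)) = Y
            return y, trials


rng = random.Random(42)
summary = {}
for sampler in (sample_uniform_proposal, sample_linear_proposal):
    out = [sampler(rng) for _ in range(50_000)]
    mean_x = sum(x for x, _ in out) / len(out)
    mean_trials = sum(t for _, t in out) / len(out)
    summary[sampler.__name__] = (mean_x, mean_trials)
    print(sampler.__name__, round(mean_x, 3), round(mean_trials, 2))
```

The second sampler needs roughly half as many proposals, which is the efficiency gain from the tighter envelope <math>q(x)=3x</math>.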

'''Possible Limitations'''

Line 1,554:

3) A constant c where <math>f(x)\leq c\cdot g(x)</math><br/>

4) A uniform draw<br/>

==== Interpretation of 'C' ====

Line 1,563:

In order to ensure the algorithm is as efficient as possible, the 'C' value should be as close to one as possible, such that <math>\tfrac{1}{c}</math> approaches 1 => 100% acceptance rate.

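The following code samples 1000 values from f(x) = 2x (the earlier example) with g = U(0,1) and c = 2, and counts the total number of trials:

<pre style="font-size:16px">
>>close all
>>clear all
>>ii=1;        % index of the next accepted sample
>>j=0;         % total number of proposals (trials)
>>while ii<=1000
    y=rand;    % proposal Y ~ U(0,1), i.e. g(x)=1
    u=rand;    % uniform draw for the accept/reject test
    j=j+1;
    if u<=y    % accept when u <= f(y)/(c*g(y)) = 2y/2 = y
        x(ii)=y;
        ii=ii+1;
    end
  end
>>j/1000       % average number of trials per accepted value, close to c = 2
</pre>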

== Class 5 - Tuesday, May 21 ==

Line 1,602:

>>hist(x,30) % 30 is the number of bars

</pre>

Calculation:

<math>u_{1} \leq \sqrt{1-(2u-1)^2}</math> <br>

<math>(u_{1})^2 \leq 1-(2u-1)^2</math> <br>

<math>(u_{1})^2 -1 \leq -(2u-1)^2</math> <br>

<math>1-(u_{1})^2 \geq (2u-1)^2</math> <br>
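The inequality above is an acceptance test with proposal <math>y = 2u-1 \sim U(-1,1)</math>. As that test suggests, we assume the target is the semicircle density <math>f(x) = \frac{2}{\pi}\sqrt{1-x^2}</math> on [-1,1]. With g = 1/2 on [-1,1] this gives c = 4/π and an acceptance rate of π/4. The target is inferred here, since the example statement itself is not shown in this excerpt. A sketch using the squared form (no square root needed):

```python
import math
import random


def semicircle_sample(rng):
    """Accept y = 2u - 1 when u1^2 <= 1 - y^2 (equivalently u1 <= sqrt(1 - y^2))."""
    trials = 0
    while True:
        trials += 1
        u, u1 = rng.random(), rng.random()
        y = 2 * u - 1                    # proposal Y ~ U(-1, 1)
        if u1 ** 2 <= 1 - y ** 2:        # squared form of the acceptance test
            return y, trials


rng = random.Random(7)
out = [semicircle_sample(rng) for _ in range(50_000)]
xs = [y for y, _ in out]
mean = sum(xs) / len(xs)
var = sum((x - mean) ** 2 for x in xs) / len(xs)
rate = len(out) / sum(t for _, t in out)
print(round(mean, 3), round(var, 3), round(rate, 3))   # near 0, 0.25 and pi/4
```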

MATLAB tips: hist(x,y) plots a histogram of variable x, where y is the number of bars in the graph.

Line 1,688:

>>close all

>>clear all

>>p=[.1 .3 .6]; % vector holding the probabilities p(1), p(2), p(3)

>>ii=1;

>>while ii <= 1000

y=unidrnd(3); % proposal: draws y uniformly from {1,2,3}

u=rand;

if u<= p(y)/0.6 % accept with probability p(y)/(c*g(y)), where c*g(y) = 1.8*(1/3) = 0.6

x(ii)=y;

ii=ii+1; % advance only when y is accepted

end

end
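The same discrete acceptance-rejection idea in Python, as an illustrative translation of the MATLAB loop. With a uniform proposal on {1, ..., k}, <math>c \cdot g(y) = \max_y p(y)</math>, which is where the 0.6 in the MATLAB code comes from. <code>random.Random.randint(1, k)</code> plays the role of <code>unidrnd(k)</code>. The function name is hypothetical.

```python
import random


def discrete_accept_reject(p, rng):
    """Sample from pmf p on {1, ..., len(p)} using a discrete-uniform proposal.

    c = max p(y) / (1/k), so c * g(y) = max(p) and the test is u <= p(y)/max(p).
    """
    k = len(p)
    p_max = max(p)                        # c * g(y), the same for every y
    while True:
        y = rng.randint(1, k)             # like unidrnd(k): uniform on {1, ..., k}
        if rng.random() <= p[y - 1] / p_max:
            return y


rng = random.Random(3)
p = [0.1, 0.3, 0.6]
draws = [discrete_accept_reject(p, rng) for _ in range(60_000)]
freqs = [draws.count(v) / len(draws) for v in (1, 2, 3)]
print(freqs)   # close to [0.1, 0.3, 0.6]
```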

| Line 1,633: | Line 1,703: | ||

* '''Example 3'''<br> | * '''Example 3'''<br> | ||

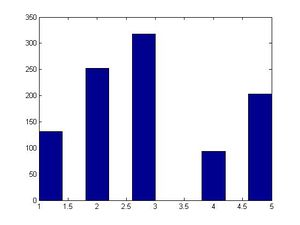

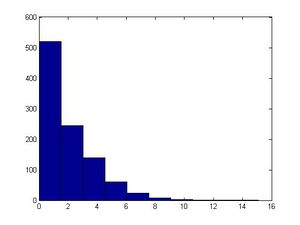

Suppose <math>\begin{align}p_{x} = e^{-3}3^{x}/x! , x\geq 0\end{align}</math> (Poisson distribution) | |||

'''First:''' Try the first few <math>\begin{align}p_{x}'s\end{align}</math>: 0.0498, 0.149, 0.224, 0.224, 0.168, 0.101, 0.0504, 0.0216, 0.0081, 0.0027 for <math>\begin{align} x = 0,1,2,3,4,5,6,7,8,9 \end{align}</math><br> | |||

3 | |||

Note: In this case, f(x)/g(x) is extremely difficult to differentiate so we were required to test points. If the function is | '''Proposed distribution:''' Use the geometric distribution for <math>\begin{align}g(x)\end{align}</math>;<br> | ||

<math>\begin{align}g(x)=p(1-p)^{x}\end{align}</math>, choose <math>\begin{align}p=0.25\end{align}</math><br> | |||

Look at <math>\begin{align}p_{x}/g(x)\end{align}</math> for the first few numbers: 0.199 0.797 1.59 2.12 2.12 1.70 1.13 0.647 0.324 0.144 for <math>\begin{align} x = 0,1,2,3,4,5,6,7,8,9 \end{align}</math><br> | |||

We want <math>\begin{align}c=max(p_{x}/g(x))\end{align}</math> which is approximately 2.12<br> | |||

'''The general procedures to generate <math>\begin{align}p(x)\end{align}</math> is as follows:''' | |||

1. Generate <math>\begin{align}U_{1} \sim~ U(0,1); U_{2} \sim~ U(0,1)\end{align}</math><br> | |||

2. <math>\begin{align}j = \lfloor \frac{ln(U_{1})}{ln(.75)} \rfloor+1;\end{align}</math><br> | |||

3. if <math>U_{2} < \frac{p_{j}}{cg(j)}</math>, set <math>\begin{align}X = x_{j}\end{align}</math>, else go to step 1. | |||

Note: In this case, <math>\begin{align}f(x)/g(x)\end{align}</math> is extremely difficult to differentiate so we were required to test points. If the function is very easy to differentiate, we can calculate the max as if it were a continuous function then check the two surrounding points for which is the highest discrete value. | |||

* Source: http://www.math.wsu.edu/faculty/genz/416/lect/l04-46.pdf* | |||
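A Python sketch of this procedure, assuming the geometric proposal <math>g(x)=0.25 \cdot 0.75^x</math> on <math>x \geq 0</math> as above. The constant c is computed by scanning the ratio over x = 0..49. That is enough because the ratio <math>p_{x+1}g(x)/(p_x g(x+1)) = 4/(x+1)</math> is below 1 for x > 3, so the maximum (about 2.124) is at x = 3 and x = 4. The sample mean should be near the Poisson mean 3. Names are hypothetical.

```python
import math
import random

LAM, P = 3.0, 0.25          # Poisson mean and geometric parameter from the example


def poisson_pmf(x):
    return math.exp(-LAM) * LAM ** x / math.factorial(x)


def geom_pmf(x):
    return P * (1 - P) ** x                              # g(x) = p(1-p)^x, x = 0, 1, 2, ...


C = max(poisson_pmf(x) / geom_pmf(x) for x in range(50))   # about 2.124


def sample_poisson(rng):
    while True:
        u1 = 1.0 - rng.random()                          # in (0, 1], so log is defined
        j = math.floor(math.log(u1) / math.log(1 - P))   # geometric draw on {0, 1, ...}
        if rng.random() <= poisson_pmf(j) / (C * geom_pmf(j)):
            return j


rng = random.Random(11)
xs = [sample_poisson(rng) for _ in range(50_000)]
print(round(C, 3), sum(xs) / len(xs))                    # about 2.124 and near 3
```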

*'''Example 4''' (Hypergeometric & Binomial)<br>

Line 1,805:

The CDF of the Gamma distribution <math>Gamma(t,\lambda)</math> (t denotes the shape and <math>\lambda</math> the rate) is: <br>

<math> F(x) = \int_0^{x} \frac{\lambda^{t} y^{t-1} e^{-\lambda y}}{(t-1)!} \mathrm{d}y, \; \forall x \in (0,+\infty)</math>, where <math>t \in \N^+ \text{ and } \lambda \in (0,+\infty)</math>.<br>

Note that, except in special cases such as <math>t=1</math> (the exponential distribution), this CDF cannot be inverted in closed form, so the inverse transform method is not practical here.

The gamma distribution is often used to model the waiting time until a certain number of events has occurred. For integer t, it can also be expressed as the sum of t independent and identically distributed exponential random variables. This distribution has two parameters: the number of exponential terms t, and the rate parameter <math>\lambda</math>. In this distribution there is the Gamma function <math>\Gamma</math>, which has some very useful properties. "Source: STAT 340 Spring 2010 Course Notes" <br/>
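The sum-of-exponentials representation gives a simple sampler for integer t. Each exponential is drawn by the inverse transform method, <math>X = -\ln(U)/\lambda</math>, and t of them are added. The sketch uses t = 3, λ = 2 as illustrative values. The sample mean and variance should be near <math>t/\lambda = 1.5</math> and <math>t/\lambda^2 = 0.75</math>. Names are hypothetical.

```python
import math
import random


def sample_exponential(lam, rng):
    """Inverse transform: X = -ln(U)/lam has the Exp(lam) distribution."""
    return -math.log(1.0 - rng.random()) / lam      # 1 - U lies in (0, 1]


def sample_gamma(t, lam, rng):
    """Gamma(t, lam) with integer shape t as a sum of t i.i.d. Exp(lam) draws."""
    return sum(sample_exponential(lam, rng) for _ in range(t))


rng = random.Random(5)
xs = [sample_gamma(3, 2.0, rng) for _ in range(100_000)]
mean = sum(xs) / len(xs)
var = sum((x - mean) ** 2 for x in xs) / len(xs)
print(round(mean, 3), round(var, 3))   # near t/lam = 1.5 and t/lam^2 = 0.75
```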

Line 1,919:

:<math>f(x) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} x^2}</math>

*Warning: the general normal distribution is:

<table>

<tr>

Line 1,971:

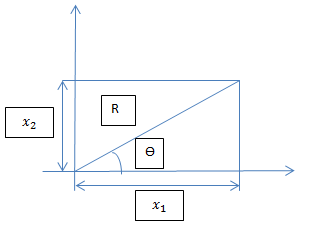

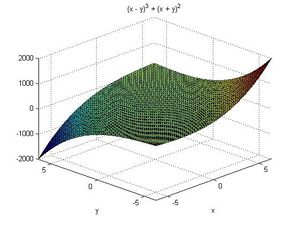

Let <math> \theta </math> and R denote the Polar coordinate of the vector (X, Y) | Let <math> \theta </math> and R denote the Polar coordinate of the vector (X, Y) | ||

where <math> X = R \cdot \sin\theta </math> and <math> Y = R \cdot \cos \theta </math> | |||

[[File:rtheta.jpg]] | [[File:rtheta.jpg]] | ||

| Line 1,905: | Line 1,988: | ||

We know that | We know that | ||

<math> | <math>R^{2}= X^{2}+Y^{2}</math> and <math> \tan(\theta) = \frac{y}{x} </math> where X and Y are two independent standard normal | ||

:<math>f(x) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} x^2}</math> | :<math>f(x) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} x^2}</math> | ||

:<math>f(y) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} y^2}</math> | :<math>f(y) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} y^2}</math> | ||

:<math>f(x,y) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} x^2} \cdot \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} y^2}=\frac{1}{2\pi}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} (x^2+y^2)} </math><br /> - since X and Y are independent, their joint density is the product of the two marginal densities | :<math>f(x,y) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} x^2} \cdot \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} y^2}=\frac{1}{2\pi}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} (x^2+y^2)} </math><br /> - since X and Y are independent, their joint density is the product of the two marginal densities. Using a one-to-one transformation, it can also be shown that the joint distribution of R and θ is as follows:<br /> | ||

It can also be shown, using a one-to-one transformation, that the joint distribution of R and θ is given by: | |||

Let <math>d=R^2</math><br /> | '''Let <math>d=R^2</math>'''<br /> | ||

<math>x= \sqrt {d}\cos \theta </math> | <math>x= \sqrt {d}\cos \theta </math> | ||

<math>y= \sqrt {d}\sin \theta </math> | <math>y= \sqrt {d}\sin \theta </math> | ||

then | then | ||

<math>\left| J\right| = \left| \dfrac {1} {2}d^{-\frac {1} {2}}\cos \theta d^{\frac{1}{2}}\cos \theta +\sqrt {d}\sin \theta \dfrac {1} {2}d^{-\frac{1}{2}}\sin \theta \right| = \dfrac {1} {2}</math> | <math>\left| J\right| = \left| \dfrac {1} {2}d^{-\frac {1} {2}}\cos \theta d^{\frac{1}{2}}\cos \theta +\sqrt {d}\sin \theta \dfrac {1} {2}d^{-\frac{1}{2}}\sin \theta \right| = \dfrac {1} {2}</math> | ||

It can be shown that the | It can be shown that the joint density of <math> d = R^2</math> and <math> \theta </math> is: | ||

:<math>f(d,\theta) = \frac{1}{2}e^{-\frac{d}{2}} \cdot \frac{1}{2\pi},\quad d = R^2,\quad \text{for } 0\leq d<\infty \text{ and } 0\leq \theta\leq 2\pi </math> | :<math>f(d,\theta) = \frac{1}{2}e^{-\frac{d}{2}} \cdot \frac{1}{2\pi},\quad d = R^2,\quad \text{for } 0\leq d<\infty \text{ and } 0\leq \theta\leq 2\pi </math> | ||

| Line 1,923: | Line 2,007: | ||

Note that <math>f(d,\theta)</math> factors into two density functions, an exponential density in d and a uniform density in <math>\theta</math>, so d and <math>\theta</math> are independent: | Note that <math>f(d,\theta)</math> factors into two density functions, an exponential density in d and a uniform density in <math>\theta</math>, so d and <math>\theta</math> are independent: | ||

<math> \begin{matrix} \Rightarrow d \sim~ Exp(1/2), \theta \sim~ Unif[0,2\pi] \end{matrix} </math> | <math> \begin{matrix} \Rightarrow d \sim~ Exp(1/2), \theta \sim~ Unif[0,2\pi] \end{matrix} </math> | ||

::* <math> \begin{align} R^2 = x^2 + y^2 \end{align} </math> | ::* <math> \begin{align} R^2 = d = x^2 + y^2 \end{align} </math> | ||

::* <math> \tan(\theta) = \frac{y}{x} </math> | ::* <math> \tan(\theta) = \frac{y}{x} </math> | ||

<math>\begin{align} f(d) = Exp(1/2)=\frac{1}{2}e^{-\frac{d}{2}}\ \end{align}</math> | <math>\begin{align} f(d) = Exp(1/2)=\frac{1}{2}e^{-\frac{d}{2}}\ \end{align}</math> | ||

| Line 1,929: | Line 2,013: | ||

<math>\begin{align} f(\theta) =\frac{1}{2\pi}\ \end{align}</math> | <math>\begin{align} f(\theta) =\frac{1}{2\pi}\ \end{align}</math> | ||

<br> | <br> | ||

To sample from the normal distribution, we can generate a pair of independent standard normal random variables X and Y by:<br /> | To sample from the normal distribution, we can generate a pair of independent standard normal random variables X and Y by:<br /> | ||

1) Generating their polar coordinates<br /> | 1) Generating their polar coordinates<br /> | ||

2) Transforming back to rectangular (Cartesian) coordinates.<br /> | 2) Transforming back to rectangular (Cartesian) coordinates.<br /> | ||

Step 1: Generate <math>u_{1}</math> ~<math>Unif(0,1)</math> | '''Alternative Method of Generating Standard Normal Random Variables'''<br /> | ||

Step 2: Generate <math>Y_{1}</math> ~<math>Exp(1)</math>,<math>Y_{2}</math>~<math>Exp(1)</math> | |||

Step 3: If <math>Y_{2} \geq(Y_{1}-1)^2/2</math>, set <math>V=Y_{1}</math>; otherwise, go to step 1 | Step 1: Generate <math>u_{1}</math> ~<math>Unif(0,1)</math><br /> | ||

Step 4: If <math>u_{1} \leq 1/2</math>, then <math>X=-V</math>; otherwise, <math>X=V</math> | Step 2: Generate independent <math>Y_{1}</math> ~<math>Exp(1)</math>, <math>Y_{2}</math>~<math>Exp(1)</math><br /> | ||

Step 3: If <math>Y_{2} \geq(Y_{1}-1)^2/2</math>, set <math>V=Y_{1}</math>; otherwise, go to step 1<br /> | |||

Step 4: If <math>u_{1} \leq 1/2</math>, then <math>X=-V</math>; otherwise, <math>X=V</math><br /> | |||
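The four steps above are an acceptance-rejection scheme. It samples |X| from its half-normal density using an Exp(1) proposal, then attaches a random sign. The Python sketch below is a hedged translation; the function names and the seed are illustrative assumptions. Acceptance works because, given <math>Y_1 = y</math>, <math>P(Y_2 \geq (y-1)^2/2) = e^{-(y-1)^2/2}</math>, which is exactly the ratio of the half-normal density to a scaled Exp(1) density.

```python
import math
import random

def exp1(rng):
    """Exp(1) draw by inverse transform; 1 - rng.random() lies in (0, 1]."""
    return -math.log(1.0 - rng.random())

def sample_std_normal_ar(rng):
    """Standard normal via the exponential acceptance-rejection steps."""
    while True:
        u1 = rng.random()                      # Step 1: uniform used for the sign
        y1, y2 = exp1(rng), exp1(rng)          # Step 2: two independent Exp(1) draws
        if y2 >= (y1 - 1.0) ** 2 / 2.0:        # Step 3: accept V = Y1
            v = y1
            return -v if u1 <= 0.5 else v      # Step 4: attach a random sign
        # otherwise reject and go back to Step 1

rng = random.Random(2013)
zs = [sample_std_normal_ar(rng) for _ in range(50_000)]
mean = sum(zs) / len(zs)
var = sum((z - mean) ** 2 for z in zs) / len(zs)
print(f"mean {mean:.3f}, variance {var:.3f}")  # should be near 0 and 1
```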

===Expectation of a Standard Normal Distribution=== | |||

The expectation of a standard normal distribution is 0<br /> | |||


: | '''Proof:''' <br /> | ||

:<math>\operatorname{E}[X]= \;\int_{-\infty}^{\infty} x \frac{1}{\sqrt{2\pi}} e^{-x^2/2} \, dx.</math> | :<math>\operatorname{E}[X]= \;\int_{-\infty}^{\infty} x \frac{1}{\sqrt{2\pi}} e^{-x^2/2} \, dx.</math> | ||

| Line 1,951: | Line 2,040: | ||

:<math>= - \left[\phi(x)\right]_{-\infty}^{\infty}</math> | :<math>= - \left[\phi(x)\right]_{-\infty}^{\infty}</math> | ||

:<math>= 0</math><br /> | :<math>= 0</math><br /> | ||

'''Note:''' more intuitively, the integrand <math>x\phi(x)</math> is an odd function (g(-x) = -g(x)), because x is odd and the standard normal density <math>\phi(x)</math> is even (symmetric about 0). Since the integral converges, integrating an odd function over the symmetric support from negative infinity to infinity returns 0.<br /> | |||

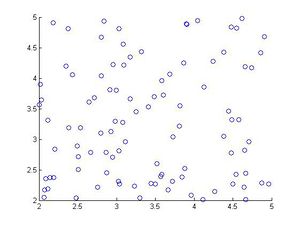

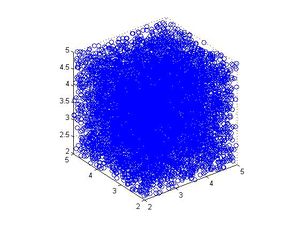

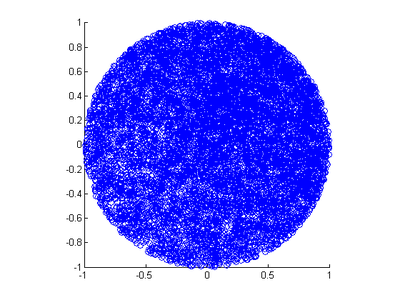

'''Procedure (Box-Muller Transformation Method):''' <br /> | |||

Pseudorandom approaches to generating normal random variables used to be limited. Inefficient methods such as inverting the Gaussian CDF, summing uniform random variables, and acceptance-rejection were used. In 1958, George Box and Mervin Muller of Princeton University proposed a new method. The technique was easy to use and as accurate as inverse transform sampling, and it grew more valuable as computers became more powerful. <br> | Pseudorandom approaches to generating normal random variables used to be limited. Inefficient methods such as inverting the Gaussian CDF, summing uniform random variables, and acceptance-rejection were used. In 1958, George Box and Mervin Muller of Princeton University proposed a new method. The technique was easy to use and as accurate as inverse transform sampling, and it grew more valuable as computers became more powerful. <br> | ||

The Box-Muller method takes a sample from a bivariate independent standard normal distribution, each component of which is thus a univariate standard normal. The algorithm is based on the following two properties of the bivariate independent standard normal distribution: <br> | The Box-Muller method takes a sample from a bivariate independent standard normal distribution, each component of which is thus a univariate standard normal. The algorithm is based on the following two properties of the bivariate independent standard normal distribution: <br> | ||

If <math>Z = (Z_{1}, Z_{2})</math> has this distribution, then <br> | If <math>Z = (Z_{1}, Z_{2})</math> has this distribution, then <br> | ||

1.<math>R^2=Z_{1}^2+Z_{2}^2</math> is exponentially distributed with mean 2, i.e. <br> | 1.<math>R^2=Z_{1}^2+Z_{2}^2</math> is exponentially distributed with mean 2, i.e. <br> | ||

<math>P(R^2 \leq x) = 1-e^{-x/2}</math>. <br> | <math>P(R^2 \leq x) = 1-e^{-x/2}</math>. <br> | ||

2. Given <math>R^2</math>, the point <math>(Z_{1},Z_{2})</math> is uniformly distributed on the circle of radius R centered at the origin. <br> | 2. Given <math>R^2</math>, the point <math>(Z_{1},Z_{2})</math> is uniformly distributed on the circle of radius R centered at the origin. <br> | ||

We can use these properties to build the algorithm: <br> | We can use these properties to build the algorithm: <br> | ||

1) Generate random numbers <math> \begin{align} U_1,U_2 \sim~ \mathrm{Unif}(0, 1) \end{align} </math> <br /> | 1) Generate random numbers <math> \begin{align} U_1,U_2 \sim~ \mathrm{Unif}(0, 1) \end{align} </math> <br /> | ||

| Line 1,979: | Line 2,073: | ||

Note: In steps 2 and 3, we are using a similar technique as that used in the inverse transform method. <br /> | '''Note:''' In steps 2 and 3, we are using a similar technique as that used in the inverse transform method. <br /> | ||

The Box-Muller Transformation Method generates a pair of independent standard normal random variables, X and Y (using the polar-coordinate transformation). <br /> | The Box-Muller Transformation Method generates a pair of independent standard normal random variables, X and Y (using the polar-coordinate transformation). <br /> | ||

To generate more than two independent standard normal random numbers, run the Box-Muller method several times.<br/> | To generate more than two independent standard normal random numbers, run the Box-Muller method several times.<br/> | ||
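The Box-Muller steps can be sketched in Python as follows. The sketch assumes the steps set <math>d = -2\ln U_1</math> (so d ~ Exp(1/2)) and <math>\theta = 2\pi U_2</math>, matching the derivation above. The function names and the seed are illustrative assumptions. The second function shows the "run it several times" idea: it keeps producing pairs until n values are collected.

```python
import math
import random

def box_muller(rng):
    """Return one pair (X, Y) of independent N(0, 1) draws via Box-Muller."""
    u1 = 1.0 - rng.random()          # in (0, 1], so log(u1) is finite
    u2 = rng.random()
    d = -2.0 * math.log(u1)          # d = R^2 ~ Exp(1/2), i.e. mean 2
    theta = 2.0 * math.pi * u2       # theta ~ Unif[0, 2*pi)
    r = math.sqrt(d)
    return r * math.cos(theta), r * math.sin(theta)

def standard_normals(n, rng):
    """n standard normals by running Box-Muller ceil(n/2) times."""
    out = []
    while len(out) < n:
        out.extend(box_muller(rng))
    return out[:n]

rng = random.Random(1958)
zs = standard_normals(100_001, rng)
mean = sum(zs) / len(zs)
var = sum((z - mean) ** 2 for z in zs) / len(zs)
print(len(zs), round(mean, 3), round(var, 3))  # mean near 0, variance near 1
```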

For example: <br /> | For example: <br /> | ||

| Line 1,987: | Line 2,082: | ||

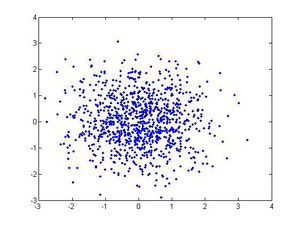

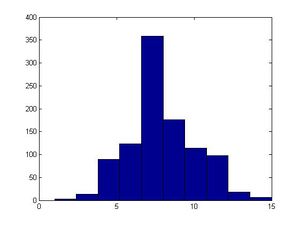

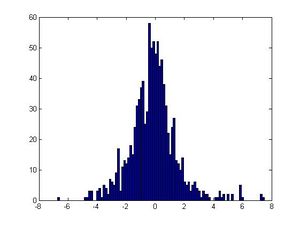

'''Matlab Code'''<br /> | |||

<pre style="font-size:16px"> | <pre style="font-size:16px"> | ||

>>close all | >>close all | ||

| Line 2,002: | Line 2,098: | ||

>>hist(y) | >>hist(y) | ||

</pre> | </pre> | ||

<br> | |||

'''Remember''': for the above code to work, the "." must come right after d (the element-wise operator), so that each element of d is raised to the power 0.5.<br /> Otherwise Matlab will try to raise the entire matrix to the power 0.5.<br> | |||

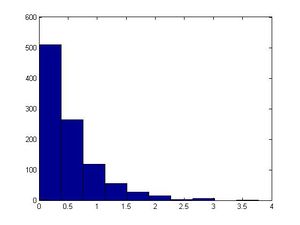

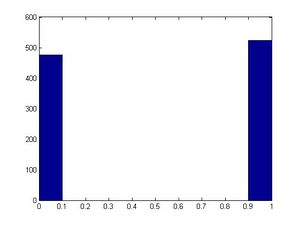

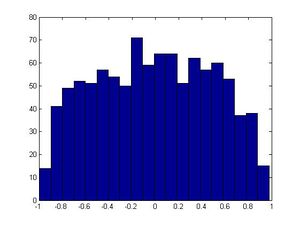

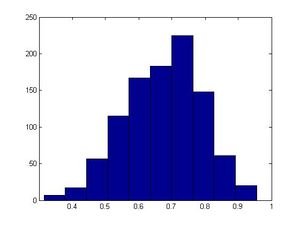

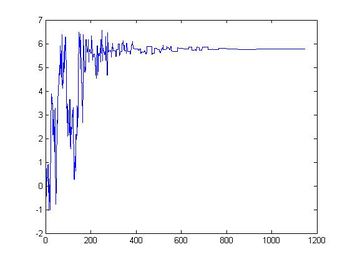

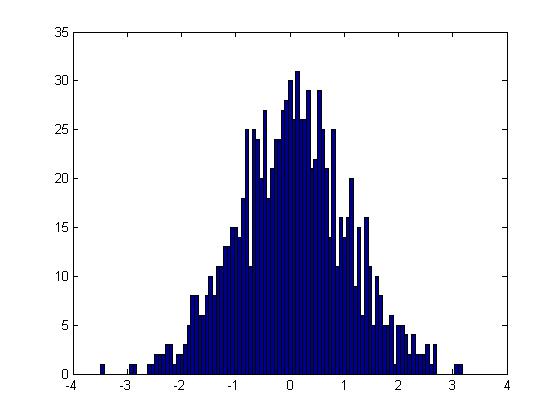

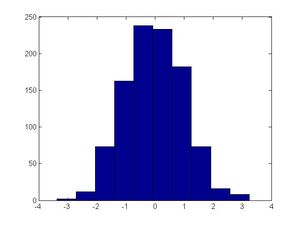

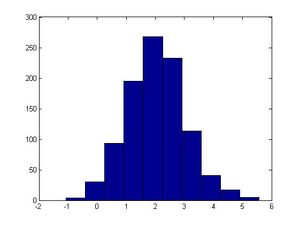

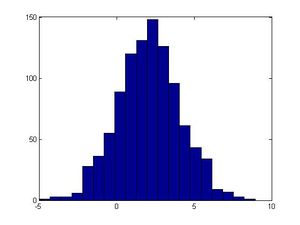

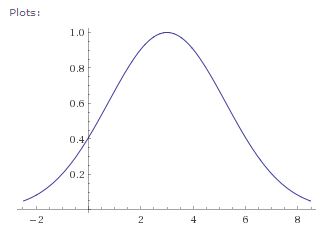

'''Note:'''<br>The first graph is hist(tet), and it shows a uniform distribution.<br>The second one is hist(d), and it shows an exponential distribution.<br>The third one is hist(x), and it shows a normal distribution.<br>The last one is hist(y), and it also shows a normal distribution. | |||


Attention: there is a dot between sqrt(d) and "*" (the element-wise product .*) because d and tet are vectors. <br> | Attention: there is a dot between sqrt(d) and "*" (the element-wise product .*) because d and tet are vectors. <br> | ||

| Line 2,022: | Line 2,118: | ||

>>hist(x) | >>hist(x) | ||

>>hist(x+2) | >>hist(x+2) | ||

>>hist(x*2+2) | >>hist(x*2+2) | ||

</pre> | </pre> | ||

<br> | |||

Note: randn is random sample from a standard normal distribution.<br /> | '''Note:'''<br> | ||

1. randn draws random samples from the standard normal distribution.<br /> | |||

2. hist(x+2) will be centered at 2 instead of at 0. <br /> | |||

3. hist(x*2+2) is also centered at 2. The mean stays the same, but the variance of x*2+2 is four times (2^2) the variance of x.<br /> | |||

[[File:Normal_x.jpg|300x300px]][[File:Normal_x+2.jpg|300x300px]][[File:Normal(2x+2).jpg|300px]] | [[File:Normal_x.jpg|300x300px]][[File:Normal_x+2.jpg|300x300px]][[File:Normal(2x+2).jpg|300px]] | ||

<br /> | <br /> | ||
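The shift and scale effects described in the notes can be checked numerically with a small Python sketch. Here `random.gauss` stands in for Matlab's randn, and the seed and sample size are illustrative assumptions. Adding 2 moves the mean to 2 and leaves the variance unchanged. Computing x*2+2 also moves the mean to 2 but multiplies the variance by exactly 4.

```python
import random
import statistics

rng = random.Random(7)
x = [rng.gauss(0.0, 1.0) for _ in range(20_000)]   # plays the role of randn
shifted = [xi + 2 for xi in x]                      # hist(x+2)
scaled = [xi * 2 + 2 for xi in x]                   # hist(x*2+2)

for name, data in [("x", x), ("x+2", shifted), ("x*2+2", scaled)]:
    # sample mean and (population) variance of each data set
    print(name, round(statistics.fmean(data), 2), round(statistics.pvariance(data), 2))
```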

<b>Comment</b>: Box-Muller transformations are not computationally efficient. The reason for this is the need to compute sine and cosine functions. A way to get around this time-consuming difficulty is by an indirect computation of the sine and cosine of a random angle (as opposed to a direct computation which generates U and then computes the sine and cosine of 2πU. <br /> | <b>Comment</b>:<br /> | ||

Box-Muller transformations are not computationally efficient, because they require computing sine and cosine functions. One way around this time-consuming step is to compute the sine and cosine of a random angle indirectly (as opposed to directly, by generating U and then computing the sine and cosine of 2πU). <br /> | |||
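One common way to compute the sine and cosine of a random angle indirectly is the Marsaglia polar method. Identifying the comment above with that method is our assumption. The method samples a point uniformly in the unit disk by rejection, so about π/4 of candidate points are kept. It then uses the normalised point as (cos θ, sin θ) and the squared radius as the uniform U<sub>1</sub> of Box-Muller. The Python sketch below uses an illustrative function name and seed.

```python
import math
import random

def polar_pair(rng):
    """Two independent N(0, 1) draws by the Marsaglia polar method (no sin/cos calls)."""
    while True:
        v1 = 2.0 * rng.random() - 1.0   # uniform on [-1, 1)
        v2 = 2.0 * rng.random() - 1.0
        s = v1 * v1 + v2 * v2
        if 0.0 < s < 1.0:               # keep only points strictly inside the unit disk
            break
    # Given acceptance, s ~ Unif(0, 1) plays the role of U1 in Box-Muller, and
    # (v1, v2) / sqrt(s) is a uniformly random direction (cos theta, sin theta).
    factor = math.sqrt(-2.0 * math.log(s) / s)
    return v1 * factor, v2 * factor

rng = random.Random(1964)
zs = []
for _ in range(50_000):
    zs.extend(polar_pair(rng))
mean = sum(zs) / len(zs)
var = sum((z - mean) ** 2 for z in zs) / len(zs)
print(round(mean, 3), round(var, 3))  # should be near 0 and 1
```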

'''Alternative Methods of Generating Normal Random Variables'''<br /> | '''Alternative Methods of Generating Normal Random Variables'''<br /> | ||

1. Even though we cannot use the inverse transform method directly (the normal CDF has no closed-form inverse), we can approximate this inverse using other functions. One such method is '''rational approximation'''.<br /> | 1. Even though we cannot use the inverse transform method directly (the normal CDF has no closed-form inverse), we can approximate this inverse using other functions. One such method is '''rational approximation'''.<br /> | ||

2. '''Central limit theorem''': if we sum 12 independent U(0,1) random variables and subtract 6 (which is 12·E(U<sub>i</sub>)), we approximately get a standard normal random variable; the variance of the sum is 12·Var(U<sub>i</sub>) = 12·(1/12) = 1.<br /> | 2. '''Central limit theorem''': if we sum 12 independent U(0,1) random variables and subtract 6 (which is 12·E(U<sub>i</sub>)), we approximately get a standard normal random variable; the variance of the sum is 12·Var(U<sub>i</sub>) = 12·(1/12) = 1.<br /> | ||
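The central-limit-theorem approximation is short enough to sketch directly in Python. The function name and seed are illustrative assumptions. One consequence worth seeing is that every value lies in [-6, 6], so the approximation cannot produce the extreme tails of a true normal.

```python
import random

def approx_std_normal(rng):
    """Sum of 12 Unif(0,1) minus 6: mean 12*(1/2) - 6 = 0, variance 12*(1/12) = 1."""
    return sum(rng.random() for _ in range(12)) - 6.0

rng = random.Random(12)
zs = [approx_std_normal(rng) for _ in range(50_000)]
mean = sum(zs) / len(zs)
var = sum((z - mean) ** 2 for z in zs) / len(zs)
print(round(mean, 3), round(var, 3), max(abs(z) for z in zs))  # max |z| never exceeds 6
```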

| Line 2,047: | Line 2,148: | ||

=== Proof of Box Muller Transformation === | === Proof of Box Muller Transformation === | ||

Definition: | '''Definition:'''<br /> | ||

A transformation which transforms from a '''two-dimensional continuous uniform''' distribution to a '''two-dimensional bivariate normal''' distribution (or complex normal distribution). | A transformation which transforms from a '''two-dimensional continuous uniform''' distribution to a '''two-dimensional bivariate normal''' distribution (or complex normal distribution). | ||

| Line 2,067: | Line 2,168: | ||

u<sub>2</sub> = g<sub>2</sub> ^-1(x1,x2) | u<sub>2</sub> = g<sub>2</sub> ^-1(x1,x2) | ||

Inverting the above | Inverting the above transformation, we have | ||

<math>u_1 = \exp\left(-\frac{x_1^2 + x_2^2}{2}\right)</math> | <math>u_1 = \exp\left(-\frac{x_1^2 + x_2^2}{2}\right)</math> | ||

<math>u_2 = \frac{1}{2\pi}\tan^{-1}\left(\frac{x_2}{x_1}\right)</math> | <math>u_2 = \frac{1}{2\pi}\tan^{-1}\left(\frac{x_2}{x_1}\right)</math> | ||
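The inverse formulas can be checked with a round trip in Python. The sketch maps (u<sub>1</sub>, u<sub>2</sub>) to (x<sub>1</sub>, x<sub>2</sub>) with the Box-Muller transformation and then applies the inverse above. One design choice differs from the formula as written: `math.atan2` is used instead of a plain tan<sup>-1</sup>(x<sub>2</sub>/x<sub>1</sub>), because the plain arctangent loses track of the quadrant. The angle is then reduced to [0, 1). The function names are illustrative.

```python
import math

def forward(u1, u2):
    """Box-Muller map (u1, u2) -> (x1, x2)."""
    r = math.sqrt(-2.0 * math.log(u1))
    theta = 2.0 * math.pi * u2
    return r * math.cos(theta), r * math.sin(theta)

def inverse(x1, x2):
    """Inverse map: u1 = exp(-(x1^2 + x2^2)/2), u2 = angle / (2*pi) in [0, 1)."""
    u1 = math.exp(-(x1 ** 2 + x2 ** 2) / 2.0)
    u2 = (math.atan2(x2, x1) / (2.0 * math.pi)) % 1.0   # atan2 keeps the quadrant
    return u1, u2

print(inverse(*forward(0.3, 0.8)))  # approximately (0.3, 0.8)
```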

| Line 2,331: | Line 2,432: | ||

Procedure: | Procedure: | ||

1) Generate U~Unif | 1) Generate U~Unif (0, 1)<br> | ||

2) Set <math>x=F^{-1}(u)</math><br> | 2) Set <math>x=F^{-1}(u)</math><br> | ||

3) X~f(x)<br> | 3) X~f(x)<br> | ||

'''Remark'''<br> | '''Remark'''<br> | ||

1) The preceding can be written algorithmically as | 1) The preceding can be written algorithmically for discrete random variables as <br> | ||

Generate a random number U | Generate a random number U ~ U(0,1] <br> | ||

If U<<sub> | If U < p<sub>0</sub> set X = x<sub>0</sub> and stop <br> | ||

If U<<sub> | If U < p<sub>0</sub> + p<sub>1</sub> set X = x<sub>1</sub> and stop <br> | ||

... | ... <br> | ||

2) If the <sub> | 2) If the x<sub>i</sub>, i>=0, are ordered so that x<sub>0</sub> < x<sub>1</sub> < x<sub>2</sub> <... and if we let F denote the distribution function of X, then X will equal x<sub>j</sub> if F(x<sub>j-1</sub>) <= U < F(x<sub>j</sub>) | ||
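The discrete inverse transform algorithm in the remarks can be sketched in Python as a scan over cumulative probabilities. It returns x<sub>j</sub> when F(x<sub>j-1</sub>) ≤ U < F(x<sub>j</sub>). The three-point pmf, the seed and the function name are hypothetical examples, not taken from the course.

```python
import random

def discrete_inverse_transform(values, probs, rng):
    """Return x_j such that F(x_{j-1}) <= U < F(x_j), with values sorted increasingly."""
    u = rng.random()
    cumulative = 0.0
    for x, p in zip(values, probs):
        cumulative += p          # cumulative = F(x) after adding p
        if u < cumulative:
            return x
    return values[-1]            # only reached if the probabilities sum to slightly under 1

rng = random.Random(340)
values, probs = [1, 2, 3], [0.2, 0.5, 0.3]   # hypothetical example pmf
draws = [discrete_inverse_transform(values, probs, rng) for _ in range(50_000)]
freqs = [draws.count(v) / len(draws) for v in values]
print(freqs)  # should be close to [0.2, 0.5, 0.3]
```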

'''Example 1'''<br> | '''Example 1'''<br> | ||

| Line 2,368: | Line 2,469: | ||

Step1: Generate U~ U(0, 1)<br> | Step1: Generate U~ U(0, 1)<br> | ||

Step2: set <math>y=\, {-\frac {1}{{\lambda_1 +\lambda_2}}} ln(u)</math><br> | |||

Step2: set <math>y=\, {-\frac {1}{{\lambda_1 +\lambda_2}}} ln(1-u)</math><br> | |||

or set <math>y=\, {-\frac {1} {{\lambda_1 +\lambda_2}}} ln(u)</math><br> | |||

Since U ~ Unif(0,1), 1 − U is also Unif(0,1), so using ln(u) instead of ln(1 − u) gives the same distribution. | |||

* '''Matlab Code'''<br /> | |||

<pre style="font-size:16px"> | |||

>> lambda1 = 1; | |||

>> lambda2 = 2; | |||

>> u = rand; | |||

>> y = -log(u)/(lambda1 + lambda2) | |||

</pre> | |||

If we generalize this example from two independent particles to n independent particles we will have:<br> | If we generalize this example from two independent particles to n independent particles we will have:<br> | ||

| Line 2,524: | Line 2,638: | ||

=== Example of Decomposition Method === | === Example of Decomposition Method === | ||

<math>F_x(x) = \frac {1}{3} x+\frac {1}{3} x^2+\frac {1}{3} x^3, 0\leq x\leq 1</math> | |||

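Let <math>U =F_x(x) = \frac {1}{3} x+\frac {1}{3} x^2+\frac {1}{3} x^3</math>. Solving this for x directly means solving a cubic equation, so instead we decompose <math>F_x</math> into a mixture of three simpler CDFs: | |||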

<math>P_1=\frac{1}{3}, F_{x1} (x)= x, P_2=\frac{1}{3},F_{x2} (x)= x^2, | |||

P_3=\frac{1}{3},F_{x3} (x)= x^3</math> | |||

'''Algorithm:''' | '''Algorithm:''' | ||

Generate U | Generate <math>\,U \sim Unif [0,1)</math> | ||

Generate V | Generate <math>\,V \sim Unif [0,1)</math> | ||

if 0 | if <math>0\leq u \leq \frac{1}{3}, x = v</math> | ||

else if u | else if <math>u \leq \frac{2}{3}, x = v^{\frac{1}{2}}</math> | ||

else x = v | else <math>x=v^{\frac{1}{3}}</math> <br> | ||
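The decomposition algorithm above can be sketched in Python. U picks one of the three components with probability 1/3 each, and V is inverted through that component's CDF. As a check, the fraction of samples at or below 0.5 should approach <math>F_x(0.5) = (0.5 + 0.25 + 0.125)/3 \approx 0.2917</math>. The function name and seed are illustrative assumptions.

```python
import random

def sample_decomposition(rng):
    """Draw from F(x) = (x + x^2 + x^3)/3 on [0, 1] by the mixture algorithm."""
    u = rng.random()   # picks the mixture component, each with probability 1/3
    v = rng.random()   # inverse-transform input for the chosen component
    if u <= 1 / 3:
        return v               # F1(x) = x     -> x = v
    elif u <= 2 / 3:
        return v ** 0.5        # F2(x) = x^2   -> x = v^(1/2)
    else:
        return v ** (1 / 3)    # F3(x) = x^3   -> x = v^(1/3)

rng = random.Random(3)
xs = [sample_decomposition(rng) for _ in range(60_000)]
empirical = sum(x <= 0.5 for x in xs) / len(xs)
print(round(empirical, 3), (0.5 + 0.25 + 0.125) / 3)  # empirical vs. exact F(0.5)
```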

| Line 2,606: | Line 2,720: | ||

For More Details, please refer to http://www.stanford.edu/class/ee364b/notes/decomposition_notes.pdf | For More Details, please refer to http://www.stanford.edu/class/ee364b/notes/decomposition_notes.pdf | ||

===Fundamental Theorem of Simulation=== | ===Fundamental Theorem of Simulation=== | ||

| Line 2,617: | Line 2,730: | ||

Invert each part of the partial CDF: each partial CDF is divided by the original CDF, and the partial range is uniformly distributed.<br /> | Invert each part of the partial CDF: each partial CDF is divided by the original CDF, and the partial range is uniformly distributed.<br /> | ||

More specific definition of the theorem can be found here.<ref>http://www.bus.emory.edu/breno/teaching/MCMC_GibbsHandouts.pdf</ref> | More specific definition of the theorem can be found here.<ref>http://www.bus.emory.edu/breno/teaching/MCMC_GibbsHandouts.pdf</ref> | ||

Matlab code: | |||

<pre style="font-size:16px"> | |||

close all | |||

clear all | |||

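R = 1;      % radius (example value; R is not defined in the original code) | |||

ii = 1; | |||

while ii < 1000      % collect 999 accepted samples | |||

    u = rand;      % y = R*(2u-1) is uniform on [-R, R] | |||

    U = rand;      % second, independent uniform | |||

    y = R*(2*u-1); | |||

    if (1-U^2) >= (2*u-1)^2      % accept if (2u-1, U) lies inside the unit half-disk | |||

        x(ii) = y; | |||

        ii = ii+1; | |||

    end | |||

end | |||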

</pre> | |||

===Question 2=== | ===Question 2=== | ||

| Line 2,659: | Line 2,787: | ||

===The Bernoulli distribution=== | ===The Bernoulli distribution=== | ||

The Bernoulli distribution is a special case of the binomial distribution, where n = 1. X ~ Bin(1, p) has the same meaning as X ~ Ber(p), where p is the probability | The Bernoulli distribution is a special case of the binomial distribution, where n = 1. X ~ Bin(1, p) has the same meaning as X ~ Ber(p), where p is the probability of success and 1-p is the probability of failure (we usually write q = 1-p). The mean of a Bernoulli(p) random variable is p and the variance is p(1-p). Bin(n, p) is the distribution of the sum of n independent Bernoulli(p) trials, each with the same success probability p, where 0<p<1. <br> | ||

For example, let X indicate whether a coin toss results in a "head", which happens with probability ''p''; then ''X~Bernoulli(p)''. <br> | For example, let X indicate whether a coin toss results in a "head", which happens with probability ''p''; then ''X~Bernoulli(p)''. <br> | ||

P(X=1)= p | |||

P(X=0)= q = 1-p | |||

Therefore, P(X=0) + P(X=1) = p + q = 1 | |||

'''Algorithm: ''' | '''Algorithm: ''' | ||

| Line 2,672: | Line 2,802: | ||

when <math>U \geq p, x=0</math><br> | when <math>U \geq p, x=0</math><br> | ||

3) Repeat as necessary | 3) Repeat as necessary | ||

* '''Matlab Code'''<br /> | |||

<pre style="font-size:16px"> | |||

>> p = 0.8 % an arbitrary probability for example | |||

>> for i = 1: 100 | |||

>> u = rand; | |||

>> if u < p | |||

>> x(i) = 1;      % success, which happens with probability p | |||

>> else | |||

>> x(i) = 0;      % failure | |||

>> end | |||

>> end | |||

>> hist(x) | |||

</pre> | |||

===The Binomial Distribution=== | ===The Binomial Distribution=== | ||

| Line 2,780: | Line 2,924: | ||

P (X > x) = (1-p)<sup>x</sup> (because the first x trials are all failures) <br/> | P (X > x) = (1-p)<sup>x</sup> (because the first x trials are all failures) <br/> | ||

NB: An advantage of this method is that nothing is rejected: every generated point is accepted, which makes the method more efficient and, in this respect, close to the inverse transform method. <br /> | |||
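A Python sketch of a geometric sampler with no rejection step follows. It assumes the method discussed here is inverse-transform sampling of the geometric distribution, with X counting the trials up to and including the first success, as P(X > x) = (1-p)<sup>x</sup> implies. Setting X = ⌊ln U / ln(1-p)⌋ + 1 gives P(X > x) = P(U ≤ (1-p)<sup>x</sup>) = (1-p)<sup>x</sup>. The function name, p = 0.25 and the seed are illustrative assumptions.

```python
import math
import random

def sample_geometric(p, rng):
    """Trials up to and including the first success, support {1, 2, ...}.

    Uses P(X > x) = (1 - p)^x: with U in (0, 1], X = floor(ln U / ln(1 - p)) + 1.
    """
    u = 1.0 - rng.random()      # in (0, 1], so log(u) is finite
    return math.floor(math.log(u) / math.log(1.0 - p)) + 1

rng = random.Random(25)
p = 0.25
xs = [sample_geometric(p, rng) for _ in range(50_000)]
print(sum(xs) / len(xs), 1 / p)  # sample mean vs. theoretical mean 1/p = 4
```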

'''Proof''' <br/> | '''Proof''' <br/> | ||

| Line 3,002: | Line 3,148: | ||

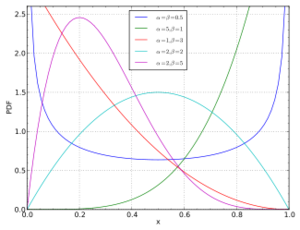

There are two positive shape parameters in this distribution defined as alpha and beta: <br> | There are two positive shape parameters in this distribution defined as alpha and beta: <br> | ||

-Both parameters greater than 0, and X within the interval [0,1]. <br> | -Both parameters are greater than 0, and X is within the interval [0,1]. <br> | ||

-<math>\alpha - 1</math> is the exponent of the random variable x in the density. <br> | -<math>\alpha - 1</math> is the exponent of the random variable x in the density. <br> | ||

-<math>\beta - 1</math> is the exponent of (1 - x); together, alpha and beta control the shape of the distribution. We use the beta distribution to model the behavior of random variables that are limited to intervals of finite length. <br> | -<math>\beta - 1</math> is the exponent of (1 - x); together, alpha and beta control the shape of the distribution. We use the beta distribution to model the behavior of random variables that are limited to intervals of finite length. <br> | ||

| Line 3,052: | Line 3,198: | ||

:<math>\displaystyle \text{f}(x) = \frac{\Gamma(\alpha+1)}{\Gamma(\alpha)\Gamma(1)}x^{\alpha-1}(1-x)^{1-1}=\alpha x^{\alpha-1}</math><br> | :<math>\displaystyle \text{f}(x) = \frac{\Gamma(\alpha+1)}{\Gamma(\alpha)\Gamma(1)}x^{\alpha-1}(1-x)^{1-1}=\alpha x^{\alpha-1}</math><br> | ||

By integrating <math>f(x)</math>, we find the CDF of X is <math>F(x) = x^{\alpha}</math>. | |||

As <math>F^{-1}(u) = u^{1/\alpha}</math>, the inverse transform method gives <math> X = U^{1/\alpha} </math> with U ~ U[0,1]. | |||
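The inverse transform for Beta(α, 1) takes one line in Python. As a check, the sample mean should approach α/(α+1). The value α = 3, the seed and the function name are illustrative assumptions.

```python
import random

def sample_beta_alpha_1(alpha, rng):
    """Beta(alpha, 1) via inverse transform: F(x) = x^alpha, so X = U^(1/alpha)."""
    return rng.random() ** (1.0 / alpha)

rng = random.Random(5)
alpha = 3.0
xs = [sample_beta_alpha_1(alpha, rng) for _ in range(50_000)]
print(round(sum(xs) / len(xs), 3), alpha / (alpha + 1))  # sample mean vs. 0.75
```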

<math> | |||

'''Algorithm''' | '''Algorithm''' | ||

| Line 3,075: | Line 3,214: | ||

</pre> | </pre> | ||

'''Case 3:'''<br /> To sample from beta in general | '''Case 3:'''<br /> To sample from beta in general, we use the property that <br /> | ||

:if <math>Y_1</math> follows gamma <math>(\alpha,1)</math><br /> | :if <math>Y_1</math> follows gamma <math>(\alpha,1)</math><br /> | ||

| Line 3,471: | Line 3,610: | ||

'''Definition:''' In probability theory, a stochastic process /stoʊˈkæstɪk/, or sometimes random process (widely used) is a collection of random variables; this is often used to represent the evolution of some random value, or system, over time. This is the probabilistic counterpart to a deterministic process (or deterministic system). Instead of describing a process which can only evolve in one way (as in the case, for example, of solutions of an ordinary differential equation), in a stochastic or random process there is some indeterminacy: even if the initial condition (or starting point) is known, there are several (often infinitely many) directions in which the process may evolve. (from Wikipedia) | '''Definition:''' In probability theory, a stochastic process /stoʊˈkæstɪk/, or sometimes random process (widely used) is a collection of random variables; this is often used to represent the evolution of some random value, or system, over time. This is the probabilistic counterpart to a deterministic process (or deterministic system). Instead of describing a process which can only evolve in one way (as in the case, for example, of solutions of an ordinary differential equation), in a stochastic or random process there is some indeterminacy: even if the initial condition (or starting point) is known, there are several (often infinitely many) directions in which the process may evolve. (from Wikipedia) | ||

A stochastic process is non-deterministic. This means that | A stochastic process is non-deterministic. This means that even if we know the initial condition (state) and the possible states that may follow, the exact value of the final state remains uncertain. | ||

We can illustrate this with an example of speech: if "I" is the first word in a sentence, the set of words that could follow is limited (e.g. like, want, am), and the same happens for the third word and so on. Each word occurs with some probability, so each word is a random variable and the sentence is a collection of random variables. <br> | We can illustrate this with an example of speech: if "I" is the first word in a sentence, the set of words that could follow is limited (e.g. like, want, am), and the same happens for the third word and so on. Each word occurs with some probability, so each word is a random variable and the sentence is a collection of random variables. <br> | ||

| Line 3,483: | Line 3,622: | ||

2. Markov Process- This is a stochastic process that satisfies the Markov property, which can be understood as the memoryless property: the jump to a future state depends only on the current state of the process, not on the process's history. Markov processes are used to model random walks exhibited by particles, the health state of a life insurance policyholder, decision making by a memoryless mouse in a maze, etc. <br> | 2. Markov Process- This is a stochastic process that satisfies the Markov property, which can be understood as the memoryless property: the jump to a future state depends only on the current state of the process, not on the process's history. Markov processes are used to model random walks exhibited by particles, the health state of a life insurance policyholder, decision making by a memoryless mouse in a maze, etc. <br> | ||

=====Example===== | =====Example===== | ||

| Line 3,491: | Line 3,628: | ||

A stochastic process always has a state space and an index set that limit its range. | A stochastic process always has a state space and an index set that limit its range. | ||

The state space is the set of cars , while <math>x_t</math> are sport cars. | The state space is the set of cars, while <math>x_t</math> are sport cars. | ||

Births in a hospital occur randomly at an average rate | Births in a hospital occur randomly at an average rate | ||

| Line 3,525: | Line 3,662: | ||

The rate parameter may change over time; such a process is called a non-homogeneous Poisson process. | The rate parameter may change over time; such a process is called a non-homogeneous Poisson process. | ||

==== | ==== Examples ==== | ||

<br /> | <br /> | ||

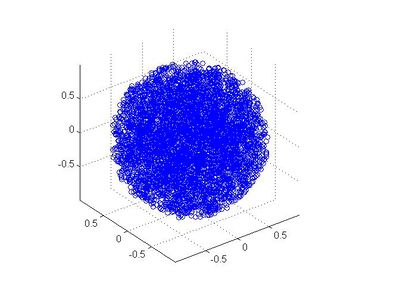

'''How to generate a multivariate normal with the built-in function "randn": (example)'''<br /> | '''How to generate a multivariate normal with the built-in function "randn": (example)'''<br /> | ||

| Line 3,585: | Line 3,722: | ||

===Poisson Process=== | ===Poisson Process=== | ||

A Poisson process is a stochastic process that counts the number of events in a given time period. | |||

A discrete random variable ''X'' is said to have a Poisson distribution with parameter ''λ'' > 0 if | A discrete random variable ''X'' is said to have a Poisson distribution with parameter ''λ'' > 0 if | ||

:<math>\!f(n)= \frac{\lambda^n e^{-\lambda}}{n!} \qquad n= 0,1,2,3,4,5,\ldots,</math>. | :<math>\!f(n)= \frac{\lambda^n e^{-\lambda}}{n!} \qquad n= 0,1,2,3,4,5,\ldots,</math>. | ||

| Line 3,608: | Line 3,746: | ||

'''Generate a Poisson Process'''<br /> | '''Generate a Poisson Process'''<br /> | ||

1. set <math>T_{0}=0</math> and n=1<br/> | 1. set <math>T_{0}=0</math> and n=1<br/> | ||

| Line 3,692: | Line 3,827: | ||

</pre> | </pre> | ||

<br> | |||

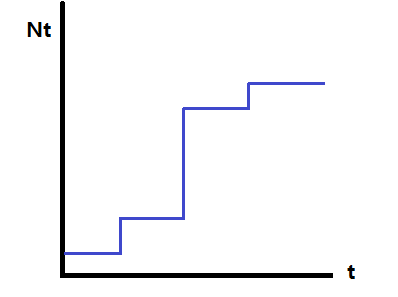

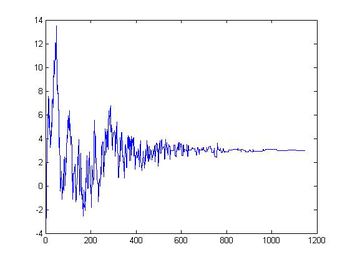

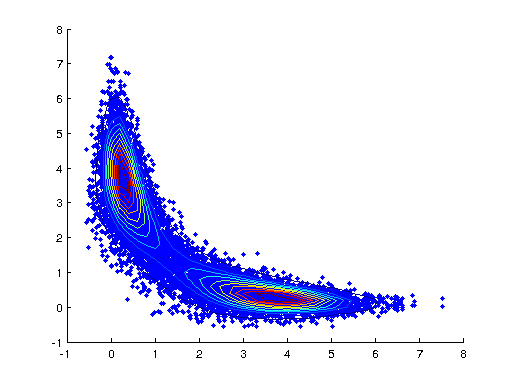

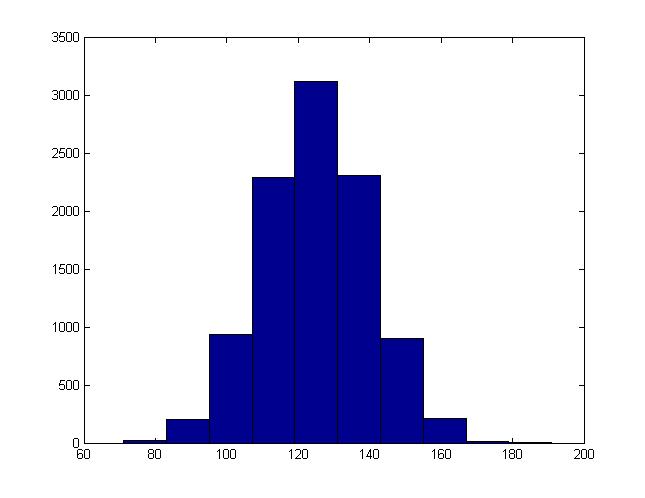

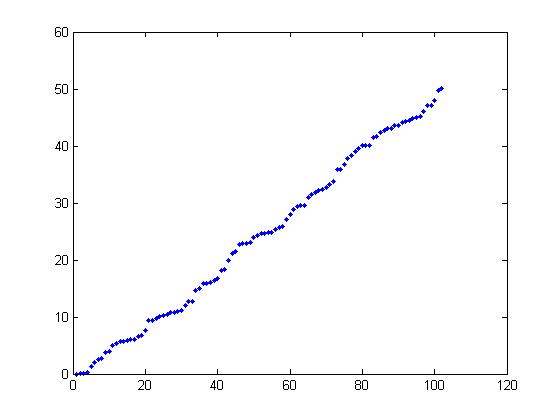

The following plot is using TT = 50.<br> | The following plot is using TT = 50.<br> | ||

The number of points generated every time on average should be <math>\lambda</math> * TT. <br> | The number of points generated every time on average should be <math>\lambda</math> * TT. <br> | ||

The maximum value of the points should be TT. <br> | The maximum value of the points should be TT. <br> | ||

[[File:Poisson.jpg]] | [[File:Poisson.jpg]]<br> | ||

When TT is large, the plot looks almost linear; when TT is set to 5 or another small number, the plot looks like a discrete distribution. | When TT is large, the plot looks almost linear; when TT is set to 5 or another small number, the plot looks like a discrete distribution. | ||
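A Python sketch of the Poisson process generator builds arrival times by adding Exp(λ) inter-arrival gaps until the total passes TT. It also checks the claim that the average number of points is about λ·TT. The values λ = 2, TT = 50, the number of repetitions and the seed are illustrative assumptions.

```python
import math
import random

def poisson_process(lam, tt, rng):
    """Event times on [0, tt]: add Exp(lam) inter-arrival gaps until passing tt."""
    times = []
    t = 0.0
    while True:
        t += -math.log(1.0 - rng.random()) / lam   # Exp(lam) gap by inverse transform
        if t > tt:
            return times
        times.append(t)

rng = random.Random(50)
lam, tt = 2.0, 50.0
counts = [len(poisson_process(lam, tt, rng)) for _ in range(2_000)]
print(sum(counts) / len(counts), lam * tt)  # average count vs. lambda * TT = 100
```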

| Line 3,804: | Line 3,939: | ||

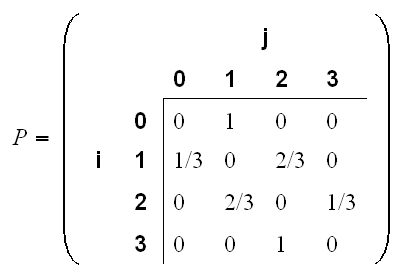

=== Examples of Transition Matrix === | === Examples of Transition Matrix === | ||

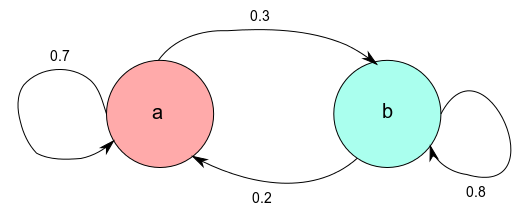

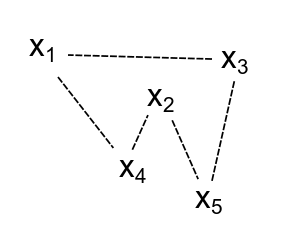

[[File:Mark13.png]] | [[File:Mark13.png]]<br> | ||

The picture is from http://www.google.ca/imgres?imgurl=http://academic.uprm.edu/wrolke/esma6789/graphs/mark13.png&imgrefurl=http://academic.uprm.edu/wrolke/esma6789/mark1.htm&h=274&w=406&sz=5&tbnid=6A8GGaxoPux9kM:&tbnh=83&tbnw=123&prev=/search%3Fq%3Dtransition%2Bmatrix%26tbm%3Disch%26tbo%3Du&zoom=1&q=transition+matrix&usg=__hZR-1Cp6PbZ5PfnSjs2zU6LnCiI=&docid=PaQvi1F97P2urM&sa=X&ei=foTxUY3DB-rMyQGvq4D4Cg&sqi=2&ved=0CDYQ9QEwAQ&dur=5515) | The picture is from http://www.google.ca/imgres?imgurl=http://academic.uprm.edu/wrolke/esma6789/graphs/mark13.png&imgrefurl=http://academic.uprm.edu/wrolke/esma6789/mark1.htm&h=274&w=406&sz=5&tbnid=6A8GGaxoPux9kM:&tbnh=83&tbnw=123&prev=/search%3Fq%3Dtransition%2Bmatrix%26tbm%3Disch%26tbo%3Du&zoom=1&q=transition+matrix&usg=__hZR-1Cp6PbZ5PfnSjs2zU6LnCiI=&docid=PaQvi1F97P2urM&sa=X&ei=foTxUY3DB-rMyQGvq4D4Cg&sqi=2&ved=0CDYQ9QEwAQ&dur=5515) | ||

| Line 3,840: | Line 3,975: | ||

</div> | </div> | ||

<math>x_{k+1}= (ax_k+c) \mod m</math><br /> | <math>\begin{align}x_{k+1}= (ax_k+c) \mod m\end{align}</math><br /> | ||

where a, c, m and x<sub>0</sub> (the seed) are values we must choose before running the algorithm. While there is no set value for each, it is best for m to be large and prime. For example, Matlab uses a = 7<sup>5</sup>, c = 0, m = 2<sup>31</sup> − 1. | |||

'''Examples:'''<br> | |||

1. <math>X_{0} = 10, a = 2, c = 1, m = 13</math><br> | |||

<math>X_{1} = (2 \cdot 10 + 1) \mod 13 = 8</math><br> | |||

<math>X_{2} = (2 \cdot 8 + 1) \mod 13 = 4</math> ... and so on<br> | |||

2. <math>X_{0} = 44, a = 13, c = 17, m = 211</math><br> | |||

<math>X_{1} = (13 \cdot 44 + 17) \mod 211 = 167</math><br> | |||

<math>X_{2} = (13 \cdot 167 + 17) \mod 211 = 78</math><br> | |||

<math>X_{3} = (13 \cdot 78 + 17) \mod 211 = 187</math> ... and so on<br> | |||
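The two worked examples can be reproduced with a minimal Python sketch of the linear congruential recurrence <math>x_{k+1} = (ax_k + c) \mod m</math>. The function name `lcg` is an illustrative choice. Dividing each value by m would turn the sequence into approximate Unif[0, 1) numbers.

```python
def lcg(seed, a, c, m, n):
    """First n values x_1..x_n of x_{k+1} = (a*x_k + c) mod m, starting from x_0 = seed."""
    values = []
    x = seed
    for _ in range(n):
        x = (a * x + c) % m
        values.append(x)
    return values

print(lcg(10, 2, 1, 13, 2))     # [8, 4]
print(lcg(44, 13, 17, 211, 3))  # [167, 78, 187]
```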

=== Inverse Transformation Method === | === Inverse Transformation Method === | ||

| Line 4,100: | Line 4,237: | ||

<br>N-Step Transition Matrix: a matrix <math> P_n </math> whose elements are the probability of moving from state i to state j in n steps. <br/> | <br>N-Step Transition Matrix: a matrix <math> P_n </math> whose elements are the probability of moving from state i to state j in n steps. <br/> | ||

<math>P_n (i,j)=Pr(X_{m+n}=j|X_m=i)</math> <br/> | <math>P_n (i,j)=Pr(X_{m+n}=j|X_m=i)</math> <br/> | ||

Explanation (with an example): suppose there are 10 states {1, 2, ..., 10} and you are in state 2; then P<sub>8</sub>(2, 5) represents the probability of moving from state 2 to state 5 in 8 steps. | |||

One-step transition probability:<br/> | One-step transition probability:<br/> | ||

| Line 4,180: | Line 4,319: | ||

Note: <math>P_2 = P_1\times P_1; P_n = P^n</math><br />

The equation above is a special case of the Chapman-Kolmogorov equations.<br />

It holds because of the Markov property, also called the memoryless property of Markov chains: the probability of going forward to the next state depends only on the current state, not on the previous states. Intuitively, multiplying the one-step transition matrix by itself n times gives the n-step transition matrix.<br />
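A small Python sketch of the n-step transition matrix as a matrix power, using exact fractions. The 3-state matrix is the one used in the stationary-distribution examples of these notes; the helpers `matmul` and `matpow` are our own. The last line checks one instance of the Chapman-Kolmogorov equations, P<sup>5</sup> = P<sup>2</sup>P<sup>3</sup>.

```python
from fractions import Fraction as F


def matmul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def matpow(P, n):
    """P^n by repeated multiplication; P^0 is the identity matrix."""
    size = len(P)
    result = [[F(int(i == j)) for j in range(size)] for i in range(size)]
    for _ in range(n):
        result = matmul(result, P)
    return result


# One-step transition matrix (rows sum to 1)
P = [[F(1, 3), F(1, 3), F(1, 3)],
     [F(1, 4), F(3, 4), F(0)],
     [F(1, 2), F(0), F(1, 2)]]

P2 = matpow(P, 2)
print(P2[0])  # [Fraction(13, 36), Fraction(13, 36), Fraction(5, 18)]

# Chapman-Kolmogorov: P^(m+n) = P^m P^n, here with m = 2, n = 3
print(matpow(P, 5) == matmul(matpow(P, 2), matpow(P, 3)))  # True
```

Row 1 of P<sup>2</sup> is 0.3611, 0.3611, 0.2778 in decimals, which matches the MatLab output for this matrix.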

| Line 4,228: | Line 4,366: | ||

The vector <math>\underline{\mu_0}</math> is called the initial distribution. <br/>

<math>P^2=P\cdot P</math> (as verified above)

In general,

<math>P^n=\prod_{i=1}^{n} P</math> (P multiplied n times)<br/>

<math>\mu_n=\mu_0 P^n</math><br/>

where <math>\mu_0</math> is the initial distribution,

and <math>\mu_{m+n}=\mu_m P^n</math><br/>

n can be negative if P is invertible.
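To illustrate <math>\mu_n=\mu_0 P^n</math> and <math>\mu_{m+n}=\mu_m P^n</math>, here is a minimal Python sketch (the helpers `step` and `distribution_after` are hypothetical names) that pushes a distribution forward one step at a time. It uses the same 3-state matrix as the stationary-distribution examples and assumes the chain starts in state 1 with probability 1.

```python
from fractions import Fraction as F


def step(mu, P):
    """One step of the chain: return the row vector mu P."""
    n = len(mu)
    return [sum(mu[i] * P[i][j] for i in range(n)) for j in range(n)]


def distribution_after(mu0, P, n):
    """mu_n = mu_0 P^n, computed by applying one step n times."""
    mu = list(mu0)
    for _ in range(n):
        mu = step(mu, P)
    return mu


P = [[F(1, 3), F(1, 3), F(1, 3)],
     [F(1, 4), F(3, 4), F(0)],
     [F(1, 2), F(0), F(1, 2)]]

mu0 = [F(1), F(0), F(0)]            # start in state 1 with probability 1
mu2 = distribution_after(mu0, P, 2)
print(mu2)                          # equals the first row of P^2

mu5 = distribution_after(mu2, P, 3)           # mu_{2+3} = mu_2 P^3
print(mu5 == distribution_after(mu0, P, 5))   # True
```

Multiplying the vector by P repeatedly avoids forming P<sup>n</sup> explicitly, which is cheaper when only the distribution is needed.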

| Line 4,267: | Line 4,405: | ||

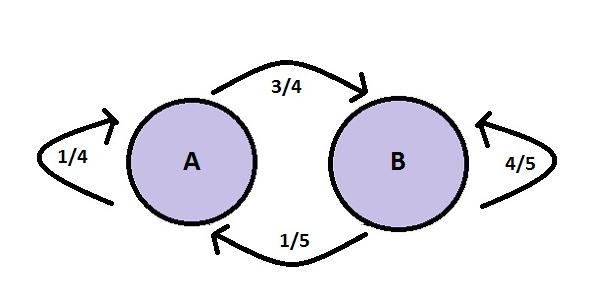

<math>\pi</math> is a stationary distribution of the chain if <math>\pi P = \pi</math>,

where <math>\pi</math> is a probability vector <math>\pi=(\pi_i \mid i \in X)</math> such that all the entries are nonnegative and sum to 1. In other words, if the chain starts with distribution <math>\pi</math>, its distribution after one step (and hence after any number of steps) is still <math>\pi</math>. In this case <math>\pi</math> is a (left) eigenvector of P with eigenvalue 1.

| Line 4,274: | Line 4,412: | ||

The above conditions are used to find the stationary distribution.

In MATLAB, we can compute <math>P^n</math> for a large n (usually n > 100) to approximate the stationary distribution: when the chain has a limiting distribution, every row of <math>P^n</math> approaches <math>\pi</math>.<br/>
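A Python sketch of the same idea (the name `approx_stationary` and the default of 200 steps are our own choices): starting from state 1, repeatedly multiply by P, which gives row 1 of <math>P^n</math>. For the cyclic chain discussed under limiting distributions it does not settle down, which is why the caveat about a limiting distribution matters.

```python
def approx_stationary(P, n=200):
    """Return row 1 of P^n, i.e. e_1 P^n, computed by iterating mu <- mu P.

    This approximates pi only when the chain has a limiting distribution.
    """
    k = len(P)
    mu = [1.0] + [0.0] * (k - 1)    # start in state 1 with probability 1
    for _ in range(n):
        mu = [sum(mu[i] * P[i][j] for i in range(k)) for j in range(k)]
    return mu


P = [[1/3, 1/3, 1/3],
     [1/4, 3/4, 0.0],
     [1/2, 0.0, 1/2]]
print([round(x, 4) for x in approx_stationary(P)])  # [0.3333, 0.4444, 0.2222]

# A periodic chain: the iterates keep cycling, so row 1 of P^n never converges
C = [[0, 1, 0], [0, 0, 1], [1, 0, 0]]
print(approx_stationary(C, 200))   # a point mass, not (1/3, 1/3, 1/3)
```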

'''Comments:'''<br/>

| Line 4,481: | Line 4,621: | ||

<math>\displaystyle \pi=(\frac{1}{3},\frac{4}{9}, \frac{2}{9})</math>

Note that <math>\displaystyle \pi=\pi P</math> looks similar to the eigenvalue equation <math>\displaystyle \lambda \vec{u}=A \vec{u}</math>.

<math>\pi</math> can be considered an eigenvector of P with eigenvalue 1. But note that <math>\vec{u}</math> is a column vector while <math>\pi</math> is a row vector, so we transpose both sides of <math>\pi=\pi P</math> to work with the column vector <math>\pi^T</math>:

<math>\Rightarrow \pi^T = P^T\pi^T</math><br/>

Then <math>\pi^T</math> is an eigenvector of <math>P^T</math> with eigenvalue 1. <br />

MatLab tip: [V D]=eig(A), where D is a diagonal matrix of eigenvalues and V is a matrix whose columns are the corresponding eigenvectors of matrix A.<br />

==== MatLab Code ====

<pre style='font-size:14px'>
P = [1/3 1/3 1/3; 1/4 3/4 0; 1/2 0 1/2];
pii = [1/3 4/9 2/9];
[vec, val] = eig(P')  % P' is the transpose of P; the columns of vec are eigenvectors of P'
% Check diag(val) to see which column belongs to eigenvalue 1; here it is the first:
% vec(:,1) = [-0.5571; -0.7428; -0.3714]   (column form)
a = -vec(:,1)         % eigenvectors are only determined up to a scalar, so flip the sign
% a = [0.5571; 0.7428; 0.3714]   (column form)
% Since we want the entries of a to sum to 1, we rescale it
b = a/sum(a)
% b = [0.3333; 0.4444; 0.2222]   (also column form)
% Observe that b' = pii
</pre>

<br/>
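Instead of eigenvectors, the balance equations <math>\pi = \pi P</math> together with <math>\sum_i \pi_i = 1</math> can be solved directly as a linear system. A minimal Python sketch with exact fractions follows; the function name `stationary_exact` is our own, and it assumes the stationary distribution is unique (for example, an irreducible chain).

```python
from fractions import Fraction as F


def stationary_exact(P):
    """Solve pi P = pi with sum(pi) = 1 exactly, by Gaussian elimination.

    The k balance equations sum_i pi_i * (P[i][j] - [i == j]) = 0 are linearly
    dependent, so the last one is replaced by the normalisation sum(pi) = 1.
    """
    k = len(P)
    # Row j of A holds the coefficients of pi_0, ..., pi_{k-1} in equation j.
    A = [[F(P[i][j]) - (1 if i == j else 0) for i in range(k)] for j in range(k)]
    b = [F(0)] * k
    A[-1] = [F(1)] * k
    b[-1] = F(1)
    # Forward elimination, swapping rows to get a non-zero pivot.
    for col in range(k):
        pivot = next(r for r in range(col, k) if A[r][col] != 0)
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, k):
            factor = A[r][col] / A[col][col]
            A[r] = [x - factor * y for x, y in zip(A[r], A[col])]
            b[r] -= factor * b[col]
    # Back substitution.
    pi = [F(0)] * k
    for r in range(k - 1, -1, -1):
        pi[r] = (b[r] - sum(A[r][c] * pi[c] for c in range(r + 1, k))) / A[r][r]
    return pi


P = [[F(1, 3), F(1, 3), F(1, 3)],
     [F(1, 4), F(3, 4), F(0)],
     [F(1, 2), F(0), F(1, 2)]]
print(stationary_exact(P))  # [Fraction(1, 3), Fraction(4, 9), Fraction(2, 9)]
```

This gives <math>\pi=(\frac{1}{3},\frac{4}{9},\frac{2}{9})</math> exactly, in agreement with the eigenvector computation.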

==== Limiting distribution ====

A Markov chain has limiting distribution <math>\pi</math> if

| Line 4,518: | Line 4,686: | ||

, find the stationary distribution.<br/>

We have:<br/>

<math>0\times \pi_0+0\times \pi_1+1\times \pi_2=\pi_0</math><br/>

<math>1\times \pi_0+0\times \pi_1+0\times \pi_2=\pi_1</math><br/>

<math>0\times \pi_0+1\times \pi_1+0\times \pi_2=\pi_2</math><br/>

<math>\pi_0+\pi_1+\pi_2=1</math><br/>

This gives <math>\pi = \left [ \begin{matrix}

\frac{1}{3} & \frac{1}{3} & \frac{1}{3} \\[6pt]

| Line 4,529: | Line 4,697: | ||

In general, some chains have a stationary distribution but do not converge to it; such a stationary distribution is not a limiting distribution.<br/>

==== MatLab Code ====

<pre style='font-size:14px'>
>> P=[0, 1, 0; 0, 0, 1; 1, 0, 0]
P =
0 1 0
0 0 1
1 0 0
>> pii=[1/3, 1/3, 1/3]
pii =
0.3333 0.3333 0.3333
>> pii*P
ans =
0.3333 0.3333 0.3333
>> P^1000
ans =
0 1 0
0 0 1
1 0 0
>> P^10000
ans =
0 1 0
0 0 1
1 0 0
>> P^10002
ans =
1 0 0
0 1 0
0 0 1
>> P^10003
ans =
0 1 0
0 0 1
1 0 0
>> % P^10000 = P^10003, because P^3 is the identity matrix
>> % This chain does not have a limiting distribution, but it has a stationary distribution.
>> % The powers of P do not converge; they cycle.
</pre>

The stationarity condition <math>\pi=\pi P</math> is satisfied; however, <math>\lim_{n\to\infty}[P^n]_{ij}</math> does not exist, so it cannot be independent of i (which would require all rows of <math>P^n</math> to become the same).<br>

This example shows the distinction between having a stationary distribution and converging (having a limiting distribution). Note: <math>\pi=(1/3,1/3,1/3)</math> is a stationary distribution since <math>\pi=\pi P</math>. However, when we repeatedly multiply P by itself (computing <math>P^n</math> as n goes to infinity), the results cycle (with period 3) through the same sequence of matrices. The chain has a stationary distribution but does not converge to it. Thus, there is no limiting distribution.<br>
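The same experiment in Python, as a sketch with our own helper names: since P is a permutation matrix with P<sup>3</sup> = I, the powers repeat with period 3, while (1/3, 1/3, 1/3) still satisfies <math>\pi=\pi P</math>. Repeated squaring keeps the large powers cheap, and integer arithmetic keeps them exact.

```python
from fractions import Fraction as F


def matmul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def matpow(P, n):
    """P^n by repeated squaring; exact for integer matrices."""
    size = len(P)
    result = [[int(i == j) for j in range(size)] for i in range(size)]
    base = P
    while n > 0:
        if n & 1:
            result = matmul(result, base)
        base = matmul(base, base)
        n >>= 1
    return result


P = [[0, 1, 0], [0, 0, 1], [1, 0, 0]]
I = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

print(matpow(P, 3) == I)                                  # True
print([matpow(P, n) == P for n in (1000, 10000, 10003)])  # [True, True, True]
print(matpow(P, 10002) == I)                              # True

# pi = (1/3, 1/3, 1/3) still satisfies pi P = pi
pi = [F(1, 3)] * 3
print([sum(pi[i] * P[i][j] for i in range(3)) for j in range(3)] == pi)  # True
```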

'''Example:'''

<math> P= \left [ \begin{matrix}

\frac{4}{5} & \frac{1}{5} & 0 & 0 \\[6pt]

\frac{1}{5} & \frac{4}{5} & 0 & 0 \\[6pt]

0 & 0 & \frac{4}{5} & \frac{1}{5} \\[6pt]

0 & 0 & \frac{1}{10} & \frac{9}{10} \\[6pt]

\end{matrix} \right] </math>

For this chain <math>P^n</math> does converge as n goes to infinity, but the limit is not a limiting distribution: its rows are not all the same, because they depend on whether the chain starts in states {1, 2} or in states {3, 4}, which do not communicate. So the chain does not converge to a single stationary distribution.<br />
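A numerical sketch in Python (floating point, helper names our own): squaring P eight times gives P<sup>256</sup>, whose rows differ between the two blocks, and both block-wise distributions pass the stationarity check, so this chain has more than one stationary distribution.

```python
def matmul(A, B):
    """Product of two square matrices given as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def is_stationary(pi, P, tol=1e-12):
    """Check pi P = pi up to floating-point tolerance."""
    n = len(pi)
    return all(abs(sum(pi[i] * P[i][j] for i in range(n)) - pi[j]) < tol
               for j in range(n))


P = [[0.8, 0.2, 0.0, 0.0],
     [0.2, 0.8, 0.0, 0.0],
     [0.0, 0.0, 0.8, 0.2],
     [0.0, 0.0, 0.1, 0.9]]

Q = P
for _ in range(8):   # after 8 squarings, Q = P^(2^8) = P^256
    Q = matmul(Q, Q)

for row in Q:
    print([round(x, 4) for x in row])
# Rows 1-2 are close to (0.5, 0.5, 0, 0); rows 3-4 are close to (0, 0, 1/3, 2/3).

print(is_stationary([0.5, 0.5, 0.0, 0.0], P))   # True
print(is_stationary([0.0, 0.0, 1/3, 2/3], P))   # True
```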

<br />

Doubly Stochastic Matrix: a doubly stochastic matrix is a nonnegative matrix whose columns all sum to 1 and whose rows all sum to 1.<br />

If an n by n transition matrix is doubly stochastic, then the uniform distribution, with all elements equal to 1/n, is a stationary distribution (the only one if the chain is irreducible): the j-th entry of <math>(\frac{1}{n},\ldots,\frac{1}{n})P</math> is <math>\frac{1}{n}\sum_i P_{ij} = \frac{1}{n}</math> because column j sums to 1.<br/>

<br/>

Example:<br/>

For the transition matrix <math> P= \left [ \begin{matrix}

0 & \frac{1}{2} & \frac{1}{2} \\[6pt]

\frac{1}{2} & 0 & \frac{1}{2} \\[6pt]

\frac{1}{2} & \frac{1}{2} & 0 \\[6pt]

\end{matrix} \right] </math>,<br/>

We have:<br/>

<math>0\times \pi_0+\frac{1}{2}\times \pi_1+\frac{1}{2}\times \pi_2=\pi_0</math><br/>

<math>\frac{1}{2}\times \pi_0+0\times \pi_1+\frac{1}{2}\times \pi_2=\pi_1</math><br/>

<math>\frac{1}{2}\times \pi_0+\frac{1}{2}\times \pi_1+0\times \pi_2=\pi_2</math><br/>

<math>\pi_0+\pi_1+\pi_2=1</math><br/>

The stationary distribution is <math>\pi = \left [ \begin{matrix}

\frac{1}{3} & \frac{1}{3} & \frac{1}{3} \\[6pt]

\end{matrix} \right] </math> <br/>
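A short Python check of the doubly stochastic claim on this example, using exact fractions (the helper names are our own): it verifies the row and column sums, then confirms that the uniform vector satisfies <math>\pi = \pi P</math>.

```python
from fractions import Fraction as F


def is_doubly_stochastic(P):
    """True if P is nonnegative and every row and every column sums to 1."""
    n = len(P)
    nonneg = all(x >= 0 for row in P for x in row)
    rows_ok = all(sum(row) == 1 for row in P)
    cols_ok = all(sum(P[i][j] for i in range(n)) == 1 for j in range(n))
    return nonneg and rows_ok and cols_ok


def left_multiply(pi, P):
    """Return the row vector pi P."""
    n = len(pi)
    return [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]


h = F(1, 2)
P = [[0, h, h],
     [h, 0, h],
     [h, h, 0]]
uniform = [F(1, 3)] * 3

print(is_doubly_stochastic(P))               # True
print(left_multiply(uniform, P) == uniform)  # True
```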

Suppose we're given that the limiting distribution <math> \pi </math> exists for the stochastic matrix P; in particular <math> \pi = \pi P </math>. <br>

WLOG assume P is diagonalizable (if not, a similar argument works with the Jordan form). <br>

Let <math> P^T = U \Sigma U^{-1} </math> be the eigenvalue decomposition of <math> P^T </math>, where <math>\Sigma = diag(\lambda_1,\ldots,\lambda_n)</math> and the columns <math> u_1,\ldots,u_n </math> of U are eigenvectors of <math> P^T </math>. Since P is stochastic, <math> \lambda_1 = 1 </math>; assume <math> |\lambda_i| < 1 </math> for all <math> i \geq 2 </math>, which holds for an irreducible, aperiodic finite chain.<br>

Start from any initial distribution <math> \mu_0 </math> and write <math> \mu_0^T = \sum a_i u_i </math>, where <math> a_i \in \mathbb{R} </math> (possible because the eigenvectors form a basis). <br>

By definition: <math> \mu_k = \mu_0 P^k \implies \mu_k^T = (P^T)^k \mu_0^T = (U \Sigma U^{-1}) (U \Sigma U^{-1}) \ldots (U \Sigma U^{-1})\, \mu_0^T = U \Sigma^k U^{-1} \mu_0^T </math> <br>

Therefore <math> \mu_k^T = \sum a_i \lambda_i^k u_i </math>, since <math> P^T u_i = \lambda_i u_i </math> (no orthogonality of the <math> u_i </math> is needed). <br>

Therefore <math> \lim_{k \rightarrow \infty} \mu_k^T = a_1 u_1 </math>, because <math> \lambda_1^k = 1 </math> and <math> \lambda_i^k \rightarrow 0 </math> for <math> i \geq 2 </math>. Every <math> \mu_k </math> sums to 1, so the limit <math> a_1 u_1 </math> is a probability vector with <math> P^T (a_1 u_1) = a_1 u_1 </math>, i.e. <math> a_1 u_1 = \pi^T </math>, whatever the initial distribution.
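The argument also indicates how fast the convergence is: the error <math>\mu_k^T - \pi^T = \sum_{i \geq 2} a_i \lambda_i^k u_i</math> is eventually dominated by the <math>\lambda_2</math> term, so the distance to <math>\pi</math> should shrink by a factor of about <math>|\lambda_2|</math> per step. A quick Python check for the 3-state matrix used in these notes, starting (arbitrarily) from state 1. The closed form <math>\lambda_2 = (7+\sqrt{73})/24 \approx 0.6477</math> is our own computation from the trace 19/12 and determinant -1/24 of P; it matches the MatLab eigenvalue output for this matrix.

```python
import math

P = [[1/3, 1/3, 1/3],
     [1/4, 3/4, 0.0],
     [1/2, 0.0, 1/2]]
pi = [1/3, 4/9, 2/9]   # stationary distribution of P


def step(mu):
    """One step of the chain: return the row vector mu P."""
    return [sum(mu[i] * P[i][j] for i in range(3)) for j in range(3)]


def dist(mu):
    """L1 distance between mu and the stationary distribution pi."""
    return sum(abs(m - p) for m, p in zip(mu, pi))


mu = [1.0, 0.0, 0.0]
for _ in range(30):
    mu = step(mu)

ratio = dist(step(mu)) / dist(mu)     # error shrink factor at step 30
lambda2 = (7 + math.sqrt(73)) / 24    # second eigenvalue of this P
print(ratio, lambda2)                 # the two numbers agree closely (about 0.6477)
```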

==== MatLab Code ====

<pre style='font-size:14px'>
>> P=[1/3, 1/3, 1/3; 1/4, 3/4, 0; 1/2, 0, 1/2] % We input a matrix P. This is the same matrix as last class.
P =
0.3333 0.3333 0.3333
0.2500 0.7500 0
0.5000 0 0.5000
>> P^2
ans =
0.3611 0.3611 0.2778
0.2708 0.6458 0.0833
0.4167 0.1667 0.4167
>> P^3
ans =
0.3495 0.3912 0.2593
0.2934 0.5747 0.1319
0.3889 0.2639 0.3472
>> P^10 % As n grows, every row of P^n approaches the stationary distribution, so the rows become the same.
ans =
0.3341 0.4419 0.2240
0.3314 0.4507 0.2179
0.3360 0.4358 0.2282
>> P^100 % The stationary distribution is [0.3333 0.4444 0.2222], since the rows no longer change.
ans =
0.3333 0.4444 0.2222
0.3333 0.4444 0.2222
0.3333 0.4444 0.2222
>> [vec val]=eigs(P') % We can find the eigenvalues and eigenvectors from the transpose of matrix P.
vec =
-0.5571 0.2447 0.8121
-0.7428 -0.7969 -0.3324
-0.3714 0.5523 -0.4797
val =
1.0000 0 0
0 0.6477 0
0 0 -0.0643
>> a=-vec(:,1) % The eigenvectors can be multiplied by (-1) since λV=AV can be written as λ(-V)=A(-V)
a =
0.5571
0.7428
0.3714
>> sum(a)
ans =
1.6713
>> a/sum(a) % rescale so that the entries sum to 1
ans =
0.3333
0.4444
0.2222
</pre>

This illustrates the definition of a limiting distribution: <math>\pi_j = \lim_{n\to\infty}[P^n]_{ij}</math> exists and is independent of i.

Another example:

| Line 4,771: | Line 4,921: | ||

'''Note:''' if there's a finite number N, then every other state can be reached in N steps.

'''Note:''' an ergodic chain is irreducible (all states communicate) and aperiodic (d = 1). A finite ergodic chain is guaranteed to have a unique stationary distribution, which is also its limiting distribution.<br/>

'''Ergodicity:''' A state i is said to be ergodic if it is aperiodic and positive recurrent. In other words, a state i is ergodic if it is recurrent, has period 1, and has a finite mean recurrence time. If all states in an irreducible Markov chain are ergodic, then the chain is said to be ergodic.<br/>

'''Some more:''' It can be shown that a finite-state irreducible Markov chain is ergodic if it has an aperiodic state. A model has the ergodic property if there's a finite number N such that any state can be reached from any other state in exactly N steps. For a fully connected transition matrix, where all transitions have non-zero probability, this condition is fulfilled with N = 1.<br/>
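The "exactly N steps" condition says that some power <math>P^N</math> has no zero entries. A Python sketch of that check (the name `is_regular` is our own) only needs the zero/non-zero pattern. It relies on Wielandt's bound, a known result that if such an N exists then N = (n - 1)<sup>2</sup> + 1 works, so only finitely many powers are examined.

```python
def is_regular(P):
    """Return True if some power P^N has all entries strictly positive.

    Only the zero/non-zero pattern of P matters, so booleans are used.
    By Wielandt's bound, checking powers up to (n - 1)**2 + 1 suffices.
    """
    n = len(P)
    A = [[x > 0 for x in row] for row in P]
    M = A  # pattern of P^1
    for _ in range((n - 1) ** 2 + 1):
        if all(all(row) for row in M):
            return True
        # pattern of the next power: boolean matrix product M * A
        M = [[any(M[i][k] and A[k][j] for k in range(n)) for j in range(n)]
             for i in range(n)]
    return False


P = [[1/3, 1/3, 1/3], [1/4, 3/4, 0], [1/2, 0, 1/2]]
cycle = [[0, 1, 0], [0, 0, 1], [1, 0, 0]]
blocks = [[0.8, 0.2, 0, 0], [0.2, 0.8, 0, 0], [0, 0, 0.8, 0.2], [0, 0, 0.1, 0.9]]

print(is_regular(P))       # True: P^2 already has no zero entries
print(is_regular(cycle))   # False: the chain is periodic
print(is_regular(blocks))  # False: the chain is not irreducible
```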

| Line 4,841: | Line 4,993: | ||

<math> \pi_0 = \frac{4}{19} </math> <br>

<math> \pi = [\frac{4}{19}, \frac{15}{19}] </math> <br>

<math> \pi </math> is the long-run distribution, and it is also a limiting distribution.

We can use the stationary distribution to compute the expected waiting time to return to state 'a' <br/>

| Line 4,848: | Line 5,000: | ||

state 'a' given that we start at state 'a' is 19/4.<br/>
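The relation "expected return time = <math>1/\pi_a</math>" can be cross-checked with a first-step argument. The transition matrix of this example is not reproduced here, so the Python sketch below uses a hypothetical two-state chain, chosen only so that its stationary distribution is also (4/19, 15/19); the transition probabilities p and q are our own assumptions.

```python
from fractions import Fraction as F

# Hypothetical chain on states 'a' (0) and 'b' (1); not the chain from the notes.
p = F(3, 4)   # assumed P(a -> b)
q = F(1, 5)   # assumed P(b -> a)

pi_a = q / (p + q)   # stationary probability of state 'a' for a two-state chain

# First-step analysis: from 'a' we stay (return in 1 step) with probability 1 - p;
# otherwise we move to 'b', and the time to get back to 'a' from 'b' is geometric
# with mean 1/q.  So E[return time] = (1 - p) * 1 + p * (1 + 1/q) = 1 + p/q.
expected_return = 1 + p / q

print(pi_a, 1 / pi_a, expected_return)   # 4/19 19/4 19/4
```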