Show, Attend and Tell: Neural Image Caption Generation with Visual Attention

Introduction

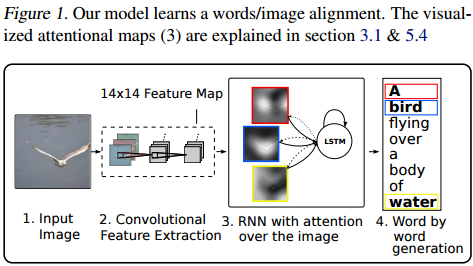

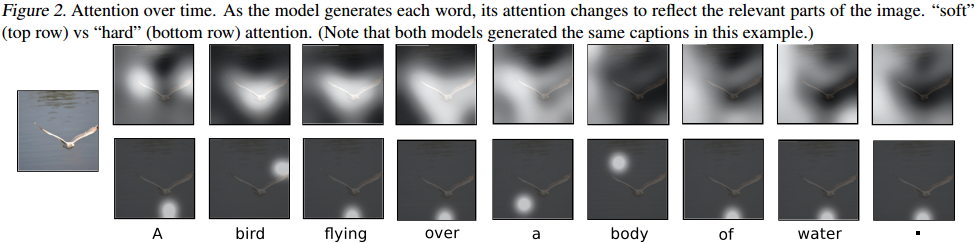

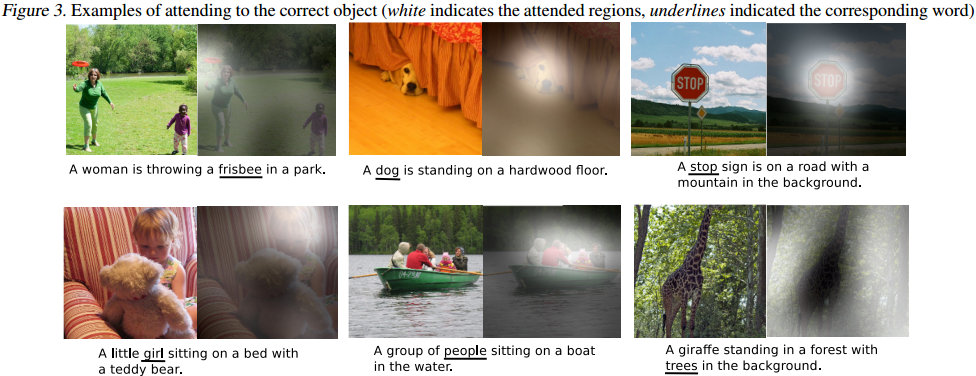

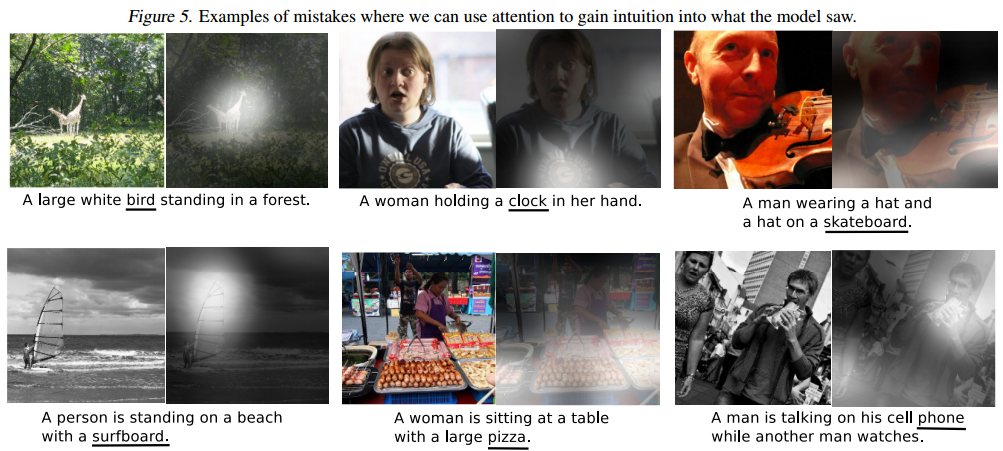

This paper introduces an attention-based model that automatically learns to describe the content of images. It can focus on salient parts of the image while generating the corresponding word in the output sentence. A visualization shows which part of the image was attended to when generating each word of the output. This gives a sense of what the model is doing and is especially useful for understanding the kinds of mistakes it makes. The model is tested on three datasets: Flickr8k, Flickr30k, and MS COCO.

Motivation

Caption generation, which requires compressing large amounts of salient visual information into descriptive language, was recently improved by combining convolutional neural networks with recurrent neural networks. However, using representations from the top layer of a convolutional network, which distill the information in an image down to the most salient objects, can discard information that would be useful for richer, more descriptive captions. Retaining this information by using lower-level representations was the motivation for the current work.

Contributions

- Two attention-based image caption generators using a common framework: a "soft" deterministic attention mechanism and a "hard" stochastic mechanism.

- Show how to gain insight and interpret results of this framework by visualizing "where" and "what" the attention focused on.

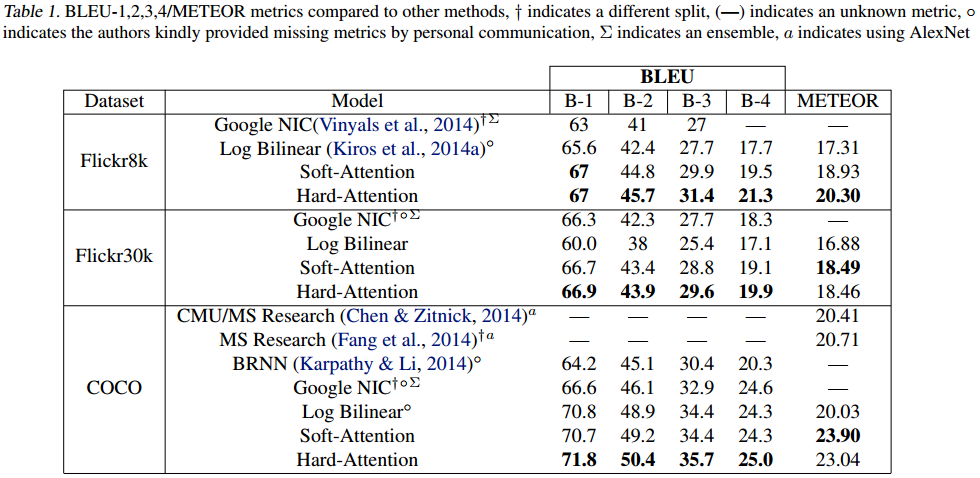

- Quantitatively validate the usefulness of attention in caption generation with state-of-the-art performance on three datasets (Flickr8k, Flickr30k, and MS COCO).

Model

Encoder: Convolutional Features

The model takes in a single image and generates a caption of arbitrary length. The caption is a sequence of one-hot encoded words from a given vocabulary.
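As a small illustration of the caption representation described above, the following sketch encodes a caption as a sequence of one-hot vectors. The toy vocabulary, the `<start>`/`<end>` tokens, and the function name `one_hot_caption` are assumptions made for this example and are not taken from the paper.

```python
def one_hot_caption(words, vocab):
    """Encode each word as a one-hot list whose length equals the vocabulary size."""
    index = {word: i for i, word in enumerate(vocab)}
    encoded = []
    for word in words:
        vec = [0] * len(vocab)
        vec[index[word]] = 1  # exactly one position is set per word
        encoded.append(vec)
    return encoded


# Hypothetical toy vocabulary, for illustration only.
vocab = ["<start>", "a", "bird", "flying", "<end>"]
caption = one_hot_caption(["a", "bird", "flying"], vocab)
```

Each inner list has a single 1 at the index of its word, so a caption of C words over a vocabulary of K words becomes a C-by-K array.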

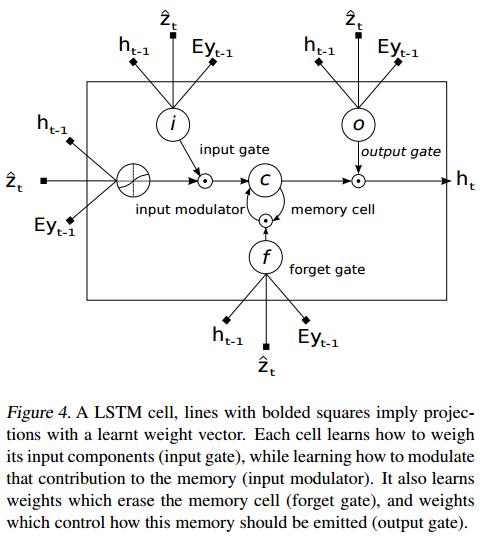

Decoder: Long Short-Term Memory Network

Properties

"Where" the network looks next depends on the sequence of words that has already been generated.

The attention framework learns latent alignments from scratch instead of explicitly using object detectors. This allows the model to go beyond "objectness" and learn to attend to abstract concepts.
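The two attention variants can be sketched roughly as follows. Attention weights over image locations are computed from the decoder's previous hidden state. The "soft" variant takes a weighted average of the location features, and the "hard" variant samples a single location. This is a minimal sketch under stated assumptions: the dot-product scoring function is a stand-in (the paper learns its scoring function), and the feature vectors, the hidden state, and all function names are hypothetical.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(annotations, hidden):
    """Score each location against the previous hidden state, then normalize.

    Assumption: a plain dot product stands in for the learned scoring function.
    """
    scores = [sum(a * h for a, h in zip(vec, hidden)) for vec in annotations]
    return softmax(scores)


def soft_context(annotations, weights):
    """Deterministic "soft" attention: weighted average of location features."""
    dim = len(annotations[0])
    return [sum(w * vec[d] for w, vec in zip(weights, annotations)) for d in range(dim)]


def hard_context(annotations, weights, rng):
    """Stochastic "hard" attention: sample one location according to the weights."""
    idx = rng.choices(range(len(annotations)), weights=weights, k=1)[0]
    return annotations[idx]


# Toy inputs (hypothetical): three image locations with 2-d features.
annotations = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
hidden = [2.0, 0.0]  # previous decoder state, which summarizes the words generated so far
weights = attention_weights(annotations, hidden)
z_soft = soft_context(annotations, weights)
z_hard = hard_context(annotations, weights, random.Random(0))
```

Because the weights depend on the hidden state, and the hidden state depends on the words generated so far, the attended location changes from word to word.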

Training

Two regularization techniques were used: dropout and early stopping on BLEU score.
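Early stopping on a validation metric can be sketched as below. The patience rule, the function name `early_stopping_epoch`, and the score values are assumptions for illustration; the summary does not state the exact stopping criterion, and the numbers are made up rather than taken from the paper.

```python
def early_stopping_epoch(val_scores, patience=2):
    """Return (best_epoch, stop_epoch) for a higher-is-better validation score.

    Training stops once the score has failed to improve for `patience`
    consecutive epochs. `patience` is a hypothetical hyperparameter.
    """
    best_score = float("-inf")
    best_epoch = -1
    for epoch, score in enumerate(val_scores):
        if score > best_score:
            best_score, best_epoch = score, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_scores) - 1


# Made-up per-epoch validation BLEU values, for illustration only.
scores = [0.10, 0.15, 0.19, 0.18, 0.17, 0.20]
best, stopped = early_stopping_epoch(scores, patience=2)
```

With these toy values, the best checkpoint is epoch 2 and training halts at epoch 4, so the later improvement at epoch 5 is never seen. That is the usual trade-off when choosing a small patience.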

The MS COCO dataset has more than 5 reference sentences for some of the images, while the Flickr datasets have exactly 5. For consistency, the reference sentences for all images in the MS COCO dataset were truncated to 5. Some basic tokenization was also applied to the MS COCO dataset for consistency with the tokenization in the Flickr datasets.
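The preprocessing described above might look like the following sketch. The tokenization rule here (lowercasing and keeping alphanumeric runs) is an assumption, since the summary only says "basic tokenization"; the function names and the example sentences are likewise hypothetical.

```python
import re

MAX_REFS = 5  # the Flickr datasets have exactly 5 references per image


def basic_tokenize(sentence):
    """Assumed tokenization: lowercase, then keep runs of letters and digits."""
    return re.findall(r"[a-z0-9]+", sentence.lower())


def prepare_references(references, max_refs=MAX_REFS):
    """Keep at most `max_refs` reference sentences and tokenize each one."""
    return [basic_tokenize(ref) for ref in references[:max_refs]]


# Hypothetical image with six reference captions; the sixth is dropped.
refs = [
    "A man rides a horse.",
    "Man on horse",
    "A rider, on a horse!",
    "Horse and man",
    "Someone riding",
    "A sixth caption",
]
prepared = prepare_references(refs)
```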

On the largest dataset (MS COCO), the attention model took less than 3 days to train on an NVIDIA Titan Black GPU.

Results

Results are reported using the BLEU and METEOR metrics.
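To give a sense of what BLEU measures, the sketch below computes its core ingredient, the modified (clipped) n-gram precision of a candidate caption against several references. It is not a full BLEU implementation: the brevity penalty and the geometric mean over n-gram orders are omitted, and METEOR is not covered. The function names are hypothetical, and the sentences are a standard toy example rather than data from the paper.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def modified_precision(candidate, references, n):
    """Clipped n-gram precision: each candidate n-gram count is capped by the
    maximum number of times that n-gram appears in any single reference."""
    cand_counts = Counter(ngrams(candidate, n))
    if not cand_counts:
        return 0.0
    max_ref = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref[gram] = max(max_ref[gram], count)
    clipped = sum(min(count, max_ref[gram]) for gram, count in cand_counts.items())
    return clipped / sum(cand_counts.values())


candidate = "the the the the the the the".split()
references = [
    "the cat is on the mat".split(),
    "there is a cat on the mat".split(),
]
p1 = modified_precision(candidate, references, 1)
```

Clipping is what keeps a degenerate caption that repeats a common word from scoring well: here only two of the seven occurrences of "the" are credited.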
