scene Parsing with Multiscale Feature Learning, Purity Trees, and Optimal Covers Machines: Difference between revisions

| Line 33: | Line 33: | ||

A simple grid search was used to find the best learning rate and regularization parameters (weight decay). A holdout of 10% of the training data was used as a validation set during the parameter search. For both datasets, jitter was used to artificially expand the size of the training data, to try to allow features to not overfit irrelevant biases present in the data. This jitter included horizontal flipping, and rotations between -8 and 8 degrees. | A simple grid search was used to find the best learning rate and regularization parameters (weight decay). A holdout of 10% of the training data was used as a validation set during the parameter search. For both datasets, jitter was used to artificially expand the size of the training data, to try to allow features to not overfit irrelevant biases present in the data. This jitter included horizontal flipping, and rotations between -8 and 8 degrees. | ||

The hierarchy used to find the optimal cover is a constructed on the raw image gradient, based on a standard volume criterion<ref> | |||

F. Meyer and L. Najman. [http://onlinelibrary.wiley.com/doi/10.1002/9781118600788.ch9/summary "Segmentation, minimum spanning tree and hierarchies."] In L. Najman and H. Talbot, editors, Mathematical Morphology: from theory to application, chapter 9, pages 229–261. ISTE-Wiley, London, 2010. | |||

</ref>, completed by removing non-informative small components (less than 100 pixels). Traditionally segmentation methods use a partition of segments (i.e. finding an optimal cut in the tree) rather than a cover. A number of graph cut methods were tried, but the results were systematically worse than the optimal cover method. | |||

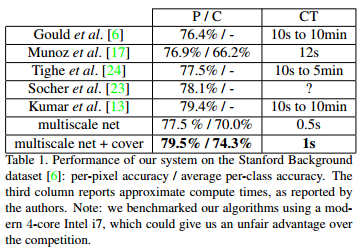

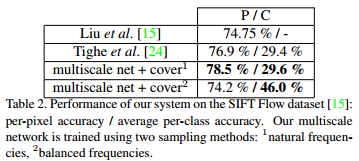

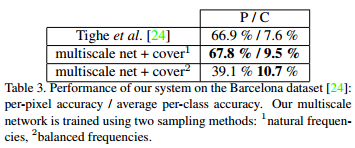

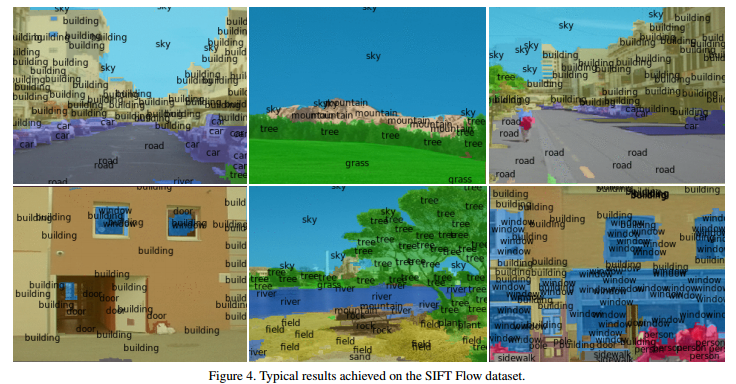

Two sampling methods for learning the multiscale features were tried on each dataset. One uses the natural frequencies of each class in the dataset, while the other balances them so that an equal number of each class is shown to the network. The results from each of these methods varied with the dataset used and are reported in the tables below. The authors only included the results for the frequency balancing method for the Stanford Background dataset as it consistently gave better results, but it could still be useful to have the results from the other method to help guide future work. Training with balanced frequencies allows better discrimination of small objects, and although it tends to have lower overall pixel-wise accuracy, it performs better from a recognition point of view. This observation can be seen in the tables below. The per-pixel accuracy for frequency balancing in the Barcelona dataset is quite poor, which the authors attribute by the fact that the dataset has a large amount of classes with very few training examples, leading to overfitting when trying to model them in this manner. | Two sampling methods for learning the multiscale features were tried on each dataset. One uses the natural frequencies of each class in the dataset, while the other balances them so that an equal number of each class is shown to the network. The results from each of these methods varied with the dataset used and are reported in the tables below. The authors only included the results for the frequency balancing method for the Stanford Background dataset as it consistently gave better results, but it could still be useful to have the results from the other method to help guide future work. Training with balanced frequencies allows better discrimination of small objects, and although it tends to have lower overall pixel-wise accuracy, it performs better from a recognition point of view. This observation can be seen in the tables below. The per-pixel accuracy for frequency balancing in the Barcelona dataset is quite poor, which the authors attribute by the fact that the dataset has a large amount of classes with very few training examples, leading to overfitting when trying to model them in this manner. | ||

Revision as of 18:28, 16 November 2015

Introduction

This paper<ref> Farabet, Clement, et al. "Scene parsing with multiscale feature learning, purity trees, and optimal covers." arXiv preprint arXiv:1202.2160 (2012). </ref> presents an approach to full scene labelling (FSL). This is the task of giving a label to each pixel in an image corresponding to which category of object it belongs to. FSL involves solving the problems of detection, segmentation, recognition, and contextual integration simultaneously. One of the main obstacles of FSL is that the information required for labelling a particular pixel could come from very distant pixels as well as their labels. This distance often depends on the particular label as well (e.g. the presence of a wheel might mean there is a vehicle nearby, while an object like the sky or water could span the entire image, and figuring out to which class a particular blue pixel belongs could be challenging).

Overview

The proposed method for FSL works by first computing a tree of segments from a graph of pixel dissimilarities. A set of dense feature vectors is then computed, encoding regions of multiple sizes centered on each pixel. Feature vectors are aggregated and fed to a classifier which estimates the distribution of object categories in a segment. A subset of tree nodes that cover the image are selected to maximize the average "purity" of the class distributions (i.e. maximizing the likelihood that each segment will contain a single object). The convolutional network feature extractor is trained end-to-end from raw pixels, so there is no need for engineered features.

There are five main ingredients to this new method for FSL:

- Trainable, dense, multi-scale feature extraction

- Segmentation tree

- Regionwise feature aggregation

- Class histogram estimation

- Optimal purity cover

The three main contributions of this paper are:

- Using a multi-scale convolutional net to learn good features for region classification

- Using a class purity criterion to decide if a segment contains a single object, as opposed to several objects, or part of an object

- An efficient procedure to obtain a cover that optimizes the overall class purity of a segmentation

Model

Results

For all experiments, a 2-stage convolutional network was used. The input is a 3-channel image, and it is transformed into a 16-dimensional feature map, using a bank of 16 7x7 filters followed by tanh units. This feature map is then pooled using a 2x2 max-pooling layer. The second layer transforms the 16-dimensional feature map into a 64-dimensional feature map, with each component being produced by a combination of 8 7x7 filters (for an effective total of 512 filters), followed by tanh units. This map is also pooled using a 2x2 max-pooling layer. This 64-dimensional feature map is transformed into a 256-dimensional feature map by using a combination of 16 7x7 filters (2048 filters).

The network is applied to a locally normalized Laplacian pyramid constructed on the input image. The pyramid contains three rescaled versions of the input: 320x240, 160x120, and 80x60. All of the inputs are properly padded and the outputs of each of the three networks are upsampled and concatenated to produce a 768-dimensional feature vector map (256x3). The network is trained on all three scales in parallel.

A simple grid search was used to find the best learning rate and regularization parameters (weight decay). A holdout of 10% of the training data was used as a validation set during the parameter search. For both datasets, jitter was used to artificially expand the size of the training data, to try to allow features to not overfit irrelevant biases present in the data. This jitter included horizontal flipping, and rotations between -8 and 8 degrees.

The hierarchy used to find the optimal cover is a constructed on the raw image gradient, based on a standard volume criterion<ref> F. Meyer and L. Najman. "Segmentation, minimum spanning tree and hierarchies." In L. Najman and H. Talbot, editors, Mathematical Morphology: from theory to application, chapter 9, pages 229–261. ISTE-Wiley, London, 2010. </ref>, completed by removing non-informative small components (less than 100 pixels). Traditionally segmentation methods use a partition of segments (i.e. finding an optimal cut in the tree) rather than a cover. A number of graph cut methods were tried, but the results were systematically worse than the optimal cover method.

Two sampling methods for learning the multiscale features were tried on each dataset. One uses the natural frequencies of each class in the dataset, while the other balances them so that an equal number of each class is shown to the network. The results from each of these methods varied with the dataset used and are reported in the tables below. The authors only included the results for the frequency balancing method for the Stanford Background dataset as it consistently gave better results, but it could still be useful to have the results from the other method to help guide future work. Training with balanced frequencies allows better discrimination of small objects, and although it tends to have lower overall pixel-wise accuracy, it performs better from a recognition point of view. This observation can be seen in the tables below. The per-pixel accuracy for frequency balancing in the Barcelona dataset is quite poor, which the authors attribute by the fact that the dataset has a large amount of classes with very few training examples, leading to overfitting when trying to model them in this manner.

References

<references />