Parsing Natural Scenes and Natural Language with Recursive Neural Networks


Revision as of 17:34, 19 October 2015

Introduction

This paper uses Recursive Neural Networks (RNNs) to find the recursive structure that is commonly found in inputs of different modalities, such as natural scene images or natural language sentences. It is the first deep learning work to learn full scene segmentation, annotation and classification. The same algorithm can be used both to provide a competitive syntactic parser for natural language sentences from the Penn Treebank and to outperform alternative approaches for semantic scene segmentation, annotation and classification.

This particular approach for NLP is different in that it handles variable sized sentences in a natural way and captures the recursive nature of natural language. Furthermore, it jointly learns parsing decisions, categories for each phrase and phrase feature embeddings which capture the semantics of their constituents.

Core Idea

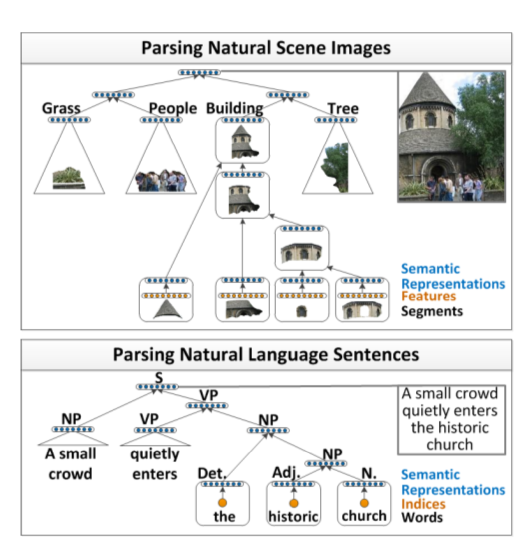

The figure above illustrates the recursive structure that is present in both images and sentences.

Images are first over-segmented into regions, each of which is mapped to a semantic feature vector by a neural network. These features are then used as input to the RNN, which decides whether or not to merge neighbouring regions. The decision is based on a score, which is higher if the neighbouring regions are similar.

In total, the RNN computes three outputs:

- Score, indicating whether the neighboring regions should be merged or not

- A new semantic feature representation for this larger region

- Class label

The same procedure is applied to parsing sentences. The semantic features of the words are given as input to the RNN, which then merges them into phrases in a syntactically and semantically meaningful order.

Input Representation

Each image is divided into 78 segments, and 119 features are extracted from each segment. These features include color and texture features, boosted pixel classifier scores (trained on the labelled training data), as well as appearance and shape features.

Each segment's feature vector is then mapped into a semantic space by a neural network layer that uses a logistic function as its activation unit:

- [math]\displaystyle{ a_i = f(W^{sem} F_i + b^{sem}) }[/math]

where [math]\displaystyle{ W^{sem} }[/math] is the weight matrix that we want to learn, [math]\displaystyle{ F_i }[/math] is the feature vector of segment i, [math]\displaystyle{ b^{sem} }[/math] is the bias and f is the sigmoid function. In this version of the experiments, the original sigmoid function [math]\displaystyle{ f(x)=\tfrac{1}{1 + e^{-x}} }[/math] was used.
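As a minimal sketch of this mapping, the snippet below applies a logistic layer to one feature vector using only the Python standard library. The function names and the toy sizes (3 raw features, 2 hidden units instead of 119 features) are assumptions made for illustration, not values from the paper.

```python
import math

def sigmoid(x):
    """Logistic function f(x) = 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

def semantic_layer(W_sem, b_sem, F_i):
    """Compute a_i = f(W_sem F_i + b_sem) for one segment feature vector."""
    return [sigmoid(sum(w * x for w, x in zip(row, F_i)) + b)
            for row, b in zip(W_sem, b_sem)]

# Hypothetical toy weights: 2 hidden units, 3 raw features.
W_sem = [[0.0, 0.0, 0.0],
         [1.0, -1.0, 0.5]]
b_sem = [0.0, 0.0]
F_i = [2.0, 2.0, 0.0]

a_i = semantic_layer(W_sem, b_sem, F_i)
print(a_i)
```

Both pre-activations are zero for this toy input, so every entry of the activation vector sits at the midpoint of the sigmoid.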

For sentences, the input features are simply the words themselves, each identified by its index in the vocabulary. Each word is mapped to a vector through a word embedding matrix L. This matrix usually captures co-occurrence statistics, and its values are learned. Assume we are given an ordered list of [math]\displaystyle{ N_{words} }[/math] words from a sentence x. Each word [math]\displaystyle{ i = 1, \ldots, N_{words} }[/math] has an associated vocabulary index k into the columns of the embedding matrix. The activation for word i is

- [math]\displaystyle{ a_i = L e_k }[/math]

where [math]\displaystyle{ e_k }[/math] is the binary vector that selects the column of L at index k.
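The lookup can be sketched as a matrix-vector product with a one-hot vector, which simply picks out one column of the embedding matrix. The matrix values and the helper names `one_hot` and `embed` below are hypothetical and only illustrate the indexing.

```python
def one_hot(k, vocab_size):
    """Binary vector e_k with a single 1 at position k."""
    return [1.0 if j == k else 0.0 for j in range(vocab_size)]

def embed(L, k):
    """Compute a = L e_k, which selects column k of the embedding matrix L."""
    e_k = one_hot(k, len(L[0]))
    return [sum(l * e for l, e in zip(row, e_k)) for row in L]

# Hypothetical 2-dimensional embeddings for a 3-word vocabulary (one column per word).
L = [[0.1, 0.2, 0.3],
     [0.4, 0.5, 0.6]]

a = embed(L, 1)
print(a)  # column 1 of L
```

In practice the product is never formed explicitly; indexing the column directly gives the same result.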

Recursive Neural Networks for Structure Prediction

In our discriminative parsing architecture, the goal is to learn a function f : X → Y, where Y is the set of all possible binary parse trees. An input x consists of two parts: (i) a set of activation vectors [math]\displaystyle{ \{a_1, \ldots, a_{N_{segs}}\} }[/math], which represent input elements such as image segments or words of a sentence; (ii) a symmetric adjacency matrix A, where A(i, j) = 1 if segment i neighbors segment j. This matrix defines which elements can be merged. For sentences, this matrix has a special form, with 1’s only on the first diagonal below and above the main diagonal.

The following figure illustrates what the inputs to the RNN look like and what the correct label is. For images, there can be more than one correct binary parse tree, but for sentences there is only one correct tree.
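For sentences, the special form of the adjacency matrix can be sketched directly: word i is adjacent only to words i - 1 and i + 1. The function name `sentence_adjacency` is an assumption made for this illustration.

```python
def sentence_adjacency(n_words):
    """Adjacency matrix with 1's only on the first diagonals above and below the main one."""
    return [[1 if abs(i - j) == 1 else 0 for j in range(n_words)]
            for i in range(n_words)]

A = sentence_adjacency(4)
for row in A:
    print(row)
```

For images, the matrix would instead be filled in from the segment neighbourhood structure, which is not shown here.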

The structural loss margin for the RNN when it predicts a proposed tree [math]\displaystyle{ \widehat y }[/math] is defined as follows:

- [math]\displaystyle{ \Delta(x,l,\widehat y)=\kappa\sum_{d\in N(\widehat y)}\mathbf{1}\{subTree(d)\notin Y(x,l)\} }[/math]

where the summation is over all non-terminal nodes [math]\displaystyle{ N(\widehat y) }[/math] of the proposed tree and [math]\displaystyle{ \kappa }[/math] is a parameter. Y(x,l) is the set of correct trees corresponding to input x and label l.
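The structural loss counts the non-terminal nodes of a proposed tree whose subtree does not occur in a correct tree and scales that count by κ. The sketch below assumes a single correct tree (the sentence case), represents trees as nested tuples of leaf indices, and identifies each subtree by the set of leaves it covers. All of these representation choices, and the function names, are assumptions for illustration.

```python
def spans(tree):
    """Return (leaves covered by tree, leaf sets of every non-terminal node)."""
    if isinstance(tree, int):          # a leaf: no non-terminal nodes
        return frozenset([tree]), []
    left, right = tree
    l_leaves, l_nodes = spans(left)
    r_leaves, r_nodes = spans(right)
    leaves = l_leaves | r_leaves
    return leaves, l_nodes + r_nodes + [leaves]

def structural_loss(proposed, correct, kappa):
    """kappa times the number of non-terminal nodes of `proposed` not found in `correct`."""
    _, gold_nodes = spans(correct)
    gold = set(gold_nodes)
    _, nodes = spans(proposed)
    return kappa * sum(1 for d in nodes if d not in gold)

correct = ((0, 1), (2, 3))
proposed = (((0, 1), 2), 3)   # the node covering {0, 1, 2} is not in the correct tree

loss = structural_loss(proposed, correct, kappa=0.05)
print(loss)
```

A proposed tree identical to the correct one incurs zero loss, and each additional wrong merge adds κ.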

Given the training set, the algorithm will search for a function f with small expected loss on unseen inputs, i.e. File:pic3.png where θ are all the parameters needed to compute a score s with an RNN. The score of a tree y is high if the algorithm is confident that the structure of the tree is correct.

An additional constraint is that the score of the highest-scoring correct tree should exceed the score of any other tree by a margin defined by the structural loss function, so that the model outputs as high a score as possible for the correct tree and as low a score as possible for the wrong trees. This constraint can be expressed as File:pic4.png

With these constraints, minimizing the following objective function maximizes the correct tree’s score and minimizes (up to a margin) the score of the highest-scoring but incorrect tree. File:pic5.png

To learn the RNN structure, the authors use the activation vectors and the adjacency matrix as inputs, together with a greedy approximation, since there is no efficient dynamic programming algorithm for their RNN setting.

With an adjacency matrix A, neighboring segments are found with the algorithm and their activations added to a set of potential child node pairs:

- [math]\displaystyle{ C = \{ [a_i, a_j]: A(i, j) = 1 \} }[/math]

So for example, from the image in Fig 2. we would have the following pairs:

- [math]\displaystyle{ C = \{[a_1, a_2], [a_1, a_3], [a_2, a_1], [a_2, a_4], [a_3, a_1], [a_3, a_4], [a_4, a_2], [a_4, a_3], [a_4, a_5], [a_5, a_4]\} }[/math]
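The construction of C can be sketched by scanning the adjacency matrix for entries equal to 1. The edge list below is inferred from the example pairs listed above (segments 1 to 5) rather than taken from the figure itself, and the function name `candidate_pairs` is hypothetical.

```python
def candidate_pairs(A):
    """Return all ordered pairs (i, j), 1-indexed, with A(i, j) = 1."""
    n = len(A)
    return [(i + 1, j + 1) for i in range(n) for j in range(n) if A[i][j] == 1]

# Undirected neighbour relations, inferred from the example pair list.
edges = [(1, 2), (1, 3), (2, 4), (3, 4), (4, 5)]
A = [[0] * 5 for _ in range(5)]
for i, j in edges:
    A[i - 1][j - 1] = A[j - 1][i - 1] = 1   # the matrix is symmetric

C = candidate_pairs(A)
print(C)
```

Because A is symmetric, every neighbouring pair appears in both orders, which matters since the parent computation is not symmetric in its two children.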

The activations in each pair are concatenated and given as input to the neural network. Potential parent representations for possible child nodes are calculated with:

- [math]\displaystyle{ p(i, j) = f(W[c_i: c_j] + b) }[/math]

And the local score with:

- [math]\displaystyle{ s(i, j) = W^{score} p(i, j) }[/math]
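A minimal sketch of these two computations follows, with the sigmoid as f. The toy dimensions (2-dimensional activations, so W has shape 2 × 4) and all numeric values are assumptions chosen so that the result is easy to check by hand.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def parent(W, b, c_i, c_j):
    """p(i, j) = f(W [c_i; c_j] + b), where [c_i; c_j] is the concatenation."""
    concat = c_i + c_j
    return [sigmoid(sum(w * x for w, x in zip(row, concat)) + bias)
            for row, bias in zip(W, b)]

def local_score(W_score, p):
    """s(i, j) = W_score p(i, j), a single real number."""
    return sum(w * x for w, x in zip(W_score, p))

# Hypothetical parameters: W maps a 4-dimensional concatenation back to 2 dimensions.
W = [[1.0, 0.0, -1.0, 0.0],
     [0.0, 1.0, 0.0, -1.0]]
b = [0.0, 0.0]
W_score = [2.0, -1.0]

c_i = [0.3, 0.7]
c_j = [0.3, 0.7]
p = parent(W, b, c_i, c_j)
s = local_score(W_score, p)
print(p, s)
```

The parent has the same dimensionality as each child, which is what allows it to be merged again at the next level of the tree.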

Once the scores for all pairs are calculated, three steps are performed:

1. The highest-scoring pair [math]\displaystyle{ [a_i, a_j] }[/math] is removed from the set of potential child node pairs [math]\displaystyle{ C }[/math], along with any other pair containing either [math]\displaystyle{ a_i }[/math] or [math]\displaystyle{ a_j }[/math].

2. The adjacency matrix [math]\displaystyle{ A }[/math] is updated with a new row and column that reflect the new segment, which takes on the neighbors of its child segments.

3. Potential new child pairs are added to [math]\displaystyle{ C }[/math].

Steps 1-3 are repeated until all pairs are merged and only one parent activation is left in the set [math]\displaystyle{ C }[/math]. The last remaining activation is at the root of the Recursive Neural Network that represents the whole image.
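The greedy procedure above can be sketched as follows. This is an illustrative implementation under stated assumptions, not the authors' code: it recomputes the scores of all candidate pairs in every round instead of maintaining C incrementally, stores neighbours in sets rather than growing the matrix A, and uses hypothetical names and toy parameters throughout.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def parent(W, b, c_i, c_j):
    """p(i, j) = f(W [c_i; c_j] + b)."""
    concat = c_i + c_j
    return [sigmoid(sum(w * x for w, x in zip(row, concat)) + bias)
            for row, bias in zip(W, b)]

def local_score(W_score, p):
    """s(i, j) = W_score p(i, j)."""
    return sum(w * x for w, x in zip(W_score, p))

def greedy_parse(activations, A, W, b, W_score):
    """Greedily merge neighbouring nodes; return (tree, sum of local scores)."""
    nodes = dict(enumerate(activations))      # active node id -> activation
    trees = {i: i for i in nodes}             # active node id -> subtree so far
    nbrs = {i: {j for j, v in enumerate(row) if v == 1 and j != i}
            for i, row in enumerate(A)}
    total, next_id = 0.0, len(activations)
    while len(nodes) > 1:
        best = None
        for i in nodes:                        # score every ordered neighbouring pair
            for j in nbrs[i]:
                p = parent(W, b, nodes[i], nodes[j])
                s = local_score(W_score, p)
                if best is None or s > best[0]:
                    best = (s, i, j, p)
        if best is None:
            raise ValueError("remaining nodes are not connected")
        s, i, j, p = best
        # Step 2: the new node inherits the neighbours of both children.
        merged_nbrs = (nbrs[i] | nbrs[j]) - {i, j}
        for k in merged_nbrs:
            nbrs[k] -= {i, j}
            nbrs[k].add(next_id)
        # Step 1: the merged children can no longer take part in any pair.
        for old in (i, j):
            del nodes[old], nbrs[old]
        # Step 3: the parent becomes a new candidate for later merges.
        nodes[next_id], nbrs[next_id] = p, merged_nbrs
        trees[next_id] = (trees.pop(i), trees.pop(j))
        total += s
        next_id += 1
    root = next(iter(nodes))
    return trees[root], total

# Toy sentence-like input: three 1-dimensional activations in a chain.
activations = [[0.0], [0.0], [5.0]]
A = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
W, b, W_score = [[1.0, 2.0]], [0.0], [1.0]

tree, score = greedy_parse(activations, A, W, b, W_score)
print(tree, score)
```

With these toy parameters the pair (1, 2) scores highest in the first round, so it is merged before node 0 joins at the root. The returned score is the sum of the local scores of the merges that were made.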

The quality of a tree structure, compared with the other candidate structures, is measured simply as the sum of the scores of all its local merge decisions:

- [math]\displaystyle{ s(RNN(\theta,x_i,\widehat y))=\sum_{d\in N(\widehat y)}s_d }[/math]

Learning

The objective function J is not differentiable due to the hinge loss. Therefore, we must opt for the subgradient method (Ratliff et al., 2007), which replaces the gradient with a gradient-like direction called the subgradient. Let [math]\displaystyle{ \theta = (W^{sem},W,W^{score},W^{label}) }[/math] denote the set of all model parameters.
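To illustrate what a subgradient step looks like for a hinge-type loss, the sketch below uses a deliberately simplified stand-in: tree scores are linear in the parameters, [math]\displaystyle{ s = \theta \cdot \phi }[/math], instead of being computed by an RNN, and regularization is omitted. The feature vectors, the step size and the function names are all assumptions made for this example.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def hinge_subgradient(theta, phi_correct, phi_wrong, delta):
    """Loss max(0, s_wrong + delta - s_correct) and one of its subgradients."""
    violation = dot(theta, phi_wrong) + delta - dot(theta, phi_correct)
    if violation > 0:
        # Margin violated: the loss is locally linear in theta.
        return violation, [w - c for w, c in zip(phi_wrong, phi_correct)]
    # Margin satisfied (or exactly at the kink): zero is a valid subgradient.
    return 0.0, [0.0] * len(theta)

def subgradient_step(theta, g, lr):
    return [t - lr * gi for t, gi in zip(theta, g)]

theta = [0.0, 0.0]
phi_correct, phi_wrong, delta = [1.0, 0.0], [0.0, 1.0], 1.0

loss_before, g = hinge_subgradient(theta, phi_correct, phi_wrong, delta)
theta = subgradient_step(theta, g, lr=0.5)
loss_after, _ = hinge_subgradient(theta, phi_correct, phi_wrong, delta)
print(loss_before, theta, loss_after)
```

At the kink of the hinge any value between the two one-sided slopes is a valid subgradient; this sketch picks zero there.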

Results

The parameters to tune in this algorithm are n, the size of the hidden layer; κ, the penalization term for incorrect parsing decisions; and λ, the regularization parameter. The parameter values that gave the best results were n = 100, κ = 0.05 and λ = 0.001.

A linear SVM was trained using all the node activations in the tree as features. The Stanford Background dataset was used for training and testing. With an accuracy of 88.1%, this algorithm outperforms the state-of-the-art features for scene categorization, Gist descriptors, which obtain only 84.0%. The results are summarized in the following figure. File:pic6.png