Compressed Sensing Reconstruction via Belief Propagation

| Line 23: | Line 23: | ||

==Compressed Sensing Reconstruction Algorithms== | ==Compressed Sensing Reconstruction Algorithms== | ||

Linear programming is the core approach for designing CS decoding algorithms. This approach is tractable with complexity of <math>\ O(N^3) </math>, however this complexity is intractable for large scale problems. For example a <math>\ 256\times 256 </math> image will need <math>\ 2^{48} </math> operations for decoding! One the other hand the encoding stage will need <math>\ O(NM) </math> computations for a dense sensing matrix. For this reason one important direction in this filed is to design and simplify the encoding and decoding algorithms. | |||

One alternative decoding approach is iterative greedy algorithm. This approach needs slightly more measurements but it decreases computational complexity significantly. Perhaps the most well know algorithms in this category are Orthogonal Matching Pursuit <ref name="R4"> J. Tropp and A. C. Gilbert, “Signal recovery from partial information via orthogonal matching pursuit,” Apr. 2005, Preprint.</ref> and Matching Pursuit (MP) <ref name="R5"> EM. F. Duarte, M. B. Wakin, and R. G. Baraniuk, “Fast reconstruction of piece-wise smooth signals from random projections ,” in Proc. SPARS05, France, Nov. 2005.</ref>. OMP requires <math>\ 2K ln(N) </math> measurements and the coputational complexity is <math>\ O(KN^2) </math>. Even though it is better but it still is intractable for large <math>\ N </math> and <math>\ K </math>. More advanced versions like StOMP can recover the signal in <math>\ Nlog(N) </math> complexity. It is much faster and can be used for larger class of signals. | |||

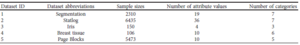

The common assumption in these approaches is that sensing matrix is assumed to be dense. Recently some researchers are trying to use sparse sensing matrix and use it to simplify the decoding algorithms. For example chaining pursuit uses this assumption and recovers the signal with complexity <math>\ K log^2(N) log^2(K)</math>. Table. 1 summaries CS schemes including their complexity as well as required measurements for each method. These results indicates that wecan reduce the complexity only at expense of having more measurements. | |||

[[File:table1.png|thumb|right|Table.1 CS schemes.]] | |||

==Connecting CS decoding to graph decoding algorithms== | ==Connecting CS decoding to graph decoding algorithms== | ||

Revision as of 14:02, 2 November 2011

Introduction

One of the key theorems in digital signal processing is the Shannon/Nyquist sampling theorem, which specifies the conditions under which a band-limited signal can be reconstructed uniquely from its discrete samples. This property of band-limited signals makes signal processing viable on natural analog signals. In some applications, however, even the sampled signal lies in an extremely high-dimensional vector space. To keep signal processing algorithms computationally tractable, many researchers have studied the compressibility of signals. Here it is assumed that most of the information content of a signal lies in a few coefficients of large magnitude. This led to the study of a class of signals known as compressible signals.

Compressible signals can be stored efficiently by ignoring small-valued coefficients, which means that losing those coefficients during sampling is unimportant. This leads to two natural questions: Can we sample compressible signals in a compressed way? Is there a method that senses only the large-valued coefficients? These questions were answered in parallel work by Donoho <ref name="R1"> D. Donoho, “Compressed sensing,” IEEE Trans. on Info. Theory, vol. 52, no. 4, pp. 1289–1306, Apr. 2006.</ref> and Candes et al. <ref name="R2"> E. Candes, J. Romberg, and T. Tao, “Robust uncertainty principles: Exact signal reconstruction from highly incomplete frequency information,” IEEE Trans. on Info. Theory, vol. 52, no. 2, pp. 489–509, Feb. 2006.</ref>. They showed that compressed sampling can be done by measuring a small number of random linear projections of the source signal. They also provided a decoding scheme that reconstructs the source signal from those measurements, together with theoretical proofs for different aspects of the topic.

Two important questions in CS theory are: (1) How many measurements are needed to reconstruct a given signal in a stable and robust way? (2) What is the complexity of the decoding algorithm? Both must be answered before CS can serve as an alternative to ordinary sampling, and both have been addressed by various researchers. Possibly the most important limitation of CS theory is the complexity of current decoding algorithms. The algorithms that have a strong theoretical background and apply to a range of applications are computationally expensive and cannot be used for large-scale problems. For this reason, developing tractable CS decoding algorithms is an active research topic in this field. A recently published paper <ref name="R3"> D. Baron, S. Sarvotham, and R. G. Baraniuk, “Compressive Sensing Via Belief Propagation,” IEEE Trans. on Signal Processing, vol. 58, no. 1, pp. 269–280, Jan. 2010.</ref> proposes a decoding algorithm based on belief propagation (BP). The authors connect CS to graphical models and then run BP on the corresponding graph to obtain a decoding algorithm. This summary follows their approach, which illustrates the importance of graphical models and the wide range of problems to which they can be applied. It is shown that this approach leads to a decoding algorithm with a much lower computational load.

Compressed Sensing

Compressive sensing is a method for sampling and representing compressible signals at rates below the Nyquist rate. Let [math]\displaystyle{ \ x }[/math] be an [math]\displaystyle{ N \times 1 }[/math] column vector in [math]\displaystyle{ \mathbb{R}^N }[/math] that has only [math]\displaystyle{ K \ll N }[/math] non-zero entries. To measure this source signal we take a small number, [math]\displaystyle{ \ M }[/math], of linear combinations of its elements, as follows:

[math]\displaystyle{ y = \psi x, \qquad (1) }[/math]

where [math]\displaystyle{ \ y \in \mathbb{R}^M }[/math] is the vector containing the measurements and [math]\displaystyle{ \psi \in \mathbb{R}^{M \times N} }[/math] is the sensing matrix, which carries the coordinate basis used in the application. The goal of CS theory is, given [math]\displaystyle{ \psi }[/math] and [math]\displaystyle{ \ y }[/math], to recover the source signal in a stable and robust way.
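As a minimal sketch of the measurement step, assume the linear model y = ψx with M < N. The dense i.i.d. Gaussian matrix, the problem sizes, and the helper name `measure` are illustrative assumptions rather than anything prescribed by the paper.

```python
import numpy as np

def measure(x, M, seed=0):
    """Return (psi, y) with psi an M x N dense Gaussian matrix and y = psi @ x.

    The Gaussian choice and the 1/sqrt(M) scaling are assumptions made
    for illustration only.
    """
    rng = np.random.default_rng(seed)
    N = x.shape[0]
    psi = rng.standard_normal((M, N)) / np.sqrt(M)
    y = psi @ x  # M linear combinations of the N entries of x
    return psi, y

# Hypothetical sizes: a length-100 signal with 5 non-zero entries, 30 measurements.
N, K, M = 100, 5, 30
rng = np.random.default_rng(1)
x = np.zeros(N)
support = rng.choice(N, size=K, replace=False)
x[support] = rng.standard_normal(K)

psi, y = measure(x, M)
```

Only the K columns of ψ indexed by the support contribute to y, which is the structure the decoders exploit.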

This problem is ill-posed: because [math]\displaystyle{ \ M \lt N }[/math], infinitely many solutions satisfy (1). Since the source signal is assumed to be sparse, we can use sparsity as a prior to restrict the set of feasible solutions. It has been proven that, under this prior, a sufficiently sparse source signal can be recovered as the solution of the following optimization problem <ref name="R1"/> <ref name="R2"/>:

[math]\displaystyle{ \hat{x} = \arg\min \|x\|_0 \quad \text{s.t.} \quad y = \psi x, \qquad (2) }[/math]

where [math]\displaystyle{ \ \|\cdot\|_0 }[/math] denotes the [math]\displaystyle{ \ l_0 }[/math] norm, which counts the number of non-zero entries. Unfortunately, [math]\displaystyle{ \ l_0 }[/math] optimization is NP-hard: it requires combinatorial enumeration and is therefore intractable. Interestingly, it has been proven that if the sensing matrix satisfies the restricted isometry property (RIP), then (2) can be solved equivalently as:

[math]\displaystyle{ \hat{x} = \arg\min \|x\|_1 \quad \text{s.t.} \quad y = \psi x. \qquad (3) }[/math]

Here the [math]\displaystyle{ \ l_0 }[/math] norm has been replaced by the [math]\displaystyle{ \ l_1 }[/math] norm, which is convex and robust to additive noise. This optimization can be solved efficiently using linear programming, with reconstruction complexity [math]\displaystyle{ \ O(N^3) }[/math]. It has been shown that matrices with independent and identically distributed (i.i.d.) Gaussian or Bernoulli entries satisfy the RIP condition and can be used effectively as sensing matrices. In such cases the linear programming decoder requires on the order of [math]\displaystyle{ \ K \log(1+N/K) }[/math] measurements for signal reconstruction.
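The reduction of the l1 problem to a linear program can be sketched as follows. The standard trick is to split x = u − v with u, v ≥ 0, so that minimizing the sum of u and v subject to ψ(u − v) = y minimizes the l1 norm. The function name `basis_pursuit`, the use of SciPy's `linprog`, and the problem sizes are assumptions for illustration, not the decoder used in the cited papers.

```python
import numpy as np
from scipy.optimize import linprog

def basis_pursuit(psi, y):
    """Solve min ||x||_1 subject to psi @ x = y as a linear program."""
    M, N = psi.shape
    c = np.ones(2 * N)              # objective: sum(u) + sum(v) = ||x||_1
    A_eq = np.hstack([psi, -psi])   # psi @ (u - v) = y
    res = linprog(c, A_eq=A_eq, b_eq=y, bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    u, v = res.x[:N], res.x[N:]
    return u - v

# Hypothetical test problem: N = 40, M = 20, K = 2, Gaussian sensing matrix.
rng = np.random.default_rng(0)
N, M = 40, 20
x = np.zeros(N)
x[rng.choice(N, size=2, replace=False)] = [1.0, -2.0]
psi = rng.standard_normal((M, N)) / np.sqrt(M)
y = psi @ x

x_hat = basis_pursuit(psi, y)
```

A general-purpose LP solver is used here for clarity; it makes no attempt to exploit structure in ψ, which is what makes this route costly for large N.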

Compressed Sensing Reconstruction Algorithms

Linear programming is the core approach for designing CS decoding algorithms. Its complexity of [math]\displaystyle{ \ O(N^3) }[/math] is polynomial, but it is still prohibitive for large-scale problems. For example, a [math]\displaystyle{ \ 256\times 256 }[/math] image has [math]\displaystyle{ \ N = 2^{16} }[/math] pixels, so decoding needs on the order of [math]\displaystyle{ \ 2^{48} }[/math] operations. On the other hand, the encoding stage needs [math]\displaystyle{ \ O(NM) }[/math] computations for a dense sensing matrix. For this reason, one important direction in this field is to design simpler encoding and decoding algorithms.
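The operation count quoted for the image example can be checked with a few lines of arithmetic. The number of measurements `M` used for the encoding cost is a hypothetical value, since the text does not give one, and constant factors hidden by the O-notation are ignored.

```python
# Signal length for a 256 x 256 image, treated as one long vector.
N = 256 * 256            # 65536 = 2**16

# Cubic cost of the linear programming decoder (constants ignored).
lp_ops = N ** 3          # 2**48 = 281474976710656

# Encoding cost with a dense sensing matrix: one multiply-add per entry.
M = 8192                 # hypothetical number of measurements
encode_ops = N * M

print(f"N = {N}, decoding ~ {lp_ops:.3e} ops, encoding ~ {encode_ops:.3e} ops")
```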

One alternative decoding approach is the class of iterative greedy algorithms. These need slightly more measurements but reduce computational complexity significantly. Perhaps the best-known algorithms in this category are Orthogonal Matching Pursuit (OMP) <ref name="R4"> J. Tropp and A. C. Gilbert, “Signal recovery from partial information via orthogonal matching pursuit,” Apr. 2005, Preprint.</ref> and Matching Pursuit (MP) <ref name="R5"> M. F. Duarte, M. B. Wakin, and R. G. Baraniuk, “Fast reconstruction of piecewise smooth signals from random projections,” in Proc. SPARS05, France, Nov. 2005.</ref>. OMP requires [math]\displaystyle{ \ 2K \ln(N) }[/math] measurements and its computational complexity is [math]\displaystyle{ \ O(KN^2) }[/math]. Although this is an improvement, it is still intractable for large [math]\displaystyle{ \ N }[/math] and [math]\displaystyle{ \ K }[/math]. More advanced variants such as Stagewise OMP (StOMP) can recover the signal with [math]\displaystyle{ \ O(N \log N) }[/math] complexity, which is much faster and applies to a larger class of signals.
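The greedy idea behind OMP can be sketched as follows: at each iteration, pick the column of ψ most correlated with the current residual, then re-fit all selected coefficients by least squares. This is a textbook-style sketch under assumed settings (unit-norm Gaussian columns, a known number of iterations, hypothetical sizes), not the exact procedure or stopping rule of the cited papers.

```python
import numpy as np

def omp(psi, y, n_iter, tol=1e-10):
    """Greedy sparse recovery: select one column per iteration."""
    M, N = psi.shape
    residual = y.copy()
    support = []
    coef = np.zeros(0)
    for _ in range(n_iter):
        # Correlate every column with the residual; skip columns already chosen.
        correlations = np.abs(psi.T @ residual)
        if support:
            correlations[support] = 0.0
        support.append(int(np.argmax(correlations)))
        # Orthogonal step: least-squares fit on all selected columns.
        coef, *_ = np.linalg.lstsq(psi[:, support], y, rcond=None)
        residual = y - psi[:, support] @ coef
        if np.linalg.norm(residual) < tol:
            break
    x_hat = np.zeros(N)
    x_hat[support] = coef
    return x_hat

# Hypothetical test problem: N = 128, M = 64, K = 3.
rng = np.random.default_rng(0)
N, M, K = 128, 64, 3
psi = rng.standard_normal((M, N))
psi /= np.linalg.norm(psi, axis=0)   # unit-norm columns (assumption)
x = np.zeros(N)
x[rng.choice(N, size=K, replace=False)] = [2.0, -1.5, 1.0]
y = psi @ x

x_hat = omp(psi, y, n_iter=K)
```

Each iteration costs one matrix-vector product plus a small least-squares solve, which is where the saving over a generic linear program comes from.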

A common assumption in these approaches is that the sensing matrix is dense. More recently, researchers have used sparse sensing matrices to simplify the decoding algorithms. For example, chaining pursuit relies on a sparse sensing matrix and recovers the signal with complexity [math]\displaystyle{ \ O(K \log^2(N) \log^2(K)) }[/math]. Table 1 summarizes CS schemes, including the complexity and the number of measurements required by each method. These results indicate that the complexity can be reduced only at the expense of more measurements.
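To illustrate why a sparse sensing matrix makes encoding cheaper, the sketch below builds a matrix with a fixed number of non-zero entries per row and applies it to a signal. The text does not specify how such matrices are constructed, so the ±1 values, the fixed row weight, and the helper name `sparse_sensing_matrix` are assumptions for illustration only.

```python
import numpy as np
from scipy.sparse import csr_matrix

def sparse_sensing_matrix(M, N, row_weight, seed=0):
    """M x N matrix with exactly `row_weight` random +/-1 entries per row."""
    rng = np.random.default_rng(seed)
    rows = np.repeat(np.arange(M), row_weight)
    # Distinct column positions within each row, so no entries collide.
    cols = np.concatenate(
        [rng.choice(N, size=row_weight, replace=False) for _ in range(M)]
    )
    vals = rng.choice([-1.0, 1.0], size=M * row_weight)
    return csr_matrix((vals, (rows, cols)), shape=(M, N))

# Hypothetical sizes: 1000-sample signal, 200 measurements, 20 non-zeros per row.
M, N, L = 200, 1000, 20
psi = sparse_sensing_matrix(M, N, L)

rng = np.random.default_rng(1)
x = np.zeros(N)
x[rng.choice(N, size=10, replace=False)] = rng.standard_normal(10)

y = psi @ x                       # touches M * L entries instead of M * N
dense_cost, sparse_cost = M * N, psi.nnz
```

With these sizes the encoder performs `sparse_cost` multiply-adds instead of `dense_cost`, a reduction by the factor N / L.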

Connecting CS decoding to graph decoding algorithms

CS-LDPC decoding of sparse signals

Source model

Decoding via statistical inference

Exact solution to CS statistical inference

Approximate solution to CS statistical inference via message passing

Numerical results

Conclusion

References

<references />