Zero-Shot Visual Imitation

==Introduction==

The dominant paradigm for imitation learning relies on strong supervision of expert actions to learn both ''what'' and ''how'' to imitate for a certain task. For example, in the robotics field, Learning from Demonstration (LfD) (Argall et al., 2009; Ng & Russell, 2000; Pomerleau, 1989; Schaal, 1999) requires an expert to manually move robot joints (kinesthetic teaching) or teleoperate the robot to teach the desired task. The expert will, in general, provide multiple demonstrations of a specific task at training time, which the agent forms into observation-action pairs and then distills into a policy for performing the task. For robots, this heavily supervised process is tedious and unsustainable, especially since every new task requires a new set of demonstrations for the robot to learn from. In this paper, an alternative paradigm is pursued wherein an agent first explores the world without any expert supervision and then distills its experience into a goal-conditioned skill policy with a novel forward consistency loss.

Videos, models, and more details are available at [https://pathak22.github.io/zeroshot-imitation/].

===Paper Overview===

''Observational Learning'' (Bandura & Walters, 1977), a term from the field of psychology, suggests a more general formulation where the expert communicates ''what'' needs to be done (as opposed to ''how'' it is to be done) by providing observations of the desired world states via video or sequential images, instead of observation-action pairs. This is the formulation the paper adopts. While it is a harder learning problem, it is potentially more useful because the expert can now convey a large number of tasks to the agent easily and quickly.

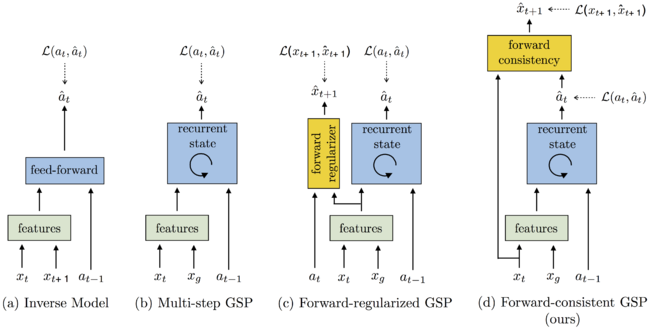

[[File:1-GSP.png | 650px|thumb|center|Figure 1: The goal-conditioned skill policy (GSP) takes as input the current and goal observations and outputs an action sequence that would lead to that goal. We compare the performance of the following GSP models: (a) Simple inverse model; (b) Multi-step GSP with previous action history; (c) Multi-step GSP with previous action history and a forward model as regularizer, but no forward consistency; (d) Multi-step GSP with forward consistency loss proposed in this work.]]

This paper follows (Agrawal et al., 2016; Levine et al., 2016; Pinto & Gupta, 2016), where an agent first explores the environment independently and then distills its observations into goal-directed skills. The word 'skill' denotes a function that predicts the sequence of actions that takes the agent from the current observation to the goal. This function is known as a ''goal-conditioned skill policy (GSP)''. It is learned in a self-supervised way by relabeling states the agent visited as goals and the actions the agent took as prediction targets. During inference, the GSP recreates the task step-by-step given the goal observations from the demonstration.

A major challenge of learning the GSP is that the distribution of trajectories from one state to another is multi-modal: there are many possible ways of traversing from one state to another. The main contribution of this paper addresses this issue. It is the ''forward-consistent loss'', which essentially says that reaching the goal is more important than how it is reached. First, a forward model is learned that predicts the next observation from the current observation and a given action. The difference between the forward model's output for the GSP-selected action and the ground-truth next state is then used to train the model. As a result, the forward-consistent loss does not inadvertently penalize actions that are ''consistent'' with the ground-truth action, i.e. actions that differ from it but lead to the same next state.

As a simple example to explain the forward-consistent loss, imagine a scenario where a robot must grab an object some distance ahead with an obstacle along the pathway. Now suppose that during demonstration the obstacle is avoided by going to the right and then grabbing the object while the agent during training decides to go left and then grab the object. The forward-consistent loss would characterize the action of the robot as ''consistent'' with the ground-truth action of the demonstrator and not penalize the robot for going left instead of right.

Of course, introducing something like the forward-consistent loss raises the question of how many steps are needed to reach a given goal, since different goals require different numbers of steps. To address this, the paper pairs the GSP with a goal recognizer that determines whether the goal has been satisfied with respect to some metric. Figure 1 shows various GSPs, with diagram (d) showing the forward-consistent loss proposed in this paper.

The paper refers to this method as zero-shot because the agent never has access to expert actions, in either the training phase or the task-demonstration phase. This differs from one-shot imitation learning, where agents have full knowledge of actions and expert demonstrations during training. The agent learns to imitate instead of learning by imitation. The zero-shot imitator is tested on a Baxter robot performing rope manipulation tasks, a TurtleBot performing office navigation, and a series of navigation experiments in ''VizDoom''. All three experiments show positive results. This leads to the conclusion that the forward-consistent GSP can be used to imitate a variety of tasks without making environment- or task-specific assumptions.

===Related Work===

Some key ideas related to this paper are '''imitation learning''', '''visual demonstration''', '''forward/inverse dynamics and consistency''' and finally, '''goal conditioning'''. The paper has more on each of these topics including citations to related papers. The propositions in this paper are related to imitation learning but the problem being addressed is different in that there is less supervision and the model requires generalization across tasks during inference.

Imitation Learning: The two main threads are behavioral cloning and inverse reinforcement learning. Recent work in imitation learning requires access to expert actions, whereas this paper does not.

Visual Demonstration: Several papers have focused on relaxing this supervision to visual observations alone, and end-to-end learning has improved results.

Forward/Inverse Dynamics and Consistency: Forward dynamics models have been learned for planning actions, but prior work does not enforce consistency between the forward and inverse dynamics.

Goal Conditioning: In this paper, the system works from high-dimensional visual inputs rather than knowledge of the true states, and does not use a task reward during training.

==Learning to Imitate Without Expert Supervision==


In this section (and the included subsections) the methods for learning the GSP, ''forward consistency loss'' and ''goal recognizer'' network are described.

Let <math display="inline">S : \{x_1, a_1, x_2, a_2, ..., x_T\}</math> be the sequence of observation-action pairs generated by the agent as it explores the environment. This exploration data is used to learn the GSP policy.


The learned GSP (<math display="inline">π</math>) takes as input a pair of observations <math display="inline">(x_i, x_g)</math> and outputs a sequence of actions <math display="inline">(\overrightarrow{a}_τ : a_1, a_2, ..., a_K)</math> to reach the goal observation <math display="inline">x_g</math> starting from the current observation <math display="inline">x_i</math>. The states (observations) <math display="inline">x_i</math> and <math display="inline">x_g</math> are sampled from <math display="inline">S</math> and need not be consecutive. Given the start and stop states, the number of actions <math display="inline">K</math> is also known. <math display="inline">π</math> can be thought of as a deep network with parameters <math display="inline">θ_π</math>.
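The relabeling step can be illustrated with a short Python sketch. It is a minimal example under stated assumptions, not the authors' code. The function name `sample_gsp_example`, the list-based trajectory layout and the `max_gap` cap on <math>K</math> are all hypothetical. A start index i and a later goal index g are drawn from the exploration sequence <math>S</math>, and the actions in between become the prediction targets, so <math>K = g - i</math> is known by construction.

```python
import random
from typing import List, Sequence, Tuple


def sample_gsp_example(observations: Sequence, actions: Sequence, max_gap: int,
                       rng: random.Random) -> Tuple[object, object, List]:
    """Relabel a slice of one exploration trajectory as a GSP training example.

    observations[t] is the t-th observation and actions[t] is the action taken
    right after it, so there is exactly one action between consecutive observations.
    Returns (x_i, x_g, [a_i, ..., a_{g-1}]); K is the length of the action list.
    """
    if len(observations) < 2 or len(actions) != len(observations) - 1:
        raise ValueError("need >= 2 observations and one action between each pair")
    if max_gap < 1:
        raise ValueError("max_gap must be at least 1")
    last = len(observations) - 1
    i = rng.randrange(0, last)                      # start state x_i (never the final one)
    g = rng.randint(i + 1, min(i + max_gap, last))  # goal x_g, 1..max_gap steps later
    return observations[i], observations[g], list(actions[i:g])


# Toy usage with string placeholders for observations and actions.
obs = [f"x{t}" for t in range(1, 6)]   # x1 .. x5
acts = [f"a{t}" for t in range(1, 5)]  # a1 .. a4
x_i, x_g, targets = sample_gsp_example(obs, acts, max_gap=3, rng=random.Random(0))
print(x_i, x_g, targets)
```

Because the goal is just a state the agent itself visited, no expert labels are needed. The sampled goals are also reachable by construction.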

At test time, the expert demonstrates a task from which the agent captures a sequence of observations. This set of images is denoted by <math display="inline">D: \{x_1^d, x_2^d, ..., x_N^d\}</math>. The sequence needs to have at least one entry and can be as temporally dense as needed (i.e. the expert can show as many goals or sub-goals as needed to the agent). The agent then uses its learned policy to start from initial state <math display="inline">x_0</math> and generate actions predicted by <math display="inline">π(x_0, x_1^d; θ_π)</math> to follow the observations in <math display="inline">D</math>.

The agent does not have access to the sequence of actions performed by the expert. Hence, it must use the observations to determine whether it has reached the goal. A separate ''goal recognizer'' network is needed to ascertain whether the current observation is close to the current goal, because multiple actions might be required to get close to <math display="inline">x_1^d</math>. Let <math display="inline">x_0^\prime</math> be the observation after executing the predicted action. The goal recognizer evaluates whether <math display="inline">x_0^\prime</math> is sufficiently close to the goal. If it is not, the agent executes <math display="inline">a = π(x_0^\prime, x_1^d; θ_π)</math>. After getting sufficiently close to <math display="inline">x_1^d</math>, the agent sets <math display="inline">x_2^d</math> as the goal and executes actions toward it. This process is repeated for each image in <math display="inline">D</math> until the final goal is reached.
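The test-time procedure amounts to a nested loop over demonstration images and steps. The sketch below assumes three hypothetical callables standing in for the GSP, the goal recognizer and the environment. It also adds a per-goal step cap. The text mentions a maximum number of iterations but not its value, and does not say whether the agent moves on to the next goal after hitting it. Moving on is an assumption made here.

```python
from typing import Callable, List, Sequence


def follow_demonstration(x0, demo: Sequence, policy: Callable, goal_reached: Callable,
                         step: Callable, max_steps_per_goal: int = 50) -> List:
    """Follow demonstration images x_1^d .. x_N^d one goal at a time.

    policy(x, g)       -> action            (stands in for pi(x, g; theta_pi))
    goal_reached(x, g) -> bool              (stands in for the goal recognizer)
    step(x, a)         -> next observation  (the environment)
    Returns every observation visited, starting with x0.
    """
    x = x0
    visited = [x]
    for g in demo:                           # current goal from the demonstration
        for _ in range(max_steps_per_goal):  # cap on the number of steps per goal
            if goal_reached(x, g):
                break                        # close enough: switch to the next goal
            x = step(x, policy(x, g))
            visited.append(x)
        # If the cap is hit, this sketch simply moves on to the next goal.
    return visited


# Toy 1-D world: observations are integers, actions are -1, 0 or +1.
trace = follow_demonstration(
    x0=0,
    demo=[3, 1, 4],
    policy=lambda x, g: (g > x) - (g < x),   # step toward the goal
    goal_reached=lambda x, g: x == g,
    step=lambda x, a: x + a,
)
print(trace)  # [0, 1, 2, 3, 2, 1, 2, 3, 4]
```

In this toy world the policy and recognizer are exact. With a learned goal recognizer, the recognizer's notion of "close enough" decides when the agent switches to the next demonstration image.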

===Learning the Goal-Conditioned Skill Policy (GSP)===

This section first describes the one-step version of the GSP and then extends it to the multi-step version.

A one-step trajectory can be described as <math display="inline">(x_t, a_t, x_{t+1})</math>. Given <math display="inline">(x_t, x_{t+1})</math>, the GSP estimates an action, <math display="inline">\hat{a}_t = π(x_t, x_{t+1}; θ_π)</math>. During training, a cross-entropy loss is used to learn the GSP parameters <math display="inline">θ_π</math>:


<math display="inline">a_t</math> and <math display="inline">\hat{a}_t</math> are the ground-truth and predicted actions, respectively. The conditional distribution <math display="inline">p</math> is not readily available, so it needs to be approximated empirically from the data. In a standard deep learning problem, it is common to assume <math display="inline">p</math> is a delta function at <math display="inline">a_t</math>: for a given input, there is a single correct output. In this problem, however, multiple actions can lead to the same next observation. Multiple outputs for a single input could be modeled using a variational autoencoder. The authors instead take a different approach, explained in Sections 2.2-2.4 of the paper and summarized in the following sections.
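For reference, the standard form of a cross-entropy objective over a discrete action set is written out below, together with what it reduces to under the delta-function assumption. This is the textbook form rather than a reproduction of the paper's equation. Here <math>\pi(a \mid x_t, x_{t+1}; \theta_\pi)</math> is taken to denote the probability that the GSP assigns to action <math>a</math>, and <math>\delta_{a, a_t}</math> is the Kronecker delta.

<div style="text-align: center;font-size:80%"><math>\mathcal{L}(\theta_\pi) = -\sum_{a} p(a \mid x_t, x_{t+1}) \log \pi(a \mid x_t, x_{t+1}; \theta_\pi) = -\log \pi(a_t \mid x_t, x_{t+1}; \theta_\pi) \quad \text{when } p(a \mid x_t, x_{t+1}) = \delta_{a, a_t}</math></div>

Under the delta assumption, the GSP may place probability on a different action that would reach the same <math>x_{t+1}</math>. Any such probability lowers <math>\pi(a_t \mid x_t, x_{t+1}; \theta_\pi)</math> and is therefore penalized. This is the multi-modality issue that the forward consistency loss is designed to avoid.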

===Forward Consistency Loss===

To deal with multi-modality, this paper proposes the ''forward consistency loss''. Instead of penalizing the GSP's predicted actions for not matching the ground-truth actions, the GSP's parameters are learned to minimize the distance between two observations. The first is <math display="inline">\hat{x}_{t+1}</math>, the observation resulting from executing the GSP's predicted action <math display="inline">\hat{a}_t = π(x_t, x_{t+1}; θ_π)</math>. The second is the ground-truth observation <math display="inline">x_{t+1}</math>. This way, the predicted action is not penalized if it leads to the same next state as the ground-truth action. This in turn reduces the variation in gradients (for actions that result in the same next observation) and aids learning. This is what is denoted as the ''forward consistency loss''.

To operationalize the forward consistency loss, a differentiable "forward dynamics" model that can reliably predict the results of an action is needed. The forward dynamics <math display="inline">f</math> are learned from the data by a separate model. Given an observation and the action performed, <math display="inline">f</math> predicts the next observation, <math display="inline">\widetilde{x}_{t+1} = f(x_t, a_t; θ_f)</math>. Applying the same model to the GSP's predicted action gives <math display="inline">\hat{x}_{t+1} = f(x_t, \hat{a}_t; θ_f)</math>. Since <math display="inline">f</math> is learned rather than analytic, there is no guarantee that <math display="inline">\widetilde{x}_{t+1} = \hat{x}_{t+1}</math>, so an additional term is added to the loss: <math display="inline">||x_{t+1} - \hat{x}_{t+1}||_2^2</math>. The parameters <math display="inline">θ_f</math> are inferred by minimizing <math display="inline">||x_{t+1} - \widetilde{x}_{t+1}||_2^2 + λ||x_{t+1} - \hat{x}_{t+1}||_2^2</math>, where λ is a scalar hyper-parameter. The first term ensures that the learned model explains the ground-truth transitions, while the second term ensures consistency with the GSP network. In summary, the loss function is given below:


<div style="text-align: center;font-size:80%"><math>\hat{a}_t = π(x_t, x_{t+1}; θ_π)</math></div>
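The two forward-model terms can be checked on a toy problem. The sketch below is illustrative only. It uses plain Python lists instead of a learned network, a hand-written hypothetical forward model `wall_forward` in place of the learned <math>f</math>, and a fixed `lam` standing in for λ. As in the text, `x_tilde = f(x_t, a_t)` is the forward model's prediction for the ground-truth action. `x_hat = f(x_t, a_hat)` is its prediction for the GSP's action. Training, where gradients from these terms update both networks, is not modeled here.

```python
from typing import Callable, List, Sequence

Vector = Sequence[float]


def sq_dist(u: Vector, v: Vector) -> float:
    """Squared Euclidean distance ||u - v||_2^2."""
    return sum((ui - vi) ** 2 for ui, vi in zip(u, v))


def forward_consistency_objective(x_t: Vector, a_t: Vector, a_hat: Vector, x_next: Vector,
                                  f: Callable[[Vector, Vector], Vector], lam: float) -> float:
    """||x_{t+1} - f(x_t, a_t)||^2 + lam * ||x_{t+1} - f(x_t, a_hat)||^2."""
    x_tilde = f(x_t, a_t)  # forward-model prediction for the ground-truth action
    x_hat = f(x_t, a_hat)  # forward-model prediction for the GSP's predicted action
    return sq_dist(x_next, x_tilde) + lam * sq_dist(x_next, x_hat)


def wall_forward(x: Vector, a: Vector, wall: float = 5.0) -> List[float]:
    """Hypothetical forward model: move by a, but no coordinate can pass `wall`."""
    return [min(xi + ai, wall) for xi, ai in zip(x, a)]


x_t, x_next = [4.0, 0.0], [5.0, 0.0]
a_t = [1.0, 0.0]    # ground-truth action
a_alt = [3.0, 0.0]  # different action, same next state (the wall stops it)
a_bad = [0.0, 2.0]  # action that reaches a different state

print(sq_dist(a_t, a_alt))  # 4.0
print(forward_consistency_objective(x_t, a_t, a_alt, x_next, wall_forward, lam=0.1))  # 0.0
print(forward_consistency_objective(x_t, a_t, a_bad, x_next, wall_forward, lam=0.1))  # 0.5
```

Matching actions directly would charge `a_alt` a cost of 4.0 even though it reaches the same state. The forward-consistency terms give it zero cost while still penalizing `a_bad`, which is the behavior the section describes.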

Past work has shown that learning forward dynamics in a feature space, as opposed to the raw observation space, is more robust. This paper incorporates this by having the forward model predict feature representations, denoted <math>\phi(x_t), \phi(x_{t+1})</math>, rather than raw observations.

Learning the two models (parameters <math>θ_π</math> and <math>θ_f</math>) simultaneously from scratch can lead to noisy gradient updates. This is addressed with a two-stage schedule. First, the forward model is pre-trained using only the first loss term, and the GSP is pre-trained separately, with gradient flow between the two blocked. Then <math>θ_π</math> and <math>θ_f</math> are fine-tuned jointly.

The generalization to multi-step GSP <math>π_m</math> is shown below where <math>\phi</math> refers to the feature space rather than observation space which was used in the single-step case:


===Goal Recognizer===

The goal recognizer network is introduced to determine whether the current goal has been reached. This allows the agent to take multiple steps between goals without being penalized. In this paper, goal recognition is posed as a binary classification problem: given an observation <math>x_i</math> and a goal <math>x_g</math>, infer whether <math>x_i</math> is close to <math>x_g</math>. Because there is no expert supervision of goals, goal observations are drawn at random from the agent's own experience. These observations are used because they are known to be feasible. Additionally, a maximum number of iterations is imposed to prevent the sequence of actions from getting too long.

The goal recognizer was trained on data from the agent's random exploration. Pseudo-goal states were sampled from the visited states, and all observations within a few timesteps of these were considered positive examples (close to the goal). The goal classifier was trained using the standard cross-entropy loss.
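A sketch of how such training pairs could be generated from one exploration trajectory follows. Several details are assumptions: the name `goal_recognizer_pairs`, the symmetric `window` (standing in for "a few timesteps"), and the choice to label every other observation as negative. The loss is the ordinary binary cross-entropy.

```python
import math
import random
from typing import List, Sequence, Tuple


def goal_recognizer_pairs(observations: Sequence, num_goals: int, window: int,
                          rng: random.Random) -> List[Tuple[object, object, int]]:
    """Build (x_i, x_g, label) examples from one exploration trajectory.

    A pseudo-goal index g is sampled from the visited states. Every observation
    within `window` timesteps of g (before or after) is labeled 1 ("close to the
    goal") and every other observation is labeled 0.
    """
    pairs = []
    for _ in range(num_goals):
        g = rng.randrange(len(observations))  # pseudo-goal drawn from visited states
        for i, x_i in enumerate(observations):
            label = 1 if abs(i - g) <= window else 0
            pairs.append((x_i, observations[g], label))
    return pairs


def binary_cross_entropy(p_close: float, label: int, eps: float = 1e-12) -> float:
    """Cross-entropy for one example, where p_close is the predicted P(close to goal)."""
    p = min(max(p_close, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


# Toy usage: observations are just their own time indices 0..19.
pairs = goal_recognizer_pairs(list(range(20)), num_goals=3, window=2, rng=random.Random(0))
print(len(pairs))                    # 60
print(binary_cross_entropy(0.9, 1))  # small loss for a confident, correct prediction
```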

The authors found that training a separate goal recognition network outperformed simply adding a 'stop' action to the action space of the policy network.

===Ablations and Baselines===

To summarize, the GSP formulation is composed of (a) a recurrent variable-length skill policy network, (b) explicit encoding of the previous action in the recurrence, (c) a goal recognizer, (d) the forward consistency loss function, and (e) learning forward dynamics in the feature space instead of the raw observation space.

To show the importance of each component, a systematic ablation (removal) of components is performed for each experiment to measure its impact on visual imitation. The following methods are evaluated in the experiments section:


# Classical methods: In visual navigation, the paper attempts to compare against the state-of-the-art ORB-SLAM2 and Open-SFM.

# Inverse model: Nair et al. (2017) leverage vanilla inverse dynamics to follow demonstration in rope manipulation setup.

# '''GSP-NoPrevAction-NoFwdConst''' is the paper's recurrent GSP without previous action history and without the forward consistency loss.

# '''GSP-NoFwdConst''' refers to the recurrent GSP with previous action history, but without the forward consistency objective.

# '''GSP-FwdRegularizer''' refers to the model where forward prediction is only used to regularize the features of GSP but has no role to play in the loss function of predicted actions.

# '''GSP''' refers to the complete method with all the components.

| Line 99: | Line 117: | ||

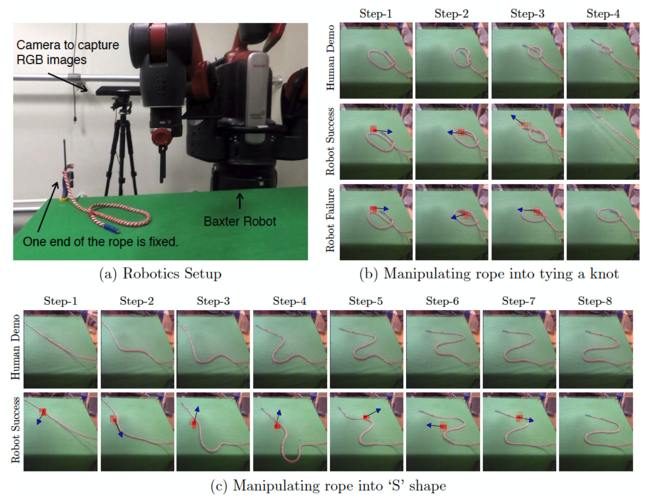

Rope manipulation is an interesting task because even humans learn complex rope manipulation, such as tying knots, via observing an expert perform it.

In this paper, rope manipulation data collected by Nair et al. (2017) is used, where a Baxter robot manipulated a rope kept on a table in front of it. During this exploration, the robot picked up the rope at a random point and displaced it randomly on the table. 60K interaction pairs were collected of the form <math>(x_t, a_t, x_{t+1})</math>. These were used to train the GSP proposed in this paper.

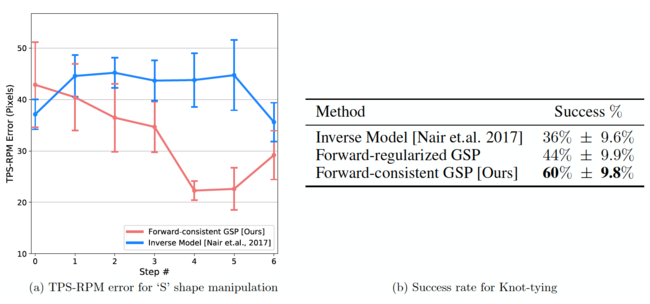

For this experiment, the Baxter robot is set up exactly as in Nair et al. (2017). The robot is tasked with manipulating the rope into an 'S' shape as well as tying a knot, as shown in Figure 2. At test time, the robot was only provided with images of intermediate states of the rope, not the actions taken by the human demonstrator. The thin plate spline robust point matching technique (TPS-RPM) (Chui & Rangarajan, 2003) is used to measure performance on constructing the 'S' shape, as shown in Figure 3. Visual verification (by a human) was used to assess whether a knot was successfully tied.

The base architecture consisted of a pre-trained AlexNet whose features were fed into a skill policy network. This network predicts the grasp location, the direction of displacement and the magnitude of displacement. All models were optimized using Adam with a learning rate of 1e-4. For the first 40K iterations, the AlexNet weights were frozen; they were then fine-tuned jointly with the later layers. More details are provided in the appendix of the paper.
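The three quantities predicted by the skill policy network can be turned into a concrete pick-and-place action, as sketched below. The text does not say how they are parameterized, so this sketch assumes each is predicted as a class over discrete bins. The function `decode_rope_action`, the bin counts, the normalized image coordinates and `max_mag` are all hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def decode_rope_action(loc_bin: int, dir_bin: int, mag_bin: int, grid: int = 20,
                       n_dirs: int = 36, n_mags: int = 10,
                       max_mag: float = 0.1) -> Tuple[Point, Point]:
    """Turn three classifier outputs into a pick point and a place point.

    loc_bin indexes a grid x grid partition of the image (row-major),
    dir_bin indexes n_dirs equally spaced angles, and mag_bin indexes n_mags
    equally spaced lengths up to max_mag. Coordinates are normalized to [0, 1].
    All bin counts and max_mag are hypothetical values.
    """
    row, col = divmod(loc_bin, grid)
    pick = ((col + 0.5) / grid, (row + 0.5) / grid)  # centre of the grasp cell
    angle = 2.0 * math.pi * dir_bin / n_dirs         # displacement direction
    length = max_mag * (mag_bin + 1) / n_mags        # displacement magnitude
    place = (pick[0] + length * math.cos(angle), pick[1] + length * math.sin(angle))
    return pick, place


pick, place = decode_rope_action(loc_bin=0, dir_bin=0, mag_bin=9)
print(pick, place)
```

Predicting bins rather than regressing continuous values is one common way to let a network spread probability over several plausible grasp points. The text does not say whether the paper does exactly this.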

The approach of this paper is compared to (Nair et al., 2017), where similar experiments were done using an inverse model. The results in Figure 3 show that for knot-tying, zero-shot visual imitation achieves a success rate of 60% versus 36% for the inverse-model baseline.

[[File:2-Rope_manip.png | 650px|thumb|center|Figure 2: Qualitative visualization of results for rope manipulation task using Baxter robot. (a) The robotics system setup. (b) The sequence of human demonstration images provided by the human during inference for the task of knot-tying (top row), and the sequences of observation states reached ... shape. Our agent is able to successfully imitate the demonstration.]]

[[File:3-GSP_graph.png | 650px|thumb|center|Figure 3: GSP trained using forward consistency loss significantly outperforms the baselines at the task of (a) manipulating rope into 'S' shape as measured by TPS-RPM error and (b) knot-tying where a success rate is reported with bootstrap standard deviation]]
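Figure 3 reports knot-tying success with a bootstrap standard deviation. A minimal sketch of that statistic follows. It assumes each trial is recorded as 1 (success) or 0 (failure) and uses an arbitrary number of resamples and seed. The outcomes in the example are made up and are not the paper's data.

```python
import random
import statistics
from typing import Sequence, Tuple


def bootstrap_success_rate(outcomes: Sequence[int], num_resamples: int = 1000,
                           seed: int = 0) -> Tuple[float, float]:
    """Return (success rate, bootstrap standard deviation) for 0/1 trial outcomes."""
    if not outcomes:
        raise ValueError("need at least one trial")
    rng = random.Random(seed)
    n = len(outcomes)
    rate = sum(outcomes) / n
    resampled = [sum(rng.choices(outcomes, k=n)) / n  # resample trials with replacement
                 for _ in range(num_resamples)]
    return rate, statistics.pstdev(resampled)


# Made-up outcomes for illustration only (1 = success, 0 = failure).
rate, std = bootstrap_success_rate([1, 0, 1, 1, 0, 1, 0, 1, 1, 0])
print(f"{rate:.2f} +/- {std:.2f}")
```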

===Navigation in Indoor Office Environments===

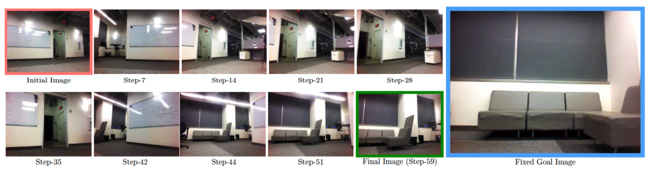

In this experiment, the robot was shown a single image or multiple images to lead it to the goal. The robot, a TurtleBot2, autonomously moves to the goal. For learning the GSP, an automated self-supervised data-collection method was devised that did not require human supervision. The robot explored two floors of an academic building and collected 230K interactions <math>(x_t, a_t, x_{t+1})</math> (more detail is provided in the appendix of the paper). For visual imitation at test time, the robot was then placed on an unseen floor of the building with different textures and furniture layout.

The collected data was used to train a ''recurrent forward-consistent GSP''. The base architecture for the model was an ImageNet pre-trained ResNet-50 network. The loss weight of the forward model is 0.1 and the objective is minimized using Adam with a learning rate of 5e-4. More details on the implementation are given in the appendix of the paper.

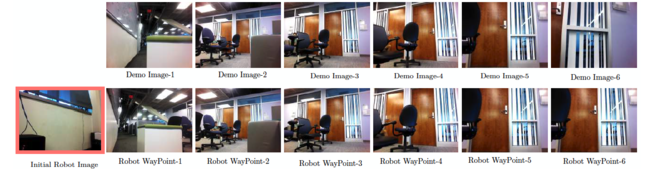

Figure 4 shows the robot's observations during testing. Table 1 shows the results of this experiment; as can be seen, GSP fares much better than all the baselines.


[[File:4-TurtleBot_visualization.png | 650px|thumb|center|Figure 4: Visualization of the TurtleBot trajectory to reach a goal image (right) from the initial image (top-left). Since the initial and goal image has no overlap, the robot first explores the environment by turning in place. Once it detects overlap between its current image and goal image (i.e. step 42 onward), it moves towards the goal. Note that we did not explicitly train the robot to explore and such exploratory behavior naturally emerged from the self-supervised learning.]]

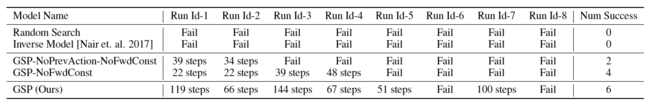

[[File:5-Table1.png | 650px|thumb|center|Table 1: Quantitative evaluation of various methods on the task of navigating using a single image of goal in an unseen environment. Each column represents a different run of our system for a different initial/goal image pair. The full GSP model takes longer to reach the goal on average given a successful run but reaches the goal successfully at a much higher rate.]]

Figure 5 and Table 2 show the results for the robot performing a task with multiple waypoints, i.e. the robot was shown multiple sub-goals instead of just one final goal state. This was required when the end goal was far away from the robot, such as in another room. Notably, zero-shot visual imitation is robust to a changing environment in which every frame need not match the demonstrated frame; this is achieved by providing sparse landmarks.

[[File:6-Turtlebot_visual_2.png | 650px|thumb|center|Figure 5: The performance of TurtleBot at following a visual demonstration given as a sequence of images (top row). The TurtleBot is positioned in a manner such that the first image in the demonstration has no overlap with its current observation. Even under this condition, the robot is able to move closer to the first demo image (shown as Robot WayPoint-1) and then follow the provided demonstration until the end. This also exemplifies a failure case for classical methods; there are no possible keypoint ... WayPoint-1.]]

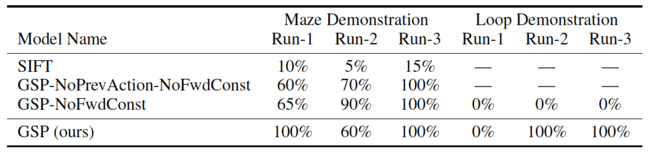

[[File:5-Table2.png | 650px |thumb|center|Table 2: Quantitative evaluation of TurtleBot’s performance at following visual demonstrations in two scenarios: maze and the loop. We report the % of landmarks reached by the agent across three runs of two different demonstrations. Results show that our method outperforms the baselines. Note ...]]

===3D Navigation in VizDoom===

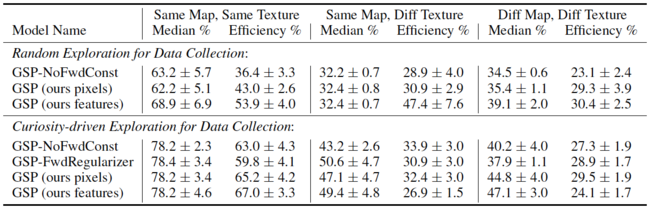


[[File:8-Table3.png | 650px |thumb|center| Table 3: Quantitative evaluation of our proposed GSP and the baseline models at following visual demonstrations in VizDoom 3D Navigation. Medians and 95% confidence intervals are reported for demonstration completion and efficiency over 50 seeds and 5 human paths per environment type.]]


Latest revision as of 18:42, 16 December 2018

This page contains a summary of the paper "Zero-Shot Visual Imitation" by Pathak, D., Mahmoudieh, P., Luo, G., Agrawal, P. et al. It was published at the International Conference on Learning Representations (ICLR) in 2018.

Introduction

The dominant paradigm for imitation learning relies on strong supervision of expert actions to learn both what and how to imitate for a certain task. For example, in the robotics field, Learning from Demonstration (LfD) (Argall et al., 2009; Ng & Russell, 2000; Pomerleau, 1989; Schaal, 1999) requires an expert to manually move robot joints (kinesthetic teaching) or teleoperate the robot to teach the desired task. The expert will, in general, provide multiple demonstrations of a specific task at training time, which the agent forms into observation-action pairs and then distills into a policy for performing the task. In the case of demonstrations for a robot, this heavily supervised process is tedious and does not scale, especially since every new task requires a new set of demonstrations for the robot to learn from. In this paper, an alternative paradigm is pursued wherein an agent first explores the world without any expert supervision and then distills its experience into a goal-conditioned skill policy with a novel forward consistency loss. Videos, models, and more details are available at https://pathak22.github.io/zeroshot-imitation/.

Paper Overview

Observational Learning (Bandura & Walters, 1977), a term from the field of psychology, suggests a more general formulation where the expert communicates what needs to be done (as opposed to how something is to be done) by providing observations of the desired world states via video or sequential images, instead of observation-action pairs. This is the setting the paper adopts, and while it is a harder learning problem, it is potentially more useful because the expert can now convey a large number of tasks to the agent easily (and quickly).

This paper follows (Agrawal et al., 2016; Levine et al., 2016; Pinto & Gupta, 2016), where an agent first explores the environment independently and then distills its observations into goal-directed skills. The word 'skill' denotes a function that predicts the sequence of actions that takes the agent from the current observation to the goal. This function is known as a goal-conditioned skill policy (GSP), and it is learned in a self-supervised way by relabeling states that the agent visited as goals and the actions the agent took as prediction targets. During inference, the GSP recreates the task step-by-step given the goal observations from the demonstration.

A major challenge of learning the GSP is that the distribution of trajectories from one state to another is multi-modal; there are many possible ways of traversing from one state to another. This issue is addressed with the main contribution of this paper, the forward-consistent loss, which essentially says that reaching the goal is more important than how it is reached. First, a forward model that predicts the next observation from the given action and current observation is learned. The difference in the output of the forward model for the GSP-selected action and the ground-truth next state is used to train the model. This forward-consistent loss does not inadvertently penalize actions that are consistent with the ground-truth action, even though the actions are not exactly the same (but lead to the same next state).

As a simple example to explain the forward-consistent loss, imagine a scenario where a robot must grab an object some distance ahead with an obstacle along the pathway. Now suppose that during demonstration the obstacle is avoided by going to the right and then grabbing the object while the agent during training decides to go left and then grab the object. The forward-consistent loss would characterize the action of the robot as consistent with the ground-truth action of the demonstrator and not penalize the robot for going left instead of right.

Of course, when introducing something like the forward-consistent loss, the number of steps needed to reach a certain goal becomes an issue, since different goals require different numbers of steps. To address this, the paper pairs the GSP with a goal recognizer that determines whether the goal has been satisfied with respect to some metric. Figure 1 shows various GSPs, with diagram (d) showing the forward-consistent loss proposed in this paper.

The paper refers to this method as zero-shot because the agent never has access to expert actions, either during training or during the task demonstration. This is different from one-shot imitation learning, where agents have full knowledge of actions and expert demonstrations during the training phase. The agent learns to imitate instead of learning by imitation. The zero-shot imitator is tested on a Baxter robot performing rope manipulation tasks, a TurtleBot performing office navigation, and a series of navigation experiments in VizDoom. Positive results in all three experiments lead to the conclusion that the forward-consistent GSP can be used to imitate a variety of tasks without making environment- or task-specific assumptions.

Related Work

Some key ideas related to this paper are imitation learning, visual demonstration, forward/inverse dynamics and consistency and finally, goal conditioning. The paper has more on each of these topics including citations to related papers. The propositions in this paper are related to imitation learning but the problem being addressed is different in that there is less supervision and the model requires generalization across tasks during inference.

Imitation Learning: The two main threads are behavioral cloning and inverse reinforcement learning. Recent work in imitation learning requires access to the expert's actions; in contrast, the method in this paper does not.

Visual Demonstration: Several papers have focused on relaxing this supervision to visual observations alone, and end-to-end learning has improved their results.

Forward/Inverse Dynamics and Consistency: Forward dynamics models have been learned for planning actions, but without an objective that enforces consistency between the forward and inverse dynamics.

Goal Conditioning: In this paper, systems work from high-dimensional visual inputs instead of knowledge of the true states and do not use a task reward during training.

Learning to Imitate Without Expert Supervision

In this section (and the included subsections) the methods for learning the GSP, forward consistency loss and goal recognizer network are described.

Let [math]\displaystyle{ S : \{x_1, a_1, x_2, a_2, ..., x_T\} }[/math] be the sequence of observation-action pairs generated by the agent as it explores the environment. This exploration data is used to learn the GSP policy.

The learned GSP policy ([math]\displaystyle{ π }[/math]) takes as input a pair of observations [math]\displaystyle{ (x_i, x_g) }[/math] and outputs a sequence of actions [math]\displaystyle{ (\overrightarrow{a}_τ : a_1, a_2, ..., a_K) }[/math] to reach the goal observation [math]\displaystyle{ x_g }[/math] starting from the current observation [math]\displaystyle{ x_i }[/math]. The states (observations) [math]\displaystyle{ x_i }[/math] and [math]\displaystyle{ x_g }[/math] are sampled from [math]\displaystyle{ S }[/math] and need not be consecutive. Given the start and stop states, the number of actions [math]\displaystyle{ K }[/math] is also known. [math]\displaystyle{ π }[/math] can be thought of as a deep network with parameters [math]\displaystyle{ θ_π }[/math].
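The relabeling of exploration data into GSP training examples can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' code: observations are stand-in strings rather than images, and both the helper name `sample_gsp_example` and the `max_gap` cap on how far ahead the goal may lie are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

Observation = str  # stand-in for an image observation x_t
Action = int       # stand-in for a discrete action a_t


def sample_gsp_example(
    observations: Sequence[Observation],  # x_1, ..., x_T from one exploration run
    actions: Sequence[Action],            # a_1, ..., a_{T-1}; actions[t] maps x_t to x_{t+1}
    max_gap: int,                         # hypothetical cap on how far ahead the goal may lie
    rng: random.Random,
) -> Tuple[Observation, Observation, List[Action]]:
    """Relabel a later visited state as the goal and return (x_i, x_g, [a_i, ..., a_{g-1}]).

    The number of actions K = g - i is implied by the sampled indices.
    """
    assert len(actions) == len(observations) - 1
    i = rng.randrange(len(observations) - 1)                        # start index
    g = rng.randint(i + 1, min(i + max_gap, len(observations) - 1))  # goal index, after i
    return observations[i], observations[g], list(actions[i:g])


# Example: relabel a short trajectory.
rng = random.Random(0)
obs = [f"x{k}" for k in range(1, 7)]  # x1 ... x6
acts = [10, 11, 12, 13, 14]           # a1 ... a5
x_i, x_g, seq = sample_gsp_example(obs, acts, max_gap=3, rng=rng)
```

Because the goal is always a state the agent actually visited, the action sequence between the two indices is a valid (if not unique) way to reach it, which is exactly what makes the supervision free.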

At test time, the expert demonstrates a task from which the agent captures a sequence of observations. This set of images is denoted by [math]\displaystyle{ D: \{x_1^d, x_2^d, ..., x_N^d\} }[/math]. The sequence needs to have at least one entry and can be as temporally dense as needed (i.e. the expert can show as many goals or sub-goals as needed to the agent). The agent then uses its learned policy to start from initial state [math]\displaystyle{ x_0 }[/math] and generate actions predicted by [math]\displaystyle{ π(x_0, x_1^d; θ_π) }[/math] to follow the observations in [math]\displaystyle{ D }[/math].

The agent does not have access to the sequence of actions performed by the expert. Hence, it must use the observations to determine if it has reached the goal. A separate goal recognizer network is needed to ascertain if the current observation is close to the current goal or not. This is because multiple actions might be required to reach close to [math]\displaystyle{ x_1^d }[/math]. Knowing this, let [math]\displaystyle{ x_0^\prime }[/math] be the observation after executing the predicted action. The goal recognizer evaluates whether [math]\displaystyle{ x_0^\prime }[/math] is sufficiently close to the goal and if not, the agent executes [math]\displaystyle{ a = π(x_0^\prime, x_1^d; θ_π) }[/math]. Then after reaching sufficiently close to [math]\displaystyle{ x_1^d }[/math], the agent sets [math]\displaystyle{ x_2^d }[/math] as the goal and executes actions. This process is executed repeatedly for each image in [math]\displaystyle{ D }[/math] until the final goal is reached.
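The test-time procedure can be sketched as a loop over the demonstration images. In this sketch the learned GSP, the environment step, and the goal recognizer are passed in as plain callables (`policy`, `step`, and `is_close` are hypothetical names), and `max_steps_per_goal` plays the role of the maximum number of steps; moving on to the next sub-goal when that budget runs out is an assumption of the sketch, not a detail stated in the paper.

```python
from typing import Any, Callable, List, Sequence, Tuple


def follow_demonstration(
    x0: Any,                                # initial observation x_0
    demo: Sequence[Any],                    # D = x_1^d, ..., x_N^d
    policy: Callable[[Any, Any], Any],      # stands in for the GSP pi(x, x_g; theta_pi)
    step: Callable[[Any, Any], Any],        # executes an action and returns the new observation
    is_close: Callable[[Any, Any], bool],   # stands in for the goal recognizer
    max_steps_per_goal: int = 20,           # assumed per-sub-goal step budget
) -> Tuple[List[bool], Any]:
    """Return per-sub-goal success flags and the final observation."""
    x = x0
    reached: List[bool] = []
    for goal in demo:                       # follow the demonstration images in order
        for _ in range(max_steps_per_goal):
            if is_close(x, goal):           # goal recognizer says "close enough"
                break
            x = step(x, policy(x, goal))    # execute a = pi(x, goal) and observe x'
        reached.append(is_close(x, goal))   # assumption: move on even if the budget ran out
    return reached, x


# Toy usage: positions on a line, one unit per action.
flags, final = follow_demonstration(
    0, [3, 5, 2],
    policy=lambda x, g: (g > x) - (g < x),  # move one unit toward the goal
    step=lambda x, a: x + a,
    is_close=lambda x, g: x == g,
)
print(flags, final)  # [True, True, True] 2
```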

Learning the Goal-Conditioned Skill Policy (GSP)

This section first describes the one-step version of the GSP and then extends it to the multi-step version.

A one-step trajectory can be described as [math]\displaystyle{ (x_t; a_t; x_{t+1}) }[/math]. Given [math]\displaystyle{ (x_t, x_{t+1}) }[/math] the GSP policy estimates an action, [math]\displaystyle{ \hat{a}_t = π(x_t; x_{t+1}; θ_π) }[/math]. During training, cross-entropy loss is used to learn GSP parameters [math]\displaystyle{ θ_π }[/math]:
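In standard cross-entropy form, this one-step loss can be written as follows; [math]\displaystyle{ \hat{p} }[/math] is notation introduced here (not taken from the paper) for the action distribution output by the GSP, and the sum runs over the discrete action set:

[math]\displaystyle{ \mathcal{L}(a_t, \hat{a}_t) = -\sum_{a} p(a \mid x_t, x_{t+1}) \log \hat{p}(a \mid x_t, x_{t+1}; θ_π) }[/math]

If [math]\displaystyle{ p }[/math] is taken to be a delta function at [math]\displaystyle{ a_t }[/math], the sum collapses to [math]\displaystyle{ -\log \hat{p}(a_t \mid x_t, x_{t+1}; θ_π) }[/math], the usual classification loss on the ground-truth action.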

[math]\displaystyle{ a_t }[/math] and [math]\displaystyle{ \hat{a}_t }[/math] are the ground-truth and predicted actions, respectively. The conditional distribution [math]\displaystyle{ p }[/math] is not readily available, so it needs to be approximated empirically from the data. In a standard deep learning problem, it is common to assume [math]\displaystyle{ p }[/math] is a delta function at [math]\displaystyle{ a_t }[/math]; that is, given a specific input, the network outputs a single output. However, in this problem several different actions can be correct for the same input, because multiple actions can lead to the same next observation. Multiple outputs for a single input could be modeled with a variational autoencoder, but the authors take a different approach, explained in Sections 2.2-2.4 of the paper and in the following sections.

Forward Consistency Loss

To deal with multi-modality, this paper proposes the forward consistency loss: instead of penalizing the GSP whenever its predicted action differs from the ground truth, the parameters of the GSP are learned so that they minimize the distance between the observation [math]\displaystyle{ \hat{x}_{t+1} }[/math] (the observation resulting from executing the action predicted by the GSP, [math]\displaystyle{ \hat{a}_t = π(x_t, x_{t+1}; θ_π) }[/math]) and the ground-truth observation [math]\displaystyle{ x_{t+1} }[/math]. As a result, the predicted action is not penalized if it leads to the same next state as the ground-truth action. This in turn reduces the variation in gradients (for actions that result in the same next observation) and aids the learning process. This is what is denoted as the forward consistency loss.
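To see why this helps, the toy example below mirrors the left-versus-right obstacle scenario from the overview. It is an illustration under strong simplifying assumptions: a hypothetical four-position ring world in which the actions +2 and -2 reach the same state, one-hot observations, and the true environment used in place of the learned forward model that the paper actually relies on.

```python
import math
from typing import Dict, List

RING = 4                                # hypothetical ring world with positions 0..3
MOVES = {0: +1, 1: -1, 2: +2, 3: -2}    # action index -> displacement on the ring


def env_step(x: int, a: int) -> int:
    """True dynamics of the toy world (stands in for executing the action)."""
    return (x + MOVES[a]) % RING


def one_hot(x: int) -> List[float]:
    return [1.0 if k == x else 0.0 for k in range(RING)]


def action_cross_entropy(probs: Dict[int, float], a_true: int) -> float:
    """Cross-entropy with a delta target at the ground-truth action: -log p_hat(a_t)."""
    return -math.log(probs[a_true])


def forward_consistency(x_t: int, x_next: int, a_hat: int) -> float:
    """||x_{t+1} - x_hat_{t+1}||_2^2, where x_hat_{t+1} comes from executing a_hat."""
    x_hat = env_step(x_t, a_hat)
    return sum((p - q) ** 2 for p, q in zip(one_hot(x_next), one_hot(x_hat)))


x_t, a_true = 0, 2                              # ground truth: move +2
x_next = env_step(x_t, a_true)                  # -> position 2
probs = {0: 0.02, 1: 0.03, 2: 0.05, 3: 0.90}    # the GSP prefers -2 instead
a_hat = max(probs, key=probs.get)               # -> 3
print(round(action_cross_entropy(probs, a_true), 3))  # 2.996
print(forward_consistency(x_t, x_next, a_hat))        # 0.0
```

The action-matching loss is large even though the predicted action reaches exactly the right state, while the forward consistency term is zero; a genuinely wrong action (for example +1) would still be penalized.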

To operationalize the forward consistency loss, a differentiable forward dynamics model is needed that can reliably predict the outcome of an action. The forward dynamics [math]\displaystyle{ f }[/math] are learned from the data by a separate model: given an observation and the action performed, [math]\displaystyle{ f }[/math] predicts the next observation, [math]\displaystyle{ \widetilde{x}_{t+1} = f(x_t, a_t; θ_f) }[/math]. Since [math]\displaystyle{ f }[/math] is not analytic, there is no guarantee that [math]\displaystyle{ \widetilde{x}_{t+1} = \hat{x}_{t+1} }[/math], so an additional term is added to the loss: [math]\displaystyle{ ||x_{t+1} - \hat{x}_{t+1}||_2^2 }[/math]. The parameters [math]\displaystyle{ θ_f }[/math] are inferred by minimizing [math]\displaystyle{ ||x_{t+1} - \widetilde{x}_{t+1}||_2^2 + λ||x_{t+1} - \hat{x}_{t+1}||_2^2 }[/math], where λ is a scalar hyper-parameter. The first term ensures that the learned model explains the ground-truth transitions, while the second term ensures consistency with the GSP network. In summary, the loss function is given below:
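Collecting the terms defined above together with the action-prediction loss [math]\displaystyle{ \mathcal{L} }[/math] of the one-step GSP, the objective can be sketched as follows (adding the cross-entropy term to the two forward terms is this summary's reading, consistent with the joint fine-tuning of [math]\displaystyle{ θ_π,θ_f }[/math] described next):

[math]\displaystyle{ \min_{θ_π, θ_f} \; ||x_{t+1} - \widetilde{x}_{t+1}||_2^2 + λ||x_{t+1} - \hat{x}_{t+1}||_2^2 + \mathcal{L}(a_t, \hat{a}_t) }[/math]

[math]\displaystyle{ \text{s.t.} \quad \widetilde{x}_{t+1} = f(x_t, a_t; θ_f), \quad \hat{x}_{t+1} = f(x_t, \hat{a}_t; θ_f), \quad \hat{a}_t = π(x_t, x_{t+1}; θ_π) }[/math]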

Prior work has shown that learning forward dynamics in a feature space, as opposed to the raw observation space, is more robust. This paper incorporates this by computing the forward consistency loss on feature representations [math]\displaystyle{ \phi(x_t), \phi(x_{t+1}) }[/math] rather than on raw inputs.

Learning the two models [math]\displaystyle{ θ_π,θ_f }[/math] simultaneously from scratch can produce noisy gradient updates. This is addressed by first pre-training the forward model using only the first (ground-truth transition) term and pre-training the GSP separately, blocking the gradient flow between the two. Fine-tuning is then done with [math]\displaystyle{ θ_π,θ_f }[/math] jointly.

The generalization to multi-step GSP [math]\displaystyle{ π_m }[/math] is shown below where [math]\displaystyle{ \phi }[/math] refers to the feature space rather than observation space which was used in the single-step case:
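A form consistent with the one-step loss described above, with each observation replaced by its feature embedding and the terms summed over the steps from [math]\displaystyle{ x_i }[/math] to the goal [math]\displaystyle{ x_g }[/math], is sketched below; the argument list of [math]\displaystyle{ π_m }[/math] follows the description of the recurrent network that comes next and is this summary's reading rather than a verbatim copy of the paper's equation:

[math]\displaystyle{ \min_{θ_π, θ_f, θ_\phi} \sum_{t=i}^{g-1} \Big( ||\phi(x_{t+1}) - \phi(\widetilde{x}_{t+1})||_2^2 + λ||\phi(x_{t+1}) - \phi(\hat{x}_{t+1})||_2^2 + \mathcal{L}(a_t, \hat{a}_t) \Big) }[/math]

[math]\displaystyle{ \text{s.t.} \quad \phi(\widetilde{x}_{t+1}) = f(\phi(x_t), a_t; θ_f), \quad \phi(\hat{x}_{t+1}) = f(\phi(x_t), \hat{a}_t; θ_f), \quad \hat{a}_t = π_m(\phi(x_t), \phi(x_g), a_{t-1}, h_{t-1}; θ_π) }[/math]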

The forward consistency loss is computed at each time step t and jointly optimized with the action prediction loss over the whole trajectory. [math]\displaystyle{ \phi(.) }[/math] is represented by a CNN with parameters [math]\displaystyle{ θ_{\phi} }[/math]. The multi-step forward-consistent GSP [math]\displaystyle{ \pi_m }[/math] is implemented as a recurrent network whose inputs are the current state, the goal state, the action at the previous time step, and the internal hidden representation [math]\displaystyle{ h_{t-1} }[/math], and whose output is the action to take.
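The per-step structure of the multi-step objective can be sketched as a plain loop. Everything here is a stand-in: `phi`, `f`, `policy`, and `action_loss` are hypothetical callables for the CNN encoder, the forward model, the recurrent GSP, and the action-prediction loss; feeding the ground-truth previous action into the recurrence during training (teacher forcing) is an assumption; and no gradients are computed, so the sketch only shows which quantities enter each term.

```python
from typing import Any, Callable, List, Sequence, Tuple

Vec = List[float]


def sq_dist(u: Sequence[float], v: Sequence[float]) -> float:
    """Squared L2 distance ||u - v||_2^2."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def multistep_gsp_loss(
    obs: Sequence[Any],                        # x_i, ..., x_g (last entry is the goal)
    actions: Sequence[Any],                    # a_i, ..., a_{g-1}
    phi: Callable[[Any], Vec],                 # feature encoder (a CNN in the paper)
    f: Callable[[Vec, Any], Vec],              # forward model in feature space
    policy: Callable[[Vec, Vec, Any, Any], Tuple[Any, Any]],  # (phi_t, phi_g, a_prev, h_prev) -> (a_hat, h)
    action_loss: Callable[[Any, Any], float],  # L(a_t, a_hat_t)
    lam: float,                                # weight of the forward consistency term
    h0: Any = None,                            # initial hidden state
) -> float:
    assert len(obs) == len(actions) + 1
    z_goal = phi(obs[-1])
    h, a_prev = h0, None
    total = 0.0
    for t, a_t in enumerate(actions):
        z_t, z_next = phi(obs[t]), phi(obs[t + 1])
        a_hat, h = policy(z_t, z_goal, a_prev, h)  # recurrent GSP step
        z_tilde = f(z_t, a_t)                      # prediction for the ground-truth action
        z_hat = f(z_t, a_hat)                      # prediction for the GSP's action
        total += (sq_dist(z_next, z_tilde)
                  + lam * sq_dist(z_next, z_hat)
                  + action_loss(a_t, a_hat))
        a_prev = a_t                               # assumed teacher forcing for the previous action
    return total


# Toy check with scalar "features" and an additive forward model.
phi = lambda x: [float(x)]
f = lambda z, a: [z[0] + a]
sq = lambda a, b: (a - b) ** 2
perfect = multistep_gsp_loss([0, 1, 2], [1, 1], phi, f,
                             policy=lambda z, g, a, h: (1, h), action_loss=sq, lam=0.1)
overshoot = multistep_gsp_loss([0, 1, 2], [1, 1], phi, f,
                               policy=lambda z, g, a, h: (2, h), action_loss=sq, lam=0.1)
print(perfect, round(overshoot, 6))  # 0.0 2.2
```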

Goal Recognizer

The goal recognizer network was introduced to determine whether the current goal has been reached. This allows the agent to take multiple steps between goals without being penalized. In this paper, goal recognition is treated as a binary classification problem: given an observation [math]\displaystyle{ x_i }[/math] and a goal [math]\displaystyle{ x_g }[/math], infer whether [math]\displaystyle{ x_i }[/math] is close to [math]\displaystyle{ x_g }[/math]. Because there is no expert supervision of the goals, goal observations are drawn at random from the agent's own experience, which guarantees that they are feasible. Additionally, a maximum number of iterations is used to prevent the sequence of actions from getting too long.

The goal recognizer was trained on data from the agent's random exploration. Pseudo-goal states were sampled from the visited states, and all observations within a few timesteps of these were labeled as positives (close to the goal). The goal classifier was trained using the standard cross-entropy loss.
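The pseudo-goal labeling can be sketched as follows. The helper name, the `window` size standing in for "a few timesteps", and in particular the choice of negatives (observations farther than `window` steps from the pseudo-goal, subsampled at random) are assumptions for illustration; the summary only states how positives are defined.

```python
import random
from typing import Any, List, Sequence, Tuple


def goal_recognizer_pairs(
    observations: Sequence[Any],  # visited observations from random exploration, in time order
    num_goals: int,               # how many pseudo-goals to sample
    window: int,                  # assumed positive radius ("a few timesteps")
    num_negatives: int,           # assumed number of negatives per pseudo-goal
    rng: random.Random,
) -> List[Tuple[Any, Any, int]]:
    """Build (x_i, x_g, label) triples for the binary goal classifier."""
    T = len(observations)
    data: List[Tuple[Any, Any, int]] = []
    for _ in range(num_goals):
        g = rng.randrange(T)  # pseudo-goal sampled from the visited states
        # Positives: observations within `window` timesteps of the pseudo-goal.
        for i in range(max(0, g - window), min(T, g + window + 1)):
            data.append((observations[i], observations[g], 1))
        # Negatives (assumption): observations farther away in time, subsampled.
        far = [i for i in range(T) if abs(i - g) > window]
        for i in rng.sample(far, min(num_negatives, len(far))):
            data.append((observations[i], observations[g], 0))
    return data


rng = random.Random(1)
obs = list(range(20))  # toy "observations" equal to their time index
data = goal_recognizer_pairs(obs, num_goals=10, window=2, num_negatives=5, rng=rng)
```

Each triple would then be fed to the classifier, which is trained with the cross-entropy loss mentioned above.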

The authors found that training a separate goal recognition network outperformed simply adding a 'stop' action to the action space of the policy network.

Ablations and Baselines

To summarize, the GSP formulation is composed of (a) a recurrent variable-length skill policy network, (b) explicit encoding of the previous action in the recurrence, (c) a goal recognizer, (d) the forward consistency loss function, and (e) learning forward dynamics in the feature space instead of the raw observation space.

To show the importance of each component, the paper systematically ablates (removes) components in each experiment and measures the impact on visual imitation. The following methods are evaluated in the experiments section:

- Classical methods: In visual navigation, the paper attempts to compare against the state-of-the-art ORB-SLAM2 and Open-SFM.

- Inverse model: Nair et al. (2017) leverage vanilla inverse dynamics to follow demonstration in rope manipulation setup.

- GSP-NoPrevAction-NoFwdConst is the paper's recurrent GSP without previous-action history and without the forward consistency loss.

- GSP-NoFwdConst refers to the recurrent GSP with previous-action history, but without the forward consistency objective.

- GSP-FwdRegularizer refers to the model where forward prediction is only used to regularize the features of GSP but has no role to play in the loss function of predicted actions.

- GSP refers to the complete method with all the components.

Experiments

The model is evaluated by testing performance on a rope manipulation task using a Baxter Robot, navigation of a TurtleBot in cluttered office environments and simulated 3D navigation in VizDoom. A good skill policy will generalize to unseen environments and new goals while staying robust to irrelevant distractors and observations. For the rope manipulation task this is tested by making the robot tie a knot, a task it did not observe during training. For the navigation tasks, generalization is checked by getting the agents to traverse new buildings and floors.

Rope Manipulation

Rope manipulation is an interesting task because even humans learn complex rope manipulation, such as tying knots, via observing an expert perform it.

In this paper, rope manipulation data collected by Nair et al. (2017) is used, where a Baxter robot manipulated a rope kept on a table in front of it. During this exploration, the robot picked up the rope at a random point and displaced it randomly on the table. 60K interaction pairs were collected of the form [math]\displaystyle{ (x_t, a_t, x_{t+1}) }[/math]. These were used to train the GSP proposed in this paper.

For this experiment, the Baxter robot is set up exactly like the one presented in Nair et al. (2017). The robot is tasked with manipulating the rope into an 'S' as well as tying a knot as shown in Figure 2. In testing, the robot was only provided with images of intermediate states of the rope, and not the actions taken by the human trainer. The thin plate spline robust point matching technique (TPS-RPM) (Chui & Rangarajan, 2003) is used to measure the performance of constructing the 'S' shape as shown in Figure 3. Visual verification (by a human) was used to assess the tying of a successful knot.

The base architecture consisted of a pre-trained AlexNet whose features were fed into a skill policy network that predicts the location of the grasp, the direction of displacement, and the magnitude of displacement. All models were optimized using Adam with a learning rate of 1e-4. For the first 40K iterations, the AlexNet weights were frozen; they were then fine-tuned jointly with the later layers. More details are provided in the appendix of the paper.

The approach of this paper is compared to (Nair et al., 2017) where they did similar experiments using an inverse model. The results in Figure 3 show that for the 'S' shape construction, zero-shot visual imitation achieves a success rate of 60% versus the 36% baseline from the inverse model.

In this experiment, the robot, a TurtleBot2, was shown a single image or multiple images leading to the goal and had to move to the goal autonomously. For learning the GSP, an automated self-supervised data collection method was devised that did not require human supervision. The robot explored two floors of an academic building and collected 230K interactions [math]\displaystyle{ (x_t, a_t, x_{t+1}) }[/math] (more detail is provided in the appendix of the paper). The robot was then placed on an unseen floor of the building, with different textures and furniture layout, to perform visual imitation at test time.

The collected data was used to train a recurrent forward-consistent GSP. The base architecture for the model was an ImageNet pre-trained ResNet-50 network. The loss weight of the forward model is 0.1 and the objective is minimized using Adam with a learning rate of 5e-4. More details on the implementation are given in the appendix of the paper.

Figure 4 shows the robot's observations during testing. Table 1 shows the results of this experiment; GSP fares much better than all the baselines.

Figure 5 and Table 2 show the results for the robot performing a task with multiple waypoints, i.e., the robot was shown multiple sub-goals instead of just one final goal state. This was required when the end goal was far away from the robot, such as in another room. Note that zero-shot visual imitation is robust to a changing environment, since every frame need not match the demonstrated frame; this is achieved by providing only sparse landmarks.

3D Navigation in VizDoom

To round off the experiments, a VizDoom simulation environment was used to test the GSP. VizDoom is a popular Doom-based reinforcement learning testbed that lets agents play Doom using only the screen buffer. As a 3D simulation environment, it is traditionally considered harder than 2D domains such as Atari. The goals were to measure the robustness of each method with proper error bars, the role of the initial self-supervised data collection, and the quantitative difference between modeling the forward consistency loss in feature space and in raw visual space.

Data were collected using two methods: random exploration and curiosity-driven exploration (Pathak et al., 2017). The hypothesis is that better exploration data, rather than purely random exploration, can lead to a better learned GSP. More details on the implementation are given in the paper's appendix.

Table 3 shows the results of the VizDoom experiments. The paper reports the median of the maximum distance reached by the agent in following the given sequence of demonstration images, where the maximum distance reached is the distance of the farthest landmark that the agent reaches contiguously. Additionally, it reports the ratio of the number of steps taken by the agent to reach the landmark to the number of steps shown in the human demonstrations. The key takeaway is that data collected via curiosity seems to improve the final imitation performance across all methods.
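The two VizDoom metrics can be computed as in the sketch below. It assumes that each run is recorded as a per-landmark reached/missed list and that the hypothetical `landmark_dist` list holds each landmark's distance along the demonstrated path; reading "contiguously" as "stop counting at the first missed landmark" is this sketch's interpretation.

```python
from statistics import median
from typing import List, Sequence


def max_contiguous_distance(reached: Sequence[bool], landmark_dist: Sequence[float]) -> float:
    """Distance of the farthest landmark reached without missing an earlier one."""
    best = 0.0
    for hit, dist in zip(reached, landmark_dist):
        if not hit:
            break  # a missed landmark ends the contiguous stretch
        best = dist
    return best


def efficiency_ratio(agent_steps: int, demo_steps: int) -> float:
    """Steps taken by the agent relative to the steps in the human demonstration."""
    return agent_steps / demo_steps


# Example with three hypothetical runs over four landmarks.
dists = [5.0, 12.0, 20.0, 31.0]
runs: List[List[bool]] = [
    [True, True, False, True],  # the later hit on landmark 4 does not count
    [True, True, True, True],
    [True, False, True, True],
]
print(median([max_contiguous_distance(r, dists) for r in runs]))  # 12.0
print(efficiency_ratio(agent_steps=150, demo_steps=100))          # 1.5
```

Reporting the median rather than the mean keeps a single lucky or catastrophic run from dominating the summary, which matters when results are aggregated over many seeds and paths.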

Discussion

This work presented a method for imitating expert demonstrations from visual observations alone. The key idea is to learn a GSP utilizing data collected by self-supervision. A limitation of this approach is that the quality of the learned GSP is restricted by the exploration data. For instance, moving to a goal in between rooms would not be possible without an intermediate sub-goal. So future research in zero-shot imitation could aim to generalize the exploration so that the agent is able to explore across different rooms, for example.

A limitation of the work in this paper is that the method requires first-person view demonstrations. Extending it to third-person demonstrations could yield a more general framework. Also, the current framework assumes that the visual observations of the expert and the agent are similar. When the expert performs a demonstration in one setting, such as daylight, and the agent performs the task in another, such as the evening, results may worsen.

The expert demonstrations are also purely imitated; that is, the agent does not learn from the demonstrations. Future work could look into learning from the demonstrations so as to enrich the agent's exploration techniques.

This work used a sequence of images to provide a demonstration but the work, in general, does not make image-specific assumptions. Thus the work could be extended to using formal language to communicate goals, an idea left for future work. Future work would also explore how multiple tasks can be combined into a single model, where different tasks might come from different contexts. Finally, it would be exciting to explore explicit handling of domain shift in future work, so as to handle large differences in embodiment and learn skills directly from videos of human demonstrators obtained, for example, from the Internet.

The architecture introduced in the paper, which performs imitation without explicit action demonstrations and relies only on autonomous exploration, addresses one of the main research areas in current robotics. This approach could be made more robust by using additional sensors, in particular LIDAR scanners. 3D sensors give the agent a highly detailed representation of its environment, which in turn could shorten the time required to complete the task and improve the overall accuracy of both localization and object manipulation.

Critique

1. The paper is well written and easy to understand. In addition, the experimental evaluations are promising. Also, the proposed method is novel and interesting, and could be used as an alternative to pure RL.

2. The authors do not clearly explain why zero-shot imitation should be used instead of a trained reinforcement learning model, so more details on this issue are needed.

3. It is good to see experimental evaluations on real robots. However, the scalability of the method is not demonstrated: the paper does not show how to extend it to higher-dimensional action spaces or whether it becomes expensive in such spaces.

4. Another test, in which the goal is fixed and the robot remains in its original position, would likely provide interesting insight. Moving obstacles could also be integrated into the test.

References

[1] D. Pathak, P. Mahmoudieh, G. Luo, P. Agrawal, D. Chen, Y. Shentu, E. Shelhamer, J. Malik, A. A. Efros, and T. Darrell. Zero-shot Visual Imitation. In ICLR, 2018.

[2] Brenna D Argall, Sonia Chernova, Manuela Veloso, and Brett Browning. A survey of robot learning from demonstration. Robotics and autonomous systems, 2009.

[3] Albert Bandura and Richard H Walters. Social learning theory, volume 1. Prentice-hall Englewood Cliffs, NJ, 1977.

[4] Pulkit Agrawal, Ashvin Nair, Pieter Abbeel, Jitendra Malik, and Sergey Levine. Learning to poke by poking: Experiential learning of intuitive physics. NIPS, 2016.

[5] Sergey Levine, Peter Pastor, Alex Krizhevsky, and Deirdre Quillen. Learning hand-eye coordination for robotic grasping with large-scale data collection. In ISER, 2016.

[6] Lerrel Pinto and Abhinav Gupta. Supersizing self-supervision: Learning to grasp from 50k tries and 700 robot hours. ICRA, 2016.

[7] Ashvin Nair, Dian Chen, Pulkit Agrawal, Phillip Isola, Pieter Abbeel, Jitendra Malik, and Sergey Levine. Combining self-supervised learning and imitation for vision-based rope manipulation. ICRA, 2017.

[8] Deepak Pathak, Pulkit Agrawal, Alexei A. Efros, and Trevor Darrell. Curiosity-driven exploration by self-supervised prediction. In ICML, 2017.