Word translation without parallel data

Presented by

Xia Fan

Introduction

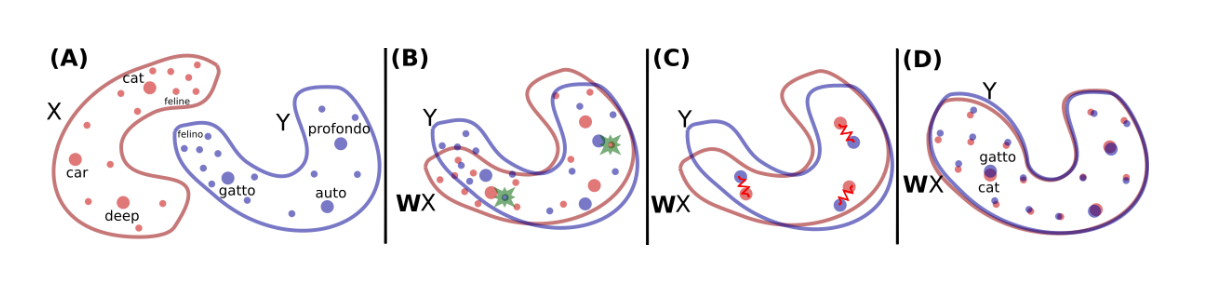

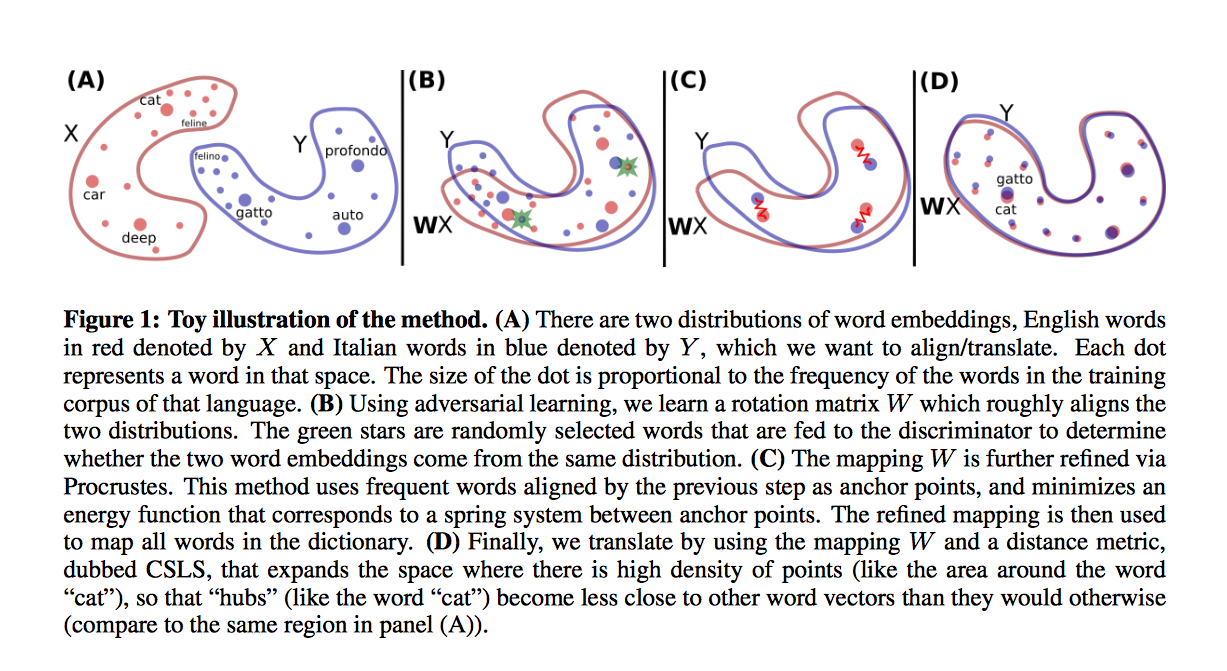

This paper introduce a model that either is on par, or outperforms supervised state-of-the-art methods, without employing any cross-lingual annotated data. This method use a similar idea with GAN: it leverages adversarial training to learn a linear mapping from a source to a distinguish between the mapped source embeddings and the target embeddings, while the mapping is jointly trained to fool the discriminator. Second, this paper extract a synthetic dictionary from the resulting shared embedding space and fine-tune the mapping with the closed-form Procrustes solution from Schonemann (1966). Third, this paper also introduce an unsupervised selection metric that is highly correlated with the mapping quality and that we use both as a stopping criterion and to select the best hyper-parameters.

Model

Estimation of Word Representations in Vector Space

This model focuses on learning a mapping between the two sets such that translations are close in the shared space. Before talking about the model it used, a model which can exploit the similarities of monolingual embedding spaces should be introduced. Mikolov et al.(2013) use a known dictionary of n=5000 pairs of words [math]\displaystyle{ \{x_i,y_i\}_{i\in{1,n}} }[/math]. and learn a linear mapping W between the source and the target space such that

\begin{align} W^*=argmin_{W{\in}M_d(R)}||WX-Y||_F \hspace{1cm} (1) \end{align}

where d is the dimension of the embeddings, [math]\displaystyle{ M_d(R) }[/math] is the space of d*d matrices of real numbers, and X and Y are two aligned matrices of size d*n containing the embeddings of the words in the parallel vocabulary.

Xing et al. (2015) showed that these results are improved by enforcing orthogonality constraint on W. In that case, the equation (1) boils down to the Procrustes problem, which advantageously offers a closed form solution obtained from the singular value decomposition (SVD) of [math]\displaystyle{ YX^T }[/math] :

\begin{align} W^*=argmin_{W{\in}M_d(R)}||WX-Y||_F=UV^T, with U\Sigma V^T=SVD(YX^T) \end{align}

This paper shows how to learn this mapping W without cross-lingual supervision. An illustration of the approach is given in Fig. 1. First, this model learn an initial proxy of W by using an adversarial criterion. Then, it use the words that match the best as anchor points for Procrustes. Finally, it improve performance over less frequent words by changing the metric of the space, which leads to spread more of those points in dense region.

Reall that an Artifical Neural Network (ANN) is a nonlinear function [math]\displaystyle{ \mathcal{N}:\mathbb{R}^{p_0} \rightarrow \mathbb{R} }[/math] that computes an output through iterative nonlinear function applications to an input vector [math]\displaystyle{ \mathbf{z}_0\in \mathbb{R}^{p_0} }[/math]. The updates are said to occur in layers, producing the sequence of vectors associated with each layer as [math]\displaystyle{ \left\{\mathbf{z}_0,\mathbf{z}_1,\dots,\mathbf{z}_N\right\} }[/math]. Any [math]\displaystyle{ \mathbf{z}_n }[/math] for [math]\displaystyle{ n \in \left\{1,\ldots,N\right\} }[/math] is computed recursively from [math]\displaystyle{ \mathbf{z}_0 }[/math] with the weight matrices and bias vectors as per the rule

[math]\displaystyle{ \mathbf{z}_n = \sigma_n\left(\mathbf{W}_{n}\mathbf{z}_{n-1} + \mathbf{b}_{n}\right), \quad 1 \leq n \leq N }[/math]

where [math]\displaystyle{ \mathbf{W}_{n} \in \mathbb{R}^{p_n \times p_{n-1}} }[/math] is the weight matrix and [math]\displaystyle{ \mathbf{b}_{n} \in \mathbb{R}^{p_n} }[/math] is the bias vector associated with the [math]\displaystyle{ n }[/math]th layer in the network.

The element-wise vector function [math]\displaystyle{ \sigma_n\left(\cdot\right) }[/math] is sigmoid-like for each component in its domain, outputing a value that ranges in [math]\displaystyle{ [0,1] }[/math]. Typically, the functions [math]\displaystyle{ \left\{\sigma_n\left(\cdot\right)\right\} }[/math] are the same for [math]\displaystyle{ n \lt N }[/math], but the final output [math]\displaystyle{ \sigma_N(\cdot) }[/math] depends on the network architecture—for instance, it may be a softmax function for multi-label classification. Thus the network is completed characterized by its weights and biases as the tuple [math]\displaystyle{ (\left\{\mathbf{W}_{n}\right\},\left\{\mathbf{b}_{n}\right\}) }[/math].

A sample network for [math]\displaystyle{ N = 2 }[/math] is depicted below: a graph of an ANN with [math]\displaystyle{ N = 2 }[/math], where the vertices represent the vectors [math]\displaystyle{ \left\{\mathbf{z}_n\right\}_{n=0}^2 }[/math] in their respective layers. Edges denote computation, where the vector transformations have been overlaid to show sample dimensions of [math]\displaystyle{ \mathbf{W}_1 }[/math] and [math]\displaystyle{ \mathbf{W}_2 }[/math], such that they match the vectors [math]\displaystyle{ \mathbf{z}_0 }[/math] and [math]\displaystyle{ \mathbf{z}_1 }[/math].

A Recurrent Neural Network is a generalization of an ANN for a sequence of inputs [math]\displaystyle{ \left\{\mathbf{z}_0^{[t]}\right\} }[/math] where [math]\displaystyle{ t \in \left\{1,\ldots,T\right\} }[/math] such that there are recurrent connections between the intermediary vectors [math]\displaystyle{ \left\{\mathbf{z}_n\right\} }[/math] for different so-called time steps. These connections are made to represent conditioning on the previous vectors in the sequence: supposing the sequence were a vectorized representation of the words, an input to the network could be: [math]\displaystyle{ \left\{\mathbf{z}_0^{[1]},\mathbf{z}_0^{[2]},\mathbf{z}_0^{[3]}\right\} = \left\{\text{pass}, \text{the}, \text{sauce}\right\} }[/math]. In a language modelling problem for predictive text, the probability of obtaining [math]\displaystyle{ \mathbf{z}_0^{[3]} }[/math] is strongly conditioned on the previous words in the sequence. As such, additional recurrence weight matrices are added to the update rule for [math]\displaystyle{ 1 \leq n \leq N }[/math] and [math]\displaystyle{ t \gt 1 }[/math] to produce the recurrent update rule

[math]\displaystyle{ \mathbf{z}_n^{[t]} = \sigma_n\left( \mathbf{b}_{n} + \mathbf{W}_{n}\mathbf{z}_{n-1}^{[t]} + \mathbf{R}_n\mathbf{z}_{n}^{[t-1]} \right) }[/math]

where [math]\displaystyle{ \mathbf{R}_n \in \mathbb{R}^{p_n \times p_n} }[/math] is the recurrence matrix that relates the [math]\displaystyle{ n }[/math]th layer’s output for item [math]\displaystyle{ t }[/math] to its previous output for item [math]\displaystyle{ t-1 }[/math]. The network architecture for a single layer [math]\displaystyle{ n }[/math] at step [math]\displaystyle{ t }[/math] is pictured below. This is a schematic of an RNN layer [math]\displaystyle{ n }[/math] at step [math]\displaystyle{ t }[/math] with recurrence on the output of [math]\displaystyle{ \mathbf{z}_n^{[t-1]} }[/math], with the dimensions of the matrices [math]\displaystyle{ \mathbf{R}_{n} }[/math] and [math]\displaystyle{ \mathbf{W}_{n} }[/math] pictured.

RNN Architecture by Graves, 2013

The RNN update rule used by Sutskever et al. comes from a paper by Graves (2013). The connections between layers are denser in this case. The final layer is fully connected to every preceding layer execept for the input [math]\displaystyle{ \mathbf{z}_0^{[t]} }[/math], and follows the update rule

[math]\displaystyle{ \mathbf{z}_{N}^{[t]} = \sigma_n\left( \mathbf{b}_N + \displaystyle\sum_{n' = 1}^{N-1} \mathbf{W}_{N,n'}\mathbf{z}_{n'}^{[t]} \right) }[/math]

where [math]\displaystyle{ \mathbf{W}_{N,n'} \in \mathbb{R}^{p_N\times p_{n'}} }[/math] denotes the weight matrix between layer [math]\displaystyle{ n' }[/math] and [math]\displaystyle{ N }[/math].

The layers 2 through [math]\displaystyle{ N-1 }[/math] have additional connections to [math]\displaystyle{ \mathbf{z}_0^{[t]} }[/math] as

[math]\displaystyle{ \mathbf{z}_n^{[t]} = \sigma_n\left( \mathbf{b}_{n} + \mathbf{W}_{n}\mathbf{z}_{n-1}^{[t]} + \mathbf{W}_{n,0}\mathbf{z}_0^{[t]} + \mathbf{R}_n\mathbf{z}_{n}^{[t-1]} \right), }[/math]

where, again, [math]\displaystyle{ \mathbf{W}_{n,n'} }[/math] must be of size [math]\displaystyle{ \mathbb{R}^{p_n\times p_{n'}} }[/math]. The first layer has the typical RNN input rule as before,

[math]\displaystyle{ \mathbf{z}_{1}^{[t]} = \sigma_1\left( \mathbf{b}_{1} + \mathbf{W}_{1}\mathbf{z}_{0}^{[t]} + \mathbf{R}_{1}\mathbf{z}_{1}^{[t-1]} \right). }[/math]

Long Short-Term Memory Recurrent Neural Network (LSTM)

Recurrent neural networks are a variation of deep neural networks that are capable of storing information about previous hidden states in special memory layers.<ref name=lstm> Hochreiter, Sepp, and Jürgen Schmidhuber. "Long short-term memory." Neural computation 9.8 (1997): 1735-1780. </ref> Unlike feed forward neural networks that take in a single fixed length vector input and output a fixed length vector output, recurrent neural networks can take in a sequence of fixed length vectors as input, because of their ability to store information and maintain a connection between inputs through this memory layer. By comparison, previous inputs would have no impact on current output for feed forward neural networks, whereas they can impact current input in a recurrent neural network. (This paper used the LSTM formulation from Graves<ref name=grave> Graves, Alex. "Generating sequences with recurrent neural networks." arXiv preprint arXiv:1308.0850 (2013). </ref>)

This form of input fits naturally with language translation, as sentences are sequences of words, and many problems regarding representing variable length sentences as fixed length vectors can be avoided. However, training recurrent neural networks to learn long time lag dependencies--where inputs many time steps back can heavily influence current output--is difficult, and generally results in exploding or vanishing gradients. A variation of recurrent neural networks, the long short-term memory neural network, was used instead for this paper, as they do not suffer as much from the vanishing gradient problem.

The purpose of LSTM in this case is to estimate the conditional probability of the output sequence, [math]\displaystyle{ \,(y_1,\cdots,y_{T'}) }[/math], based on the input sequence, [math]\displaystyle{ \,(x_1,\cdots,x_{T}) }[/math], where [math]\displaystyle{ \,T }[/math] does not have to equal [math]\displaystyle{ \,T' }[/math]

Let [math]\displaystyle{ \,v }[/math] represent the state of hidden layers after [math]\displaystyle{ \,(x_1,\cdots,x_{T}) }[/math] have been inputted into the LSTM, i.e. what has been stored in the neural network's memory. Then

- [math]\displaystyle{ \,p(y_1,\cdots,y_{T'}|x_1,\cdots,x_{T})=\prod_{t=1}^{T'} p(y_t|v,y_1,\cdots,y_{t-1}) }[/math]

For each [math]\displaystyle{ \,p(y_t|v,y_1,\cdots,y_{t-1}) }[/math], The LSTM neural network at time step [math]\displaystyle{ \,t }[/math] after [math]\displaystyle{ \,(x_1,\cdots,x_T,y_1,\cdots,y_{t-1}) }[/math] have been inputted would output the relative probability of each word in the vocabulary and softmax function, [math]\displaystyle{ \,\frac{e^{x_b}}{\sum_{t=1}^N e^{x_t}}\, }[/math] can be applied to this output vector to generate the corresponding probability. From this, we can calculate any [math]\displaystyle{ \,p(y_1,\cdots,y_{T'}|x_1,\cdots,x_{T}) }[/math] by repeatedly adding [math]\displaystyle{ \,y_t }[/math] as input into the LSTM neural network to calculate the new set of probabilities.

The objective function used during the training process was:

- [math]\displaystyle{ \,\frac{1}{|T_r|}\sum_{(S,T)\in T_r} \log(p(T|S))\, }[/math]

Where [math]\displaystyle{ \,S }[/math] is the base/source sentence, [math]\displaystyle{ \,T }[/math] is the paired translated sentence and [math]\displaystyle{ \,T_r }[/math] is the total training set. This objective function is to maximize the log probability of a correct translation [math]\displaystyle{ \,T }[/math] given the base/source sentence [math]\displaystyle{ \,S }[/math] over the entire training set. Once the training is complete, translations are produced by finding the most likely translation according to LSTM:

- [math]\displaystyle{ \hat{T} = \underset{T}{\operatorname{arg\ max}}\ p(T|S) }[/math]

It has been showed that Long Short-Term Memory recurrent neural networks have the ability to generate both discrete and real-valued sequences with complex, long-range structure using next-step prediction <ref name=grave>

Reference

</ref>.

Input and Output Data Transformation

About 12 million English-French sentence pairs were used during the training with a vocabulary of 160,000 for English and 80,000 for French. Any unknown words were replaced with a special token. All sentences were attached with an <EOS> token to indicate end of sentence.

Additionally, input sentences were entered backwards as the researchers found this significantly increased accuracy. For example, using the sentence "Today I went to lectures.", the input order would be "lectures,to,went,I,Today". They suspect this is due to reduction of time lag between the beginning of each sentence.

To decode a translation after training, a simple left to right beam search algorithm is used. This process goes as follows, a small number of initial translations with highest probabilities are picked at the start. Each translation is then re-entered into the LSTM independently and a new small set of words with highest probabilities are appended to the end of each translation. This repeats until <EOS> token is chosen and the completely translated sentence is added to the final translation set which is then ranked and highest ranking translation chosen.

Training and Results

Training Method

Two LSTM neural networks were used overall; one to generate a fixed vector representation from the input sequence and another to generate the output sequence from the fixed vector representation. Each neural network had 4 layers and 1000 cells per layer and [math]\displaystyle{ \,v }[/math] can be represented by the 8000 real numbers in each cell's memory after the input sequence has been entered. Stochastic gradient descent with a batch size of 128 and learning rate of 0.7 was used. Initial parameters were set using a uniform distribution between -0.08 and 0.08. LSTM does not suffer from the vanishing gradient problem, but it can be affected by exploding gradients which is taken into account by enforcing a hard constraint on the norm of the gradient.

Scoring Method

Scoring was done using the BLEU (Bilingual Evaluation Understudy) metric. This is an algorithm created for evaluating the quality of machine translated text. This is done by using a modified form of precision to compare a produced translation against a set of reference translations. This metric tends to correlate well with human judgement across a corpus, but performs badly if used to evaluate individual sentences. More information can be found in the BLEU paper and the wikipedia article. These resources both state that the BLEU score is a number between 0 and 1, with closer to 1 corresponding to a better translation. The LSTM paper reports BLEU scores in percentage values.

Results

The resulting LSTM neural networks outperformed standard Statistical Machine Translation (SMT) with a BLEU score of 34.8 against 33.3 and with certain heuristics or modification, was very close to matching the best performing system. Additionally, it could recognize sentences in both active and passive voice as being similar.

Active Voice: I ate an apple.

Passive Voice: The apple was eaten by me.

An interesting result is the fact that reversing the source sentences (not test sentences) improved the long sentence decoding, which in turn increased the BLEU score from 25.9 to 30.6. While the authors do not have a complete explanation, they theorize the improvement in performance is due to the introduction of many short term dependencies to the data-set, by reversing the source sentences they minimize the time lag between the end of the source and the start of the target sentence. This reduction in the time lag is what the authors believe help the LSTM architecture establish a link between source and target and utilize the memory feature of the network better. Note, that the mean time lag does not change. Given the input sequence [math]\displaystyle{ (x_1, \dots, x_T) }[/math] and the target sequence [math]\displaystyle{ (y_1, \dots, y_T) }[/math], the sequence of time lags is [math]\displaystyle{ \Delta t = (T, \dots, T) }[/math] and [math]\displaystyle{ \frac{1}{T} \sum_{\Delta t_i} T = T }[/math]. If, however, the input is reversed, the sequence of time lags of corresponding inputs is [math]\displaystyle{ \Delta t = (1, 3, \dots, 2T - 1) }[/math] which still has a mean time lag of [math]\displaystyle{ \frac{1}{T} \sum_{\Delta t_i} (2i - 1) = \frac{1}{T} \sum_{i = 1}^{T/2} (2i + 2(T-i)) = T }[/math] (assuming even T, but odd T can be shown similarly). Thus, half of the time lags are shorter with the reversed input sequence.

For example, let "I saw the man" be the source sentence, "with the binoculars" be the target sentence, if we concatenate both source and target sentences we have "I saw the man with the binoculars". By reversing the source sentence ("man the saw I") the subject "man" is now closer to the context target "binoculars", compared to if the source sentence is not reversed. Reversing the input sentences leads to more confident predictions in the early parts of the target sentence and to less confident predictions in the later parts. Also, it results in LSTMs with better memory utilization.

In summary the LSTM method has proven to be quite capable of translating long sentences despite potentially long delay between input time steps. However, it still falls short of [Edinburgh's specialised statistical model http://www.statmt.org/OSMOSES/sysdesc.pdf].

Some developments of LSTM

Dealing with the very rare words are a challenge for the LSTM. A weakness of LSTM is its inability to (correctly) translate the very rare words that comes up with out-of-vocabulary words, i.e. no translation. The long short-term dependencies that are induced in this method can lead to less likelihood for a long sequence words come after the unknown words. In the other hand, these untranslated words impose a longer temporal memory that decrease the overall efficiency of network. Sutskever I ([1]) suggested a method to address the rare word problem. They assumed that if the origin of any undefined word is known then the word can be look up by introducing a post-processing step. This step would replace each unknown word in the system’s output with a translation of its source word. They proposed three strategies to track the source and translate it using either a dictionary or the identity translation.

Open questions

The results of the paper pose some interesting questions which are not discussed in the paper itself:

- Instead of reversing the input sequence the target sequence could be reversed. This would change the time lags between corresponding words in a similar way, but instead of reducing the time lag between the first half of corresponding words, it is reduced between the last half of the words. This might allow conclusions about whether the improved performance is purely due to the reduced minimal time lag or whether structure in natural language is also important (e.g. when a short time lag between the first few words is better than a short time lag between the last few words of sentence).

- For half of the words the time lag increases to more than the average. Thus, they might have only a minor contribution to the model performance. It could be interesting to see how much the performance is affected by leaving those words out of the input sequence. Or more generally, one could ask, how does the performance related to the number of used input words?

More Formulations of Recurrent Neural Networks

The standard RNN is formalized as follows

- [math]\displaystyle{ \,h_t=\tanh(W_{hx}x_t+W_{hh}h_{t-1}+b_h) }[/math]

- [math]\displaystyle{ \,o_t=W_{oh}h_t+b_o }[/math]

Given sequence of input vectors [math]\displaystyle{ \,(x_1,\cdots,x_{T}) }[/math], the RNN computes a sequence of hidden states [math]\displaystyle{ \,(h_1,\cdots,h_{T}) }[/math] and a sequence of output [math]\displaystyle{ \,(o_1,\cdots,o_{T}) }[/math] by iterating the above equations. [math]\displaystyle{ \,W_{hx} }[/math] is the input to hidden weight matrix, [math]\displaystyle{ \,W_{hh} }[/math] is the hidden to hidden weight matrix, [math]\displaystyle{ \,W_{oh} }[/math] is the hidden to output weight matrix. Vector [math]\displaystyle{ \,b_{h} }[/math] and [math]\displaystyle{ \,b_{o} }[/math] are the biases. When t=1, the undefined [math]\displaystyle{ \,W_{hh}h_{t-1} }[/math] is replace with a special initial bias vector, [math]\displaystyle{ \,h_{init} }[/math].

It may seem to train RNNs with gradient descent, but in reality, gradient decays exponentially as it is backpropagated through time. The relation between parameter and dynamics of the RNN is highly unstable, which makes gradient descent ineffective. Thus, it argues that RNN can not learn long-range temporal dependencies when gradient descent is used for training. A good way to deal with inability of gradient descent to learn long-range temporal structure in RNN is known as "Long-Short Term memory". (http://www.cs.utoronto.ca/~ilya/pubs/2011/LANG-RNN.pdf)

There are different variants of LSTM<ref name=grave> </ref><ref> Gers, Felix, and Jürgen Schmidhuber. "Recurrent nets that time and count." Neural Networks, 2000. IJCNN 2000, Proceedings of the IEEE-INNS-ENNS International Joint Conference on. Vol. 3. IEEE, 2000. </ref><ref> Cho, Kyunghyun, et al. "Learning phrase representations using rnn encoder-decoder for statistical machine translation." arXiv preprint arXiv:1406.1078 (2014). </ref> other than the original one proposed by Hochreiter et al.<ref name=lstm> </ref> Greff et al. compare the performance of some different popular variants in their work<ref> Greff, Klaus, et al. "LSTM: A Search Space Odyssey." arXiv preprint arXiv:1503.04069 (2015). </ref> and draw the conclusion that they are about the same. While Jozefowicz, et al. suggest that some architecture can perform better than LSTM on certain tasks<ref> Jozefowicz, Rafal, Wojciech Zaremba, and Ilya Sutskever. "An Empirical Exploration of Recurrent Network Architectures." Proceedings of the 32nd International Conference on Machine Learning (ICML-15). 2015. </ref>.

Criticisms

There is some concern regarding whether this model will be able to provide a truly scalable solution to MT. In particular, it is not obvious that this model will be able to sufficiently scale to long sentences as is evident in the reported results. The model is severely limited, in general, by working only in the absence of infrequent words. These theoretical limitations alongside sparse experimental results give rise to skepticism about the overarching validity of the model.

Source

Sutskever, I. Vinyals, O. & Le. Q. V. Sequence to sequence learning with neural networks. In Proc. Advances in Neural Information Processing Systems 27 3104–3112 (2014). <references />