Word translation without parallel data

Presented by

Xia Fan

Introduction

Many successful methods for learning relationships between languages stem from the hypothesis that there is a relationship between the context of words and their meanings. This means that if an adequate representation of a language is found in a high dimensional space (this is called an embedding), then words similar to a given word are close to one another in this space (ex. some norm can be minimized to find a word with similar context). Historically, another significant hypothesis is that these embedding spaces show similar structures over different languages. That is to say that given an embedding space for English and one for Spanish, a mapping could be found that aligns the two spaces and such a mapping could be used as a tool for translation. Many papers exploit these hypotheses, but use large parallel datasets for training. Recently, to remove the need for supervised training, methods have been implemented that utilize identical character strings (ex. letters or digits) in order to try to align the embeddings. The downside of this approach is that the two languages need to be similar to begin with as they need to have some shared basic building block. The method proposed in this paper uses an adversarial method to find this mapping between the embedding spaces of two languages without the use of large parallel datasets.

This paper introduces a model that either is on par, or outperforms supervised state-of-the-art methods, without employing any cross-lingual annotated data. This method uses an idea similar to GANs: it leverages adversarial training to learn a linear mapping from a source to distinguish between the mapped source embeddings and the target embeddings, while the mapping is jointly trained to fool the discriminator. Second, this paper extracts a synthetic dictionary from the resulting shared embedding space and fine-tunes the mapping with the closed-form Procrustes solution from Schonemann (1966). Third, this paper also introduces an unsupervised selection metric that is highly correlated with the mapping quality and that the authors use both as a stopping criterion and to select the best hyper-parameters.

Model

Estimation of Word Representations in Vector Space

This model focuses on learning a mapping between the two sets such that translations are close in the shared space. Before talking about the model it used, a model which can exploit the similarities of monolingual embedding spaces should be introduced. Mikolov et al.(2013) use a known dictionary of n=5000 pairs of words [math]\displaystyle{ \{x_i,y_i\}_{i\in{1,n}} }[/math]. and learn a linear mapping W between the source and the target space such that

\begin{align} W^*=argmin_{W{\in}M_d(R)}||WX-Y||_F \hspace{1cm} (1) \end{align}

where d is the dimension of the embeddings, [math]\displaystyle{ M_d(R) }[/math] is the space of d*d matrices of real numbers, and X and Y are two aligned matrices of size d*n containing the embeddings of the words in the parallel vocabulary.

Xing et al. (2015) showed that these results are improved by enforcing orthogonality constraint on W. In that case, equation (1) boils down to the Procrustes problem, which advantageously offers a closed form solution obtained from the singular value decomposition (SVD) of [math]\displaystyle{ YX^T }[/math] :

\begin{align} W^*=argmin_{W{\in}M_d(R)}||WX-Y||_F=UV^T, with U\Sigma V^T=SVD(YX^T). \end{align}

This can be proven as follows. First note that \begin{align} &||WX-Y||_F\\ &= \langle WX, WX \rangle_F -2 \langle W X, Y \rangle_F + \langle Y, Y \rangle_F \\ &= ||X||_F^2 -2 \langle W X, Y \rangle_F + || Y||_F^2, \end{align}

where [math]\displaystyle{ \langle \cdot, \cdot \rangle_F }[/math] denotes the Frobenius inner-product and we have used the orthogonality of [math]\displaystyle{ W }[/math]. It follows that we need only maximize the inner-product above. Let [math]\displaystyle{ u_1, \ldots, u_d }[/math] denote the columns of [math]\displaystyle{ U }[/math]. Let [math]\displaystyle{ v_1, \ldots , v_d }[/math] denote the columns of [math]\displaystyle{ V }[/math]. Let [math]\displaystyle{ \sigma_1, \ldots, \sigma_d }[/math] denote the diagonal entries of [math]\displaystyle{ \Sigma }[/math]. We have \begin{align} &\langle W X, Y \rangle_F \\ &= \text{Tr} (W^T Y X^T)\\ &=\sum_i \sigma_i \text{Tr}(W^T u_i v_i^T)\\ &=\sum_i \sigma_i ((Wv_i)^T u_i )\\ &\le \sum_i \sigma_i ||Wv_i|| ||u_i||\\ &= \sum_i \sigma_i \end{align} where we have used the invariance of trace under cyclic permutations, Cauchy-Schwarz, and the orthogonality of the columns of U and V. Note that choosing \begin{align} W=UV^T \end{align} achieves the bound. This completes the proof.

Domain-adversarial setting

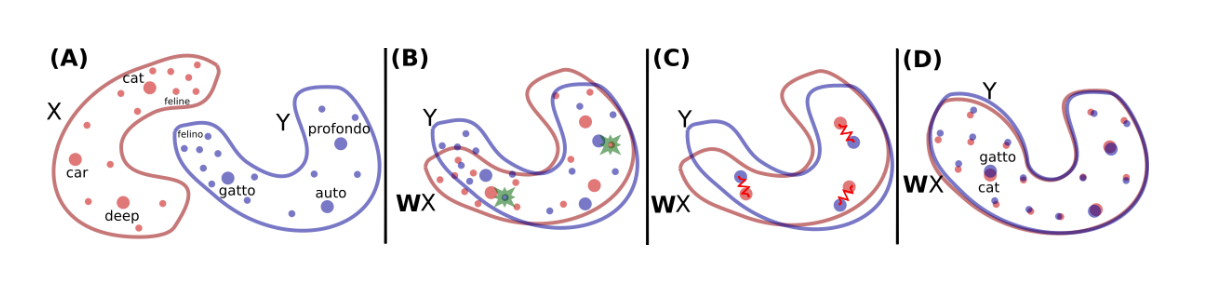

This paper shows how to learn this mapping W without cross-lingual supervision. An illustration of the approach is given in Fig. 1. First, this model learn an initial proxy of W by using an adversarial criterion. Then, it use the words that match the best as anchor points for Procrustes. Finally, it improve performance over less frequent words by changing the metric of the space, which leads to spread more of those points in dense region.

Let [math]\displaystyle{ X={x_1,...,x_n} }[/math] and [math]\displaystyle{ Y={y_1,...,y_m} }[/math] be two sets of n and m word embeddings coming from a source and a target language respectively. A model is trained is trained to discriminate between elements randomly sampled from [math]\displaystyle{ WX={Wx_1,...,Wx_n} }[/math] and Y, We call this model the discriminator. W is trained to prevent the discriminator from making accurate predictions. As a result, this is a two-player game, where the discriminator aims at maximizing its ability to identify the origin of an embedding, and W aims at preventing the discriminator from doing so by making WX and Y as similar as possible. This approach is in line with the work of Ganin et al.(2016), who proposed to learn latent representations invariant to the input domain, where in this case, a domain is represented by a language(source or target).

1. Discriminator objective

Refer to the discriminator parameters as [math]\displaystyle{ \theta_D }[/math]. Consider the probability [math]\displaystyle{ P_{\theta_D}(source = 1|z) }[/math] that a vector z is the mapping of a source embedding (as opposed to a target embedding) according to the discriminator. The discriminator loss can be written as:

\begin{align} L_D(\theta_D|W)=-\frac{1}{n} \sum_{i=1}^n log P_{\theta_D}(source=1|Wx_i)-\frac{1}{m} \sum_{i=1}^m log P_{\theta_D}(source=0|y_i) \end{align}

2. Mapping objective

In the unsupervised setting, W is now trained so that the discriminator is unable to accurately predict the embedding origins:

\begin{align} L_W(W|\theta_D)=-\frac{1}{n} \sum_{i=1}^n log P_{\theta_D}(source=0|Wx_i)-\frac{1}{m} \sum_{i=1}^m log P_{\theta_D}(source=1|y_i) \end{align}

3. Learning algorithm To train the model, the authors follow the standard training procedure of deep adversarial networks of Goodfellow et al. (2014). For every input sample, the discriminator and the mapping matrix W are trained successively with stochastic gradient updates to respectively minimize [math]\displaystyle{ L_D }[/math] and [math]\displaystyle{ L_W }[/math]

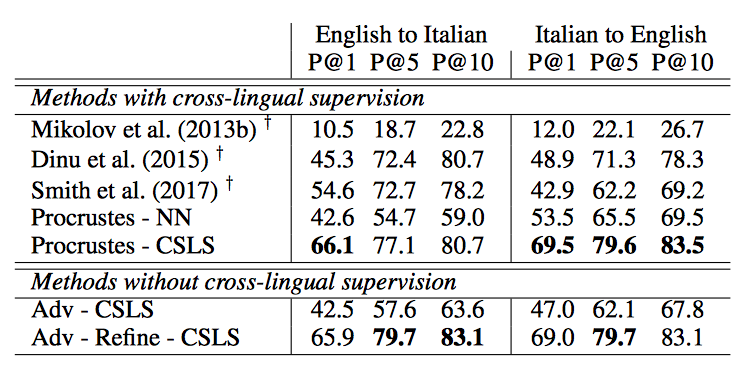

Refinement procedre

The matrix W obtained with adversarial training gives good performance (see Table 1), but the results are still not on par with the supervised approach. In fact, the adversarial approach tries to align all words irrespective of their frequencies. However, rare words have embeddings that are less updated and are more likely to appear in different contexts in each corpus, which makes them harder to align. Under the assumption that the mapping is linear, it is then better to infer the global mapping using only the most frequent words as anchors. Besides, the accuracy on the most frequent word pairs is high after adversarial training. To refine the mapping, this paper build a synthetic parallel vocabulary using the W just learned with adversarial training. Specifically, this paper consider the most frequent words and retain only mutual nearest neighbors to ensure a high-quality dictionary. Subsequently, this paper apply the Procrustes solution in (2) on this generated dictionary. Considering the improved solution generated with the Procrustes algorithm, it is possible to generate a more accurate dictionary and apply this method iteratively, similarly to Artetxe et al. (2017). However, given that the synthetic dictionary obtained using adversarial training is already strong, this paper only observe small improvements when doing more than one iteration, i.e., the improvements on the word translation task are usually below 1%.

Cross-Domain Similarity Local Scaling (CSLS)

This paper considers a bi-partite neighborhood graph, in which each word of a given dictionary is connected to its K nearest neighbors in the other language. [math]\displaystyle{ N_T(Wx_s) }[/math] is used to denote the neighborhood, on this bi-partite graph, associated with a mapped source word embedding [math]\displaystyle{ Wx_s }[/math]. All K elements of [math]\displaystyle{ N_T(Wx_s) }[/math] are words from the target language. Similarly we denote by [math]\displaystyle{ N_S(y_t) }[/math] the neighborhood associated with a word t of the target language. Consider the mean similarity of a source embedding [math]\displaystyle{ x_s }[/math] to its target neighborhood as

\begin{align} r_T(Wx_s)=\frac{1}{K}\sum_{y\in N_T(Wx_s)}cos(Wx_s,y_t) \end{align}

where cos(,) is the cosine similarity. Likewise, the mean similarity of a target word [math]\displaystyle{ y_t }[/math] to its neighborhood is denotes as [math]\displaystyle{ r_S(y_t) }[/math]. This is used to define similarity measure CSLS(.,.) between mapped source words and target words,as

\begin{align} CSLS(Wx_s,y_t)=2cos(Wx_s,y_t)-r_T(Wx_s)-r_S(y_t) \end{align}

This process increases the similarity associated with isolated word vectors, but decreases the similarity of vectors lying in dense areas.

Training and architectural choices

Architecture

This paper use unsupervised word vectors that were trained using fastText2. These correspond to monolingual embeddings of dimension 300 trained on Wikipedia corpora; therefore, the mapping W has size 300 × 300. Words are lower-cased, and those that appear less than 5 times are discarded for training. As a post-processing step, only the first 200k most frequent words were selected in the experiments. For the discriminator, it use a multilayer perceptron with two hidden layers of size 2048, and Leaky-ReLU activation functions. The input to the discriminator is corrupted with dropout noise with a rate of 0.1. As suggested by Goodfellow (2016), a smoothing coefficient s = 0.2 is included in the discriminator predictions. This paper use stochastic gradient descent with a batch size of 32, a learning rate of 0.1 and a decay of 0.95 both for the discriminator and W .

Discriminator inputs

The embedding quality of rare words is generally not as good as the one of frequent words (Luong et al., 2013), and it is observed that feeding the discriminator with rare words had a small, but not negligible negative impact. As a result, this paper only feed the discriminator with the 50,000 most frequent words. At each training step, the word embeddings given to the discriminator are sampled uniformly. Sampling them according to the word frequency did not have any noticeable impact on the results.

Orthogonality

In this work, it propose to use a simple update step to ensure that the matrix W stays close to an orthogonal matrix during training (Cisse et al. (2017)). Specifically, the following update rule on the matrix W is used :

\begin{align} W \leftarrow (1+\beta)W-\beta(WW^T)W \end{align}

where β = 0.01 is usually found to perform well. This method ensures that the matrix stays close to the manifold of orthogonal matrices after each update.

This update rule can be justified as follows. Consider the function \begin{align} g: \mathbb{R}^{d\times d} \to \mathbb{R}^{d \times d} \end{align} defined by \begin{align} g(W)= W^T W -I. \end{align}

The derivative of g at W is is the linear map \begin{align} Dg[W]: \mathbb{R}^{d \times d} \to \mathbb{R}^{d \times d} \end{align} defined by \begin{align} Dg[W](H)= H^T W + W^T H. \end{align}

The adjoint of this linear map is

\begin{align} D^\ast g[W](H)= WH^T +WH. \end{align}

Now consider the function f \begin{align} f: \mathbb{R}^{d \times d} \to \mathbb{R} \end{align}

defined by

\begin{align} f(W)=||g(W) ||_F^2=||W^TW -I ||_F^2. \end{align}

f has gradient: \begin{align} \nabla f (W) = 2D^\ast g[W] (g(W ) ) =2W(W^TW-I) +2W(W^TW-I)=4W W^TW-4W. \end{align}

Thus the update \begin{align} W \leftarrow (1+\beta)W-\beta(WW^T)W \end{align} amounts to a step in the direction opposite the gradient of f. That is, a step toward the set of orthogonal matrices.

Dictionary generation

The refinement step requires to generate a new dictionary at each iteration. In order for the Procrustes solution to work well, it is best to apply it on correct word pairs. As a result, the CSLS method is used to select more accurate translation pairs in the dictionary. To increase even more the quality of the dictionary, and ensure that W is learned from correct translation pairs, only mutual nearest neighbors were considered, i.e. pairs of words that are mutually nearest neighbors of each other according to CSLS. This significantly decreases the size of the generated dictionary, but improves its accuracy, as well as the overall performance.

Validation criterion for unsupervised model selection

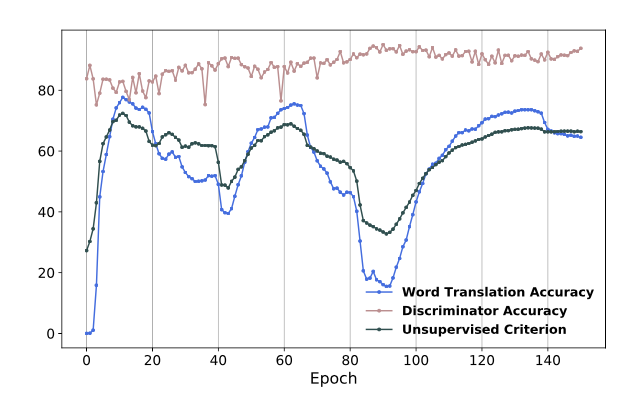

This paper consider the 10k most frequent source words, and use CSLS to generate a translation for each of them, then compute the average cosine similarity between these deemed translations, and use this average as a validation metric. Figure 2 shows the correlation between the evaluation score and this unsupervised criterion (without stabilization by learning rate shrinkage)

Results

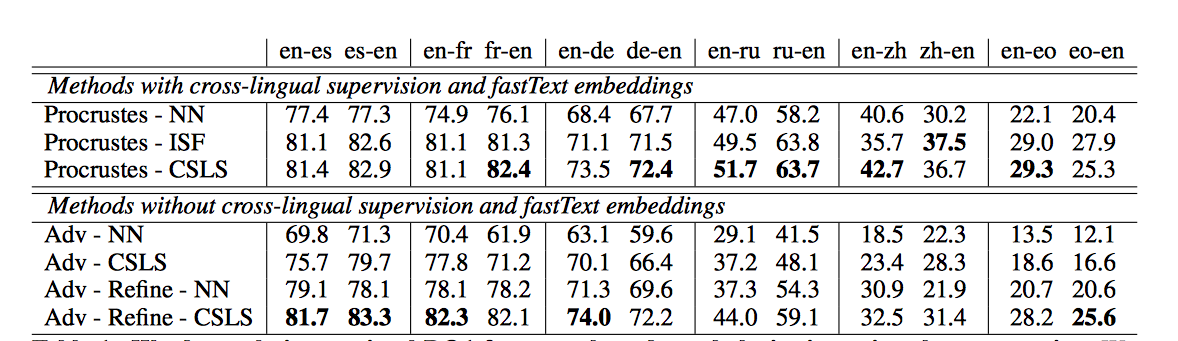

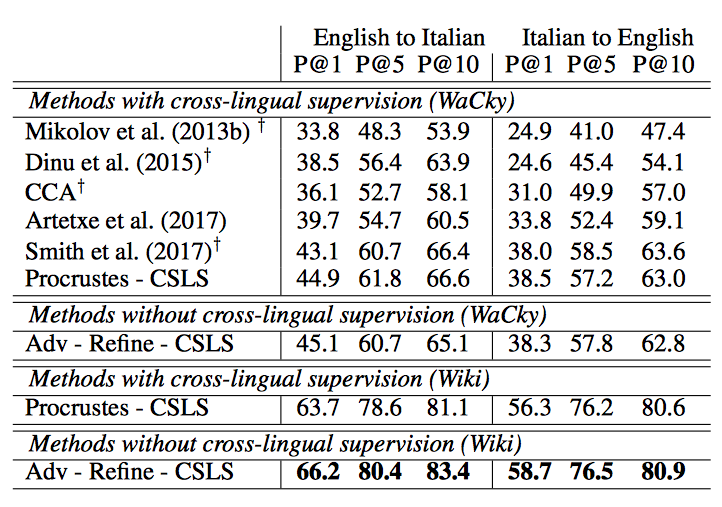

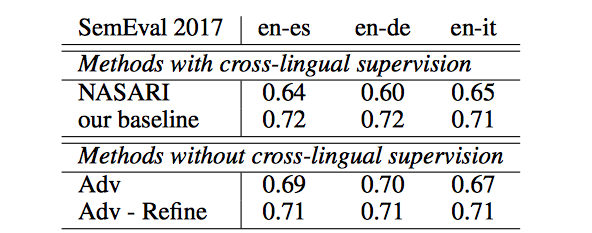

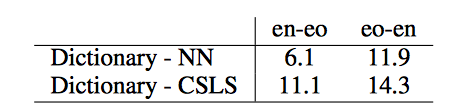

In what follows, the results on word translation retrieval using the bilingual dictionaries were presented in Table 1 and the comparison to previous work in Table 2 where unsupervised model significantly outperform previous approaches. The results on the sentence translation retrieval task were also presented in Table 3 and the cross-lingual word similarity task in Table 4. Finally, the results on word-by-word translation for English-Esperanto was presented in Table 5.

Conclusion

This paper shows for the first time that one can align word embedding spaces without any cross-lingual supervision, i.e., solely based on unaligned datasets of each language, while reaching or outperforming the quality of previous supervised approaches in several cases. Using adversarial training, the model is able to initialize a linear mapping between a source and a target space, which is also used to produce a synthetic parallel dictionary. It is then possible to apply the same techniques proposed for supervised techniques, namely a Procrustean optimization.

Source

Lample, Guillaume; Denoyer, Ludovic; Ranzato, Marc'Aurelio | Unsupervised Machine Translation Using Monolingual Corpora Only | arVix: 1701.04087