Visual Reinforcement Learning with Imagined Goals

Introduction and Motivation

Humans are able to accomplish many tasks without any explicit or supervised training, simply by exploring their environment. If I were dropped in the middle of Moscow, I could learn the layout of the city just by walking around in an undirected manner, and could later accomplish a specific task (e.g., going to the grocery store) that I had never been trained on, simply by knowing from past experience where the store is located. We are able to set our own goals and learn from our experiences, and can therefore accomplish specific tasks without ever having been trained explicitly for them, a core principle of generalization.

Naturally, the next question for any machine learning scientist is: can an autonomous agent also set its own goals and learn from its environment? In the paper “Visual Reinforcement Learning with Imagined Goals”, the authors devise such an unsupervised reinforcement learning system. Specifically, they introduce a system that sets abstract goals and autonomously learns to achieve those goals. They then show that these autonomously learned skills can be used to reach a variety of user-specified goals, such as pushing objects, grasping objects, and opening doors, without any additional learning. Lastly, they demonstrate that their method is efficient enough to work in the real world on a Sawyer robot. The robot learns to set and achieve goals involving pushing an object to a specific location, with only images as the input to the system.

Related Work

Many previous works on vision-based deep reinforcement learning for robotics have studied a variety of behaviors such as grasping, pushing, navigation, and other manipulation tasks. However, the assumptions these works make about their models limit their suitability for training general-purpose robots. The authors utilize a goal-conditioned value function to tackle more general tasks through goal relabeling. Specifically, they use a model-free Q-learning method that operates on raw state observations and actions.

Unsupervised learning has been used in a number of prior works to acquire better representations for RL. In these methods, the learned representation is used as a substitute for the state given to the policy. However, these methods require additional information, such as access to the ground truth reward function based on the true state during training time, expert trajectories, human demonstrations, or pre-trained object-detection features. In contrast, the authors learn to generate goals and use the learned representation to obtain a reward function for those goals without any of these extra sources of supervision.

Goal-Conditioned Reinforcement Learning

The ultimate objective in reinforcement learning is to learn a policy that, given a state and a goal, dictates the optimal action. In this paper, goals are not explicitly defined during training. If a goal is not explicitly defined, a set of synthetic goals must be generated by the agent itself. Thus, suppose we let an autonomous agent explore an environment with a random policy. After executing each action, state observations are collected and stored. These state observations take the form of images. The agent can randomly select goals from the set of state observations, and can also randomly select initial states from the same set.

Now given a set of all possible states, a goal, and an initial state, a reinforcement learning framework can be used to find the optimal policy such that the value function is maximized. However, to implement such a framework, we need to define a reward function. One choice for the reward is the negative distance between the current state and the goal state, so that maximizing the reward corresponds to minimizing the distance to a goal state.
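The negative-distance reward described above can be illustrated with a minimal sketch. It assumes states and goals are plain numeric vectors and uses the Euclidean norm as the distance; the function name `negative_distance_reward` and the example values are hypothetical, not taken from the paper.

```python
import numpy as np

def negative_distance_reward(state, goal):
    """Reward r(s, g) = -||s - g||_2 (Euclidean distance is an assumption)."""
    state = np.asarray(state, dtype=float)
    goal = np.asarray(goal, dtype=float)
    return -float(np.linalg.norm(state - goal))

goal = np.array([1.0, 1.0])
far = negative_distance_reward([4.0, 5.0], goal)   # state is 5 units from the goal
near = negative_distance_reward([1.0, 2.0], goal)  # state is 1 unit from the goal
```

Under this choice, a state closer to the goal always receives a higher (less negative) reward, and the reward is zero only when the state coincides with the goal.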

We can train a single policy to maximize rewards and therefore reach goal states by first learning a goal-conditioned Q function. A goal-conditioned Q function Q(s,a,g) tells us how good an action a is, given the current state s and goal g. For example, a Q function tells us, “How good is it to move my hand up (action a), if I’m holding a plate (state s) and want to put the plate on the table (goal g)?” Once this Q function is trained, you can extract a goal-conditioned policy by performing the following optimization:

$$\pi(s, g) = \arg\max_a Q(s, a, g)$$

which effectively says, “choose the best action according to this Q function.” By using this procedure, we obtain a policy that maximizes the sum of rewards, i.e., reaches various goals.
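As a rough illustration of the "choose the best action according to this Q function" rule, the sketch below assumes a small discrete action set, so the maximization can be done by enumeration. The names `greedy_policy` and `toy_q` and the 1-D setting are hypothetical; with continuous actions, as on a real robot arm, the maximization would have to be approximated instead.

```python
import numpy as np

def greedy_policy(q_function, state, goal, actions):
    """Return the action with the highest Q(s, a, g) among a finite action set."""
    values = [q_function(state, a, goal) for a in actions]
    return actions[int(np.argmax(values))]

def toy_q(state, action, goal):
    """Hypothetical Q function on a 1-D line: prefers actions that end nearer the goal."""
    return -abs((state + action) - goal)

ACTIONS = (-1, 0, +1)
move_right = greedy_policy(toy_q, state=0, goal=3, actions=ACTIONS)
stay_put = greedy_policy(toy_q, state=3, goal=3, actions=ACTIONS)
```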

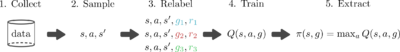

One reason that Q-learning is popular is that it can be done in an off-policy manner, meaning that the only things we need to train our Q function are samples of state, action, next state, goal, and reward: (s,a,s′,g,r). This data can be collected by any policy and can be reused across multiple tasks. So a simple goal-conditioned Q-learning algorithm repeatedly samples a goal, collects (s,a,s′,g,r) data, and trains the Q function on that data.
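A tabular sketch of goal-conditioned Q-learning from off-policy samples may make this concrete. Everything here is an illustrative assumption rather than the paper's setup: the environment is a hypothetical 5-state chain, data comes from a uniformly random policy, the reward is the negative distance from the next state to the goal, and the Q function is a lookup table instead of a neural network.

```python
import numpy as np

N_STATES, ACTIONS = 5, (-1, +1)      # hypothetical 1-D chain environment
rng = np.random.default_rng(0)

def step(s, a):
    """Deterministic dynamics: move left or right, staying inside the chain."""
    return int(np.clip(s + a, 0, N_STATES - 1))

def reward(s_next, g):
    """Negative distance between the reached state and the goal (assumed form)."""
    return -abs(s_next - g)

def collect(n):
    """Off-policy data from random states, actions and goals: (s, a, s', g, r)."""
    data = []
    for _ in range(n):
        s, g = int(rng.integers(N_STATES)), int(rng.integers(N_STATES))
        a_idx = int(rng.integers(len(ACTIONS)))
        s_next = step(s, ACTIONS[a_idx])
        data.append((s, a_idx, s_next, g, reward(s_next, g)))
    return data

def train_q(data, epochs=50, lr=0.5, gamma=0.9):
    """Standard Q-learning updates on a table indexed as Q[s, a, g]."""
    Q = np.zeros((N_STATES, len(ACTIONS), N_STATES))
    for _ in range(epochs):
        for s, a, s_next, g, r in data:
            target = r + gamma * Q[s_next, :, g].max()
            Q[s, a, g] += lr * (target - Q[s, a, g])
    return Q

Q = train_q(collect(2000))

def policy(s, g):
    """Greedy goal-conditioned policy extracted from the learned table."""
    return ACTIONS[int(np.argmax(Q[s, :, g]))]
```

Because the update only needs stored tuples, the same data could be reused for any goal in the table, which is the off-policy property the paragraph above relies on.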

The main bottleneck in this training procedure is collecting data. If we could artificially generate more data, we could in theory learn to solve various tasks without even interacting with the world. Unfortunately, learning an accurate model of the world is difficult, so we usually have to rely on sampling to get state-action-next-state data, (s,a,s′). However, if we have access to the reward function r(s,g), we can retroactively relabel goals and recompute rewards, allowing us to artificially generate more data from a single (s,a,s′) tuple. So, we can modify the training procedure to resample goals and recompute rewards for stored transitions before the Q function is trained on them.

The nice thing about this goal resampling is that we can simultaneously learn how to reach multiple goals at once without needing more data from the environment. Overall, this simple modification can result in substantially faster learning.
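The goal resampling step can be sketched as follows, assuming integer states on a 1-D line, a negative-distance reward on the next state, and a hypothetical `goal_sampler` callable standing in for the goal distribution p(g). The function names and the choice of `k` relabeled copies per transition are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(0)

def reward(s_next, g):
    """Assumed reward: negative distance between the reached state and the goal."""
    return -abs(s_next - g)

def relabel(transitions, goal_sampler, k=4):
    """Keep each (s, a, s', g, r) tuple and add k copies with resampled goals."""
    augmented = []
    for s, a, s_next, g, r in transitions:
        augmented.append((s, a, s_next, g, r))
        for _ in range(k):
            new_goal = goal_sampler()
            # The (s, a, s') part is unchanged; only the goal and reward are recomputed.
            augmented.append((s, a, s_next, new_goal, reward(s_next, new_goal)))
    return augmented

original = [(0, +1, 1, 4, reward(1, 4))]
augmented = relabel(original, goal_sampler=lambda: int(rng.integers(5)), k=4)
```

A single environment interaction thus yields five training tuples here, without any further contact with the environment.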

The method outlined above makes two major assumptions: (1) you have access to a reward function and (2) you have access to a goal sampling distribution p(g). Prior works that use this goal relabeling strategy (Kaelbling '93, Andrychowicz '17, Pong '18) operate on ground truth state information (e.g., the Cartesian position of an object), where it is easy to manually design both the goal distribution p(g) and reward function. However, when moving to vision-based tasks where goals are images, both of these assumptions introduce practical concerns.

For one, a fundamental problem with this reward function is that it assumes that the distance between raw images yields semantically useful information. Images are noisy, and a large amount of the information in an image may be unrelated to the object of interest. Thus, the pixel-wise distance between two images may not correlate with their semantic distance.

Second, because our goals are images, we need a goal image distribution p(g) from which we can sample goal images. Manually designing a distribution over goal images is a non-trivial task and image generation is still an active field of research. Instead, we would like our agent to autonomously imagine its own goals and learn how to reach them.

Variational Autoencoder (VAE)

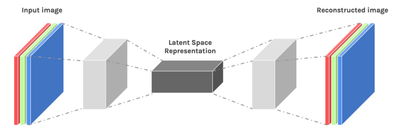

An autoencoder is a type of machine learning model that can learn to extract a robust, space-efficient feature vector from an image. The model has two parts: an encoder (e) and a decoder (p). The encoder takes the image as input and outputs a low-dimensional feature vector. The decoder takes this low-dimensional feature vector as input and reconstructs the original image.

This generative model converts high-dimensional observations x, like images, into low-dimensional latent variables z, and vice versa. The model is trained so that the latent variables capture the underlying factors of variation in an image, similar to the abstract representations a human may use to interpret the world and goals. Given a current image x and goal image xg, we convert them into latent variables z and zg respectively. We then use these latent variables to represent the state and goal for our reinforcement learning algorithm. Learning Q functions and policies on top of this low-dimensional latent space rather than directly on images results in faster learning.
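The summary does not spell out how the VAE is trained, so the following is only a sketch of the standard VAE objective under common assumptions: a diagonal Gaussian encoder, a standard normal prior, a squared-error reconstruction term, and a hypothetical weight `beta` on the KL term. The function names are illustrative.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Sample z ~ N(mu, diag(exp(log_var))) as mu + sigma * eps."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps

def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, diag(exp(log_var))) to N(0, I), summed over dims."""
    return 0.5 * float(np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var))

def vae_loss(x, x_reconstructed, mu, log_var, beta=1.0):
    """Squared reconstruction error plus a beta-weighted KL term (assumed form)."""
    reconstruction = float(np.sum((x - x_reconstructed) ** 2))
    return reconstruction + beta * kl_to_standard_normal(mu, log_var)

rng = np.random.default_rng(0)
mu, log_var = np.array([0.5, -0.5]), np.zeros(2)
z = reparameterize(mu, log_var, rng)        # one latent sample for this encoding
kl = kl_to_standard_normal(mu, log_var)
```

The KL term is what pulls encoded images toward the prior, while the reconstruction term keeps the latent code informative about the image.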

Using the latent variable representations for the images and goals also solves another problem: how to compute rewards. Rather than using pixel-wise error as the reward, the authors use the distance in the latent space as the reward to train the agent to reach a goal. In the full paper, the authors show that this corresponds to maximizing the probability of reaching the goal and provides a much more effective learning signal.
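A minimal sketch of a latent-distance reward is given below. The encoder here is only a stand-in: a fixed random linear projection replaces the trained VAE encoder, and `LATENT_DIM`, `encode`, and `latent_reward` are hypothetical names and values chosen for illustration. The 84x84 RGB image size matches the experiments described later.

```python
import numpy as np

rng = np.random.default_rng(0)
IMAGE_DIM, LATENT_DIM = 84 * 84 * 3, 4      # LATENT_DIM is an assumption
# Stand-in for a trained encoder: a fixed random linear projection.
W = rng.standard_normal((LATENT_DIM, IMAGE_DIM)) / np.sqrt(IMAGE_DIM)

def encode(image):
    """Map an image to a latent vector (placeholder for the VAE encoder mean)."""
    return W @ np.asarray(image, dtype=float).ravel()

def latent_reward(image, goal_image):
    """Reward = negative Euclidean distance between the two latent encodings."""
    return -float(np.linalg.norm(encode(image) - encode(goal_image)))

goal_image = rng.random((84, 84, 3))
other_image = rng.random((84, 84, 3))
at_goal = latent_reward(goal_image, goal_image)
away_from_goal = latent_reward(other_image, goal_image)
```

With a trained encoder in place of `W`, the distance would be measured between learned features rather than raw pixels, which is the point of the paragraph above.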

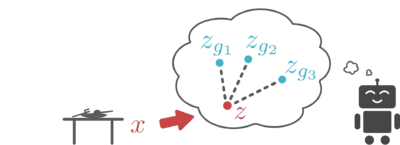

This generative model is also important because it allows an agent to easily generate goals in the latent space. In particular, the generative model is designed so that sampling latent variables is trivial: latents are simply sampled from the VAE prior. The authors use this sampling mechanism for two purposes. First, it provides a mechanism for an agent to set its own goals. The agent simply samples a value for the latent variable from the generative model and tries to reach that latent goal. Second, this resampling mechanism is also used to relabel goals as mentioned above. Because the generative model is trained to encode real images into the prior, samples from the latent variable prior correspond to meaningful latent goals.
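Sampling latent goals from the prior can be sketched in a few lines, assuming the usual standard normal prior N(0, I) for a VAE. The function name `sample_latent_goals` and the latent dimension of 4 are hypothetical choices for illustration.

```python
import numpy as np

def sample_latent_goals(n_goals, latent_dim, rng):
    """Draw latent goals z_g ~ N(0, I), the assumed VAE prior."""
    return rng.standard_normal((n_goals, latent_dim))

rng = np.random.default_rng(0)
practice_goals = sample_latent_goals(n_goals=1000, latent_dim=4, rng=rng)
one_goal = practice_goals[0]      # a single imagined goal for one episode
```

The same sampler could serve both uses named above: proposing a goal for the agent to practice on, and supplying replacement goals when relabeling stored transitions.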

Altogether, the latent variable representation of images (1) captures the underlying factors of a scene, (2) provides meaningful distances to optimize, and (3) provides an efficient goal sampling mechanism, making it possible to efficiently train a goal-conditioned reinforcement learning agent that operates directly on pixels. The authors call the overall method reinforcement learning with imagined goals (RIG).

Experiments

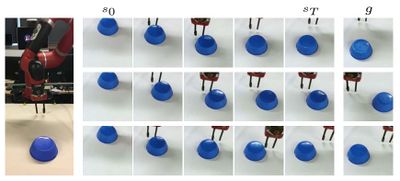

The authors conducted experiments to test whether RIG would be sample-efficient enough to train a real-world robot policy in a reasonable amount of time. They tested the robot’s ability to reach user-specified positions and push objects to desired locations, as indicated by a goal image. The robot is trained with access only to 84x84 RGB images and without access to joint angles or object positions. The robot first learns by setting its own goals in the latent space. By setting its own goals, the robot can autonomously practice reaching different positions without human involvement. The only human involvement is when a person wants the robot to perform a specific task. At this point, the robot is given a goal image. Because the robot has practiced reaching so many goals, it is able to reach this goal without additional training.

The authors also used RIG to train a policy to push objects to target locations.

Training a policy directly from images makes it easy to change tasks from reaching to object pushing. The authors simply added an object, added a table, and adjusted the camera. Lastly, despite working directly from pixels, these experiments did not take long to run. The reaching results took about an hour, while the pushing results took about 4.5 hours of real-robot interaction time. Many real-world robot reinforcement learning results use ground-truth state information like the position of an object. However, this usually requires additional machinery, like purchasing and setting up extra sensors or training an object-detection system. In contrast, RIG only requires an RGB camera and works directly from the images.

Future work

The authors suggest that one could instead use other modalities, such as language and demonstrations, to represent goals. Also, while the paper provides a mechanism to sample goals for autonomous exploration, one can choose these goals in a more principled way to perform even better exploration. Incorporating ideas from intrinsic motivation would allow the policy to actively choose goals that help it learn more quickly what it can and cannot reach. Another future direction is to train the generative model so that it is aware of the dynamics. Encoding information about the environment dynamics could make the latent space even better suited for reinforcement learning, resulting in faster learning. Lastly, there are a variety of robot tasks whose state representation would be difficult to capture with sensors, such as manipulating deformable objects or handling scenes with a variable number of objects. Scaling up RIG to solve these tasks would be an exciting next step.

Critique

1. From the videos, one major concern is that the position of the robotic arm is not stable during training and test time. It is likely that the encoder compresses the image features so much that the images reconstructed from the latent space are too blurry to be used as goal images.

2. The algorithm seems to perform better when there is only one object in the images. For example, in the visual multi-object pusher experiment, the two pucks in one
