Video-based face recognition using adaptive HMM

Introduction

Human face recognition

Human face recognition is a subarea of object recognition that aims to identify a face in a scene or in still images. It is a very complex problem with high dimensionality due to the nature of digital images. Face recognition benefits many fields, such as computer security and video compression. Two approaches are commonly used in face recognition: video-based and still-image-based. Since the 1980s, image-based recognition has been more dominant than the video-based approach. A few recent studies have taken advantage of video, since it captures dynamic characteristics of the human face that help the recognition process. Frame sequences also provide more cues for 3D representation and for obtaining high-resolution images. In addition, video-based recognition can improve prediction accuracy by using the frame sequence.

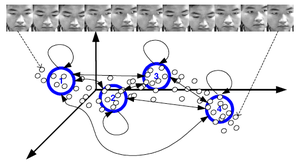

Motivated by speaker adaptation, this paper presents an adaptive hidden Markov model (HMM) to recognize human faces from frame sequences. The proposed model trains an HMM on the training data and then continually improves recognition using the test data. Figure 1 illustrates the following steps:

- An HMM is used to learn the temporal dynamics of each subject during training

- The temporal features of a test sequence are then analyzed over time by the HMM of each subject

- The likelihoods are then compared to obtain the identity of the test video sequence

One advantage of the proposed approach is that the model can capture the dynamic characteristics of the face.

Hidden Markov Model (HMM)

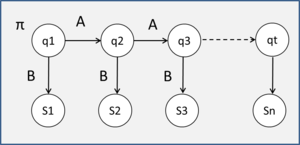

A hidden Markov model (HMM) is a graphical model that is suitable for representing sequential data. An HMM consists of an initial state distribution [math]\displaystyle{ \pi_i }[/math], unobserved states [math]\displaystyle{ q_t }[/math], a transition matrix A, and an emission (observation) density B. An HMM is characterized by [math]\displaystyle{ \lambda=(A,B,\pi) }[/math]:

Given N states [math]\displaystyle{ S = \{S_1, S_2, \ldots, S_N\} }[/math], let [math]\displaystyle{ q_t }[/math] denote the state at time t.

A is the transition matrix, where [math]\displaystyle{ a_{ij} }[/math] is the (i,j) entry of A:

[math]\displaystyle{ a_{ij}=P(q_t=S_j|q_{t-1}=S_i) }[/math] where [math]\displaystyle{ 1\leq i,j \leq N }[/math]

B is the observation pdf [math]\displaystyle{ B=\{b_i(O)\} }[/math]:

[math]\displaystyle{ b_i(O)=\sum_{k=1}^M c_{ik} N(O,\mu_{ik},U_{ik}) }[/math] where [math]\displaystyle{ 1\leq i \leq N }[/math]

where [math]\displaystyle{ c_{ik} }[/math] is the mixture coefficient of the [math]\displaystyle{ k }[/math]-th mixture component of state [math]\displaystyle{ S_i }[/math],

M is the number of components in the Gaussian mixture model,

[math]\displaystyle{ \mu_{ik} }[/math] is the mean vector, and [math]\displaystyle{ U_{ik} }[/math] is the covariance matrix.

The initial state distribution is [math]\displaystyle{ \pi_i=P(q_1=S_i) }[/math], where [math]\displaystyle{ 1\leq i \leq N }[/math].
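The observation density above can be illustrated with a minimal sketch. It assumes diagonal covariance matrices (the definition above allows a full covariance [math]\displaystyle{ U_{ik} }[/math]) and uses hypothetical parameter values; the function names `gaussian_diag` and `emission` are illustrative only.

```python
import math

def gaussian_diag(o, mean, var):
    """Gaussian density at o, assuming a diagonal covariance (vector of variances)."""
    log_p = 0.0
    for x, m, v in zip(o, mean, var):
        log_p += -0.5 * (math.log(2.0 * math.pi * v) + (x - m) ** 2 / v)
    return math.exp(log_p)

def emission(o, weights, means, variances):
    """b_i(O) = sum_k c_ik * N(O; mu_ik, U_ik) for one state i."""
    return sum(c * gaussian_diag(o, m, v)
               for c, m, v in zip(weights, means, variances))

# Hypothetical state with M = 2 mixture components over 2-D feature vectors.
weights = [0.6, 0.4]                      # mixture coefficients c_ik, sum to 1
means = [[0.0, 0.0], [2.0, 2.0]]          # mean vectors mu_ik
variances = [[1.0, 1.0], [0.5, 0.5]]      # diagonals of U_ik (assumption)

b = emission([0.0, 0.0], weights, means, variances)
print(b)
```

In practice the feature vectors would be the eigen-coefficients of a frame, and a log-domain implementation would be preferable for high-dimensional features.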

Feature extraction

In computer vision, common approaches to feature extraction include pixel values, eigen-coefficients, and the discrete cosine transform (DCT). These approaches reduce the dimensionality so that the problem can be solved in feature space; without this step the problem would be computationally intractable. In this study, principal component analysis (PCA) was used to represent the images with low-dimensional features. Eigenanalysis was performed to produce new feature vectors projected into the eigenspace, by computing the covariance matrix and its eigenvectors and eigenvalues. The feature extraction procedure was as follows. The face database contains T images for each subject, with a total of L subjects:

[math]\displaystyle{ \, F_l = \{ f_{l,1}, f_{l,2}, f_{l,3}, \ldots, f_{l,T} \} }[/math]

[math]\displaystyle{ \, 1 \leq l \leq L }[/math]

[math]\displaystyle{ F_l }[/math] is a tuple of T training face images of subject l. The images in this dataset contain only the face portion of the subjects. Several eigenvectors, [math]\displaystyle{ \{V_1, V_2, \ldots, V_d\} }[/math], are obtained by performing eigenanalysis on the [math]\displaystyle{ L \times T }[/math] training samples. The feature vector of each image, [math]\displaystyle{ e_{l,t} }[/math], is then generated by projecting the training image onto the obtained eigenvectors. The set of all projected training images (feature vectors) is then used as the observations to train the HMM.
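The projection step can be sketched as follows. This is an illustration under stated assumptions, not the authors' implementation: images are flattened to short vectors, the mean image is subtracted before projection, and the eigenvectors are found by power iteration with deflation, which is only practical for tiny examples (a numerical library would be used for real images). All names and data are hypothetical.

```python
import math

def mean_image(images):
    """Pixel-wise mean over all training images (flattened to vectors)."""
    n = len(images)
    return [sum(col) / n for col in zip(*images)]

def covariance(centered):
    """Covariance matrix of the mean-centered images."""
    n, dim = len(centered), len(centered[0])
    return [[sum(row[a] * row[b] for row in centered) / n
             for b in range(dim)] for a in range(dim)]

def matvec(m, v):
    return [sum(x * y for x, y in zip(row, v)) for row in m]

def top_eigenvectors(cov, d, iters=200):
    """Leading d eigenvectors V_1..V_d by power iteration with deflation."""
    dim = len(cov)
    cov = [row[:] for row in cov]                      # work on a copy
    vectors = []
    for k in range(d):
        v = [1.0 / (i + k + 1) for i in range(dim)]    # arbitrary non-zero start
        for _ in range(iters):
            w = matvec(cov, v)
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0.0:
                break
            v = [x / norm for x in w]
        eigenvalue = sum(a * b for a, b in zip(v, matvec(cov, v)))
        vectors.append(v)
        for a in range(dim):                           # deflate the found component
            for b in range(dim):
                cov[a][b] -= eigenvalue * v[a] * v[b]
    return vectors

def project(image, mean, vectors):
    """Feature vector e: coordinates of the centered image in the eigenspace."""
    centered = [x - m for x, m in zip(image, mean)]
    return [sum(c * x for c, x in zip(centered, v)) for v in vectors]

# Four hypothetical 3-pixel "images" pooled over all subjects.
images = [[7.0, 5.0, 5.0], [3.0, 5.0, 5.0], [5.0, 6.0, 5.0], [5.0, 4.0, 5.0]]
mean = mean_image(images)
centered = [[x - m for x, m in zip(img, mean)] for img in images]
eigvecs = top_eigenvectors(covariance(centered), d=2)
features = [project(img, mean, eigvecs) for img in images]
print(features)
```

Each row of `features` plays the role of one observation vector in the sequence used to train a subject's HMM.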

Temporal HMM

Each subject l is modeled by a fully connected HMM consisting of N states and observed variables O. Training starts by initializing the HMM [math]\displaystyle{ \lambda=(A,B,\pi) }[/math]. The observation vectors are then separated by vector quantization into N classes, which are used to form an initial estimate of the probability density function B. The maximum likelihood estimate, which maximizes [math]\displaystyle{ P(O|\lambda) }[/math], is then computed iteratively using the EM algorithm, as defined below:

The initial state probability is [math]\displaystyle{ \pi_i=\frac{P(O,q_1=i|\lambda)}{P(O|\lambda)} }[/math]

The transition matrix [math]\displaystyle{ a_{ij}=\frac {\sum_{t=2}^T P(O,q_{t-1}=i, q_{t}=j|\lambda)}{\sum_{t=2}^T P(O,q_{t-1}=i|\lambda)} }[/math]

The mixture coefficient [math]\displaystyle{ c_{ik}=\frac {\sum_{t=1}^T P(q_{t}=i, m_{qt}=k|O,\lambda)}{\sum_{t=1}^T \sum_{k=1}^M P(q_{t}=i,m_{qt}=k|O,\lambda)} }[/math]

The mean vector [math]\displaystyle{ \mu_{ik}=\frac {\sum_{t=1}^T O_t P(q_{t}=i, m_{qt}=k|O,\lambda)}{\sum_{t=1}^T P(q_{t}=i,m_{qt}=k|O,\lambda)} }[/math]

The covariance [math]\displaystyle{ U_{ik}=(1-\alpha)C_e+\alpha\frac {\sum_{t=1}^T (O_t-\mu_{ik})(O_t-\mu_{ik})^T P(q_{t}=i, m_{qt}=k|O,\lambda)}{\sum_{t=1}^T P(q_{t}=i,m_{qt}=k|O,\lambda)} }[/math], where [math]\displaystyle{ m_{qt} }[/math] denotes the mixture component of state [math]\displaystyle{ q }[/math] at time [math]\displaystyle{ t }[/math].

In recognition, a test sequence O is assigned to the subject k whose HMM gives the highest likelihood: [math]\displaystyle{ P(O|\lambda_k)=\max_l P(O|\lambda_l) }[/math]
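The recognition rule, choosing the subject whose HMM gives the highest likelihood for the test sequence, can be sketched with the scaled forward algorithm. To keep the example short it assumes scalar observations and a single Gaussian per state rather than a mixture over eigen-coefficient vectors; the two subject models and the function names are hypothetical.

```python
import math

def gauss(x, mean, var):
    """1-D Gaussian density (simplifying assumption: one component per state)."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)

def log_likelihood(obs, pi, A, means, variances):
    """log P(O | lambda) computed with the scaled forward algorithm."""
    n = len(pi)
    alpha = [pi[i] * gauss(obs[0], means[i], variances[i]) for i in range(n)]
    scale = sum(alpha)
    alpha = [a / scale for a in alpha]
    log_p = math.log(scale)
    for o in obs[1:]:
        alpha = [sum(alpha[i] * A[i][j] for i in range(n))
                 * gauss(o, means[j], variances[j]) for j in range(n)]
        scale = sum(alpha)                 # rescale to avoid numerical underflow
        alpha = [a / scale for a in alpha]
        log_p += math.log(scale)
    return log_p

def recognize(obs, models):
    """Return the subject with the highest log-likelihood, plus all scores."""
    scores = {name: log_likelihood(obs, *params) for name, params in models.items()}
    return max(scores, key=scores.get), scores

# Hypothetical two-state models (pi, A, means, variances) for two subjects.
transitions = [[0.9, 0.1], [0.1, 0.9]]
models = {
    "subject_a": ([0.5, 0.5], transitions, [0.0, 1.0], [1.0, 1.0]),
    "subject_b": ([0.5, 0.5], transitions, [5.0, 6.0], [1.0, 1.0]),
}
test_sequence = [0.1, 0.9, 0.2, 1.1]
identity, scores = recognize(test_sequence, models)
print(identity, scores)
```

Working with log-likelihoods leaves the arg max unchanged while avoiding underflow on long frame sequences.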

Adaptive HMM

Motivated by speaker-dependent speech recognition, this paper proposes an adaptive HMM that continues to train the HMM during the recognition process. During recognition, after a test sequence has been recognized as belonging to one subject, the same sequence is used to update the HMM of that subject. Two questions have to be addressed in this scenario: first, on what basis the current sequence is judged suitable for updating the model in successive iterations; and second, how the HMM is updated with it. This adaptive learning approach is challenging because the correctness and the value of the new information must be estimated. The proposed model computes the difference between the estimated likelihood and a predefined threshold determined through experiments. The model then uses the EM algorithm to iteratively compute the maximum a posteriori (MAP) estimate, which is used to adapt the HMM given the initial model [math]\displaystyle{ \lambda_{old} }[/math] and the observation vectors O. Note that the covariance is not updated; only the mean is updated, as follows:

[math]\displaystyle{ \mu_{ik}=(1-\beta)\mu^{old}_{ik}+\beta\frac {\sum_{t=1}^T O_t P(q_{t}=i, m_{qt}=k|O,\lambda)}{\sum_{t=1}^T P(q_{t}=i,m_{qt}=k|O,\lambda)} }[/math]

where [math]\displaystyle{ \beta }[/math] is a weighting factor between zero and one that sets the tendency towards the new value of the mean. Decreasing this parameter keeps each new [math]\displaystyle{ \mu }[/math] closer to its previous value. The authors set this parameter to 0.3.
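The mean update can be written as a short sketch. It assumes the posteriors [math]\displaystyle{ P(q_t=i, m_{qt}=k|O,\lambda) }[/math] for one state and mixture component have already been computed by the E-step; the function name `adapt_mean` and the example values are hypothetical.

```python
def adapt_mean(old_mean, observations, posteriors, beta=0.3):
    """mu_ik = (1 - beta) * mu_old + beta * (sum_t O_t * g_t) / (sum_t g_t),
    where g_t = P(q_t = i, m_qt = k | O, lambda) is assumed precomputed."""
    total = sum(posteriors)
    if total == 0.0:
        return list(old_mean)              # component unused: keep the old mean
    dim = len(old_mean)
    new_mean = [sum(g * o[d] for g, o in zip(posteriors, observations)) / total
                for d in range(dim)]
    return [(1.0 - beta) * m + beta * n for m, n in zip(old_mean, new_mean)]

# Hypothetical example: two 2-D observations with equal posterior weight.
old_mean = [0.0, 0.0]
observations = [[1.0, 1.0], [3.0, 3.0]]
posteriors = [0.5, 0.5]
updated = adapt_mean(old_mean, observations, posteriors, beta=0.3)
print(updated)
```

Here the posterior-weighted mean of the new data is [2, 2], so with [math]\displaystyle{ \beta = 0.3 }[/math] the mean moves 30% of the way from the old value towards it.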

Model Evaluation

The proposed model was tested on three datasets: Task, Task-new, and Mobo[1]. The Task database consists of videos of 21 subjects reading and typing at a computer, while the Task-new dataset contains videos of 11 subjects under different lighting and camera settings. The Mobo database consists of 24 videos for each subject, recorded in different walking positions. The video frames were manually cropped to 16x16 pixels for the Task database and to 48x48 pixels for the Mobo database. For each subject, 150 frames were used for training and 150 for testing. The location and length of the frame sequences were chosen randomly from each subject's video. To evaluate its performance, the proposed model was compared with a baseline image-based recognition algorithm and with the temporal HMM.

The Baseline algorithm

The baseline algorithm in this study is individual PCA (IPCA), a commonly used image-based face recognition algorithm. Applying the baseline algorithm to the Task database yielded a 9.9% error rate with 12 eigenvectors. On the Mobo database, the baseline algorithm yielded a 2.4% error rate using 7 eigenvectors.

Adaptive HMM

For the Task database, the HMM used 45 eigenvectors and 12 states for each subject. The temporal HMM yielded an error rate of 7.0% and the adaptive HMM an error rate of 4.0%. On the Mobo database, the temporal HMM and the adaptive HMM yielded error rates of 1.6% and 1.2%, respectively, using 30 eigenvectors and 12 HMM states.

The evaluation results show that the proposed model outperforms the image-based algorithm, as it uses dynamic and temporal features and improves the model with the newly observed features in the test sets. In addition, IPCA is based on the assumption of a single Gaussian distribution, while the proposed model assumes a mixture of Gaussians, which models the observations better.
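To make the comparison concrete, the reported error rates can be turned into relative reductions over the IPCA baseline. The sketch below uses only the figures quoted above and assumes that the 1.6% and 1.2% HMM error rates refer to the Mobo database; the helper name `relative_reduction` is illustrative.

```python
# Error rates (%) as quoted in the text; the Mobo attribution of the
# 1.6% / 1.2% figures is an assumption.
error_rates = {
    "Task": {"IPCA": 9.9, "temporal HMM": 7.0, "adaptive HMM": 4.0},
    "Mobo": {"IPCA": 2.4, "temporal HMM": 1.6, "adaptive HMM": 1.2},
}

def relative_reduction(baseline, improved):
    """Percentage by which the error rate drops relative to the baseline."""
    return 100.0 * (baseline - improved) / baseline

for dataset, rates in error_rates.items():
    for method in ("temporal HMM", "adaptive HMM"):
        drop = relative_reduction(rates["IPCA"], rates[method])
        print(f"{dataset}: {method} reduces the IPCA error rate by {drop:.1f}%")
```

Under these assumptions the adaptive HMM removes roughly 60% of the baseline errors on Task and 50% on Mobo.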

Conclusion

The adaptive model in this paper trains an HMM on each subject's video sequence and its temporal information. During recognition, the model estimates the likelihood of each HMM on the test data, and the HMM with the highest score gives the identity of the test video. The proposed model was evaluated against image-based methods and found to provide better results; however, the improvement could be attributed to factors other than the video-based features or the adaptive approach, such as the use of a Gaussian mixture model to compute the probability density. One future direction is to combine a spatial HMM with the temporal HMM of video sequences, which might pave the way to modeling both face recognition and face tracking in one model.