User:T358wang

Group

Rui Chen, Zeren Shen, Zihao Guo, Taohao Wang

Introduction

Landmark recognition is an image retrieval task with its own specific challenges, and this paper presents a new and effective method for recognizing landmarks in images. The method has been successfully applied to real-world images, where it can identify statues, buildings, and other characteristic objects.

Several difficulties arise in developing such a system:

1. The concept of a landmark cannot be strictly defined, because a landmark can be any object or building.

2. The same landmark can be photographed from different angles. Photographs taken from different angles can have very different visual characteristics, yet the system must still identify the landmark accurately.

3. The landmark recognition system must recognize a large number of landmarks, and the recognition must achieve high accuracy.

These problems require the system to have a very low false alarm rate and high recognition accuracy. There are also three further problems:

1. The dataset is very large and not clean: it contains some erroneous content.

2. The algorithm for learning from the training set must be fast and scalable.

3. The system must recognize landmarks with high quality without using any geographic information attached to the image.

The article describes the deep convolutional neural network (CNN) architecture, the loss function, the training method, and the inference procedure. Quantitative experiments, covering both testing and an analysis of the deployed system, demonstrate the effectiveness of the model. Finally, the article compares the proposed method with the latest models and gives a conclusion.

Related Work

Landmark recognition can be regarded as an image retrieval task, and a large body of literature focuses on image retrieval. The field has made significant progress over the past two decades, and the main methods can be divided into two categories. The first is classic retrieval using local features: local feature descriptors organized in bag-of-words, combined with spatial verification, Hamming embedding, and query expansion. These methods were dominant in image retrieval until the rise of deep convolutional neural networks (CNNs), which are used to generate global descriptors of input images. Another approach is the selective match kernel, an extension of the Hamming embedding method.

With the advent of deep CNNs, the most effective image retrieval methods are based on training CNNs for specific tasks. Deep networks are very powerful for semantic feature representation, which allows them to be used effectively for landmark recognition. This approach shows good results but brings additional memory and complexity costs. DELF (DEep Local Features) by Noh et al. showed promising results. It combines the classic local feature approach with deep learning: local features are extracted from the input image by a network, and RANSAC is then used for geometric verification. The goal of the project is to describe a method for accurate and fast large-scale landmark recognition that uses the advantages of deep convolutional neural networks.

Methodology

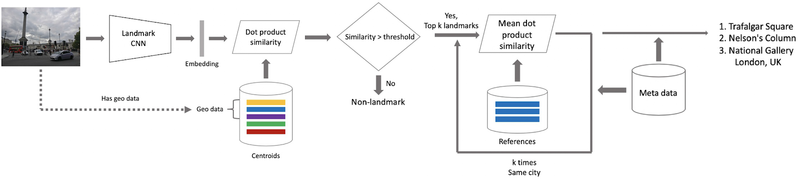

This section describes in detail the CNN architecture, loss function, training procedure, and inference implementation of the landmark recognition system. The figure below gives an overview of the system.

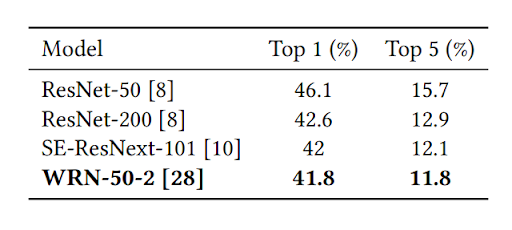

The landmark CNN consists of three parts: the main network, the embedding layer, and the classification layer. To obtain a main network suitable for training the landmark recognition model, fine-tuning is applied, and several backbones (Residual Networks) pre-trained on other similar datasets, including ResNet-50, ResNet-200, SE-ResNext-101, and Wide Residual Network (WRN-50-2), are evaluated on inference quality and efficiency. Based on the evaluation results, WRN-50-2 is selected as the optimal backbone architecture.

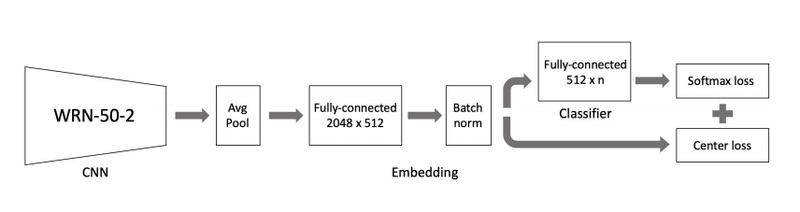

For the embedding layer, as shown in figure x, the last fully-connected layer after the average pooling is removed. In its place, a fully-connected 2048 [math]\displaystyle{ \times }[/math] 512 layer followed by batch normalization is added as the embedding layer. After the batch normalization, a fully-connected 512 [math]\displaystyle{ \times }[/math] n layer is added as the classification layer. The figure below shows an overview of the CNN architecture of the landmark recognition system.

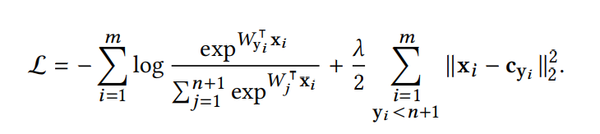

To effectively determine the embedding vectors for each landmark class (centroids), the network needs to be trained so that the members of each class are as close as possible to the centroids. Several suitable loss functions are evaluated, including Contrastive loss, ArcFace, and Center loss. Center loss is selected because it achieves the best test results: it trains a center of embeddings for each class and penalizes the distances between image embeddings and their class centers. In addition, Center loss is a simple addition to softmax loss and is trivial to implement.

When implementing the loss function, an additional class that includes all non-landmark instances is added, and the Center loss function is modified as follows. Let n be the number of landmark classes, m be the mini-batch size, [math]\displaystyle{ x_i \in R^d }[/math] be the i-th embedding, and [math]\displaystyle{ y_i }[/math] be the corresponding label, where [math]\displaystyle{ y_i \in }[/math] {1,...,n,n+1} and n+1 is the label of the non-landmark class. Denote by [math]\displaystyle{ W \in R^{d \times n} }[/math] the weights of the classifier layer and by [math]\displaystyle{ W_j }[/math] its j-th column. Let [math]\displaystyle{ c_{y_i} }[/math] be the [math]\displaystyle{ y_i }[/math]-th embedding center from Center loss and [math]\displaystyle{ \lambda }[/math] be the balancing parameter of Center loss. The final loss function is then the softmax loss over these classes plus the Center loss term weighted by [math]\displaystyle{ \lambda }[/math].
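As a rough illustration of the symbols defined above, and assuming the usual way softmax loss and Center loss are combined, the loss could take a form such as [math]\displaystyle{ L = -\sum_{i=1}^{m} \log \frac{e^{W_{y_i}^T x_i}}{\sum_{j} e^{W_j^T x_i}} + \frac{\lambda}{2} \sum_{i=1}^{m} \| x_i - c_{y_i} \|_2^2 }[/math]. The sketch below evaluates that assumed form for one mini-batch in plain Python. The function name `softmax_center_loss`, the 0-based labels, and the choice to skip the center penalty for any class without a stored center (for example the non-landmark class) are assumptions made for illustration, not details confirmed by the paper.

```python
import math


def softmax_center_loss(embeddings, labels, weights, centers, lam):
    """Softmax cross-entropy plus a Center-loss penalty for one mini-batch.

    embeddings: list of m embedding vectors x_i (each a list of d floats)
    labels:     list of m class indices y_i (0-based in this sketch)
    weights:    list of classifier weight vectors W_j, one per class
    centers:    dict mapping class index -> center vector c_j; a class with
                no entry contributes no center penalty (an assumption)
    lam:        balancing parameter lambda of Center loss
    """
    total = 0.0
    for x, y in zip(embeddings, labels):
        # Classifier logits W_j^T x for every class j.
        logits = [sum(w_k * x_k for w_k, x_k in zip(w, x)) for w in weights]
        # Log-sum-exp with the maximum subtracted for numerical stability.
        peak = max(logits)
        log_norm = peak + math.log(sum(math.exp(z - peak) for z in logits))
        total += log_norm - logits[y]  # equals -log softmax probability of y
        if y in centers:
            sq_dist = sum((x_k - c_k) ** 2 for x_k, c_k in zip(x, centers[y]))
            total += 0.5 * lam * sq_dist
    return total
```

In a real training loop the centers would be updated alongside the network weights; this sketch only evaluates the loss value for fixed centers.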

In the training procedure, stochastic gradient descent (SGD) is used as the optimizer with momentum = 0.9 and weight decay = 5e-3. For the Center loss function, the parameter [math]\displaystyle{ \lambda }[/math] is set to 5e-5. Each image is resized to 256 [math]\displaystyle{ \times }[/math] 256, and several data augmentations are applied to the dataset, including random resized crop, color jitter, and random flip. The training dataset is divided into four parts based on the geographical affiliation of the cities where the landmarks are located: Europe/Russia, North America/Australia/Oceania, Middle East/North Africa, and the Far East.

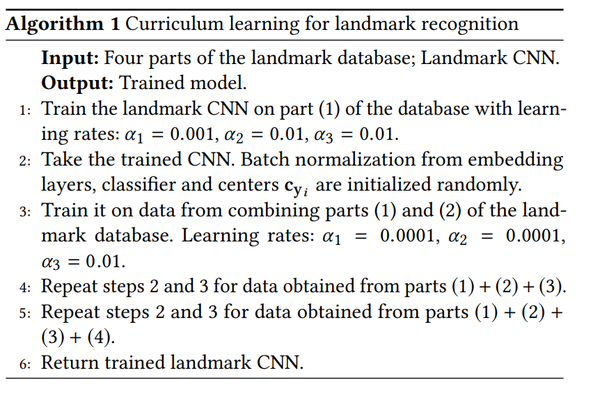

The paper introduces curriculum learning for landmark recognition, which is shown in the figure below. The model is trained for 30 epochs, and the learning rates [math]\displaystyle{ \alpha_1, \alpha_2, \alpha_3 }[/math] are reduced by a factor of 10 at the 12th and 24th epochs.
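The step schedule described above can be sketched in a few lines. The function name `learning_rate`, the 1-based epoch numbering, and the choice to apply each reduction from the start of the milestone epoch are assumptions. The base learning rate is a placeholder, since the summary does not give the values of the learning rates.

```python
def learning_rate(base_lr, epoch, milestones=(12, 24), factor=0.1):
    """Return the learning rate used in a given (1-based) epoch.

    The rate is multiplied by `factor` once for every milestone that has
    been reached, i.e. divided by 10 at epoch 12 and again at epoch 24.
    """
    drops = sum(1 for m in milestones if epoch >= m)
    return base_lr * factor ** drops


# Print the rate at a few epochs of a 30-epoch run (placeholder base rate).
for epoch in (1, 11, 12, 23, 24, 30):
    print(epoch, learning_rate(0.1, epoch))
```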

In the inference phase, the paper introduces the term “centroids”: embedding vectors that are calculated by averaging embeddings and are used to describe landmark classes. Calculating the centroids well is essential for determining whether a query image contains a landmark. The paper proposes two steps for calculating them. First, instead of using the entire training data for each landmark, data cleaning is done to remove most of the redundant and irrelevant elements from the data. Second, since each landmark can be photographed from different shooting angles, it is more efficient to calculate a separate centroid for each shooting angle. Hence, a hierarchical agglomerative clustering algorithm is proposed to partition the training data into several valid clusters for each landmark, and the set of centroids for a landmark l can be represented by [math]\displaystyle{ \mu_{l_j} = \frac{1}{|C_j|} \sum_{i \in C_j} x_i, \quad j \in \{1,...,v\} }[/math] where [math]\displaystyle{ C_j }[/math] is the j-th valid cluster and v is the number of valid clusters for landmark l.
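A minimal sketch of the averaging step, assuming the clustering has already assigned each cleaned training embedding of a landmark to a cluster. The function name `cluster_centroids` and the input layout are hypothetical, and the clustering itself is not shown.

```python
def cluster_centroids(embeddings, cluster_ids):
    """Average the embeddings of each cluster into one centroid per cluster.

    embeddings:  list of embedding vectors (lists of floats) for one landmark
    cluster_ids: list of cluster identifiers, one per embedding
    Returns a dict mapping cluster identifier -> centroid vector.
    """
    sums, counts = {}, {}
    for x, cid in zip(embeddings, cluster_ids):
        if cid not in sums:
            sums[cid] = [0.0] * len(x)
            counts[cid] = 0
        sums[cid] = [s + v for s, v in zip(sums[cid], x)]
        counts[cid] += 1
    # Divide each accumulated sum by the size of its own cluster.
    return {cid: [s / counts[cid] for s in sums[cid]] for cid in sums}


# Two embeddings from one shooting angle, one embedding from another.
print(cluster_centroids([[1.0, 0.0], [3.0, 0.0], [0.0, 2.0]], [0, 0, 1]))
```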

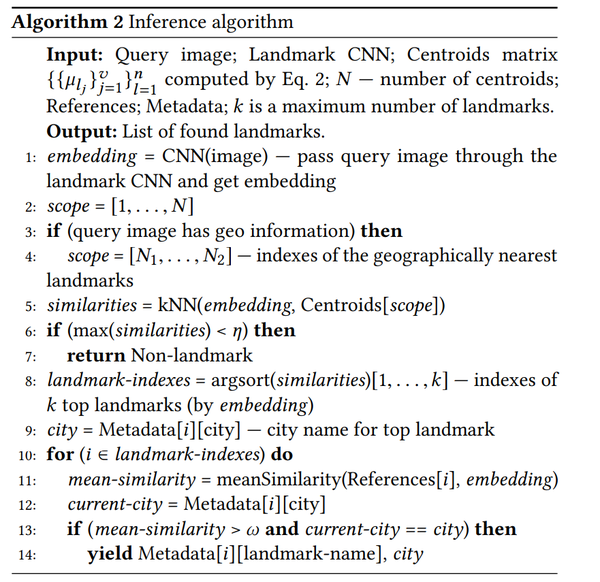

Once the centroids are calculated for each landmark class, the system can decide whether an image contains a landmark. The query image is passed through the landmark CNN, and the resulting embedding vector is compared with all centroids by dot product similarity using approximate k-nearest neighbors (AKNN). To distinguish landmark classes from non-landmarks, a threshold [math]\displaystyle{ \eta }[/math] is set and compared with the maximum similarity to determine whether the image contains any landmark.
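The decision rule can be sketched as follows. For clarity this sketch uses an exhaustive search over the centroids in place of AKNN, which changes the speed but not the decision. The function name `recognize`, the example labels, and the choice to reject only when the best similarity is strictly below the threshold are assumptions.

```python
def recognize(query, centroids, eta):
    """Return (label, similarity) for the best centroid, or (None, similarity)
    when the best dot-product similarity is below the threshold eta.

    query:     embedding vector of the query image (list of floats)
    centroids: list of (label, centroid_vector) pairs; a landmark with
               several clusters appears once per centroid
    """
    best_label, best_sim = None, float("-inf")
    for label, centroid in centroids:
        sim = sum(q * c for q, c in zip(query, centroid))
        if sim > best_sim:
            best_label, best_sim = label, sim
    if best_sim < eta:
        return None, best_sim  # treated as a non-landmark image
    return best_label, best_sim


# Hypothetical labels and toy two-dimensional centroids.
toy_centroids = [("tower", [1.0, 0.0]), ("museum", [0.0, 1.0])]
print(recognize([0.9, 0.1], toy_centroids, eta=0.5))
print(recognize([0.1, 0.2], toy_centroids, eta=0.5))
```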

The full inference algorithm is described in the figure below.

Experiments and Analysis

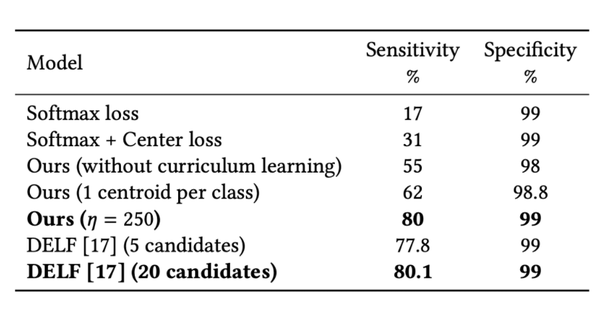

Offline test

To measure the quality of the model, an offline test set was collected and manually labeled. According to the calculations, photos containing landmarks make up 1 to 3% of the total number of photos on average. This distribution was emulated in the offline test, and geo-information and landmark references were not used. The results of this test are presented in Table 4. Two metrics were used to measure the results of the experiments: Sensitivity, the accuracy of the model on images with landmarks (also called Recall), and Specificity, the accuracy of the model on images without landmarks. Several types of DELF were evaluated, and the best results in terms of sensitivity and specificity were included in Table 4. The table also contains the results of the model trained with Softmax loss only and with Softmax plus Center loss. Thus, Table 4 shows how the approach improves as each new element is added.
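The two metrics can be sketched as below, assuming each test image has a ground-truth label that is either a landmark name or `None` for a non-landmark image. The function name `sensitivity_specificity` is hypothetical. Counting an image with a landmark as correct only when the predicted label matches the true one is also an assumption about how the accuracy is measured.

```python
def sensitivity_specificity(truth, predicted):
    """Compute (sensitivity, specificity) from per-image labels.

    truth, predicted: lists of labels; None means "no landmark".
    Sensitivity: fraction of landmark images given the correct label.
    Specificity: fraction of non-landmark images predicted as None.
    """
    pairs = list(zip(truth, predicted))
    with_landmark = [(t, p) for t, p in pairs if t is not None]
    without_landmark = [(t, p) for t, p in pairs if t is None]
    nan = float("nan")
    sensitivity = (
        sum(1 for t, p in with_landmark if p == t) / len(with_landmark)
        if with_landmark else nan
    )
    specificity = (
        sum(1 for _, p in without_landmark if p is None) / len(without_landmark)
        if without_landmark else nan
    )
    return sensitivity, specificity


# Toy example with hypothetical labels "a" and "b".
print(sensitivity_specificity(["a", "b", None, None], ["a", None, None, "a"]))
```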

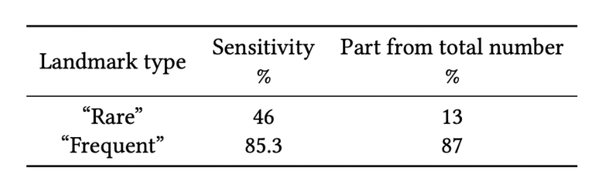

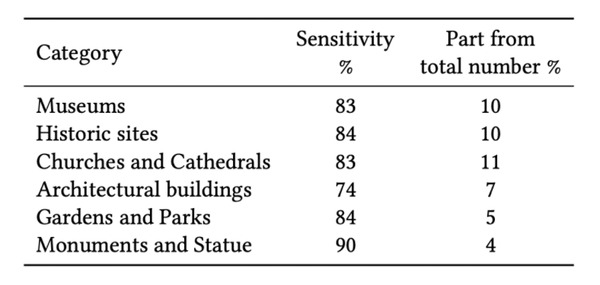

It is important to understand how the model behaves on “rare” landmarks, for which little data is available. Therefore, the behavior of the model was examined separately on “rare” and “frequent” landmarks in Table 5. The column “Part from total number” shows what percentage of the landmark examples in the offline test belongs to the corresponding type of landmark.

An analysis of the behavior of the model on different categories of landmarks in the offline test is presented in Table 6. These results show that the model works successfully with various categories of landmarks. As expected, better results (92% sensitivity and 99.5% specificity) were obtained when the offline test was run with geo-information.

Revisited Paris dataset

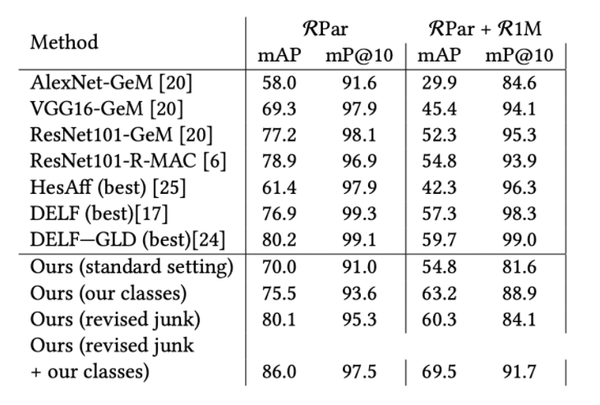

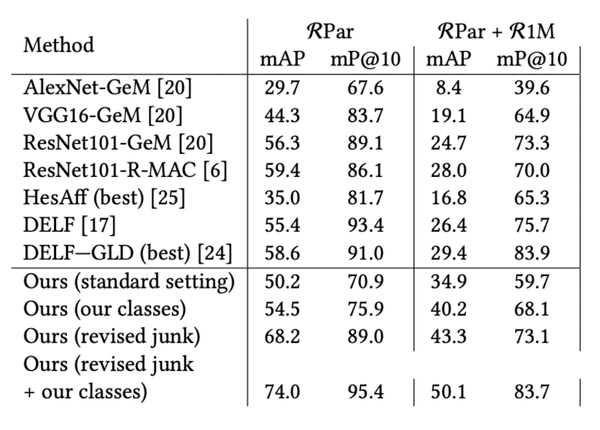

The Revisited Paris dataset (RPar) was also used to measure the quality of the landmark recognition approach. Together with Revisited Oxford (ROxf), it is a standard benchmark for comparing image retrieval algorithms. In recognition, it is important to determine which landmark is contained in the query image. Images of the same landmark can be taken from different shooting angles, or from inside or outside the building. Thus, it is reasonable to measure the quality of the model both in the standard setting and in a setting adapted to the recognition task. In the adapted setting, not all classes from the queries are present in the landmark dataset. Images that contain the correct landmark but were taken from a different shooting angle or from inside the building were moved to the “junk” category, which does not influence the final score and brings the test markup closer to the model’s goal. Results on RPar with and without distractors in medium and hard modes are presented in Tables 7 and 8.

Comparison

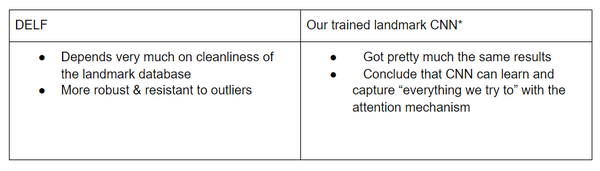

The most efficient recent approaches to landmark recognition are built on fine-tuned CNNs. We chose to compare our method with DELF on how well each performs on recognition tasks. A brief summary is given below:

Offline test and timing

Both approaches obtained similar results for image retrieval in the offline test (shown in Table 4), but the proposed approach is much faster at the inference stage and more memory efficient.

In more detail, during the inference stage DELF needs more forward passes through the CNN, has to search the entire database, and performs RANSAC for geometric verification. All of these make it much more time-consuming than the proposed approach. Our approach mainly uses centroids, so it takes less time and needs to store fewer elements.

Conclusion

This paper set out to solve some of the difficulties that emerge when landmark recognition is applied at the production level: there might not be a clean and sufficiently large database for interesting tasks, and algorithms should be fast and scalable and should aim for a low false positive rate and high accuracy.

While aiming for these goals, we presented a way of cleaning landmark data. Most importantly, we introduced the use of deep CNN embeddings, trained by curriculum learning techniques with a modified version of Center loss, to make recognition fast and scalable. Compared to state-of-the-art methods, this approach shows similar results but is much faster and is suitable for implementation on a large scale.