User:As2na

Introduction

The paper generalizes several memory-based adaptation approaches from language modelling that aim to overcome shortcomings of neural networks, including catastrophic forgetting. Catastrophic forgetting occurs when a neural network performs poorly on old tasks after it has been trained to perform well on a new task. The paper also presents experimental results in which the proposed model is applied to continual and incremental learning tasks.

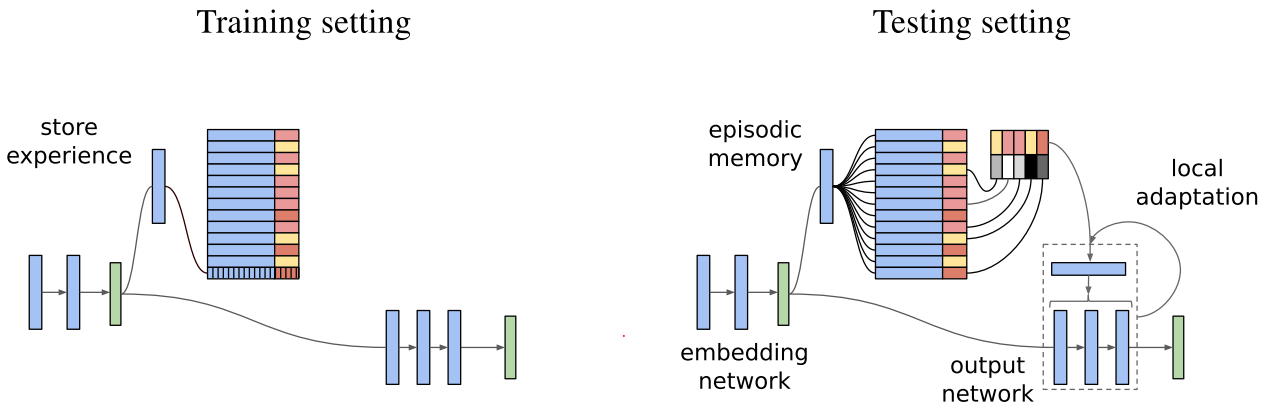

Memory-based parameter adaptation (MbPA) is based on the theory of complementary learning systems, which states that intelligent agents must possess two learning systems: one that allows the gradual acquisition of knowledge and another that allows rapid learning of the specifics of individual experiences (Kumaran, 2016). Similarly, MbPA consists of two components: a parametric component and a non-parametric component. The parametric component is a standard neural network, which learns slowly (with low learning rates) but generalizes well. The non-parametric component, on the other hand, is an episodic memory that augments the network: it stores previous experiences and is used to locally adapt the weights of the parametric component. The parametric and non-parametric components therefore serve different purposes during the training and testing phases.
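
The interplay of the two components can be illustrated with a small sketch. This is not the paper's implementation. It assumes a toy linear model in place of the neural network, uses the raw input as the memory key, weights the retrieved neighbours with an inverse-distance kernel, and omits any regularization towards the original weights. All names (`EpisodicMemory`, `adapted_predict`, `k`, `steps`, `lr`, `eps`) are hypothetical and chosen for illustration only.

```python
class EpisodicMemory:
    """Non-parametric component: stores (key, target) pairs.

    Assumption: the key is the raw input vector rather than a learned embedding.
    """

    def __init__(self):
        self.items = []

    def write(self, key, target):
        self.items.append((list(key), target))

    def nearest(self, query, k):
        """Return up to k entries as (squared_distance, key, target), closest first."""
        scored = [
            (sum((a - b) ** 2 for a, b in zip(key, query)), key, target)
            for key, target in self.items
        ]
        scored.sort(key=lambda item: item[0])
        return scored[:k]


def predict(weights, x):
    """Parametric component: a toy linear model standing in for the network."""
    return sum(w * xi for w, xi in zip(weights, x))


def adapted_predict(weights, memory, query, k=3, steps=20, lr=0.1, eps=1e-3):
    """Predict for `query` after a temporary, local adaptation of the weights.

    The adapted weights are used for this one prediction and then discarded;
    `weights` itself is never modified.
    """
    neighbours = memory.nearest(query, k)
    if not neighbours:
        return predict(weights, query)

    # Inverse-distance kernel (an assumption of this sketch): closer memories count more.
    kernel = [1.0 / (eps + dist) for dist, _, _ in neighbours]
    total = sum(kernel)
    kernel = [value / total for value in kernel]

    local = list(weights)  # work on a copy of the slow weights
    for _ in range(steps):
        grad = [0.0] * len(local)
        for weight, (_, key, target) in zip(kernel, neighbours):
            error = predict(local, key) - target
            for i, xi in enumerate(key):
                grad[i] += weight * 2.0 * error * xi  # gradient of weighted squared error
        local = [w - lr * g for w, g in zip(local, grad)]
    return predict(local, query)


# Usage: an untrained model (all-zero weights) plus a memory of three experiences.
slow_weights = [0.0, 0.0]
memory = EpisodicMemory()
memory.write([1.0, 0.0], 2.0)
memory.write([0.0, 1.0], -1.0)
memory.write([1.0, 1.0], 1.0)

print(predict(slow_weights, [1.0, 0.0]))                  # prediction without adaptation
print(adapted_predict(slow_weights, memory, [1.0, 0.0]))  # prediction with local adaptation
```

In this sketch the slowly learned weights stay fixed at test time, while the memory supplies a few nearby experiences that pull a temporary copy of the weights towards the query's neighbourhood.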