Understanding the Effective Receptive Field in Deep Convolutional Neural Networks: Difference between revisions

Rahulniyer (talk | contribs) No edit summary |

|||

| Line 77: | Line 77: | ||

Buessler, J.-L., Smagghe, P., & Urban, J.-P. (2014). Image receptive fields for artificial neural networks. Neurocomputing, 144(Supplement C), 258–270. https://doi.org/10.1016/j.neucom.2014.04.045 | Buessler, J.-L., Smagghe, P., & Urban, J.-P. (2014). Image receptive fields for artificial neural networks. Neurocomputing, 144(Supplement C), 258–270. https://doi.org/10.1016/j.neucom.2014.04.045 | ||

Dilated Convolutions in Neural Network - [http://www.erogol.com/dilated-convolution/] | |||

Revision as of 09:41, 5 November 2017

Introduction

What is the Receptive Field (RF) of a unit?

Receptive field of a unit is the region of input where the unit 'sees' and responds to.

Why is RF important?

The concept of receptive field is important for understanding and diagnosing how deep Convolutional neural networks (CNNs) work. Since anywhere in an input image outside the receptive field of a unit does not affect the value of that unit, it is necessary to carefully control the receptive field, to ensure that it covers the entire relevant image region. The property of receptive field allows the response is mostly sensitive to a local region in the image and to specific stimuli; similar stimuli trigger activations of similar magnitudes (Buessler, Smagghe, & Urban, 2014). The initialization of each receptive field depends on the neuron's degrees of freedom (Buessler, Smagghe, & Urban, 2014). One example outlined in this paper is that "the weights can be either of the same sign or centered with zero mean. This latter case favors a response to the contrast between the central and peripheral region of the receptive field." (Buessler, Smagghe, & Urban, 2014). In many tasks, especially dense prediction tasks like semantic image segmentation, stereo and optical flow estimation, where we make a prediction for each single pixel in the input image,it is critical for each output pixel to have a big receptive field, such that no important information is left out when making the prediction.

How to increase RF size?

Make the network deeper by stacking more layers, which increases the receptive field size linearly by theory, as each extra layer increases the receptive field size by the kernel size.

Add sub-sampling layers to increase the receptive field size multiplicatively.

Modern deep CNN architectures like the VGG networks and Residual Networks use a combination of these techniques.

Intuition behind Effective Receptive Fields

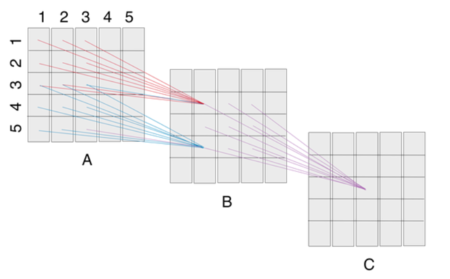

The pixels at the center of a RF have a much larger impact on an output:

- In the forward pass, central pixels can propagate information to the output through many different paths, while the pixels in the outer area of the receptive field have very few paths to propagate its impact.

- In the backward pass, gradients from an output unit are propagated across all the paths, and therefore the central pixels have a much larger magnitude for the gradient from that output [More paths always mean larger gradient?].

Authors prove that in many cases the distribution of impact in a receptive field distributes as a Gaussian. Since Gaussian distributions generally decay quickly from the center, the effective receptive field, only occupies a fraction of the theoretical receptive field.

Experiments

Verifying Theoretical Results

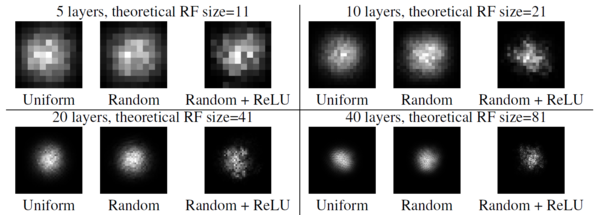

ERFs are Gaussian distributed: By looking at the figure

, we can observe perfect Gaussian shapes for uniformly and randomly weighted convolution kernels without nonlinear activations, and near Gaussian shapes for randomly weighted kernels with nonlinearity. Adding the ReLU nonlinearity makes the distribution a bit less Gaussian, as the ERF distribution depends on the input as well. Another reason is that ReLU units output exactly zero for half of its inputs and it is very easy to get a zero output for the center pixel on the output plane, which means no path from the receptive field can reach the output, hence the gradient is all zero. Here the ERFs are averaged over 20 runs with different random seed.

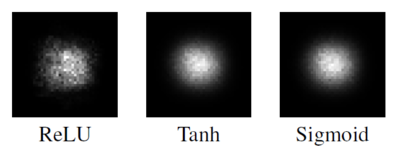

Figures below show the ERF for networks with 20 layers of random weights, with different nonlinearities. Here the results are averaged both across 100 runs with different random weights as well as different random inputs. In this setting the receptive fields are a lot more Gaussian-like.

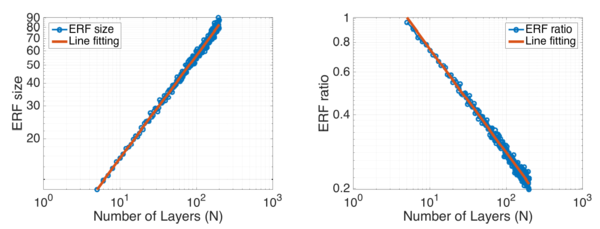

[math]\displaystyle{ \sqrt{n} }[/math] absolute growth and [math]\displaystyle{ 1/\sqrt{n} }[/math] relative shrinkage: The figure

shows the change of ERF size and the relative ratio of ERF over theoretical RF wrt number of convolution layers. The fitted line for ERF size has the slope of 0.56 in log domain, while the line for ERF ratio has the slope of -0.43. This indicates ERF size is growing linearly wrt [math]\displaystyle{ \sqrt{n} }[/math] and ERF ratio is shrinking linearly wrt [math]\displaystyle{ 1/\sqrt{n} }[/math].

They used 2 standard deviations as the measurement for ERF size, i.e. any pixel with value greater than 1 - 95.45% of center point is considered in ERF. The ERF size is represented by the square root of number of pixels within ERF, while the theoretical RF size is the side length of the square in which all pixel has a non-zero impact on the output pixel, no matter how small. All experiments here are averaged over 20 runs.

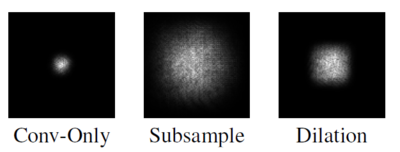

Subsampling & dilated convolution increases receptive field: The figure shows that the effect of subsampling and dilated convolution. The reference baseline is a CNN with 15 dense convolution layers. Its ERF is shown in the left-most figure. Replacing 3 of the 15 convolutional layers with stride-2 convolution results in the ERF for the ‘Subsample’ figure. Finally, replacing those 3 convolutional layers with dilated convolution with factor 2,4 and 8 gives the ‘Dilation’ figure. Both of them are able to increase the effect receptive field significantly. Note the ‘Dilation’ figure shows a rectangular ERF shape typical for dilated convolutions (why?).

How the ERF evolves during training

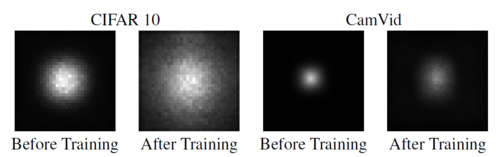

Authors looked at how the ERF of units in the top-most convolutional layers of a classification CNN and a semantic segmentation CNN evolve during training. For both tasks, they adopted the ResNet architecture which makes extensive use of skip-connections. As expected their analysis showed the ERF of these networks are significantly smaller than the theoretical receptive field. Also, as the networks learns, the ERF got bigger so that at the end of training was significantly larger than the initial ERF.

The classification network was a ResNet with 17 residual blocks trained on the CIFAR-10 dataset. Figure shows the ERF on the 32x32 image space at the beginning of training (with randomly initialized weights) and at the end of training when it reaches best validation accuracy. Note that the theoretical receptive field of the network is actually 74x74, bigger than the image size, but the ERF is not filling the image completely. Comparing the results before and after training demonstrates that ERF has grown significantly.

The semantic segmentation network was trained on the CamVid dataset for urban scene segmentation. The 'front-end' of the model was a purely convolutional network that predicted the output at a slightly lower resolution. And then, a ResNet with 16 residual blocks interleaved with 4 subsampling operations each with a factor of 2 was implemented. Due to subsampling operations the output was 1/16 of the input size. For this model, the theoretical RF of the top convolutional layer units was 505x505. However, as Figure shows the ERF only got a fraction of that with a diameter of 100 at the beginning of training, and at the end of training reached almost a diameter around 150.

Discussion

ERF only takes a small portion of the theoretical receptive field, which is undesirable for tasks that require a large RF. So authors suggest two solutions:

- New Initialization scheme to make the weights at the center of the convolution kernel to be smaller and the weights on the outside larger, which diffuses the concentration on the center out to the periphery. One way to implement this is to initialize the network with any initialization method, and then scale the weights according to a distribution that has a lower scale at the center and higher scale on the outside. They tested this solution for the CIFAR-10 classification task, with several random seeds. In a few cases they get a 30% speed-up of training compared to the more standard initializations. But overall the benefit of this method is not always significant.

- Architectural changes of CNNs is the 'better' approach that may change the ERF in more fundamental ways. For example, instead of connecting each unit in a CNN to a local rectangular convolution window, we can sparsely connect each unit to a larger area in the lower layer using the same number of connections. Dilated convolution belongs to this category, but we may push even further and use sparse connections that are not grid-like.

Summary & Conclusion

Authors showed, theoretically and experimentally, that the distribution of impact within the receptive field (the effective receptive field) is asymptotically Gaussian, and the ERF only takes up a fraction of the full theoretical receptive field. They also studied the effects of some standard CNN approaches on the effective receptive field. They found that dropout does not change the Gaussian ERF shape. Subsampling and dilated convolutions are effective ways to increase receptive field size quickly but skip-connections make ERFs smaller.

They argued that since larger ERFs are required for higher performance, new methods to achieve larger ERF will not only help the network to train faster but may also improve performance.

Critique

Authors claim that the ERF results in the experimental section have Gaussian shapes but they never prove this claim. For example, they could fit different 2D-functions, including 2D-Gaussian, to the kernels and show that 2D-Gaussian gives the best fit. Furthermore, the pictures given as proof of the claim that the ERF has a Gaussian distribution only show the ERF of the center pixel of the output [math]\displaystyle{ y_{0,0} }[/math]. Intuitively, the ERF of an node near the boundary of the output layer may have a a significantly different shape. This was not addressed in the paper.

Another weakness is in the discussion section, where they make a connection to the biological networks. They jumped to disprove a well-observed phenomena in the brain. The fact that the neurons in the higher areas of the visual hierarchy gradually lose their retinotpic property has been shown in countless number of neuroscience studies. For example, grandmother cells do not care about the position of grandmother's face in the visual field. In general, the similarity between deep CNNs and biological visual systems is not as strong, hence we should take any generalization from CNNs to biological networks with a grain of salt.

References

Luo, Wenjie, Yujia Li, Raquel Urtasun, and Richard Zemel. "Understanding the effective receptive field in deep convolutional neural networks." In Advances in Neural Information Processing Systems, pp. 4898-4906. 2016.

Buessler, J.-L., Smagghe, P., & Urban, J.-P. (2014). Image receptive fields for artificial neural networks. Neurocomputing, 144(Supplement C), 258–270. https://doi.org/10.1016/j.neucom.2014.04.045

Dilated Convolutions in Neural Network - [1]