The Detection of Black Ice Accidents Using CNNs

Presented by

Introduction

As automated vehicles (AVs) become more popular, it is critical that they be tested on every realistic driving scenario. Since AVs aim to improve road safety, they must be able to handle all kinds of road conditions. One way an AV can prevent an accident is by switching from a passive safety system to an active safety system once a risk is identified.

Every country has its own challenges; in Canada, for example, AVs need to understand how to drive in the winter. However, not enough testing and training has been done to mitigate winter risks. Black ice is one of the leading causes of winter accidents and is very hard to see because it is a thin, transparent layer of ice. For this reason, AV development needs to focus on identifying black ice.

Previous Work

In the past, other methods of detecting black ice included:

- Sensors

  - Electric current sensors embedded in concrete

  - Change in electrical resistance between stainless steel columns inside the concrete, depending on what is on top of the road

- Sound waves

  - Used 3 different sound waves

  - Road conditions detected through reflectance of the waves

  - To be used as basic data in the development of road condition detectors

- Light sources

  - Different road conditions have unique light reflection

  - Specular and diffuse reflections

  - Types of ice were classified based on thickness and volume

  - Other road conditions could be determined through reflection as well

Transportation in general has been using artificial intelligence for many different purposes.

Vehicle and pedestrian detection has used various convolutional neural networks (CNNs) such as AlexNet, YOLO, R-CNN, and Faster R-CNN. Some models performed better while others processed images faster, but overall great success has been achieved.

In addition, studies on traffic sign identification have used similar CNN structures. These algorithms can process high-definition images quickly and recognize the boundary of the traffic sign.

Lastly, CNN algorithms have been used to detect cracks in the road, identifying the existence of a crack and classifying its length with a maximum misclassification of 1 cm.

Significant progress has been made for transportation, but there is a lack of training on winter roads and on black ice specifically. Since CNNs have had great success in quickly identifying objects of interest in images, using a CNN for black ice detection and accident prevention is a natural extension.

Data collection

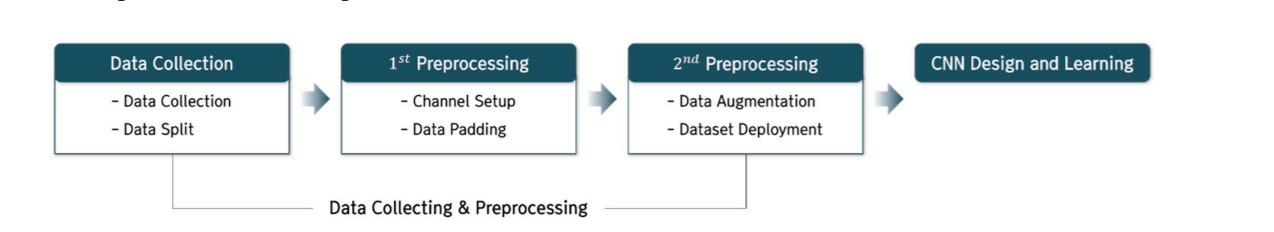

A CNN is a popular class of Artificial Neural Network (ANN) that is commonly used in image analysis due to its excellent performance in image-based object detection. It differs from a plain ANN in that it preserves and passes on the spatial information in images by adding convolutional and pooling layers to a normal ANN. As mentioned earlier, various studies in the transportation sector have used CNNs, but black ice detection on the road has so far only been studied using other methodologies (sensors and optics). This study aims to detect black ice by applying a CNN to images of various road conditions. This chapter discusses the data collection, the 1st and 2nd preprocessing stages, the model design, and the training undertaken (see Figure 1).

1. Data Collection

Image data was collected using Google Image Search for four categories of road condition: road, wet road, snow road, and black ice. The images cover different regions and road environments and total 2230.

2. Data Split

To assist in feature extraction, objects such as road structures, lanes, and shoulders were removed from each image so that the road characteristics of interest could be clearly identified. The image size was chosen by weighing a trade-off. Making images smaller generally causes a loss of information, but smaller images allow for a larger number of images and for deeper neural network implementations. With large images, feature extraction can be more accurate because the finer features are not lost and the network can learn more robust features, but the number of images is reduced and a deep neural network is difficult to implement. In this study, a size of 128 x 128 px was selected for training. The results of the data split are shown in Figure 2.

1st Preprocessing

In the 1st stage of Preprocessing, the channel was set up and data padding was performed on the training data.

1. Channel Setup

The 128 x 128 px color images obtained through the data split have the advantage of offering three channels to help identify the road characteristics. However, three channels also make the data large, which limits the amount of training data and the depth of the neural network that can be implemented. This study therefore converted the data into grayscale images.
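As a rough illustration of the channel setup, the sketch below collapses a three-channel image into a single grayscale channel, which cuts the data size to one third. The source does not say how the conversion was done, so the ITU-R BT.601 luminance weights and the names `LUMA_WEIGHTS` and `to_grayscale` are assumptions made for this example.

```python
import numpy as np

# Assumed luminance weights (ITU-R BT.601); the source does not state
# which grayscale conversion was actually used.
LUMA_WEIGHTS = np.array([0.299, 0.587, 0.114], dtype=np.float32)


def to_grayscale(rgb):
    """Collapse an (H, W, 3) RGB array into an (H, W, 1) grayscale array."""
    gray = rgb.astype(np.float32) @ LUMA_WEIGHTS  # weighted sum over channels
    return gray[..., np.newaxis]                  # keep an explicit channel axis


# Hypothetical 128 x 128 color patch filled with random pixel values.
rgb_patch = np.random.default_rng(0).integers(
    0, 256, size=(128, 128, 3), dtype=np.uint8
)
gray_patch = to_grayscale(rgb_patch)
print(rgb_patch.shape, "->", gray_patch.shape)
```

The grayscale array holds one value per pixel instead of three, which is the size reduction the text relies on.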

2. Data padding

Data padding resizes the training images by adding filler values that carry no information to the existing data. When training was done without data padding, very low accuracy (25%, which is chance level for four classes) and high loss values were obtained (Table 4). This is because the edges of the image data are distorted by the data augmentation.

Therefore, in this study, the image data were padded to prevent distortion of the edges of the data.
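The padding step can be sketched as follows, taking a 128 x 128 px grayscale image up to the 150 x 150 px size reported for the 2nd preprocessing stage. The source does not specify the fill value or how the border was distributed, so zero fill and a symmetric 11 px border are assumptions, and `pad_to_square` is a hypothetical helper name.

```python
import numpy as np


def pad_to_square(image, target=150):
    """Zero-pad a 2-D grayscale image symmetrically up to target x target."""
    height, width = image.shape
    pad_h, pad_w = target - height, target - width
    if pad_h < 0 or pad_w < 0:
        raise ValueError("image is larger than the target size")
    # Split the padding as evenly as possible between the two sides.
    top, left = pad_h // 2, pad_w // 2
    return np.pad(
        image,
        ((top, pad_h - top), (left, pad_w - left)),
        mode="constant",
        constant_values=0,  # assumed filler value
    )


patch = np.ones((128, 128), dtype=np.float32)
padded = pad_to_square(patch)
print(padded.shape)
```

With a border in place, small shifts or rotations applied during augmentation move filler pixels out of the frame before they touch the road content.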

2nd preprocessing

After the 1st preprocessing, during which channel setup and data padding were performed, grayscale image data of 150 x 150 px were obtained in the following categories: 4900 road and wet road images and 4900 snow road and black ice images (Table 5).

3. Data Augmentation

In the 2nd preprocessing stage, to improve the diversity in the image data obtained through Google Image Search, additional image data was created through data augmentation on existing image data.

This is done in the hope of improving the model's accuracy, since large amounts of data are essential for high accuracy and for preventing overfitting.

Data augmentation is especially helpful for this study: black ice is not only seasonal but also forms only under very specific conditions, which makes black ice images scarcer than other types of data. To improve the accuracy of the CNN, the ImageDataGenerator function provided by the Keras library was used to augment the data under the conditions listed in Table 6.

The process of building the training data through data augmentation is as follows.

From the original 17,600 images, 1000 were randomly extracted from each class and designated as test data. The remaining images, which form the training data, were augmented using the ImageDataGenerator function, increasing the total number of images to 10,000 per class. The augmented data was then split into training and validation data at a ratio of 8:2. The final ratio of training, validation, and test data for each class was therefore 8:2:1 (Figure 3 and Table 7).
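The per-class arithmetic behind the 8:2:1 ratio can be checked with a few lines. The function name `split_counts` and its parameter names are made up for this sketch; the numbers are the ones stated above.

```python
def split_counts(augmented_per_class=10_000, test_per_class=1_000,
                 train_fraction=0.8):
    """Return per-class image counts for the train, validation, and test sets."""
    train = round(augmented_per_class * train_fraction)
    validation = augmented_per_class - train
    return {"train": train, "validation": validation, "test": test_per_class}


counts = split_counts()
print(counts)  # {'train': 8000, 'validation': 2000, 'test': 1000}
```

Dividing each count by 1000 gives the 8:2:1 ratio. Note that the test images are held out before augmentation, so they are never augmented copies of training images.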

Model Architecture

The model structure consists of 2 main components: feature extraction and classification. The feature extraction component can be broken down into 2 sections. The model begins with 2 convolutional layers, each using a 3x3 kernel and the ReLU activation function to mitigate vanishing gradients. The goal of the convolutional layers is to extract from the input image the features that distinguish black ice, such as edges, orientation, and color. They are followed by 2 max-pooling layers with a 2x2 stride. A max-pooling layer maps the grid of values in each window to a single output value, the maximum of that window, which reduces the output size of the convolutional layers. This keeps the most relevant features while reducing the amount of computation needed downstream. A 20% dropout layer is then used, which randomly sets 20% of the activations coming from the preceding layers to zero during training, with the aim of improving generalization and avoiding overfitting. This structure is then repeated, making up the first section of the feature extraction workflow.

The second section repeats this layout, but with 1 convolutional layer and 1 max-pooling layer instead of 2, followed by one dropout layer with the same parameters.
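To make the pooling and dropout steps concrete, here is a minimal NumPy sketch of 2x2 max pooling and of a 20% dropout. It is not the study's code. Dropout is modeled the way the Keras `Dropout` layer applies it during training, by zeroing randomly chosen activations and rescaling the rest; the helper names and the toy 4x4 input are assumptions.

```python
import numpy as np


def max_pool_2x2(feature_map):
    """2x2 max pooling with stride 2 on an (H, W) map; H and W must be even."""
    h, w = feature_map.shape
    blocks = feature_map.reshape(h // 2, 2, w // 2, 2)
    return blocks.max(axis=(1, 3))  # keep only the maximum of each window


def dropout(activations, rate=0.2, rng=None):
    """Inverted dropout: zero a random share of units and rescale the rest."""
    if rng is None:
        rng = np.random.default_rng()
    keep_mask = rng.random(activations.shape) >= rate
    return activations * keep_mask / (1.0 - rate)


feature_map = np.arange(16, dtype=np.float32).reshape(4, 4)  # toy input
pooled = max_pool_2x2(feature_map)
print(pooled)  # [[ 5.  7.] [13. 15.]]: the maximum of each 2x2 window

dropped = dropout(pooled, rate=0.2, rng=np.random.default_rng(0))
print(dropped)  # each entry is either 0 or the pooled value divided by 0.8
```

Pooling halves each spatial dimension, which is where the downstream computation savings come from. Dropout is only active during training; at inference time the activations pass through unchanged.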

The classification component consists of fully connected layers feeding into a softmax output. There are 4 fully connected layers with 3 dropout layers in between.

Finally, the model was trained with the stochastic gradient descent (SGD) optimizer for up to 200 epochs with a batch size of 32. Training is stopped early if the validation loss does not fall below the minimum value encountered so far within 20 epochs.
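The softmax output turns the four raw scores of the last fully connected layer into class probabilities that sum to 1. The sketch below shows that step in isolation; the logit values are invented for illustration and the class order is an assumption.

```python
import numpy as np

# Class names from the data collection step; the order is assumed.
CLASSES = ["road", "wet road", "snow road", "black ice"]


def softmax(logits):
    """Numerically stable softmax over the last axis."""
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


# Hypothetical outputs of the last fully connected layer for one image.
logits = np.array([0.5, 1.0, -0.3, 2.2])
probs = softmax(logits)
predicted = CLASSES[int(np.argmax(probs))]
print(dict(zip(CLASSES, probs.round(3))), "->", predicted)
```

The predicted class is the one with the highest probability, which for these made-up logits is black ice.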
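The stopping rule can be expressed as a small loop over validation losses. This is a sketch of the rule as described, not the study's code: `early_stopping_epoch` is a hypothetical helper and the loss values are invented. In Keras, this behavior corresponds roughly to the `EarlyStopping` callback with `monitor="val_loss"` and `patience=20`, although the source does not say which mechanism was used.

```python
def early_stopping_epoch(val_losses, patience=20):
    """Return the 1-based epoch at which training stops, or None if it never does."""
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            # New minimum: remember it and reset the counter.
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None


# Invented loss curve: improves for 3 epochs, then plateaus above the minimum.
losses = [1.0, 0.8, 0.7] + [0.75] * 30
stop = early_stopping_epoch(losses, patience=20)
print(stop)  # 23: the 20th epoch in a row without a new minimum
```

With a 200-epoch budget, this rule only shortens training when the validation loss stalls for 20 consecutive epochs.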