Synthesizing Programs for Images using Reinforced Adversarial Learning

Revision as of 15:02, 23 October 2018

Synthesizing Programs for Images using Reinforced Adversarial Learning: Summary of the ICML 2018 paper http://proceedings.mlr.press/v80/ganin18a.html

Presented by

1. Nekoei, Hadi [Quest ID: 20727088]

Motivation

Conventional neural generative models have two major problems:

- First, it is not clear how to inject prior knowledge about the data into the model.

- Second, the latent space is not easily interpretable.

The solution proposed in this paper is to generate programs that drive existing tools (e.g. graphics editors, illustration software, CAD). This gives the model a more meaningful API: a sequence of complex actions rather than raw pixels.

Introduction

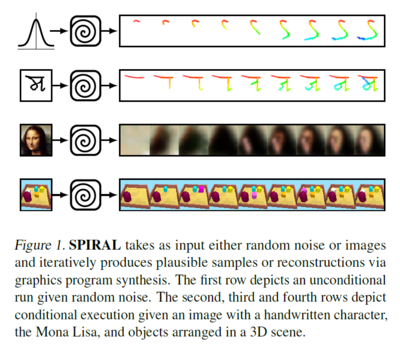

Humans frequently use the ability to recover structured representations from raw sensation to understand their environment. Examples include decomposing a picture of a hand-written character into strokes or understanding the layout of a building, and studying such abilities can help us learn how the brain actually works. To address these problems, the paper presents a new approach for interpreting and generating images using Deep Reinforced Adversarial Learning, with the aim of reducing the need for large amounts of supervision and scaling to larger real-world datasets. In this approach, an adversarially trained agent (SPIRAL) generates a program, which is executed by a graphics engine to produce an image, either conditioned on data or unconditionally. The agent is rewarded for fooling a discriminator network and is trained with distributed reinforcement learning without any extra supervision. The discriminator network itself is trained to distinguish between generated and real images.
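The data flow described above (agent emits a program, a graphics engine executes it, a discriminator produces the reward) can be illustrated with a toy sketch. Everything here is an assumption made for illustration and is not the paper's implementation: the "program" is a list of straight-line commands, the policy is a random sampler rather than a trained agent, and `discriminator_score` is a fixed pixel-match stand-in for the learned discriminator. All function names are hypothetical.

```python
import random

CANVAS = 8  # hypothetical canvas side length in pixels


def render(program, size=CANVAS):
    """Toy graphics engine: draw each (x0, y0, x1, y1) line on a blank canvas."""
    canvas = [[0] * size for _ in range(size)]
    for x0, y0, x1, y1 in program:
        steps = max(abs(x1 - x0), abs(y1 - y0), 1)
        for t in range(steps + 1):
            # Linear interpolation between the two endpoints.
            x = round(x0 + (x1 - x0) * t / steps)
            y = round(y0 + (y1 - y0) * t / steps)
            canvas[y][x] = 1
    return canvas


def sample_program(rng, length=3, size=CANVAS):
    """Stand-in for the agent's policy: sample random line commands."""
    return [tuple(rng.randrange(size) for _ in range(4)) for _ in range(length)]


def discriminator_score(image, real_image):
    """Stand-in for the learned discriminator: fraction of matching pixels."""
    total = len(image) * len(image[0])
    matches = sum(
        a == b
        for row_a, row_b in zip(image, real_image)
        for a, b in zip(row_a, row_b)
    )
    return matches / total


def episode(rng, real_image):
    """One rollout: sample a program, execute it, and score the result."""
    program = sample_program(rng)
    image = render(program)
    reward = discriminator_score(image, real_image)  # would drive the RL update
    return program, image, reward
```

In the approach described above, the reward would come from a discriminator that is itself trained on real versus generated images, and the sampler would be replaced by a policy updated with reinforcement learning. The sketch only shows how the three components connect.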

Related Work

Related work in this field is summarized as follows:

- A large number of studies invert simulators to interpret images (Nair et al., 2008; Paysan et al., 2009; Mansinghka et al., 2013; Loper & Black, 2014; Kulkarni et al., 2015a; Jampani et al., 2015)

- Inferring motor programs for reconstruction of MNIST digits (Nair & Hinton, 2006)

- Visual program induction in the context of hand-written characters on the OMNIGLOT dataset (Lake et al., 2015)

- Inferring and learning feed-forward or recurrent procedures for image generation (LeCun et al., 2015; Hinton & Salakhutdinov, 2006; Goodfellow et al., 2014; Ackley et al., 1987; Kingma & Welling, 2013; Oord et al., 2016; Kulkarni et al., 2015b; Eslami et al., 2016; Reed et al., 2017; Gregor et al., 2015)

However, all of these methods have limitations, such as:

- Difficulty scaling to larger real-world datasets

- Requiring hand-crafted parses and supervision in the form of sketches and corresponding images

- Lacking the ability to infer structured representations of images