RoBERTa

Presented by

Danial Maleki

Introduction

Self-training methods in natural language processing (NLP) such as ELMo[1], GPT[2], BERT[3], XLM[4], and XLNet[5] have brought significant improvements, but it is difficult to determine which aspects of each method contribute the most. RoBERTa is a replication study of BERT pretraining that investigates the effects of hyperparameter tuning and training set size. In summary, the authors' changes fall into two categories: (1) they modified some of BERT's design choices and training schemes, and (2) they used a new collection of datasets. Together, these two categories of changes improve performance on the downstream tasks.

Background

In this section, the authors give an overview of BERT, since RoBERTa uses the same architecture. In short, BERT uses the transformer architecture[6] with two training objectives: masked language modelling (MLM) and next sentence prediction (NSP). The MLM objective randomly samples some of the tokens in the input sequence and replaces them with the special token [MASK]; the model then tries to predict the original tokens based on the surrounding context. NSP is a binary classification loss for predicting whether two sentences follow each other or not. The network is trained with the Adam optimizer under a specific set of hyperparameters, on a combination of several datasets. Finally, they ran experiments on evaluation tasks such as GLUE[7], SQuAD[7], and RACE[8], and reported performance on these downstream tasks.
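To make the NSP objective concrete, here is a minimal sketch of how one training pair and its binary label might be built. The function name `make_nsp_example` and the toy corpus format (a list of documents, each a list of sentence strings) are assumptions made for illustration. Real BERT preprocessing works on tokenized text with special tokens, which this sketch leaves out.

```python
import random


def make_nsp_example(docs, rng):
    """Build one (sentence_a, sentence_b, is_next) example.

    docs: list of documents, each a list of at least two sentences.
          At least two documents are required (illustrative assumption).
    With probability 0.5, sentence_b is the true successor of sentence_a
    (label 1); otherwise it is drawn from a different document (label 0).
    """
    doc_idx = rng.randrange(len(docs))
    doc = docs[doc_idx]
    pos = rng.randrange(len(doc) - 1)  # leave room for a successor
    sentence_a = doc[pos]
    if rng.random() < 0.5:
        return sentence_a, doc[pos + 1], 1
    # Negative example: pick a sentence from some other document.
    other_idx = rng.choice([i for i in range(len(docs)) if i != doc_idx])
    return sentence_a, rng.choice(docs[other_idx]), 0
```

A classifier trained on such pairs only has to output one bit per pair, which is why NSP is described as a binary classification loss.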

Training Procedure Analysis

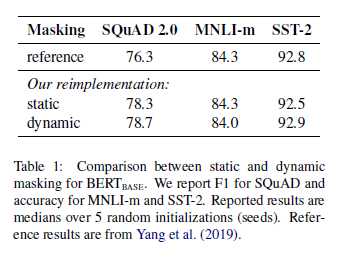

In this section, they examine which choices are important for successfully pretraining BERT. First, they compared static and dynamic masking. As mentioned in the previous section, the masked language modelling objective in BERT pretraining masks a few tokens from each sequence at random and then predicts them. However, in the original implementation of BERT, the sequences are masked only once, during preprocessing. This means the same masking pattern is used for a given sequence every time it is seen during training.

In dynamic masking, by contrast, a new masking pattern is generated every time a sequence is fed to the model. The results show that dynamic masking performs slightly better than static masking.
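The difference between the two strategies can be sketched in a few lines. This is a simplified illustration, not the authors' implementation: the helper names are hypothetical, and the default masking rate of 0.15 is the rate used in BERT, which this summary does not state. The sketch also ignores that BERT sometimes keeps or randomly replaces a selected token instead of inserting [MASK].

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, rng, mask_prob=0.15):
    """Replace each token with [MASK] independently with probability mask_prob.

    Returns (masked_tokens, labels). labels holds the original token at
    masked positions and None elsewhere.
    """
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(tok)
        else:
            masked.append(tok)
            labels.append(None)
    return masked, labels


def static_epochs(tokens, num_epochs, seed=0):
    """Static masking: mask once in preprocessing, reuse it every epoch."""
    fixed = mask_tokens(tokens, random.Random(seed))
    return [fixed for _ in range(num_epochs)]


def dynamic_epochs(tokens, num_epochs, seed=0):
    """Dynamic masking: draw a fresh pattern each time the sequence is fed in."""
    rng = random.Random(seed)
    return [mask_tokens(tokens, rng) for _ in range(num_epochs)]
```

With static masking the model sees the same prediction targets for a sequence in every epoch, while dynamic masking exposes different targets over time.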

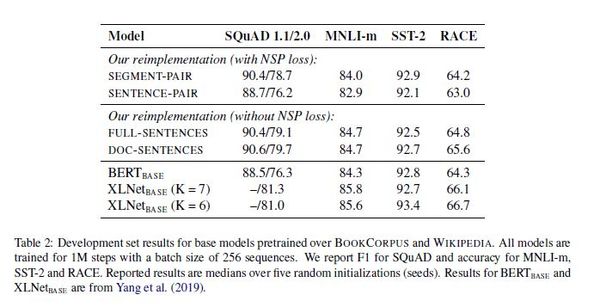

Next, they investigated whether the next sentence prediction objective is necessary. They compared several input settings to see whether removing the NSP loss from pretraining helps or hurts.

1-Segment-Pair + NSP: Each input is a pair of segments (segments, not sentences, so each can contain several sentences). With probability 0.5 the two segments come from the same original document; otherwise the second comes from a different, randomly chosen document, and the model is trained with the NSP loss to tell the two cases apart. The total combined length must be < 512 tokens (which is the maximum fixed sequence length for the BERT model). This is the input representation used in the original BERT implementation.

2-Sentence-Pair + NSP: Same as the segment-pair representation, but with pairs of single sentences. Since the total length of these inputs is much shorter than 512 tokens, a larger batch size is used so that the number of tokens processed per training step is similar to that in the segment-pair representation.

3-Full-Sentences: This setting does not use the NSP loss. Each input is packed with full sentences taken contiguously from one or more documents, with a total length of at most 512 tokens. When one document ends, sentences from the next document are added, with an extra separator token between the documents.

4-Doc-Sentences: Like Full-Sentences, this setting does not use the NSP loss. Inputs are built the same way, except that a sequence may not cross a document boundary, i.e. once a document is over, sentences from the next one aren't added to the sequence. Because inputs sampled near the end of a document can be shorter than 512 tokens, the batch size is increased dynamically in those cases so that the total number of tokens per batch stays similar to Full-Sentences.
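The two settings without NSP differ only in how sentences are packed into inputs, which the following sketch illustrates. The function `pack_sentences`, its `cross_documents` flag, and the use of "[SEP]" as the separator are hypothetical choices made for this example. Batching of the resulting variable-length sequences is left out.

```python
SEP = "[SEP]"
MAX_LEN = 512


def pack_sentences(docs, max_len=MAX_LEN, cross_documents=True):
    """Pack tokenized sentences into sequences of at most max_len tokens.

    docs: list of documents; each document is a list of sentences; each
          sentence is a list of tokens (assumed no longer than max_len).
    cross_documents=True  -> Full-Sentences style: keep filling across a
                             document boundary, inserting SEP between documents.
    cross_documents=False -> Doc-Sentences style: start a new sequence at
                             every document boundary.
    """
    sequences, current = [], []
    for doc in docs:
        if current:
            if cross_documents and len(current) + 1 < max_len:
                current = current + [SEP]  # mark the document boundary
            else:
                sequences.append(current)
                current = []
        for sent in doc:
            # Flush when the next sentence would overflow the sequence.
            if current and len(current) + len(sent) > max_len:
                sequences.append(current)
                current = []
            current = current + sent
    if current:
        sequences.append(current)
    return sequences
```

With `cross_documents=False`, short documents yield short sequences, which is why the Doc-Sentences setting needs some extra handling to keep the amount of data per batch comparable.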

The following table shows the performance of each setting on each downstream task. The best results are achieved in the DOC-SENTENCES setting, with the NSP loss removed.

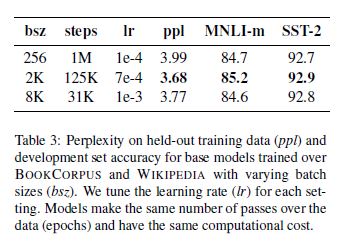

Next, they investigated the importance of a large batch size. They tried several batch sizes and found that a batch size of 2K performed best among them. The table below shows their results for the different batch sizes.