Predicting Floor Level For 911 Calls with Neural Network and Smartphone Sensor Data

Introduction

In highly populated cities with many buildings, locating individuals in an emergency is an important task. For emergency responders, time is of the essence, so accurately locating a 911 caller plays an integral role in the response.

The problem is motivated by 911 calls such as the following: victims trapped in a tall building who need immediate medical attention, emergency personnel such as firefighters or paramedics who must be located, or a minor calling on behalf of an incapacitated adult.

In this paper, a novel approach is presented to accurately predict floor level for 911 calls by leveraging neural networks and sensor data from smartphones.

In large cities with tall buildings, relying on GPS or Wi-Fi signals does not always lead to an accurate location of a caller.

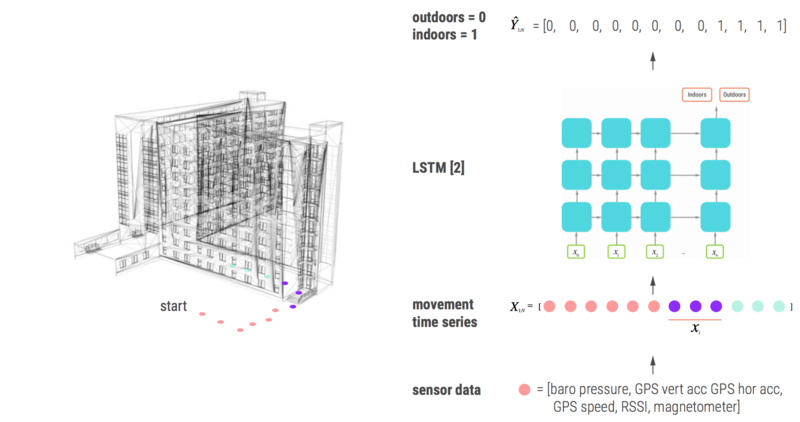

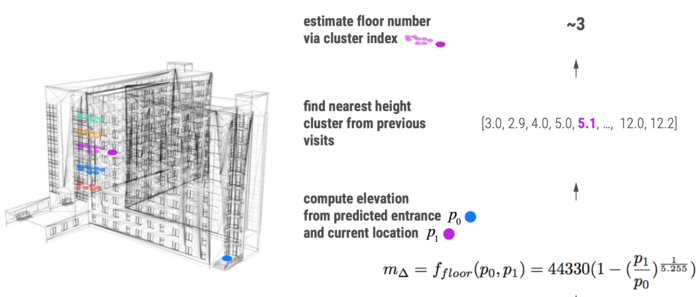

This work makes two major contributions. The first is an LSTM trained to classify whether a smartphone is inside or outside a building using GPS, RSSI, and magnetometer sensor readings. The model is compared with baseline models such as feed-forward neural networks, logistic regression, SVM, HMM, and Random Forests. The second contribution is an algorithm that uses the output of the trained LSTM to measure the change in the smartphone's barometric pressure between the moment it first entered the building and its current location within the building. In the final part of the algorithm, the authors predict the floor level by clustering the height measurements.

The model does not rely on external sensors placed inside the building, prior knowledge of the building, or user movement behaviour. The only inputs are the phone's own sensor readings (GPS, RSSI, magnetometer, and barometer). Finally, the authors also discuss applications of this algorithm in a variety of other real-world situations.

All the code and data related to this article are available here [[1]]

Related Work

In general, previous work falls into two categories. The first category classifies the user's activity and predicts floor level from the resulting offset in their movement [2]. These activities include running, walking, and riding an elevator. The second category relies on a barometer, which measures atmospheric pressure and can therefore capture changes in altitude.

Avinash Parnandi and his coauthors used multiple classifiers to predict the floor level [2]. The steps in their algorithmic process are:

- Classifier to predict whether the user is indoors or outdoors

- Classifier to identify the activity of the user, e.g. walking, standing still, etc.

- Classifier to measure the displacement

One downside of this work is that achieving high accuracy requires the user's step size, so the method relies heavily on pre-training for specific users. This would not be practical in a real-world application.

Song and his colleagues model the cause of the ascent, that is, whether the user took the elevator, stairs, or escalator [3]. Then, using infrastructure support from the building as well as additional tuning, they are able to predict floor level.

This method also suffers from relying on data specific to the building.

Overall, these methods suffer from relying on pre-training to a specific user, needing additional infrastructure support, or data specific to the building. The method proposed in this paper aims to predict floor level without these constraints.

Method

In their paper, the authors claim that to their knowledge "there does not exist a dataset for predicting floor heights" [4].

To collect data, the authors developed an iOS application (called Sensory) that runs on an iPhone 6s to aggregate the data. They used the smartphone's sensors to record features such as barometric pressure, GPS course, GPS speed, RSSI strength, GPS longitude, GPS latitude, and altitude. The app streamed data at 1 sample per second, and each datum contained the sensor measurements mentioned earlier along with environment context such as building floors, environment activity, city name, country name, and magnetic strength.

The data collection procedure for indoor-outdoor classifier was described as follows: 1) Start outside a building. 2) Turn Sensory on, set indoors to 0. 3) Start recording. 4) Walk into and out of buildings over the next n seconds. 5) As soon as we enter the building (cross the outermost door) set indoors to 1. 6) As soon as we exit, set indoors to 0. 7) Stop recording. 8) Save data as CSV for analysis. This procedure can start either outside or inside a building without loss of generality.

The following procedure generates data used to predict a floor change from the entrance floor to the end floor: 1) Start outside a building. 2) Turn Sensory on, set indoors to 0. 3) Start recording. 4) Walk into and out of buildings over the next n seconds. 5) As soon as we enter the building (cross the outermost door) set indoors to 1. 6) Finally, enter a building and ascend/descend to any story. 7) Ascend through any method desired, stairs, elevator, escalator, etc. 8) Once at the floor, stop recording. 9) Save data as CSV for analysis.

Their algorithm for predicting floor level is a three-part process:

- Classifying whether smartphone is indoor or outdoor

- Indoor/Outdoor Transition detector

- Estimating vertical height and resolving to absolute floor level

1) Classifying Indoor/Outdoor

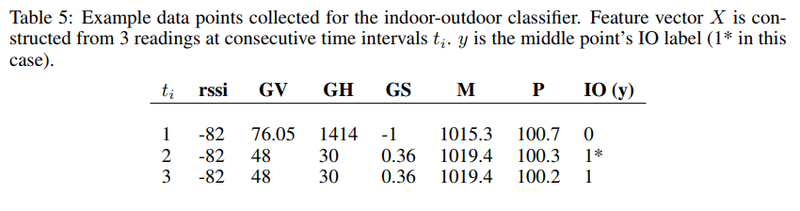

Following [5], they use 6 features that were selected through forests-of-trees feature reduction. The features are the smartphone's barometric pressure ([math]\displaystyle{ P }[/math]), GPS vertical accuracy ([math]\displaystyle{ GV }[/math]), GPS horizontal accuracy ([math]\displaystyle{ GH }[/math]), GPS speed ([math]\displaystyle{ S }[/math]), device RSSI level ([math]\displaystyle{ rssi }[/math]), and magnetometer total reading ([math]\displaystyle{ M }[/math]).

The magnetometer total reading is the magnitude of the 3-dimensional reading [math]\displaystyle{ x, y, z }[/math]:

[math]\displaystyle{ M = \sqrt{x^2 + y^2 + z^2} }[/math]
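As a minimal sketch, the six-feature input for one time step could be assembled as follows. The dictionary keys are hypothetical names chosen for illustration and are not taken from the Sensory export format; the magnetometer total is assumed to be the Euclidean norm of the three axis readings.

```python
import math

def magnet_total(x, y, z):
    """Magnitude of the 3-axis magnetometer reading (assumed Euclidean norm)."""
    return math.sqrt(x * x + y * y + z * z)

def feature_vector(sample):
    """Build [P, GV, GH, S, rssi, M] from one sensor record.

    The key names below are hypothetical, not the app's actual column names.
    """
    return [
        sample["pressure"],
        sample["gps_vertical_accuracy"],
        sample["gps_horizontal_accuracy"],
        sample["gps_speed"],
        sample["rssi"],
        magnet_total(sample["magnet_x"], sample["magnet_y"], sample["magnet_z"]),
    ]

# Illustrative record with made-up values.
example = {
    "pressure": 1013.2, "gps_vertical_accuracy": 4.0,
    "gps_horizontal_accuracy": 5.0, "gps_speed": 1.2, "rssi": 3,
    "magnet_x": 3.0, "magnet_y": 4.0, "magnet_z": 0.0,
}
features = feature_vector(example)
```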

They used a 3-layer LSTM whose inputs are [math]\displaystyle{ d }[/math] consecutive time steps. The output is [math]\displaystyle{ y = 1 }[/math] if the smartphone is indoors and [math]\displaystyle{ y = 0 }[/math] if it is outdoors.

In their design they set [math]\displaystyle{ d = 3 }[/math], a value chosen by random search [6]. The idea is that the network should learn the relationship given a little information from both the past and the future.

For the overall signal sequence: [math]\displaystyle{ \{x_1, x_2,x_j, ... , x_n\} }[/math] the aim is to classify [math]\displaystyle{ d }[/math] consecutive sensor readings [math]\displaystyle{ X_i = \{x_1, x_2, ..., x_d \} }[/math] as [math]\displaystyle{ y = 1 }[/math] or [math]\displaystyle{ y = 0 }[/math] as noted above.
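The windowing step can be sketched as below. This is an illustration only: labelling each window with the indoor state of its centre sample is an assumption made here (it is one way to give the classifier a little past and future context), and the function name is hypothetical.

```python
def make_windows(readings, labels, d=3):
    """Slice a sensor sequence into overlapping windows of d consecutive steps.

    Each window is paired with the label of its centre sample (an assumption).
    """
    windows, targets = [], []
    for start in range(len(readings) - d + 1):
        windows.append(readings[start:start + d])
        targets.append(labels[start + d // 2])
    return windows, targets

# Five time steps, each a (shortened) feature vector, with indoor labels.
readings = [[1013.2, 5.0], [1013.1, 6.0], [1013.0, 20.0], [1012.9, 25.0], [1012.9, 30.0]]
labels = [0, 0, 1, 1, 1]
X, y = make_windows(readings, labels, d=3)
```

With five readings and `d = 3` this yields three windows, one per interior time step.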

This is a critical part of their system and they only focus on the predictions in the subspace of being indoors.

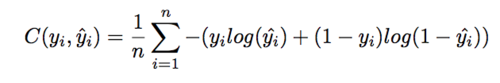

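They trained the LSTM to minimize the binary cross entropy between the true indoor state [math]\displaystyle{ y_i }[/math] and the predicted indoor state [math]\displaystyle{ \hat{y}_i }[/math] of example [math]\displaystyle{ i }[/math].

The cost function, in the standard binary cross-entropy form averaged over [math]\displaystyle{ n }[/math] examples, is:

[math]\displaystyle{ \ell(\hat{y}, y) = -\frac{1}{n} \sum_{i=1}^{n} \left[ y_i \log \hat{y}_i + (1 - y_i) \log (1 - \hat{y}_i) \right] }[/math]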
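For reference, the standard mean binary cross-entropy can be computed as in the following sketch. It assumes predictions are probabilities in (0, 1); the clipping constant `eps` is an implementation detail added here to avoid `log(0)` and does not come from the paper.

```python
import math

def binary_cross_entropy(y_true, y_pred, eps=1e-12):
    """Mean binary cross-entropy between 0/1 labels and predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep log() finite
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)

# Confident, correct predictions give a lower loss than uninformative ones.
confident = binary_cross_entropy([1, 0, 1], [0.9, 0.1, 0.8])
uninformative = binary_cross_entropy([1, 0, 1], [0.5, 0.5, 0.5])
```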

The final output of the LSTM is a time-series [math]\displaystyle{ T = \{t_1, t_2, ..., t_i, ..., t_n\} }[/math] where [math]\displaystyle{ t_i = 0 }[/math] if the point is outside and [math]\displaystyle{ t_i = 1 }[/math] if it is inside.

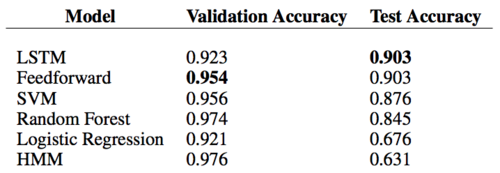

2) Transition Detector

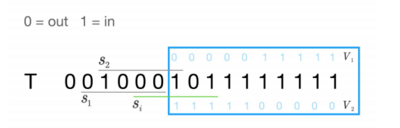

Given the predictions from the previous step, the next part is to find when a transition into or out of a building has occurred.

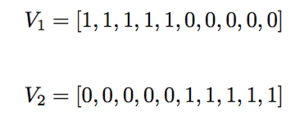

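In this figure, they convolve filters [math]\displaystyle{ V_1, V_2 }[/math] across the predictions [math]\displaystyle{ T }[/math] and pick a subset [math]\displaystyle{ s_i }[/math] such that the Jaccard distance (defined below) is [math]\displaystyle{ \geq 0.4 }[/math].

Jaccard distance, written here in the intersection-over-union form that a match threshold of [math]\displaystyle{ \geq 0.4 }[/math] implies:

[math]\displaystyle{ J(s_i, V_j) = \frac{|s_i \cap V_j|}{|s_i| + |V_j| - |s_i \cap V_j|} }[/math]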

After this process, we are now left with a set of [math]\displaystyle{ b_i }[/math]'s describing the index of each indoor/outdoor transition. The process is shown in the first figure.
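The transition detector can be sketched roughly as follows. Several details are assumptions made for illustration and are not confirmed by the summary: the filters are taken to be step patterns of five 0s and five 1s, the intersection is counted as the number of positions where the window and the filter agree, and each run of adjacent above-threshold windows is merged into its best-scoring index.

```python
def jaccard(a, b):
    """Intersection-over-union score of two equal-length 0/1 sequences.

    The intersection is counted as the number of agreeing positions (assumed).
    """
    matches = sum(1 for x, y in zip(a, b) if x == y)
    return matches / (len(a) + len(b) - matches)

def find_transitions(T, half=5, threshold=0.4):
    """Slide two step filters over predictions T and return transition indices."""
    filters = {
        "enter": [0] * half + [1] * half,  # outdoor -> indoor (hypothetical shape)
        "exit": [1] * half + [0] * half,   # indoor -> outdoor (hypothetical shape)
    }
    transitions = []
    for label, v in filters.items():
        run = []  # adjacent windows scoring above the threshold
        for start in range(len(T) - 2 * half + 1):
            score = jaccard(T[start:start + 2 * half], v)
            if score >= threshold:
                run.append((score, start + half))
            elif run:
                transitions.append((max(run)[1], label))  # keep the best match
                run = []
        if run:
            transitions.append((max(run)[1], label))
    return sorted(transitions)

# Ten seconds outside, ten inside, ten outside again.
predictions = [0] * 10 + [1] * 10 + [0] * 10
b = find_transitions(predictions)
```

Merging adjacent hits matters because a window that is only slightly offset from the true transition still scores above 0.4.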

3) Vertical height and floor level

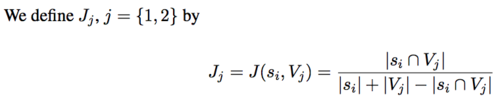

In the final part of the system, the vertical offset needs to be computed given the smartphone's last known location i.e. the last known transition which can easily be computed given the set of transitions from the previous step. All that needs to be done is to pull the index of most recent transition from the previous step and set [math]\displaystyle{ p_0 }[/math] to the lowest pressure within a ~ 15-second window around that index.

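The second parameter is [math]\displaystyle{ p_1 }[/math], the current pressure reading. The relative change in height [math]\displaystyle{ m_\Delta }[/math] is then generated with the standard barometric formula:

[math]\displaystyle{ m_\Delta = f_{floor}(p_0, p_1) = 44330 \left(1 - \left(\frac{p_1}{p_0}\right)^{\frac{1}{5.255}}\right) }[/math]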

After plugging this into the formula defined above we are now left with a scalar value which represents the height displacement between the entrance and the smartphone's current location of the building [7].
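A minimal sketch of this step, assuming the conversion is the standard international barometric formula with constants 44330 and 1/5.255 and that pressure is sampled once per second, so a window of 7 samples on each side approximates the 15-second window. The function names are hypothetical.

```python
def reference_pressure(pressures, transition_index, half_window=7):
    """p0: lowest pressure in a ~15-sample window around the last transition."""
    lo = max(0, transition_index - half_window)
    hi = min(len(pressures), transition_index + half_window + 1)
    return min(pressures[lo:hi])

def height_change(p0, p1):
    """Relative height in metres between pressures p0 and p1 (same units).

    Assumes the standard barometric formula 44330 * (1 - (p1 / p0) ** (1 / 5.255)).
    """
    return 44330.0 * (1.0 - (p1 / p0) ** (1.0 / 5.255))

# Illustrative values in hPa: pressure drops as the phone moves upward.
pressures = [1013.30, 1013.25, 1013.27, 1013.10, 1012.60, 1012.00]
p0 = reference_pressure(pressures, transition_index=1, half_window=1)
m_delta = height_change(p0, pressures[-1])
```

A lower current pressure than at the entrance gives a positive `m_delta`, that is, the phone is above the entrance level.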

In order to resolve to an absolute floor level, they use the index number of the clusters of [math]\displaystyle{ m_\Delta }[/math] 's. As seen above [math]\displaystyle{ 5.1 }[/math] is the third cluster implying floor number 3.
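The cluster-index idea can be illustrated with a simple one-dimensional clustering. This is a sketch under stated assumptions, not the authors' clustering method: sorted heights are split wherever the gap to the previous value exceeds a hypothetical 1.5 m threshold, and the sample heights are made up.

```python
def cluster_heights(heights, gap=1.5):
    """Group sorted height offsets; a gap larger than `gap` metres starts a new cluster."""
    clusters = []
    for h in sorted(heights):
        if clusters and h - clusters[-1][-1] <= gap:
            clusters[-1].append(h)
        else:
            clusters.append([h])
    return clusters

def floor_of(height, clusters):
    """Floor number = 1-based index of the cluster whose mean is nearest to height."""
    centers = [sum(c) / len(c) for c in clusters]
    nearest = min(range(len(centers)), key=lambda i: abs(centers[i] - height))
    return nearest + 1

# Made-up height offsets (metres) collected in one building.
observed = [0.0, 0.2, 2.6, 2.9, 5.1, 5.3, 8.0]
clusters = cluster_heights(observed)
floor = floor_of(5.1, clusters)
```

Here 5.1 falls in the third cluster, matching the floor-3 example in the text.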

Experiments and Results

Dataset

The authors developed an iOS app called Sensory and used it to collect data on an iPhone 6s. The following sensor readings were recorded: indoors, created at, session id, floor, RSSI strength, GPS latitude, GPS longitude, GPS vertical accuracy, GPS horizontal accuracy, GPS course, GPS speed, barometric relative altitude, barometric pressure, environment context, environment mean building floors, environment activity, city name, country name, magnet x, magnet y, magnet z, magnet total.

The indoor-outdoor label has to be entered manually as soon as the user enters or exits a building. To gather the data for floor level prediction, the authors conducted 63 trials among five different buildings throughout New York City. Since unsupervised learning was being used, the actual floor level was recorded manually for validation purposes only.

The indoor-outdoor classifiers were all trained and validated on a total of 5082 data points, split into 80% training and 20% validation. The LSTM was trained for 24 epochs with a batch size of 128, using an Adam optimizer with a learning rate of 0.006. Although the baselines performed considerably well, the objective was to show that an LSTM could in future be used to model the entire system.
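The split and the reported training settings can be written down as in the sketch below. Whether the authors shuffled before splitting is not stated, so the seeded shuffle is an assumption, and the dictionary is just a convenient record of the reported values, not a configuration format used by the authors.

```python
import random

def train_validation_split(samples, train_fraction=0.8, seed=0):
    """Shuffle (an assumption) and split samples into training and validation sets."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

# Training settings as reported in the summary.
HYPERPARAMS = {
    "epochs": 24,
    "batch_size": 128,
    "optimizer": "adam",
    "learning_rate": 0.006,
}

# Stand-in for the 5082 labelled data points.
train, validation = train_validation_split(range(5082))
```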

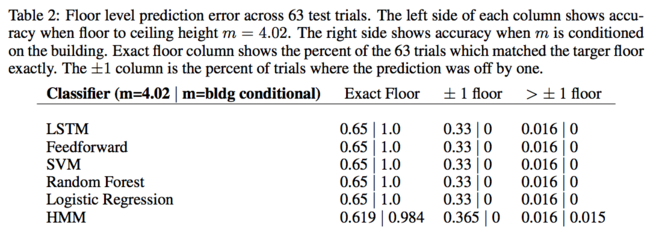

The above chart shows the success that their system is able to achieve in the floor level prediction.

The performance was measured in terms of how many floors were travelled rather than the absolute floor number, because different buildings might number their floors differently. They ran two tests with different values of m, the assumed floor-to-ceiling height: one applied the same m across all buildings, and the other applied a building-specific m. Using building-specific values of m greatly increased the accuracy.
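To see why the choice of m matters, consider the sketch below. It assumes that the number of floors travelled is the height change divided by m and rounded to the nearest integer; the rounding rule and all numeric values are illustrative and not taken from the paper.

```python
def floors_travelled(m_delta, m):
    """Floors travelled for a height change m_delta, given floor height m (metres)."""
    return round(m_delta / m)

# Hypothetical building with tall 4.3 m floors; the user climbed three of them.
m_delta = 12.9
with_building_m = floors_travelled(m_delta, m=4.3)  # building-specific floor height
with_generic_m = floors_travelled(m_delta, m=3.0)   # one value for all buildings
```

In this made-up case the generic value overestimates the climb by one floor, while the building-specific value recovers it.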

Future Work

The first part of the system used an LSTM for indoor/outdoor classification. Therefore, this separate module can be used in many other location problems. Working on this separate problem seems to be an approach that the authors will take. They also would like to aim towards modeling the whole problem within the LSTM in order to generate the floor level predictions solely from sensor reading data.

Critique

In this paper, the authors presented a novel system which can predict a smartphone's floor level with 100% accuracy, which had not been achieved before. Previous work relied heavily on pre-training and on information about the building or its users obtained beforehand. Their work can generalize well to many types of tall buildings of more than 19 stories. Another benefit of their system is that it does not need any additional infrastructure support in advance, making it a practical solution for deployment.

A weakness is that the claimed 100% accuracy is achieved only when the floor-to-ceiling height is known, so their accuracy relies on this key piece of information. Without it, their accuracy drops by 35 percentage points, to 65%. Also, the article's ideas are sometimes out of order and are repeated in cycles.

It is also not clear that the LSTM is the best approach especially since a simple feedforward network achieved the same accuracy in their experiments.

They also go against their claim stated at the beginning of the paper, where they say their method "...does not require the use of beacons, prior knowledge of the building infrastructure...", since in their clustering step they are in a way using prior knowledge from previous visits [4].

The authors also recognize several potential failings of their method. One is that their algorithm will not differentiate based on the floor of the building the user entered on (if there are entrances on multiple floors). In addition, they state that a user on the roof could be detected as being on the ground floor. It was not mentioned or explored in the paper, but a person on a balcony (e.g. one attached to an apartment) may have the same effect. These sources of error will need to be corrected before this or a similar algorithm is implemented; otherwise, the algorithm may provide misleading data to rescue crews.

Overall this paper is not too novel, as the authors do not provide any algorithmic improvement over the state of the art. Their methods are fairly standard ML techniques and they have only used out-of-the-box solutions. There is no clear intuition for why the proposed method works well. This application could be solved using simpler methods, such as having an emergency push button on each floor. Moreover, the authors do not provide sufficient motivation for why deep learning would be a good solution to this problem.

The proposed model could introduce privacy risks such as illegal surveillance of mobile phone users and private facilities.

References

[1] Sepp Hochreiter and Jurgen Schmidhuber. Long short-term memory. Neural Computation, 9(8): 1735–1780, 1997.

[2] Parnandi, A., Le, K., Vaghela, P., Kolli, A., Dantu, K., Poduri, S., & Sukhatme, G. S. (2009, October). Coarse in-building localization with smartphones. In International Conference on Mobile Computing, Applications, and Services (pp. 343-354). Springer, Berlin, Heidelberg.

[3] Wonsang Song, Jae Woo Lee, Byung Suk Lee, Henning Schulzrinne. "Finding 9-1-1 Callers in Tall Buildings". IEEE WoWMoM '14. Sydney, Australia, June 2014.

[4] W Falcon, H Schulzrinne, Predicting Floor-Level for 911 Calls with Neural Networks and Smartphone Sensor Data, 2018

[5] Kawakubo, Hideko and Hiroaki Yoshida. “Rapid Feature Selection Based on Random Forests for High-Dimensional Data.” (2012).

[6] James Bergstra and Yoshua Bengio. 2012. Random search for hyper-parameter optimization. J. Mach. Learn. Res. 13 (February 2012), 281-305.

[7] Greg Milette, Adam Stroud: Professional Android Sensor Programming, 2012, Wiley India