Pre-Training Tasks for Embedding-Based Large-Scale Retrieval

Contributors

Pierre McWhannel wrote this summary with editorial and technical contributions from STAT 946 fall 2020 classmates. The summary is based on the paper "Pre-Training Tasks for Embedding-Based Large-Scale Retrieval", which was presented at ICLR 2020. The authors of this paper are Wei-Cheng Chang, Felix X. Yu, Yin-Wen Chang, Yiming Yang, and Sanjiv Kumar [1].

Introduction

The problem domain of this paper is large-scale query-document retrieval: given a query or question, collect the relevant documents that contain the answer(s) and identify the span of words containing the answer(s). The problem is often separated into two steps: 1) a retrieval phase, which reduces a large corpus to a much smaller subset, and 2) a reader, which is applied to the shortened list of documents to score them on their ability to answer the query and to identify the span containing the answer. These spans can then be ranked according to some scoring function. In the setting of open-domain question answering, the query [math]\displaystyle{ q }[/math] is a question and each document [math]\displaystyle{ d }[/math] is a passage which may contain the answer(s).

The focus of this paper is on the retriever in open-domain question answering. In this setting a scoring function [math]\displaystyle{ f: \mathcal{X} \times \mathcal{Y} \rightarrow \mathbb{R} }[/math] maps [math]\displaystyle{ (q,d) \in \mathcal{X} \times \mathcal{Y} }[/math] to a real value that represents how relevant the passage is to the query. The job of the retriever is to assign high scores to relevant passages and low scores otherwise. Two characteristics are desired of the retriever. The first is high recall: the reader is applied only to the retrieved subset, so it has an opportunity to discard false positives but cannot recover false negatives. The second is low latency. The popular approach to meeting these requirements has been BM25 [2], a token-based matching technique.
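To make the scoring function [math]\displaystyle{ f(q,d) }[/math] concrete, the following is a minimal sketch of a token-matching retriever in the style of BM25. The summary names BM25 but does not give its formula, so the sketch assumes a commonly used Okapi BM25 variant with the conventional defaults k1 = 1.5 and b = 0.75. The class name `BM25Retriever`, the regex tokenizer, and the toy passages are all hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split on non-alphanumerics (a simplifying assumption)."""
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25Retriever:
    """Hypothetical token-matching retriever: f(q, d) is a BM25-style score."""

    def __init__(self, passages, k1=1.5, b=0.75):
        self.k1 = k1  # term-frequency saturation (assumed default)
        self.b = b    # length normalization strength (assumed default)
        self.doc_tokens = [tokenize(p) for p in passages]
        self.term_freqs = [Counter(toks) for toks in self.doc_tokens]
        self.n_docs = len(passages)
        self.avg_len = sum(len(t) for t in self.doc_tokens) / self.n_docs
        # Document frequency: number of passages containing each term.
        self.doc_freq = Counter()
        for toks in self.doc_tokens:
            self.doc_freq.update(set(toks))

    def idf(self, term):
        n = self.doc_freq.get(term, 0)
        # Smoothed IDF that stays non-negative for very common terms.
        return math.log(1.0 + (self.n_docs - n + 0.5) / (n + 0.5))

    def score(self, query, doc_index):
        """f(q, d): sum of per-term BM25 contributions for one passage."""
        freqs = self.term_freqs[doc_index]
        doc_len = len(self.doc_tokens[doc_index])
        norm = self.k1 * (1.0 - self.b + self.b * doc_len / self.avg_len)
        total = 0.0
        for term in tokenize(query):
            tf = freqs.get(term, 0)
            if tf > 0:
                total += self.idf(term) * tf * (self.k1 + 1.0) / (tf + norm)
        return total

    def retrieve(self, query, top_k=2):
        """Return the top_k (passage index, score) pairs, best first."""
        scored = [(self.score(query, i), i) for i in range(self.n_docs)]
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        return [(i, s) for s, i in scored[:top_k]]


passages = [
    "The Eiffel Tower is located in Paris.",
    "BM25 is a token-based ranking function.",
    "Paris is the capital of France.",
]
retriever = BM25Retriever(passages)
results = retriever.retrieve("Where is the Eiffel Tower?", top_k=2)
for index, score in results:
    print(index, round(score, 3), passages[index])
```

The sketch scores every passage exhaustively, which would not meet the low-latency requirement on a large corpus; practical token-based systems typically rely on an inverted index so that only passages sharing a term with the query are scored. A query that shares no tokens with a passage receives a score of zero, which illustrates the limitation of pure token matching.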

Typically there are

Contributions of this paper

- Introduce a set of tasks that model natural feedback from a teacher and hence assess the feasibility of dialog-based language learning.

- Evaluated some baseline models on this data and compared them to standard supervised learning.

- Introduced a novel forward prediction model, whereby the learner tries to predict the teacher’s replies to its actions, which yields promising results even with no reward signal at all.

Code for this paper can be found on GitHub: https://github.com/facebook/MemNN/tree/master/DBLL

Background on Memory Networks

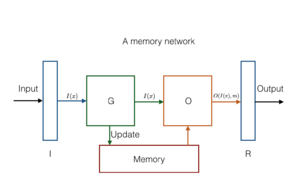

A memory network combines learning strategies from the machine learning literat

References

[1] Wei-Cheng Chang, Felix X. Yu, Yin-Wen Chang, Yiming Yang, and Sanjiv Kumar. Pre-training tasks for embedding-based large-scale retrieval. arXiv preprint arXiv:2002.03932, 2020.

[2] Stephen Robertson, Hugo Zaragoza, et al. The probabilistic relevance framework: BM25 and beyond. Foundations and Trends in Information Retrieval, 3(4):333–389, 2009.