Poison Frogs Neural Networks

Presented by

Eric Anderson, Chengzhi Wang, YiJing Zhou, Kai Zhong

Introduction

“Poison Frogs! Targeted Clean-Label Poisoning Attacks on Neural Networks” (NeurIPS 2018) was written by Ali Shafahi, W. Ronny Huang, Mahyar Najibi, Octavian Suciu, Christoph Studer, Tudor Dumitras, and Tom Goldstein.

In a data poisoning attack, an attacker adds examples (poisons) to a training set to manipulate the model’s behavior at test time. Deep learning models must be able to tolerate such poisons if they are to be deployed in high-stakes, security-critical situations. Targeted attacks aim to manipulate classifier behavior only with respect to a specific test instance, and clean-label attacks do not require the attacker to have control over the poison’s labeling.

This paper presents a method for crafting poisons that carry out targeted, clean-label poisoning attacks on neural networks, along with techniques to boost their lethality. The proposed poisoning technique achieves a 100% success rate on a pretrained InceptionV3 network and up to a 70% success rate on an end-to-end trained, scaled-down AlexNet architecture when using watermarks and multiple poison instances.

Previous Work

Motivation

The previous section shows that while there are studies related to poisoning attacks on SVMs and Bayesian classifiers, poisons for deep neural networks (DNNs) have rarely been studied, with the existing few studies indicating DNNs are extremely susceptible to poison attacks (Steinhardt et al. 2017).

Furthermore, poisons studied prior to this paper can be classified into at least one of the following:

- Focus on classical attacks that degrade model accuracy indiscriminately

- Require test-time instances to be manually modified

- Require the attacker to have some degree of control over the labelling of the training set

- Only achieve acceptable success rates with high poison doses

The proposal of targeted, clean-label poisons in this paper thus opens the door to more efficient, deadly poisons that future neural networks will have to find ways to tolerate.

Basic Concept

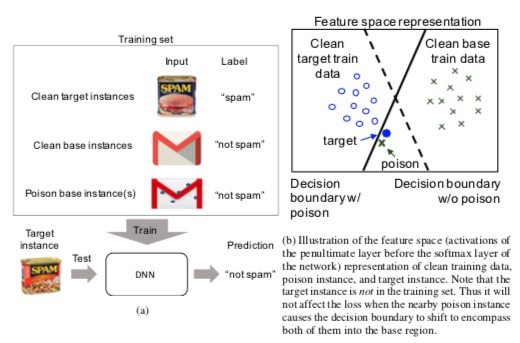

Crafting the basic concoction assumes the attacker has no knowledge of the training data but does have knowledge of the model they intend to poison. A base instance b is chosen from the training set and a test instance t is chosen from the test set. The goal is for the poisoned model to misclassify t as belonging to the class of b at test time, while the poison p remains indiscernible from b to the human data labeller.

[Figure 1 (a) Schematic of the clean-label poisoning attack. (b) Schematic of how a successful attack might work by shifting the decision boundary.]

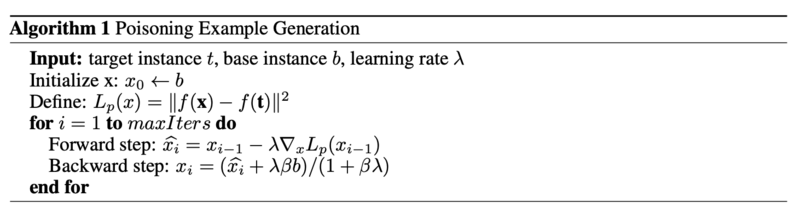

[math]\displaystyle{ \mathbf{p} = \operatorname{argmin}_{\mathbf{x}} \|f(\mathbf{x}) - f(\mathbf{t})\|_2^2 + \beta \|\mathbf{x}-\mathbf{b}\|_2^2 }[/math]

Here f is the function that propagates an input x through the network to the penultimate layer. The first term minimizes the difference between the poison and the test instance t in the eyes of the model, while the second term minimizes the difference between the poison and the base instance b as seen by a human. The authors optimize this objective with a forward-backward splitting procedure: a gradient descent step on the first term, followed by a proximal step that pulls the iterate back toward b.

Human assistance is needed to tune β so that the poison still resembles the base instance to someone who labels the data.
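The optimization described above can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the authors' implementation: the feature extractor is replaced by a hypothetical linear map f(x) = Wx so that the gradient has a closed form, and the function name `craft_poison`, the default β, the step size, and the iteration count are all assumptions chosen for the toy example. Each iteration takes a gradient step on the feature-space distance to t (forward step) and then applies an update of the form (x̂ + λβb) / (1 + λβ), which pulls the iterate back toward the base b (backward step).

```python
import numpy as np


def craft_poison(base, target, W, beta=0.25, lr=None, n_iters=500):
    """Toy forward-backward splitting for a feature-collision poison.

    Assumption: the feature extractor is the linear map f(x) = W @ x,
    standing in for a network's penultimate-layer features.
    """
    if lr is None:
        # The gradient of ||Wx - Wt||^2 is Lipschitz with constant
        # 2 * sigma_max(W)^2; pick a step size safely below its inverse.
        lr = 0.25 / np.linalg.norm(W, 2) ** 2

    f_target = W @ target
    x = base.copy()
    for _ in range(n_iters):
        # Forward step: gradient descent on the feature-space distance.
        grad = 2.0 * W.T @ (W @ x - f_target)
        x_hat = x - lr * grad
        # Backward step: pull the iterate back toward the base instance.
        x = (x_hat + lr * beta * base) / (1.0 + lr * beta)
    return x


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    W = rng.normal(size=(8, 32))      # hypothetical feature extractor
    base = rng.uniform(size=32)       # stand-in for base instance b
    target = rng.uniform(size=32)     # stand-in for target instance t
    poison = craft_poison(base, target, W)
    print("feature distance, base vs target:  ",
          np.linalg.norm(W @ base - W @ target))
    print("feature distance, poison vs target:",
          np.linalg.norm(W @ poison - W @ target))
    print("input distance, poison vs base:    ",
          np.linalg.norm(poison - base))
```

A larger β keeps the poison closer to the base in input space at the cost of a weaker feature collision, which is the trade-off the human-in-the-loop tuning is meant to resolve. With a real network, the analytic gradient would be replaced by backpropagation through the feature extractor.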

Boosting Poison Effectiveness

With the basic concoction in place, the authors introduce several methods to further boost lethality. As we will see in the results section, while the basic concoction is sufficiently deadly for a transfer learning trained model, successful attacks on end-to-end trained models require the help of the following techniques:

- Watermarking: A low-opacity copy of the target instance (the watermark) is blended into the poison to “allow for some inseparable feature[s] [to] overlap while remaining visually distinct” (P7)

- Multiple poison instance attacks: Multiple poison instances derived from different base instances are introduced into the training set

- Targeting outliers: By targeting instances that lie farther away from the other training instances of their class (like in Figure 1b), the authors reason that the class label should be easier to flip
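As a rough illustration of the watermarking trick, the sketch below blends a low-opacity copy of the target image into a base image using a convex combination, γ·t + (1 − γ)·b, which is how the paper formulates the blend. The function name `add_watermark`, the default opacity, and the assumption that images are float arrays scaled to [0, 1] are illustrative choices, not details taken from this summary.

```python
import numpy as np


def add_watermark(base, target, opacity=0.3):
    """Blend a low-opacity copy of `target` into `base`.

    Assumes both images are float arrays of the same shape with
    pixel values in [0, 1]. The default opacity is illustrative.
    """
    if base.shape != target.shape:
        raise ValueError("base and target must have the same shape")
    if not 0.0 <= opacity <= 1.0:
        raise ValueError("opacity must lie in [0, 1]")
    # Convex combination: opacity * target + (1 - opacity) * base.
    blended = opacity * target + (1.0 - opacity) * base
    return np.clip(blended, 0.0, 1.0)
```

Higher opacity increases feature overlap with the target but also makes the watermark more visible to a human labeller, so the value has to be chosen with the same visual check that governs β.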

Results

Conclusion

This article studied targeted clean-label poisoning methods. These attacks are difficult to detect because they involve non-suspicious (correctly labeled) training data and do not degrade performance on non-targeted examples. Poison images collide with a target image in feature space, making it very hard for the network to distinguish between them. Using multiple poison images and a watermarking trick makes the attack more powerful. The authors note that while training on the poisoned dataset does make the network more robust to base-class adversarial examples designed to be misclassified as the target, it also causes the unaltered target instance to be misclassified as a base. Finally, the paper hopes to draw attention to the important issue of data reliability and provenance.

Critiques

With nearly 5 million parameters, GoogLeNet reduced the number of parameters in the network by almost 92% compared to previous architectures such as VGGNet and AlexNet. This enabled Inception to be used for many big data applications where a huge amount of data had to be processed at a reasonable cost with limited computational capacity. However, the Inception network is still complex and sensitive to scaling: if the network is scaled up, large parts of the computational gains can be lost immediately. Also, there was no clear description of the factors that led to the design decisions behind the Inception architecture, making it harder to adapt to other applications while maintaining the same computational efficiency.



Footnote 1: Hebbian theory is a neuroscientific theory claiming that an increase in synaptic efficacy arises from a presynaptic cell's repeated and persistent stimulation of a postsynaptic cell. It is an attempt to explain synaptic plasticity, the adaptation of brain neurons during the learning process.

Footnote 2: For more explanation on 1 by 1 convolution refer to: https://iamaaditya.github.io/2016/03/one-by-one-convolution/