One-Shot Object Detection with Co-Attention and Co-Excitation

Presented By

Gautam Bathla

Background

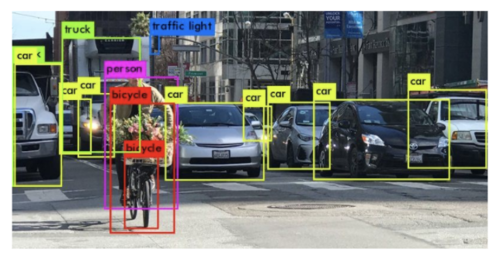

Object detection is a task in which a model takes an image as input and outputs the class and location of every object present in the image.

Figure 1 shows an example where the model identifies and locates all the instances of different objects present in the image successfully. It encloses each object within a bounding box and annotates each box with the class of the object present inside the box.

State-of-the-art object detectors are trained on thousands of images covering different classes before they can accurately predict the class and spatial location of objects in unseen images, and only for the classes they were trained on. When a model is trained with K labeled instances for each of N classes, the setting is known as N-way K-shot classification. K = 0 corresponds to zero-shot learning, K = 1 to one-shot learning, and K > 1 to few-shot learning.
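To make the N-way K-shot setting concrete, the following is a minimal sketch of how one such training episode could be drawn from a labeled pool. The function name `sample_episode` and the dictionary layout are hypothetical choices for illustration and do not come from the paper.

```python
import random

def sample_episode(labeled, n_way, k_shot, seed=None):
    """Draw an N-way K-shot support set.

    labeled: dict mapping class name -> list of labeled examples
             (hypothetical layout, chosen only for this sketch).
    Returns a dict with n_way classes and k_shot examples per class.
    """
    rng = random.Random(seed)
    # Pick N distinct classes, then K distinct examples for each of them.
    classes = rng.sample(sorted(labeled), n_way)
    return {c: rng.sample(labeled[c], k_shot) for c in classes}

# Example pool: three classes with a few (fake) image identifiers each.
pool = {
    "cat": ["cat_1", "cat_2", "cat_3"],
    "dog": ["dog_1", "dog_2", "dog_3"],
    "car": ["car_1", "car_2", "car_3"],
}

# One-shot learning is the special case k_shot = 1.
episode = sample_episode(pool, n_way=2, k_shot=1, seed=0)
```

With `k_shot=1`, each selected class contributes a single labeled example, which matches the one-shot case described above.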

Introduction

The paper tackles the following problem: given a query image p that shows an object, the model must find all instances of that object in a target image. A human given the same task, i.e. identifying and locating instances of a never-before-seen object in the target image based on the query image, would compare characteristics of the object such as shape, texture, and color, and would apply attention to localize it. The human visual system manages this even under varying conditions such as lighting and viewing angle. The authors try to build the same capability into their model. The object in the query image and its instances in the target image do not need to look exactly the same; variation is allowed as long as they share enough attributes to belong to the same category.

The authors make three technical contributions. First, they use non-local operations to generate better region proposals for the target image based on the query image; this operation can be thought of as a co-attention mechanism. Second, they propose a Squeeze and Co-Excitation mechanism that identifies and gives more weight to the relevant features, which helps pick out the relevant proposals and hence the object instances in the target image. Third, they design a margin-based ranking loss for predicting how similar each region proposal is to the given query image, regardless of whether the class label was seen during training.
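The first two ideas can be illustrated with a small NumPy sketch. It is not the authors' implementation: it assumes feature maps are already flattened to (positions, channels) matrices, uses a plain dot-product affinity for the non-local step, and uses random weights `w1` and `w2` for the gating layers. All function and parameter names are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def co_attend(target, query):
    """Non-local style co-attention (simplified sketch).

    target: (Nt, C) target-image features, one row per spatial position.
    query:  (Nq, C) query-image features.
    Each side is enriched with an attention-weighted sum of the other side.
    """
    affinity = target @ query.T                        # (Nt, Nq)
    t_from_q = softmax(affinity, axis=1) @ query       # (Nt, C)
    q_from_t = softmax(affinity.T, axis=1) @ target    # (Nq, C)
    return target + t_from_q, query + q_from_t

def squeeze_co_excite(target, query, w1, w2):
    """Channel re-weighting driven by the query (simplified sketch).

    Squeeze: average the query features over positions -> (C,).
    Excite:  a two-layer bottleneck with a sigmoid yields one gate per
             channel, applied to both the query and the target features.
    """
    squeezed = query.mean(axis=0)                      # (C,)
    hidden = np.maximum(w1 @ squeezed, 0.0)            # ReLU, (C // r,)
    gate = 1.0 / (1.0 + np.exp(-(w2 @ hidden)))        # sigmoid, (C,)
    return target * gate, query * gate, gate

rng = np.random.default_rng(0)
C, r = 8, 4                                            # channels, reduction (assumed)
target = rng.normal(size=(6, C))                       # 6 target positions
query = rng.normal(size=(4, C))                        # 4 query positions
w1 = rng.normal(size=(C // r, C))                      # random stand-in weights
w2 = rng.normal(size=(C, C // r))

att_t, att_q = co_attend(target, query)
gated_t, gated_q, gate = squeeze_co_excite(att_t, att_q, w1, w2)
```

In this sketch the same per-channel gate is applied to both feature maps, so channels that matter for the query object are emphasized in the target features as well, which is the intuition behind co-excitation described above.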