Neural Audio Synthesis of Musical Notes with WaveNet Autoencoders

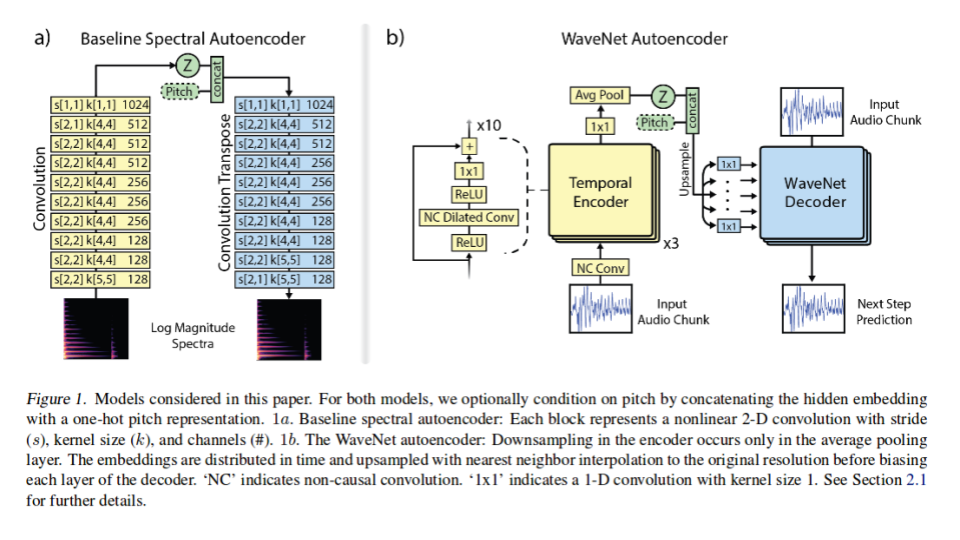

Revision as of 22:47, 22 March 2018

Introduction

The authors point out that most synthesized notes are produced by hand-designed instruments, which modify pitch, velocity and filter parameters to produce the required tone, timbre and dynamics of a sound. They suggest that this may be a problem and propose a data-driven approach to audio synthesis instead. Because such a model is data-hungry, the authors highlight the need for a large dataset of musical notes, much like ImageNet for images.

Contributions

To solve the problem highlighted above the authors propose two main contributions of their paper:

- A WaveNet-style autoencoder that learns temporal codes capturing long-term audio structure without requiring external conditioning

- NSynth: a large dataset of musical notes, inspired by the emergence of large image datasets

Models

WaveNet Autoencoder

While the proposed autoencoder structure is very similar to that of WaveNet, the authors argue that the algorithm is novel in two ways:

- It attains consistent long-term structure without any external conditioning

- It creates meaningful embeddings that can be interpolated between

The authors accomplish this by passing the raw audio through the encoder to produce an embedding [math]\displaystyle{ Z = f(x) }[/math]. The input is then shifted and fed into the decoder, which reproduces the input. The resulting probability distribution is:

\begin{align} p(x) = \prod_{i=1}^N p(x_i \mid x_1, \ldots, x_{i-1}, f(x)) \end{align}

A detailed block diagram of the modified WaveNet structure can be seen in figure 1b. The encoder is a 30-layer network in which each node is a ReLU nonlinearity followed by a non-causal (NC) dilated convolution. The resulting 128-channel output is fed into another ReLU nonlinearity and then into another 1x1 convolution, before being downsampled with average pooling to produce a 16-dimensional [math]\displaystyle{ Z }[/math] embedding. Each [math]\displaystyle{ Z }[/math] encoding covers a specific temporal resolution, which the authors tuned to 32 ms. This means that there are 125 16-dimensional [math]\displaystyle{ Z }[/math] encodings for each 4-second note in the NSynth dataset (a hidden vector of size 1984).

Before the [math]\displaystyle{ Z }[/math] embedding enters the decoder, it is first upsampled to the original audio rate using nearest-neighbor interpolation to 256 values. The embedding then passes through the decoder to recreate the original audio note.
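The frame count and the nearest-neighbor upsampling step can be illustrated with a small sketch. This is not the authors' code: it assumes the 16,000 Hz sample rate and 4-second note length quoted for NSynth, and the helper names `frames_per_note` and `upsample_nearest` are hypothetical. The toy embedding holds placeholder values rather than real encoder output.

```python
SAMPLE_RATE_HZ = 16_000   # NSynth sample rate quoted in this summary
NOTE_SECONDS = 4          # every NSynth note is 4 seconds long
HOP_MS = 32               # temporal resolution of one Z frame
Z_DIM = 16                # dimensions per Z frame


def frames_per_note(sample_rate_hz=SAMPLE_RATE_HZ, seconds=NOTE_SECONDS, hop_ms=HOP_MS):
    """Return (samples per frame, frames per note) for the given resolution."""
    hop_samples = sample_rate_hz * hop_ms // 1000
    total_samples = sample_rate_hz * seconds
    return hop_samples, total_samples // hop_samples


def upsample_nearest(frames, hop_samples):
    """Repeat each embedding frame hop_samples times (nearest-neighbor upsampling)."""
    return [frame for frame in frames for _ in range(hop_samples)]


hop, n_frames = frames_per_note()
# Toy embedding (assumption): frame t is a constant vector holding the value t.
z = [[float(t)] * Z_DIM for t in range(n_frames)]
z_up = upsample_nearest(z, hop)
print(hop, n_frames, len(z_up))  # 512 125 64000
```

With these assumptions, one 32 ms frame spans 512 samples, so each of the 125 frames is simply held constant for 512 consecutive audio samples before conditioning the decoder.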

Baseline: Spectral Autoencoder

Unable to find an alternative fully deep model to compare against their proposed WaveNet autoencoder, the authors built a strong baseline of their own: a spectral autoencoder. The block diagram of its architecture can be seen in figure 1a. The baseline network is 10 layers deep. Each layer has 4x4 kernels with 2x2 strides, followed by a leaky ReLU (0.1) and batch normalization. The final hidden vector (Z) was set to 1984 dimensions to exactly match the hidden vector of the WaveNet autoencoder.

The authors attempted to train the baseline on multiple input representations: raw waveforms, FFT, and log magnitude of the spectrum, finding the last to be best correlated with perceptual distortion. They also explored several representations of phase, finding that estimating magnitude and using established iterative techniques to reconstruct phase was most effective. A final heuristic used to increase the accuracy of the baseline was weighting the mean squared error (MSE) loss, starting at 10 for 0 Hz and decreasing linearly to 1 at 4000 Hz and above. The authors justify this by noting that the fundamental frequencies of most instruments are found at lower frequencies.
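The frequency weighting described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names `frequency_weight` and `weighted_mse`, the per-bin averaging, and the three-bin example spectrum are all assumptions made for the sketch. Only the endpoints (10 at 0 Hz, 1 at 4000 Hz and above) come from the text.

```python
def frequency_weight(freq_hz, w_low=10.0, w_high=1.0, cutoff_hz=4000.0):
    """Weight falling linearly from w_low at 0 Hz to w_high at cutoff_hz, then flat."""
    if freq_hz >= cutoff_hz:
        return w_high
    return w_low + (w_high - w_low) * (freq_hz / cutoff_hz)


def weighted_mse(predicted, target, bin_freqs_hz):
    """Mean of per-bin squared errors, each scaled by its frequency weight."""
    total = 0.0
    for p, t, f in zip(predicted, target, bin_freqs_hz):
        total += frequency_weight(f) * (p - t) ** 2
    return total / len(target)


# Hypothetical three-bin spectrum with an identical error of 1.0 in every bin.
freqs = [0.0, 2000.0, 8000.0]
loss = weighted_mse([1.0, 1.0, 1.0], [0.0, 0.0, 0.0], freqs)
print([frequency_weight(f) for f in freqs], loss)  # [10.0, 5.5, 1.0] 5.5
```

In this toy case the same error costs ten times more at 0 Hz than at 8000 Hz, which is the intended effect of emphasizing the low-frequency range.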

Training

Both the modified WaveNet and the baseline autoencoder were trained with stochastic gradient descent using the Adam optimizer. The baseline autoencoder was trained asynchronously for 1,800,000 iterations with a batch size of 8 and a learning rate of 1e-4, whereas the WaveNet models were trained synchronously for 250,000 iterations with a batch size of 32 and a learning rate decaying from 2e-4 to 6e-6.

The NSynth Dataset

The NSynth dataset has 306,043 unique musical notes, all 4 seconds in length and sampled at 16,000 Hz. The dataset consists of 1,006 different instruments, each playing an average of 65.4 different pitches at an average of 4.75 different velocities. Averages are reported because not every instrument can reach all 88 MIDI pitches or all 5 velocities desired by the authors. The dataset has the following split: a training set with 289,205 notes, a validation set with 12,678 notes, and a test set with 4,096 notes.

Along with each note the authors also included the following annotations:

- Source - The way each sound was produced. There are 3 classes: ‘acoustic’, ‘electronic’ and ‘synthetic’

- Family - The instrument family that produced each note. There are 11 classes, including ‘bass’, ‘brass’ and ‘vocal’

- Qualities - Sonic qualities of each note

The full dataset is publicly available here: https://magenta.tensorflow.org/datasets/nsynth.

Evaluation

To fully analyze all aspects of WaveNet the authors proposed three evaluations:

- Reconstruction - Both quantitative and qualitative analyses were considered

- Interpolation in Timbre and Dynamics

- Entanglement of Pitch and Timbre

Sound has historically been very difficult to evaluate from a pictorial representation, as doing so requires training and expertise. Even with expertise it can be difficult to complete a full analysis, as two very different sounds can look quite similar in their respective pictorial representations. This is why the authors recommend that all readers listen to the created notes, which can be found here: https://magenta.tensorflow.org/nsynth.

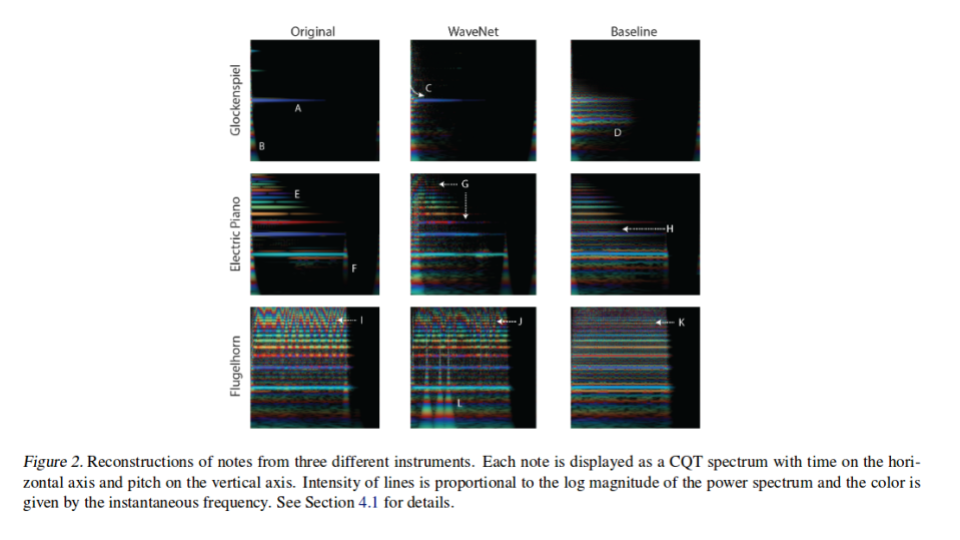

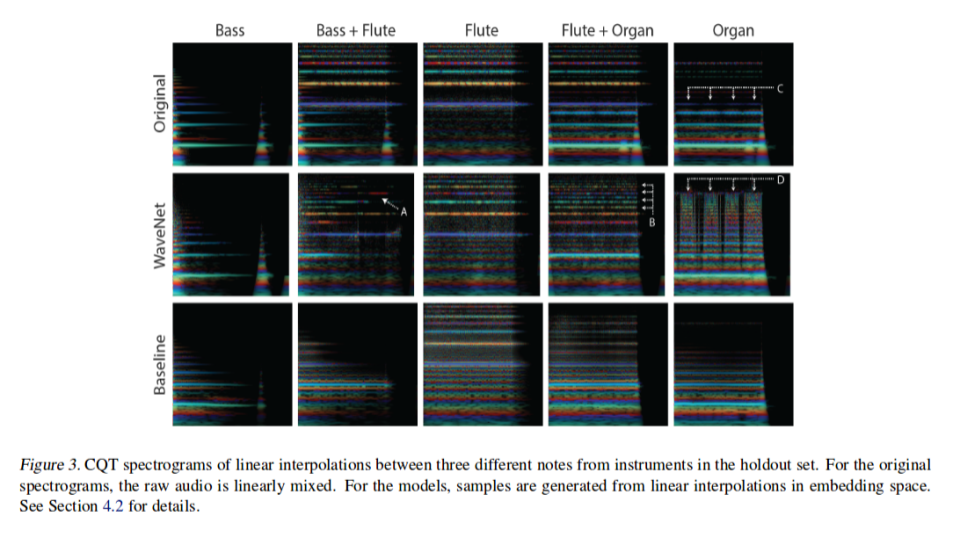

However, because it is hard to publish a paper with sound included, the authors still demonstrate pictorially how the two proposed algorithms differ from each other and from the original note. To do so, they visualize each note using the constant-Q transform (CQT), which captures the dynamics of timbre while also representing the frequencies of the sound.

Reconstruction

Qualitative Comparison

For the glockenspiel, the WaveNet autoencoder is able to reproduce the magnitude and phase of the fundamental frequency (A and C in figure 2) as well as the attack (B in figure 2) of the instrument, whereas the baseline autoencoder introduces nonexistent harmonics (D in figure 2). The flugelhorn, on the other hand, presents the starkest difference between the WaveNet and baseline autoencoders. The WaveNet, while not perfect, is able to reproduce the vibrato (I and J in figure 2) across multiple frequencies, which results in a natural-sounding note. The baseline not only fails to do this but also adds extra noise (K in figure 2). The authors do add that the WaveNet produces some strikes (L in figure 2); however, they argue that these are inaudible.

Quantitative Comparison

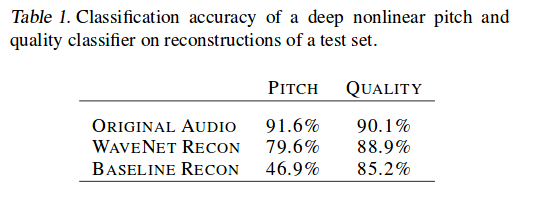

For a quantitative comparison, the authors trained a separate multi-task classifier to predict the pitch or qualities of a given note. The reconstructions from both the baseline and the WaveNet were then fed to this classifier. As seen in table 1, WaveNet significantly outperformed the baseline on both metrics, posting a ~70% increase when only considering pitch.

Interpolation in Timbre and Dynamics

For this evaluation the authors reconstructed audio from linear interpolations in Z space between different instruments and compared these to a superposition of the original two instruments. Not surprisingly, the models fuse aspects of both instruments during the recreation. The authors claim, however, that WaveNet produces much more realistic-sounding results. To support their claim, the authors point to WaveNet's ability to create dynamic mixing of overtones in time, even jumping to higher harmonics (A in figure 3), capturing the timbre and dynamics of both the bass and the flute. This can be seen once again in B in figure 3, where WaveNet adds additional harmonics as well as sub-harmonics to the original flute note.
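Linear interpolation in Z space amounts to a frame-by-frame weighted average of two embeddings. The sketch below is only illustrative: the function name `interpolate_embeddings` and the tiny 2-frame, 3-dimensional embeddings are hypothetical stand-ins, whereas the embeddings described in this summary have 125 frames of 16 dimensions. Decoding the blended embedding back to audio is not shown.

```python
def interpolate_embeddings(z_a, z_b, alpha):
    """Linearly blend two embeddings frame by frame: (1 - alpha) * z_a + alpha * z_b."""
    if len(z_a) != len(z_b):
        raise ValueError("embeddings must have the same number of frames")
    return [
        [(1.0 - alpha) * a + alpha * b for a, b in zip(frame_a, frame_b)]
        for frame_a, frame_b in zip(z_a, z_b)
    ]


# Hypothetical 2-frame, 3-dimensional embeddings standing in for two instruments.
z_bass = [[0.0, 2.0, 4.0], [1.0, 1.0, 1.0]]
z_flute = [[2.0, 0.0, 0.0], [3.0, 1.0, -1.0]]
z_mix = interpolate_embeddings(z_bass, z_flute, alpha=0.5)
print(z_mix)  # [[1.0, 1.0, 2.0], [2.0, 1.0, 0.0]]
```

Setting `alpha` to 0 or 1 recovers one of the two original embeddings, and intermediate values give the blends that would then be passed to the decoder.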

Entanglement of Pitch and Timbre

To study the entanglement between pitch and Z space, the authors constructed a classifier that relies heavily on pitch information, so its accuracy was expected to drop if the representations of pitch and timbre are disentangled. This is demonstrated by the first two rows of table 2, where WaveNet relies more strongly on pitch than the baseline algorithm. The authors provide a more qualitative demonstration in figure 4. They show a situation in which a classifier may be confused: a note with a pitch shift of +12 is almost exactly the same as the original, apart from the emergence of sub-harmonics.

Future Directions

One significant area in which the authors claim great improvement is needed is the large memory requirement of their algorithm. Because of it, the current WaveNet must rely on downsampling and is thus unable to fully capture the global context.

Open Source Code base

Google has released all code related to this paper at the following open source repository: https://github.com/tensorflow/magenta/tree/master/magenta/models/nsynth

References

- Engel, J., Resnick, C., Roberts, A., Dieleman, S., Norouzi, M., Eck, D. & Simonyan, K. (2017). Neural Audio Synthesis of Musical Notes with WaveNet Autoencoders. Proceedings of the 34th International Conference on Machine Learning, in PMLR 70:1068-1077

- NSynth: Neural Audio Synthesis. (2017, April 06). Retrieved March 19, 2018, from https://magenta.tensorflow.org/nsynth

- The NSynth Dataset. (2017, April 05). Retrieved March 19, 2018, from https://magenta.tensorflow.org/datasets/nsynth