Modular Multitask Reinforcement Learning with Policy Sketches

Introduction & Background

This paper describes a framework for learning composable deep subpolicies in a multitask setting. These policies are guided only by abstract sketches that represent the high-level behavior in the environment. General reinforcement learning algorithms allow agents to solve tasks in complex environments, but vanilla policies struggle with tasks featuring extremely delayed rewards. Most existing approaches require in-depth supervision in the form of explicitly specified high-level actions, subgoals, or behavioral primitives. The proposed methodology is particularly suitable where rewards are difficult to engineer by hand: it is enough to tell the learner about the abstract policy structure, without indicating how high-level behaviors should use primitive percepts or actions.

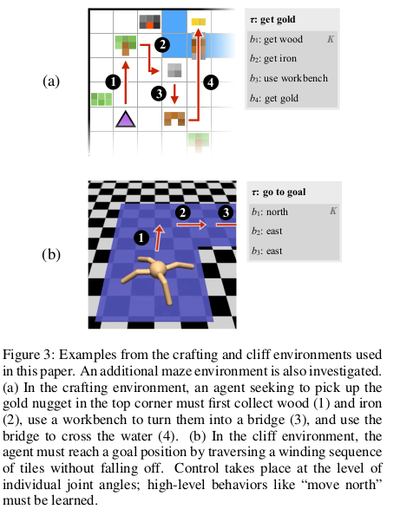

This paper explores a multitask reinforcement learning setting where the learner is presented with policy sketches. Policy sketches are defined as short, ungrounded, symbolic representations of a task that describe its components, as shown in Figure 1. Symbols may be shared across different tasks (for example, the predicate "get wood" appears in the sketches for both "make planks" and "make sticks"), but the learner is not shown or told anything about what these symbols mean, either in terms of observations or intermediate rewards.

The agent learns from policy sketches by associating each high-level action with a parameterization of a low-level subpolicy. It jointly optimizes over concatenated task-specific policies by tying parameters across common subpolicies. The authors find that this architecture uses the high-level guidance provided by sketches to drastically accelerate learning of complex multi-stage behaviors. The experiments show that most benefits of learning from very detailed low-level supervision (e.g. from subgoal rewards) can also be obtained from fairly coarse high-level policy sketches. Most importantly, sketches are much easier to construct. They require no additions or modifications to the environment dynamics or reward function, and can be easily provided by non-experts (e.g. third-party Mechanical Turk workers). This makes it possible to extend the benefits of hierarchical RL to challenging environments where it may not be possible to specify by hand the details of relevant subtasks. The paper shows that this approach drastically outperforms purely unsupervised methods that do not provide the learner with any task-specific guidance. It also shows that using sketches to parameterize modular subpolicies makes better use of them than conditioning on them directly.

The modular structure of this approach, which associates every high-level action symbol with a discrete subpolicy, naturally leads to a library of interpretable policy fragments which can be easily recombined. The authors evaluate the approach in a variety of different data conditions:

- Learning the full collection of tasks jointly via reinforcement learning

- In a zero-shot setting where a policy sketch is available for a held-out task

- In an adaptation setting, where sketches are hidden and the agent must learn to adapt a pretrained policy to reuse high-level actions in a new task.

The code has been released at http://github.com/jacobandreas/psketch.

Related Work

The approach in this paper is a specific case of the options framework developed by Sutton et al., 1999 [2]. In that work, options are introduced as "closed-loop policies for taking action over a period of time". Sutton et al. show that options enable temporally abstract information to be included in reinforcement learning algorithms, though the work was published before the large-scale popularity of neural networks for reinforcement learning.

Learning Modular Policies from Sketches

The paper considers a multitask reinforcement learning problem arising from a family of infinite-horizon discounted Markov decision processes in a shared environment. This environment is specified by a tuple $(S, A, P, \gamma )$, with

- $S$ a set of states

- $A$ a set of low-level actions

- $P : S \times A \times S \to R$ a transition probability distribution

- $\gamma$ a discount factor

Each task $t \in T$ is then specified by a pair $(R_t, \rho_t)$, with $R_t : S \to R$ a task-specific reward function and $\rho_t: S \to R$, an initial distribution over states. For a fixed sequence ${(s_i, a_i)}$ of states and actions obtained from a rollout of a given policy, we will denote the empirical return starting in state $s_i$ as $q_i = \sum_{j=i+1}^\infty \gamma^{j-i-1}R(s_j)$. In addition to the components of a standard multitask RL problem, we assume that tasks are annotated with sketches $K_t$ , each consisting of a sequence $(b_{t1},b_{t2},...)$ of high-level symbolic labels drawn from a fixed vocabulary $B$.
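As a small illustration of the empirical return $q_i$ defined above, the following sketch computes it for a finite rollout. The function name `empirical_returns` and the truncation of the infinite sum at the end of the rollout are assumptions made for this example, not details taken from the paper.

```python
def empirical_returns(rewards, gamma):
    """rewards[j] holds R(s_j) for a finite rollout s_0 .. s_{n-1}.

    Returns q with q[i] = sum over j > i of gamma**(j - i - 1) * rewards[j],
    i.e. the infinite sum truncated at the end of the rollout (an assumption).
    """
    n = len(rewards)
    q = [0.0] * n
    # Work backwards using q[i] = R(s_{i+1}) + gamma * q[i+1].
    for i in range(n - 2, -1, -1):
        q[i] = rewards[i + 1] + gamma * q[i + 1]
    return q


# A sparse-reward rollout: reward arrives only in the final state.
q = empirical_returns([0.0, 0.0, 0.0, 1.0], gamma=0.9)
```

With a reward only at the end, the returns shrink geometrically with distance from the goal, which is why long sketches yield a weak learning signal early in training.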

Model

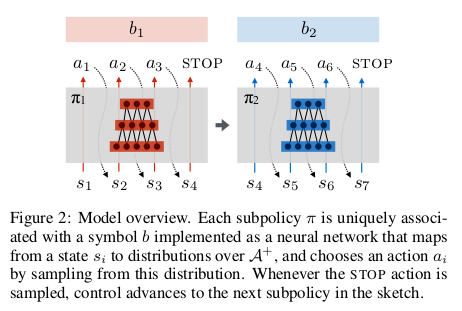

The authors exploit the structural information provided by sketches by constructing for each symbol b a corresponding subpolicy $\pi_b$. By sharing each subpolicy across all tasks annotated with the corresponding symbol, their approach naturally learns the tied/shared abstraction for the corresponding subtask.

At every timestep, a subpolicy selects either a low-level action $a \in A$ or a special STOP action. The augmented action space is denoted as $A^+ := A \cup \{STOP\}$. At a high level, this framework is agnostic to the implementation of subpolicies: any function that maps a representation of the current state onto a distribution over $A^+$ will work with the approach.

In this paper, $\pi_b$ is represented as a neural network. These subpolicies may be viewed as options of the kind described by [2], with the key distinction that they have no initiation semantics, but are instead invokable everywhere, and have no explicit representation as a function from an initial state to a distribution over final states (instead this paper uses the STOP action to terminate).

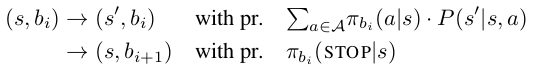

Given a fixed sketch $(b_1, b_2, \ldots)$, a task-specific policy $\Pi_r$ is formed by concatenating its associated subpolicies in sequence. In particular, the high-level policy maintains a subpolicy index $i$ (initially 0), and executes actions from $\pi_{b_i}$ until the STOP symbol is emitted, at which point control is passed to $\pi_{b_{i+1}}$. We may thus think of $\Pi_r$ as inducing a Markov chain over the state space $S \times B$, with transitions:

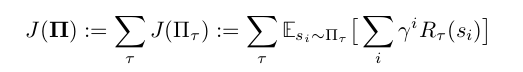
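The control flow of a concatenated policy can be sketched as follows. This is a minimal toy, not the authors' implementation: the names `run_sketch` and `env_step`, the 1-D environment, and the hand-written subpolicies are all hypothetical, and the sketch assumes that emitting STOP advances the subpolicy index while leaving the environment state unchanged.

```python
STOP = "STOP"


def run_sketch(sketch, subpolicies, env_step, state, max_steps=100):
    """Execute the subpolicies named in `sketch` in order.

    Each subpolicy maps a state to a low-level action or STOP;
    STOP hands control to the next symbol in the sketch.
    """
    i = 0  # index of the active subpolicy within the sketch
    trace = []
    for _ in range(max_steps):
        if i >= len(sketch):
            break  # every subpolicy has terminated
        symbol = sketch[i]
        action = subpolicies[symbol](state)
        if action == STOP:
            i += 1  # only the subpolicy index changes
            continue
        trace.append((state, action, symbol))
        state = env_step(state, action)
    return state, trace


# Toy 1-D world: the state is an integer position, actions are +1 or -1.
subpolicies = {
    "go to 3": lambda s: STOP if s == 3 else 1,
    "go to 0": lambda s: STOP if s == 0 else -1,
}
final_state, trace = run_sketch(
    ["go to 3", "go to 0"], subpolicies, lambda s, a: s + a, state=0
)
```

Because each recorded sample carries the symbol that produced it, the trace can later be split by subpolicy when gradients are computed.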

Note that $\Pi_r$ is semi-Markov with respect to the projection of the augmented state space $S \times B$ onto the underlying state space $S$. The complete family of task-specific policies is denoted as $\Pi := \bigcup_r \{ \Pi_r \}$. Assume each $\pi_b$ is an arbitrary function of the current environment state parameterized by some weight vector $\theta_b$. The learning problem is to optimize over all $\theta_b$ to maximize expected discounted reward

across all tasks $t \in T$.
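Written out in the notation of this summary, the objective described in the sentence above takes the following form. This is a reconstruction from the surrounding definitions, with $\Pi_t$ denoting the task-specific policy for task $t$ (written $\Pi_r$ elsewhere in this summary) and $R_t$ its reward function:

$$J(\Pi) := \sum_{t \in T} J(\Pi_t) := \sum_{t \in T} E_{s_i \sim \Pi_t}\Big[\sum_i \gamma^i R_t(s_i)\Big]$$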

Policy Optimization

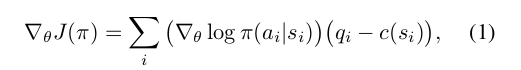

Here that optimization is accomplished through a simple decoupled actor–critic method. In a standard policy gradient approach, with a single policy $\pi$ with parameters $\theta$, the gradient steps are of the form:

where the baseline or “critic” c can be chosen independently of the future without introducing bias into the gradient. Recalling the previous definition of $q_i$ as the empirical return starting from $s_i$, this form of the gradient corresponds to a generalized advantage estimator with $\lambda = 1$. Here c achieves close to the optimal variance[6] when it is set exactly equal to the state-value function $V_{\pi} (s_i) = E_{\pi} q_i$ for the target policy $\pi$ starting in state $s_i$.
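For reference, the standard policy gradient step described in this passage can be written as below. This is a reconstruction consistent with the definitions of $q_i$ and the baseline $c$ given here, not a copy of the paper's typesetting:

$$\nabla_\theta J(\pi) = \sum_i \big(\nabla_\theta \log \pi(a_i \mid s_i)\big)\big(q_i - c(s_i)\big)$$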

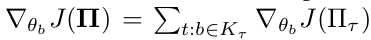

To generalize to modular policies built by sequencing subpolicies, the authors propose one subpolicy per symbol but one critic per task. This is because a subpolicy $\pi_b$ might participate in many compound policies $\Pi_r$, each associated with its own reward function $R_r$. Thus individual subpolicies are not uniquely associated with value functions. The actor–critic method is extended to decouple policies from value functions by allowing the critic to vary per sample (per task and timestep) based on the reward function with which that particular sample is associated. Noting that

i.e. the sum of gradients of expected rewards across all tasks in which $\pi_b$ participates, we have:

where each state-action pair $(s_{t_i}, a_{t_i})$ was selected by the subpolicy $\pi_b$ in the context of the task t.
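The two expressions referred to in this passage can be reconstructed as follows, assuming the same notation as the single-policy gradient and writing $K_t$ for the sketch of task $t$. First, the gradient for a subpolicy is a sum over the tasks whose sketches contain its symbol:

$$\nabla_{\theta_b} J(\Pi) = \sum_{t \,:\, b \in K_t} \nabla_{\theta_b} J(\Pi_t)$$

Substituting the policy gradient form for each task, with a task-specific baseline $c_t$, gives:

$$\nabla_{\theta_b} J(\Pi) = \sum_t \sum_i \big(\nabla_{\theta_b} \log \pi_b(a_{t_i} \mid s_{t_i})\big)\big(q_i - c_t(s_{t_i})\big)$$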

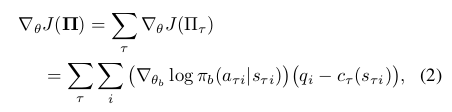

Minimizing the gradient variance requires that each $c_t$ actually depend on the task identity. (This follows immediately by applying the corresponding argument in [6] individually to each term in the sum over t in Equation 2.) Because the value function is itself unknown, an approximation must be estimated from data. Each $c_t$ may be implemented with an arbitrary function approximator with parameters $\eta_t$. This is trained to minimize a squared error criterion, with gradients given by
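Written out under the definitions used in this summary (a reconstruction, with $q_i$ the empirical return and $c_t$ the critic for task $t$), the squared-error criterion and its gradient are:

$$\nabla_{\eta_t}\Big[-\frac{1}{2}\sum_i \big(q_i - c_t(s_i)\big)^2\Big] = \sum_i \big(\nabla_{\eta_t} c_t(s_i)\big)\big(q_i - c_t(s_i)\big)$$

The sign convention here treats the update as gradient ascent on the negated squared error, so that the same advantage term $q_i - c_t(s_i)$ appears in both the policy and critic updates.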

Alternative forms of the advantage estimator (e.g. the TD residual $R_t(s_i) + \gamma V_t(s_{i+1}) - V_t(s_i)$ or any other member of the generalized advantage estimator family) can be substituted by simply maintaining one such estimator per task. Experiments show that conditioning on both the state and the task identity results in dramatic performance improvements, suggesting that the variance reduction given by this objective is important for efficient joint learning of modular policies.

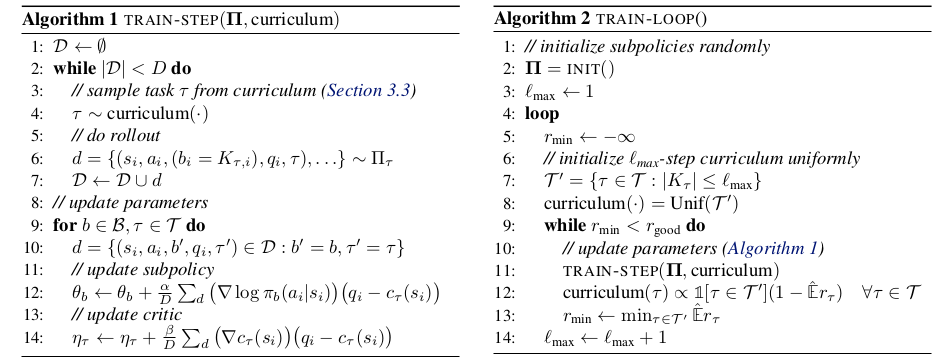

The complete algorithm for computing a single gradient step is given in Algorithm 1. (The outer training loop over these steps, which is driven by a curriculum learning procedure, is shown in Algorithm 2.) Note that this is an on-policy algorithm. In every step, the agent samples tasks from a task distribution provided by a curriculum (described in the following subsection). The current family of policies $\Pi$ is used to perform rollouts for every sampled task, accumulating the resulting tuples of (states, low-level actions, high-level symbols, rewards, and task identities) into a dataset $\mathcal{D}$. Once $\mathcal{D}$ reaches a maximum size $D$, it is used to compute gradients with respect to both policy and critic parameters, and the parameter vectors are updated accordingly. The step sizes $\alpha$ and $\beta$ in Algorithm 1 can be chosen adaptively using any first-order method.
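The decoupling of subpolicies (indexed by symbol) from critics (indexed by task) can be illustrated with a minimal sketch of one gradient step. It is not the released implementation: it assumes linear-softmax subpolicies and linear critics for brevity, fixed step sizes, and a pre-collected batch in which each sample already carries its empirical return; the names `train_step`, `theta`, and `eta` are chosen for this example.

```python
import numpy as np


def softmax(z):
    z = z - z.max()  # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()


def train_step(dataset, theta, eta, alpha=0.1, beta=0.1):
    """One gradient step over a batch of rollout samples.

    dataset: list of (state, action, symbol, q, task), state a 1-D feature vector
    theta:   dict symbol -> (n_actions, n_features) weights of a subpolicy
    eta:     dict task   -> (n_features,) weights of a linear critic
    """
    g_theta = {b: np.zeros_like(w) for b, w in theta.items()}
    g_eta = {t: np.zeros_like(w) for t, w in eta.items()}
    for s, a, b, q, t in dataset:
        advantage = q - eta[t] @ s          # q_i - c_t(s_i): critic picked by task
        probs = softmax(theta[b] @ s)
        grad_log = -np.outer(probs, s)      # gradient of log pi_b(a | s) w.r.t. theta_b
        grad_log[a] += s
        g_theta[b] += grad_log * advantage  # subpolicy picked by symbol
        g_eta[t] += s * advantage           # gradient of -1/2 (q - c_t(s))^2
    for b in theta:
        theta[b] += alpha * g_theta[b]
    for t in eta:
        eta[t] += beta * g_eta[t]
    return theta, eta


# One sample: action 2 taken by "get wood" within task "make planks", return 1.0.
state = np.array([1.0, 0.0])
theta = {"get wood": np.zeros((3, 2))}
eta = {"make planks": np.zeros(2)}
before = softmax(theta["get wood"] @ state)[2]
train_step([(state, 2, "get wood", 1.0, "make planks")], theta, eta)
after = softmax(theta["get wood"] @ state)[2]
```

A positive advantage raises the probability of the action that was taken, and the critic for that task moves toward the observed return.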

Curriculum Learning

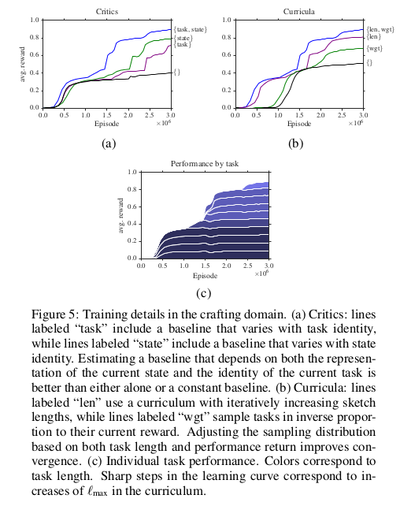

For complex tasks, like the one depicted in Figure 3b, it is difficult for the agent to discover any states with positive reward until many subpolicy behaviors have already been learned. It is thus a better use of the learner’s time (and computational resources) to focus on “easy” tasks, where many rollouts will result in high reward from which relevant subpolicy behavior can be obtained. But there is a fundamental tradeoff involved here: if the learner spends a lot of its time on easy tasks before being told of the existence of harder ones, it may overfit and learn subpolicies that no longer generalize or exhibit the desired structural properties.

To resolve these issues, a curriculum learning scheme is used that allows the model to smoothly scale up from easy tasks to more difficult ones without overfitting. Initially the model is presented with tasks associated with short sketches. Once average reward on all these tasks reaches a certain threshold, the length limit is incremented. It is assumed that rewards across tasks are normalized so that the maximum achievable reward satisfies $0 < q_i < 1$. Let $Er_t$ denote the empirical estimate of the expected reward for the current policy on task $t$. Then at each timestep, tasks are sampled in proportion to $1-Er_t$, which by assumption must be positive.
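The sampling rule can be made concrete with a short sketch. The function name `curriculum_distribution`, the task names, and the reward estimates below are illustrative assumptions; only the rule itself (weight $1 - Er_t$, restricted to tasks within the current length limit) comes from the description above.

```python
def curriculum_distribution(reward_estimates, sketch_lengths, l_max):
    """Sampling probabilities proportional to 1 - Er_t.

    Only tasks whose sketch length is at most l_max are eligible.
    Estimates are assumed to lie strictly below 1, so weights are positive.
    """
    weights = {
        t: 1.0 - reward_estimates[t]
        for t in reward_estimates
        if sketch_lengths[t] <= l_max
    }
    total = sum(weights.values())
    return {t: w / total for t, w in weights.items()}


# Illustrative numbers only: a nearly solved short task and a harder one.
probs = curriculum_distribution(
    reward_estimates={"get wood": 0.9, "make planks": 0.5, "make bridge": 0.0},
    sketch_lengths={"get wood": 1, "make planks": 2, "make bridge": 4},
    l_max=2,
)
```

Here the nearly solved task receives about one sixth of the samples, the harder eligible task about five sixths, and the length-4 task is excluded until the length limit grows.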

Intuitively, the tasks that provide the strongest learning signal are those in which

- The agent does not on average achieve reward close to the upper bound

- Many episodes result in high reward.

The expected reward component of the curriculum addresses the first condition by making sure that time is not spent on nearly solved tasks, while the length bound component addresses the second condition by ensuring that tasks are not attempted until high-reward episodes are likely to be encountered. The experiments show that both components of this curriculum learning scheme improve the rate at which the model converges to a good policy.

The complete curriculum-based training algorithm is written as Algorithm 2 above. Initially, the maximum sketch length $l_{max}$ is set to 1, and the curriculum initialized to sample length-1 tasks uniformly. For each setting of $l_{max}$, the algorithm uses the current collection of task policies to compute and apply the gradient step described in Algorithm 1. The rollouts obtained from the call to TRAIN-STEP can also be used to compute reward estimates $Er_t$ ; these estimates determine a new task distribution for the curriculum. The inner loop is repeated until the reward threshold $r_{good}$ is exceeded, at which point $l_{max}$ is incremented and the process repeated over a (now-expanded) collection of tasks.
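The outer loop described above can be sketched as follows. This is an illustrative outline rather than the paper's Algorithm 2 verbatim: `train_step` is a hypothetical callable that performs one gradient step under the given task distribution and returns reward estimates, the threshold test is taken over the minimum estimate among eligible tasks (an interpretation), and `fake_train_step` is a stand-in learner used only to make the example run.

```python
def train_with_curriculum(sketches, train_step, r_good=0.8, max_iters=1000):
    """sketches: dict task -> list of symbols.

    train_step: callable(task_probs) -> dict task -> estimated reward in (0, 1).
    Returns the list of (l_max, eligible tasks) stages that were completed.
    """
    longest = max(len(k) for k in sketches.values())
    stages = []
    l_max = 1
    while l_max <= longest:
        tasks = [t for t, k in sketches.items() if len(k) <= l_max]
        if tasks:
            # Start each stage by sampling eligible tasks uniformly.
            probs = {t: 1.0 / len(tasks) for t in tasks}
            for _ in range(max_iters):
                est = train_step(probs)
                total = sum(1.0 - est[t] for t in tasks)
                probs = {t: (1.0 - est[t]) / total for t in tasks}
                if min(est[t] for t in tasks) > r_good:
                    break  # every eligible task exceeds the threshold
            stages.append((l_max, tasks))
        l_max += 1
    return stages


# Stand-in learner: estimates rise faster for tasks that are sampled more often.
sketches = {"get wood": ["get wood"], "make planks": ["get wood", "use toolshed"]}
estimates = {t: 0.0 for t in sketches}


def fake_train_step(probs):
    for t, p in probs.items():
        estimates[t] = min(0.95, estimates[t] + 0.5 * p)
    return dict(estimates)


stages = train_with_curriculum(sketches, fake_train_step)
```

In this toy run the length-1 task is mastered first, after which the length-2 task becomes eligible and quickly absorbs most of the sampling probability.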

Experiments

This paper considers three families of tasks: a 2-D Minecraft-inspired crafting game (Figure 3a), in which the agent must acquire particular resources by finding raw ingredients, combining them together in the correct order, and in some cases building intermediate tools that enable the agent to alter the environment itself; a 2-D maze navigation task that requires the agent to collect keys and open doors, and a 3-D locomotion task (Figure 3b) in which a quadrupedal robot must actuate its joints to traverse a narrow winding cliff.

In all tasks, the agent receives a reward only after the final goal is accomplished. For the most challenging tasks, involving sequences of four or five high-level actions, a task-specific agent initially following a random policy essentially never discovers the reward signal, so these tasks cannot be solved without considering their hierarchical structure. These environments involve various kinds of challenging low-level control: agents must learn to avoid obstacles, interact with various kinds of objects, and relate fine-grained joint activation to high-level locomotion goals.

Implementation

In all of the experiments, each subpolicy is implemented as a neural network with ReLU nonlinearities and a hidden layer with 128 hidden units. Each critic is a linear function of the current state. Each subpolicy network receives as input a set of features describing the current state of the environment, and outputs a distribution over actions. The agent acts at every timestep by sampling from this distribution. The gradient steps given in lines 8 and 9 of Algorithm 1 are implemented using RMSProp with a step size of 0.001 and gradient clipping to a unit norm. The authors take the batch size $D$ in Algorithm 1 to be 2000, and set $\gamma = 0.9$ in both environments. For curriculum learning, the improvement threshold $r_{good}$ is 0.8.
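A forward pass for a subpolicy of this shape might look like the sketch below. The hidden width of 128, the ReLU nonlinearity, and clipping to unit norm come from the description above; the weight initialization, the placement of STOP as an extra output, and all function names are assumptions made for illustration.

```python
import numpy as np


def init_subpolicy(n_features, n_actions, hidden=128, seed=0):
    """Randomly initialized one-hidden-layer network (init scale is an assumption)."""
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.normal(0.0, 0.1, (hidden, n_features)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(0.0, 0.1, (n_actions + 1, hidden)),  # +1 output for STOP
        "b2": np.zeros(n_actions + 1),
    }


def action_distribution(params, features):
    """Distribution over the low-level actions plus STOP for one state."""
    h = np.maximum(0.0, params["W1"] @ features + params["b1"])  # ReLU hidden layer
    logits = params["W2"] @ h + params["b2"]
    logits = logits - logits.max()  # shift for numerical stability
    p = np.exp(logits)
    return p / p.sum()


def clip_to_unit_norm(grad):
    """Rescale a gradient so its L2 norm is at most 1."""
    norm = np.linalg.norm(grad)
    return grad if norm <= 1.0 else grad / norm


params = init_subpolicy(n_features=10, n_actions=5)
probs = action_distribution(params, np.ones(10))
action = np.random.default_rng(1).choice(len(probs), p=probs)  # sample an action
clipped = clip_to_unit_norm(np.array([3.0, 4.0]))
```

The last index of `probs` plays the role of STOP in this sketch, so sampling it would hand control to the next subpolicy in the sketch.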

Environments

The environment in Figure 3a is inspired by the popular game Minecraft, but is implemented in a discrete 2-D world. The agent interacts with objects in the environment by executing a special USE action when it faces them. Picking up raw materials initially scattered randomly around the environment adds to an inventory. Interacting with different crafting stations causes objects in the agent’s inventory to be combined or transformed. Each task in this game corresponds to some crafted object the agent must produce; the most complicated goals require the agent to also craft intermediate ingredients, and in some cases build tools (like a pickaxe and a bridge) to reach ingredients located in initially inaccessible regions of the world.

The maze environment is very similar to the “light world” described by [4]. The agent is placed in a discrete world consisting of a series of rooms, some of which are connected by doors. Some doors are locked, and the agent must first pick up a key to open them. In the experiments, each task corresponds to a goal room that the agent must reach through a sequence of intermediate rooms. The agent senses the distance to keys, closed doors, and open doors in each direction. Sketches specify a particular sequence of directions for the agent to traverse between rooms to reach the goal. The sketch always corresponds to a viable traversal from the start to the goal position, but other (possibly shorter) traversals may also exist.

The cliff environment (Figure 3b) tests the approach in a high-dimensional continuous control environment where a quadrupedal robot [5] is placed on a variable-length winding path, and must navigate to the end without falling off. This is a challenging RL problem since the walker must learn the low-level walking skill before it can make any progress. The agent receives a small reward for making progress toward the goal, and a large positive reward for reaching the goal square, with a negative reward for falling off the path.

Multitask Learning

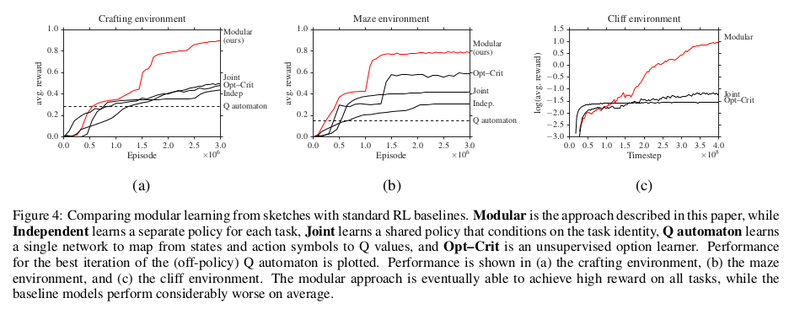

The primary experimental question in this paper is whether the extra structure provided by policy sketches alone is enough to enable fast learning of coupled policies across tasks. The aim is to explore the differences between the approach described and relevant prior work that performs either unsupervised or weakly supervised multitask learning of hierarchical policy structure. Specifically, the authors compare their modular approach to:

- Structured hierarchical reinforcement learners:

  - the fully unsupervised option–critic algorithm of Bacon & Precup [1]

  - a Q automaton that attempts to explicitly represent the Q function for each task / subtask combination (essentially a HAM [8] with a deep state abstraction function)

- Alternative ways of incorporating sketch data into standard policy gradient methods:

  - learning an independent policy for each task

  - learning a joint policy across all tasks, conditioning directly on both environment features and a representation of the complete sketch

The joint and independent models performed best when trained with the same curriculum described in Section 3.3, while the option–critic model performed best with a length–weighted curriculum that has access to all tasks from the beginning of training.

Learning curves for baselines and the modular model are shown in Figure 4. In all environments, the modular approach substantially outperforms the baselines: it induces policies with substantially higher average reward and converges more quickly than the policy gradient baselines. Figure 4c further shows that after policies have been learned on simple tasks, the model is able to rapidly adapt to more complex ones, even when the longer tasks involve high-level actions not required for any of the short tasks.

Ablations

In addition to the overall modular parameter-tying structure induced by sketches, the other critical components of the training procedure are the decoupled critic and the curriculum. The next experiments investigate the extent to which these are necessary for good performance.

To evaluate the critic, consider three ablations:

- Removing the dependence of the model on the environment state, in which case the baseline is a single scalar per task

- Removing the dependence of the model on the task, in which case the baseline is a conventional generalised advantage estimator

- Removing both, in which case the baseline is a single scalar, as in a vanilla policy gradient approach.

Results are shown in Figure 5a. Introducing both state and task dependence into the baseline leads to faster convergence of the model: the approach with a constant baseline achieves less than half the overall performance of the full critic after 3 million episodes. Introducing task or state dependence on its own improves this performance; combining them gives the best result.

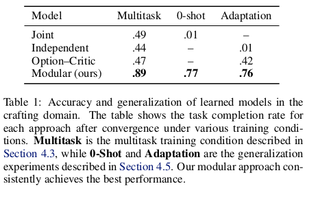

Zero-shot and Adaptation Learning

In the final experiments, the authors test the model’s ability to generalize beyond the standard training condition. They consider two tests of generalization: a zero-shot setting, in which the model is provided a sketch for the new task and must immediately achieve good performance, and an adaptation setting, in which no sketch is provided, leaving the model to learn the form of a suitable sketch via interaction in the new task.

They hold out two length-four tasks from the full inventory used in Section 4.3, and train on the remaining tasks. For zero-shot experiments, the concatenated policy described by the sketches of the held-out tasks is formed and repeatedly executed (without learning) to obtain an estimate of its effectiveness. For adaptation experiments, they consider ordinary RL over high-level actions $B$ rather than low-level actions $A$, implementing the high-level learner with the same agent architecture as described in Section 3.1.

Results are shown in Table 1. The held-out tasks are sufficiently challenging that the baselines are unable to obtain more than negligible reward: in particular, the joint model overfits to the training tasks and cannot generalize to new sketches, while the independent model cannot discover enough of a reward signal to learn in the adaptation setting. The modular model does comparatively well: individual subpolicies succeed in novel zero-shot configurations (suggesting that they have in fact discovered the behavior suggested by the semantics of the sketch) and provide a suitable basis for adaptive discovery of new high-level policies.

Conclusion & Critique

The paper's contributions are:

- A general paradigm for multitask, hierarchical, deep reinforcement learning guided by abstract sketches of task-specific policies.

- A concrete recipe for learning from these sketches, built on a general family of modular deep policy representations and a multitask actor–critic training objective.

The authors have described an approach for multitask learning of deep policies guided by symbolic policy sketches. By associating each symbol appearing in a sketch with a modular neural subpolicy, they have shown that it is possible to build agents that share behavior across tasks in order to achieve success in tasks with sparse and delayed rewards. This process induces an inventory of reusable and interpretable subpolicies which can be employed for zero-shot generalisation when further sketches are available, and hierarchical reinforcement learning when they are not.

References

[1] Bacon, Pierre-Luc and Precup, Doina. The option-critic architecture. In NIPS Deep Reinforcement Learning Workshop, 2015.

[2] Sutton, Richard S, Precup, Doina, and Singh, Satinder. Between MDPs and semi-MDPs: A framework for temporal abstraction in reinforcement learning. Artificial Intelligence, 112(1):181–211, 1999.

[3] Stolle, Martin and Precup, Doina. Learning options in reinforcement learning. In International Symposium on Abstraction, Reformulation, and Approximation, pp. 212–223. Springer, 2002.

[4] Konidaris, George and Barto, Andrew G. Building portable options: Skill transfer in reinforcement learning. In IJCAI, volume 7, pp. 895–900, 2007.

[5] Schulman, John, Moritz, Philipp, Levine, Sergey, Jordan, Michael, and Abbeel, Pieter. Trust region policy optimization. In International Conference on Machine Learning, 2015.

[6] Greensmith, Evan, Bartlett, Peter L, and Baxter, Jonathan. Variance reduction techniques for gradient estimates in reinforcement learning. Journal of Machine Learning Research, 5(Nov):1471–1530, 2004.

[7] Andre, David and Russell, Stuart. Programmable reinforcement learning agents. In Advances in Neural Information Processing Systems, 2001.

[8] Andre, David and Russell, Stuart. State abstraction for programmable reinforcement learning agents. In Proceedings of the Meeting of the Association for the Advancement of Artificial Intelligence, 2002.

[9] Author Jacob Andreas presenting the paper - https://www.youtube.com/watch?v=NRIcDEB64x8