LightRNN: Memory and Computation-Efficient Recurrent Neural Networks: Difference between revisions

No edit summary |

|||

| (31 intermediate revisions by 14 users not shown) | |||

| Line 1: | Line 1: | ||

= Introduction = | = Introduction = | ||

The study of natural language processing has been around for more than fifty years. It begins in the 1950s | The study of natural language processing has been around for more than fifty years. It begins in the 1950s when the specific field of natural language processing (NLP) is still embedded in the subject of linguistics (Hirschberg & Manning, 2015). After the emergence of strong computational power, computational linguistics began to evolve and gradually branch out to various applications in NLP, such as text classification, speech recognition and question answering (Brownlee, 2017). Computational linguistics or natural language processing is usually defined as “subfield of computer science concerned with using computational techniques to learn, understand, and produce human language content” (Hirschberg & Manning, 2015, p. 261). | ||

With the development of deep neural networks, one type of neural network, namely recurrent neural networks (RNN) have performed significantly well in many natural language processing tasks. The reason is that nature of RNN takes into account the past inputs as well as the current input without resulting in vanishing or exploding gradient. More detail of how RNN works in the context of NLP will be discussed in the section of NLP using RNN. However, one limitation of RNN used in NLP is its enormous size of input vocabulary. This will result in a very complex RNN model with too many parameters to train and makes the training process both time and memory-consuming. This serves as the major motivation for this paper’s authors to develop a new technique utilized in RNN, which is particularly efficient at processing large | With the development of deep neural networks, one type of neural network, namely recurrent neural networks (RNN) have performed significantly well in many natural language processing tasks. One of the first examples applies RNN to speech recognition tasks (Mikolov et. al. 2010). The reason is that nature of RNN takes into account the past inputs as well as the current input without resulting in vanishing or exploding gradient. More detail of how RNN works in the context of NLP will be discussed in the section of NLP using RNN. However, one limitation of RNN used in NLP is its enormous size of input vocabulary (i.e. the vocabulary is too large to compute). This will result in a very complex RNN model with too many parameters to train and makes the training process both time and memory-consuming. This serves as the major motivation for this paper’s authors to develop a new technique utilized in RNN, which is particularly efficient at processing a large vocabulary in many NLP tasks, namely LightRNN. In this work, the authors propose "LightRNN" which uses two-component (2C) shared word embedding for word representations. | ||

= Motivations = | = Motivations = | ||

| Line 10: | Line 10: | ||

This method has 3 major limitations: | This method has 3 major limitations: | ||

* Memory Constraint | |||

:: When input vocabulary contains an enormous amount of unique words, which is very common in various NLP tasks, the size of the model becomes very large. This means the number of trainable parameters is very big, which makes it difficult to fit such model on a regular GPU device. | |||

* Computationally Heavy to Train | |||

:: As previously mentioned, the probability distribution of all other words in the vocabulary needs to be computed to determine what predicted word it would be. When the size of the vocabulary is large, such calculation can be computationally heavy. | |||

* Low Compressibility | |||

:: Due to the memory and computation-consuming process of RNN applied in NLP tasks, mobile devices cannot usually handle such algorithm, which makes it undesirable and limits its usage. | |||

Previously, there were some works focusing on reducing the computing complexity in Softmax layer. By building a hierarchical binary tree where each node stands for a word, the time complexity is reduced to $\log(|V|)$. However, the space complexity remains same. In addition, some technicals, such as Character-level convolution filters, tried to reduce the model size by shrinking the input-embedding matrix, whereas brings no improvement in terms of speed. | |||

An alternative approach to handle the overhead is by leveraging weak lower-level learners by Boosting. But a drawback is that this technique has only been implemented for a few specific tasks in the past such as Time Series predictions [Boné et. al.]. | An alternative approach to handle the overhead is by leveraging weak lower-level learners by Boosting. But a drawback is that this technique has only been implemented for a few specific tasks in the past such as Time Series predictions [Boné et. al.]. | ||

| Line 27: | Line 26: | ||

The authors of the paper proposed a new structure that effectively reduces the size of the model by arranging all words in the vocabulary into a word table, which is referred as “2-Component (2C) shared embedding for word representation”. This is done by factorizing a vocabulary's embedding into two shared components (row and column). Thus, a word is indexed by its location in such table, which in terms is characterized by the corresponding row and column components. Each row and column component are unique row vector and column vector respectively. By organizing each word in the vocabulary in this manner, multiple words can share the same row component or column component and it can reduce the number of trainable parameters significantly. | The authors of the paper proposed a new structure that effectively reduces the size of the model by arranging all words in the vocabulary into a word table, which is referred as “2-Component (2C) shared embedding for word representation”. This is done by factorizing a vocabulary's embedding into two shared components (row and column). Thus, a word is indexed by its location in such table, which in terms is characterized by the corresponding row and column components. Each row and column component are unique row vector and column vector respectively. By organizing each word in the vocabulary in this manner, multiple words can share the same row component or column component and it can reduce the number of trainable parameters significantly. | ||

The next question is how to construct such word table. More specifically, how to allocate each word in the vocabulary to different positions so that semantically similar words are in the same row or column. The authors proposed a bootstrap method to solve this problem. Essentially, we first randomly distribute words into the table. Then, we let the model “learn” better position of each word by minimizing training error. By repeating this process, each word can be allocated to a particular position within the table so that similar words share common row or column components. More details of those 2 parts of LightRNN structure will be discussed in the following sections. | The next question is how to construct such word table. More specifically, how to allocate each word in the vocabulary to different positions so that semantically similar words are in the same row or column. The authors proposed a bootstrap method to solve this problem. Essentially, we first randomly distribute words into the table. Then, we let the model “learn” better position of each word by minimizing training error. By repeating this process, each word can be allocated to a particular position within the table so that similar words share a common row or column components. More details of those 2 parts of LightRNN structure will be discussed in the following sections. | ||

There are 2 major benefits of the proposed technique: | There are 2 major benefits of the proposed technique: | ||

* Computationally efficient | |||

The name “LightRNN” is to illustrate the small model size and fast training speed. Because of these features of the new RNN architecture, it’s possible to launch such model onto regular GPU and other mobile devices. | :: The name “LightRNN” is to illustrate the small model size and fast training speed. Because of these features of the new RNN architecture, it’s possible to launch such model onto regular GPU and other mobile devices. | ||

* Higher scalability | |||

The authors briefly explained this algorithm is scalable because if parallel-computing is needed to train such model, the difficulty of combining smaller models is low. | :: The authors briefly explained this algorithm is scalable because if parallel-computing is needed to train such model, the difficulty of combining smaller models is low. | ||

| Line 50: | Line 49: | ||

== Part II: How 2C Shared Embedding is Used in LightRNN == | == Part II: How 2C Shared Embedding is Used in LightRNN == | ||

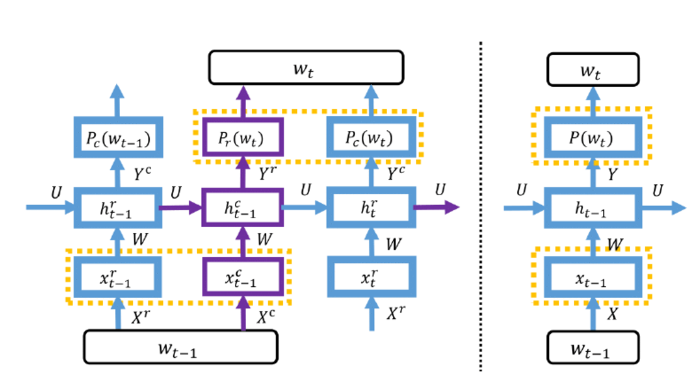

After constructing such word representation table, those 2-component shared embedding matrices are fed into the recurrent neural network. The following Figure 2 demonstrates a portion of LightRNN structure (left) with comparison with the regular RNN (right). Compared to regular RNN where a single input <math>x_{t-1}</math> is fed into the network each time, 2 elements of a single input <math>x_{t-1}</math>: <math>x^{r}_{t-1}</math> and <math>x^{c}_{t-1}</math> are fed into LightRNN. | After constructing such word representation table, those 2-component shared embedding matrices are fed into the recurrent neural network. The following Figure 2 demonstrates a portion of LightRNN structure (left) with comparison with the regular RNN (right). Compared to regular RNN where a single input <math>x_{t-1}</math> is fed into the network each time, 2 elements of a single input <math>x_{t-1}</math>: <math>x^{r}_{t-1}</math> and <math>x^{c}_{t-1}</math> are fed into LightRNN. With the 2-Component shared embedding, we can construct the LightRNN model by doubling the basic units of a vanilla RNN model. If <math>n , m</math> denote the dimension of a row/column input vector and that of a hidden state vector respectively. To compute the probability distribution of $w_t$, we need to use the column vector <math> x_{t−1}^c ∈ R^n</math> the row vector <math>x_t^r ∈ R^n</math>, and the hidden | ||

state vector <math>h_{t−1}^r ∈ R^m</math>. | |||

[[File:LightRNN.PNG |700px|thumb|centre|Fig 2. LightRNN Structure & Regular RNN]] | [[File:LightRNN.PNG |700px|thumb|centre|Fig 2. LightRNN Structure & Regular RNN]] | ||

As mentioned before, the last hidden layer will produce the probabilities of <math>word_{t}</math>. Based on the diagram below, the following formulas are used: | As mentioned before, the last hidden layer will produce the probabilities of <math>word_{t}</math>. Based on the diagram below, the following formulas are used: | ||

Let n | Let $n$ be the dimension/length of a row input vector/a column input vector, <math>X^{c}, X^{r} \in \mathbb{R}^{n \times \sqrt{|V|}}</math> denotes the input-embedding matrices: | ||

<center> | <center> | ||

: row vector <math>x^{r}_{t-1} \in \mathbb{R}^n</math> | : row vector <math>x^{r}_{t-1} \in \mathbb{R}^n</math> | ||

| Line 68: | Line 68: | ||

where <math>W \in \mathbb{R}^{m \times n}</math>, <math>U \in \mathbb{R}^{m \times m}</math>, and <math>b \in \mathbb{R}^m</math> and <math>f</math> is a nonlinear activation function | where <math>W \in \mathbb{R}^{m \times n}</math>, <math>U \in \mathbb{R}^{m \times m}</math>, and <math>b \in \mathbb{R}^m</math> and <math>f</math> is a nonlinear activation function | ||

The final step in LightRNN is to calculate <math>P_{r}(w_{t})</math> and <math>P_{c}(w_{t})</math> , which means the probability of a word w at time t, using the following formulas: | The final step in LightRNN is to calculate <math>P_{r}(w_{t})</math> and <math>P_{c}(w_{t})</math> , which means the probability of a word w at time t, using the following softmax formulas: | ||

<center> | <center> | ||

: <math>P_{r}(w_t) = \frac{exp(h_{t-1}^{c} y_{r(w)}^{r})}{\sum\nolimits_{i \in S_r} exp(h_{t-1}^{c} y_{i}^{r}) }</math> | : <math>P_{r}(w_t) = \frac{exp(h_{t-1}^{c} y_{r(w)}^{r})}{\sum\nolimits_{i \in S_r} exp(h_{t-1}^{c} y_{i}^{r}) }</math> | ||

| Line 84: | Line 84: | ||

</center> | </center> | ||

We can see that by using above equation, we effectively reduce the computation of the probability of the next word from a $|V|$-way normalization (in standard RNN models) to two $\sqrt {|V|}$-way normalizations. Note that we don't see the t-th word before predicting it. So in the above diagram, given the input column vector <math>x^c_{t-1} </math> of the (t-1)-th word, we first infer the row probability <math>P_r(w_t)</math> of the t-th word, and then choose the index of the row the largest probability in <math>P_r(w_t)</math> to look up the next input row vector <math>x^r_{t} </math>. Similarly, we can infer the column probability <math>P_c(w_t)</math> of the t-th word. | We can see that by using above equation, we effectively reduce the computation of the probability of the next word from a $|V|$-way normalization (in standard RNN models) to two $\sqrt {|V|}$-way normalizations. Note that we don't see the t-th word before predicting it. So in the above diagram, given the input column vector <math>x^c_{t-1} </math> of the (t-1)-th word, we first infer the row probability <math>P_r(w_t)</math> of the t-th word, and then choose the index of the row the largest probability in <math>P_r(w_t)</math> to look up the next input row vector <math>x^r_{t} </math>. Similarly, we can infer the column probability <math>P_c(w_t)</math> of the t-th word. It should be noted that the input and output use different embedding matrices but they share the same word-allocation table. | ||

Essentially, in LightRNN, the prediction of the word at time t (<math> w_t </math>) based on word at time t-1 (<math> w_{t-1} </math>) is achieved by selecting the index <math> r </math> and <math> c </math> with the highest probabilities <math> P_{r}(w_t) </math>, <math> P_{c}(w_t) </math>. Then, the probability of each word is computed based on the multiplication of <math> P_{r}(w_t) </math> and <math> P_{c}(w_t) </math>. | Essentially, in LightRNN, the prediction of the word at time t (<math> w_t </math>) based on word at time t-1 (<math> w_{t-1} </math>) is achieved by selecting the index <math> r </math> and <math> c </math> with the highest probabilities <math> P_{r}(w_t) </math>, <math> P_{c}(w_t) </math>. Then, the probability of each word is computed based on the multiplication of <math> P_{r}(w_t) </math> and <math> P_{c}(w_t) </math>. | ||

| Line 90: | Line 90: | ||

== Part III: Bootstrap for Word Allocation == | == Part III: Bootstrap for Word Allocation == | ||

As mentioned before, the major innovative aspect of LightRNN is the development of 2-component shared embedding. Such structure can be used in building a recurrent neural network called LightRNN. However, how | As mentioned before, the major innovative aspect of LightRNN is the development of 2-component shared embedding. Such structure can be used in building a recurrent neural network called LightRNN. However, how such word table representation is constructed is the key part of building a successful LightRNN model. In this section, the procedures of constructing 2C shared embedding structure are explained. | ||

The fundamental idea is using bootstrap method by minimizing a loss function (namely, negative log-likelihood function). The detailed procedures are described as the following: | The fundamental idea is using a bootstrap method by minimizing a loss function (namely, negative log-likelihood function of the next word in a sequence). The detailed procedures are described as the following: | ||

Step 1: First, all words in a vocabulary are randomly assigned to individual position within the word table | Step 1: First, all words in a vocabulary are randomly assigned to individual position within the word table | ||

| Line 99: | Line 99: | ||

Step 3: By fixing the training results of input and output embedding matrices (W & U) from step 2, adjust the position of words by minimizing the loss function over all the words. Then, repeat from step 2 | Step 3: By fixing the training results of input and output embedding matrices (W & U) from step 2, adjust the position of words by minimizing the loss function over all the words. Then, repeat from step 2 | ||

Given a context with $T$ words, the authors presented the overall loss function for word w moving to position [i, j] using a negative log-likelihood function (NLL) as the following: | |||

<center> | <center> | ||

<math> NLL = \sum\limits_{t=1}^T -logP(w_t) = \sum\limits_{t=1}^T -log[P_{r}(w_t) P_{c}(w_t)] = \sum\limits_{t=1}^T -log[P_{r}(w_t)] – log[P_{c}(w_t)] = \sum\limits_{w=1}^{|V|} NLL_w </math> | <math> NLL = \sum\limits_{t=1}^T -logP(w_t) = \sum\limits_{t=1}^T -log[P_{r}(w_t) P_{c}(w_t)] = \sum\limits_{t=1}^T -log[P_{r}(w_t)] – log[P_{c}(w_t)] = \sum\limits_{w=1}^{|V|} NLL_w </math> | ||

| Line 110: | Line 110: | ||

<math> = \sum\limits_{t \in S_w} -logP_r(w_t) + \sum\limits_{t \in S_w} -logP_c(w_t) = l_r(w,r(w)) + l_c(w,c(w))</math> <br> | <math> = \sum\limits_{t \in S_w} -logP_r(w_t) + \sum\limits_{t \in S_w} -logP_c(w_t) = l_r(w,r(w)) + l_c(w,c(w))</math> <br> | ||

<math> = \sum\limits_{t \in S_w} -log (\frac{exp(h_{t-1}^{c} y_{i}^{r})}{\sum\nolimits_{k} exp(h_{t-1}^{c} y_{i}^{k})}) + \sum\limits_{t \in S_w} -log (\frac{exp(h_{t}^{r} y_{j}^{c})}{\sum\nolimits_{k} exp(h_{t}^{r} y_{k}^{c}) }) </math> <br> | <math> = \sum\limits_{t \in S_w} -log (\frac{exp(h_{t-1}^{c} y_{i}^{r})}{\sum\nolimits_{k} exp(h_{t-1}^{c} y_{i}^{k})}) + \sum\limits_{t \in S_w} -log (\frac{exp(h_{t}^{r} y_{j}^{c})}{\sum\nolimits_{k} exp(h_{t}^{r} y_{k}^{c}) }) </math> <br> | ||

where <math> S_w </math> is the set of all possible positions | where <math> S_w </math> is the set of all possible positions for the word w in the corpus </center> | ||

</center> | |||

In summary, the overall loss function for word w to move to position [i, j] is the sum of its row loss and column loss of moving to position [i, j]. Therefore, total loss of moving to position [i, j] <math> l(w, i, j) = l_r(w, i) + l_c(w, j)</math>. Thus, to update the table by reallocating each word, we are looking for position [i, j] for each word w that minimize the total loss function, mathematically written as for the following: | In summary, the overall loss function for word w to move to position [i, j] is the sum of its row loss and column loss of moving to position [i, j]. Therefore, total loss of moving to position [i, j] <math> l(w, i, j) = l_r(w, i) + l_c(w, j)</math>. Thus, to update the table by reallocating each word, we are looking for position [i, j] for each word w that minimize the total loss function, mathematically written as for the following: | ||

<center> | <center> | ||

<math> \min\limits_{a} \sum\limits_{w,i,j} l(w,i,j)a(w,i,j) </math> such that <br> | <math> \min\limits_{a} \sum\limits_{w,i,j} l(w,i,j)a(w,i,j) </math> such that <br> | ||

<math> \sum\limits_{(i,j)} a(w,i,j) = 1 \space \forall w \in V, \sum\limits_{(w)} a(w,i,j) = 1 \space \forall i \in S_r, j \in S_j</math> <br> | <math> \sum\limits_{(i,j)} a(w,i,j) = 1 \space \forall w \in V, \sum\limits_{(w)} a(w,i,j) = 1 \space \forall i \in S_r, j \in S_j</math> <br> | ||

<math> a(w,i,j) \in {0,1}, \forall w \in V, i \in S_r, j \in S_j</math> <br> | <math> a(w,i,j) \in \left\{0,1\right\}, \forall w \in V, i \in S_r, j \in S_j</math> <br> | ||

where <math> a(w,i,j) =1 </math> indicates moving word w to position [i, j] | where <math> a(w,i,j) =1 </math> indicates moving word w to position [i, j] | ||

</center> | </center> | ||

After calculating $l(w, i, j)$ for all possible $w, i, j$, the above optimization leads forcing $a(w, i, j)$ to be equal to 1 for $i, j$ in which $l(w, i, j)$ is minimum and 0 elsewhere (i.e. finding the best place for the word $w$ in the table). | After calculating $l(w, i, j)$ for all possible $w, i, j$, the above optimization leads forcing $a(w, i, j)$ to be equal to 1 for $i, j$ in which $l(w, i, j)$ is minimum and 0 elsewhere (i.e. finding the best place for the word $w$ in the table). This minimization problem is a classical assignment problem, which can be solved in polynomial time $O(|V|^3)$. So word table representation allocation would not occupy much computational time. | ||

= LightRNN Example = | = LightRNN Example = | ||

| Line 138: | Line 137: | ||

[[File:Table2YH.PNG|700px|thumb|centre|Table 2. Testing PPL of LightRNN on ACLW-French dataset w.r.t. embedding size]] | [[File:Table2YH.PNG|700px|thumb|centre|Table 2. Testing PPL of LightRNN on ACLW-French dataset w.r.t. embedding size]] | ||

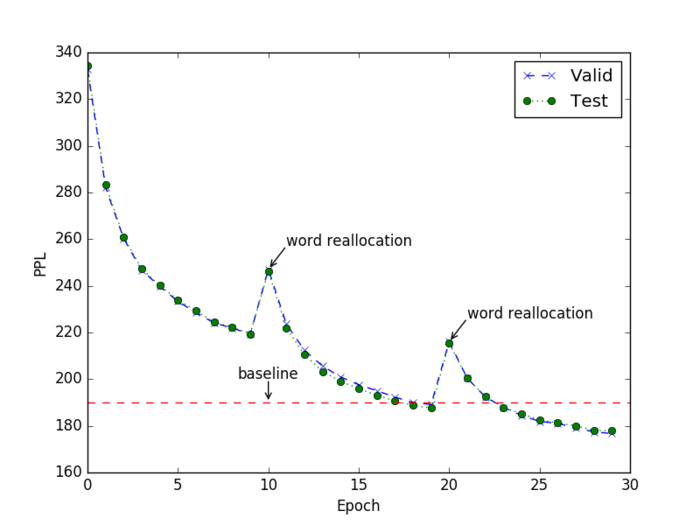

* In the official implement Github repo, Figure 3 shows the training process of LightRNN on ACLW-French dataset. | |||

[[File:ACLWFR.png|700px|thumb|centre|Figure 3.. Training process on ACLW-French]] | |||

'''Advantage 1: small model size''' | '''Advantage 1: small model size''' | ||

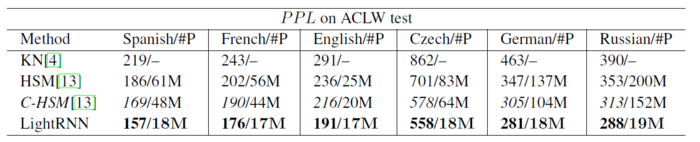

One of the major advantages of using LightRNN on NLP tasks is significantly reduced model size, which means fewer number of parameters to estimate. By comparing LightRNN with two other RNN algorithms and the baseline language model with Kneser-Ney smoothing. Those two RNN algorithms are: HSM which uses LSTM RNN algorithm with hierarchical softmax for word prediction; C-HSM which uses both hierarchical softmax and character-level convolutional filters for input embedding. From the results table shown below, we can see that LightRNN has the lowest perplexity while keeping the model size significantly smaller compared to the other three algorithms. | One of the major advantages of using LightRNN on NLP tasks is significantly reduced the model size, which means a fewer number of parameters to estimate. By comparing LightRNN with two other RNN algorithms and the baseline language model with Kneser-Ney smoothing. Those two RNN algorithms are: HSM which uses LSTM RNN algorithm with hierarchical softmax for word prediction; C-HSM which uses both hierarchical softmax and character-level convolutional filters for input embedding. From the results table shown below, we can see that LightRNN has the lowest perplexity while keeping the model size significantly smaller compared to the other three algorithms. | ||

[[File:Table5YH.PNG|700px|thumb|centre|Table 3. PPL Results in test set on ACLW datasets]] | [[File:Table5YH.PNG|700px|thumb|centre|Table 3. PPL Results in test set on ACLW datasets]] | ||

| Line 148: | Line 150: | ||

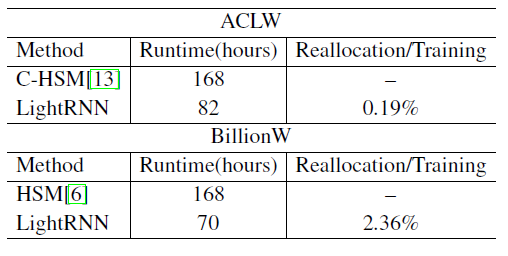

'''Advantage 2: high training efficiency''' | '''Advantage 2: high training efficiency''' | ||

Another advantage of LightRNN model is its shorter training time while maintaining same level of perplexity compared to other RNN algorithms. When comparing to both C-HSM and HSM (shown below in Table 4), LightRNN only takes half the runtime but achieve same level of perplexity when applied to both ACLW and BillionW datasets. In the last column of Table 3, the amount of time used for word table reconstruction is presented as the percentage of the total runtime. As we can see, the training time for word reallocation takes up only a very small proportion of the total runtime. However, the resulting reconstructed word table can be used as a valuable output, which is further explained in the next section. | Another advantage of LightRNN model is its shorter training time while maintaining the same level of perplexity compared to other RNN algorithms. When comparing to both C-HSM and HSM (shown below in Table 4), LightRNN only takes half the runtime but achieve the same level of perplexity when applied to both ACLW and BillionW datasets. In the last column of Table 3, the amount of time used for word table reconstruction is presented as the percentage of the total runtime. As we can see, the training time for word reallocation takes up only a very small proportion of the total runtime. However, the resulting reconstructed word table can be used as a valuable output, which is further explained in the next section. | ||

[[File:Table3YH.PNG|700px|thumb|centre|Table 4. Runtime comparisons in order to achieve the HSMs’ baseline PPL]] | [[File:Table3YH.PNG|700px|thumb|centre|Table 4. Runtime comparisons in order to achieve the HSMs’ baseline PPL]] | ||

| Line 160: | Line 162: | ||

= Remarks = | = Remarks = | ||

In summary, the proposed method in this paper is mainly on developing a new way of using word embedding. Words with similar semantic meanings are embedded using similar vectors. Those vectors are then divided into row and column components where similar words are grouped together by having shared row and column components in the word representation table. This is indeed a dimensional reduction, i.e. use the row and column "semantic vectors" to approximate the coded word. Because of this structural change of input word embedding, RNN model needs to adapt by having both row and column components being fed into the network. However, the fundamental structure of RNN model does not | |||

In summary, the proposed method in this paper is mainly on developing a new way of using word embedding which is a natural extension of a 1-layer word embedding look-up table towards a 2-layer look-up table. Words with similar semantic meanings are embedded using similar vectors. Those vectors are then divided into row and column components where similar words are grouped together by having shared row and column components in the word representation table. The bootstrap step is promising since it learns a good word allocation (similar to word clustering). There could be a large impact on various natural language applications. From a computational and application perspective, there were two key contributions provided in this paper. | |||

# Reduction in size of word embedding matrix. | |||

# Reduction in computations of word probabilities. | |||

The proposed model makes no assumptions about the structure of the words, which makes it potentially useful outside of NLP. In contrast, character-based word embedding models also reduce the model size but do need to access the internal structure of words (i.e. their characters). These two points ensure that one does not need hierarchical softmax or Monte Carlo estimations of the model's training cost. | |||

This is indeed a dimensional reduction, i.e. use the row and column "semantic vectors" to approximate the coded word. Because of this structural change of input word embedding, RNN model needs to adapt by having both row and column components being fed into the network. However, the fundamental structure of RNN model does not change. Therefore, personally, I would say it’s a new word embedding technique rather than a new development in model construction. One major confusion I have when reading this paper is how those row and column components in the word allocation table are determined. From the paper itself, the authors didn’t explain how they are constructed. | |||

Such shared word embedding technique is prevalently used in NLP. For instance, in language translation, similar words from different languages are grouped together so that the machine can translate sentences from one language to another. In Socher et al. (2013a), English and Chinese words are embedded in the same space so that we can find similar English (Chinese) words for Chinese (English) words. (Zou, Socher, Cer, & Manning, 2013). Word2vec is also a commonly used technique for word embedding, which uses a two-layer neural network to transform text into numeric vectors where similar words will have similar numeric values. The key feature of word2vec is that semantically similar words (which is now represented by numeric vectors) can be grouped together (“Word2vec,” n.d.; Bengio, Ducharme, & Vincent, 2001; Bengio, Ducharme, Vincent, & Jauvin, 2003). | Such shared word embedding technique is prevalently used in NLP. For instance, in language translation, similar words from different languages are grouped together so that the machine can translate sentences from one language to another. In Socher et al. (2013a), English and Chinese words are embedded in the same space so that we can find similar English (Chinese) words for Chinese (English) words. (Zou, Socher, Cer, & Manning, 2013). Word2vec is also a commonly used technique for word embedding, which uses a two-layer neural network to transform text into numeric vectors where similar words will have similar numeric values. The key feature of word2vec is that semantically similar words (which is now represented by numeric vectors) can be grouped together (“Word2vec,” n.d.; Bengio, Ducharme, & Vincent, 2001; Bengio, Ducharme, Vincent, & Jauvin, 2003). | ||

An interesting area of further exploration proposed by the authors is an extension of this method to k-component shared embeddings where k>2. Words probably share similar semantic meanings in more than two dimensions, and this extension could reduce network size even further. However, it could also further complicate the bootstrapping phase of training. | An interesting area of further exploration proposed by the authors is an extension of this method to k-component shared embeddings where k>2. Words probably share similar semantic meanings in more than two dimensions, and this extension could reduce network size even further. However, it could also further complicate the bootstrapping phase of training. | ||

Since no assumptions were made about the structure of the words, one could seek uses of this algorithm outside the context of natural language processing. | |||

It is ultimately unclear why the authors felt the need to adjust the structure of the RNN, sequentially feeding in row-column pairs. A vector derived by the concatenation of the row and the column components could easily be fed into a standard RNN, eliminating the need. It would have been useful to see whether this approach was fruitful. If the benefits seen in the paper are not derived as a result of the novel RNN structure, but instead as a result of the embedding, then this may provide grounds for use in other network structures, and perhaps as an embedding algorithm itself. Additionally, if the simpler model grants faster optimization, it may be a worthwhile investigation. | |||

Overall, two-component embedding approach is interesting. However, the reported numbers on the one-billion word benchmark are worse than the best results reported in (Chelba et al 2013). In addition, the authors don't report run times so we can't figure out how much additional training time is added by the table allocation optimizer. This is particularly troublesome as, while the authors do suggest that it is only a small percentage of the total training time the model incurs, the algorithm used runs with quadratic complexity based on the number of words; it is thus conceivable that the added time benefits of a simpler model, in addition to a simpler embedding outweigh any computational benefits of the smaller size for many use cases. | |||

Code for LightRNN can be found on Github : | Code for LightRNN can be found on Github : | ||

Official Implementation(CNTK): https://github.com/Microsoft/CNTK/tree/master/Examples/Text/LightRNN | Official Implementation(CNTK): https://github.com/Microsoft/CNTK/tree/master/Examples/Text/LightRNN | ||

Tensorflow : https://github.com/YisenWang/LightRNN-NIPS2016-Tensorflow_code | Tensorflow : https://github.com/YisenWang/LightRNN-NIPS2016-Tensorflow_code | ||

= Reference = | = Reference = | ||

Bengio, Y, Ducharme, R., & Vincent, P. (2001). A Neural Probabilistic Language Model. In Journal of Machine Learning Research (Vol. 3, pp. 932–938). https://doi.org/10.1162/153244303322533223 | Bengio, Y, Ducharme, R., & Vincent, P. (2001). A Neural Probabilistic Language Model. In Journal of Machine Learning Research (Vol. 3, pp. 932–938). https://doi.org/10.1162/153244303322533223 | ||

| Line 193: | Line 211: | ||

Boné R., Assaad M., Crucianu M. (2003) Boosting Recurrent Neural Networks for Time Series Prediction. In: Pearson D.W., Steele N.C., Albrecht R.F. (eds) Artificial Neural Nets and Genetic Algorithms. Springer, Vienna | Boné R., Assaad M., Crucianu M. (2003) Boosting Recurrent Neural Networks for Time Series Prediction. In: Pearson D.W., Steele N.C., Albrecht R.F. (eds) Artificial Neural Nets and Genetic Algorithms. Springer, Vienna | ||

Mikolov T., Karafiat M., Burget L., Cernocky J. H., Khudanpur S. Recurrent neural network based language model. Interspeech 2010. | |||

Chelba et al 2013, One Billion Word Benchmark for Measuring Progress in Statistical Language Modeling. | |||

Latest revision as of 20:18, 28 November 2017

Introduction

The study of natural language processing has been around for more than fifty years. It begins in the 1950s when the specific field of natural language processing (NLP) is still embedded in the subject of linguistics (Hirschberg & Manning, 2015). After the emergence of strong computational power, computational linguistics began to evolve and gradually branch out to various applications in NLP, such as text classification, speech recognition and question answering (Brownlee, 2017). Computational linguistics or natural language processing is usually defined as “subfield of computer science concerned with using computational techniques to learn, understand, and produce human language content” (Hirschberg & Manning, 2015, p. 261).

With the development of deep neural networks, one type of neural network, namely recurrent neural networks (RNN) have performed significantly well in many natural language processing tasks. One of the first examples applies RNN to speech recognition tasks (Mikolov et. al. 2010). The reason is that nature of RNN takes into account the past inputs as well as the current input without resulting in vanishing or exploding gradient. More detail of how RNN works in the context of NLP will be discussed in the section of NLP using RNN. However, one limitation of RNN used in NLP is its enormous size of input vocabulary (i.e. the vocabulary is too large to compute). This will result in a very complex RNN model with too many parameters to train and makes the training process both time and memory-consuming. This serves as the major motivation for this paper’s authors to develop a new technique utilized in RNN, which is particularly efficient at processing a large vocabulary in many NLP tasks, namely LightRNN. In this work, the authors propose "LightRNN" which uses two-component (2C) shared word embedding for word representations.

Motivations

In language modelling, researchers used to represent words by arbitrary codes, such as “Id143” is the code for “dog” (“Vector Representations of Words,” 2017). Such coding of words is completely random, and it loses the meaning of the words and (more importantly) connection with other words. Nowadays, one-hot representation of words is commonly used, in which a word is represented by a vector of numbers and the dimension of the vector is related to the size of the vocabulary. In RNN, all words in the vocabulary are coded using one-hot representation and then mapped to an embedding vector (Li, Qin, Yang, Hu, & Liu, 2016). Such embedding vector is “a continuous vector space where semantically similar words are mapped to nearby points” (“Vector Representations of Words” 2017, para. 6). Popular RNN structure used in NLP task is long short-term memory (LSTM). In order to predict the probability of the next word, the last hidden layer of the network needs to calculate the probability distribution over all other words in the vocabulary. Note that the most time-consuming operation in RNNs is to calculate a probability distribution over all the words in the vocabulary, which requires the multiplication of the output-embedding matrix and the hidden state at each position of a sequence. Lastly, an activation function (commonly, softmax function) is used to select the next word with the highest probability. This method has 3 major limitations:

- Memory Constraint

- When input vocabulary contains an enormous amount of unique words, which is very common in various NLP tasks, the size of the model becomes very large. This means the number of trainable parameters is very big, which makes it difficult to fit such model on a regular GPU device.

- Computationally Heavy to Train

- As previously mentioned, the probability distribution of all other words in the vocabulary needs to be computed to determine what predicted word it would be. When the size of the vocabulary is large, such calculation can be computationally heavy.

- Low Compressibility

- Due to the memory and computation-consuming process of RNN applied in NLP tasks, mobile devices cannot usually handle such algorithm, which makes it undesirable and limits its usage.

Previously, there were some works focusing on reducing the computing complexity in Softmax layer. By building a hierarchical binary tree where each node stands for a word, the time complexity is reduced to $\log(|V|)$. However, the space complexity remains same. In addition, some technicals, such as Character-level convolution filters, tried to reduce the model size by shrinking the input-embedding matrix, whereas brings no improvement in terms of speed.

An alternative approach to handle the overhead is by leveraging weak lower-level learners by Boosting. But a drawback is that this technique has only been implemented for a few specific tasks in the past such as Time Series predictions [Boné et. al.].

LightRNN Structure

The authors of the paper proposed a new structure that effectively reduces the size of the model by arranging all words in the vocabulary into a word table, which is referred as “2-Component (2C) shared embedding for word representation”. This is done by factorizing a vocabulary's embedding into two shared components (row and column). Thus, a word is indexed by its location in such table, which in terms is characterized by the corresponding row and column components. Each row and column component are unique row vector and column vector respectively. By organizing each word in the vocabulary in this manner, multiple words can share the same row component or column component and it can reduce the number of trainable parameters significantly. The next question is how to construct such word table. More specifically, how to allocate each word in the vocabulary to different positions so that semantically similar words are in the same row or column. The authors proposed a bootstrap method to solve this problem. Essentially, we first randomly distribute words into the table. Then, we let the model “learn” better position of each word by minimizing training error. By repeating this process, each word can be allocated to a particular position within the table so that similar words share a common row or column components. More details of those 2 parts of LightRNN structure will be discussed in the following sections.

There are 2 major benefits of the proposed technique:

- Computationally efficient

- The name “LightRNN” is to illustrate the small model size and fast training speed. Because of these features of the new RNN architecture, it’s possible to launch such model onto regular GPU and other mobile devices.

- Higher scalability

- The authors briefly explained this algorithm is scalable because if parallel-computing is needed to train such model, the difficulty of combining smaller models is low.

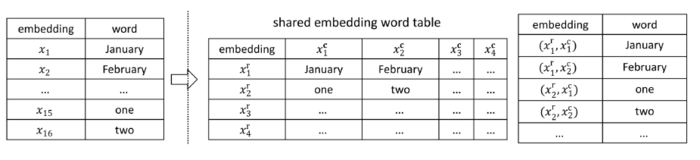

The key aspect of LightRNN structure is its innovative method of word representation, namely 2-Component Shared Embedding. All words in the vocabulary are organized into a table with row components and column components. Each pair of the element in a row component and a column component is corresponding to a unique word in the vocabulary. For instance, the [math]\displaystyle{ i^{th} }[/math] row and [math]\displaystyle{ j^{th} }[/math] column are the row and column indexes for [math]\displaystyle{ X_{ij} }[/math]. As shown in the following graph, [math]\displaystyle{ x_{1} }[/math] is corresponding to the words “January”. In 2C shared embedding table, it’s indexed by 2 elements: [math]\displaystyle{ x^{r}_{1} }[/math] and [math]\displaystyle{ x^{c}_{1} }[/math] where the subscript indicates which row component and column component this word belongs to. Ideally, words that share similar semantic features should be assigned to the same row or column. The shared embedding word table in Figure 1 serves as a good example: the word “one” and “January” are assigned to the same column, while the word “one” and “two” are allocated to the same row.

The main advantage of using such word representation is it reduces the number of vector/element needed for input word embedding. For instance, if there are 25 unique words in the vocabulary, the number of vectors to represent all the words is 10, namely 5 row vectors/elements and 5 column vectors/elements. Therefore, the shared embedding word table is a 5 by 5 matrix. In general, the formula for calculating number of vector/element needed to represent [math]\displaystyle{ |V| }[/math] words is [math]\displaystyle{ 2\sqrt{|V|} }[/math].

After constructing such word representation table, those 2-component shared embedding matrices are fed into the recurrent neural network. The following Figure 2 demonstrates a portion of LightRNN structure (left) with comparison with the regular RNN (right). Compared to regular RNN where a single input [math]\displaystyle{ x_{t-1} }[/math] is fed into the network each time, 2 elements of a single input [math]\displaystyle{ x_{t-1} }[/math]: [math]\displaystyle{ x^{r}_{t-1} }[/math] and [math]\displaystyle{ x^{c}_{t-1} }[/math] are fed into LightRNN. With the 2-Component shared embedding, we can construct the LightRNN model by doubling the basic units of a vanilla RNN model. If [math]\displaystyle{ n , m }[/math] denote the dimension of a row/column input vector and that of a hidden state vector respectively. To compute the probability distribution of $w_t$, we need to use the column vector [math]\displaystyle{ x_{t−1}^c ∈ R^n }[/math] the row vector [math]\displaystyle{ x_t^r ∈ R^n }[/math], and the hidden state vector [math]\displaystyle{ h_{t−1}^r ∈ R^m }[/math].

As mentioned before, the last hidden layer will produce the probabilities of [math]\displaystyle{ word_{t} }[/math]. Based on the diagram below, the following formulas are used: Let $n$ be the dimension/length of a row input vector/a column input vector, [math]\displaystyle{ X^{c}, X^{r} \in \mathbb{R}^{n \times \sqrt{|V|}} }[/math] denotes the input-embedding matrices:

- row vector [math]\displaystyle{ x^{r}_{t-1} \in \mathbb{R}^n }[/math]

- column vector [math]\displaystyle{ x^{c}_{t-1} \in \mathbb{R}^n }[/math]

Let [math]\displaystyle{ h^{c}_{t-1}, h^{r}_{t-1} \in \mathbb{R}^m }[/math] denotes the two hidden layers where m = dimension of the hidden layer:

- [math]\displaystyle{ h^{c}_{t-1} = f(W x_{t-1}^{c} + U h_{t-1}^{r} + b) }[/math]

- [math]\displaystyle{ h^{r}_{t} = f(W x_{t}^{r} + U h_{t-1}^{c} + b) }[/math]

where [math]\displaystyle{ W \in \mathbb{R}^{m \times n} }[/math], [math]\displaystyle{ U \in \mathbb{R}^{m \times m} }[/math], and [math]\displaystyle{ b \in \mathbb{R}^m }[/math] and [math]\displaystyle{ f }[/math] is a nonlinear activation function

The final step in LightRNN is to calculate [math]\displaystyle{ P_{r}(w_{t}) }[/math] and [math]\displaystyle{ P_{c}(w_{t}) }[/math] , which means the probability of a word w at time t, using the following softmax formulas:

- [math]\displaystyle{ P_{r}(w_t) = \frac{exp(h_{t-1}^{c} y_{r(w)}^{r})}{\sum\nolimits_{i \in S_r} exp(h_{t-1}^{c} y_{i}^{r}) } }[/math]

- [math]\displaystyle{ P_{c}(w_t) = \frac{exp(h_{t}^{r} y_{c(w)}^{c})}{\sum\nolimits_{i \in S_c} exp(h_{t}^{r} y_{i}^{c}) } }[/math]

- [math]\displaystyle{ P(w_t) = P_{r}(w_t) P_{c}(w_t) }[/math]

where

- [math]\displaystyle{ r(w) }[/math] = row index of word w

- [math]\displaystyle{ c(w) }[/math] = column index of word w

- [math]\displaystyle{ y_{i}^{r} \in \mathbb{R}^m }[/math] = i-th vector of [math]\displaystyle{ Y^r \in \mathbb{R}^{m \times \sqrt{|V|}} }[/math]

- [math]\displaystyle{ y_{i}^{c} \in \mathbb{R}^m }[/math] = i-th vector of [math]\displaystyle{ Y^c \in \mathbb{R}^{m \times \sqrt{|V|}} }[/math]

- [math]\displaystyle{ S_r }[/math] = the set of rows of the word table

- [math]\displaystyle{ S_c }[/math] = the set of columns of the word table

We can see that by using above equation, we effectively reduce the computation of the probability of the next word from a $|V|$-way normalization (in standard RNN models) to two $\sqrt {|V|}$-way normalizations. Note that we don't see the t-th word before predicting it. So in the above diagram, given the input column vector [math]\displaystyle{ x^c_{t-1} }[/math] of the (t-1)-th word, we first infer the row probability [math]\displaystyle{ P_r(w_t) }[/math] of the t-th word, and then choose the index of the row the largest probability in [math]\displaystyle{ P_r(w_t) }[/math] to look up the next input row vector [math]\displaystyle{ x^r_{t} }[/math]. Similarly, we can infer the column probability [math]\displaystyle{ P_c(w_t) }[/math] of the t-th word. It should be noted that the input and output use different embedding matrices but they share the same word-allocation table.

Essentially, in LightRNN, the prediction of the word at time t ([math]\displaystyle{ w_t }[/math]) based on word at time t-1 ([math]\displaystyle{ w_{t-1} }[/math]) is achieved by selecting the index [math]\displaystyle{ r }[/math] and [math]\displaystyle{ c }[/math] with the highest probabilities [math]\displaystyle{ P_{r}(w_t) }[/math], [math]\displaystyle{ P_{c}(w_t) }[/math]. Then, the probability of each word is computed based on the multiplication of [math]\displaystyle{ P_{r}(w_t) }[/math] and [math]\displaystyle{ P_{c}(w_t) }[/math].

Part III: Bootstrap for Word Allocation

As mentioned before, the major innovative aspect of LightRNN is the development of 2-component shared embedding. Such structure can be used in building a recurrent neural network called LightRNN. However, how such word table representation is constructed is the key part of building a successful LightRNN model. In this section, the procedures of constructing 2C shared embedding structure are explained. The fundamental idea is using a bootstrap method by minimizing a loss function (namely, negative log-likelihood function of the next word in a sequence). The detailed procedures are described as the following:

Step 1: First, all words in a vocabulary are randomly assigned to individual position within the word table

Step 2: Train LightRNN model based on word table produced in step 1 until certain criteria are met

Step 3: By fixing the training results of input and output embedding matrices (W & U) from step 2, adjust the position of words by minimizing the loss function over all the words. Then, repeat from step 2

Given a context with $T$ words, the authors presented the overall loss function for word w moving to position [i, j] using a negative log-likelihood function (NLL) as the following:

[math]\displaystyle{ NLL = \sum\limits_{t=1}^T -logP(w_t) = \sum\limits_{t=1}^T -log[P_{r}(w_t) P_{c}(w_t)] = \sum\limits_{t=1}^T -log[P_{r}(w_t)] – log[P_{c}(w_t)] = \sum\limits_{w=1}^{|V|} NLL_w }[/math]

where [math]\displaystyle{ NLL_w }[/math] is the negative log-likelihood of a word w.

Since in 2-component shared embedding structure, a word (w) is represented by one row vector and one column vector, [math]\displaystyle{ NLL_w }[/math] can be rewritten as [math]\displaystyle{ l(w, r(w), c(w)) }[/math] where [math]\displaystyle{ r(w) }[/math] and [math]\displaystyle{ c(w) }[/math] are the position index of word w in the word table. Next, the authors defined 2 more terms to explain the meaning of [math]\displaystyle{ NLL_w }[/math]: [math]\displaystyle{ l_r(w,r(w)) }[/math] and [math]\displaystyle{ l_c(w,c(w)) }[/math], namely the row component and column component of [math]\displaystyle{ l(w, r(w), c(w)) }[/math]. The above can be summarised by the following formulas:

[math]\displaystyle{ NLL_w = \sum\limits_{t \in S_w} -logP(w_t) = l(w, r(w), c(w)) }[/math]

[math]\displaystyle{ = \sum\limits_{t \in S_w} -logP_r(w_t) + \sum\limits_{t \in S_w} -logP_c(w_t) = l_r(w,r(w)) + l_c(w,c(w)) }[/math]

[math]\displaystyle{ = \sum\limits_{t \in S_w} -log (\frac{exp(h_{t-1}^{c} y_{i}^{r})}{\sum\nolimits_{k} exp(h_{t-1}^{c} y_{i}^{k})}) + \sum\limits_{t \in S_w} -log (\frac{exp(h_{t}^{r} y_{j}^{c})}{\sum\nolimits_{k} exp(h_{t}^{r} y_{k}^{c}) }) }[/math]

In summary, the overall loss function for word w to move to position [i, j] is the sum of its row loss and column loss of moving to position [i, j]. Therefore, total loss of moving to position [i, j] [math]\displaystyle{ l(w, i, j) = l_r(w, i) + l_c(w, j) }[/math]. Thus, to update the table by reallocating each word, we are looking for position [i, j] for each word w that minimize the total loss function, mathematically written as for the following:

[math]\displaystyle{ \min\limits_{a} \sum\limits_{w,i,j} l(w,i,j)a(w,i,j) }[/math] such that

[math]\displaystyle{ \sum\limits_{(i,j)} a(w,i,j) = 1 \space \forall w \in V, \sum\limits_{(w)} a(w,i,j) = 1 \space \forall i \in S_r, j \in S_j }[/math]

[math]\displaystyle{ a(w,i,j) \in \left\{0,1\right\}, \forall w \in V, i \in S_r, j \in S_j }[/math]

where [math]\displaystyle{ a(w,i,j) =1 }[/math] indicates moving word w to position [i, j]

After calculating $l(w, i, j)$ for all possible $w, i, j$, the above optimization leads forcing $a(w, i, j)$ to be equal to 1 for $i, j$ in which $l(w, i, j)$ is minimum and 0 elsewhere (i.e. finding the best place for the word $w$ in the table). This minimization problem is a classical assignment problem, which can be solved in polynomial time $O(|V|^3)$. So word table representation allocation would not occupy much computational time.

LightRNN Example

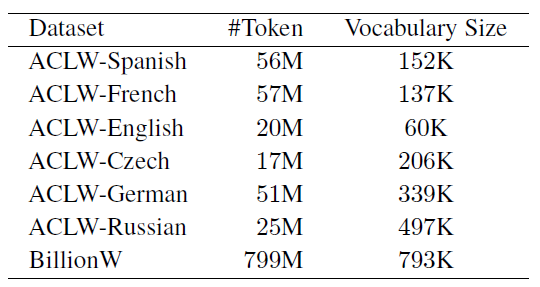

After describing the theoretical background of the LightRNN algorithm, the authors applied this method to 2 datasets (2013 ACL Workshop Morphological Language Dataset (ACLW) & One-Billion-Word Benchmark Dataset (BillonW)) and compared its performance with several other state-of-the-art RNN algorithms. The following table shows some summary statistics of those 2 datasets:

The goal of a probabilistic language model is either to compute the probability distribution of a sequence of given words (e.g. [math]\displaystyle{ P(W) = P(w_1, w_2, … , w_n) }[/math]) or to compute the probability of the next word given some previous words (e.g. [math]\displaystyle{ P(w_5 | w_1, w_2, w_3, w_4) }[/math]) (Jurafsky, 2017). In this paper, the evaluation matrix for the performance of LightRNN algorithm is perplexity [math]\displaystyle{ PPL }[/math] which is defined as the following:

[math]\displaystyle{ PPL = exp(\frac{NLL}{T}) }[/math]

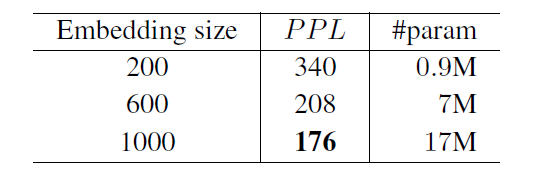

where T = number of tokens in the test set

Based on the mathematical definition of PPL, a well-performed model will have a lower perplexity. The authors then trained “LSTM-based LightRNN using stochastic gradient descent with truncated backpropagation through time” (Li, Qin, Yang, Hu, & Liu, 2016). To begin with, the authors first used the ACLW French dataset to determine the size of embedding matrix. From the results shown in Table 2, larger embedding size corresponds to higher accuracy rate (expressed in terms of perplexity). Therefore, they adopted embedding size of 1000 to be used in LightRNN to analyze the ACLW datasets.

- In the official implement Github repo, Figure 3 shows the training process of LightRNN on ACLW-French dataset.

Advantage 1: small model size

One of the major advantages of using LightRNN on NLP tasks is significantly reduced the model size, which means a fewer number of parameters to estimate. By comparing LightRNN with two other RNN algorithms and the baseline language model with Kneser-Ney smoothing. Those two RNN algorithms are: HSM which uses LSTM RNN algorithm with hierarchical softmax for word prediction; C-HSM which uses both hierarchical softmax and character-level convolutional filters for input embedding. From the results table shown below, we can see that LightRNN has the lowest perplexity while keeping the model size significantly smaller compared to the other three algorithms.

Italic results are the previous state-of-the-art. #P denotes the number of parameters.

Advantage 2: high training efficiency

Another advantage of LightRNN model is its shorter training time while maintaining the same level of perplexity compared to other RNN algorithms. When comparing to both C-HSM and HSM (shown below in Table 4), LightRNN only takes half the runtime but achieve the same level of perplexity when applied to both ACLW and BillionW datasets. In the last column of Table 3, the amount of time used for word table reconstruction is presented as the percentage of the total runtime. As we can see, the training time for word reallocation takes up only a very small proportion of the total runtime. However, the resulting reconstructed word table can be used as a valuable output, which is further explained in the next section.

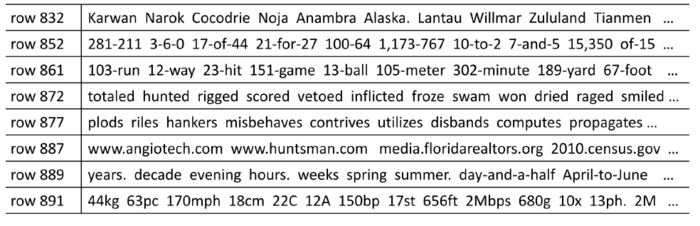

Advantage 3: semantically valid word allocation table

As explained in the previous section, LightRNN uses a word allocation table that gets updated in every iteration of the algorithm. The optimal structure of the table should assign semantically similar words onto the same row or column in order to reduce the number of parameters to estimate. Below is a snapshot of the reconstructed word table used in LightRNN algorithm. Evidently, we can see in row 887, all URL addresses are grouped together and in row 872 all verbs in past tense are grouped together. As the authors explained in the paper, LightRNN doesn’t assume independence of each word but instead using a shared embedding table. In this way, it reduces the model size by utilizing common embedding elements of the table/matrix, and also uses such preprocessed data to improve the efficiency of this algorithm.

Remarks

In summary, the proposed method in this paper is mainly on developing a new way of using word embedding which is a natural extension of a 1-layer word embedding look-up table towards a 2-layer look-up table. Words with similar semantic meanings are embedded using similar vectors. Those vectors are then divided into row and column components where similar words are grouped together by having shared row and column components in the word representation table. The bootstrap step is promising since it learns a good word allocation (similar to word clustering). There could be a large impact on various natural language applications. From a computational and application perspective, there were two key contributions provided in this paper.

- Reduction in size of word embedding matrix.

- Reduction in computations of word probabilities.

The proposed model makes no assumptions about the structure of the words, which makes it potentially useful outside of NLP. In contrast, character-based word embedding models also reduce the model size but do need to access the internal structure of words (i.e. their characters). These two points ensure that one does not need hierarchical softmax or Monte Carlo estimations of the model's training cost. This is indeed a dimensional reduction, i.e. use the row and column "semantic vectors" to approximate the coded word. Because of this structural change of input word embedding, RNN model needs to adapt by having both row and column components being fed into the network. However, the fundamental structure of RNN model does not change. Therefore, personally, I would say it’s a new word embedding technique rather than a new development in model construction. One major confusion I have when reading this paper is how those row and column components in the word allocation table are determined. From the paper itself, the authors didn’t explain how they are constructed.

Such shared word embedding technique is prevalently used in NLP. For instance, in language translation, similar words from different languages are grouped together so that the machine can translate sentences from one language to another. In Socher et al. (2013a), English and Chinese words are embedded in the same space so that we can find similar English (Chinese) words for Chinese (English) words. (Zou, Socher, Cer, & Manning, 2013). Word2vec is also a commonly used technique for word embedding, which uses a two-layer neural network to transform text into numeric vectors where similar words will have similar numeric values. The key feature of word2vec is that semantically similar words (which is now represented by numeric vectors) can be grouped together (“Word2vec,” n.d.; Bengio, Ducharme, & Vincent, 2001; Bengio, Ducharme, Vincent, & Jauvin, 2003).

An interesting area of further exploration proposed by the authors is an extension of this method to k-component shared embeddings where k>2. Words probably share similar semantic meanings in more than two dimensions, and this extension could reduce network size even further. However, it could also further complicate the bootstrapping phase of training.

Since no assumptions were made about the structure of the words, one could seek uses of this algorithm outside the context of natural language processing.

It is ultimately unclear why the authors felt the need to adjust the structure of the RNN, sequentially feeding in row-column pairs. A vector derived by the concatenation of the row and the column components could easily be fed into a standard RNN, eliminating the need. It would have been useful to see whether this approach was fruitful. If the benefits seen in the paper are not derived as a result of the novel RNN structure, but instead as a result of the embedding, then this may provide grounds for use in other network structures, and perhaps as an embedding algorithm itself. Additionally, if the simpler model grants faster optimization, it may be a worthwhile investigation.

Overall, two-component embedding approach is interesting. However, the reported numbers on the one-billion word benchmark are worse than the best results reported in (Chelba et al 2013). In addition, the authors don't report run times so we can't figure out how much additional training time is added by the table allocation optimizer. This is particularly troublesome as, while the authors do suggest that it is only a small percentage of the total training time the model incurs, the algorithm used runs with quadratic complexity based on the number of words; it is thus conceivable that the added time benefits of a simpler model, in addition to a simpler embedding outweigh any computational benefits of the smaller size for many use cases.

Code for LightRNN can be found on Github :

Official Implementation(CNTK): https://github.com/Microsoft/CNTK/tree/master/Examples/Text/LightRNN

Tensorflow : https://github.com/YisenWang/LightRNN-NIPS2016-Tensorflow_code

Reference

Bengio, Y, Ducharme, R., & Vincent, P. (2001). A Neural Probabilistic Language Model. In Journal of Machine Learning Research (Vol. 3, pp. 932–938). https://doi.org/10.1162/153244303322533223

Bengio, Yoshua, Ducharme, R., Vincent, P., & Jauvin, C. (2003). A Neural Probabilistic Language Model. Journal of Machine Learning Research, 3(Feb), 1137–1155.

Brownlee, J. (2017, September 20). 7 Applications of Deep Learning for Natural Language Processing. Retrieved October 27, 2017, from https://machinelearningmastery.com/applications-of-deep-learning-for-natural-language-processing/

Hirschberg, J., & Manning, C. D. (2015). Advances in natural language processing. Science, 349(6245), 261–266. https://doi.org/10.1126/science.aaa8685

Jurafsky, D. (2017, January). Language Modeling Introduction to N grams. Presented at the CS 124: From Languages to Information, Stanford University. Retrieved from https://web.stanford.edu/class/cs124/lec/languagemodeling.pdf

Li, X., Qin, T., Yang, J., Hu, X., & Liu, T. (2016). LightRNN: Memory and Computation-Efficient Recurrent Neural Networks. Advances in Neural Information Processing Systems 29, 4385–4393.

Recurrent Neural Networks. (n.d.). Retrieved October 8, 2017, from https://www.tensorflow.org/tutorials/recurrent

Vector Representations of Words. (2017, August 17). Retrieved October 8, 2017, from https://www.tensorflow.org/tutorials/word2vec

Word2vec. (n.d.). Retrieved October 26, 2017, from https://deeplearning4j.org/word2vec.html

Zou, W. Y., Socher, R., Cer, D., & Manning, C. D. (2013). Bilingual word embeddings for phrase-based machine translation, 1393–1398.

Kneser Ney Smoothing - : https://en.wikipedia.org/wiki/Kneser%E2%80%93Ney_smoothing & http://www.foldl.me/2014/kneser-ney-smoothing/

Boné R., Assaad M., Crucianu M. (2003) Boosting Recurrent Neural Networks for Time Series Prediction. In: Pearson D.W., Steele N.C., Albrecht R.F. (eds) Artificial Neural Nets and Genetic Algorithms. Springer, Vienna

Mikolov T., Karafiat M., Burget L., Cernocky J. H., Khudanpur S. Recurrent neural network based language model. Interspeech 2010.

Chelba et al 2013, One Billion Word Benchmark for Measuring Progress in Statistical Language Modeling.