Learning to Navigate in Cities Without a Map

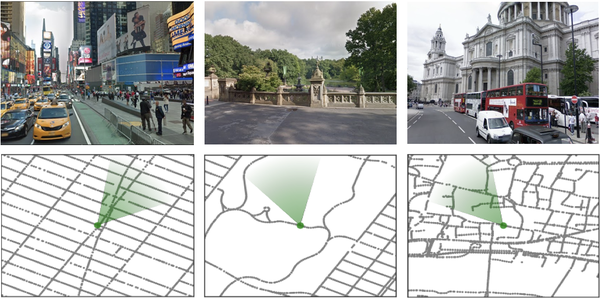

In this article, based on this fact that human can learn to navigate through cities without using any special tool such as maps or GPS, authors propose new methods to show that a neural network agent can do the same thing by using visual observations. To do so, an interactive environment using Google StreetView Images and a dual pathway agent architecture are designed. As shown in figure 1, some parts of environment are built using Google StreetView images of New York City (Times Square, Central Park) and London (St. Paul’s Cathedral). The green cone represents the agent’s location and orientation. Although learning to navigate using visual aids is shown to be successful in some domains such as games and simulated environments using deep reinforcement learning (RL), it suffers from data inefficiency and sensitivity to changes in environment. Thus, it is unclear whether this method could be used for large-scale navigation. That’s why it became the subject of investigation on this paper. | In this article, based on this fact that human can learn to navigate through cities without using any special tool such as maps or GPS, authors propose new methods to show that a neural network agent can do the same thing by using visual observations. To do so, an interactive environment using Google StreetView Images and a dual pathway agent architecture are designed. As shown in figure 1, some parts of environment are built using Google StreetView images of New York City (Times Square, Central Park) and London (St. Paul’s Cathedral). The green cone represents the agent’s location and orientation. Although learning to navigate using visual aids is shown to be successful in some domains such as games and simulated environments using deep reinforcement learning (RL), it suffers from data inefficiency and sensitivity to changes in environment. Thus, it is unclear whether this method could be used for large-scale navigation. 
That’s why it became the subject of investigation on this paper. | ||

[[File:figure1-soroush.png|600px|thumb|center|Figure 1. Our environment is built of real-world places from StreetView. The figure shows diverse views and corresponding local maps in New York City (Times Square, Central Park) and London (St. Paul’s Cathedral). The green cone represents the agent’s location and orientation.]] | [[File:figure1-soroush.png|600px|thumb|center|Figure 1. Our environment is built of real-world places from StreetView. The figure shows diverse views and corresponding local maps in New York City (Times Square, Central Park) and London (St. Paul’s Cathedral). The green cone represents the agent’s location and orientation.]] | ||

Revision as of 20:13, 2 November 2018

Paper: Learning to Navigate in Cities Without a Map[1]

A video of the paper is available here[2].

Introduction

Navigation is an active topic in many research disciplines and technology-related domains, such as neuroscience and robotics. Most navigation algorithms follow two steps: (1) building an explicit map, and (2) planning and acting using that map.

Motivated by the fact that humans can learn to navigate through cities without special tools such as maps or GPS, the authors propose methods showing that a neural network agent can do the same using only visual observations. To this end, they design an interactive environment based on Google StreetView images and a dual-pathway agent architecture. As shown in Figure 1, parts of the environment are built from Google StreetView images of New York City (Times Square, Central Park) and London (St. Paul’s Cathedral); the green cone represents the agent’s location and orientation. Deep reinforcement learning (RL) has been used successfully to learn navigation from visual input in domains such as games and simulated environments, but it suffers from data inefficiency and sensitivity to changes in the environment. It is therefore unclear whether this approach can be used for large-scale navigation, which is the question this paper investigates.
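For contrast with the map-free approach studied in this paper, the classical two-step pipeline described above can be sketched in a few lines. This is a minimal illustration and not part of the paper: the toy street graph, the intersection names, and the helpers `build_map` and `plan_route` are hypothetical, and breadth-first search stands in for whatever planner a real map-based system would use.

```python
from collections import deque


# Step 1: build an explicit map. Here it is a toy street graph in which each
# intersection maps to the set of intersections directly reachable from it.
def build_map(streets):
    """Build an undirected adjacency map from a list of (a, b) street segments."""
    graph = {}
    for a, b in streets:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


# Step 2: plan on that map. Breadth-first search returns a route with the
# fewest street segments; "acting" would then mean following it.
def plan_route(graph, start, goal):
    parents = {start: None}
    frontier = deque([start])
    while frontier:
        node = frontier.popleft()
        if node == goal:
            path = []
            while node is not None:  # walk back from goal to start
                path.append(node)
                node = parents[node]
            return path[::-1]
        for neighbour in sorted(graph.get(node, ())):
            if neighbour not in parents:
                parents[neighbour] = node
                frontier.append(neighbour)
    return None  # goal is not reachable on this map


streets = [("A", "B"), ("B", "C"), ("C", "D"), ("A", "E"), ("E", "D")]
city_map = build_map(streets)
print(plan_route(city_map, "A", "D"))  # ['A', 'E', 'D']
```

Everything here depends on the explicit map being available; the agent studied in the paper has no such map and must learn its routes from visual observations instead.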

Related Works

Contribution

This paper makes the following three contributions:

1. Goal-dependent learning. The policy and value functions must adapt to a sequence of goals that are provided as input.

2. A recurrent neural network (RNN) architecture that supports both locale-specific and general navigation. This not only makes navigation within a single city possible but also lets the model scale to navigation in new cities.

3. A new environment built on top of Google StreetView, which provides real-world images as the agent’s observations. In this environment, the agent must navigate from an arbitrary starting point to a goal, then on to another goal, and so on. London, Paris, and New York City are chosen as the cities to navigate.
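The first contribution, goal-dependent learning, means that the policy π(a | o, g) and the value V(o, g) take the current goal g as an input alongside the observation o, so one set of parameters can serve a whole sequence of goals. The sketch below illustrates only that idea under simplifying assumptions: the linear heads, the three-action set, the feature sizes, and the class name `GoalConditionedAgent` are hypothetical, whereas the agent in the paper is a recurrent network trained with deep RL.

```python
import math
import random

ACTIONS = ["turn_left", "turn_right", "forward"]  # hypothetical discrete action set


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


class GoalConditionedAgent:
    """Toy linear policy pi(a | o, g) and value V(o, g).

    Both heads read the concatenation of observation features and goal
    features, so the same weights give different outputs for different goals.
    """

    def __init__(self, obs_dim, goal_dim, seed=0):
        rng = random.Random(seed)
        in_dim = obs_dim + goal_dim
        self.policy_w = [[rng.gauss(0, 0.1) for _ in range(in_dim)] for _ in ACTIONS]
        self.value_w = [rng.gauss(0, 0.1) for _ in range(in_dim)]

    def _features(self, obs, goal):
        return list(obs) + list(goal)

    def policy(self, obs, goal):
        """Return one probability per action in ACTIONS."""
        x = self._features(obs, goal)
        logits = [sum(w * xi for w, xi in zip(row, x)) for row in self.policy_w]
        return softmax(logits)

    def value(self, obs, goal):
        """Return a scalar estimate of expected return for reaching this goal."""
        x = self._features(obs, goal)
        return sum(w * xi for w, xi in zip(self.value_w, x))


agent = GoalConditionedAgent(obs_dim=4, goal_dim=3)
obs = [0.2, -0.1, 0.5, 0.0]
goals = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # a sequence of goals given as input
for g in goals:
    print(agent.policy(obs, g), agent.value(obs, g))
```

The observation is the same in both iterations; only the goal input changes, and with it the action probabilities and the value estimate.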
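The second contribution pairs a locale-specific pathway with a general one. Assuming, for illustration only, one small recurrent cell per city feeding a single shared recurrent cell, the sketch below shows how such a split could support new cities: adding a city creates a fresh locale-specific pathway, and in transfer mode every existing module is frozen so that only the new pathway would be trained. The class names, the plain tanh cells (standing in for the paper's RNN), and the `transfer` flag are hypothetical, and no training loop is included.

```python
import math
import random


class TanhRNNCell:
    """Minimal Elman-style recurrent cell: h_t = tanh(W x_t + U h_{t-1})."""

    def __init__(self, in_dim, hid_dim, rng):
        self.W = [[rng.gauss(0, 0.3) for _ in range(in_dim)] for _ in range(hid_dim)]
        self.U = [[rng.gauss(0, 0.3) for _ in range(hid_dim)] for _ in range(hid_dim)]
        self.trainable = True  # whether an optimiser would update this cell

    def step(self, x, h):
        new_h = []
        for w_row, u_row in zip(self.W, self.U):
            pre = sum(w * xi for w, xi in zip(w_row, x))
            pre += sum(u * hi for u, hi in zip(u_row, h))
            new_h.append(math.tanh(pre))
        return new_h


class DualPathwayAgent:
    """Hypothetical sketch: one locale-specific cell per city plus one shared general cell."""

    def __init__(self, obs_dim, goal_dim, hid_dim, seed=0):
        self.rng = random.Random(seed)
        self.obs_dim, self.goal_dim, self.hid_dim = obs_dim, goal_dim, hid_dim
        self.locale = {}  # city name -> locale-specific pathway
        # The general pathway reads the observation plus the locale pathway's state.
        self.general = TanhRNNCell(obs_dim + hid_dim, hid_dim, self.rng)

    def add_city(self, city, transfer=False):
        if transfer:
            # Transfer: freeze everything that already exists so that only the
            # new city's locale-specific pathway would be learned.
            self.general.trainable = False
            for cell in self.locale.values():
                cell.trainable = False
        # The locale pathway reads the observation and the goal in that city.
        self.locale[city] = TanhRNNCell(self.obs_dim + self.goal_dim, self.hid_dim, self.rng)

    def initial_state(self):
        return [0.0] * self.hid_dim, [0.0] * self.hid_dim

    def step(self, city, obs, goal, state):
        h_locale, h_general = state
        h_locale = self.locale[city].step(list(obs) + list(goal), h_locale)
        h_general = self.general.step(list(obs) + h_locale, h_general)
        # h_general would feed the policy and value heads (omitted here).
        return h_general, (h_locale, h_general)

    def trainable_modules(self):
        names = [city for city, cell in self.locale.items() if cell.trainable]
        if self.general.trainable:
            names.append("general")
        return names


agent = DualPathwayAgent(obs_dim=4, goal_dim=3, hid_dim=5)
for city in ("london", "new_york"):
    agent.add_city(city)
agent.add_city("paris", transfer=True)
print(agent.trainable_modules())  # ['paris']
state = agent.initial_state()
features, state = agent.step("paris", [0.1, 0.0, -0.2, 0.3], [1.0, 0.0, 0.0], state)
print(len(features))  # 5
```

In this sketch, keeping the general pathway shared is what would let navigation skills carry over between cities, while each per-city pathway absorbs what is specific to one city's layout.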
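The task in the third contribution, reaching one goal after another from an arbitrary start, can be mimicked with a toy environment. This is a hypothetical stand-in, not the StreetView environment: the six-node graph, the 90-degree headings, the three actions, and the sparse reward of 1 per reached goal are all assumptions. The agent's state is a location plus an orientation, matching the green cone in Figure 1.

```python
import random

# Hypothetical toy graph: nodes stand in for panorama locations, and each node
# lists its neighbours by compass heading (0 = N, 90 = E, 180 = S, 270 = W).
GRAPH = {
    "a": {90: "b", 180: "d"},
    "b": {270: "a", 90: "c"},
    "c": {270: "b", 180: "f"},
    "d": {0: "a", 90: "e"},
    "e": {270: "d", 90: "f"},
    "f": {0: "c", 270: "e"},
}


class CourierEnv:
    """Start at a random node; each time the goal is reached, give a reward and draw a new goal."""

    def __init__(self, graph, seed=0):
        self.graph = graph
        self.rng = random.Random(seed)
        self.node = self.rng.choice(sorted(graph))  # arbitrary starting point
        self.heading = 0
        self.goal = self._new_goal()

    def _new_goal(self):
        # Never pick the node the agent is currently standing on.
        return self.rng.choice([n for n in sorted(self.graph) if n != self.node])

    def step(self, action):
        if action == "turn_left":
            self.heading = (self.heading - 90) % 360
        elif action == "turn_right":
            self.heading = (self.heading + 90) % 360
        elif action == "forward":
            # Move along the street we are facing; stay put if there is none.
            self.node = self.graph[self.node].get(self.heading, self.node)
        else:
            raise ValueError(f"unknown action: {action}")
        reward = 0.0
        if self.node == self.goal:
            reward = 1.0
            self.goal = self._new_goal()  # then on to another goal
        return (self.node, self.heading, self.goal), reward


env = CourierEnv(GRAPH, seed=0)
policy_rng = random.Random(1)
total = 0.0
for _ in range(200):
    _, r = env.step(policy_rng.choice(["turn_left", "turn_right", "forward"]))
    total += r
print("goals reached by a random walk:", total)
```

A random walk stands in for the learned policy here; in the paper's setting the actions would instead come from the goal-dependent recurrent agent.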