Learning The Difference That Makes A Difference With Counterfactually-Augmented Data

Presented by

Syed Saad Naseem

Introduction

This paper addresses the problem of building models for NLP tasks that are robust against spurious correlations in the data. The authors tackle this problem with a human-in-the-loop method: human annotators are hired to make minimal changes to a text so that it represents the opposite label. For example, if a text expresses a positive sentiment, the annotators edit it so that it expresses a negative sentiment while changing as little of the text as possible. The authors refer to this process as counterfactual augmentation. They apply the method to the IMDb sentiment dataset and to SNLI, and show that many models trained only on the original dataset perform poorly on the augmented dataset.

Background

What are spurious patterns in NLP, and why do they occur?

Current supervised machine learning systems try to learn the underlying features of the input data that associate the inputs with the corresponding labels. Take Twitter sentiment analysis as an example: there might be many negative tweets about Donald Trump. If we use those tweets as training data, ML systems tend to associate the word "Trump" with the label Negative. However, the word itself is completely neutral, so the association between the word "Trump" and the label Negative is spurious. One way to explain why this occurs is that association does not necessarily mean causation. For example, the color gold might be associated with success, but it does not cause success. Current ML systems might learn such undesired associations and then base their predictions on them.

Data Collection

The authors used Amazon’s Mechanical Turk, a crowdsourcing platform, to recruit editors and hired them to revise each document. For sentiment analysis, the annotators were directed to revise, for example, a negative movie review to make it positive, without making any gratuitous changes. For the NLI tasks, which are 3-class classification tasks, the annotators were asked to modify the premise while keeping the hypothesis intact, and vice versa.

After the data collection, a different set of workers was employed to verify whether the given label accurately described the relationship between each premise-hypothesis pair. Each pair was presented to 3 workers and was accepted only if all 3 workers agreed that the label was accurate. This entire process cost the authors about $10,778.
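The acceptance rule, as this summary describes it, can be sketched in a few lines. This is only an illustration: the function name `accept_unanimous`, the pair ids, and the votes are hypothetical and do not come from the paper or its data.

```python
def accept_unanimous(votes_by_pair, required_votes=3):
    """Return the ids of pairs that every one of the required workers approved.

    Assumption for this sketch: a pair with fewer than `required_votes`
    judgments is treated as not accepted.
    """
    return [
        pair_id
        for pair_id, votes in votes_by_pair.items()
        if len(votes) == required_votes and all(votes)
    ]


# Hypothetical verification results: True means the worker approved the label.
votes = {
    "pair-1": [True, True, True],
    "pair-2": [True, False, True],
    "pair-3": [True, True],
}

accepted = accept_unanimous(votes)
print(accepted)
```

Here only `pair-1` passes, since `pair-2` has one dissenting worker and `pair-3` has too few judgments.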

Example

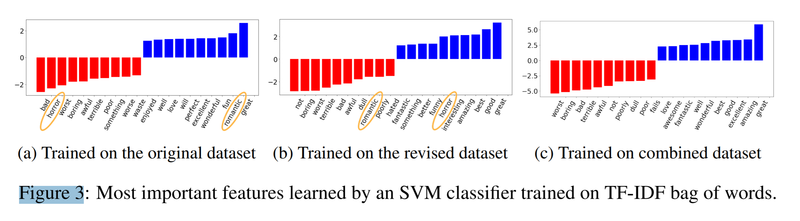

In the picture above, we can see an example of spurious correlation and how the method presented here addresses it. The picture shows the most important features learned by an SVM. As the left plot shows, when the model is trained only on the original data, the word "horror" is associated with the negative label and the word "romantic" is associated with the positive label. This is an example of spurious correlation, because we can certainly have both bad romantic movies and good horror movies. The middle plot shows the case where the model is trained only on the revised dataset. As expected, the situation is reversed: "horror" and "romantic" are associated with the positive and negative labels, respectively. However, the problem is resolved when the authors train the model on both the original and the revised datasets, because the words "horror" and "romantic" are then no longer among the most important features.
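The effect described above can be reproduced on a toy scale. The sketch below is not the authors' SVM experiment: it swaps in smoothed per-word log-odds (in the style of naive Bayes) as a stand-in for feature importance, and the four one-line "reviews" are invented for illustration. The function name `log_odds` is likewise an assumption.

```python
import math
from collections import Counter


def log_odds(docs, smoothing=1.0):
    """Map each word to log P(word | pos) - log P(word | neg), with additive smoothing.

    Positive values lean towards the positive label, negative values towards
    the negative label, and zero means the word carries no label signal.
    """
    counts = {"pos": Counter(), "neg": Counter()}
    for text, label in docs:
        counts[label].update(text.lower().split())
    vocab = set(counts["pos"]) | set(counts["neg"])
    totals = {
        label: sum(c.values()) + smoothing * len(vocab)
        for label, c in counts.items()
    }
    return {
        word: math.log((counts["pos"][word] + smoothing) / totals["pos"])
        - math.log((counts["neg"][word] + smoothing) / totals["neg"])
        for word in vocab
    }


# Invented toy data: genre words happen to co-occur with one label each.
original = [
    ("a wonderful romantic story", "pos"),
    ("a terrible horror film", "neg"),
]
# Counterfactual revisions: minimal edits that flip the label.
revised = [
    ("a terrible romantic story", "neg"),
    ("a wonderful horror film", "pos"),
]

scores_original = log_odds(original)
scores_combined = log_odds(original + revised)

for word in ("romantic", "horror", "wonderful", "terrible"):
    print(f"{word:10s} original={scores_original[word]:+.2f} "
          f"combined={scores_combined[word]:+.2f}")
```

On the original data alone, "romantic" and "horror" receive the same magnitude of weight as "wonderful" and "terrible". Once the revised examples are added, each genre word appears equally often under both labels, so its score drops to zero while the sentiment words keep their sign.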

Experiments

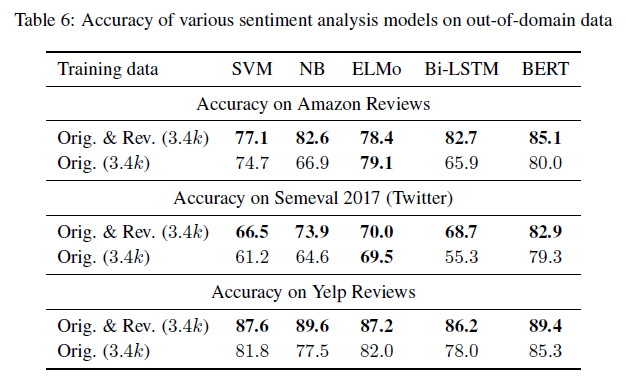

The authors carried out experiments on a total of 5 models: Support Vector Machines (SVMs), Naive Bayes (NB) classifiers, Bidirectional Long Short-Term Memory Networks, ELMo models with LSTM, and fine-tuned BERT models. Furthermore, they evaluated their models on Amazon reviews datasets aggregated over six genres, on a Twitter sentiment dataset, and on Yelp reviews released as part of a Yelp dataset challenge. They showed that in almost all cases, models trained on the counterfactually-augmented IMDb dataset perform better than models trained on comparable quantities of original data, as the table above shows.

Conclusion

The authors propose a new way to augment textual datasets for the task of sentiment analysis. This helps the learning methods generalize better by concentrating on learning the difference that makes a difference. I believe that the main contribution of the paper is the introduction of the idea of counterfactual datasets for sentiment analysis. The paper proposes an interesting approach to tackling NLP problems, shows intriguing experimental results, and presents an interesting dataset that may be useful for future research.