Learning The Difference That Makes A Difference With Counterfactually-Augmented Data

Presented by

Syed Saad Naseem

Introduction

This paper addresses the problem of building models for NLP tasks that are robust against spurious correlations in the data. The authors tackle this problem with a human-in-the-loop method in which hired human annotators modify each document so that it matches the opposite label. For example, if a text has a positive sentiment, the annotators change it so that it expresses a negative sentiment while making minimal changes to the text. They refer to this process as counterfactual augmentation. The authors apply this method to the IMDb sentiment dataset and to SNLI and show that many models trained only on the original dataset perform poorly on the augmented dataset, and vice versa. The idea behind the human-in-the-loop system for counterfactually manipulating documents is that by intervening only upon the factor of interest, the authors might disentangle the spurious and non-spurious associations, yielding classifiers that hold up better when spurious associations do not transport out of the domain.

Background

What are spurious patterns in NLP, and why do they occur?

Current supervised machine learning systems try to learn the underlying features of input data that associate the inputs with the corresponding labels. Take Twitter sentiment analysis as an example: there might be many negative tweets about Donald Trump. If we use those tweets as training data, ML systems tend to associate the word "Trump" with the label Negative, even though the word itself is completely neutral. The association between the word "Trump" and the label Negative is spurious. One way to explain why this occurs is that association does not necessarily mean causation. For example, the color gold might be associated with success, but it does not cause success. Current ML systems might learn such undesired associations and then make predictions based on them. This is typically caused by an inherent bias within the data, which the models learn and which then leads to biased predictions.

Data Collection

The authors used Amazon's Mechanical Turk, a crowdsourcing platform, to recruit editors, whom they hired to revise each document.

Sentiment Analysis

The dataset analyzed is the IMDb movie review dataset. The annotators were directed to revise the reviews to make them counterfactual, without making any gratuitous changes. Several types of changes were applied; two examples are listed below.

| Type of Change | Original Review | Modified Review |
|---|---|---|
| Change ratings | one of the worst ever scenes in a sports movie. 3 stars out of 10. | one of the wildest ever scenes in a sports movie. 8 stars out of 10. |
| Suggest sarcasm | thoroughly captivating thriller-drama, taking a deep and realistic view. | thoroughly mind numbing “thriller-drama”, taking a “deep” and “realistic” (who are they kidding?) view. |

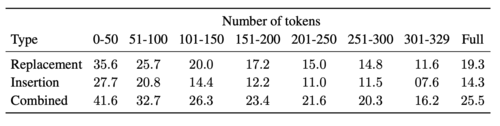

A deeper understanding of what actually makes a review positive or negative can be obtained by comparing the counterfactually-revised reviews with their corresponding originals. The indices corresponding to replacements and insertions were marked, and the edits to each original review were represented by a binary vector. The Jaccard similarity between the two reviews was then computed, and it was found to be negatively correlated with the length of the review (seen above).
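The comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: it assumes lowercase whitespace tokenization, computes Jaccard similarity over token sets, and uses the standard library's `difflib.SequenceMatcher` to mark edited positions in the original review. The function names and the handling of pure insertions (marking the token at the insertion point) are assumptions made for this sketch.

```python
from difflib import SequenceMatcher


def tokenize(text):
    # Assumption: lowercase whitespace tokenization (the paper may differ).
    return text.lower().split()


def jaccard_similarity(original, revised):
    # Size of the token-set intersection divided by the size of the union.
    a, b = set(tokenize(original)), set(tokenize(revised))
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def edit_mask(original, revised):
    # Binary vector over the original tokens: 1 where a token was edited.
    orig_tokens = tokenize(original)
    rev_tokens = tokenize(revised)
    mask = [0] * len(orig_tokens)
    matcher = SequenceMatcher(a=orig_tokens, b=rev_tokens, autojunk=False)
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        if tag in ("replace", "delete"):
            for i in range(i1, i2):
                mask[i] = 1
        elif tag == "insert" and orig_tokens:
            # Assumption: attribute an insertion to the token at that position.
            mask[min(i1, len(orig_tokens) - 1)] = 1
    return mask


# The "Change ratings" example from the table above.
original = "one of the worst ever scenes in a sports movie. 3 stars out of 10."
revised = "one of the wildest ever scenes in a sports movie. 8 stars out of 10."

print(jaccard_similarity(original, revised))  # 0.75
print(edit_mask(original, revised))
```

Here only "worst" and "3" are marked as edited, which matches the intent of a minimal revision: the label flips while most of the text is left untouched.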

Natural Language Inference

Natural language inference (NLI) is a three-class classification task in which the inputs are a premise and a hypothesis. Given the inputs, the model predicts a label that describes the relationship between the facts stated in each sentence. The labels are entailment, contradiction, and neutral. The annotators were asked to modify the premise while keeping the hypothesis intact, and vice versa. Some examples of modified hypotheses are given below, with labels in parentheses.

| Premise | Original Hypothesis | Modified Hypothesis |
|---|---|---|
| A young dark-haired woman crouches on the banks of a river while washing dishes. | A woman washes dishes in the river while camping. (Neutral) | A woman washes dishes in the river. (Entailment) |
| Students are inside of a lecture hall. | Students are indoors. (Entailment) | Students are on the soccer field. (Contradiction) |
| An older man with glasses raises his eyebrows in surprise. | The man has no glasses. (Contradiction) | The man wears bifocals. (Neutral) |

After the data collection, a different set of workers was employed to verify whether the given label accurately described the relationship between each premise-hypothesis pair. Each pair was presented to 3 workers and was accepted only if all 3 agreed that the label was accurate. The entire process cost the authors about $10778.
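The unanimous-approval rule can be expressed as a simple filter. The sketch below is illustrative only: the `RevisedPair` record, its field names, and the approval votes are hypothetical, and only the premises, hypotheses, and labels are taken from the table above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RevisedPair:
    # Hypothetical record layout, chosen for this sketch.
    premise: str
    hypothesis: str
    label: str
    approvals: List[bool]  # one vote per verifying worker (made-up values below)


def is_accepted(pair, workers_required=3):
    # A pair is kept only if every one of the required workers approved it.
    return len(pair.approvals) == workers_required and all(pair.approvals)


pairs = [
    RevisedPair(
        "Students are inside of a lecture hall",
        "Students are on the soccer field.",
        "contradiction",
        [True, True, True],  # hypothetical votes: unanimous
    ),
    RevisedPair(
        "An older man with glasses raises his eyebrows in surprise.",
        "The man wears bifocals.",
        "neutral",
        [True, False, True],  # hypothetical votes: one rejection
    ),
]

accepted = [p for p in pairs if is_accepted(p)]
print(len(accepted))  # 1
```

Requiring all three approvals trades dataset size for label quality: a single dissenting worker is enough to drop a pair.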

Example

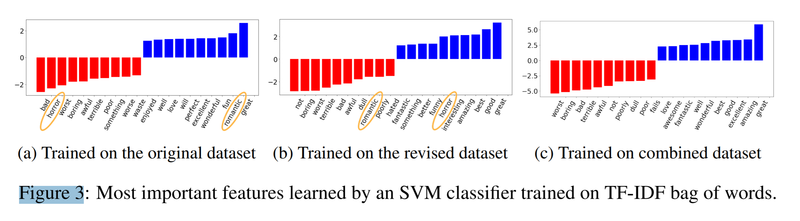

The picture above shows an example of spurious correlation and how the method presented here can address it. It shows the most important features learned by an SVM. In the left plot, where the model is trained only on the original data, the word "horror" is associated with the negative label and the word "romantic" is associated with the positive label. This is an example of spurious correlation, because there can certainly be both bad romantic movies and good horror movies. The middle plot shows the case where the model is trained only on the revised dataset. As expected, the situation is reversed: "horror" and "romantic" are associated with the positive and negative labels, respectively. The problem is resolved in the right plot, where the authors trained the model on both the original and the revised datasets. The words "horror" and "romantic" are no longer among the most important features, which is the desired outcome.
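The effect can be reproduced on a toy scale. The sketch below does not use an SVM; it swaps in a much simpler smoothed log-odds score per word (positive document count over negative document count) so that it runs with the standard library alone. The four miniature reviews are invented for illustration and are not drawn from the dataset.

```python
import math
from collections import Counter


def word_label_scores(docs, smoothing=1.0):
    # Smoothed log-odds per word: > 0 leans positive, < 0 leans negative.
    pos, neg = Counter(), Counter()
    for text, label in docs:
        target = pos if label == "positive" else neg
        target.update(set(text.lower().split()))  # count each word once per doc
    vocab = set(pos) | set(neg)
    return {
        w: math.log((pos[w] + smoothing) / (neg[w] + smoothing)) for w in vocab
    }


# Invented toy data: genre words correlate with the label in the original set.
original_docs = [
    ("a great romantic movie", "positive"),
    ("a terrible horror movie", "negative"),
]
# Counterfactual revisions: only the sentiment-bearing word is changed.
revised_docs = [
    ("a terrible romantic movie", "negative"),
    ("a great horror movie", "positive"),
]

scores_original = word_label_scores(original_docs)
scores_revised = word_label_scores(revised_docs)
scores_combined = word_label_scores(original_docs + revised_docs)

for name, scores in [
    ("original", scores_original),
    ("revised", scores_revised),
    ("combined", scores_combined),
]:
    print(name, {w: round(scores[w], 2) for w in ("horror", "romantic", "great", "terrible")})
```

On the original data alone "horror" leans negative and "romantic" leans positive; on the revised data alone the signs flip; on the combined data both genre words score exactly zero while "great" and "terrible" keep their signs, mirroring the pattern in the three plots.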

Experiments

Sentiment Analysis

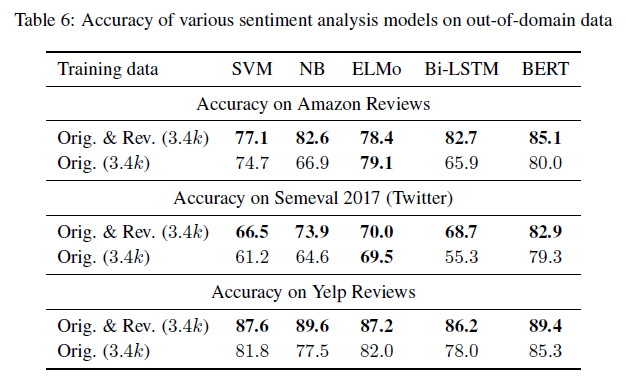

The authors carried out experiments on a total of 5 models: Support Vector Machines (SVMs), Naive Bayes (NB) classifiers, Bidirectional Long Short-Term Memory networks (Bi-LSTMs), ELMo models with LSTM, and fine-tuned BERT models. Furthermore, they evaluated their models on Amazon reviews datasets aggregated over six genres, on a Twitter sentiment dataset, and on Yelp reviews released as part of a Yelp dataset challenge. They showed that in almost all cases, models trained on the counterfactually-augmented IMDb dataset perform better than models trained on comparable quantities of original data, as shown in the table above.

Natural Language Inference

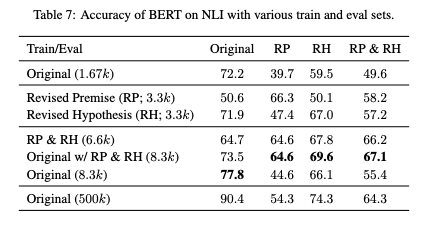

To assess the BERT model on the SNLI task, the authors used different combinations of training and evaluation sets. BERT fine-tuned on the original data (1.67k) performs well on the original evaluation set; however, its accuracy drops from 72.2% to 39.7% when evaluated on the RP (Revised Premise) set. The same holds even with the full original set (500k): the accuracy of the model drops significantly on the RP, RH (Revised Hypothesis), and RP&RH datasets. Table 7 shows that the BERT model fine-tuned on a combination of RP and RH performs consistently on all datasets.

Source Code

The official code is available at https://github.com/acmi-lab/counterfactually-augmented-data .

Conclusion

The authors propose a new way to augment textual datasets for the task of sentiment analysis. This helps learning methods generalize better by concentrating on learning the difference that makes a difference. I believe that the main contribution of the paper is the introduction of the idea of counterfactual datasets for sentiment analysis. The paper proposes an interesting approach to tackling NLP problems, shows intriguing experimental results, and presents an interesting dataset that may be useful for future research. Indeed, this work has been cited in several interesting works examining gender bias in NLP [1], making AI programs more ethical [2], and generating humorous text [3].

References

[1] Lu, K., Mardziel, P., Wu, F., Amancharla, P., & Datta, A. (2018). Gender Bias in Neural Natural Language Processing.

[2] Hendrycks, D., Burns, C., Basart, S., Critch, A., Li, J., Song, D., & Steinhardt, J. (2020). Aligning AI With Shared Human Values. 1–22.

[3] Weller, O., Fulda, N., & Seppi, K. (2020). Can Humor Prediction Datasets be used for Humor Generation? Humorous Headline Generation via Style Transfer. 186–191.