Hierarchical Question-Image Co-Attention for Visual Question Answering


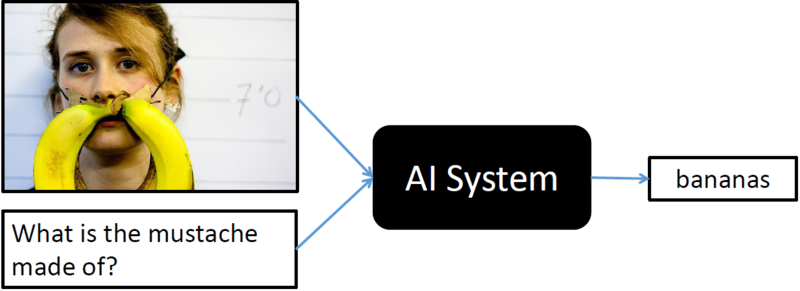

[[File:vqa-overview.png|thumb|800px|center|Figure 1: A VQA system, in which a machine learning algorithm takes an image and a text-based question about the image as input and outputs the answer to the question in natural language (ref: http://www.visualqa.org/static/img/challenge.png)]]


Revision as of 00:07, 21 November 2017

Introduction

Visual Question Answering (VQA) is a recent problem at the intersection of computer vision and natural language processing that has attracted considerable interest from the deep learning, computer vision, and natural language processing communities. In VQA, an algorithm must answer text-based questions about images in natural language, as illustrated in Figure 1.

Recently, visual-attention-based models have been explored for VQA, where the attention mechanism typically produces a spatial map highlighting the image regions relevant to answering the question. However, to answer a visual question correctly, the machine needs not only to "attend" to the relevant regions of the image; it is equally important to "attend" to the relevant parts of the question. In this paper, the authors develop a novel co-attention technique that combines "where to look" (visual attention) with "what words to listen to" (question attention). The co-attention mechanism allows the model to reason jointly about the image and the question, thereby improving on the state-of-the-art results.
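To make the idea of attending to image regions and question words at the same time concrete, the following is a minimal NumPy sketch. It is a simplified illustration, not the authors' exact formulation: the function name `co_attention`, the weight matrix `W`, the feature shapes, and the use of a max over the word-region affinity scores are all assumptions chosen for brevity.

```python
import numpy as np

def softmax(x):
    # Numerically stable softmax over a 1-D vector.
    e = np.exp(x - np.max(x))
    return e / e.sum()

def co_attention(Q, V, W):
    """Simplified co-attention sketch (illustrative assumption, not the paper's model).

    Q: (T, d) question word features
    V: (N, d) image region features
    W: (d, d) hypothetical learned weight matrix
    """
    # Affinity between every question word and every image region.
    C = np.tanh(Q @ W @ V.T)          # shape (T, N)
    # "What words to listen to": score each word by its best-matching region.
    a_q = softmax(C.max(axis=1))      # shape (T,)
    # "Where to look": score each region by its best-matching word.
    a_v = softmax(C.max(axis=0))      # shape (N,)
    # Attended summaries of the question and the image.
    q_hat = a_q @ Q                   # shape (d,)
    v_hat = a_v @ V                   # shape (d,)
    return a_q, a_v, q_hat, v_hat

# Random placeholder features: 5 words, 9 image regions, 4 dimensions.
rng = np.random.default_rng(0)
T, N, d = 5, 9, 4
Q = rng.standard_normal((T, d))
V = rng.standard_normal((N, d))
W = rng.standard_normal((d, d))
a_q, a_v, q_hat, v_hat = co_attention(Q, V, W)
```

Here `a_v` plays the role of the spatial attention map over image regions and `a_q` is the corresponding attention over question words. Both are computed from the same affinity matrix, which is what makes the attention joint rather than image-only.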