Hierarchical Question-Image Co-Attention for Visual Question Answering: Difference between revisions

= Introduction =

Visual Question Answering (VQA) is a recent problem at the intersection of computer vision and natural language processing that has attracted considerable interest from the deep learning, computer vision, and natural language processing communities. In VQA, an algorithm must answer text-based questions about images in natural language, as illustrated in Figure <xr="fig:vqa-overview"/>.

<figure id="fig:vqa-overview">

[[File:vqa-overview.png|thumb|800px|center|Figure 1: A VQA system. The AI system takes an image and a text-based question about that image as input and outputs a natural-language answer.]]

</figure>

Revision as of 00:01, 21 November 2017

Introduction

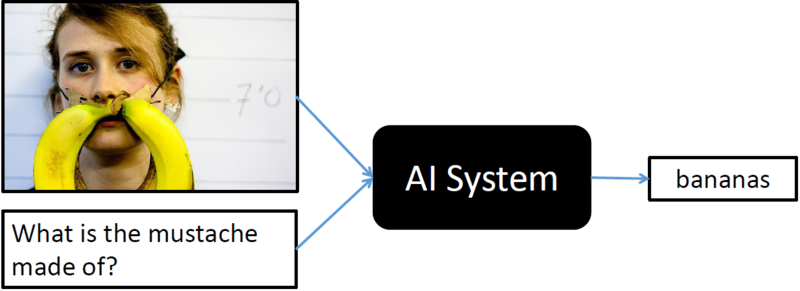

Visual Question Answering (VQA) is a recent problem in computer vision and natural language processing that has garnered a large amount of interest from the deep learning, computer vision, and natural language processing communities. In VQA, an algorithm needs to answer text-based questions about images in natural language as illustrated in Figure <xr="fig:vqa-overview"/>.

<figure id="fig:vqa-overview">

.

</figure>