Efficient kNN Classification with Different Numbers of Nearest Neighbors

Presented by

Cooper Brooke, Daniel Fagan, Maya Perelman

Introduction

Traditional model-based approaches to classification problems require training a model on training observations before predicting test samples. In contrast, the model-free k-Nearest Neighbors (kNN) method classifies observations with a majority rule approach, labeling each test sample based on its k closest training observations (neighbors). This method has become very popular due to its relatively robust performance given how simple it is to implement. It is robust because the predicted value depends only on the labels of the closest data points and is therefore not significantly affected by outliers.

There are two main approaches to kNN classification with respect to the choice of k. The first is to use a fixed k value to classify all test samples, while the second is to use varied k values, either a different k for each test sample or a different k for each class. The former, while easy to implement, has been shown to be impractical in real-world machine learning applications. It is more reasonable and practical to select a separate value of k for each test sample to allow for a better fit of the data. Therefore, it is of immense interest to develop an efficient way to determine the optimal k value for each test sample. The authors of this paper presented the kTree and k*Tree methods to address this research question.

Previous Work and Motivation

The problem of finding an optimal fixed k value for all test samples is well-studied. Lall and Sharma [9] incorporated a certainty factor measure to solve for an optimal fixed k. This resulted in the conclusion that k should be [math]\displaystyle{ \sqrt{n} }[/math] (where n is the number of training samples) when n > 100. The method explored by Song et al. [2] involves selecting a subset of the most informative samples from neighbourhoods. Vincent and Bengio [3] took the unique approach of designing a k-local hyperplane distance to solve for k. Premachandran and Kakarala [4] proposed selecting a robust k using the consensus of multiple rounds of kNNs. These fixed-k methods are valuable; however, they are impractical for data mining and machine learning applications.

Finding an efficient approach to assigning varied k values has also been previously studied. Tuning approaches such as the ones taken by Zhu et al. and Sahigara et al. have been popular. Zhu et al. [5] determined that optimal k values should be chosen using cross-validation, while Sahigara et al. [6] proposed using Monte Carlo validation to select varied k parameters. Other learning approaches, such as those taken by Zheng et al. and Góra and Wojna, also show promise. Zheng et al. [7] applied a reconstruction framework to learn suitable k values. Góra and Wojna [8] proposed using rule induction and instance-based learning to learn optimal k values for each test sample. While all these methods are valid, they are time-consuming, either because they learn an optimal k value for each test sample or because they scan all training samples to find nearest neighbors. It is challenging to address all of these issues of the kNN method simultaneously: learning optimal k values for different samples, reducing time cost, and improving performance.

Due to the previously mentioned drawbacks of fixed-k and current varied-k kNN classification, the paper’s authors sought to design a new approach to solve for different k values. The kTree and k*Tree approaches seek to calculate optimal values of k while avoiding computationally costly steps such as cross-validation.

A secondary motivation of this research was to ensure that the kTree method would perform better than kNN using fixed values of k given that running costs would be similar in this instance.

Approach

kTree Classification

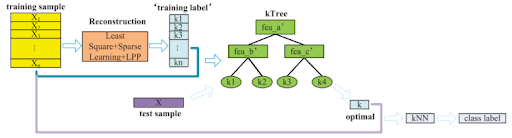

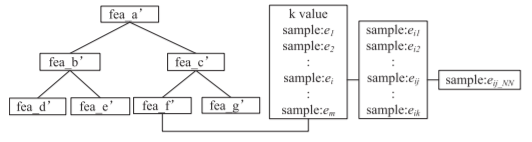

The proposed kTree method is illustrated by the following flow chart:

Reconstruction

The first step is to use the training samples to reconstruct themselves. The goal of this is to find the matrix of correlations between the training samples themselves, [math]\displaystyle{ \textbf{W} }[/math], such that the distance between an individual training sample and the corresponding correlation vector multiplied by the entire training set is minimized. With [math]\displaystyle{ \mathbf{X}\in \mathbb{R}^{d\times n} = [x_1,...,x_n] }[/math] representing the training set, this least squares loss function can be written as:

$$\begin{aligned} \mathop{min}_{\textbf{W}} \sum_{i=1}^n ||Xw_i - x_i||^2 \end{aligned}$$

In addition, a regularization term multiplied by a tuning parameter is added to this loss. The eventual objective is a sparse result, so that the number of training samples that will be depended on by the test samples is minimized. As a first step, consider an [math]\displaystyle{ l_2 }[/math] penalty with tuning parameter [math]\displaystyle{ \rho }[/math]:

$$\begin{aligned} \mathop{min}_{\textbf{W}} \sum_{i=1}^n ||Xw_i - x_i||^2 + \rho||\textbf{W}||^2_2 \end{aligned}$$

This is called ridge regression, and it has a closed-form solution: $$W = (X^TX+\rho I)^{-1}X^TX$$
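The closed-form expression above can be checked numerically. The following is a minimal sketch, assuming NumPy and a small random toy matrix; the function name `ridge_reconstruction` and the value of `rho` are illustrative choices, not taken from the paper.

```python
import numpy as np

def ridge_reconstruction(X, rho):
    """Closed-form W = (X^T X + rho I)^{-1} X^T X for X of shape (d, n)."""
    n = X.shape[1]
    gram = X.T @ X  # (n, n) Gram matrix of the training samples
    # Solve (gram + rho I) W = gram rather than forming an explicit inverse.
    return np.linalg.solve(gram + rho * np.eye(n), gram)

rng = np.random.default_rng(0)
X = rng.normal(size=(3, 5))  # toy data: d = 3 features, n = 5 samples
rho = 0.1                    # illustrative tuning parameter
W = ridge_reconstruction(X, rho)

# At the minimizer the gradient X^T (X W - X) + rho W vanishes.
gradient = X.T @ (X @ W - X) + rho * W
```

On such data the entries of `W` are typically all non-zero, which is the lack of sparsity that motivates the next step.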

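However, this objective function does not provide a sparse result. Therefore, a sparse objective function is employed instead, using an [math]\displaystyle{ l_1 }[/math] regularization term with tuning parameter [math]\displaystyle{ \rho_1 }[/math] and a non-negativity constraint:

$$\begin{aligned} \mathop{min}_{\textbf{W}} \sum_{i=1}^n ||Xw_i - x_i||^2 + \rho_1||\textbf{W}||_1, \quad \textbf{W} \geq 0 \end{aligned}$$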

The least square loss function is then further modified to account for samples that have similar values for certain features yielding similar results. It is penalized with the function:

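$$\frac{1}{2} \sum^{d}_{i,j} s_{ij}||x^iW-x^jW||^2_2$$

where [math]\displaystyle{ x^i }[/math] is the ith row of [math]\displaystyle{ \mathbf{X} }[/math] (the ith feature vector) and [math]\displaystyle{ s_{ij} }[/math] denotes the relation between the ith and jth feature vectors, calculated with a radial basis function kernel. After some transformations, this second regularization term, which has tuning parameter [math]\displaystyle{ \rho_2 }[/math], becomes: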

$$\begin{aligned} R(W) = Tr(\textbf{W}^T \textbf{X}^T \textbf{LXW}) \end{aligned}$$

where [math]\displaystyle{ \mathbf{L} }[/math] is a Laplacian matrix that indicates the relationship between features. The Laplacian matrix, also called the graph Laplacian, is a matrix representation of a graph.
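The step from the pairwise penalty to the trace form can be verified numerically. The sketch below assumes the standard graph Laplacian L = D - S, where D is the diagonal matrix of row sums of S, and an RBF kernel of the form exp(-||.||^2 / (2 sigma^2)); the bandwidth `sigma` and the helper name `feature_laplacian` are assumptions made for illustration.

```python
import numpy as np

def feature_laplacian(X, sigma=1.0):
    """Return (S, L) over the d feature vectors, i.e. the rows of X (d, n)."""
    sq_norms = np.sum(X ** 2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * (X @ X.T)
    sq_dists = np.maximum(sq_dists, 0.0)        # guard against round-off
    S = np.exp(-sq_dists / (2.0 * sigma ** 2))  # RBF similarity between features
    D = np.diag(S.sum(axis=1))                  # degree matrix
    return S, D - S

rng = np.random.default_rng(0)
X = rng.normal(size=(3, 5))   # toy data: d = 3, n = 5
W = rng.normal(size=(5, 5))   # arbitrary W, only used to test the identity
S, L = feature_laplacian(X)

Z = X @ W                     # row i of Z is x^i W
d = X.shape[0]
pairwise = 0.5 * sum(
    S[i, j] * np.sum((Z[i] - Z[j]) ** 2) for i in range(d) for j in range(d)
)
trace_form = np.trace(W.T @ X.T @ L @ X @ W)
```

Both quantities agree up to floating-point error, because S is symmetric.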

This gives a final objective function of:

$$\begin{aligned} \mathop{min}_{\textbf{W}} \sum_{i=1}^n ||Xw_i - x_i||^2 + \rho_1||\textbf{W}||_1 + \rho_2R(\textbf{W}) \end{aligned}$$

Since this is a convex function, an iterative method can be used to find the optimal solution [math]\displaystyle{ \mathbf{W^*} }[/math].
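The summary does not say which iterative method the authors use. As one possible illustration, the sketch below applies a projected proximal-gradient scheme to the objective with the non-negativity constraint W >= 0. The solver choice, the step size, the iteration count, the hypothetical 3-feature chain-graph Laplacian, and the values of `rho1` and `rho2` are all assumptions, and the sketch does not treat the diagonal of W in any special way.

```python
import numpy as np

def objective(X, L, W, rho1, rho2):
    """Least squares loss + rho1 * l1 norm + rho2 * Tr(W^T X^T L X W)."""
    fit = np.sum((X @ W - X) ** 2)
    return fit + rho1 * np.abs(W).sum() + rho2 * np.trace(W.T @ X.T @ L @ X @ W)

def sparse_reconstruction(X, L, rho1, rho2, n_iter=500):
    """Proximal gradient with a non-negativity projection (illustrative solver)."""
    d, n = X.shape
    G = X.T @ X
    M = X.T @ (np.eye(d) + rho2 * L) @ X       # symmetric positive semidefinite
    # Step 1 / Lipschitz constant of the smooth part's gradient 2 (M W - G).
    step = 1.0 / (2.0 * np.linalg.norm(M, 2) + 1e-12)
    W = np.zeros((n, n))
    for _ in range(n_iter):
        grad = 2.0 * (M @ W - G)
        # With W >= 0 the l1 term shifts by step * rho1; then clip at zero.
        W = np.maximum(W - step * (grad + rho1), 0.0)
    return W

rng = np.random.default_rng(0)
X = rng.normal(size=(3, 5))                    # toy data: d = 3, n = 5
# Hypothetical Laplacian of a chain graph over the 3 features.
L = np.array([[1.0, -1.0, 0.0], [-1.0, 2.0, -1.0], [0.0, -1.0, 1.0]])
rho1, rho2 = 0.1, 0.1                          # illustrative tuning parameters
W = sparse_reconstruction(X, L, rho1, rho2)
```

With this step size each iteration does not increase the objective, so the result is never worse than the all-zero starting point.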

Calculate k for training set

Each element of [math]\displaystyle{ \textbf{W*} }[/math] represents the correlation between a pair of training samples. For the ith training sample, the relevant entries are those of its reconstruction vector [math]\displaystyle{ w_i }[/math], the ith column of [math]\displaystyle{ \textbf{W*} }[/math]. If the entry associated with the jth training sample is 0, the jth training sample has no effect on the ith training sample and should not be used in its prediction. Consequently, the non-zero entries of [math]\displaystyle{ w_i }[/math] identify the training samples that are useful in predicting the ith training sample, and the number of these non-zero elements is taken as the optimal k value for that sample.

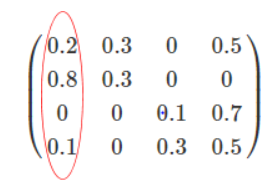

For example, if there was a 4x4 training set where [math]\displaystyle{ \textbf{W*} }[/math] had the form:

The optimal k value for training sample 1 would be 2, since the correlations between training sample 1 and both training samples 2 and 4 are non-zero.
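Counting the non-zero entries can be sketched in a few lines. The 4x4 matrix below is hypothetical and is not the matrix from the figure. The code assumes that column i holds the weights for sample i, following the reconstruction of x_i by Xw_i, and it uses a small tolerance `tol` because an iterative solver may return tiny values rather than exact zeros.

```python
def optimal_k_per_sample(W, tol=1e-8):
    """For each column i of W, count the entries whose magnitude exceeds tol."""
    n = len(W)
    return [sum(1 for j in range(n) if abs(W[j][i]) > tol) for i in range(n)]

# Hypothetical W*: column 1 (index 0) is non-zero in rows 2 and 4.
W_star = [
    [0.0, 0.3, 0.0, 0.1],
    [0.6, 0.0, 0.0, 0.0],
    [0.0, 0.2, 0.0, 0.5],
    [0.4, 0.5, 0.9, 0.0],
]
k_values = optimal_k_per_sample(W_star)
```

Here training sample 1 depends on samples 2 and 4, so its k is 2, matching the reasoning in the example above.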

Train a Decision Tree using k as the label

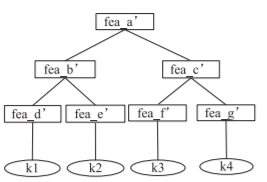

A decision tree is trained using the traditional ID3 method: (1) calculate the entropy of every feature in the data set, (2) split the data set based on the feature whose entropy is minimized after splitting (in the example below, this was feature a'), (3) make a decision tree node based on that feature, (4) repeat steps (1)-(3) recursively on the formed subsets using the remaining features. The only change is that the label is replaced by the previously learned optimal k value for each sample. More specifically, whereas in a normal decision tree the target data are the class labels themselves, in the kTree method the target data are the optimal k values that were solved for in the previous step. As a result, the decision tree formed by the kTree method has the following form:
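Steps (1) and (2) can be sketched for a single split. The sketch assumes categorical features stored in dictionaries and uses a hypothetical toy data set whose target is the learned optimal k; continuous features would first need to be discretized, which is not shown.

```python
from collections import Counter
from math import log2

def entropy(labels):
    """Shannon entropy (in bits) of a list of labels."""
    total = len(labels)
    return -sum((c / total) * log2(c / total) for c in Counter(labels).values())

def split_entropy(rows, labels, feature):
    """Weighted entropy of the labels after splitting on one categorical feature."""
    groups = {}
    for row, label in zip(rows, labels):
        groups.setdefault(row[feature], []).append(label)
    total = len(labels)
    return sum(len(g) / total * entropy(g) for g in groups.values())

def best_feature(rows, labels, features):
    """Pick the feature whose split leaves the lowest weighted entropy."""
    return min(features, key=lambda f: split_entropy(rows, labels, f))

# Hypothetical data: two categorical features, target = optimal k per sample.
rows = [{"a": 0, "b": 0}, {"a": 0, "b": 1}, {"a": 1, "b": 0}, {"a": 1, "b": 1}]
k_labels = [2, 2, 5, 5]
chosen = best_feature(rows, k_labels, ["a", "b"])
```

Feature `a` separates the two k values perfectly, so it is selected for the first node; applying the same selection recursively to each subset builds the rest of the tree.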

Making Predictions for Test Data

The optimal k values for each testing sample are easily obtainable using the kTree solved for in the previous step. The only remaining step is to predict the labels of the testing samples by finding the majority class of the optimal k nearest neighbors across all of the training data.
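The prediction step can be sketched as follows. The function `toy_predict_k` is a hypothetical stand-in for the trained kTree, and Euclidean distance is assumed; neither detail is specified in this summary.

```python
from collections import Counter
from math import dist

def knn_predict(train_X, train_y, x, k):
    """Majority label among the k training samples closest to x."""
    order = sorted(range(len(train_X)), key=lambda i: dist(train_X[i], x))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]

def ktree_predict(train_X, train_y, x, predict_k):
    """Look up the sample-specific k, then run kNN over all training data."""
    return knn_predict(train_X, train_y, x, predict_k(x))

train_X = [(0.0, 0.0), (0.1, 0.0), (1.0, 1.0), (1.1, 1.0), (0.9, 1.2)]
train_y = ["A", "A", "B", "B", "B"]

def toy_predict_k(x):
    # Hypothetical stand-in for the kTree: a single threshold on feature 0.
    return 1 if x[0] < 0.5 else 3

label_near_origin = ktree_predict(train_X, train_y, (0.05, 0.1), toy_predict_k)
label_near_ones = ktree_predict(train_X, train_y, (1.0, 1.1), toy_predict_k)
```

Note that the search in `knn_predict` still scans every training sample, which is the cost the k*Tree method is designed to reduce.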

k*Tree Classification

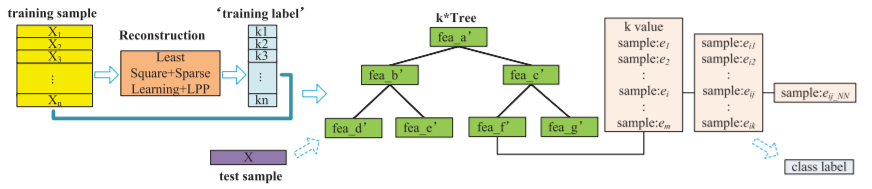

The proposed k*Tree method is illustrated by the following flow chart:

This approach is very similar to the kTree method. The k*Tree method aims to sacrifice very little predictive power in return for a substantial decrease in the cost of running the traditional kNN on the testing data once the optimal k values have been found.

While all previous steps are exactly the same, the difference comes from additional data stored in the leaf nodes. The k*Tree method stores not only the optimal k value but also the following information:

- The training samples that have the same optimal k

- The k nearest neighbours of the previously identified training samples

- The nearest neighbor of each of the previously identified k nearest neighbours

The data stored in each node is summarized in the following figure:

When testing, the constructed k*Tree is searched for the test sample's optimal k value as well as the nearest neighbours stored in the corresponding leaf node. The method then selects the test sample's nearest neighbours from this subset of training samples and assigns the test sample the majority label of these nearest neighbours.

In the kTree method, predictions were made based on all of the training data, whereas in the k*Tree method, predicting the test labels will only be done using the samples stored in the applicable node of the tree.
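The leaf contents and the restricted search can be sketched as below. The class `KStarLeaf` and the helpers `build_leaf` and `leaf_predict` are hypothetical names, Euclidean distance is assumed, and the leaf is built directly from a given list of member samples rather than from a trained tree.

```python
from collections import Counter
from dataclasses import dataclass
from math import dist

@dataclass
class KStarLeaf:
    k: int            # optimal k shared by the samples in this leaf
    sample_ids: set   # indices of all training samples stored in the leaf

def build_leaf(train_X, k, members):
    """Store the members, their k nearest neighbours, and those neighbours' 1-NN."""
    def nearest(i, m):
        others = [j for j in range(len(train_X)) if j != i]
        return sorted(others, key=lambda j: dist(train_X[i], train_X[j]))[:m]
    neighbours = {j for i in members for j in nearest(i, k)}
    second_level = {j for i in neighbours for j in nearest(i, 1)}
    return KStarLeaf(k=k, sample_ids=set(members) | neighbours | second_level)

def leaf_predict(leaf, train_X, train_y, x):
    """kNN restricted to the training samples stored in the leaf."""
    ids = sorted(leaf.sample_ids, key=lambda i: dist(train_X[i], x))[:leaf.k]
    return Counter(train_y[i] for i in ids).most_common(1)[0][0]

train_X = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
train_y = ["A", "A", "A", "B", "B", "B"]
leaf = build_leaf(train_X, k=2, members=[0])
label = leaf_predict(leaf, train_X, train_y, (0.05, 0.05))
```

In this toy case the leaf holds three of the six training samples, so the test-time search touches half as many points as a scan over the full training set.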

Experiments

In order to assess the performance of the proposed methods against existing methods, a number of experiments were performed to measure classification accuracy and run time. The experiments were run on twenty public datasets from the UCI Machine Learning Repository, which contained a mix of data types varying in size, dimensionality, number of classes, and degree of class imbalance. Ten-fold cross-validation was used to measure classification accuracy, and the proposed methods were compared against the following methods:

- k-Nearest Neighbor: The classical kNN approach with k set to k=1,5,10,20 and square root of the sample size [9]; the best result was reported.

- kNN-Based Applicability Domain Approach (AD-kNN) [11]

- kNN Method Based on Sparse Learning (S-kNN) [10]

- kNN Based on Graph Sparse Reconstruction (GS-kNN) [7]

- Filtered Attribute Subspace-based Bagging with Injected Randomness (FASBIR) [12], [13]

- Landmark-based Spectral Clustering kNN (LC-kNN) [14]
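The fixed-k baseline protocol (try several k values under ten-fold cross-validation and report the best) can be sketched as follows. The interleaved fold assignment, the rounding of the square root with `isqrt`, Euclidean distance, and the toy data are assumptions made for illustration; the summary does not describe these details.

```python
from collections import Counter
from math import dist, isqrt

def knn_predict(train_X, train_y, x, k):
    """Majority label among the k training samples closest to x."""
    order = sorted(range(len(train_X)), key=lambda i: dist(train_X[i], x))
    return Counter(train_y[i] for i in order[:k]).most_common(1)[0][0]

def cv_accuracy(X, y, k, n_folds=10):
    """Cross-validated accuracy; fold f holds samples f, f + n_folds, ..."""
    n = len(X)
    correct = 0
    for fold in range(n_folds):
        test_idx = set(range(fold, n, n_folds))
        train_idx = [i for i in range(n) if i not in test_idx]
        tr_X = [X[i] for i in train_idx]
        tr_y = [y[i] for i in train_idx]
        for i in test_idx:
            correct += int(knn_predict(tr_X, tr_y, X[i], k) == y[i])
    return correct / n

def best_fixed_k(X, y, n_folds=10):
    """Evaluate k in {1, 5, 10, 20, sqrt(n)} and return the best one."""
    candidates = sorted({1, 5, 10, 20, max(1, isqrt(len(X)))})
    scores = {k: cv_accuracy(X, y, k, n_folds) for k in candidates}
    return max(scores, key=scores.get), scores

# Toy data: two well-separated clusters of 20 points each.
X = [(0.01 * i, 0.0) for i in range(20)] + [(5.0 + 0.01 * i, 0.0) for i in range(20)]
y = ["A"] * 20 + ["B"] * 20
best_k, scores = best_fixed_k(X, y)
```

On data this cleanly separated every candidate k classifies all samples correctly; real datasets are where the choice of k starts to matter.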

The experimental results were then assessed based on classification tasks that focused on different sample sizes, and tasks that focused on different numbers of features.

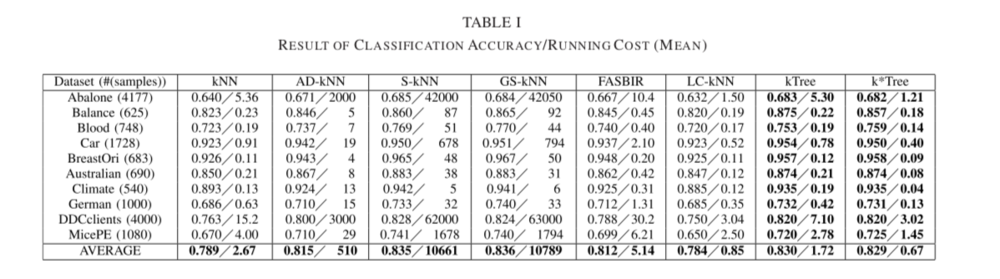

A. Experimental Results on Different Sample Sizes

The running cost and (cross-validation) classification accuracy based on experiments on ten UCI datasets can be seen in Table I below.

The following key results are noted:

- Regarding classification accuracy, the proposed methods (kTree and k*Tree) outperformed kNN, AD-kNN, FASBIR, and LC-kNN on all datasets by 1.5%-4.5%, but showed no notable improvement over GS-kNN and S-kNN.

- Classification methods which involved learning optimal k-values (for example the proposed kTree and k*Tree methods, or S-kNN, GS-kNN, AD-kNN) outperformed the methods with predefined k-values, such as traditional kNN.

- The proposed k*Tree method had the lowest running cost of all methods. Although it was still outperformed in terms of classification accuracy by GS-kNN and S-kNN, it ran on average 15,000 times faster than either method. In addition, the kTree had the highest accuracy and its running cost was lower than that of any other method except the k*Tree method.

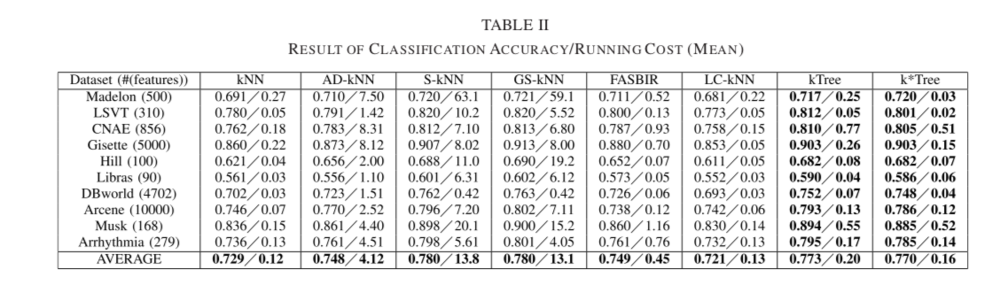

B. Experimental Results on Different Feature Numbers

The goal of this section was to evaluate the robustness of all methods under differing numbers of features; results can be seen in Table II below. The Fisher score [15], used here as a criterion that scores each feature by how well it separates the classes, was used to rank and select the most informative features in the datasets.
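The summary does not give the formula used for the ranking. The sketch below assumes the common feature-selection form of the Fisher score, namely the between-class scatter of a feature divided by its within-class scatter, and runs it on hypothetical toy data.

```python
from statistics import fmean, pvariance

def fisher_scores(X, y):
    """Per-feature ratio of between-class to within-class scatter."""
    classes = sorted(set(y))
    scores = []
    for j in range(len(X[0])):
        overall_mean = fmean(row[j] for row in X)
        between = within = 0.0
        for c in classes:
            values = [row[j] for row, label in zip(X, y) if label == c]
            between += len(values) * (fmean(values) - overall_mean) ** 2
            within += len(values) * pvariance(values)
        scores.append(between / within if within > 0 else float("inf"))
    return scores

# Hypothetical data: feature 0 separates the classes, feature 1 does not.
X = [(0.0, 1.0), (0.2, 3.0), (1.0, 2.0), (1.2, 0.0)]
y = [0, 0, 1, 1]
scores = fisher_scores(X, y)
ranking = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
```

Keeping the top entries of `ranking` then yields data sets with the desired number of features.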

From Table II, the proposed kTree and k*Tree approaches outperformed kNN, AD-kNN, FASBIR and LC-kNN when tested for varying feature numbers. The S-kNN and GS-kNN approaches remained the best in terms of classification accuracy, but were greatly outperformed in terms of running cost by k*Tree. The reason is that k*Tree only scans a subsample of the training samples for kNN classification, while S-kNN and GS-kNN scan all training samples.

Conclusion

This paper introduced two novel approaches for kNN classification algorithms that can determine optimal k-values for each test sample. The proposed kTree and k*Tree methods can classify the test samples efficiently and effectively, by designing a training step that reduces the run time of the test stage and thus enhances the performance. Based on the experimental results for varying sample sizes and differing feature numbers, it was observed that the proposed methods outperformed existing ones in terms of running cost while still achieving similar or better classification accuracies. Future areas of investigation could focus on the improvement of kTree and k*Tree for high-dimensional data.

Critiques

- The paper only assessed classification accuracy through cross-validation accuracy. However, it would be interesting to investigate how the proposed methods perform using different metrics, such as AUC, precision-recall curves, or in terms of holdout test data set accuracy.

- The authors addressed that some of the UCI datasets contained imbalanced data (such as the Climate and German data sets) while others did not. However, the nature of the class imbalance was not extreme, and the effect of imbalanced data on algorithm performance was not discussed or assessed. Moreover, it would have been interesting to see how the proposed algorithms performed on highly imbalanced datasets in conjunction with common techniques to address imbalance (e.g. oversampling, undersampling, etc.).

- While the authors contrast their kTree and k*Tree approaches with different kNN methods, the paper could contrast its results with more of the approaches discussed in its Related Work section. For example, it would be interesting to see how the kTree and k*Tree results compare to Góra and Wojna's varied optimal-k method.

- The paper conducted experiments on kNN, AD-kNN, S-kNN, GS-kNN, FASBIR and LC-kNN with different sample sizes and feature numbers. It would be interesting to discuss why the running cost of FASBIR is between that of kTree and k*Tree in figure 21.

- A different paper also discusses optimizing the k value for the kNN algorithm in clustering. That paper, however, suggests using the expectation-maximization algorithm as a means of finding the optimal k value.

- It would be nice to have a comparison of the running costs of the different methods to see how much faster kTree and k*Tree performed.

- It would be better to present only the key results in a summary, rather than stacking up all of the results without screening them.

- In the results section, it was mentioned that in the experiment on data sets with different numbers of features, the kTree and k*Tree model did not achieve GS-kNN or S-kNN's accuracies, but was faster in terms of running cost. It might be helpful here if the authors add some more supporting arguments about the benefit of this tradeoff, which appears to be a minor decrease in accuracy for a large improvement in speed. This could further showcase the advantages of the kTree and k*Tree models. More quantitative analysis or real-life scenario examples could be some choices here.

- An interesting thing to notice while solving for the optimal matrix [math]\displaystyle{ W^* }[/math] that minimizes the loss function is that [math]\displaystyle{ W^* }[/math] is not necessarily a symmetric matrix. That is, the correlation between the [math]\displaystyle{ i^{th} }[/math] entry and the [math]\displaystyle{ j^{th} }[/math] entry can differ from that between the [math]\displaystyle{ j^{th} }[/math] entry and the [math]\displaystyle{ i^{th} }[/math] entry, which makes the resulting W* not really semantically meaningful. Therefore, it would be interesting to set a threshold on the allowed difference between the [math]\displaystyle{ ij^{th} }[/math] entry and the [math]\displaystyle{ ji^{th} }[/math] entry in [math]\displaystyle{ W^* }[/math] and see whether this new configuration gives better or worse results than the current ones, which would provide better insight into the algorithm.

- It would be interesting to see how the proposed model works with highly non-linear datasets. In the event it does not work well, it would pose the question: would replacing the k*Tree with an SVM or a neural network improve the accuracy? There could be experiments to show whether this variant would prove superior to the original models.

- The key results are a little misleading. For example, the claim that the kTree had the highest accuracy and a running cost lower than every method except the k*Tree method is false. The kTree method had slightly lower accuracy than both GS-kNN and S-kNN, and kTree was also slower than LC-kNN.

- I want to point to the discussion on k*Tree's structure. In order for k*Tree to work effectively, its leaf nodes need to store additional information. In addition to the optimal k value, they also need to store things like the training samples that have the optimal k, and the k nearest neighbours of the previously identified training samples. How big of an impact does this structure have on storage cost? Since the number of leaf nodes can be large, the storage cost may be large as well. This can potentially make k*Tree ineffective to use in practice, especially for very large datasets.

- It would be better if the authors explained more about the kTree method and the similarity between the kTree method and the kNN method.

- Even though we are given a table with averages of the accuracy and mean running cost, it would have been nice to see a direct visual comparison in the figures that follow. In addition to the comparison with other algorithms, it would be helpful to see the average expected cost of such classification algorithms as a control, or standard, against which accuracy and compute cost can be judged in order to fully assess efficacy.

- The paper does not clearly state the definitions of the kTree and kNN methods or the similarities and differences between them. If the authors had put some detailed explanations at the beginning, the flow of the paper would have been much better.

- It would be better to know whether the paper indicates a performance difference between small and large datasets. Would the performance increase be negligible on datasets with few features?

- It would be clearer if the experiments were connected more tightly to the approach section, for example by mentioning how the approach was applied to obtain these results.

- It would be better if the author had provided several paragraphs discussing the complexity of these models. It seems like the highlight of kTree is that it offers similar performance at a significantly lower cost.

References

[1] C. Zhang, Y. Qin, X. Zhu, and J. Zhang, “Clustering-based missing value imputation for data preprocessing,” in Proc. IEEE Int. Conf., Aug. 2006, pp. 1081–1086.

[2] Y. Song, J. Huang, D. Zhou, H. Zha, and C. L. Giles, “IKNN: Informative K-nearest neighbor pattern classification,” in Knowledge Discovery in Databases. Berlin, Germany: Springer, 2007, pp. 248–264.

[3] P. Vincent and Y. Bengio, “K-local hyperplane and convex distance nearest neighbor algorithms,” in Proc. NIPS, 2001, pp. 985–992.

[4] V. Premachandran and R. Kakarala, “Consensus of k-NNs for robust neighborhood selection on graph-based manifolds,” in Proc. CVPR, Jun. 2013, pp. 1594–1601.

[5] X. Zhu, S. Zhang, Z. Jin, Z. Zhang, and Z. Xu, “Missing value estimation for mixed-attribute data sets,” IEEE Trans. Knowl. Data Eng., vol. 23, no. 1, pp. 110–121, Jan. 2011.

[6] F. Sahigara, D. Ballabio, R. Todeschini, and V. Consonni, “Assessing the validity of QSARS for ready biodegradability of chemicals: An applicability domain perspective,” Current Comput.-Aided Drug Design, vol. 10, no. 2, pp. 137–147, 2013.

[7] S. Zhang, M. Zong, K. Sun, Y. Liu, and D. Cheng, “Efficient kNN algorithm based on graph sparse reconstruction,” in Proc. ADMA, 2014, pp. 356–369.

[8] X. Zhu, L. Zhang, and Z. Huang, “A sparse embedding and least variance encoding approach to hashing,” IEEE Trans. Image Process., vol. 23, no. 9, pp. 3737–3750, Sep. 2014.

[9] U. Lall and A. Sharma, “A nearest neighbor bootstrap for resampling hydrologic time series,” Water Resour. Res., vol. 32, no. 3, pp. 679–693, 1996.

[10] D. Cheng, S. Zhang, Z. Deng, Y. Zhu, and M. Zong, “KNN algorithm with data-driven k value,” in Proc. ADMA, 2014, pp. 499–512.

[11] F. Sahigara, D. Ballabio, R. Todeschini, and V. Consonni, “Assessing the validity of QSARS for ready biodegradability of chemicals: An applicability domain perspective,” Current Comput.-Aided Drug Design, vol. 10, no. 2, pp. 137–147, 2013.

[12] Z. H. Zhou and Y. Yu, “Ensembling local learners through multimodal perturbation,” IEEE Trans. Syst. Man, B, vol. 35, no. 4, pp. 725–735, Apr. 2005.

[13] Z. H. Zhou, Ensemble Methods: Foundations and Algorithms. London, U.K.: Chapman & Hall, 2012.

[14] Z. Deng, X. Zhu, D. Cheng, M. Zong, and S. Zhang, “Efficient kNN classification algorithm for big data,” Neurocomputing, vol. 195, pp. 143–148, Jun. 2016.

[15] K. Tsuda, M. Kawanabe, and K.-R. Müller, “Clustering with the fisher score,” in Proc. NIPS, 2002, pp. 729–736.