Don't Just Blame Over-parametrization

Revision as of 06:29, 12 November 2021

Presented by

Jared Feng, Xipeng Huang, Mingwei Xu, Tingzhou Yu

Introduction

Don't Just Blame Over-parametrization for Over-confidence: Theoretical Analysis of Calibration in Binary Classification is a paper from ICML 2021 written by Yu Bai, Song Mei, Huan Wang, and Caiming Xiong.

Machine learning models such as deep neural networks can achieve high accuracy. However, their predicted top probability (confidence) often does not reflect their actual accuracy: the models tend to be over-confident. For example, Guo et al. (2017) report that a WideResNet 32 on CIFAR100 has an average predicted top probability of 87%, while its actual test accuracy is only 72%. To address this issue, a growing body of work aims to improve the calibration of models, which can reduce over-confidence while preserving (or even improving) accuracy (Ovadia et al., 2019).
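The gap between confidence and accuracy described above (87% versus 72%, a difference of 15 percentage points in the cited example) can be computed directly from a model's predicted probabilities. The following is a minimal sketch rather than anything from the paper: the function name `confidence_gap` and the toy arrays are made up for illustration.

```python
import numpy as np

def confidence_gap(probs, labels):
    """Mean top-class probability minus top-1 accuracy.

    probs  : (n, k) array of predicted class probabilities (rows sum to 1)
    labels : (n,) array of integer class labels
    A positive value indicates over-confidence on this sample.
    """
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels)
    confidence = probs.max(axis=1).mean()               # average top probability
    accuracy = (probs.argmax(axis=1) == labels).mean()  # fraction predicted correctly
    return confidence - accuracy

# Toy data, invented for illustration only (not from the paper).
toy_probs = np.array([[0.9, 0.1], [0.8, 0.2], [0.3, 0.7], [0.6, 0.4]])
toy_labels = np.array([0, 1, 1, 1])
gap = confidence_gap(toy_probs, toy_labels)
```

On the toy data the average confidence is 0.75 and the accuracy is 0.5, so the gap is 0.25.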

Experiments

The authors conducted two experiments to test the theory: the first is a simulation, and the second uses the CIFAR10 dataset.

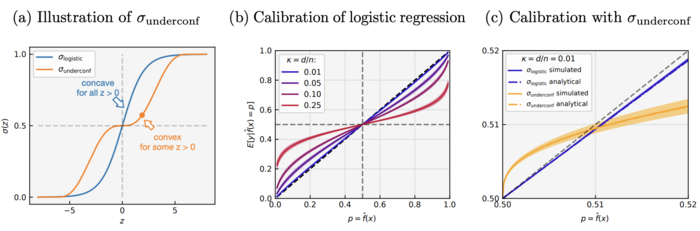

Two settings are used in the simulation: well-specified under-parametrized logistic regression, and general convex ERM with the under-confident activation [math]\displaystyle{ \sigma_{underconf} }[/math]. Calibration curves were plotted for both settings: the x-axis is the predicted probability p, and the y-axis is the average probability given that prediction.

The figure above shows four main results. First, logistic regression is over-confident at all [math]\displaystyle{ \kappa }[/math]. Second, over-confidence becomes more severe as [math]\displaystyle{ \kappa }[/math] increases, which suggests that the conclusion of the theory holds more broadly than its assumptions. Third, [math]\displaystyle{ \sigma_{underconf} }[/math] leads to under-confidence for [math]\displaystyle{ p \in (0.5, 0.51) }[/math], which verifies Theorem 2 and Corollary 3. Finally, the theoretical prediction closely matches the simulation, which further confirms the theory.
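The first result can be probed with a small simulation. The sketch below is not the authors' code: it assumes standard Gaussian inputs, takes [math]\displaystyle{ \kappa }[/math] to be the ratio d/n of dimension to sample size (this summary does not define it), and uses a hypothetical ground-truth vector `w_star` and illustrative sizes. Because the model is well-specified, the true probability is known, so the calibration curve can be estimated by binning test points on the predicted probability.

```python
import numpy as np

def sigmoid(z):
    # Clip logits to avoid overflow in exp for extreme values.
    return 1.0 / (1.0 + np.exp(-np.clip(z, -30.0, 30.0)))

def fit_logistic(X, y, n_iter=25):
    """Unregularized logistic regression fitted by Newton's method."""
    n, d = X.shape
    w = np.zeros(d)
    for _ in range(n_iter):
        p = sigmoid(X @ w)
        grad = X.T @ (p - y) / n
        # Tiny ridge term, added only for numerical stability of the solve.
        hess = (X.T * (p * (1.0 - p))) @ X / n + 1e-10 * np.eye(d)
        w = w - np.linalg.solve(hess, grad)
    return w

def calibration_curve(pred, true_prob, n_bins=10):
    """Bin by predicted p in [0.5, 1]; return per-bin mean prediction
    and mean true probability for the non-empty bins."""
    edges = np.linspace(0.5, 1.0, n_bins + 1)
    idx = np.clip(np.digitize(pred, edges) - 1, 0, n_bins - 1)
    mean_pred, mean_true = [], []
    for b in range(n_bins):
        mask = idx == b
        if mask.any():
            mean_pred.append(pred[mask].mean())
            mean_true.append(true_prob[mask].mean())
    return np.array(mean_pred), np.array(mean_true)

rng = np.random.default_rng(0)
n, d = 2000, 200            # assumed kappa = d / n = 0.1 (illustrative)
w_star = np.zeros(d)
w_star[0] = 2.0             # hypothetical ground-truth coefficients

# Well-specified training data: labels drawn from the logistic model itself.
X_train = rng.standard_normal((n, d))
y_train = (rng.random(n) < sigmoid(X_train @ w_star)).astype(float)
w_hat = fit_logistic(X_train, y_train)

# Fresh test inputs; q is the true probability of label 1.
X_test = rng.standard_normal((20000, d))
p_hat = sigmoid(X_test @ w_hat)
q = sigmoid(X_test @ w_star)

# Fold onto the predicted class so that confidence lies in [0.5, 1].
pred_top = np.maximum(p_hat, 1.0 - p_hat)
true_top = np.where(p_hat >= 0.5, q, 1.0 - q)

mean_pred, mean_true = calibration_curve(pred_top, true_top)
overconfidence = pred_top.mean() - true_top.mean()
```

A positive `overconfidence` means the average predicted confidence exceeds the average true probability of the predicted class. Plotting `mean_true` against `mean_pred` gives a binned estimate of the calibration curve, and rerunning with a larger `d` at fixed `n` is one way to explore the dependence on [math]\displaystyle{ \kappa }[/math].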

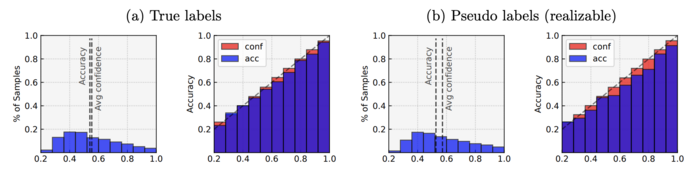

The generality of the theory beyond the Gaussian input assumption and the binary classification setting was further tested on the CIFAR10 dataset by running multi-class logistic regression on its first five classes. The authors performed logistic regression on two kinds of labels: the true labels and pseudo-labels generated from the multi-class logistic (softmax) model.

The figure above indicates that logistic regression is over-confident on both kinds of labels, and that the over-confidence is more severe on the pseudo-labels than on the true labels. This suggests that the inherent over-confidence of logistic regression may hold more broadly for other under-parametrized problems without strong assumptions on the input distribution, or even when the labels are not necessarily realizable by the model.
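One plausible reading of the pseudo-labels described above is that they are sampled from the class probabilities of a softmax model, which makes them realizable by that model. The sketch below illustrates only this sampling step under that assumption. The function `sample_pseudo_labels`, the random `features`, and the random `weights` are hypothetical stand-ins for CIFAR10 inputs and a fitted softmax model, not the authors' pipeline.

```python
import numpy as np

def softmax(logits):
    # Subtract the row-wise max for numerical stability.
    shifted = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=1, keepdims=True)

def sample_pseudo_labels(features, weights, rng):
    """Draw one label per row from softmax(features @ weights)."""
    probs = softmax(features @ weights)
    # Inverse-CDF sampling: count how many cumulative sums the uniform draw exceeds.
    cdf = probs.cumsum(axis=1)
    u = rng.random((probs.shape[0], 1))
    labels = (u > cdf).sum(axis=1)
    # Guard against rounding when the last cumulative sum is slightly below 1.
    labels = np.minimum(labels, probs.shape[1] - 1)
    return labels, probs

rng = np.random.default_rng(0)
features = rng.standard_normal((1000, 20))   # stand-in for image features
weights = rng.standard_normal((20, 5))       # stand-in for a fitted 5-class model
pseudo_labels, class_probs = sample_pseudo_labels(features, weights, rng)
```

Refitting a multi-class logistic regression on `pseudo_labels` then gives a well-specified problem, which can be compared against the fit on the true labels.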