Dense Passage Retrieval for Open-Domain Question Answering

Presented by

Nicole Yan

1. Introduction

Open-domain question answering (QA) is the task of finding answers to questions in a large collection of documents. Current open-domain QA systems usually follow a two-stage framework: (1) a retriever that selects a small subset of documents, and (2) a reader that reads that subset in full and selects the answer spans. Stage one is usually handled by bag-of-words models, which count overlapping words and their frequencies in documents and represent each document as a high-dimensional, sparse vector. A bag-of-words method that has been in use for years is BM25, which ranks documents by the query terms that appear in each of them. Stage one produces a small subset of documents in which the answer might appear; in stage two, a reader reads this subset and locates the answer spans. Stage two is usually handled by neural models such as BERT. While stage two has benefited greatly from recent advances in neural language models, stage one still relies on traditional term-based models. This paper aims to improve stage one with dense retrieval methods that generate dense, latent semantic document embeddings, and demonstrates that dense retrieval can not only outperform BM25 but also improve end-to-end QA accuracy.
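To make the term-based baseline concrete, the following is a minimal sketch of BM25 scoring. It assumes whitespace tokenization, the common Okapi BM25 formulation, and the parameter defaults k1 = 1.5 and b = 0.75; the function name `bm25_scores` and the toy documents are illustrative and do not come from the paper.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document in `docs` against `query` with Okapi BM25 (assumed variant)."""
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(d) for d in tokenized) / n_docs
    # Document frequency: number of documents that contain each term.
    df = Counter(term for d in tokenized for term in set(d))
    scores = []
    for d in tokenized:
        tf = Counter(d)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue  # terms absent from the document contribute nothing
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            norm = tf[term] + k1 * (1 - b + b * len(d) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

# Toy corpus (hypothetical).
docs = [
    "uranus is the seventh planet from the sun",
    "bert is a neural language model",
    "the sun is a star",
]
scores = bm25_scores("what is uranus", docs)
best = max(range(len(docs)), key=scores.__getitem__)
```

Only exact term matches contribute to the score, which is why a paraphrased question with no shared vocabulary would score zero against a relevant document.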

2. Background

The following example illustrates the problem that open-domain QA systems tackle. Given a question such as "What is Uranus?", a system should find the answer spans in a large corpus, which may contain billions of documents. In stage one, a retriever selects a small set of potentially relevant documents, which are then fed to a neural reader in stage two for answer span extraction. Only this filtered subset is processed by the neural reader because neural reading comprehension is expensive; processing billions of documents with a neural reader is impractical.

3. Dense Passage Retriever

This paper focuses on improving the retrieval component and proposes a framework called Dense Passage Retriever (DPR), which aims to efficiently retrieve the top K most relevant passages from a large passage collection. The key component of DPR is a dual-BERT model that encodes queries and passages into a vector space in which relevant query-passage pairs are closer together than irrelevant ones.

3.1 Model Architecture Overview

DPR has two independent BERT encoders: a query encoder Eq and a passage encoder Ep. Each maps its input text to a d-dimensional real-valued vector, and the similarity between a query and a passage is defined as the dot product of their vectors. DPR uses the output at the [CLS] token as the embedding vector, so d = 768.
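The dot-product scoring described above can be sketched with NumPy. This is an illustration only: random vectors stand in for the encoder outputs of Ep and Eq, and the function name `top_k_passages`, the corpus size of 1000, and the index 42 are assumptions made for the example.

```python
import numpy as np

def top_k_passages(query_vec, passage_matrix, k):
    """Return indices and scores of the k passages with the highest dot product."""
    scores = passage_matrix @ query_vec      # shape: (num_passages,)
    top = np.argsort(-scores)[:k]            # sort descending, keep the first k
    return top, scores[top]

rng = np.random.default_rng(0)
d = 768                                      # embedding size stated in the text
# Random stand-ins for passage embeddings produced by Ep.
passages = rng.standard_normal((1000, d)).astype(np.float32)
# Stand-in for a query embedding from Eq that lies close to passage 42.
query = passages[42] + 0.01 * rng.standard_normal(d).astype(np.float32)

idx, vals = top_k_passages(query, passages, k=5)
```

Because the passage vectors do not depend on the query, they can be computed once and reused; only the query has to be encoded at question time.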

3.2 Training

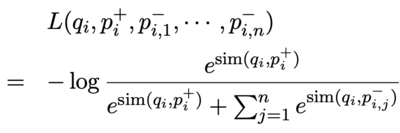

The training data can be viewed as m instances, each a tuple of (query, positive passage, negative passages). The loss function is the negative log-likelihood of the positive passage.
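Written out for a single training instance with n negative passages, the negative log-likelihood takes the softmax form below. The symbols p_i^+ and p_{i,j}^- for the positive and negative passages are notation introduced here for illustration, and sim denotes the dot-product similarity between the outputs of Eq and Ep defined in Section 3.1.

```latex
\mathrm{sim}(q, p) = E_q(q)^{\top} E_p(p)

L\left(q_i, p_i^{+}, p_{i,1}^{-}, \dots, p_{i,n}^{-}\right)
  = -\log \frac{e^{\mathrm{sim}(q_i,\, p_i^{+})}}
               {e^{\mathrm{sim}(q_i,\, p_i^{+})} + \sum_{j=1}^{n} e^{\mathrm{sim}(q_i,\, p_{i,j}^{-})}}
```

Minimizing this loss raises the score of the positive passage relative to the scores of the negatives for the same query.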

Selecting positive passages is straightforward: a passage that contains the answer is chosen. Selecting negative passages is less clear-cut. The authors experimented with three types of negative passages: (1) random passages from the corpus; (2) false-positive passages returned by BM25; and (3) gold positive passages from the training set, i.e., a positive passage for one query is treated as a negative passage for another query. The best model was obtained by using gold positive passages from the same batch as negatives, a trick called in-batch negatives. Given B pairs of (query q_i, positive passage p_i) in a mini-batch, the negative passages for query q_i are the passages p_j with j ≠ i.
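The in-batch negatives trick can be sketched as a B x B score matrix whose diagonal holds the positive pairs. The NumPy code below is a minimal illustration, not the authors' implementation; the function name `in_batch_nll` and the toy one-hot embeddings are assumptions chosen so that the result is easy to check by hand.

```python
import numpy as np

def in_batch_nll(q, p):
    """Mean negative log-likelihood with in-batch negatives.

    q, p: arrays of shape (B, d); row i of p is the positive passage for row i of q.
    Every other row of p acts as a negative for query i.
    """
    scores = q @ p.T                                       # scores[i, j] = sim(q_i, p_j)
    scores = scores - scores.max(axis=1, keepdims=True)    # stabilize the softmax
    log_probs = scores - np.log(np.exp(scores).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_probs))                    # positives sit on the diagonal

# Toy batch (hypothetical): four orthogonal passage embeddings.
p = np.eye(4)
aligned = in_batch_nll(5.0 * p, p)          # each query matches its own passage
shuffled = in_batch_nll(5.0 * p[::-1], p)   # each query matches a different passage
uniform = in_batch_nll(np.zeros((4, 4)), p) # uninformative queries give log(B)
```

A single matrix product yields B positives and B(B - 1) negatives, so the batch is reused as a source of negatives without encoding any additional passages.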

4. Experimental Setup

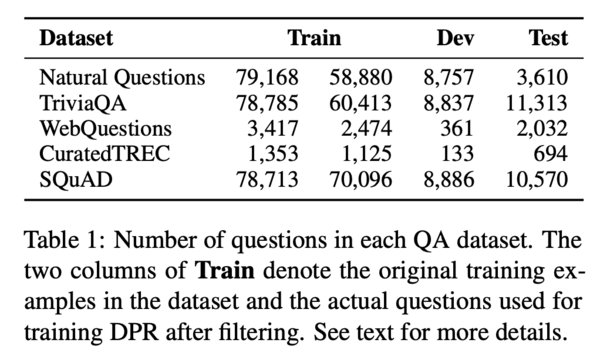

The authors pre-processed Wikipedia documents and split each document into passages of 100 words. These passages form the candidate pool. Five QA datasets are used: Natural Questions (NQ), TriviaQA, WebQuestions (WQ), CuratedTREC (TREC), and SQuAD v1.1. To build the training data, the authors matched each question in the five datasets with a passage that contains the correct answer. The dataset statistics are summarized in the accompanying table.
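The passage construction step can be sketched as follows. The sketch assumes whitespace-delimited words and consecutive, non-overlapping chunks, with a shorter final passage when the word count is not a multiple of 100; the function name `split_into_passages` is hypothetical.

```python
def split_into_passages(text, passage_len=100):
    """Split `text` into consecutive, non-overlapping passages of `passage_len` words."""
    words = text.split()
    return [
        " ".join(words[i:i + passage_len])
        for i in range(0, len(words), passage_len)
    ]

# Toy 250-word "article" made of placeholder tokens.
document = " ".join(f"w{i}" for i in range(250))
passages = split_into_passages(document)
lengths = [len(p.split()) for p in passages]   # [100, 100, 50]
```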

5. Retrieval Performance Evaluation

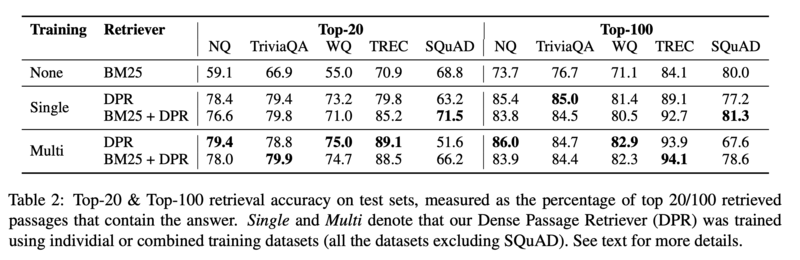

The authors trained DPR on each of the five datasets separately and on the combined dataset. They compared DPR with the term-frequency-based model BM25 and with the combination BM25+DPR.

5.1 Main Results

The accompanying table shows the retrieval accuracy on the test sets. DPR generally outperforms BM25 on all datasets except SQuAD. The authors speculate that the high lexical overlap between questions and passages in SQuAD gives BM25 an advantage there.
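One common way to measure retrieval accuracy is top-k accuracy: the fraction of questions for which at least one of the k highest-ranked passages contains an answer. The sketch below assumes this definition and a simple case-insensitive substring match; the function name `top_k_accuracy` and the toy data are illustrative and are not results from the paper.

```python
def top_k_accuracy(retrieved, answers, k):
    """Fraction of questions whose top-k retrieved passages contain an answer string."""
    hits = 0
    for passages, golds in zip(retrieved, answers):
        top = passages[:k]
        if any(g.lower() in p.lower() for p in top for g in golds):
            hits += 1
    return hits / len(retrieved)

# Toy ranked lists for two questions (hypothetical).
retrieved = [
    ["Uranus is the seventh planet from the Sun.", "Neptune is an ice giant."],
    ["BERT is a language model.", "Paris is the capital of France."],
]
answers = [["seventh planet"], ["Paris"]]
acc_at_1 = top_k_accuracy(retrieved, answers, k=1)   # 0.5
acc_at_2 = top_k_accuracy(retrieved, answers, k=2)   # 1.0
```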

5.2 Ablation Study on Model Training

5.3 Qualitative Analysis

5.4 Run-time Efficiency

6. Experiments: Question Answering

7. Related Work

8. Conclusion

Critiques

References

[1] Vladimir Karpukhin, Barlas Oğuz, Sewon Min, Patrick Lewis, Ledell Wu, Sergey Edunov, Danqi Chen, Wen-tau Yih. Dense Passage Retrieval for Open-Domain Question Answering. EMNLP 2020.