DCN plus: Mixed Objective And Deep Residual Coattention for Question Answering

Introduction

Question answering (QA) is a challenging task in computer science that requires both an understanding of natural language and the ability to reason efficiently. To answer a question accurately, the model must first have a detailed understanding of the context from which the question is asked. Because questions are usually very specific, a shallow understanding of the context leads to poor performance. The model must also gather all the information provided in the question and match it against its knowledge of the context. Generating the answer is a further challenge: depending on the dataset the model is designed for, the output may take a completely different form.

QA datasets have improved significantly in recent years. Earlier datasets were simple and usually did not resemble real-world question-answer pairs. For example, the Children's Book Test was a popular QA dataset for a long time, but its actual task was only to fill blanks in given sentences with the appropriate words. The importance of QA and its practical uses have since encouraged many groups to collect and crowdsource more useful and realistic datasets. The Stanford Question Answering Dataset (SQuAD), the Microsoft MAchine Reading COmprehension Dataset (MS MARCO), and the Visual Question Answering Dataset (VQA) are only a few examples of current datasets. As a result, many researchers are working to improve the performance of QA models on these datasets. Deep neural networks have surpassed human accuracy on a few of them, but in many cases there is still a gap between the state of the art and human performance.

Previously, Dynamic Coattention Networks (DCN) proved effective on SQuAD, achieving state-of-the-art performance at the time. This work modifies the DCN further and improves its accuracy by proposing a mixed objective that combines cross-entropy loss with self-critical policy learning.

Overview of previous work

Most current QA models are built from separate modules, usually stacked on top of each other. Improving one module improves the overall performance of the model. Thus, to evaluate an improvement, researchers usually take a previously published model and replace the corresponding module with their own improved one. This line of incremental work is popular largely because QA is an interesting discipline with practical uses. The common modules are:

- Embedding layer: This layer maps each word (or image, in the case of visual QA) to a vector space. There are many options for the embedding layer. While pre-trained GloVe or Word2Vec embeddings have shown promising results on many tasks, most models use a combination of GloVe and character-level embeddings. Character-level embeddings are especially useful for out-of-vocabulary words. For images, the embeddings are usually generated by pre-trained ResNets. Using different embedding layers for images has been shown to change the overall performance of the model drastically.

- Contextual layer: The purpose of this layer is to add more features to each word embedding based on the surrounding words and the context. This layer is present in many models, including the DCN.

- Attention layer: Attention mechanisms have been studied extensively in recent years. These works, mostly inspired by Bahdanau et al. (2014), either modify the basic matrix-based attention mechanism or develop new ones. The purpose of the attention mechanism is to let the model interpret one input based on information gathered from another. For example, in image-based QA, the attention layer helps the model understand the question based on information in the image, such as object classes. This way, the model can determine which parts of the question are more important.

- Output layer: This is the final layer of every model. It generates the answer to the question based on the information provided by all the previous layers.
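The embedding bullet above mentions combining word vectors with character-level embeddings to handle out-of-vocabulary words. The following is a minimal sketch of that idea, not the embedding used by any particular model. The function `embed_token`, the toy lookup tables, and the choice of averaging character vectors are all assumptions made for illustration; real systems typically learn the character-level part with a neural network.

```python
def embed_token(token, word_vectors, char_vectors, word_dim, char_dim):
    """Concatenate a word vector with a character-level vector.

    Hypothetical helper: the character part is a plain average of
    per-character vectors, which is an illustrative simplification.
    """
    # Out-of-vocabulary words get a zero word vector.
    word_part = word_vectors.get(token, [0.0] * word_dim)

    # The character part is still informative for unseen words.
    known = [char_vectors[c] for c in token if c in char_vectors]
    if known:
        char_part = [sum(vals) / len(known) for vals in zip(*known)]
    else:
        char_part = [0.0] * char_dim

    return list(word_part) + char_part


# Toy lookup tables (made-up values).
word_vectors = {"cat": [1.0, 2.0]}
char_vectors = {"c": [1.0], "a": [3.0], "t": [2.0]}

in_vocab = embed_token("cat", word_vectors, char_vectors, 2, 1)
out_of_vocab = embed_token("tac", word_vectors, char_vectors, 2, 1)
```

In this toy setup the unseen token `tac` has no word vector but still receives a non-zero character component, which is the property that makes the combination useful.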

DCN+ structure

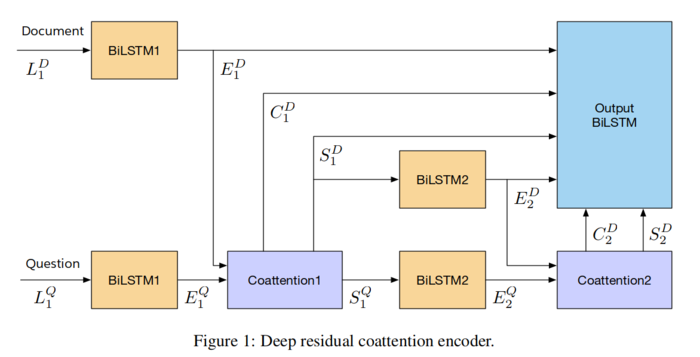

The DCN+ is an improvement on the previous DCN model. The overall structure of the model is the same as before. The first improvement is on the co-attention module. By introducing a deep residual co-attention encoder, the output of the attention layer becomes more feature-rich. The second improvement is achieved by mixing the previous cross-entropy loss with reinforcement learning rewards from self-critical policy learning.
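To make the mixed objective more concrete, the sketch below combines a cross-entropy term with a self-critical policy-gradient term for a span-prediction model. It is an illustration under stated assumptions rather than the authors' implementation: the function names, the fixed `rl_weight`, and the use of precomputed F1 values as rewards are all hypothetical choices, and this summary does not specify how the two terms are actually weighted.

```python
import math


def cross_entropy_loss(start_probs, end_probs, true_start, true_end):
    """Negative log-likelihood of the ground-truth start and end positions."""
    return -(math.log(start_probs[true_start]) + math.log(end_probs[true_end]))


def self_critical_loss(start_probs, end_probs, sampled_span, f1_sampled, f1_greedy):
    """Policy-gradient loss that uses the greedy prediction as the baseline.

    The advantage is positive when the sampled span scores better than the
    greedy span. In real training it is treated as a constant (no gradient).
    """
    advantage = f1_sampled - f1_greedy
    start, end = sampled_span
    log_prob = math.log(start_probs[start]) + math.log(end_probs[end])
    return -advantage * log_prob


def mixed_loss(ce, rl, rl_weight=0.5):
    """Fixed-weight combination (assumption: the weighting is illustrative)."""
    return (1.0 - rl_weight) * ce + rl_weight * rl


# Toy distributions over three token positions (made-up values).
start_probs = [0.1, 0.7, 0.2]
end_probs = [0.2, 0.2, 0.6]

ce = cross_entropy_loss(start_probs, end_probs, true_start=1, true_end=2)
rl = self_critical_loss(start_probs, end_probs, (1, 1), f1_sampled=0.5, f1_greedy=0.8)
total = mixed_loss(ce, rl)
```

Here the sampled span scores lower than the greedy span, so the advantage is negative and minimizing the loss pushes the probability of the sampled span down. When both spans score the same, the reinforcement term vanishes and only the cross-entropy term drives learning.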

- Deep residual co-attention encoder:

The previous co-attention module was unable to capture complex interactions between the context and the question. Recent studies have shown that stacked attention mechanisms outperform single-layer attention modules. In DCN+, the co-attention module is therefore stacked, which lets the model self-attend to the context and capture more information. The second modification is to use residual connections when merging the co-attention outputs from each layer.
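The stacking and merging described above can be sketched in plain Python. This is a simplified illustration, not the DCN+ architecture itself: matrices are stored with one row per word, the recurrent encoders between layers are omitted, and the merge is a simple concatenation of the features from both layers. All function names are hypothetical.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def transpose(a):
    return [list(col) for col in zip(*a)]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def coattention_layer(doc, qst):
    """One coattention pass; doc is (m x h) and qst is (n x h)."""
    affinity = matmul(doc, transpose(qst))                # (m x n) word-pair scores
    doc_to_q = [softmax(r) for r in affinity]             # document words attend over the question
    q_to_doc = [softmax(r) for r in transpose(affinity)]  # question words attend over the document
    doc_summary = matmul(doc_to_q, qst)                   # (m x h)
    qst_summary = matmul(q_to_doc, doc)                   # (n x h)
    doc_context = matmul(doc_to_q, qst_summary)           # (m x h) coattention context
    return doc_summary, qst_summary, doc_context


def deep_residual_coattention(doc, qst):
    """Two stacked coattention layers whose outputs are merged per document word."""
    s1_d, s1_q, c1_d = coattention_layer(doc, qst)
    # Assumption: a recurrent encoder would re-encode the summaries here; skipped.
    s2_d, _, c2_d = coattention_layer(s1_d, s1_q)
    # Keep the features of every layer by concatenating them (illustrative merge).
    return [sum(parts, []) for parts in zip(doc, s1_d, c1_d, s2_d, c2_d)]


doc = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 document words, h = 2
qst = [[1.0, 0.0], [0.5, 0.5]]              # 2 question words
merged = deep_residual_coattention(doc, qst)
```

Each document word ends up with a feature vector five times the hidden size, because the original encoding and the summaries and contexts of both layers are all kept. Carrying the earlier layers' outputs forward in this way is what keeps shallow features available to the output layer.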

Let [math]\displaystyle{ L^D \in R^{d \times m} }[/math] and [math]\displaystyle{ L^Q \in R^{d \times n} }[/math] denote the word embeddings for the context and the question respectively. Here, [math]\displaystyle{ d, m, n }[/math] are the embedding vector size, document word count, and question word count respectively. The model uses a bidirectional LSTM with shared weights as the contextual layer. An additional sentinel token is also appended to the end of both the document and the question, so that the model can choose not to attend to any particular word. [math]\displaystyle{ E^D }[/math] and [math]\displaystyle{ E^Q }[/math] are the outputs of the encoder (contextual) layer.

\begin{align} E^D &= \mathrm{BiLSTM}(L^D) \in R^{h \times (m+1)} \\ E^Q &= \tanh(W \, \mathrm{BiLSTM}(L^Q)) \in R^{h \times (n+1)} \end{align}

Here [math]\displaystyle{ h }[/math] is the hidden size of the LSTM. The affinity matrix, on which the attention module is built, is computed from the outputs of the encoder. Then

Experiments

To achieve optimal performance, the hyperparameters and training setup were tuned. Documents are tokenized with the reversible tokenizer from Stanford CoreNLP. Word embeddings are initialized from pre-trained GloVe vectors (trained on the 840B Common Crawl corpus). The optimizer is Adam, and dropout is applied to the word embeddings, zeroing each word embedding with a probability of 0.075.
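The embedding dropout described above removes whole word vectors rather than individual components. A minimal sketch of that behavior follows. The function name `word_dropout` and the decision not to rescale the surviving vectors are assumptions, since the text does not say whether any rescaling is applied.

```python
import random


def word_dropout(embeddings, drop_prob=0.075, rng=None):
    """Zero out entire word embeddings, each with probability drop_prob.

    Intended for training only. Surviving vectors are returned unchanged
    (assumption: no rescaling).
    """
    rng = rng or random.Random()
    return [
        [0.0] * len(vec) if rng.random() < drop_prob else list(vec)
        for vec in embeddings
    ]


sentence = [[0.2, 0.4], [0.1, 0.9], [0.7, 0.3]]  # three toy word embeddings
dropped = word_dropout(sentence, rng=random.Random(0))
```

Every row of the result is either the original vector or all zeros, so the model occasionally sees a sentence with a word missing entirely, which discourages it from relying on any single word.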

Results

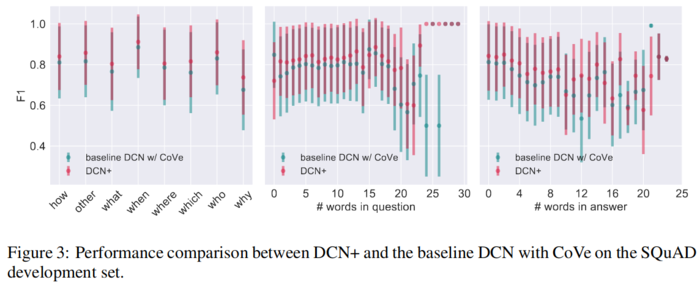

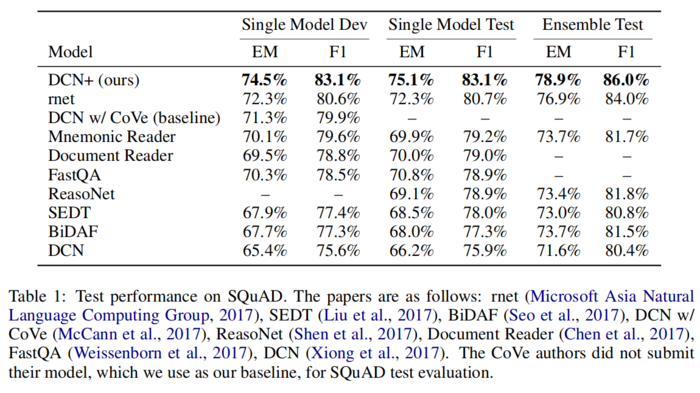

At the time of submission, the model achieved state-of-the-art results on SQuAD, outperforming the second-best model on the leaderboard by 2.0% on both the exact match and F1 scores. It is worth mentioning that a 5% improvement was also achieved over the original DCN model.

In general, DCN+ achieved a consistent performance improvement in almost every question category.
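For readers unfamiliar with the two metrics reported above, the sketch below shows how exact match and token-level F1 can be computed for a single predicted answer. It is a simplified version: the normalization here only lowercases and splits on whitespace, whereas the official SQuAD evaluation also strips punctuation and articles. The function names are illustrative.

```python
from collections import Counter


def normalize(text):
    """Simplified normalization: lowercase and split on whitespace."""
    return text.lower().split()


def exact_match(prediction, truth):
    """1.0 if the normalized token sequences are identical, else 0.0."""
    return float(normalize(prediction) == normalize(truth))


def f1_score(prediction, truth):
    """Harmonic mean of token-level precision and recall."""
    pred, gold = normalize(prediction), normalize(truth)
    overlap = sum((Counter(pred) & Counter(gold)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(gold)
    return 2 * precision * recall / (precision + recall)


em = exact_match("the Eiffel Tower", "Eiffel Tower")
f1 = f1_score("the Eiffel Tower", "Eiffel Tower")
```

With this simplified normalization, the prediction above fails exact match because of the extra token, but it still earns partial credit under F1 (precision 2/3, recall 1). This is why F1 is typically the more forgiving of the two scores.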