DCN+: Mixed Objective and Deep Residual Coattention for Question Answering

Introduction

Question Answering (QA) is a challenging computer science task that requires both an understanding of natural language and the ability to reason efficiently. To answer a question accurately, a model must first have a detailed understanding of the context from which the question is asked. Because questions are usually very specific, a shallow understanding of the context leads to poor performance. The model must also gather all the information provided in the question and match it against its knowledge of the context. Generating the answer is a further challenge: depending on the dataset the model is built for, the output may take a completely different form.

QA datasets have improved significantly in recent years. Earlier datasets were simple and usually did not resemble real-world question-answer pairs. For example, the Children's Book Test was a popular QA dataset for a long time, but its actual task was only to fill blanks in given sentences with the appropriate words. More recently, the importance of QA and its practical uses have encouraged many groups to collect and crowdsource more realistic datasets. The Stanford Question Answering Dataset (SQuAD), the Microsoft Machine Reading Comprehension Dataset (MS MARCO), and the Visual Question Answering Dataset (VQA) are only a few examples. As a result, many researchers are focusing on improving the performance of QA models on these datasets. Deep neural networks have surpassed human accuracy on a few of them, but in many cases there is still a gap between the state of the art and human performance.

Dynamic Coattention Networks (DCN) previously proved effective on SQuAD, achieving state-of-the-art performance at the time. This work modifies DCN further and improves its accuracy by proposing a mixed objective that combines cross-entropy loss with self-critical policy learning. The rewards are based on word overlap, which addresses the misalignment between the evaluation metric and the optimization objective.

Overview of previous work

Most current QA models are built from separate modules that are stacked on top of each other, so improving one module usually improves the overall performance of the model. To evaluate an improvement, researchers therefore typically take a previously published model and swap their improved module in for the corresponding original one. The steady stream of such work reflects the fact that QA is an interesting discipline with practical uses.

The state-of-the-art approaches to this problem can be divided into three categories.

1. Neural Models for question answering: Models like coattention, bidirectional attention flow and self-matching attention build codependent representations of the question and the document. After building these representations, the models predict the answer by generating the start position and the end position corresponding to the estimated answer span. The generation process utilizes a pointer network. Another approach uses a dynamic decoder that iteratively proposes answers by alternating between the start position and end position estimates, which in some cases allows it to recover from initial mistakes in predictions.

2. Neural Attention Models: Models like self-attention have been applied to language modeling and sentiment analysis. A deeper variant, the deep self-attention network, attained state-of-the-art results in machine translation. For an intuitive understanding of attention-based neural network models, refer to the following links: Attention Models Intuition 1, Attention Models Intuition 2. Coattention, bidirectional attention, and self-matching attention are some of the methods that build codependent representations of the question and the document.

3. Reinforcement learning in NLP: Hierarchical RL techniques have been proposed for generating text in a simulated wayfinding domain. Deep Q-networks (DQN) have been used to learn policies in text-based games using game rewards as feedback. A neural conversational model has been proposed that is trained using policy gradient methods, with a reward function consisting of heuristics for ease of answering, information flow, and semantic coherence. General actor-critic temporal-difference methods for sequence prediction have also been explored, performing metric optimization on language modeling and machine translation. Direct word overlap metric optimization has also been applied to summarization and machine translation.

Important Terms

- Embedding layer: This layer maps each word (or image, in the case of visual QA) to a vector space. There are many options for the embedding layer. While pre-trained GloVe or word2vec embeddings have shown promising results on many tasks, most models use a combination of GloVe and character-level embeddings. Character-level embeddings are especially useful when dealing with out-of-vocabulary words. For images, the embeddings are usually generated using pre-trained ResNets. Using different embedding layers for images has been shown to change the overall performance of the model drastically.

- Contextual layer: The purpose of this layer is to add more features to each word embedding based on the surrounding words and the context. This layer is not present in many models including the DCN.

- Attention layer: Attention mechanisms have been studied extensively in recent years. These works, mostly inspired by Bahdanau et al. (2015), either modify the basic matrix-based attention mechanism or develop new ones. The purpose of the attention mechanism is to let the model interpret one input based on information gathered from another. For example, in image-based QA, the attention layer helps the model understand the question based on information in the image, such as object classes, so that the model can identify which parts of the question are more important. This model uses co-attention layers (Xiong et al., 2017). Given two input sources (text and question), internal representations of each are built conditioned on the other. In a way, this can be thought of as retaining (attending to) the parts of one input that are relevant to the other source: from the text, only the parts that are useful for the question are kept, while from the question, the parts that are useful for the text are retained. The intuition is that it is easier to answer a question about a text when the question is known beforehand than when it is only available at the end. In the former case, only information relevant to the question needs to be kept, while in the latter, all information from the text needs to be kept.

- Output layer: This is the final layer of all models. It generates the answer to the question based on the information provided by all the previous layers.

DCN+ structure

The DCN+ is an improvement on the previous DCN model. The overall structure of the model is the same as before. The first improvement is on the coattention module. By introducing a deep residual coattention encoder, the output of the attention layer becomes more feature-rich. The second improvement is achieved by mixing the previous cross-entropy loss with reinforcement learning rewards from self-critical policy learning. DCN+ has a decoder module that is only applicable to the SQuAD dataset since the decoder only predicts an answer span from the given context.

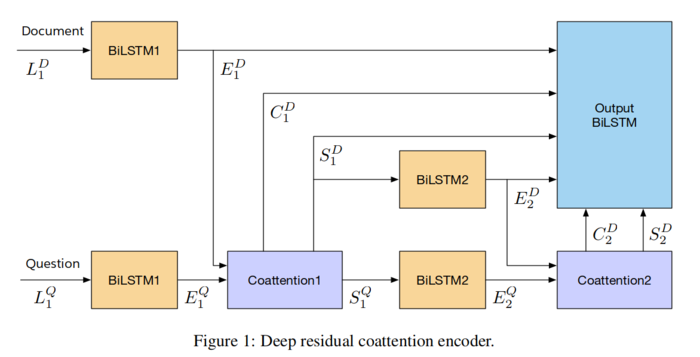

Deep residual coattention encoder

The previous coattention module was unable to capture complex interactions between the context and the question. Recent studies have shown that stacked attention mechanisms outperform single-layer attention modules. In DCN+, the coattention module is therefore stacked so that it can self-attend to the context and capture more information. The second modification is to use residual connections when merging the coattention outputs from each layer.

Let [math]\displaystyle{ L^D \in R^{m×d} }[/math] and [math]\displaystyle{ L^Q \in R^{n×d} }[/math] denote the word embeddings for the context and the question respectively. Here, [math]\displaystyle{ d, m, n }[/math] are the embedding vector size, document word count, and question word count respectively. The model uses a bidirectional LSTM with shared weights as the contextual layer. An additional sentinel token is also appended to the document and to the question, which allows the coattention to not focus on any particular part of the input. [math]\displaystyle{ E^D }[/math] and [math]\displaystyle{ E^Q }[/math] are the outputs of the encoder (contextual) layer.

\begin{align} E_1^D = BiLSTM_1(L^D) \in R^{h×(m+1)} \end{align}
\begin{align} E_1^Q = tanh(W \ BiLSTM_1(L^Q)) \in R^{h×(n+1)} \end{align}

Here [math]\displaystyle{ h }[/math] is the hidden size of the LSTM. The affinity matrix is computed from the outputs of the encoder; it is the same kind of matrix that attention modules have used since attention was first introduced. Applying a column-wise softmax to the affinity matrix yields, for each question token, a distribution of attention weights over the context words. Similarly, applying the softmax row-wise (equivalently, column-wise on the transposed affinity matrix) yields, for each context word, a distribution over the question tokens. Multiplying the encoder outputs by these weights produces the question-aware context and context-aware question representations.

\begin{align} A = {(E_1^D)}^T E_1^Q \in R^{(m+1)×(n+1)} \end{align} \begin{align} {S_1^D} = E_1^Q softmax(A^T) \in R^{h×(m+1)} \end{align} \begin{align} {S_1^Q} = E_1^D softmax(A) \in R^{h×(n+1)} \end{align}
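As a rough illustration of the three equations above, the following NumPy sketch computes the affinity matrix and both summaries on random inputs. It assumes that the softmax normalizes each column, so that every column of the weight matrix sums to one. The function names and toy sizes are illustrative choices and are not taken from the paper's code.

```python
import numpy as np

def softmax_cols(x):
    """Column-wise softmax: every column of the result sums to 1."""
    shifted = x - x.max(axis=0, keepdims=True)  # subtract max for numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=0, keepdims=True)

def coattention_summaries(E_D, E_Q):
    """E_D: (h, m+1) document encoding, E_Q: (h, n+1) question encoding."""
    A = E_D.T @ E_Q                # affinity matrix, shape (m+1, n+1)
    S_D = E_Q @ softmax_cols(A.T)  # document summary, shape (h, m+1)
    S_Q = E_D @ softmax_cols(A)    # question summary, shape (h, n+1)
    return A, S_D, S_Q

rng = np.random.default_rng(0)
h, m, n = 4, 6, 3                  # toy sizes, chosen only for illustration
E_D = rng.standard_normal((h, m + 1))
E_Q = rng.standard_normal((h, n + 1))
A, S_D, S_Q = coattention_summaries(E_D, E_Q)
print(A.shape, S_D.shape, S_Q.shape)  # (7, 4) (4, 7) (4, 4)
```

Each column of `S_D` is a convex combination of question encodings, and each column of `S_Q` is a convex combination of document encodings, which is what makes the two representations codependent.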

To make the question-aware context representation even deeper and more feature-rich, an output (called the co-attention context, [math]\displaystyle{ C_1^D }[/math]) of the first co-attention layer is fed directly into the decoder using a residual connection.

\begin{align} {C_1^D} = S_1^Q softmax(A^T) \in R^{h×m} \end{align}

Note that the model drops the dimension corresponding to the sentinel vector. The summaries also get encoded after this stage, using two bidirectional LSTMs with shared variables.

\begin{align} {E_2^D} = BiLSTM_2(S_1^D) \in R^{2h×m} \end{align} \begin{align} {E_2^Q} = BiLSTM_2(S_1^Q) \in R^{2h×n} \end{align}

Finally, [math]\displaystyle{ E_2^D }[/math] and [math]\displaystyle{ E_2^Q }[/math] are fed into the second co-attention layer. As in the first co-attention layer, three outputs are produced: [math]\displaystyle{ S_2^D, S_2^Q, C_2^D }[/math]. However, [math]\displaystyle{ S_2^Q }[/math] is not used. These co-attention modules can easily be stacked to create a deeper attention mechanism.

The outputs of the second co-attention layer are concatenated with residual connections from [math]\displaystyle{ E_1^D, E_2^D, S_1^D, C_1^D }[/math]. The final output of the model is obtained by passing the concatenated representation through another bidirectional LSTM:

\begin{align} U = BiLSTM(concat(E_1^D;E_2^D;S_1^D;S_2^D;C_1^D;C_2^D)) \in R^{2h×m} \end{align}
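To make the size of the concatenated input concrete, the sketch below stacks random placeholder arrays with the shapes implied by the equations above. It assumes that the sentinel column has already been dropped from every first-layer term and that the second-layer outputs inherit the [math]\displaystyle{ 2h }[/math] row count of [math]\displaystyle{ E_2^D }[/math] and [math]\displaystyle{ E_2^Q }[/math]. The arrays carry no trained values; only the shapes are meaningful.

```python
import numpy as np

h, m = 4, 6                          # toy sizes, chosen only for illustration
rng = np.random.default_rng(0)

# Placeholders for the first-layer terms (h rows each, sentinel already removed).
E1_D = rng.standard_normal((h, m))
S1_D = rng.standard_normal((h, m))
C1_D = rng.standard_normal((h, m))

# Placeholders for the second-layer terms (2h rows each).
E2_D = rng.standard_normal((2 * h, m))
S2_D = rng.standard_normal((2 * h, m))
C2_D = rng.standard_normal((2 * h, m))

# Residual concatenation along the feature axis, one column per document word.
stacked = np.concatenate([E1_D, E2_D, S1_D, S2_D, C1_D, C2_D], axis=0)
print(stacked.shape)  # (36, 6), i.e. (9h, m)
```

Under these assumptions the final bidirectional LSTM reads a [math]\displaystyle{ 9h }[/math]-dimensional vector per document position and maps it to [math]\displaystyle{ 2h }[/math] dimensions.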

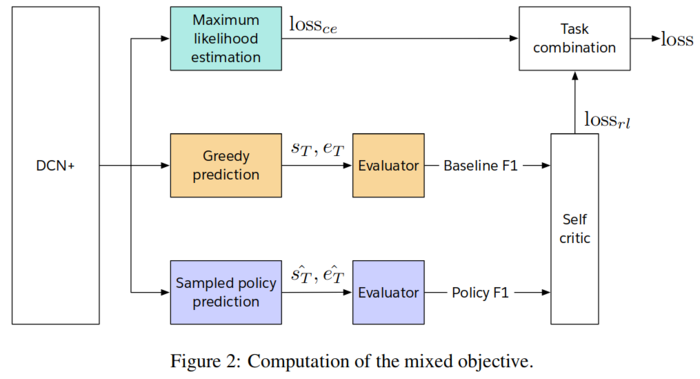

Mixed objective using self-critical policy learning

DCN produces a distribution over the start and end positions of the answer span. Because the decoder is dynamic, it estimates separate distributions over the start and end positions at every decoding step. The cross-entropy objective sums their negative log-likelihoods over all decoding steps:

\begin{align} l_{ce}(\theta) = - \sum_{t} (log \ p_t^{start}(s|s_{t-1},e_{t-1};\theta) + log \ p_t^{end}(e|s_{t-1},e_{t-1};\theta)) \end{align}

In the above equation, [math]\displaystyle{ s }[/math] and [math]\displaystyle{ e }[/math] denote the start and end points of the ground truth answer, and [math]\displaystyle{ s_t }[/math] and [math]\displaystyle{ e_t }[/math] denote the greedy estimates of the start and end positions at the [math]\displaystyle{ t }[/math]th decoding time step. Similarly, [math]\displaystyle{ p_t^{start} \in R^m }[/math] and [math]\displaystyle{ p_t^{end} \in R^m }[/math] denote the distributions over the start and end positions respectively. The problem with this loss function is that it ignores the F1 metric used to evaluate the model. Two metrics are used to measure the accuracy of QA models. The first is exact match, a binary score: if the answer string differs from the ground truth answer by even a single character, the exact match score is zero. The second is the F1 score, which measures the degree of word overlap between the predicted answer and the ground truth. For example, suppose the model proposes two answer spans, [math]\displaystyle{ A }[/math] and [math]\displaystyle{ B }[/math], neither of which matches the ground truth positions. If A has an exact string match with the ground truth answer but B does not, the cross-entropy loss still penalizes both of them equally. If F1 scores are included in the loss, however, B is penalized and A is not.
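The two metrics can be sketched in a few lines of Python. This is a simplified version that splits on whitespace and compares strings as given. The official SQuAD evaluation additionally normalizes the text (for example case and punctuation), which is omitted here, and the function names are illustrative.

```python
from collections import Counter

def exact_match(prediction, truth):
    """1.0 if the two answer strings are identical, otherwise 0.0."""
    return float(prediction == truth)

def f1_score(prediction, truth):
    """Harmonic mean of token-level precision and recall."""
    pred_tokens = prediction.split()
    true_tokens = truth.split()
    # Multiset intersection counts each shared token at most as often as it
    # occurs in both answers.
    overlap = sum((Counter(pred_tokens) & Counter(true_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(true_tokens)
    return 2 * precision * recall / (precision + recall)

truth = "in the late 1990s"
prediction = "the late 1990s"        # misses one word of the ground truth
print(exact_match(prediction, truth))         # 0.0
print(round(f1_score(prediction, truth), 3))  # 0.857
```

The near-miss prediction gets no credit under exact match but most of the credit under F1, which is the graded signal that the cross-entropy loss over positions cannot provide.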

The main problem with including the F1 score directly in the cost function is that it is non-differentiable. A trick from (Sutton et al., 1999; Schulman et al., 2015) is used to approximate the expected gradient. For this, DCN+ uses a self-critical reinforcement learning objective.

\begin{align} l_{rl}(\theta) = -E_{\hat{\tau} \sim p_\tau} [R(s,e,\hat{s}_T,\hat{e}_T;\theta)] \end{align}

\begin{align} \approx -E_{\hat{\tau} \sim p_\tau} [F_1 (ans(\hat{s}_T, \hat{e}_T), ans(s, e)) - F_1(ans(s_T, e_T), ans(s, e))] \end{align}

Here [math]\displaystyle{ \hat{s}_t \sim p_t^{start} }[/math] and [math]\displaystyle{ \hat{e}_t \sim p_t^{end} }[/math] denote the start and end positions sampled from the estimated distributions at the [math]\displaystyle{ t }[/math]th decoding step. [math]\displaystyle{ \hat{\tau} }[/math] is the sequence of sampled start and end positions over all [math]\displaystyle{ T }[/math] decoder steps, [math]\displaystyle{ R }[/math] is the expected reward, and [math]\displaystyle{ F_1 }[/math] is the F1 score between the predicted answer and the ground truth answer. Rather than using the raw F1 score as the reward, a baseline is subtracted from it. Previous studies show that using a baseline for the reward reduces the variance of gradient estimates and facilitates convergence. DCN+ uses a self-critic as the baseline: the F1 score produced by the current model during greedy inference.
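The self-critical reward can be sketched as follows. This is a minimal illustration under several assumptions: only the distributions of the final decoding step are used, spans are inclusive token indices, the expectation is approximated by a single sample, and the surrogate loss is the standard score-function (REINFORCE) form, whose gradient an automatic differentiation framework would use as the policy gradient estimate. The helper names and the example sentence are hypothetical.

```python
import numpy as np
from collections import Counter

def f1_tokens(pred, truth):
    """Token-overlap F1 between two token lists."""
    overlap = sum((Counter(pred) & Counter(truth)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(pred), overlap / len(truth)
    return 2 * precision * recall / (precision + recall)

def answer(doc_tokens, span):
    """Tokens of an inclusive (start, end) span; empty if end < start."""
    start, end = span
    return doc_tokens[start:end + 1]

def self_critical_reward(doc_tokens, true_span, sampled_span, greedy_span):
    """F1 of the sampled answer minus F1 of the greedy answer (the baseline)."""
    truth = answer(doc_tokens, true_span)
    return (f1_tokens(answer(doc_tokens, sampled_span), truth)
            - f1_tokens(answer(doc_tokens, greedy_span), truth))

def surrogate_loss(p_start, p_end, doc_tokens, true_span, rng):
    """Single-sample surrogate: -reward * log-probability of the sampled span."""
    sampled = (int(rng.choice(len(p_start), p=p_start)),
               int(rng.choice(len(p_end), p=p_end)))
    greedy = (int(np.argmax(p_start)), int(np.argmax(p_end)))
    reward = self_critical_reward(doc_tokens, true_span, sampled, greedy)
    log_prob = np.log(p_start[sampled[0]]) + np.log(p_end[sampled[1]])
    return -reward * log_prob, reward

# Hypothetical document; the ground truth answer is "in the late 1990s".
doc = "she became popular in the late 1990s".split()
reward = self_critical_reward(doc, true_span=(3, 6),
                              sampled_span=(3, 6), greedy_span=(4, 6))
print(round(reward, 3))  # 0.143
```

A sample that beats the greedy prediction receives a positive reward and has its probability increased, while a sample that does worse receives a negative reward. When the sample and the greedy prediction coincide, the reward is zero and the step contributes no gradient.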

Dataset

The SQuAD dataset (Reference: Stanford NLP Group) was used to train the network. The SQuAD 1.1 dataset contains 100 000 questions, based on a set of Wikipedia articles. These questions are designed to be answered by a segment of text from the article. The solution to each question is represented by a start location and the text of the answer. An example question-answer pair from the SQuAD 2.0 dataset is: Q: "When did Beyonce start becoming popular?", A: (text: "in the late 1990s", start: 269).

The SQuAD 2.0 dataset augments the SQuAD 1.1 collection with 50 000 unanswerable questions, designed in an adversarial manner. Samples were generated by crowdworkers in both cases.
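Since each answer is stored as a start location plus the answer text, the end of the span has to be derived. The sketch below does this for the example above, treating the start as a character offset into the article. The dictionary keys mirror the example as written here (the released JSON files use different key names, such as `answer_start`), and the context string is a synthetic placeholder rather than the real Wikipedia paragraph.

```python
def answer_char_span(answer):
    """Return (start, end) character offsets, with an exclusive end."""
    start = answer["start"]
    end = start + len(answer["text"])
    return start, end

answer = {"text": "in the late 1990s", "start": 269}
start, end = answer_char_span(answer)
print(start, end)  # 269 286

# Synthetic context: 269 filler characters followed by the answer text.
context = "." * 269 + answer["text"] + "."
print(context[start:end] == answer["text"])  # True
```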

Experiments

To achieve optimal performance, the hyperparameters and training environment are tuned, with the hyperparameters of DCN carried over. The model was trained and evaluated on the Stanford Question Answering Dataset (SQuAD). The documents are tokenized with the reversible tokenizer from Stanford CoreNLP. For word embeddings, pre-trained GloVe vectors (trained on 840B tokens of Common Crawl) and the character n-gram embeddings of Hashimoto et al. (2017) are used. These embeddings are then concatenated with context vectors (CoVe) trained on WMT. Words that are not found in the vocabulary have their embedding and context vectors set to zero. The optimizer is Adam, and dropout is applied to the word embeddings, zeroing a word embedding with a probability of 0.075. PyTorch is used to build the model.
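The two embedding rules described above (zero vectors for out-of-vocabulary words, and dropout that zeros whole word embeddings) can be sketched in NumPy as follows. The vocabulary, the embedding table, and the function name are toy placeholders, and the sketch does not rescale the surviving embeddings, since the text does not say whether the original implementation does.

```python
import numpy as np

def embed(tokens, vocab, table, rng, drop_prob=0.075, train=True):
    """Look up word vectors; unknown words and dropped words become zeros."""
    out = np.zeros((len(tokens), table.shape[1]))
    for i, tok in enumerate(tokens):
        idx = vocab.get(tok)
        if idx is not None:          # out-of-vocabulary rows stay all-zero
            out[i] = table[idx]
    if train:
        # One keep/drop decision per word, applied to the whole vector.
        keep = rng.random(len(tokens)) >= drop_prob
        out = out * keep[:, None]
    return out

rng = np.random.default_rng(0)
vocab = {"late": 0, "1990s": 1}                  # toy vocabulary
table = rng.standard_normal((len(vocab), 5))     # toy 5-dimensional embeddings
tokens = ["late", "1990s", "zzz"]                # "zzz" is out of vocabulary

vectors = embed(tokens, vocab, table, rng, train=False)
print(vectors.shape)  # (3, 5)
```

Dropping entire word vectors, rather than individual dimensions, forces the model to rely on the surrounding context for the affected positions.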

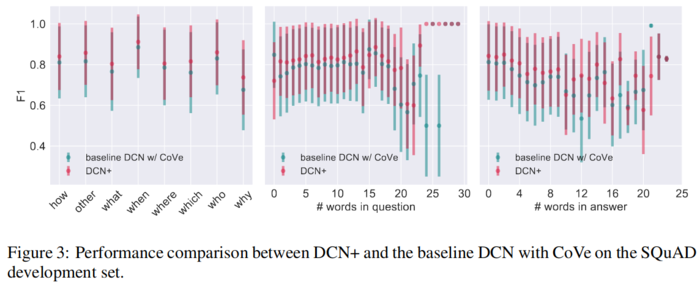

Comparison to baseline DCN with CoVe: The experiments in the paper show that DCN+ outperforms the baseline by 3.2% exact match accuracy and 3.2% F1 on the SQuAD development set. In particular, DCN+ gives significant advantages for long questions.

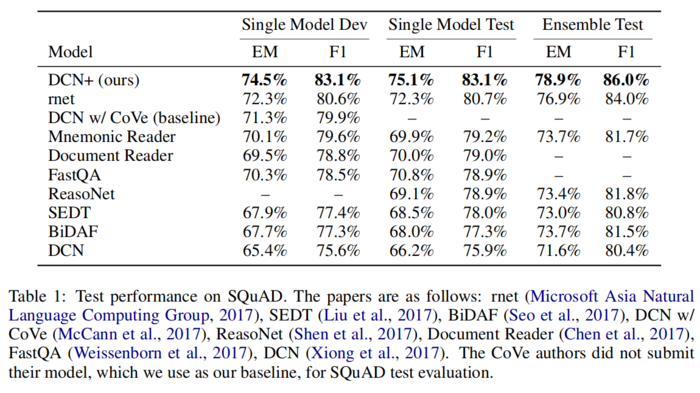

Results

At the time of submission, the model was able to achieve state-of-the-art results on the SQuAD, outperforming the second model on the leaderboard by 2.0% both on the exact match and F1 scores. It is worth mentioning that a 5% improvement was also achieved with respect to the original DCN model.

In general, DCN+ was able to achieve a consistent performance improvement in almost every question category.

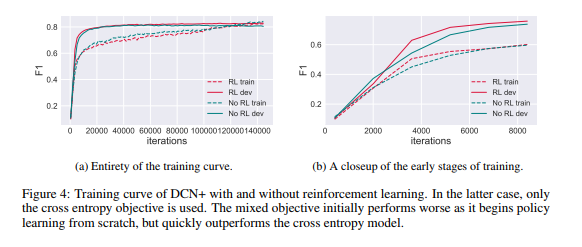

The training curves for DCN+ with and without reinforcement learning are shown in Figure 4 to illustrate the effectiveness of the proposed mixed objective.

Ablation Study

An analysis of the significance of each part of the model found that the deep residual coattention contributed the most to the overall performance. The second highest contributor was the mixed objective. The sparse mixture of experts layer in the decoder also provided some minor contributions to improving the overall performance.

Summary and Critiques

This paper introduces a novel model for question answering in which the cross-entropy loss commonly used for such problems is combined with self-critical policy learning. The rewards are obtained from word overlap to address the misalignment between the evaluation metric and the optimization objective. The paper improves the state of the art on a popular question answering dataset.

The critical drawback of the paper is that it only shows experimental improvements on one question answering dataset, whereas previous works in the same field have reported performance on at least three different comprehensive datasets. The paper is also only an incremental improvement over the previous algorithm, DCN, which was released a year earlier. For the policy learning objective, the authors treat the task as a multi-task learning problem in which the two losses are linearly combined. The authors should have used a weighted combination instead, as the positional match objective using cross entropy is far more important than the word overlap objective with the ground truth. Additionally, some of the methods adopted by the authors are not intuitive, and little explanation is given for them. For example, it is not clear why F1 scores are used as RL rewards rather than other distance objectives commonly used in previous works in the same field, such as cross entropy.

The authors mention a common problem with using reinforcement learning in NLP. NLP domains are discontinuous and discrete, and agents have to explore them repeatedly to find a good policy. RL is very data hungry, but NLP domains do not offer sufficient data for exploration in most cases. The paper says that it treats the optimization problem as a multi-task learning problem to get around the exploration problem, but it is not clear how this is achieved.

Other Sources

- An easy to understand blog on the base DCN model can be found at [1].

- TensorFlow source code for this model can be found at [2].

References

Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio. Neural machine translation by jointly learning to align and translate. In ICLR, 2015.

Dzmitry Bahdanau, Philemon Brakel, Kelvin Xu, Anirudh Goyal, Ryan Lowe, Joelle Pineau, Aaron C. Courville, and Yoshua Bengio. An actor-critic algorithm for sequence prediction. In ICLR, 2017.

Danqi Chen, Adam Fisch, Jason Weston, and Antoine Bordes. Reading Wikipedia to answer open-domain questions. In ACL, 2017.

Nina Dethlefs and Heriberto Cuayáhuitl. Combining hierarchical reinforcement learning and bayesian networks for natural language generation in situated dialogue. In Proceedings of the 13th European Workshop on Natural Language Generation, pp. 110–120. Association for Computational Linguistics, 2011.

Evan Greensmith, Peter L. Bartlett, and Jonathan Baxter. Variance reduction techniques for gradient estimates in reinforcement learning. Journal of Machine Learning Research, 5:1471–1530, 2001.

Kazuma Hashimoto, Caiming Xiong, Yoshimasa Tsuruoka, and Richard Socher. A joint many-task model: Growing a neural network for multiple NLP tasks. In EMNLP, 2017.

Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 770–778, 2016.

Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural Computation, 9(8):1735–1780, 1997.

Alex Kendall, Yarin Gal, and Roberto Cipolla. Multi-task learning using uncertainty to weigh losses for scene geometry and semantics. CoRR, abs/1705.07115, 2017.

Diederik P. Kingma and Jimmy Ba. Adam: A method for stochastic optimization. CoRR, abs/1412.6980, 2014.

Vijay R. Konda and John N. Tsitsiklis. Actor-critic algorithms. In NIPS, 1999.

Jiwei Li, Will Monroe, Alan Ritter, Michel Galley, Jianfeng Gao, and Dan Jurafsky. Deep reinforcement learning for dialogue generation. In EMNLP, 2016.

Rui Liu, Junjie Hu, Wei Wei, Zi Yang, and Eric Nyberg. Structural embedding of syntactic trees for machine comprehension. In ACL, 2017.

Jiasen Lu, Jianwei Yang, Dhruv Batra, and Devi Parikh. Hierarchical question-image co-attention for visual question answering. In NIPS, 2016.

Christopher D. Manning, Mihai Surdeanu, John Bauer, Jenny Rose Finkel, Steven Bethard, and David McClosky. The stanford corenlp natural language processing toolkit. In ACL, 2014.

Bryan McCann, James Bradbury, Caiming Xiong, and Richard Socher. Learned in translation: Contextualized word vectors. In NIPS, 2017.

Microsoft Asia Natural Language Computing Group. R-net: Machine reading comprehension with self-matching networks. 2017.

Karthik Narasimhan, Tejas D. Kulkarni, and Regina Barzilay. Language understanding for text-based games using deep reinforcement learning. In EMNLP, 2015.

Romain Paulus, Caiming Xiong, and Richard Socher. A deep reinforced model for abstractive summarization. CoRR, abs/1705.04304, 2017.

Jeffrey Pennington, Richard Socher, and Christopher D. Manning. Glove: Global vectors for word representation. In EMNLP, 2014.

Pranav Rajpurkar, Jian Zhang, Konstantin Lopyrev, and Percy Liang. Squad: 100,000+ questions for machine comprehension of text. In EMNLP, 2016.

John Schulman, Nicolas Heess, Theophane Weber, and Pieter Abbeel. Gradient estimation using stochastic computation graphs. In NIPS, 2015.

Min Joon Seo, Aniruddha Kembhavi, Ali Farhadi, and Hannaneh Hajishirzi. Bidirectional attention flow for machine comprehension. In ICLR, 2017.

Noam Shazeer, Azalia Mirhoseini, Krzysztof Maziarz, Andy Davis, Quoc Le, Geoffrey Hinton, and Jeff Dean. Outrageously large neural networks: The sparsely-gated mixture-of-experts layer. In ICLR, 2017.

Yelong Shen, Po-Sen Huang, Jianfeng Gao, and Weizhu Chen. Reasonet: Learning to stop reading in machine comprehension. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 1047–1055. ACM, 2017.

Richard S. Sutton, David A. McAllester, Satinder P. Singh, and Yishay Mansour. Policy gradient methods for reinforcement learning with function approximation. In NIPS, 1999.

Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, and Illia Polosukhin. Attention is all you need. In NIPS, 2017.

Oriol Vinyals, Meire Fortunato, and Navdeep Jaitly. Pointer networks. In NIPS, 2015.

Shuohang Wang and Jing Jiang. Machine comprehension using match-lstm and answer pointer. In ICLR, 2017.

Dirk Weissenborn, Georg Wiese, and Laura Seiffe. Making neural qa as simple as possible but not simpler. In CoNLL, 2017.

Yonghui Wu, Mike Schuster, Zhifeng Chen, Quoc V Le, Mohammad Norouzi, Wolfgang Macherey, Maxim Krikun, Yuan Cao, Qin Gao, Klaus Macherey, et al. Google’s neural machine translation system: Bridging the gap between human and machine translation. arXiv preprint arXiv:1609.08144, 2016.

Caiming Xiong, Victor Zhong, and Richard Socher. Dynamic coattention networks for question answering. In ICLR, 2017.

Stanford NLP Group. Squad 2.0: The Stanford Question Answering Dataset. https://rajpurkar.github.io/SQuAD-explorer/. Accessed October 24, 2018.