CatBoost: unbiased boosting with categorical features

Presented by

Jessie Man Wai Chin, Yi Lin Ooi, Yaqi Shi, Shwen Lyng Ngew

Introduction

This paper presents a deep convolutional neural network architecture codenamed Inception. The architecture improves the utilization of computing resources by increasing the depth and width of the network while keeping the computational budget constant. DNNs are powerful because they can perform arbitrary parallel computation for a modest number of steps. The proposed architecture was implemented as a 22-layer deep network called GoogLeNet, which significantly outperformed the state of the art in the ImageNet Large-Scale Visual Recognition Challenge 2014 (ILSVRC14).

Previous Work

The current architecture builds on the network-in-network approach proposed by Lin et al. [1] to increase the representational power of neural networks. That approach adds 1×1 convolutional layers to the network; here they serve mainly as dimension reduction modules, which significantly reduces the number of parameters of the model. The paper also took inspiration from Regions with Convolutional Neural Networks (R-CNN), proposed by Girshick et al. [2]. R-CNN divides the overall detection problem into two subproblems:

1. Utilize low-level cues for potential object proposals

2. Classify object categories with CNN

Motivation

Gradient boosting is a popular and powerful machine learning method that often improves the accuracy of a model. It builds a strong predictor by iteratively combining weaker base predictors, which amounts to performing gradient descent in function space. Nevertheless, a statistical issue makes gradient boosting less powerful than it could be. The paper identifies this issue as a prediction shift caused by target leakage.

A prediction shift is a mismatch between the predictions a model makes on its training samples and the predictions it makes on test samples. It arises because the model is trained using all training samples, and the training samples are not fully representative of the test samples. In gradient boosting, the current estimate $F^{t}$ is built at each iteration on top of the previous model $F^{t-1}$. Concretely, a prediction shift means that the conditional distribution of the prediction for a training example $\mathbf{x}_k$ is shifted from that for a test example $\mathbf{x}$: $$F^{t-1}(\mathbf{x}_k) \mid \mathbf{x}_k \quad \text{versus} \quad F^{t-1}(\mathbf{x}) \mid \mathbf{x}$$
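To make the iteration from $F^{t-1}$ to $F^{t}$ concrete, the following is a minimal sketch of plain gradient boosting with squared loss and one-split regression stumps. It is not the method proposed in the paper; the function names (`fit_stump`, `gradient_boost`), the learning rate, and the toy data are assumptions made for illustration. The point to notice is that each base predictor is fit on the same training targets that the previous model has already seen, which is where the leakage described above can enter.

```python
import numpy as np

def fit_stump(x, residuals):
    """Fit a one-split regression stump to residuals on a 1-D feature.

    Assumes x contains at least two distinct values.
    """
    best = None
    for threshold in np.unique(x)[:-1]:  # candidate split points
        left = x <= threshold
        left_value = residuals[left].mean()
        right_value = residuals[~left].mean()
        pred = np.where(left, left_value, right_value)
        loss = np.sum((residuals - pred) ** 2)
        if best is None or loss < best[0]:
            best = (loss, threshold, left_value, right_value)
    _, threshold, left_value, right_value = best
    return lambda z: np.where(z <= threshold, left_value, right_value)

def gradient_boost(x, y, n_rounds=50, learning_rate=0.1):
    """Squared-loss gradient boosting: F^t = F^{t-1} + learning_rate * h^t."""
    base = y.mean()                                # F^0 is the constant mean
    current = np.full_like(y, base, dtype=float)   # F^{t-1} on the training set
    stumps = []
    for _ in range(n_rounds):
        residuals = y - current       # negative gradient of 0.5 * (y - F)^2
        h = fit_stump(x, residuals)   # h^t is fit on the same training targets
        current = current + learning_rate * h(x)
        stumps.append(h)
    return lambda z: base + learning_rate * sum(h(z) for h in stumps)

# Toy data (hypothetical): a noisy sine curve.
rng = np.random.default_rng(0)
x_train = rng.uniform(0, 1, 200)
y_train = np.sin(6 * x_train) + rng.normal(0, 0.3, 200)

model = gradient_boost(x_train, y_train)
train_mse = np.mean((y_train - model(x_train)) ** 2)
```

Comparing `train_mse` with the error on freshly drawn samples from the same process is one simple way to observe the gap between training and test predictions.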

Model Architecture

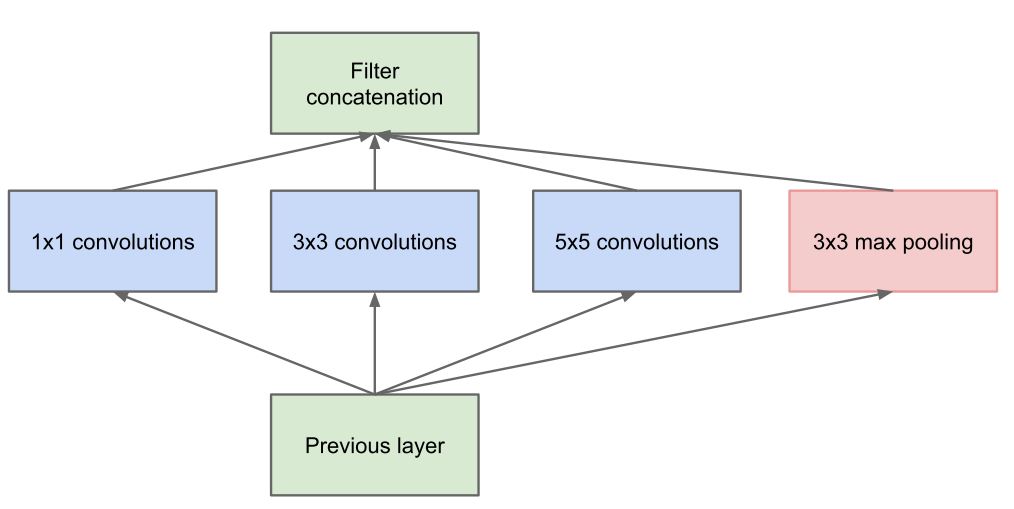

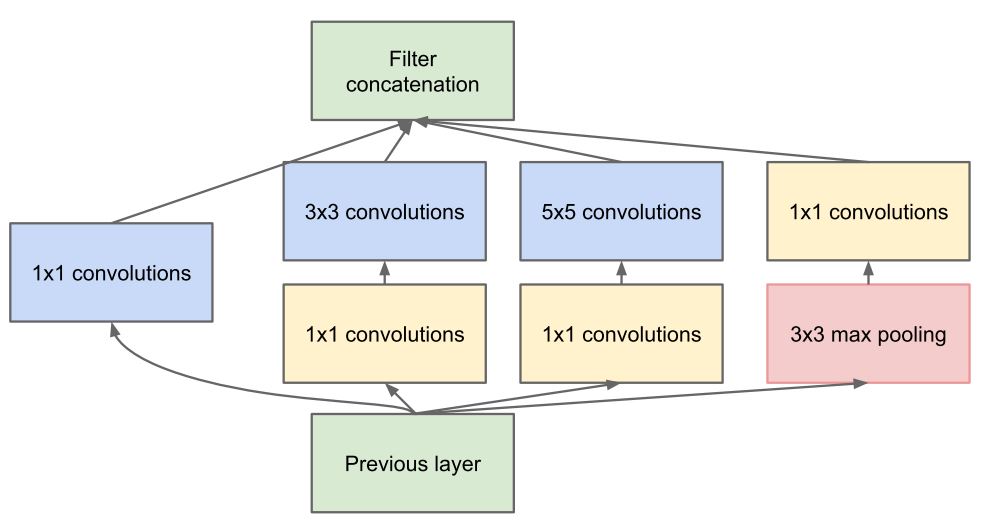

The Inception architecture is built by stacking blocks called Inception modules. The idea is to increase the depth and width of the model by finding a locally optimal sparse structure and repeating it spatially. Traditionally, each layer of a convolutional network requires choosing between a pooling operation and a convolution, and choosing the convolution size (1×1, 3×3 or 5×5), even though all of these options can benefit the modeling power of the network. The Inception module avoids the choice by computing all of these options in parallel (Fig. 1a). Following the layer-by-layer construction of Arora et al. [3], the correlation statistics of the last layer are analyzed and clustered into groups of units with high correlation. These clusters form the units of the next layer and are connected to the units of the previous layer. Each unit from the earlier layer corresponds to some region of the input image, and the outputs of these units are concatenated into a filter bank. Additionally, because pooling has proven beneficial in convolutional networks, a parallel pooling path is added to each module.

The Inception module in its naïve form (Fig. 1a) is computationally expensive. Moreover, concatenating the outputs of the various convolutions and the pooling layer produces an output volume with a very large number of channels, so the claim that this architecture uses memory and computation more efficiently seems counterintuitive. This issue is addressed by placing a 1×1 convolution before the costly 3×3 and 5×5 convolutions (Fig. 1b). The 1×1 convolution was first introduced by Lin et al. under the name network in network [1]. Mathematically, a 1×1 convolution is equivalent to a small fully connected layer applied across channels at every spatial position: it reduces the dimension of the filter space (the depth of the output volume), and each one is immediately followed by a ReLU activation, which adds non-linearity. The small kernel size (1×1) also helps reduce over-fitting. This dimensionality reduction shields the next stage from the large number of input filters of the previous stage (Footnote 2).
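The effect of placing a 1×1 convolution before a 5×5 convolution can be sketched with a few lines of NumPy. The channel sizes below (192 input channels, 16 reduced channels, 32 output channels) and the helper names `conv1x1_relu` and `conv_weights` are assumptions chosen for illustration, not values taken from this summary. Biases are ignored in the weight count.

```python
import numpy as np

def conv1x1_relu(x, weights):
    """1x1 convolution followed by ReLU on an (H, W, C_in) input.

    weights has shape (C_in, C_out); the same linear map is applied
    independently at every spatial position.
    """
    return np.maximum(x @ weights, 0.0)

def conv_weights(kernel, c_in, c_out):
    """Number of weights in a kernel x kernel convolution (biases ignored)."""
    return kernel * kernel * c_in * c_out

# Hypothetical channel sizes, chosen only for illustration.
c_in, c_reduced, c_out = 192, 16, 32

# 5x5 convolution applied directly to all input channels.
direct = conv_weights(5, c_in, c_out)

# 1x1 reduction to c_reduced channels, then the 5x5 convolution.
reduced = conv_weights(1, c_in, c_reduced) + conv_weights(5, c_reduced, c_out)

# The 1x1 layer changes only the channel depth, not the spatial size.
x = np.random.default_rng(0).normal(size=(28, 28, c_in))
w = np.random.default_rng(1).normal(size=(c_in, c_reduced))
y = conv1x1_relu(x, w)

print(direct, reduced, y.shape)
```

With these assumed sizes the direct path needs 153600 weights and the reduced path 15872, roughly a tenfold saving, while the spatial resolution of the feature map is unchanged.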

The combination of convolutions at several scales resembles how human vision interprets information: the visual system also processes information at various scales and combines them to extract features from different scales simultaneously. Similarly, in the Inception design the network-in-network (1×1) layers extract the fine-grained details of the input volume, while the medium- and large-sized filters cover a larger receptive field of the input and extract features from it. The pooling operations reduce the spatial size, which helps counter overfitting.

ILSVRC 2014 Challenge Results

The proposed architecture was implemented through a deep network called GoogLeNet as a submission for ILSVRC14’s Classification Challenge and Detection Challenge.

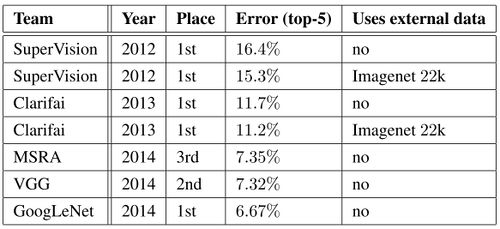

The classification challenge is to classify images into one of 1000 categories in the ImageNet hierarchy. Accuracy is measured by the top-5 error rate, the percentage of test examples for which the correct class is not among the top 5 predicted classes. The results of the classification challenge are shown in Table 1. The final submission of GoogLeNet obtains a top-5 error of 6.67% on both the validation and testing data, ranking first among all participants and significantly outperforming the top teams of previous years, without using external data.
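The top-5 error defined above can be computed as in the following minimal sketch. The function name `top_k_error` and the toy scores are assumptions for illustration; the scores are not challenge data.

```python
import numpy as np

def top_k_error(scores, labels, k=5):
    """Fraction of examples whose true label is not among the k highest scores.

    scores: (n_examples, n_classes) array of class scores.
    labels: (n_examples,) array of integer class indices.
    """
    # Indices of the k largest scores per row (order within the k is irrelevant).
    top_k = np.argpartition(scores, -k, axis=1)[:, -k:]
    hit = (top_k == labels[:, None]).any(axis=1)
    return 1.0 - hit.mean()

# Toy example: 4 examples over 7 classes, all sharing the same score vector.
scores = np.tile([0.9, 0.8, 0.7, 0.6, 0.5, 0.1, 0.0], (4, 1))
labels = np.array([0, 4, 5, 6])  # classes 5 and 6 fall outside the top 5

top5 = top_k_error(scores, labels, k=5)
top1 = top_k_error(scores, labels, k=1)
```

Here two of the four true labels are outside the five highest-scoring classes, so `top5` is 0.5, while only the first example is a top-1 hit, so `top1` is 0.75.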

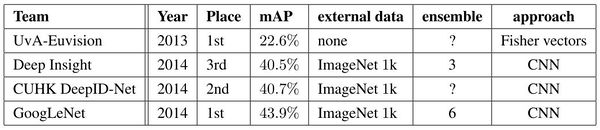

The ILSVRC detection challenge is to produce bounding boxes around objects in images among 200 classes. A detected object counts as correct if it matches the class of the ground truth and its bounding box overlaps the ground-truth box by at least 50%. Each image may contain multiple objects (at different scales) or none. Performance is reported as mean average precision (mAP). The results of the detection challenge are listed in Table 2. Using the Inception model as a region classifier, combined with Selective Search and an ensemble of 6 CNNs, GoogLeNet gave the top detection results, almost doubling the accuracy of the top model from 2013.
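The correctness criterion for a single detection can be sketched as follows. The sketch assumes that "overlap" is measured as intersection over union (the Jaccard index) and that boxes are given as `(x_min, y_min, x_max, y_max)`; the function names and the example boxes are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes (x_min, y_min, x_max, y_max)."""
    ax0, ay0, ax1, ay1 = box_a
    bx0, by0, bx1, by1 = box_b
    # Width and height of the intersection, clamped at zero when disjoint.
    inter_w = max(0.0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0.0, min(ay1, by1) - max(ay0, by0))
    inter = inter_w * inter_h
    union = (ax1 - ax0) * (ay1 - ay0) + (bx1 - bx0) * (by1 - by0) - inter
    return inter / union if union > 0 else 0.0

def is_correct_detection(pred_box, pred_class, gt_box, gt_class, threshold=0.5):
    """Same class as the ground truth and overlap of at least the threshold."""
    return pred_class == gt_class and iou(pred_box, gt_box) >= threshold

gt = (0, 0, 10, 10)
good = is_correct_detection((0, 0, 10, 6), "dog", gt, "dog")          # IoU = 0.6
shifted = is_correct_detection((5, 5, 15, 15), "dog", gt, "dog")      # IoU = 25/175
wrong_class = is_correct_detection((0, 0, 10, 6), "cat", gt, "dog")   # class mismatch
```

Only the first detection counts as correct: the second overlaps too little and the third has the wrong class.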

Conclusion

GoogLeNet outperformed previous deep learning networks, and it became a proof of concept that approximating the expected optimal sparse structure with readily available dense building blocks (the Inception modules) is a viable method for improving neural networks in computer vision. The main advantage of this method is a significant gain in quality at only a modest increase in computational requirements. Even without performing bounding box regression for object detection, the architecture gained a significant amount of quality with a modest amount of computational resources.

Critiques

With nearly 5 million parameters, GoogLeNet reduced the number of parameters by almost 92% compared to previous architectures such as VGGNet and AlexNet. This allowed Inception to be used in many big data applications where a huge amount of data had to be processed at a reasonable cost with limited computational capacity. However, the Inception network is still complex and sensitive to scaling: if the network is scaled up naively, large parts of the computational gains can be lost immediately. Also, the paper gives no clear description of the factors that led to the design decisions of the Inception architecture, which makes it harder to adapt to other applications while maintaining the same computational efficiency.
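As a rough check of the 92% figure, the arithmetic can be sketched as follows. The GoogLeNet count is the "nearly 5 million" from the text; the AlexNet count of about 60 million is an assumption (a commonly cited approximate figure) and is not stated in this summary.

```python
def relative_reduction(baseline, reduced):
    """Fraction of parameters removed when going from baseline to reduced."""
    return 1.0 - reduced / baseline

googlenet_params = 5e6   # "nearly 5 million", from the text
alexnet_params = 60e6    # assumption: approximate, commonly cited figure

reduction = relative_reduction(alexnet_params, googlenet_params)
print(f"{reduction:.1%}")  # prints "91.7%"
```

Under that assumption the reduction is about 91.7%, consistent with "almost 92%".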


References

[1] L. Bottou and Y. L. Cun. Large scale online learning. In Advances in neural information processing systems, pages 217–224, 2004.

[2] L. Breiman. Out-of-bag estimation, 1996.

[3] L. Breiman. Using iterated bagging to debias regressions. Machine Learning, 45(3):261–277, 2001.

[4] L. Breiman, J. Friedman, C. J. Stone, and R. A. Olshen. Classification and regression trees. CRC press, 1984.

[5] R. Caruana and A. Niculescu-Mizil. An empirical comparison of supervised learning algorithms. In Proceedings of the 23rd international conference on Machine learning, pages 161–168. ACM, 2006.

[6] B. Cestnik et al. Estimating probabilities: a crucial task in machine learning. In ECAI, volume 90, pages 147–149, 1990.

[7] O. Chapelle, E. Manavoglu, and R. Rosales. Simple and scalable response prediction for display advertising. ACM Transactions on Intelligent Systems and Technology (TIST), 5(4):61, 2015.

[8] T. Chen and C. Guestrin. Xgboost: A scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 785–794. ACM, 2016.

[9] M. Ferov and M. Modrý. Enhancing lambdamart using oblivious trees. arXiv preprint arXiv:1609.05610, 2016.

[10] J. Friedman, T. Hastie, and R. Tibshirani. Additive logistic regression: a statistical view of boosting. The annals of statistics, 28(2):337–407, 2000.

[11] J. Friedman, T. Hastie, and R. Tibshirani. The elements of statistical learning, volume 1. Springer series in statistics New York, 2001.

[12] J. H. Friedman. Greedy function approximation: a gradient boosting machine. Annals of statistics, pages 1189–1232, 2001.

[13] J. H. Friedman. Stochastic gradient boosting. Computational Statistics & Data Analysis, 38(4):367–378, 2002.

[14] A. Gulin, I. Kuralenok, and D. Pavlov. Winning the transfer learning track of yahoo!’s learning to rank challenge with yetirank. In Yahoo! Learning to Rank Challenge, pages 63–76, 2011.

[15] X. He, J. Pan, O. Jin, T. Xu, B. Liu, T. Xu, Y. Shi, A. Atallah, R. Herbrich, S. Bowers, et al. Practical lessons from predicting clicks on ads at facebook. In Proceedings of the Eighth International Workshop on Data Mining for Online Advertising, pages 1–9. ACM, 2014.

[16] G. Ke, Q. Meng, T. Finley, T. Wang, W. Chen, W. Ma, Q. Ye, and T.-Y. Liu. Lightgbm: A highly efficient gradient boosting decision tree. In Advances in Neural Information Processing Systems, pages 3149–3157, 2017.

[17] M. Kearns and L. Valiant. Cryptographic limitations on learning boolean formulae and finite automata. Journal of the ACM (JACM), 41(1):67–95, 1994.

[18] J. Langford, L. Li, and T. Zhang. Sparse online learning via truncated gradient. Journal of Machine Learning Research, 10(Mar):777–801, 2009.

[19] LightGBM. Categorical feature support. http://lightgbm.readthedocs.io/en/latest/Advanced-Topics.html#categorical-feature-support, 2017.

[20] LightGBM. Optimal split for categorical features. http://lightgbm.readthedocs.io/en/latest/Features.html#optimal-split-for-categorical-features, 2017.

[21] LightGBM. feature_histogram.cpp. https://github.com/Microsoft/LightGBM/blob/master/src/treelearner/feature_histogram.hpp, 2018.

[22] X. Ling, W. Deng, C. Gu, H. Zhou, C. Li, and F. Sun. Model ensemble for click prediction in bing search ads. In Proceedings of the 26th International Conference on World Wide Web Companion, pages 689–698. International World Wide Web Conferences Steering Committee, 2017.

[23] Y. Lou and M. Obukhov. Bdt: Gradient boosted decision tables for high accuracy and scoring efficiency. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 1893–1901. ACM, 2017.

[24] L. Mason, J. Baxter, P. L. Bartlett, and M. R. Frean. Boosting algorithms as gradient descent. In Advances in neural information processing systems, pages 512–518, 2000.

[25] D. Micci-Barreca. A preprocessing scheme for high-cardinality categorical attributes in classification and prediction problems. ACM SIGKDD Explorations Newsletter, 3(1):27–32, 2001.

[26] B. P. Roe, H.-J. Yang, J. Zhu, Y. Liu, I. Stancu, and G. McGregor. Boosted decision trees as an alternative to artificial neural networks for particle identification. Nuclear Instruments and Methods in Physics Research Section A: Accelerators, Spectrometers, Detectors and Associated Equipment, 543(2):577–584, 2005.

[27] L. Rokach and O. Maimon. Top–down induction of decision trees classifiers — a survey. IEEE Transactions on Systems, Man, and Cybernetics, Part C (Applications and Reviews), 35(4):476–487, 2005.

[28] D. B. Rubin. The bayesian bootstrap. The annals of statistics, pages 130–134, 1981.

[29] Q. Wu, C. J. Burges, K. M. Svore, and J. Gao. Adapting boosting for information retrieval measures. Information Retrieval, 13(3):254–270, 2010.

[30] K. Zhang, B. Schölkopf, K. Muandet, and Z. Wang. Domain adaptation under target and conditional shift. In International Conference on Machine Learning, pages 819–827, 2013.

[31] O. Zhang. Winning data science competitions. https://www.slideshare.net/ShangxuanZhang/winning-data-science-competitions-presented-by-owen-zhang, 2015.

[32] Y. Zhang and A. Haghani. A gradient boosting method to improve travel time prediction. Transportation Research Part C: Emerging Technologies, 58:308–324, 2015.