CRITICAL ANALYSIS OF SELF-SUPERVISION

| Line 7: | Line 7: | ||

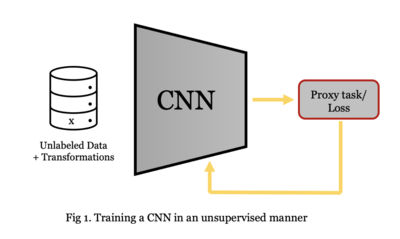

The main goal of self-supervised learning is to take advantage of vast amount of unlabeled data for training CNNs and finding a generalized image representation. | The main goal of self-supervised learning is to take advantage of vast amount of unlabeled data for training CNNs and finding a generalized image representation. | ||

In self-supervised learning, data generate ground truth labels per se by pretext tasks such as rotation estimation. For example, we have a picture of a cat without the label "cat". We rotate the cat image by 90 degrees clockwise and the CNN is trained in a way that to find the rotation axis. | In self-supervised learning, data generate ground truth labels per se by pretext tasks such as rotation estimation. For example, we have a picture of a cat without the label "cat". We rotate the cat image by 90 degrees clockwise and the CNN is trained in a way that to find the rotation axis. | ||

[[File:Unsupervised.png|400px|center]] | |||

== Previous Work == | == Previous Work == | ||

Revision as of 19:50, 26 November 2020

Presented by

Maral Rasoolijaberi

Introduction

This paper evaluates state-of-the-art unsupervised (self-supervised) methods for learning the weights of convolutional neural networks (CNNs), asking whether current self-supervision techniques can learn deep features from only one image. The main goal of self-supervised learning is to exploit the vast amounts of unlabeled data available to train CNNs and find a generalized image representation. In self-supervised learning, the data supply their own ground-truth labels through pretext tasks such as rotation estimation. For example, suppose we have a picture of a cat without the label "cat". We rotate the image by 90 degrees clockwise, and the CNN is trained to predict the rotation that was applied.
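The rotation pretext task can be illustrated with a minimal sketch. It assumes NumPy, a random array standing in for an unlabeled photo, and a hypothetical helper `make_rotation_batch`; none of these come from the paper. The point is only that the labels are produced from the image itself, with no human annotation.

```python
import numpy as np

def make_rotation_batch(image, rng):
    """Return four rotated copies of `image` and their pretext labels.

    Label k means the image was rotated by k * 90 degrees. np.rot90 rotates
    counter-clockwise, so this mirrors the clockwise example in the text.
    """
    rotations = [np.rot90(image, k=k, axes=(0, 1)) for k in range(4)]
    labels = np.arange(4)
    order = rng.permutation(4)  # shuffle so the label is not given away by position
    return [rotations[i] for i in order], labels[order]

rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))  # stand-in for an unlabeled photo (assumption)
views, labels = make_rotation_batch(image, rng)
```

A classifier trained on `(views, labels)` pairs has to recognise object orientation to succeed, which is how the pretext task is meant to push the network toward useful features.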

Previous Work

In recent literature, several papers addressed unsupervised learning methods and learning from a single sample.

A BiGAN [Donahue et al., 2017], or Bidirectional GAN, is a generative adversarial network plus an encoder. The generator maps latent samples to generated data, and the encoder performs the inverse mapping, from data back to the latent space. After a BiGAN is trained, the encoder can be used to produce a rich image representation. In the RotNet method [Gidaris et al., 2018], images are rotated and the CNN learns to predict which rotation was applied. DeepCluster [Caron et al., 2018] alternates between k-means clustering of the CNN features and updating the network weights with the cluster assignments as pseudo-labels, in order to learn stable feature representations under several image transformations.
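The clustering half of a DeepCluster-style loop can be sketched as follows. This is a simplified illustration, not the authors' implementation: the feature vectors are synthetic stand-ins for CNN activations, `kmeans_pseudo_labels` is a hypothetical helper, and the step that retrains the network on the pseudo-labels is omitted.

```python
import numpy as np

def kmeans_pseudo_labels(features, k, n_iters=10, seed=0):
    """Cluster feature vectors with plain k-means and return pseudo-labels."""
    rng = np.random.default_rng(seed)
    # Initialise centroids from k distinct samples (fancy indexing copies the rows).
    centroids = features[rng.choice(len(features), size=k, replace=False)]

    def assign(cents):
        # Distance from every sample to every centroid, shape (n_samples, k).
        dists = np.linalg.norm(features[:, None, :] - cents[None, :, :], axis=2)
        return dists.argmin(axis=1)

    for _ in range(n_iters):
        assignments = assign(centroids)
        for j in range(k):
            members = features[assignments == j]
            if len(members) > 0:  # leave an empty cluster's centroid unchanged
                centroids[j] = members.mean(axis=0)
    return assign(centroids), centroids

# Synthetic "features": two well-separated groups (an assumption for illustration).
rng = np.random.default_rng(1)
features = np.concatenate([
    rng.normal(0.0, 0.1, size=(20, 2)),
    rng.normal(5.0, 0.1, size=(20, 2)),
])
pseudo_labels, centroids = kmeans_pseudo_labels(features, k=2)
```

In the full method, these pseudo-labels would serve as classification targets for the next round of network training, after which the features are re-extracted and clustered again.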

Method

In self-supervision methods, a hypothesis function must be defined without target labels. Let [math]\displaystyle{ x }[/math] be a sample from the unlabeled dataset. The weights of the CNN are learned so as to minimize [math]\displaystyle{ ||h(x)-x|| }[/math], where [math]\displaystyle{ h(x) }[/math] is the hypothesis function given by the chosen method: BiGAN, RotNet, or DeepCluster. The paper uses AlexNet as the CNN, together with various data augmentation methods, including cropping, rotation, scaling, contrast changes, and added noise. To measure the quality of the features, the authors train a linear classifier on top of each convolutional layer of AlexNet to test whether the features are linearly separable. In general, the main purpose of a CNN is to reach a linearly separable representation of images. Next, they compare the results obtained with a million images from the ImageNet dataset against those obtained with a million augmented images generated from a single image.
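Generating a training set from a single image can be sketched as below. This is a rough illustration under stated assumptions: the helper `augment`, the output size, the parameter ranges, the restriction of rotation to multiples of 90 degrees, and the nearest-neighbour resize are all choices made here for brevity, not details taken from the paper.

```python
import numpy as np

def augment(image, rng, out_size=32):
    """Produce one augmented view of `image` with values in [0, 1]."""
    h, w, _ = image.shape
    # Cropping and scaling: random square crop, resized with nearest neighbour.
    crop = int(rng.integers(out_size // 2, min(h, w) + 1))
    top = int(rng.integers(0, h - crop + 1))
    left = int(rng.integers(0, w - crop + 1))
    patch = image[top:top + crop, left:left + crop]
    idx = np.arange(out_size) * crop // out_size
    patch = patch[idx][:, idx]
    # Rotation: multiples of 90 degrees only (a simplification).
    patch = np.rot90(patch, k=int(rng.integers(0, 4)))
    # Contrast change: stretch or compress values around the patch mean.
    factor = rng.uniform(0.6, 1.4)
    mean = patch.mean()
    patch = (patch - mean) * factor + mean
    # Additive Gaussian noise.
    patch = patch + rng.normal(0.0, 0.02, size=patch.shape)
    return np.clip(patch, 0.0, 1.0)

rng = np.random.default_rng(0)
source_image = rng.random((128, 128, 3))  # stand-in for the single source image
dataset = np.stack([augment(source_image, rng) for _ in range(8)])
```

Here only 8 views are drawn; the experiment described above uses a million augmented images, which would correspond to calling `augment` that many times on the same source image.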