AugMix: A New Data Augmentation Method to Increase the Robustness of the Algorithm

Presented by

Abhinav Chanana

Introduction

Machine learning algorithms often assume that the training data is a faithful representation of the data encountered during deployment. They generally ignore the possibility that inputs may be slightly corrupted, which makes models less robust and reduces their accuracy, since the models try to fit the noise when making predictions. Even a small amount of corruption can substantially degrade the performance of a variety of models: Hendrycks & Dietterich (2019) show that the classification error rises from 25% to 62% when corruptions are introduced on the ImageNet test set. Training on specific corruptions does not solve the problem, because it encourages the network to memorize those corruptions rather than generalize to unseen ones. The paper also provides evidence that networks trained on translation augmentations remain highly sensitive to pixel shifts. To address this, the paper proposes AugMix, a method that achieves new state-of-the-art results for robustness and uncertainty estimation while maintaining accuracy on standard benchmark datasets. The results are confirmed on the CIFAR-10, CIFAR-100, and ImageNet datasets. AugMix combines stochastic and diverse augmentations, a Jensen-Shannon divergence consistency loss, and a formulation for mixing multiple augmented images to achieve state-of-the-art performance.

Approach

At a high level, AugMix applies a set of basic augmentation techniques. These augmentations are layered to create a highly diverse set of augmented images, and the loss is computed using the Jensen-Shannon divergence. The method proposed by the authors can be divided into 3 major parts:

1. Augmentations: The authors use basic data augmentation chains, where each chain is a composition of augmentation operations taken from AutoAugment. A chain is created as shown in the figure above.

2. Mixing: The resulting images from these augmentation chains are combined by mixing. The authors chose elementwise convex combinations for simplicity. The k-dimensional vector of convex coefficients is randomly sampled from a Dirichlet(α, ..., α) distribution. Once these images are mixed, a “skip connection” combines the result of the augmentation chains with the original image through a second random convex combination, whose weight is sampled from a Beta(α, α) distribution.

3. Jensen-Shannon divergence: A Jensen-Shannon divergence consistency loss is used to enforce consistent predictions between the original image and its augmented versions.
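
The mixing and consistency steps described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names `augmix` and `js_consistency`, the defaults `width=3`, `max_depth=3`, and `alpha=1.0`, the toy operations in `toy_ops`, and the three-way form of the Jensen-Shannon term (one clean view plus two augmented views) are all assumptions made for this example.

```python
import numpy as np

# Toy stand-ins for augmentation operations (hypothetical, for illustration only).
# Each one maps an image with values in [0, 1] to another image in [0, 1].
toy_ops = [
    lambda x: x[:, ::-1],               # horizontal flip
    lambda x: np.roll(x, 2, axis=0),    # crude vertical translation
    lambda x: np.round(x * 4.0) / 4.0,  # posterize-like quantization
]

def augmix(image, operations, rng, width=3, max_depth=3, alpha=1.0):
    """Mix `width` augmentation chains, then blend the result with the original."""
    image = np.asarray(image, dtype=np.float64)
    # Convex coefficients over the chains, sampled from Dirichlet(alpha, ..., alpha).
    chain_weights = rng.dirichlet([alpha] * width)
    # Weight of the "skip connection", sampled from Beta(alpha, alpha).
    m = rng.beta(alpha, alpha)

    mixed = np.zeros_like(image)
    for w in chain_weights:
        augmented = image.copy()
        depth = int(rng.integers(1, max_depth + 1))  # chain length in [1, max_depth]
        for _ in range(depth):
            op = operations[int(rng.integers(len(operations)))]
            augmented = op(augmented)
        mixed += w * augmented  # elementwise convex combination of the chains
    # Second convex combination: original image vs. mixed augmentations.
    return m * image + (1.0 - m) * mixed

def js_consistency(p_orig, p_aug1, p_aug2, eps=1e-12):
    """Jensen-Shannon divergence among three distributions over the last axis."""
    p_mix = (p_orig + p_aug1 + p_aug2) / 3.0

    def kl(p, q):
        return np.sum(p * (np.log(p + eps) - np.log(q + eps)), axis=-1)

    return (kl(p_orig, p_mix) + kl(p_aug1, p_mix) + kl(p_aug2, p_mix)) / 3.0

rng = np.random.default_rng(0)
clean = rng.random((32, 32, 3))          # random array standing in for an image
augmented_view = augmix(clean, toy_ops, rng)
```

Because both mixing steps are convex combinations, the output stays within the value range of the input image. The consistency term is zero when the three predicted distributions agree and grows as they diverge, which is what pushes the model toward stable predictions across augmented views.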

Data Set Used

The authors use the following datasets for conducting the experiment.

1. CIFAR-10 - https://www.cs.toronto.edu/~kriz/cifar.html

2. CIFAR-100 - https://www.cs.toronto.edu/~kriz/cifar.html

3. ImageNet - http://image-net.org/download

Experiments

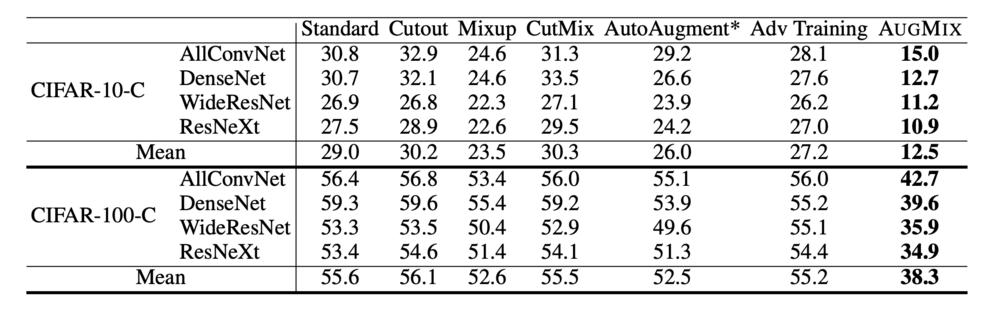

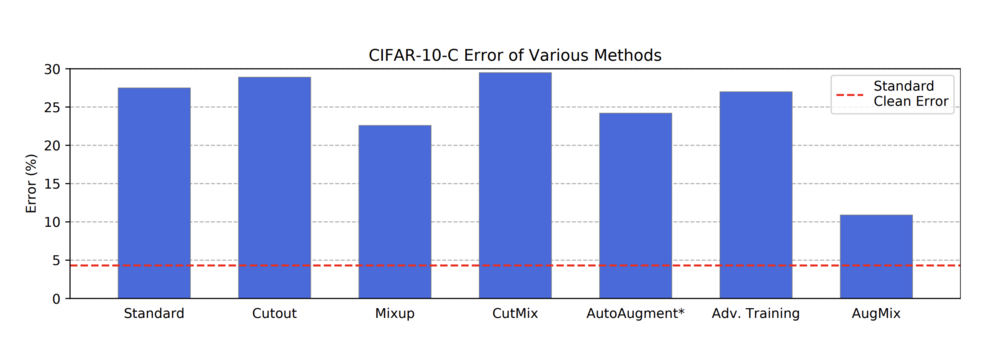

The authors evaluate on the CIFAR-10-C, CIFAR-100-C, and ImageNet-C datasets, which are constructed by applying corruptions to the original datasets. The CIFAR-10-P, CIFAR-100-P, and ImageNet-P datasets also modify the original CIFAR and ImageNet datasets; they contain smaller perturbations than CIFAR-C and are used to measure the stability of a classifier’s predictions. The metric used to compare the models is the error rate. The clean error is the error rate obtained on the original dataset, without any corruption applied. The experiments use 15 corruption techniques, so the corruption error of a model is taken as the average of the error rates it achieves across all of them. To assess a model’s uncertainty estimates, the authors measure its miscalibration, using either the Brier Score or the RMS Calibration Error.
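
The two miscalibration measures named above can be illustrated with a short NumPy sketch. The function names, the equal-width confidence bins, and `n_bins=10` are assumptions made for this example; the paper's exact binning scheme and normalization may differ.

```python
import numpy as np

def brier_score(probs, labels):
    """Mean squared distance between predicted probabilities and one-hot labels."""
    one_hot = np.eye(probs.shape[1])[labels]
    return float(np.mean(np.sum((probs - one_hot) ** 2, axis=1)))

def rms_calibration_error(probs, labels, n_bins=10):
    """Root-mean-square gap between accuracy and confidence over confidence bins."""
    confidence = probs.max(axis=1)
    correct = (probs.argmax(axis=1) == labels).astype(np.float64)
    # Equal-width bins on [0, 1]; a confidence of exactly 1.0 goes in the last bin.
    bin_ids = np.minimum((confidence * n_bins).astype(int), n_bins - 1)
    total = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            gap = correct[mask].mean() - confidence[mask].mean()
            total += mask.mean() * gap ** 2  # weight each bin by its share of samples
    return float(np.sqrt(total))

# Hypothetical predictions for four samples over three classes.
probs = np.array([
    [0.8, 0.1, 0.1],
    [0.2, 0.7, 0.1],
    [0.3, 0.3, 0.4],
    [0.6, 0.3, 0.1],
])
labels = np.array([0, 1, 2, 1])
brier = brier_score(probs, labels)
rms_ce = rms_calibration_error(probs, labels)
```

Both measures are zero for a model that is always correct with full confidence, and both increase when the predicted confidence does not match how often the model is actually right.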

RESULTS ON CIFAR DATASET

For the CIFAR datasets, 15 corruptions are applied.

Setup: The authors use four models for comparison:

1. An All Convolutional Network

2. A DenseNet-BC (k = 12, d = 100)

3. A 40-2 Wide ResNet

4. A ResNeXt-29

The All Convolutional Network and Wide ResNet are trained for 100 epochs, while the DenseNet and ResNeXt require 200 epochs to converge. A weight decay of 0.0001 is used for Mixup and 0.0005 otherwise.

The authors further compare AugMix with other state-of-the-art data augmentation methods, as shown in the figure above. AugMix performs best, with a 16.6% lower absolute corruption error. This comparison uses only ResNeXt on CIFAR-10-C.

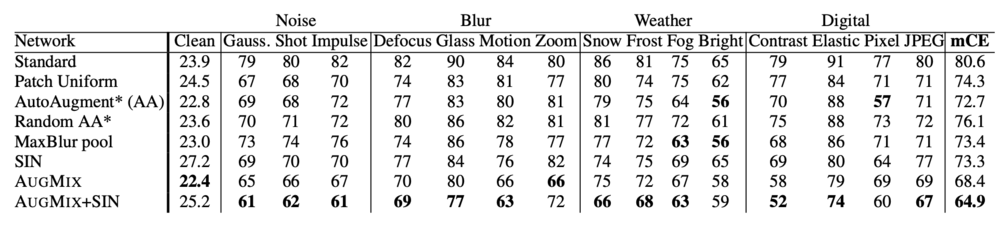

RESULTS ON ImageNet DATASET

The results above report the Clean Error, Corruption Error (CE), and mCE values for various methods on ImageNet-C. The mCE value is computed by averaging across all 15 CE values. AugMix reduces corruption error while improving clean accuracy, and it can be combined with SIN (Stylized ImageNet) for greater corruption robustness.
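
The averaging behind mCE can be written out as a small sketch. The function name `mean_corruption_error`, the array layout (one row per corruption, one column per severity level), and the optional `baseline_errors` normalization are assumptions made for this example; the text above only states that mCE is the average of the 15 CE values.

```python
import numpy as np

def mean_corruption_error(errors, baseline_errors=None):
    """Return (per-corruption CE, mCE) from a (corruptions, severities) error array."""
    errors = np.asarray(errors, dtype=np.float64)
    if baseline_errors is None:
        # CE of each corruption: its error rate averaged over severity levels.
        ce = errors.mean(axis=1)
    else:
        # Assumed optional step: normalize by a reference model's errors on the
        # same corruption, so that harder corruptions do not dominate the mean.
        baseline = np.asarray(baseline_errors, dtype=np.float64)
        ce = errors.sum(axis=1) / baseline.sum(axis=1)
    # mCE: the average of the CE values across all corruptions.
    return ce, float(ce.mean())

# Hypothetical error rates for 15 corruptions at 5 severity levels each.
rng = np.random.default_rng(0)
model_errors = rng.uniform(0.2, 0.6, size=(15, 5))
ce_values, mce = mean_corruption_error(model_errors)
```

With the default arguments, `ce_values` holds 15 numbers and `mce` is their plain mean, matching the description above.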

Conclusion

AugMix is a data processing technique that mixes randomly generated augmentations and uses a Jensen-Shannon loss to enforce consistency. This simple-to-implement technique obtains state-of-the-art performance on CIFAR-10/100-C, ImageNet-C, CIFAR-10/100-P, and ImageNet-P. AugMix models achieve state-of-the-art calibration and can maintain calibration even as the distribution shifts. The authors hope that AugMix will enable more reliable models, a necessity for models deployed in safety-critical environments.