ALBERT: A Lite BERT for Self-supervised Learning of Language Representations

Presented by

Maziar Dadbin

Introduction

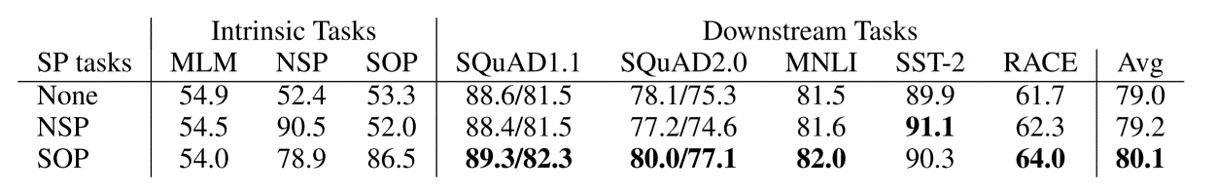

In this paper, the authors modify the BERT model to produce ALBERT, a model that outperforms BERT on the GLUE, SQuAD, and RACE benchmarks. Notably, ALBERT has fewer parameters than BERT-large yet still achieves better results. Two of the changes are parameter-reduction methods: factorized embedding parameterization and cross-layer parameter sharing. The authors also introduce a new loss function that replaces one of the loss functions used in BERT, namely next sentence prediction (NSP). The last change is removing dropout from the model.
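
To give a rough feel for why the two parameter-reduction methods named above shrink the model, here is a minimal counting sketch. It assumes that factorized embedding replaces a single vocabulary-by-hidden embedding matrix with two smaller matrices, and that cross-layer sharing reuses one set of layer weights for every layer. The function names and the sizes in the usage lines are illustrative assumptions, not values taken from this summary.

```python
def embedding_params(vocab_size, hidden_size, embed_size=None):
    """Count embedding parameters.

    Without factorization: one vocab_size x hidden_size matrix.
    With factorization (embed_size given): a vocab_size x embed_size matrix
    followed by an embed_size x hidden_size projection.
    """
    if embed_size is None:
        return vocab_size * hidden_size
    return vocab_size * embed_size + embed_size * hidden_size


def encoder_params(per_layer_params, num_layers, share_across_layers=False):
    """Count encoder parameters.

    With cross-layer sharing, all layers reuse the same weights, so the
    count no longer grows with the number of layers.
    """
    if share_across_layers:
        return per_layer_params
    return per_layer_params * num_layers


# Illustrative sizes only (assumed for this sketch).
unfactorized = embedding_params(30000, 1024)               # 30,720,000
factorized = embedding_params(30000, 1024, embed_size=128)  # 3,971,072
```

The saving from factorization comes from choosing an embedding size much smaller than the hidden size; the saving from sharing grows with the depth of the network.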

Motivation

In natural language representation learning, larger models often perform better. However, at some point GPU/TPU memory and training time constraints limit how far the model size can be increased. Earlier attempts reduce memory consumption, but at the cost of speed (see Chen et al. (2016), Gomez et al. (2017), and Raffel et al. (2019)). The authors of this paper claim that their parameter-reduction techniques both reduce memory consumption and increase training speed.