Pre-Training-Tasks-For-Embedding-Based-Large-Scale-Retrieval

This page is a summary for NIPS 2016 paper Dialog-based Language Learning [1].

Introduction

One of the ways humans learn language, especially second language or language learning by students, is by communication and getting its feedback. However, most existing research in Natural Language Understanding has focused on supervised learning from fixed training sets of labeled data. This kind of supervision is not realistic of how humans learn, where language is both learned by, and used for, communication. When humans act in dialogs (i.e., make speech utterances) the feedback from other human’s responses contain very rich information. This is perhaps most pronounced in a student/teacher scenario where the teacher provides positive feedback for successful communication and corrections for unsuccessful ones.

This paper is about dialog-based language learning, where supervision is given naturally and implicitly in the response of the dialog partner during the conversation. This paper is a step towards the ultimate goal of being able to develop an intelligent dialog agent that can learn while conducting conversations. Specifically, this paper explores whether we can train machine learning models to learn from dialog.

Contributions of this paper

- Introduce a set of tasks that model natural feedback from a teacher and hence assess the feasibility of dialog-based language learning.

- Evaluated some baseline models on this data and compared them to standard supervised learning.

- Introduced a novel forward prediction model, whereby the learner tries to predict the teacher’s replies to its actions, which yields promising results, even with no reward signal at all

Code for this paper can be found on Github:https://github.com/facebook/MemNN/tree/master/DBLL

Background on Memory Networks

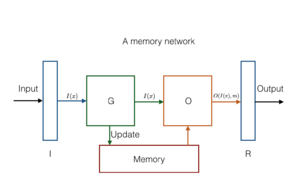

A memory network combines learning strategies from the machine learning literature with a memory component that can be read and written to.