Hierarchical Question-Image Co-Attention for Visual Question Answering

Paper Summary

| Conference |

|

| Authors | Jiasen Lu, Jianwei Yang, Dhruv Batra, Devi Parikh |

| Abstract | A number of recent works have proposed attention models for Visual Question Answering (VQA) that generate spatial maps highlighting image regions relevant to answering the question. In this paper, we argue that in addition to modeling "where to look" or visual attention, it is equally important to model "what words to listen to" or question attention. We present a novel co-attention model for VQA that jointly reasons about image and question attention. In addition, our model reasons about the question (and consequently the image via the co-attention mechanism) in a hierarchical fashion via a novel 1-dimensional convolution neural networks (CNN). Our model improves the state-of-the-art on the VQA dataset from 60.3% to 60.5%, and from 61.6% to 63.3% on the COCO-QA dataset. By using ResNet, the performance is further improved to 62.1% for VQA and 65.4% for COCO-QA. |

Introduction

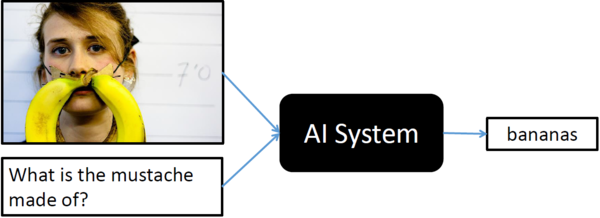

Visual Question Answering (VQA) is a recent problem in computer vision and natural language processing that has garnered a large amount of interest from the deep learning, computer vision, and natural language processing communities. In VQA, an algorithm needs to answer text-based questions about images in natural language as illustrated in Figure 1.

Recently, visual-attention based models have gained traction for VQA tasks, where the attention mechanism typically produces a spatial map highlighting image regions relevant for answering the visual question about the image. However, to answer the question correctly, the machine needs to "attend" not only to regions in the image but also to parts of the question. In this paper, the authors propose a novel co-attention technique that combines "where to look" (visual attention) with "what words to listen to" (question attention), allowing their model to reason jointly about the image and the question and thereby improve on existing state-of-the-art results. They also propose a novel convolution-pooling strategy to adaptively select phrase sizes whose representations are passed to the question level.

"Attention" Models

You may skip this section if you already know about "attention" in the context of deep learning. Since this paper talks about "attention" almost everywhere, I decided to put this section to give a very informal and brief introduction to the concept of the "attention" mechanism especially visual "attention"; however, it can be expanded to any other type of "attention".

Visual attention in CNNs is inspired by the biological visual system. As humans, we have the ability to focus our cognitive processing on the subset of the environment that is most relevant to the given situation. Imagine you witness a bank robbery in which the robbers are trying to escape in a car. As a good citizen, you will immediately focus your attention on the number plate and other physical features of the car and the robbers in order to give your testimony later, and you may not remember things that would otherwise interest you more. Such selective visual attention for a given context (the robbery in the above example) can also be implemented in traditional CNNs. This makes CNNs more robust on certain tasks, and it also helps algorithm designers visualize which spatial features (regions within the image) were more important than others. Attention-guided deep learning is particularly helpful for image captioning and VQA tasks.

Role of Visual Attention in VQA

This section is not part of the paper being summarized. It gives an overview of how visual attention can be incorporated into the training of a network for VQA tasks, which should make the ideas proposed in the paper easier to follow. Das et al. [5] provided a research study on 'human attention' in Visual Question Answering (VQA) to understand where humans choose to look to answer questions about images compared with deep models. The concept of "visual-attention" has also been implemented in VQA tasks, which is explored in [6].

Generally, to implement attention, the network tries to learn the conditional distribution $P(L_i \mid c)$ for $i \in \{1, ..., n\}$, which represents the individual importance of the features extracted from each of the $n$ discrete locations within the image, conditioned on some context vector $c$. In other words, given $n$ features $L = [L_1, ..., L_n]$ from $n$ different spatial regions within the image (top-left, top-middle, top-right, and so on), the "attention" module learns a parametric function $F(c;\theta)$ that outputs an importance score for each of these individual features for a given context vector $c$. Applying a softmax over the $n$ scores turns them into a discrete probability distribution of size $n$.

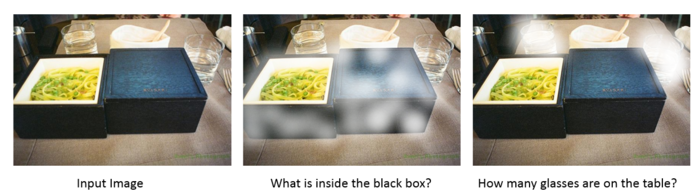

To incorporate visual attention in a VQA task, one can define the context vector $c$ as a representation of the visual question asked by a user (obtained with an RNN, perhaps an LSTM). The context $c$ can then be used to generate an attention map over the corresponding image locations (as shown in Figure 2), which can further improve accuracy when the whole model is trained end-to-end. Most existing work on visual attention for VQA is a further specialization of this idea.
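The following is a minimal NumPy sketch of the idea above, not code from the paper. The additive scoring function (`W_l`, `W_c`, `w`), the dimensions, and the random inputs are assumptions chosen for illustration; the text only requires some parametric function of the context followed by a softmax.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def soft_attention(L, c, W_l, W_c, w):
    """Score n location features against a context vector.

    L: (d, n) location features, c: (m,) context vector.
    W_l: (k, d), W_c: (k, m), w: (k,) are hypothetical learnable weights.
    Returns the attention distribution (n,) and the attended feature (d,).
    """
    H = np.tanh(W_l @ L + (W_c @ c)[:, None])  # (k, n): context added to every location
    a = softmax(w @ H)                          # (n,): importance of each location
    return a, L @ a                             # weighted sum of the location features

rng = np.random.default_rng(0)
d, n, m, k = 8, 9, 6, 5                         # 9 locations, e.g. a 3x3 grid
L = rng.standard_normal((d, n))
c = rng.standard_normal(m)                      # stands in for an encoded question
a, attended = soft_attention(
    L, c,
    rng.standard_normal((k, d)),
    rng.standard_normal((k, m)),
    rng.standard_normal(k),
)
print(a.shape, attended.shape)
```

The attention vector `a` is what would be visualized as a spatial map, and `attended` is the single feature vector passed on to the rest of the network.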

Motivation and Main Contributions

So far, all attention models for VQA in the literature have focused on the problem of identifying "where to look", or visual attention. In this paper, the authors argue that the problem of identifying "which words to listen to", or question attention, is equally important. Consider the questions "how many horses are in this image?" and "how many horses can you see in this image?". They have the same meaning, essentially captured by the first three words. A machine that attends to the first three words would arguably be more robust to linguistic variations that are irrelevant to the meaning and answer of the question. Motivated by this observation, the paper addresses question attention in addition to visual attention. The main contributions of the paper are as follows.

- A novel co-attention mechanism for VQA that jointly performs question-guided visual attention and image-guided question attention.

- A hierarchical architecture to represent the question, and consequently construct image-question co-attention maps at 3 different levels: word level, phrase level and question level. These co-attended features are then recursively combined from word level to question level for the final answer prediction.

- A novel convolution-pooling strategy at the phrase level to adaptively select the phrase sizes whose representations are passed to the question level representation.

- Results on VQA and COCO-QA and ablation studies to quantify the roles of different components in the model

Related Work

The authors claim that no previous work has explored combined language-visual attention in VQA. There are now several other papers that use such a combined approach. Chronologically, however, the present paper appears to be the first to do so, and it is referenced in the following works.

- Dual Attention Networks (DANs) [7]: These authors use a soft attention mechanism for the image. It computes weights for each input vector using a two layer feed forward neural network and a softmax function. For textual attention, the authors use a very similar mechanism as for visual attention. Image features are extracted using a 152-layer ResNet and bidirectional LSTMs are used to generate text features.

- Multi-level Attention Networks [8]: The authors use a context-aware visual attention algorithm. A bidirectional Gated Recurrent Unit (GRU) layer is used with the feature vectors from the last layer of a CNN as input. The GRU is run in both forward and backward directions for each region of the image, generating a context-aware visual representation of the image. Textual attention is achieved using a deep neural network concept detector trained on COCO. A second network is trained to measure the relevance between the question and the learned concepts. Lastly, textual and visual attention are combined by computing a joint feature vector, and a softmax layer selects the correct answer.

Both papers present state-of-the-art results.

Method

This section is broken down into four parts: (i) notations used within the paper and also throughout this summary, (ii) hierarchical representation for a visual question, (iii) the proposed co-attention mechanism and (iv) predicting answers.

Notations

| Notation | Explanation |

| $Q = \{q_1,...q_T\}$ | One-hot encoding of a visual question with $T$ words. The paper uses three different representations of the visual question, one for each level of the hierarchy: $Q^w$ (word level), $Q^p$ (phrase level) and $Q^s$ (question level).

Each of $Q^w$, $Q^p$ and $Q^s$ contains exactly $T$ embeddings (sequential data with a temporal dimension), regardless of its position in the hierarchy, i.e. word, phrase or question. |

| $V = \{v_1,..,v_N\}$ | $V$ represents the feature vectors from $N$ different locations within the given image, so $v_n$ is the feature vector at location $n$. Collectively, $V$ covers the entire spatial extent of the image. These location-sensitive features can be extracted from a convolutional layer of a CNN. |

| $\hat{v}^r$ and $\hat{q}^r$ | The co-attention features of the image and the question at each level of the hierarchy, where $r \in \{w,p,s\}$. Each is a sum of the vectors in $V$ or $Q$ weighted by the attention $a^v$ or $a^q$ at that level of the hierarchy.

For example, at the "word" level, $a^q_w$ and $a^v_w$ are probability distributions representing the importance of each word in the visual question and each location within the image respectively, whereas $\hat{q}^w$ and $\hat{v}^w$ are the final feature vectors for the given question and image with the attention maps $a^q_w$ and $a^v_w$ applied at the "word" level. The same holds for the "phrase" and "question" levels. |

Note: Throughout the paper, $W$ denotes learnable weights. Biases are omitted from the equations for simplicity (the reader should assume they exist).

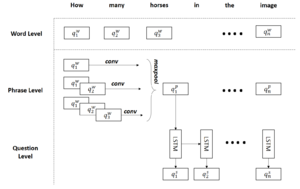

Question Hierarchy

The hierarchical representation of a visual question has three levels of granularity: (i) word, (ii) phrase and (iii) question level. Note that each level depends on the previous one: phrase level representations are extracted from the word level, and question level representations come from the phrase level, as depicted in Figure 4.

Word Level

The one-hot encodings of the question's words $Q = \{q_1,..q_T\}$ are transformed into a vector space to give the word level embeddings of a visual question, i.e. $Q^w = \{q^w_1,...q^w_T\}$. The paper learns this transformation end-to-end instead of using pretrained models such as word2vec.

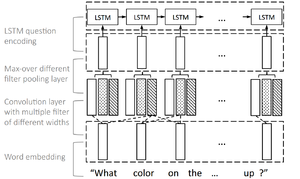

Phrase Level

Phrase level embedding vectors are calculated by applying 1-D convolutions to the word level embedding vectors. Concretely, at each word location, the inner product of the word vectors with filters of three window sizes (unigram, bigram and trigram) is computed, as illustrated in Figure 4. For the $t$-th word, the output of the convolution with window size $s$ is given by

$$ \hat{q}^p_{s,t} = tanh(W_c^sq^w_{t:t+s-1}), \quad s \in \{1,2,3\} $$

where $W_c^s$ are the weight parameters. The features from the three n-grams are then combined using a max-pooling operator to obtain the phrase-level embedding vectors.

$$ q_t^p = max(\hat{q}^p_{1,t}, \hat{q}^p_{2,t}, \hat{q}^p_{3,t}), \quad t \in \{1,2,...,T\} $$
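As a rough illustration of the two equations above, the sketch below computes the unigram, bigram and trigram responses with explicit windows and then takes an elementwise maximum. It is a NumPy sketch, not the authors' code. Zero-padding on the right (so that every position $t$ has a full window $t, ..., t+s-1$), the filter shapes, and the random inputs are assumptions made for illustration.

```python
import numpy as np

def phrase_features(Qw, filters):
    """Phrase-level embeddings from word-level embeddings.

    Qw: (d, T) word embeddings.
    filters: dict mapping window size s to a weight matrix of shape (d, s*d).
    Returns (d, T): elementwise max over the window sizes at every position.
    """
    d, T = Qw.shape
    outputs = []
    for s, W in sorted(filters.items()):
        # Assumed padding: zeros on the right so window t..t+s-1 always exists.
        padded = np.concatenate([Qw, np.zeros((d, s - 1))], axis=1)
        # Stack the s word vectors of each window into one (s*d,) column.
        windows = np.stack(
            [padded[:, t:t + s].T.reshape(-1) for t in range(T)], axis=1
        )  # (s*d, T)
        outputs.append(np.tanh(W @ windows))  # (d, T)
    # Max-pool across the n-gram sizes, independently for each position and dimension.
    return np.max(np.stack(outputs, axis=0), axis=0)

rng = np.random.default_rng(0)
d, T = 4, 6
Qw = rng.standard_normal((d, T))
filters = {s: rng.standard_normal((d, s * d)) for s in (1, 2, 3)}
Qp = phrase_features(Qw, filters)
print(Qp.shape)
```

Because the maximum is taken per position, different words can end up represented by different n-gram sizes, which is what lets the model select phrase sizes adaptively.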

Question Level

For question level representation, LSTM is used to encode the sequence $q_t^p$ after max-pooling. The corresponding question-level feature at time t $q_t^s$ is the LSTM hidden vector at time t $h_t$.

$$ \begin{align*} h_t &= LSTM(q_t^p, h_{t-1})\\ q_t^s &= h_t, \quad t \in \{1,2,...,T\} \end{align*} $$
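To make the recurrence concrete, here is a small NumPy sketch of a standard LSTM run over the phrase embeddings, collecting the hidden state at every step as the question-level feature. The gate layout, the weight shapes (`W`, `U`, `b`), and the zero initial state are assumptions of this sketch. The summary does not specify them, and the cell state is left implicit in the equation above.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_encode(Qp, W, U, b):
    """Run a single-layer LSTM over phrase embeddings.

    Qp: (d, T) phrase embeddings.
    W: (4h, d), U: (4h, h), b: (4h,) are hypothetical learnable parameters.
    Returns Qs: (h, T), where column t is the hidden state h_t.
    """
    h_dim = U.shape[1]
    h = np.zeros(h_dim)   # hidden state h_0
    c = np.zeros(h_dim)   # cell state c_0
    states = []
    for t in range(Qp.shape[1]):
        z = W @ Qp[:, t] + U @ h + b
        i, f, o, g = np.split(z, 4)          # input, forget, output gates and candidate
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        states.append(h)                      # q_t^s = h_t
    return np.stack(states, axis=1)

rng = np.random.default_rng(0)
d, T, h_dim = 4, 6, 5
Qp = rng.standard_normal((d, T))
Qs = lstm_encode(
    Qp,
    rng.standard_normal((4 * h_dim, d)),
    rng.standard_normal((4 * h_dim, h_dim)),
    np.zeros(4 * h_dim),
)
print(Qs.shape)
```

Keeping one hidden state per time step, rather than only the last one, is what gives the question level the same $T$ positions as the word and phrase levels.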

Co-Attention Mechanism

The paper proposes two co-attention mechanisms that differ in the order in which image and question attention maps are generated:

| Parallel co-attention | Generates image and question attention simultaneously. |

| Alternating co-attention | Sequentially alternates between generating image and question attentions. |

These co-attention mechanisms are executed at all three levels of the question hierarchy, yielding $\hat{v}^r$ and $\hat{q}^r$, where $r \in \{w,p,s\}$ indexes the level in the hierarchy (refer to the Notations section).

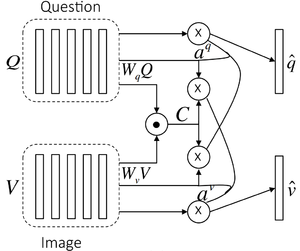

Parallel Co-Attention

Parallel co-attention attends to the image and question simultaneously, as shown in Figure 5, by calculating the similarity between image and question features at all pairs of image locations and question locations. The paper uses an "affinity matrix" to hold the affinity between every pair of image location and question position at each level of the hierarchy (word, phrase, and question). Since there are $N$ image locations and $T$ question positions, the affinity matrix lies in $\mathbb{R}^{T \times N}$. Specifically, for a given image with feature map $V \in \mathbb{R}^{d \times N}$ and question representation $Q \in \mathbb{R}^{d \times T}$, the affinity matrix $C \in \mathbb{R}^{T \times N}$ is calculated by

$$ C = tanh(Q^TW_bV) $$

where,

- $W_b \in \mathbb{R}^{d \times d}$ contains the weights.

After computing this affinity matrix, one possible way of computing the image (or question) attention is to simply maximize out the affinity over the locations of the other modality, i.e. $a_v[n] = \underset{i}{max}(C_{i,n})$ and $a_q[t] = \underset{j}{max}(C_{t,j})$. In words, $a_v[n]$ is the largest affinity between image location $n$ and any question position (the maximum of column $n$ of $C$), and $a_q[t]$ is the largest affinity between question position $t$ and any image location (the maximum of row $t$ of $C$). Instead of choosing the max activation, the paper treats the affinity matrix as a feature and learns to predict image and question attention maps via the following

$$ \begin{align*} H_v &= tanh(W_vV + (W_qQ)C), & H_q &= tanh(W_qQ + (W_vV)C^T)\\ a_v &= softmax(w_{hv}^T H_v), & a_q &= softmax(w_{hq}^T H_q) \end{align*} $$

where,

- $W_v, W_q \in \mathbb{R}^{k \times d}$, $w_{hv}, w_{hq} \in \mathbb{R}^k$ are the weight parameters.

- $a_v \in \mathbb{R}^N$ and $a_q \in \mathbb{R}^T$ are the attention probabilities of each image region $v_n$ and word $q_t$ respectively.

The intuition behind the above equations is that the image and question attention maps should be functions of the question and image features jointly. The authors therefore define two intermediate parametric functions, $H_v$ and $H_q$, that take the affinity matrix $C$, the image features $V$ and the question features $Q$ as input. The affinity matrix $C$ transforms the question attention space to the image attention space (and vice versa for $C^T$). Based on the above attention weights, the image and question attention vectors are calculated as the weighted sums of the image features and question features, i.e.,

$$\hat{v} = \sum_{n=1}^{N}{a_n^v v_n}, \quad \hat{q} = \sum_{t=1}^{T}{a_t^q q_t}$$

The parallel co-attention is done at each level in the hierarchy, leading to $\hat{v}^r$ and $\hat{q}^r$ where $r \in \{w,p,s\}$. The paper does not say why $tanh$ is used for $H_q$ and $H_v$. My assumption is that the authors want unfavorable pairs of image location and question fragment to be able to contribute negatively. Unlike $ReLU$ or $Sigmoid$, whose outputs are non-negative, $tanh$ takes values in $(-1, 1)$, which makes it an appropriate choice.
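The parallel co-attention equations can be checked shape by shape with a short NumPy sketch. This is an illustration under assumed dimensions and random weights, not the released implementation. It follows the formulas above term by term, with $V \in \mathbb{R}^{d \times N}$ and $Q \in \mathbb{R}^{d \times T}$.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def parallel_coattention(V, Q, W_b, W_v, W_q, w_hv, w_hq):
    """One level of parallel co-attention.

    V: (d, N) image features, Q: (d, T) question features.
    W_b: (d, d), W_v and W_q: (k, d), w_hv and w_hq: (k,).
    Returns attended image vector (d,), attended question vector (d,),
    image attention (N,) and question attention (T,).
    """
    C = np.tanh(Q.T @ W_b @ V)                   # (T, N) affinity matrix
    H_v = np.tanh(W_v @ V + (W_q @ Q) @ C)       # (k, N): question info mapped to image space
    H_q = np.tanh(W_q @ Q + (W_v @ V) @ C.T)     # (k, T): image info mapped to question space
    a_v = softmax(w_hv @ H_v)                    # (N,)
    a_q = softmax(w_hq @ H_q)                    # (T,)
    v_hat = V @ a_v                              # weighted sum of image features
    q_hat = Q @ a_q                              # weighted sum of question features
    return v_hat, q_hat, a_v, a_q

rng = np.random.default_rng(0)
d, k, N, T = 6, 4, 5, 3
V = rng.standard_normal((d, N))
Q = rng.standard_normal((d, T))
v_hat, q_hat, a_v, a_q = parallel_coattention(
    V, Q,
    rng.standard_normal((d, d)),
    rng.standard_normal((k, d)),
    rng.standard_normal((k, d)),
    rng.standard_normal(k),
    rng.standard_normal(k),
)
print(v_hat.shape, q_hat.shape, a_v.shape, a_q.shape)
```

Running the same function on $Q^w$, $Q^p$ and $Q^s$ in turn would give the three pairs $\hat{v}^r, \hat{q}^r$.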

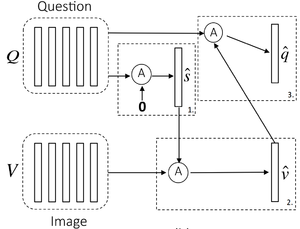

Alternating Co-Attention

In this attention mechanism, authors sequentially alternate between generating image and question attention as shown in Figure 6. Briefly, this consists of three steps

- Summarize the question into a single vector $q$

- Attend to the image based on the question summary $q$

- Attend to the question based on the attended image feature.

Concretely, the paper defines an attention operation $\hat{x} = \mathcal{A}(X, g)$, which takes the image (or question) features $X$ and attention guidance $g$ derived from the question (or image) as inputs, and outputs the attended image (or question) vector. The operation can be expressed in the following steps

$$ \begin{align*} H &= tanh(W_xX + (W_gg)𝟙^T)\\ a_x &= softmax(w_{hx}^T H)\\ \hat{x} &= \sum{a_i^x x_i} \end{align*} $$

where,

- $𝟙$ is a vector with all elements equal to 1.

- $W_x, W_g \in \mathbb{R}^{k\times d}$ and $w_{hx} \in \mathbb{R}^k$ are parameters.

- $a_x$ is the attention weight of feature $X$.

Briefly,

- At the first step of alternating coattention, $X = Q$, and $g$ is $0$.

- At the second step, $X = V$ where $V$ is the image features, and the guidance $g$ is intermediate attended question feature $\hat{s}$ from the first step

- Finally, we use the attended image feature $\hat{v}$ as the guidance to attend the question again, i.e., $X = Q$ and $g = \hat{v}$.

Similar to the parallel co-attention, the alternating co-attention is also done at each level of the hierarchy, leading to $\hat{v}^r$ and $\hat{q}^r$ where $r \in \{w,p,s\}$.
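The three steps of alternating co-attention can be sketched with a single reusable attention operation. The code below is a NumPy sketch under assumptions: each step gets its own hypothetical parameter set (the summary does not say whether parameters are shared), and dimensions and weights are random placeholders.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def attend(X, g, W_x, W_g, w_hx):
    """Attention operation A(X, g).

    X: (d, n) features, g: (d,) guidance vector.
    W_x, W_g: (k, d), w_hx: (k,).
    Returns the attended vector (d,) and the attention weights (n,).
    """
    ones = np.ones(X.shape[1])
    H = np.tanh(W_x @ X + np.outer(W_g @ g, ones))  # (k, n): guidance copied to every column
    a = softmax(w_hx @ H)                            # (n,)
    return X @ a, a

def alternating_coattention(V, Q, params):
    """params maps 'q1', 'v', 'q2' to (W_x, W_g, w_hx) tuples (assumed unshared)."""
    d = Q.shape[0]
    s_hat, _ = attend(Q, np.zeros(d), *params["q1"])  # step 1: summarize question, g = 0
    v_hat, a_v = attend(V, s_hat, *params["v"])       # step 2: attend image, g = s_hat
    q_hat, a_q = attend(Q, v_hat, *params["q2"])      # step 3: attend question, g = v_hat
    return v_hat, q_hat, a_v, a_q

rng = np.random.default_rng(0)
d, k, N, T = 6, 4, 5, 3
V = rng.standard_normal((d, N))
Q = rng.standard_normal((d, T))
params = {
    name: (rng.standard_normal((k, d)), rng.standard_normal((k, d)), rng.standard_normal(k))
    for name in ("q1", "v", "q2")
}
v_hat, q_hat, a_v, a_q = alternating_coattention(V, Q, params)
print(v_hat.shape, q_hat.shape, a_v.shape, a_q.shape)
```

With $g = 0$ the guidance term vanishes, so the first step reduces to plain self-attention over the question.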

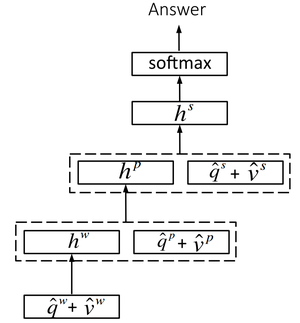

Encoding for Predicting Answers

The paper treats predicting the final answer as a classification task. This was surprising to me because I had expected the answer to be a generated sequence; with an MLP followed by a softmax, however, the answer must be chosen from a fixed set of candidate answers. Co-attended image and question features from all three levels are combined for the final prediction, see Figure 7. A multi-layer perceptron (MLP) is used to recursively encode the attention features as follows.

$$ \begin{align*} h_w &= tanh(W_w(\hat{q}^w + \hat{v}^w))\\ h_p &= tanh(W_p[(\hat{q}^p + \hat{v}^p), h_w])\\ h_s &= tanh(W_s[(\hat{q}^s + \hat{v}^s), h_p])\\ p &= softmax(W_hh_s) \end{align*} $$

where

- $W_w, W_p, W_s$ and $W_h$ are the weight parameters.

- $[·]$ is the concatenation operation on two vectors.

- $p$ is the probability of the final answer.
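The recursive encoding can be written out as a small NumPy sketch. The hidden size, the number of candidate answers, and the random attended features are placeholders assumed for illustration; only the structure of the computation follows the equations.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def predict_answer(feats, W_w, W_p, W_s, W_h):
    """Combine co-attended features from the three levels.

    feats: dict mapping 'w', 'p', 's' to (q_hat, v_hat) pairs, each of shape (d,).
    W_w: (h, d), W_p and W_s: (h, d + h), W_h: (num_answers, h).
    Returns a probability distribution over candidate answers.
    """
    q_w, v_w = feats["w"]
    q_p, v_p = feats["p"]
    q_s, v_s = feats["s"]
    h_w = np.tanh(W_w @ (q_w + v_w))
    h_p = np.tanh(W_p @ np.concatenate([q_p + v_p, h_w]))  # [.] is concatenation
    h_s = np.tanh(W_s @ np.concatenate([q_s + v_s, h_p]))
    return softmax(W_h @ h_s)

rng = np.random.default_rng(0)
d, h, num_answers = 6, 5, 10
feats = {r: (rng.standard_normal(d), rng.standard_normal(d)) for r in ("w", "p", "s")}
p = predict_answer(
    feats,
    rng.standard_normal((h, d)),
    rng.standard_normal((h, d + h)),
    rng.standard_normal((h, d + h)),
    rng.standard_normal((num_answers, h)),
)
print(p.shape, int(np.argmax(p)))
```

The predicted answer is the class with the highest probability, which is why the model can only output answers that appear in its candidate set.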

Experiments

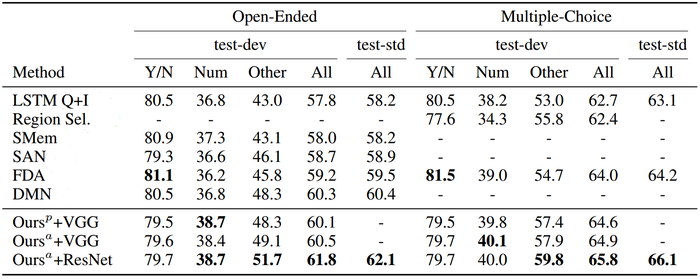

Evaluation of the proposed model is performed using two datasets, the VQA dataset [1] and the COCO-QA dataset [2].

- VQA dataset is the largest dataset for this problem, containing human annotated questions and answers on Microsoft COCO dataset.

- COCO-QA dataset is automatically generated from captions in the Microsoft COCO dataset.

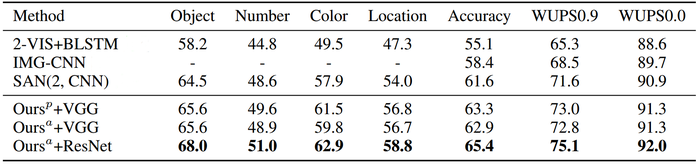

The proposed approach outperforms most of the state-of-the-art techniques, as shown in Tables 1 and 2.

Ablation Study

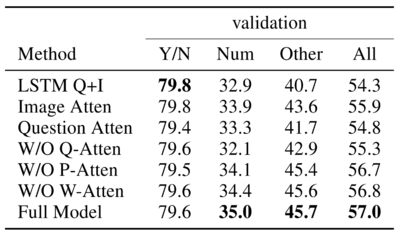

In this part, the authors quantify the importance of individual components of the architecture by re-training the model with components ablated. The detailed settings are as follows.

- Image Attention alone (to verify that improvements are not the result of better optimization or better CNN features)

- Question Attention alone

- W/O Conv (replace convolution and pooling by stacking another word embedding layer on top of the word level outputs)

- W/O W-Atten (replace the word level attention with a uniform distribution)

- W/O P-Atten (no phrase level co-attention is performed, and the phrase level attention is set to be uniform. Word and question level co-attentions are still modeled)

- W/O Q-Atten (no question level co-attention is performed while word and phrase level co-attentions are still modeled)

The results of these ablation experiments can be seen in Table 3. Attention at the top of the hierarchy, i.e. at the question or phrase level, matters the most.

Compared to the full model, the ablated models generally under-perform. However, it is interesting to see that in some settings the full model does not outperform the ablated model.

The study also shows that question and phrase level attention are more important than word level attention: the model shows only a small drop without word level attention, but drops by 1.7% without question level attention.

Qualitative Results

We now visualize some co-attention maps generated by their method in Figure 8.

Figure 8 panels: word level, phrase level, question level.

Because their model performs co-attention at three levels, it often captures complementary information from each level and then combines it to predict the answer. However, it is somewhat unintuitive to visualize the phrase and question level attention maps applied directly to the words of the question: phrase and question level features are compound features built from multiple words, so the contribution of their attention to the individual words of the question cannot be clearly understood.

Conclusion

- A hierarchical co-attention model for visual question answering is proposed.

- Coattention allows the model to attend to different regions of the image as well as different fragments of the question.

- Question is hierarchically represented at three levels to capture information from different granularities.

- Visualization shows model co-attends to interpretable regions of images and questions for predicting the answer.

- Though their model was evaluated on visual question answering, it can be potentially applied to other tasks involving vision and language.

Critique

- This is an intuitive idea that closely resembles the way humans tackle VQA tasks, and it could be developed further towards sequence-based answers and sentence generation. To that end, the authors could have used a more powerful, more scalable word-encoding technique such as GloVe or Bag-of-words, which result in lower-dimensional vectors, thereby opening the door to further techniques such as sentence-answer generation. Since word encoding is treated as a separate task here, Bag-of-words could work, but if a more temporal technique is needed, one could use the Position Encoding mechanism [3], which accounts for the position of the word in the sequence itself. This abstraction could help the model generalize better to a multitude of tasks.

- The idea that image attentions and question attentions can jointly guide each other makes sense. However, if the image is complex or the question itself is too long, will such side attention be misleading? A further study could be: compared to a simple question, whether a long and complex question will influence the performance of the model.

- The idea of the paper seems great, but a 0.2% improvement over the state-of-the-art performance on the VQA dataset isn't significant. It would have been good to show some incorrect samples to indicate why the error was still so high. In fact, there is already a new paper [4] that won the 2017 VQA challenge and significantly outperforms all previous methods on the VQA dataset, giving an accuracy of 69%. That model also uses external training questions/answers from the Visual Genome (VG), so it is not fair to compare the results directly. Still, it is interesting to see that such models can benefit from larger datasets.

References

- [1] K. Kafle and C. Kanan, “Visual Question Answering: Datasets, Algorithms, and Future Challenges,” Computer Vision and Image Understanding, Jun. 2017.

- [2] Mengye Ren, Ryan Kiros, and Richard Zemel. Exploring models and data for image question answering. NIPS, 2015.

- [3] Sainbayar Sukhbaatar, Arthur Szlam, Jason Weston and Rob Fergus. 2015. End-To-End Memory Networks. Advances in Neural Information Processing Systems (NIPS) 28

- [4] Damien Teney, Peter Anderson, Xiaodong He, Anton van den Hengel, Tips and Tricks for Visual Question Answering: Learnings from the 2017 Challenge, CVPR 2017

- [5] A. Das, H. Agrawal, L. Zitnick, D. Parikh and D. Batra, "Human Attention in Visual Question Answering: Do Humans and Deep Networks Look at the Same Regions?", Computer Vision and Image Understanding, vol. 163, pp. 90-100, 2017.

- [6] Kan Chen, Jiang Wang, Liang-Chieh Chen, Haoyuan Gao, Wei Xu, Ram Nevatia, "ABC-CNN: An Attention Based Convolutional Neural Network for Visual Question Answering", Computer Vision and Pattern Recognition, 2015.

- [7] Ha, J., Kim, J., & Nam, H. (2017). Dual Attention Networks for Multimodal Reasoning and Matching. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2156-2164.

- [8] Fu, J., Mei, T., Rui, Y., & Yu, D. (2017). Multi-level Attention Networks for Visual Question Answering. 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 4187-4195.

Implementation: github.com/jiasenlu/HieCoAttenVQA